Single-channel DRAM is bad news for PC gaming but depending on what CPU you have, it's not actually as awful as you might think

Cache to the rescue.

While PC gaming has never been an ultra-cheap hobby, the current AI-induced global memory supply crisis has made it painfully pricey. With DRAM kits and SSDs now three to four times more expensive than they were 12 months ago, nothing is off the table when it comes to finding ways to save some money.

All of which got me thinking: Is it worth using a single stick of memory compared to shelling out for a dual-channel kit, be it separately or as part of a prebuilt gaming PC? We're always told that this isn't a good idea, but if you bought a 32 GB kit with a friend and split the memory between the two of you, that would actually save you a bit of money over buying a full 16 GB kit.

So armed with a deerstalker hat, magnifying glass, pipe... oh, and a host of PC parts, I've delved into the whole single vs dual-channel DRAM debate. What I've discovered will help you make the right choice when it comes to choosing your next gaming PC or planning a full upgrade.

What is a DRAM channel?

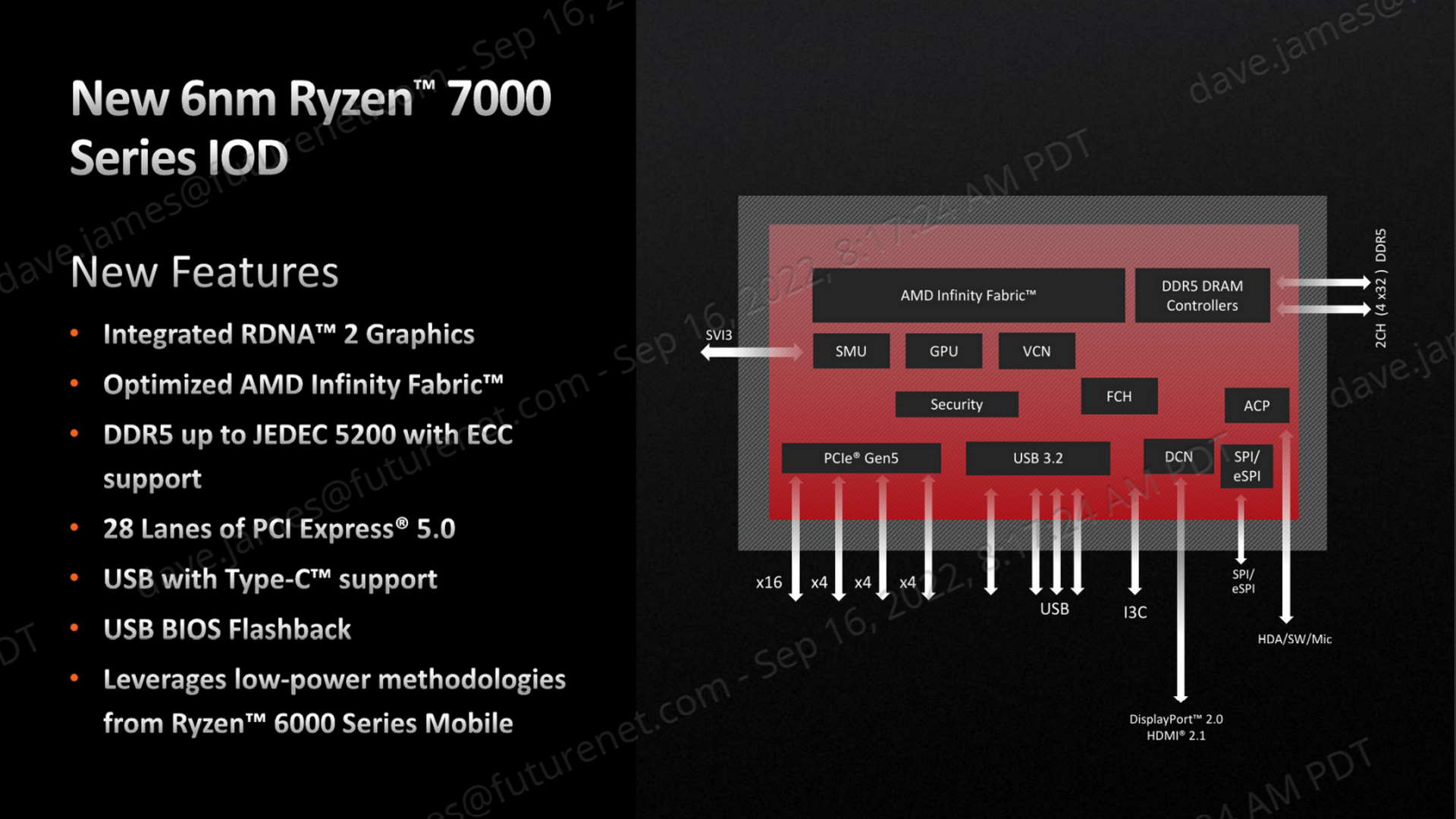

For more years than I can remember, CPUs in desktop PCs and laptops have sported two independent memory controllers. Originally, they were part of the motherboard chipset (specifically the bit called the Northbridge), but they're now all buried inside the processor die.

The controllers basically handle everything to do with the computer's system memory: reading and writing data, telling the DRAM chips when to refresh, and so on. By having two independent controllers, i.e. dual channels to the memory, you can theoretically get twice the memory bandwidth. Separate controllers also help to reduce latencies because you can have one reading while the other is writing, for example.
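To put rough numbers on that "twice the bandwidth" idea, here's a minimal sketch of the usual peak-bandwidth arithmetic: transfer rate multiplied by the width of the data bus, multiplied by the number of channels. The function name and the 64-bit-per-channel default are illustrative assumptions (one standard DIMM per channel), and the figures are theoretical peaks, not measured throughput.

```python
def peak_bandwidth_gbs(transfer_rate_mts: int, channels: int, bus_width_bits: int = 64) -> float:
    """Theoretical peak bandwidth in GB/s (decimal gigabytes).

    transfer_rate_mts is the number in the memory's name, e.g. 6000 for DDR5-6000.
    bus_width_bits assumes 64 data bits per channel, which is an assumption
    about how the DIMMs are wired to the memory controllers.
    """
    bytes_per_transfer = bus_width_bits // 8
    return transfer_rate_mts * bytes_per_transfer * channels / 1000


# The two memory speeds used in this article's test rigs
for name, rate in (("DDR5-6000", 6000), ("DDR4-3200", 3200)):
    single = peak_bandwidth_gbs(rate, channels=1)
    dual = peak_bandwidth_gbs(rate, channels=2)
    print(f"{name}: {single:.1f} GB/s single channel, {dual:.1f} GB/s dual channel")
```

On paper, that's 48 GB/s vs 96 GB/s for DDR5-6000, and 25.6 GB/s vs 51.2 GB/s for DDR4-3200. Real-world throughput will be lower in both cases.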

Which pretty much suggests that using a single channel is bad news, right? Well, not necessarily, because modern CPUs have a lot of cache, which goes a long way to reducing the data demands on the memory controllers. If the data required by an instruction is already stored in cache, it doesn't need to be fetched from the system memory.
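A textbook way to picture this is the average memory access time: every access pays the cache lookup cost, and only the misses pay the extra trip to DRAM. The sketch below uses that formula with entirely hypothetical hit rates and latencies (none of them are measurements from the test rigs in this article), simply to show why a higher cache hit rate makes slower memory hurt less.

```python
def average_access_ns(hit_rate: float, cache_ns: float, dram_ns: float) -> float:
    """Average memory access time = cache lookup + miss rate * DRAM penalty."""
    return cache_ns + (1.0 - hit_rate) * dram_ns


def slowdown_pct(hit_rate: float, cache_ns: float, fast_dram_ns: float, slow_dram_ns: float) -> float:
    """Percentage increase in average access time when DRAM gets slower."""
    fast = average_access_ns(hit_rate, cache_ns, fast_dram_ns)
    slow = average_access_ns(hit_rate, cache_ns, slow_dram_ns)
    return (slow - fast) / fast * 100


# Hypothetical numbers: a 10 ns cache lookup, and DRAM that takes 80 ns with
# two channels but effectively 100 ns when one busy channel has to do it all.
CACHE_NS, DUAL_NS, SINGLE_NS = 10.0, 80.0, 100.0

for label, hit_rate in (("smaller cache", 0.80), ("bigger cache", 0.95)):
    pct = slowdown_pct(hit_rate, CACHE_NS, DUAL_NS, SINGLE_NS)
    print(f"{label} (hit rate {hit_rate:.0%}): {pct:.1f}% slower on average")
```

With these made-up values, the chip that hits cache more often loses roughly half as much from the slower memory, which is the whole argument for big caches in a nutshell.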

So for CPUs with lots of cache, single-channel DRAM might not be as much of an issue as you think. Especially if the DRAM is fast, with lots of bandwidth, too. There's only one way to find out.


Big cache, fast DRAM

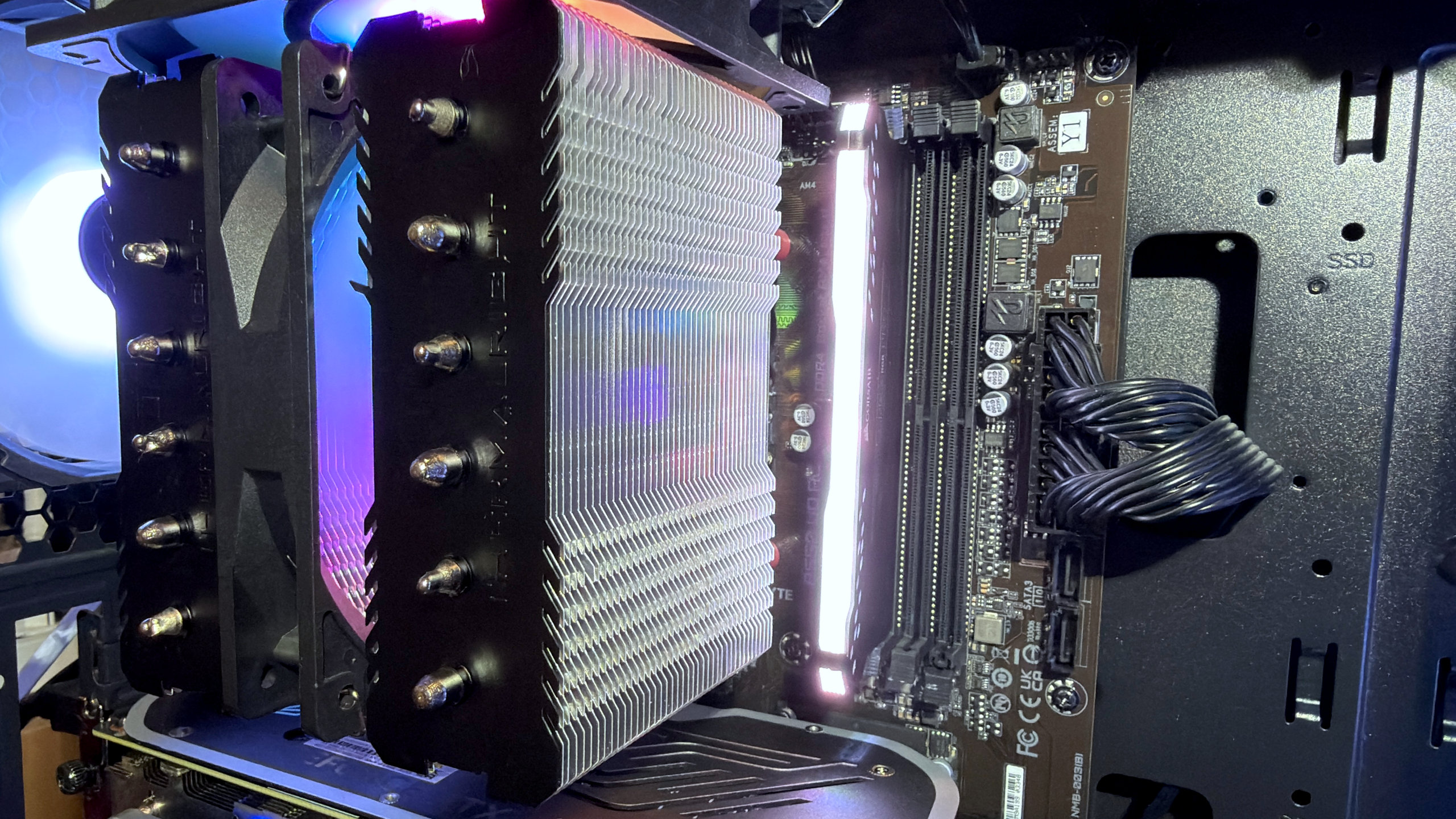

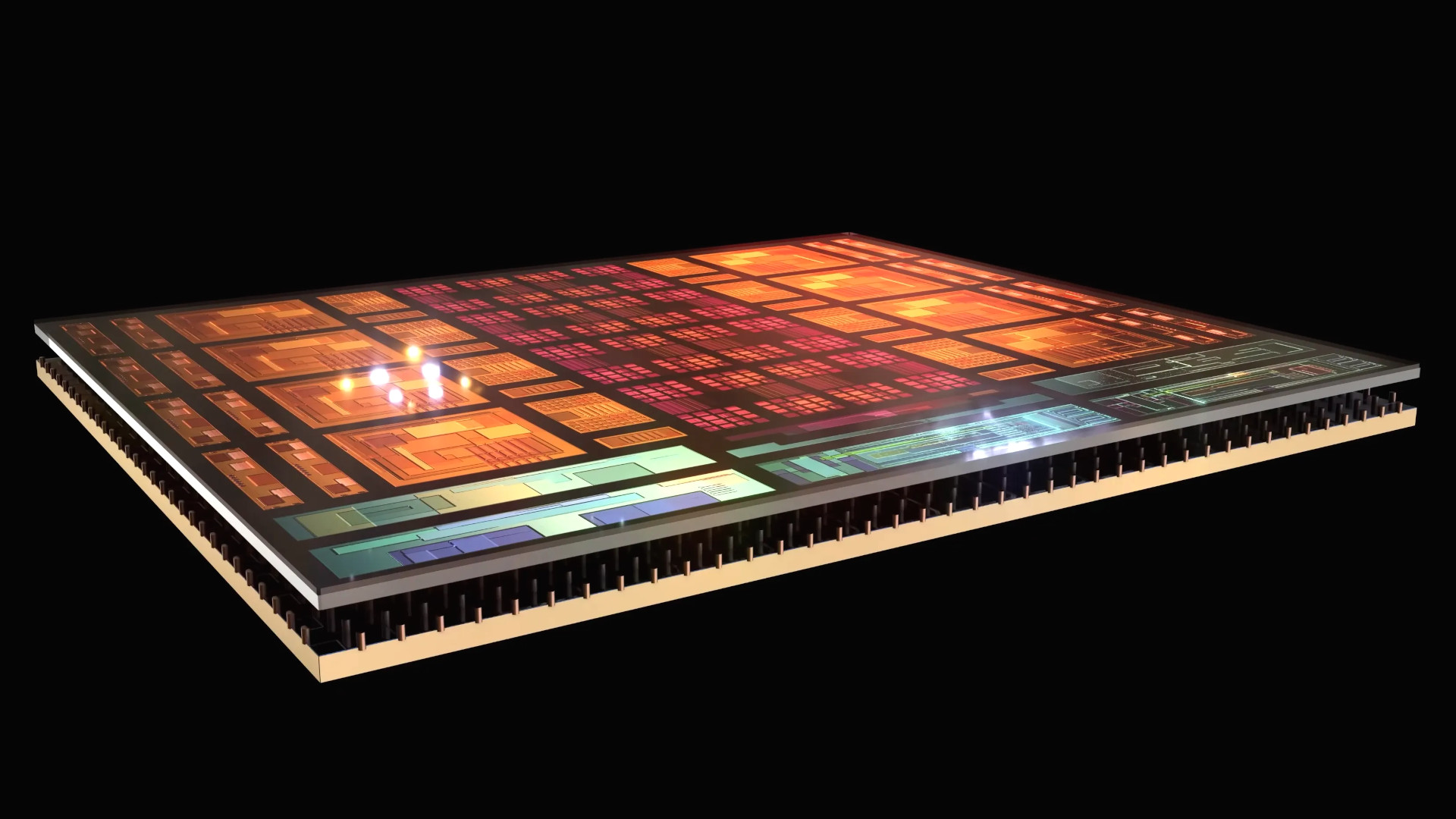

To kick things off, I'm using a Ryzen 9 9950X3D, a processor with an enormous 128 MB of Level 3 cache, split 96+32 across its two core chiplets. Even though games will pretty much only use one of those chiplets, AMD's software ensures that it will be the 96 MB chiplet.

That's been paired with a GeForce RTX 5090 and 32 GB of dual-channel DDR5-6000 CL32, and while that's not the fastest memory you can buy, it's still pretty speedy stuff. Sure, these are some of the most expensive parts you can stuff in a gaming PC, especially the graphics card, but in gaming, this particular CPU isn't a great deal faster than a Ryzen 7 9800X3D or any Zen 5-based processor with 3D V-Cache, for that matter.

The results below are for six different games, all running at 4K native resolution (no upscaling or frame generation), with every graphics option set to the maximum value. Where available, ray tracing or path tracing has been enabled, too. This is to ensure that the game is putting the biggest load it can onto the graphics card, to see what impact single-channel DRAM has in GPU-limited situations.

DRAM channel benchmarks | Max graphics

Ryzen 9 9950X3D, RTX 5090

| Memory | Configuration | Avg FPS | 1% Low FPS |
|---|---|---|---|
| DDR5-6000 | 32 GB dual channel | 28 | 21 |
| DDR5-6000 | 16 GB single channel | 28 | 20 |
| DDR5-6000 | 32 GB single channel | 28 | 20 |

| Memory | Configuration | Avg FPS | 1% Low FPS |
|---|---|---|---|
| DDR5-6000 | 32 GB dual channel | 81 | 35 |
| DDR5-6000 | 16 GB single channel | 76 | 26 |
| DDR5-6000 | 32 GB single channel | 81 | 33 |

| Memory | Configuration | Avg FPS | 1% Low FPS |
|---|---|---|---|
| DDR5-6000 | 32 GB dual channel | 65 | 38 |
| DDR5-6000 | 16 GB single channel | 64 | 38 |
| DDR5-6000 | 32 GB single channel | 64 | 38 |

| Memory | Configuration | Avg FPS | 1% Low FPS |
|---|---|---|---|
| DDR5-6000 | 32 GB dual channel | 142 | 67 |
| DDR5-6000 | 16 GB single channel | 119 | 54 |
| DDR5-6000 | 32 GB single channel | 136 | 64 |

| Memory | Configuration | Avg FPS | 1% Low FPS |
|---|---|---|---|
| DDR5-6000 | 32 GB dual channel | 156 | 73 |
| DDR5-6000 | 16 GB single channel | 140 | 66 |
| DDR5-6000 | 32 GB single channel | 143 | 68 |

| Memory | Configuration | Avg FPS | 1% Low FPS |
|---|---|---|---|
| DDR5-6000 | 32 GB dual channel | 383 | 238 |
| DDR5-6000 | 16 GB single channel | 357 | 202 |
| DDR5-6000 | 32 GB single channel | 374 | 238 |

Well, only half of the games are genuinely GPU-limited in this instance, because the RTX 5090 has no problems churning out the frames in Spider-Man Remastered, Baldur's Gate 3, and Counter-Strike 2. Black Myth: Wukong, F1 25, and Hogwarts Legacy, on the other hand, are somewhat grindy with ray tracing/path tracing active.

I tested each game with the full dual-channel 32 GB, then tested them again with one DIMM removed. Finally, I swapped that single 16 GB stick of DRAM for a 32 GB one. It does have slightly better timings (two cycles snappier across the board), but it's not enough of a difference to really stand out.

Look across each game's results and you'll see that single-channel DRAM doesn't cause much of a performance drop, but only when the game isn't restricted by how much memory is present. For example, the 1% low frame rates in Hogwarts Legacy fall by 26% when using one 16 GB DIMM, but that decrease is only 6% when using a 32 GB stick.
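Those percentages are simple relative drops against the dual-channel baseline. As a quick sketch, the snippet below uses the 1% low figures of 35, 26, and 33 fps, which appear in the second set of max-graphics results and match the Hogwarts Legacy percentages quoted above (that pairing is inferred from the arithmetic, as the results themselves carry no game labels). The helper name `pct_drop` is just for illustration.

```python
def pct_drop(baseline: float, value: float) -> float:
    """Percentage by which value falls short of baseline."""
    return (baseline - value) / baseline * 100


# 1% low frame rates (fps) for the three memory configurations
dual_32gb, single_16gb, single_32gb = 35, 26, 33

# Drop relative to the dual-channel 32 GB kit
print(f"16 GB single channel: {pct_drop(dual_32gb, single_16gb):.0f}% lower")
print(f"32 GB single channel: {pct_drop(dual_32gb, single_32gb):.0f}% lower")

# Same channel count, less memory: this isolates the capacity penalty
print(f"16 GB vs 32 GB, both single channel: {pct_drop(single_32gb, single_16gb):.0f}% lower")
```

The last line is the telling one: with the channel count held constant, most of the loss comes from halving the capacity rather than from losing a channel. Bear in mind that the 32 GB stick has slightly tighter timings, so the comparison isn't perfectly clean.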

Interestingly, Counter-Strike 2 shows practically no difference in performance between single- and dual-channel DRAM, which suggests that the game isn't shifting a great deal of data around during gameplay. Or rather, not enough to trouble the 9950X3D's massive L3 cache and the RTX 5090's enormous VRAM.

Now let's take a look at a polar opposite scenario. I redid every test, but this time at 1080p native resolution and with every graphics option set to the lowest value. Visual niceties, such as ray tracing, were all disabled.

DRAM channel benchmarks | Min graphics

Ryzen 9 9950X3D, RTX 5090

| Memory | Configuration | Avg FPS | 1% Low FPS |
|---|---|---|---|
| DDR5-6000 | 32 GB dual channel | 269 | 129 |
| DDR5-6000 | 16 GB single channel | 260 | 120 |
| DDR5-6000 | 32 GB single channel | 267 | 125 |

| Memory | Configuration | Avg FPS | 1% Low FPS |
|---|---|---|---|
| DDR5-6000 | 32 GB dual channel | 239 | 92 |
| DDR5-6000 | 16 GB single channel | 238 | 85 |
| DDR5-6000 | 32 GB single channel | 238 | 85 |

| Memory | Configuration | Avg FPS | 1% Low FPS |
|---|---|---|---|
| DDR5-6000 | 32 GB dual channel | 380 | 258 |
| DDR5-6000 | 16 GB single channel | 353 | 237 |
| DDR5-6000 | 32 GB single channel | 360 | 237 |

| Memory | Configuration | Avg FPS | 1% Low FPS |
|---|---|---|---|
| DDR5-6000 | 32 GB dual channel | 247 | 136 |
| DDR5-6000 | 16 GB single channel | 246 | 130 |
| DDR5-6000 | 32 GB single channel | 246 | 132 |

| Memory | Configuration | Avg FPS | 1% Low FPS |
|---|---|---|---|
| DDR5-6000 | 32 GB dual channel | 182 | 86 |
| DDR5-6000 | 16 GB single channel | 174 | 70 |
| DDR5-6000 | 32 GB single channel | 171 | 74 |

| Memory | Configuration | Avg FPS | 1% Low FPS |
|---|---|---|---|
| DDR5-6000 | 32 GB dual channel | 645 | 212 |
| DDR5-6000 | 16 GB single channel | 616 | 202 |
| DDR5-6000 | 32 GB single channel | 631 | 211 |

The size of the frame rates clearly indicates that we're now in a more CPU-limited scenario, with the GPU barely being used at all in some cases. Naturally, you'd expect the amount of DRAM bandwidth to matter here, because if the game's frame rate is waiting almost entirely on the CPU to churn out data, anything that will slow it down will surely affect the overall performance.

Once again, the power of AMD's 3D V-Cache comes to the rescue. Everywhere except in Baldur's Gate 3, though, as the 1% low frames took a 19% tumble with a single 16 GB DIMM and a 14% hit when using a 32 GB single-channel stick.

The performance figures don't quite paint a full picture, either, because using single-channel memory caused noticeable microstutters in every game. It was never especially bad, though there were times when I missed the apex of a corner in F1 25 because of a minor stutter.

However, none of this really matters because if you can afford a prebuilt gaming PC with a Ryzen 7 9800X3D, for example, it will almost certainly come with a dual-channel memory kit. And if you're upgrading your current gaming PC to fit such a processor, you'll probably be happy to fork out for a 32 GB twin-DIMM setup.

But what if your budget is considerably lower than this? What about if you're considering getting a more affordable gaming PC that uses an older DDR4-powered Ryzen CPU? Specifically, one that has nowhere near as much cache as a 9950X3D.

Average cache, average DRAM

To answer that question, I grabbed my Ryzen 5 5600X test rig, which has 32 MB of L3 cache. For a six-core, 12-thread processor, that's actually quite a decent amount, though it's in the same ballpark as most six/eight-core CPUs. This chip also uses DDR4 memory, so together, we're down on cache and bandwidth compared to the previous setup.

I slapped 16 GB of DDR4-3200 CL16 and a GeForce RTX 4070 inside, to essentially make it an 'affordable gaming PC', and started another batch of gameplay testing. But rather than pulling one DIMM out, I just retested everything with a single 16 GB DIMM, as 8 GB of system memory would seriously affect the performance of the games.

For settings, I used a resolution of 1440p but with DLSS/FSR Quality enabled, so the actual rendering resolution is less than 1080p. This does force games to become somewhat CPU-limited, so I also set every game to use its High graphics preset. I know CS2 gamers would never do this, but we've already seen that this particular game isn't especially sensitive to memory bandwidth.

DRAM channel benchmarks

Ryzen 5 5600X, RTX 4070

| Memory | Configuration | Avg FPS | 1% Low FPS |
|---|---|---|---|
| DDR4-3200 | 16 GB dual channel | 116 | 56 |
| DDR4-3200 | 16 GB single channel | 103 | 45 |

| Memory | Configuration | Avg FPS | 1% Low FPS |
|---|---|---|---|
| DDR4-3200 | 16 GB dual channel | 92 | 35 |
| DDR4-3200 | 16 GB single channel | 81 | 29 |

| Memory | Configuration | Avg FPS | 1% Low FPS |
|---|---|---|---|
| DDR4-3200 | 16 GB dual channel | 188 | 125 |
| DDR4-3200 | 16 GB single channel | 171 | 98 |

| Memory | Configuration | Avg FPS | 1% Low FPS |
|---|---|---|---|
| DDR4-3200 | 16 GB dual channel | 107 | 64 |
| DDR4-3200 | 16 GB single channel | 104 | 57 |

| Memory | Configuration | Avg FPS | 1% Low FPS |
|---|---|---|---|
| DDR4-3200 | 16 GB dual channel | 69 | 41 |
| DDR4-3200 | 16 GB single channel | 58 | 32 |

| Memory | Configuration | Avg FPS | 1% Low FPS |
|---|---|---|---|
| DDR4-3200 | 16 GB dual channel | 281 | 100 |
| DDR4-3200 | 16 GB single channel | 267 | 94 |

And now we can see what happens when we don't have lots of nice cache to lean on, nor the large amount of memory bandwidth afforded by DDR5. With this setup, single-channel DRAM takes a fair slice off the overall performance, and a pretty big chunk off the 1% lows.
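To see how big that slice and that chunk are, the six Ryzen 5 5600X results above can be boiled down to a pair of summary figures. This is a rough sketch: the values are copied from the results in the order they appear, and the unweighted mean across games is just one reasonable way to summarize them.

```python
from statistics import mean

# (dual avg, dual 1% low, single avg, single 1% low) in fps,
# one tuple per game, in the order the results appear above
results = [
    (116, 56, 103, 45),
    (92, 35, 81, 29),
    (188, 125, 171, 98),
    (107, 64, 104, 57),
    (69, 41, 58, 32),
    (281, 100, 267, 94),
]


def pct_drop(baseline: float, value: float) -> float:
    """Percentage by which value falls short of baseline."""
    return (baseline - value) / baseline * 100


# Per-game drops when going from dual-channel to single-channel
avg_drops = [pct_drop(d_avg, s_avg) for d_avg, _, s_avg, _ in results]
low_drops = [pct_drop(d_low, s_low) for _, d_low, _, s_low in results]

print(f"Average FPS: mean drop {mean(avg_drops):.1f}%, worst {max(avg_drops):.1f}%")
print(f"1% low FPS:  mean drop {mean(low_drops):.1f}%, worst {max(low_drops):.1f}%")
```

That works out to a mean drop of roughly 9% in average frame rates and about 16% in the 1% lows, with the worst-hit game losing around 22% of its 1% low performance.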

The exception to this is Counter-Strike 2, as expected, and the above results really do show that the game just wants a fast GPU to fling out the frames, and a handful of fast CPU cores to feed the graphics chip with instructions. The amount of data passing between the CPU and system memory just isn't enough to trouble single-channel DRAM.

Every other game clearly didn't like it, and my results show that you're going to be leaving a lot of potential performance on the table if you try to save money by using one stick of memory in your upgrade. It also means that you need to look very carefully at any prebuilt gaming PC to see if it's being sold with dual-channel DRAM or not.

This is especially true if the rig uses something like a Ryzen 7 8700F. That uses DDR5, but the chip only has 16 MB of L3 cache, so just as with the 5600X, it would be heavily affected by using single-channel memory.

Coping with single-channel DRAM

If you've already bought a prebuilt gaming PC and it has just one stick of memory installed in its motherboard, then don't panic. Yes, we've seen that if the CPU doesn't have lots of cache and access to plenty of DRAM bandwidth, games will run slower, but there is one thing you can do to help offset it. Or rather, hide it.

It's worth noting that the performance results for the Ryzen 5 5600X setup were mostly limited by the CPU, due to the use of upscaling dropping the rendering resolution right down.

However, if you disable that entirely or increase the graphics settings, you can shift the performance barrier more towards the graphics card. The overall frame rates will be lower, but four of my six tested games had plenty of capacity to drop some frames per second, and still run at a comfortable 60 fps.
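A simplified way to think about this is that each frame takes as long as the slower of the CPU and the GPU. The sketch below uses that model with made-up frame times and an assumed 15% CPU-side penalty for single-channel memory; none of these values are measurements, and a real game won't behave this neatly (the microstutters mentioned earlier, for one, won't show up in a model like this).

```python
def fps(cpu_ms: float, gpu_ms: float) -> float:
    """Frame rate when the slower of the CPU and GPU sets the frame time."""
    return 1000.0 / max(cpu_ms, gpu_ms)


def single_channel_loss_pct(cpu_ms: float, gpu_ms: float, cpu_penalty: float = 1.15) -> float:
    """Percentage of frame rate lost if single-channel DRAM stretches CPU time.

    cpu_penalty is a hypothetical multiplier on the CPU's per-frame time.
    """
    before = fps(cpu_ms, gpu_ms)
    after = fps(cpu_ms * cpu_penalty, gpu_ms)
    return (before - after) / before * 100


CPU_MS = 8.0  # hypothetical CPU time per frame

# Low settings with upscaling: the GPU finishes early, so the CPU is the limit
print(f"GPU at 5 ms:  {single_channel_loss_pct(CPU_MS, 5.0):.1f}% slower")

# Higher settings, no upscaling: the GPU is now the limit and hides the CPU hit
print(f"GPU at 14 ms: {single_channel_loss_pct(CPU_MS, 14.0):.1f}% slower")
```

In the first case, the full memory penalty lands on the frame rate; in the second, the frame rate is lower overall, but the single-channel penalty disappears behind the GPU's workload.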

In other words, the more you can make the GPU the limiting factor in a game's performance, the lower the impact from using single-channel memory will be. It's a sign of the times that I'm having to write all of this, especially when affordable 32 GB DDR4 and DDR5 kits existed less than a year ago, but until those days return once more, PC gamers will just have to use every trick in the book to get by.


Nick, gaming, and computers all first met in the early 1980s. After leaving university, he became a physics and IT teacher and started writing about tech in the late 1990s. That resulted in him working with MadOnion to write the help files for 3DMark and PCMark. After a short stint working at Beyond3D.com, Nick joined Futuremark (MadOnion rebranded) full-time, as editor-in-chief for its PC gaming section, YouGamers. After the site shutdown, he became an engineering and computing lecturer for many years, but missed the writing bug. Cue four years at TechSpot.com covering everything and anything to do with tech and PCs. He freely admits to being far too obsessed with GPUs and open-world grindy RPGs, but who isn't these days?
