The Massachusetts Institute of Technology has been experimenting with its computers' AI, specifically the way they deal with the meaning of words. You might think that the best way to analyse this kind of thing would be a human-to-PC conversation, like in Short Circuit. That's not the case.

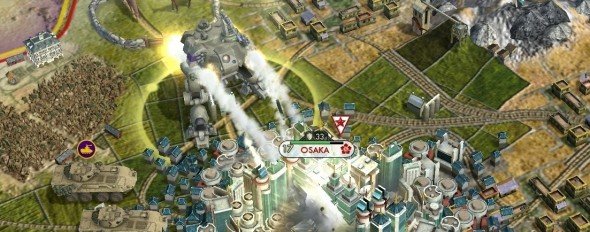

Instead, the boffins handed over PC classic Civilization and let the AI get on with it. It sucked - winning a mere 46 per cent of the time. The difficulty setting the machines were playing on has not been specified.

Then the researchers handed over the instructions and gave the PCs a "machine-learning system so it could use a player's manual to guide the development of a game-playing strategy." They didn't teach the PCs how to play Civ, but they taught them how to read about it. The system had no pre-programmed notion of turn-based strategy or even what the objects in the world represented. The system was a noob.

The AI continued to button-mash but, this time around, when words appeared on-screen the software compared them to text in the manual. It searched for other related words close by and tried to guess what it all meant. The computer started "reading" the manual and implementing tactics in-game, just like we used to before the days of streamlined tutorials. Its win ratio was boosted from 46 per cent to a reasonable 79 per cent.
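For the curious, here's a toy Python sketch of that "look it up in the manual" idea. To be clear, this is not MIT's code: the manual text, the function names and the simple word-counting score are all made up for illustration, and the real system is certainly far more sophisticated. It just shows the gist: take a word seen on-screen, find it in the manual, collect the words sitting close by, and prefer actions that show up among them.

```python
import re
from collections import Counter

# Hypothetical three-sentence "manual", invented for this example.
MANUAL = (
    "Build your city near a river. "
    "Settlers can build a city on grassland. "
    "Irrigate land near a river to grow more food."
)

def tokenize(text):
    """Lowercase the text and split it into plain words."""
    return re.findall(r"[a-z]+", text.lower())

def nearby_words(manual_tokens, word, window=3):
    """Count the words found within `window` positions of `word`."""
    counts = Counter()
    for i, tok in enumerate(manual_tokens):
        if tok == word:
            lo = max(0, i - window)
            hi = min(len(manual_tokens), i + window + 1)
            for j in range(lo, hi):
                if j != i:
                    counts[manual_tokens[j]] += 1
    return counts

def rank_actions(screen_words, actions, manual_text, window=3):
    """Order candidate actions by how often they appear near on-screen words."""
    tokens = tokenize(manual_text)
    context = Counter()
    for w in screen_words:
        context += nearby_words(tokens, w.lower(), window)
    # Actions the manual never mentions near these words score zero.
    return sorted(actions, key=lambda a: context[a], reverse=True)

if __name__ == "__main__":
    # The game shows "river"; which of our made-up actions looks promising?
    print(rank_actions(["river"], ["attack", "build", "irrigate"], MANUAL))
```

With the default window, "build" comes out on top because the made-up manual mentions it within three words of "river". A real system would still have to try the move in-game and see whether it actually helps.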

Associate professor of computer science and electrical engineering, Regina Barzilay, offered insight into why they used a game manual to prove their point. She reckons game manuals have “very open text. They don't tell you how to win. They just give you general advice and suggestions and you have to figure out a lot of other things on your own.”

Civ was picked because it's a really fun game, and they didn't want the computers to get bored during the testing.

Not really. The researchers picked Civ because “every action that you take doesn't have a predetermined outcome, because the game or the opponent can randomly react to what you do.” It forced the computer to develop a “technique to handle very complex scenarios that react in potentially random ways.”


These kinds of systems could make developers' jobs a lot easier. Computers could start automatically creating AI algorithms that perform better than the ones that we stupid humans spend months creating. Alternatively, developers could just write the manual and hand it over. Maybe.

What's the best AI you've ever played against? Most of us vote for SupCom's Sorian AI mod. Craig goes for the original F.E.A.R. - but he's never read an instruction manual in his life.

(via Reddit)
