Could a new generation of dedicated AI chips burst Nvidia's bubble and do for AI GPUs what ASICs did for crypto mining?

Cheaper, more efficient ASICs for AI are coming.

A Chinese startup founded by a former Google engineer claims to have created a new ultra-efficient and relatively low cost AI chip using older manufacturing techniques. Meanwhile, Google itself is now reportedly considering whether to make its own specialised AI chips available to buy. Together, these chips could represent the start of a new processing paradigm which could do for the AI industry what ASICs did for bitcoin mining.

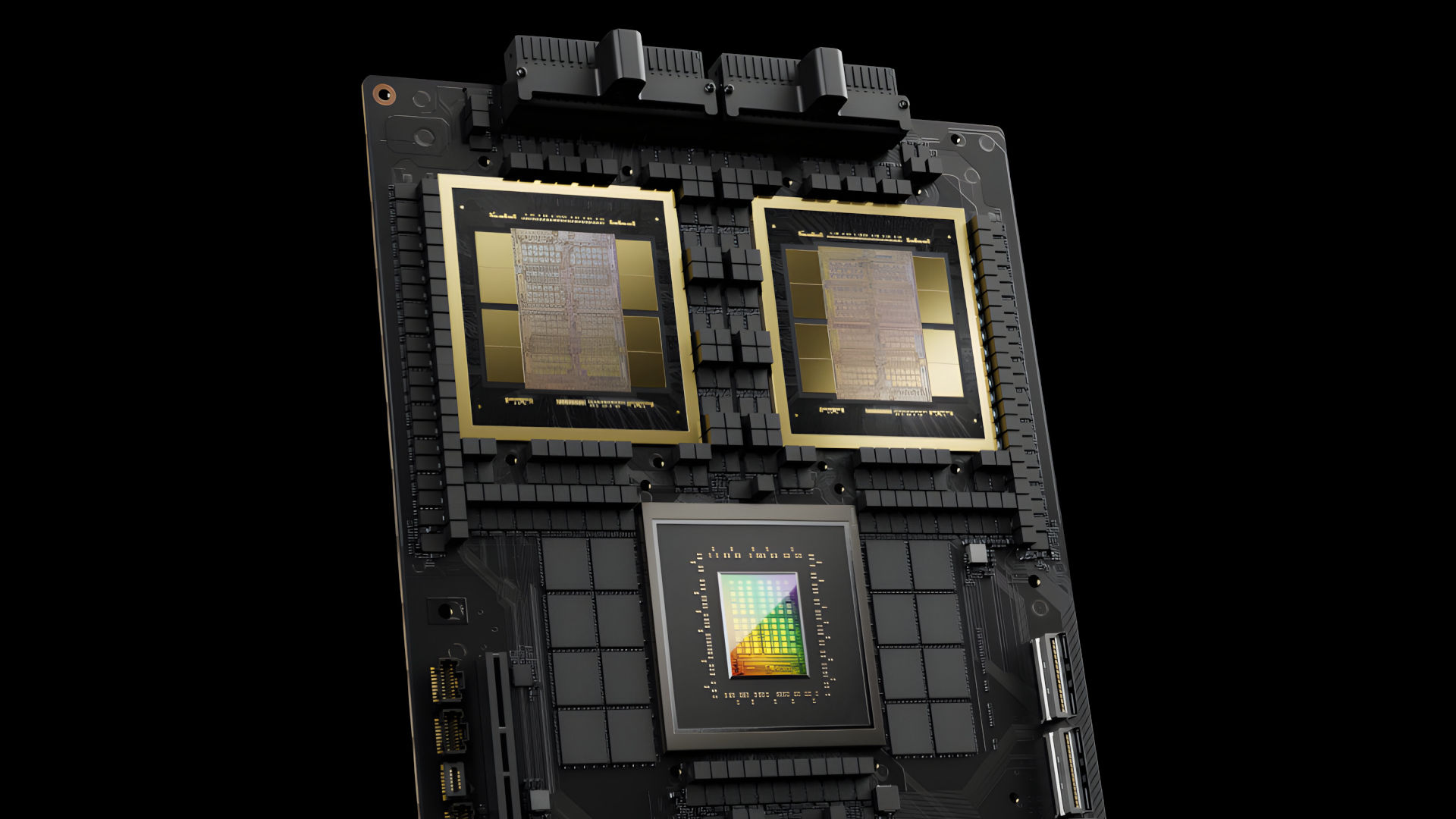

In both cases, we're talking about chips broadly known as TPUs or tensor processing units. They're a kind of ASIC, or application-specific integrated circuit, that's designed at hardware level to do a very narrow set of AI-related tasks very well. By contrast, Nvidia's GPUs, even its AI-specific GPUs as opposed to its gaming GPUs, are more general purpose in nature.

The parallel here is bitcoin mining, which used to be done on graphics cards before a new generation of dedicated mining chips, also ASICs, came along and did the same job much more efficiently. Could the same transition from more general purpose GPUs to ASICs hit the AI industry?

The Chinese startup is Zhonghao Xinying. Its Ghana chip is claimed to offer 1.5 times the performance of Nvidia's A100 AI GPU while reducing power consumption by 75%. And it does that courtesy of a domestic Chinese chip manufacturing process that the company says is "an order of magnitude lower than that of leading overseas GPU chips."

By "an order of magnitude lower," the company presumably means well behind in technological terms, given that China's home-grown chip manufacturing is probably a couple of generations behind the best that TSMC in Taiwan can offer, and behind even what the likes of Intel and Samsung can offer, too.

While the A100 is an old AI GPU dating from 2020 and thus much slower than Nvidia's latest Blackwell GPUs, it's the efficiency and cost gains of the new Chinese ASIC that will be most appealing to the AI industry.
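To put the claimed efficiency gain in perspective, here's a rough back-of-the-envelope sketch. It assumes the 1.5x performance figure and the 75% power reduction apply to the same workload, and that "reducing power consumption by 75%" means the chip draws a quarter of the A100's power. Neither assumption is confirmed by the company, and the function name is purely illustrative.

```python
def perf_per_watt_gain(relative_performance: float, power_reduction: float) -> float:
    """Return performance per watt as a multiple of the baseline chip.

    relative_performance: claimed speed versus the baseline (1.5 means 1.5x).
    power_reduction: claimed fractional cut in power draw (0.75 means 75% less).
    Both inputs are vendor claims, not measured results.
    """
    relative_power = 1.0 - power_reduction
    if relative_power <= 0:
        raise ValueError("power_reduction must be below 1.0")
    return relative_performance / relative_power


# Claimed figures for the Chinese ASIC versus Nvidia's A100.
gain = perf_per_watt_gain(1.5, 0.75)
print(f"Claimed performance per watt: {gain:.1f}x the A100")
```

On those assumptions, the claims work out to roughly six times the A100's performance per watt, which is why efficiency rather than raw speed is the headline number here.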

As for Google, it has been making TPUs since 2017. But the more recent explosion in demand for AI hardware is reportedly enough to make Google consider selling TPUs to customers as opposed to merely hiring out access.

According to a report on The Information (via Yahoo Finance), Google is in talks with several customers, including a possible multi-billion-dollar deal with Meta, and has ambitions to "capture" 10% of Nvidia's AI revenue. Google's TPUs are also more narrowly defined and likely more power efficient than Nvidia's AI GPUs.

Of course, there are plenty of barriers to the adoption of these new AI ASICs. Much of the AI industry is heavily invested in Nvidia hardware and software tools. Moving to a totally new ASIC-based platform would mean plenty of short term pain.

But what with Nvidia said to be charging $45,000 to $50,000 per B200 GPU, companies arguably have every reason to suck up that pain for long term gains.

Moreover, if more efficient ASICs do make inroads into the AI market, that could reduce demand for the most advanced manufacturing processes. And that would be good news for all kinds of chips, including GPUs for PC gaming.

Right now, we're all effectively paying more for our graphics cards because of the incredible demand for large, general purpose AI GPUs built on the same silicon as gaming GPUs. If at least a chunk of the AI market shifted to this new ASIC paradigm, well, the whole supply-and-demand equation would shift.

Heck, maybe even Nvidia would view gaming as important, again. We can but hope!

Jeremy has been writing about technology and PCs since the 90nm Netburst era (Google it!) and enjoys nothing more than a serious dissertation on the finer points of monitor input lag and overshoot followed by a forensic examination of advanced lithography. Or maybe he just likes machines that go “ping!” He also has a thing for tennis and cars.
