The price of graphics cards really hammers home my biggest issue with transhumanism

We don't just need better gaming PCs, we're working towards better versions of ourselves—for a price.

You're plugging in a new gaming mouse. It's swift, accurate, it makes you feel in control. There you are, interfacing with an immensely powerful piece of technology that gives you access to an incomprehensibly vast web of ideas, and power enough to stream entire universes into your eyeballs. This is the transhumanist dream, or at least one of them: to augment yourself into a more impressive specimen with the wonders wrought by new technology.

But there's a problem with this need for bigger, better tech—it feeds the corporate fires and pushes up prices. As is the case with the latest graphics card offerings.

Whether you're pushing for faster response times, more pixels per inch, or a more impressive refresh rate, it's likely you're caught up in your own special flavour of transhuman idealism. And that need to feel enhanced will undoubtedly see companies out there poised to take advantage, which means one of the biggest arguments against transhumanism, at least for me, is coming back with a vengeance: the fear that all this expensive augmentation might alienate those in lower income brackets.

We're not talking about dumping your physical body, joining with the singularity, and living forever just yet. And I'm not here to argue that capitalism is bad (that's a conversation for another day). But in the wake of this desperate need to keep up with the graphical intensity of upcoming games, hot damn are graphics cards getting expensive.

From the dawn of gaming, we've been scrambling for ways to optimise our experience. As graphics have improved, we've been called to upgrade our machines so they can not only handle new games at higher graphics settings, but also push out incomprehensibly fast frame rates to give us a fighting chance in today's competitive games. And for the most part we've been graced with a string of graphics card offerings within the realms of affordability.

Nvidia and AMD's latest generation of GPUs, on the other hand, has us wondering "Where the heck do GPU prices go from here?" When you've got the RTX 4080 leaping to $1,199 from the $699 MSRP of the previous generation's RTX 3080, the price of improving your setup so you can reach the frame rates you're after is getting silly.

The spike in demand due to global, pandemic-related lockdowns, and the subsequent second coming of cryptocurrency mining, meant GPUs were hot property, and resellers, more than manufacturers, made extra bank off that. Obviously Nvidia, and to a lesser extent AMD, made way more money from all of the cards suddenly flying off the shelves, but a greedy eye on the further inflated prices at retail and from resellers has led to a long-term pricing hangover we're still dealing with.

The RTX 3080 Ti, with its 72% increase in price and 18% increase in core count over the RTX 3080, was a warning of what was in store for us with this latest generation of cards.
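For anyone who wants to check the maths, here's a rough sketch in Python. It uses only the figures quoted above (the $699 and $1,199 MSRPs, and the 72% and 18% increases); the function and variable names are made up purely for illustration, and the "price per core" figure is a crude ratio rather than any kind of official metric.

```python
def pct_increase(old: float, new: float) -> float:
    """Percentage increase going from `old` to `new`."""
    return (new - old) / old * 100.0


# MSRPs quoted in the article, in US dollars.
rtx_3080_msrp = 699.0
rtx_4080_msrp = 1199.0

# Generational price jump from the RTX 3080 to the RTX 4080.
price_jump = pct_increase(rtx_3080_msrp, rtx_4080_msrp)

# RTX 3080 Ti vs RTX 3080, using the quoted 72% price and 18% core increases.
price_ratio = 1.72
core_ratio = 1.18

# How much more each core costs: price grew faster than core count.
per_core_increase = (price_ratio / core_ratio - 1.0) * 100.0

print(f"RTX 3080 -> RTX 4080 price jump: {price_jump:.1f}%")
print(f"RTX 3080 Ti price per core vs RTX 3080: +{per_core_increase:.1f}%")
```

Put another way, going by those quoted figures the RTX 3080 Ti asked a good deal more money for each extra core, not just more money for more cores.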

Of course, it's not all down to our increased demand for graphics cards; there are other factors at play here. One of the main arguments is that wafer costs from TSMC are pushing up the prices. And though the actual figures are unclear, that still wouldn't explain things fully, since we've only seen a $100 increase for the RTX 4090 over its predecessor.

As for AMD cards, it's demand (or lack thereof) that's seen prices slip below MSRP soon after the launch of its latest GPU series. Which gives you an idea of how strange it is that even AMD—the minority player—deigns to price cards the way it does.

Sure, they're cheaper than Nvidia's new generation, but not as powerful. So the question remains: Why are GPUs so expensive? As our Jeremy puts it, it all seems like a "concerted effort to push GPUs upmarket."

And while fallout from the silicon shortage of yesteryear has mostly cleared up, and supply for this stretch of GPUs hasn't been an issue, Moore's Law (the observation that the number of transistors on a chip doubles roughly every two years, which has long driven down the cost of a given level of performance) is indeed going down the tube.

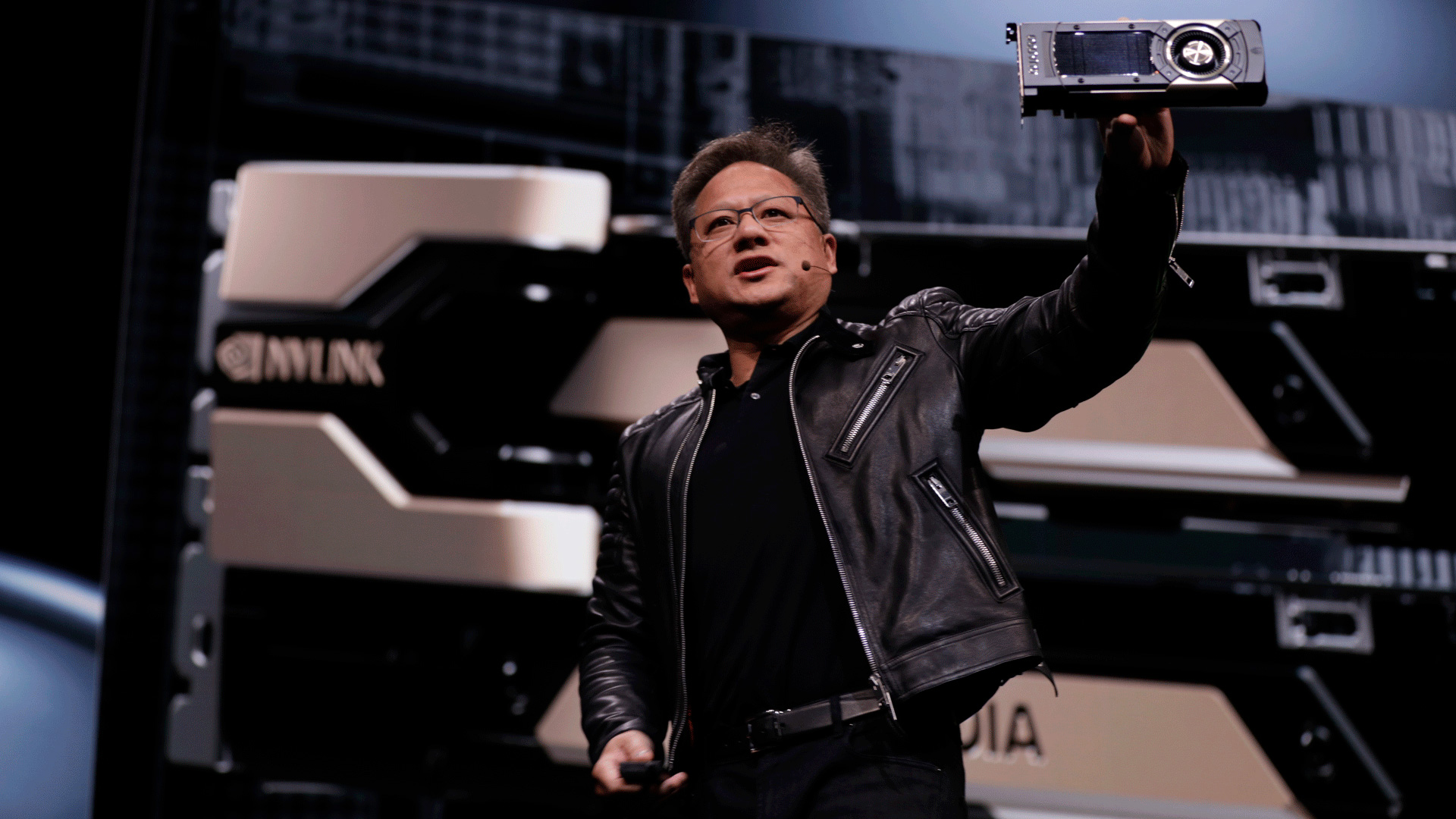

As Nvidia CEO Jensen Huang puts it, "the idea that the chip is going to go down in cost over time, unfortunately, is a story of the past."

AMD tries to slide in on the argument, saying that Moore's Law isn't dead, it's just that the countless improvements to transistor technology mean "they're more expensive."

However they wish to spin it, this latest generation of cards still seems needlessly expensive. Nvidia is using far less complex silicon, far smaller GPUs, smaller memory buses, and positioning them higher than equivalent chips of the previous generation. They're simply using a lot more L2 cache and taking advantage of a newer node's ability to be clocked higher to deliver the performance gains. Along with DLSS 3 and Frame Generation, of course.

AMD, too, has moved on to a chiplet design for its graphics silicon. As well as the future potential of being able to iterate more chiplets in one overall GPU, the ethos behind the move will be as it was in the CPU space: driving down manufacturing costs. Making one simple processing chiplet, but being able to spin it out for multiple iterations, should cut AMD's costs down the line.

That means manufacturing costs are either lower now, or will be significantly lower in time, and the margins will be far higher, too, because everyone is still charging more money.

Whatever the reason, whether it be demand, manufacturing cost, or technological complexity, we're stepping in a very scary, somewhat elitist direction. One that could see some of us relegated to only playing older games, low-power indies alone, or worse: potato graphics forever. And while it's not the same level of alienation as with building cryogenic freezing chambers, so the rich can be resurrected when technology allows it, it's maybe time to put a foot down on the increasing price of GPUs, past the point of inflation of course.

If you're still desperate to get your hands on the latest GPUs, I'd take a look at the cheap graphics card deals page we have going. We're doing our small part in the fight against extortionate graphics card prices, at least.

Having been obsessed with game mechanics, computers and graphics for three decades, Katie took Game Art and Design up to Masters level at uni and has been writing about digital games, tabletop games and gaming technology for over five years since. She can be found facilitating board game design workshops and optimising everything in her path.
