ChatGPT is 'so wildly incorrect' that an Australian whistleblower is suing it for defamation

ChatGPT mistakenly identified a Melbourne man as the perpetrator of the very crime he uncovered.

We all know ChatGPT gets stuff wrong. As in very wrong, and all the time. While that can be amusing, it's less funny if ChatGPT mistakenly identifies you as a criminal. And it's less funny still if you were in fact the person who originally uncovered the crime in question.

Indeed, you might find it so unfunny, you decide to sue for defamation. Which is exactly what Brian Hood, a politician based in Melbourne, Australia, is doing.

ChatGPT apparently identified Hood, now the mayor of a local authority in northwest Melbourne, as one of the central perpetrators of the so-called Securency bribery scandal. The chatbot describes how Hood pleaded guilty to the charges in 2012 and was sentenced to two and a half years in prison.

And it still does if you ask ChatGPT about the scandal today.

In fact, it was Hood who actually alerted authorities to the bribery going on at a banknote printing business called Securency, which was then a subsidiary of the Reserve Bank of Australia. At the time, Victorian Supreme Court Justice Elizabeth Hollingworth commended Hood for his "tremendous courage" in coming forward.

Hood apparently lost his job after blowing the whistle and suffered years of anxiety as the legal case dragged on and on.

When Hood learned of the false information being propagated by ChatGPT, he says he "felt a bit numb. Because it was so incorrect, so wildly incorrect, that just staggered me. And then I got quite angry about it."

Reportedly, Hood's lawyers have begun initial defamation proceedings against OpenAI but have yet to hear back.

Given the routine errors the chatbot makes, it's easy to see how this happened. It's correct that Hood's name is associated with the crime. But ChatGPT has got it all back to front, as anyone who has used the chatbot knows it does all too easily.

That's a bit annoying if you're asking it to explain the difference between USB 3.2 and USB 3.2x2, but rather more concerning when it's disseminating out-and-out defamatory falsehoods about real, living people.

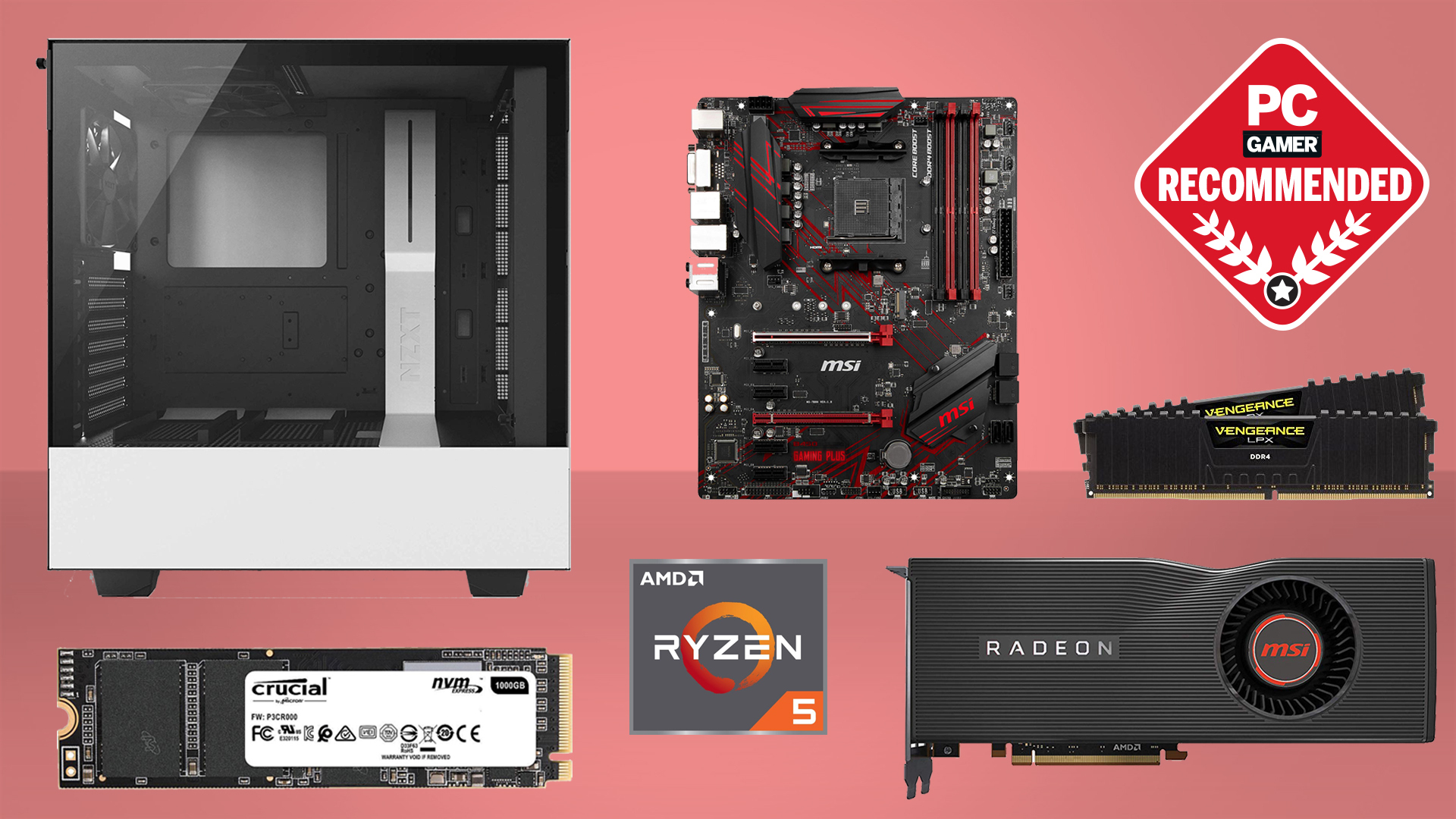

The case looks like it will be the first to test the basic principle of whether an AI chatbot's creators can be held liable for what it churns out.

Hood's argument will no doubt center on the fact that ChatGPT has been rolled out for wide public use and that its creators at OpenAI have explicitly stated that the model is experimental and prone to errors.

OpenAI, for its part, will probably point to the disclaimer on the ChatGPT interface, which states that the bot "may produce inaccurate information about people, places, or facts."

Either way, add this to the long and growing list of new, interesting and unintended problems that these new chatbots are creating. It's such a huge workload, we'll probably need to use an AI to clean it all up.

Jeremy has been writing about technology and PCs since the 90nm Netburst era (Google it!) and enjoys nothing more than a serious dissertation on the finer points of monitor input lag and overshoot followed by a forensic examination of advanced lithography. Or maybe he just likes machines that go “ping!” He also has a thing for tennis and cars.
