Nvidia’s Drive PX 2: The Shape of Things to Come

CES always brings out some interesting products, and while many of them aren’t directly related to our normal Maximum PC coverage, there’s plenty of overlap. Case in point is Nvidia’s new Drive PX 2, which the company is billing as a one-stop solution for in-car artificial intelligence, deep learning, and autonomous driving. Perhaps more interesting is what Nvidia doesn’t directly state, but we’ll get to that in a moment. First, let’s dissect all those buzzwords to see what’s really going on.

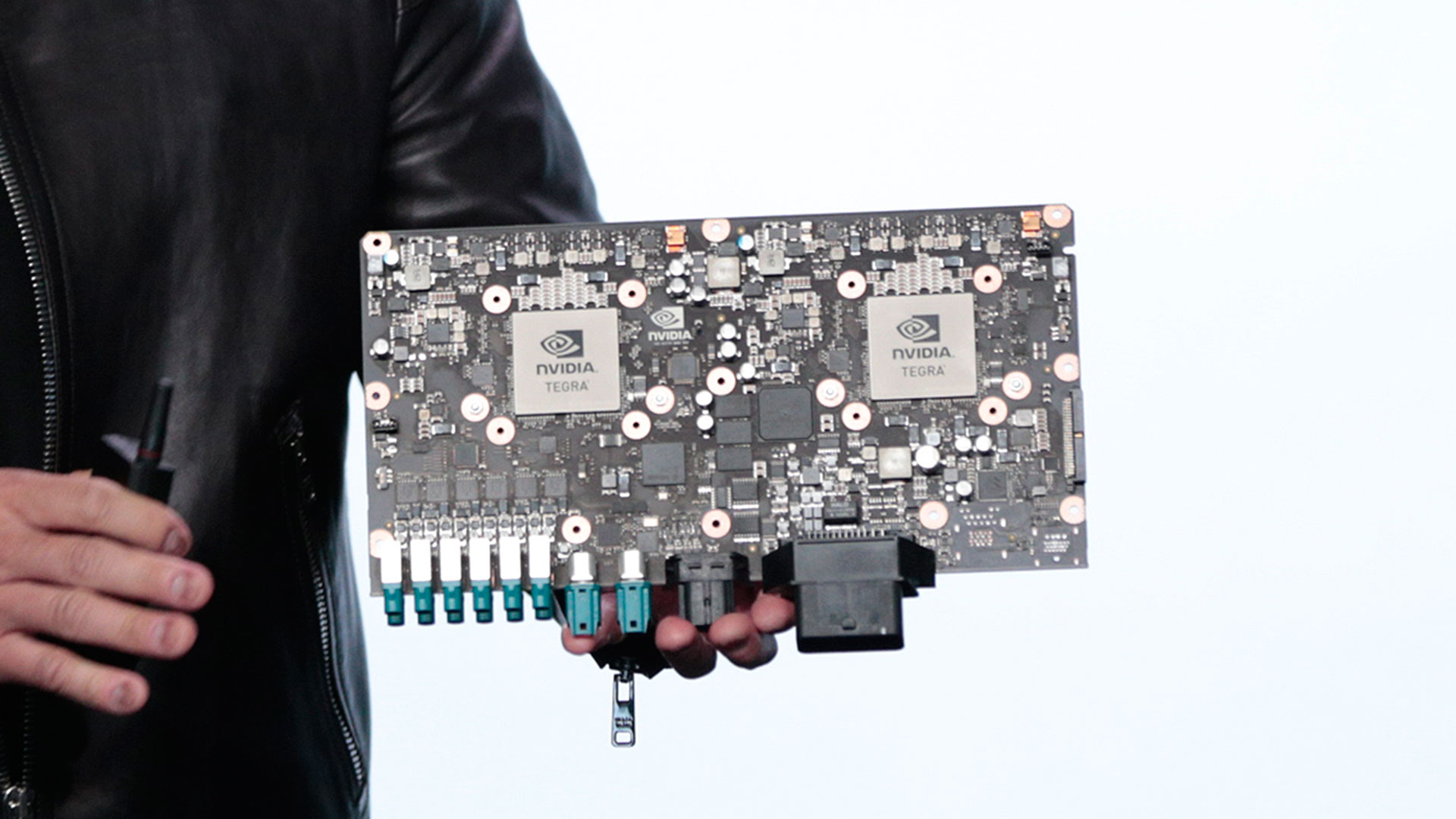

The Drive PX 2 is the follow-up to last year’s Drive PX, which used two Tegra X1 processors to provide some of the same features being touted today. Interestingly, Tegra X1 took a long time to make it into a tablet, and even then it has only appeared in the 10-inch Pixel C. This is presumably because its power requirements are high, though it also showed up in the Nvidia Shield box for use with TVs/home theaters. In terms of core hardware, Tegra X1 features four high-performance ARM Cortex-A57 cores and four low-power Cortex-A53 cores, then pairs those with a Maxwell GPU sporting 256 CUDA cores.

Drive PX 2 significantly ups the ante by using two next-generation Tegra processors (Nvidia hasn’t provided a specific name yet) that have four ARM Cortex-A57 cores along with two Denver cores. Denver, if you’ll recall, was used in the second version of the previous-generation Tegra K1, and while it wasn’t always faster than the non-Denver TK1, it did excel in certain tasks. Along with the CPU cores, this future Tegra part will also feature Pascal GPU assets, though Nvidia doesn’t specify how many CUDA cores will be present.

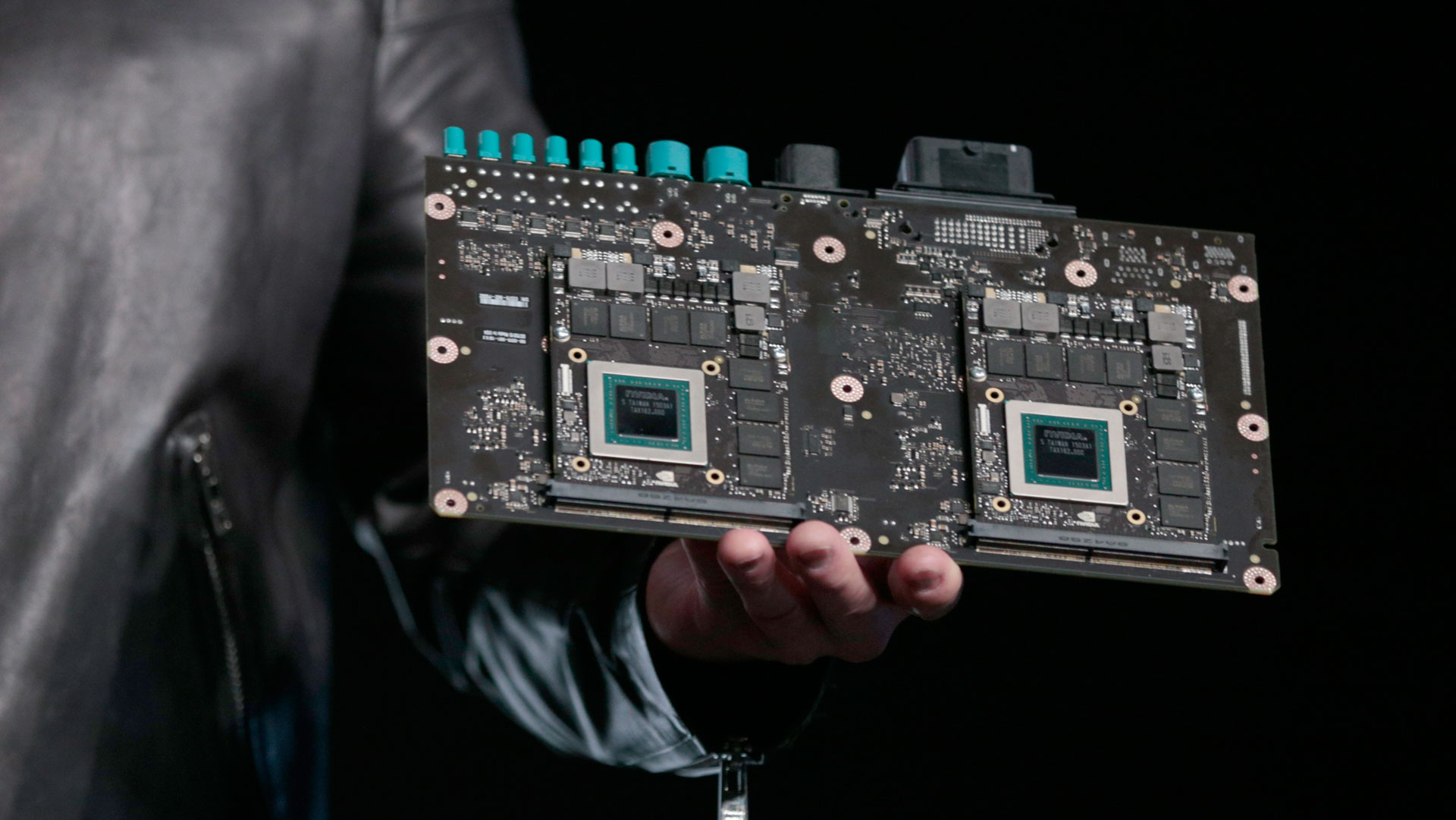

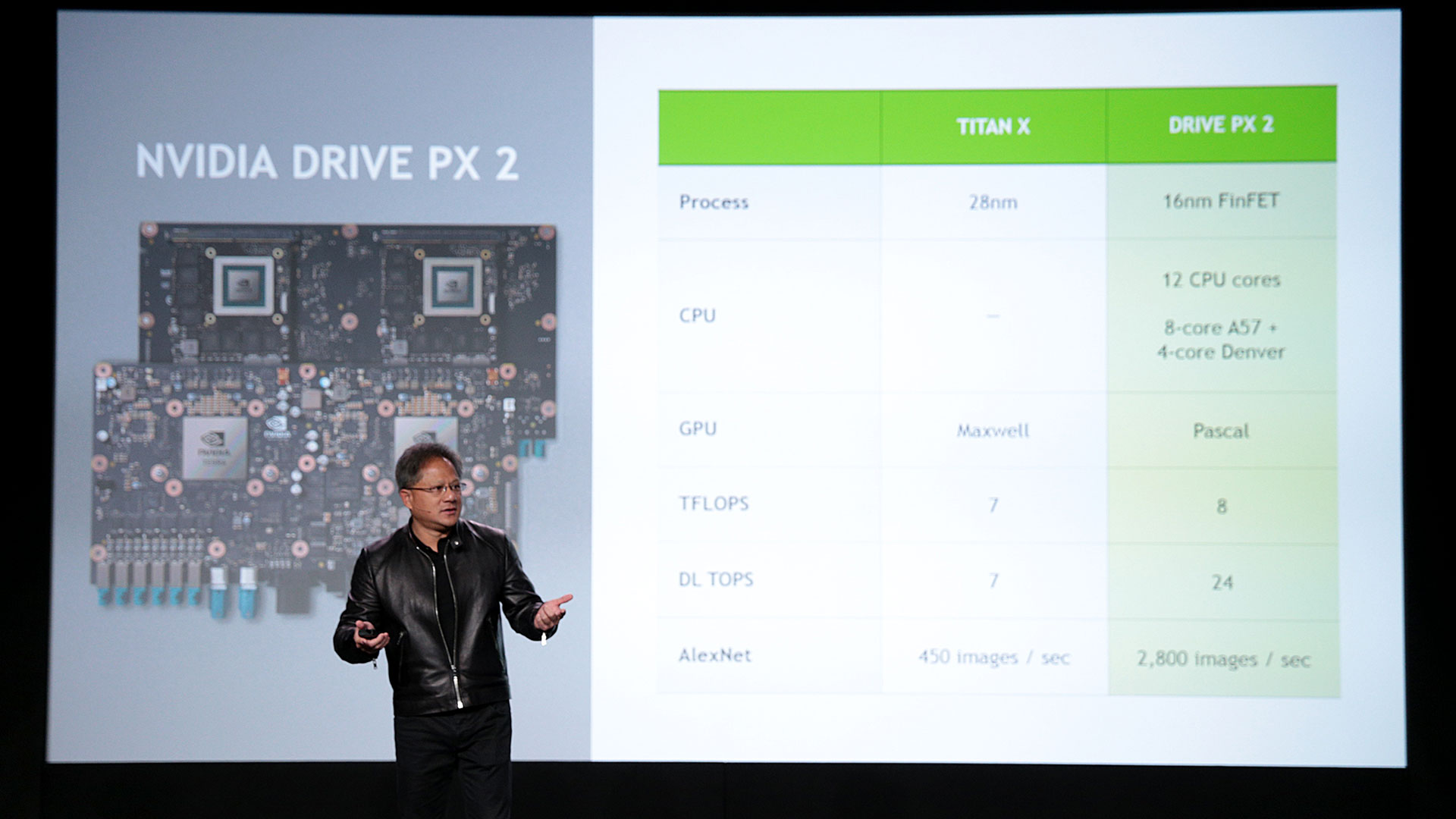

So far so good, but where Drive PX 2 really leaves the original behind is in its integration of two additional Pascal discrete GPUs. It seems Jen-Hsun may be holding a mockup of the final Drive PX 2 hardware, as the GPUs at least appear nearly identical to the GTX 980 for Notebooks MXM modules, but we’ll let that pass here; final hardware will certainly have Pascal GPUs. All told, the PX 2 will deliver around 8 TFLOPS of performance, whereas a single Titan X GPU is capable of “only” 7 TFLOPS.

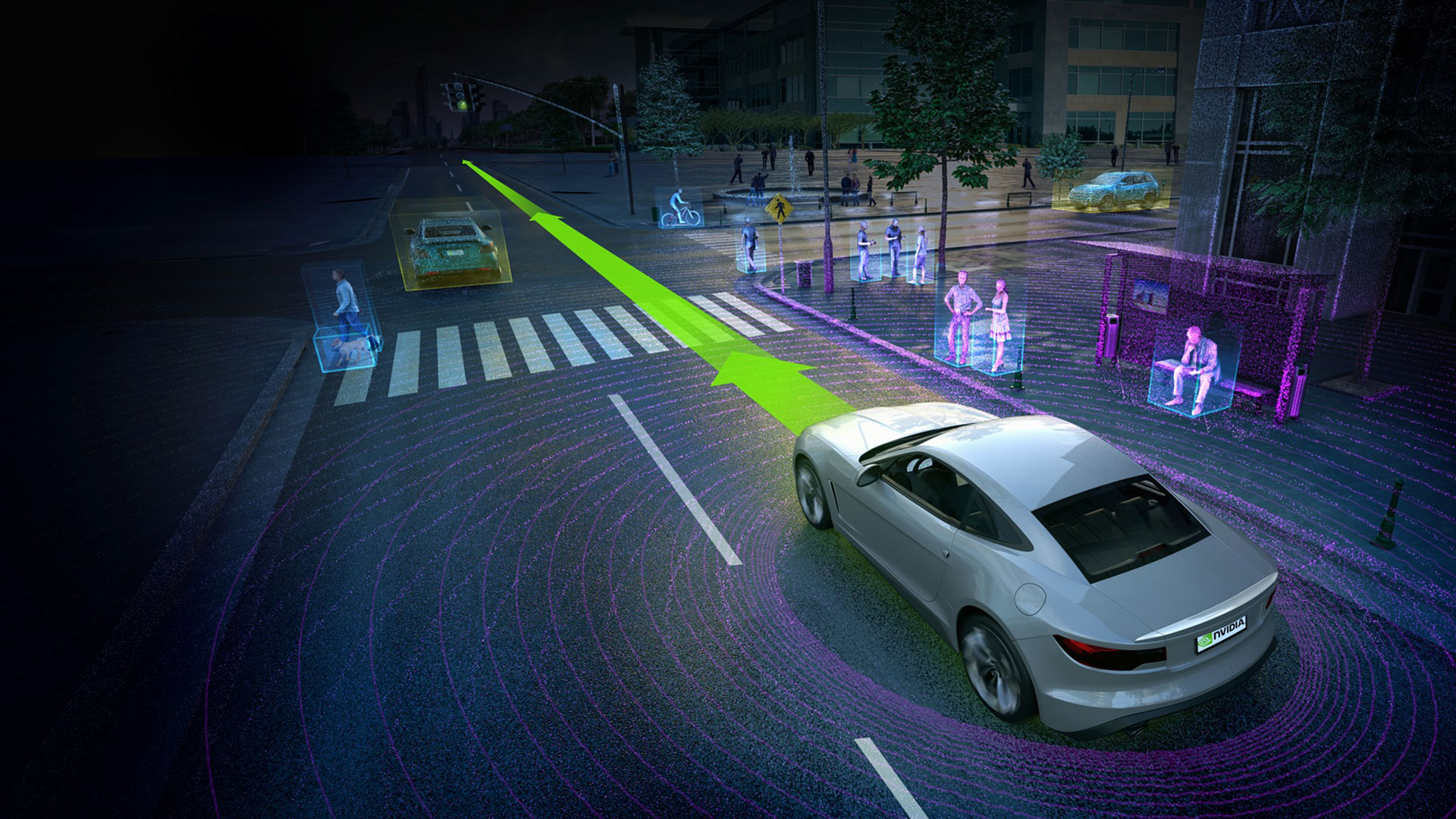

That’s not the end of the story, however. Pascal is a new architecture, and one of the changes appears to be some optimizations specifically related to “deep learning.” This is a term Nvidia and others use to talk about what we used to call neural networks, a branch of computer science that attempts to create artificial intelligence using models that try to emulate the brain and its neurons. Neural networks/deep learning have proven quite powerful in computer vision and speech recognition applications, among others, so it makes sense that they’d be useful in automated vehicles.

For the deep learning aspects, Nvidia provides a separate classification of performance, called Deep Learning Operations, or DL TOPS (Tera OPS, i.e., trillions of Operations Per Second). Interestingly, where the Titan X / Maxwell architecture appears to have the same rating for TFLOPS (floating point operations) and DL TOPS, Drive PX 2 / Pascal look to have DL TOPS performance that’s three times higher than the TFLOPS rating.

Of course, TFLOPS and DL TOPS are somewhat meaningless terms. They represent more of a theoretical peak performance than what you’ll actually see in real-world applications. Nvidia provides a separate measurement of performance using AlexNet, an image classification approach using deep convolutional neural networks. Here, Nvidia quotes performance of 450 images per second with a Titan X compared to 2,800 images per second on Drive PX 2. So in this case, Nvidia shows a single Drive PX 2 delivering more than six times the performance of a single Titan X. Nvidia also notes that the Drive PX 2 is over 10 times faster than the previous Drive PX, which was rated at 2.3 TFLOPS, and being Maxwell-based, that means 2.3 DL TOPS.
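To put the quoted figures side by side, here is a minimal Python sketch of the arithmetic. The variable names are ours, the 24 DL TOPS rating for Drive PX 2 is the figure Nvidia quotes elsewhere in its presentation, and we are assuming the "over 10 times faster" claim refers to DL TOPS rather than a measured benchmark.

```python
# AlexNet throughput quoted by Nvidia, in images per second.
titan_x_alexnet = 450
drive_px2_alexnet = 2800

# Speedup of a single Drive PX 2 over a single Titan X on AlexNet.
alexnet_speedup = drive_px2_alexnet / titan_x_alexnet

# Deep learning ratings in DL TOPS. The original Drive PX is rated at
# 2.3 TFLOPS, which on Maxwell is taken to equal 2.3 DL TOPS.
drive_px_dl_tops = 2.3
drive_px2_dl_tops = 24  # assumption: Nvidia's quoted peak rating

# Generational ratio, assuming the 10x claim is based on DL TOPS.
dl_tops_ratio = drive_px2_dl_tops / drive_px_dl_tops

print(f"AlexNet speedup over Titan X: {alexnet_speedup:.1f}x")
print(f"DL TOPS ratio over Drive PX:  {dl_tops_ratio:.1f}x")
```

Both ratios line up with Nvidia's "more than six times" and "over 10 times" claims, though only the first comes from an actual workload.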


There’s some fuzzy math here where Nvidia shows how a Drive PX 2 is equal to 150 MacBook Pro laptops in terms of performance, but it’s more marketing than anything useful. Nvidia takes the 6x speedup shown in AlexNet but then reverts to TFLOPS, equating the 42 TFLOPS of six Titan X GPUs with the performance of 150 MacBook Pro processors with no discrete GPU, which works out to only 0.28 TFLOPS each. But the bottom line is that GPU-assisted deep learning can be orders of magnitude faster than deep learning without GPUs.
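The MacBook Pro comparison can be reconstructed in a few lines of Python. This is only our reading of how the numbers fit together, not Nvidia's published method, and the variable names are illustrative.

```python
# Nvidia's AlexNet result is rounded to a 6x speedup over one Titan X,
# so the comparison treats one Drive PX 2 as six Titan X cards.
titan_x_count = 6
titan_x_tflops = 7  # peak rating per Titan X

# The comparison then switches from AlexNet throughput to peak TFLOPS.
total_tflops = titan_x_count * titan_x_tflops

# Spread that total across the 150 MacBook Pro laptops in the claim.
macbook_count = 150
tflops_per_macbook = total_tflops / macbook_count

print(f"Six Titan X GPUs: {total_tflops} TFLOPS")
print(f"Implied rating per MacBook Pro: {tflops_per_macbook:.2f} TFLOPS")
```

The mixing of units is the fuzzy part: a benchmark speedup is converted into a card count, and the card count is then converted back into a peak rating.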

All of the above is certainly cool stuff, and Nvidia is partnering with Volvo to have a public trial of 100 Volvo XC90 SUVs hitting the road next year in the carmaker’s Drive Me autonomous car pilot program. Many of us have looked forward to the day of autopilot vehicles, and with Nvidia’s help, we’re inching ever closer to that reality. In fact, Jen-Hsun states that in testing, their trained Drive PX 2 system was able to outperform humans in perceiving road signs and other objects. Since a car/computer won’t get bored and doze off or daydream, long-term the technology can improve the safety of our roads and vehicles. That’s assuming, of course, that no hackers create havoc, and that the software/hardware never suffers from technical glitches.

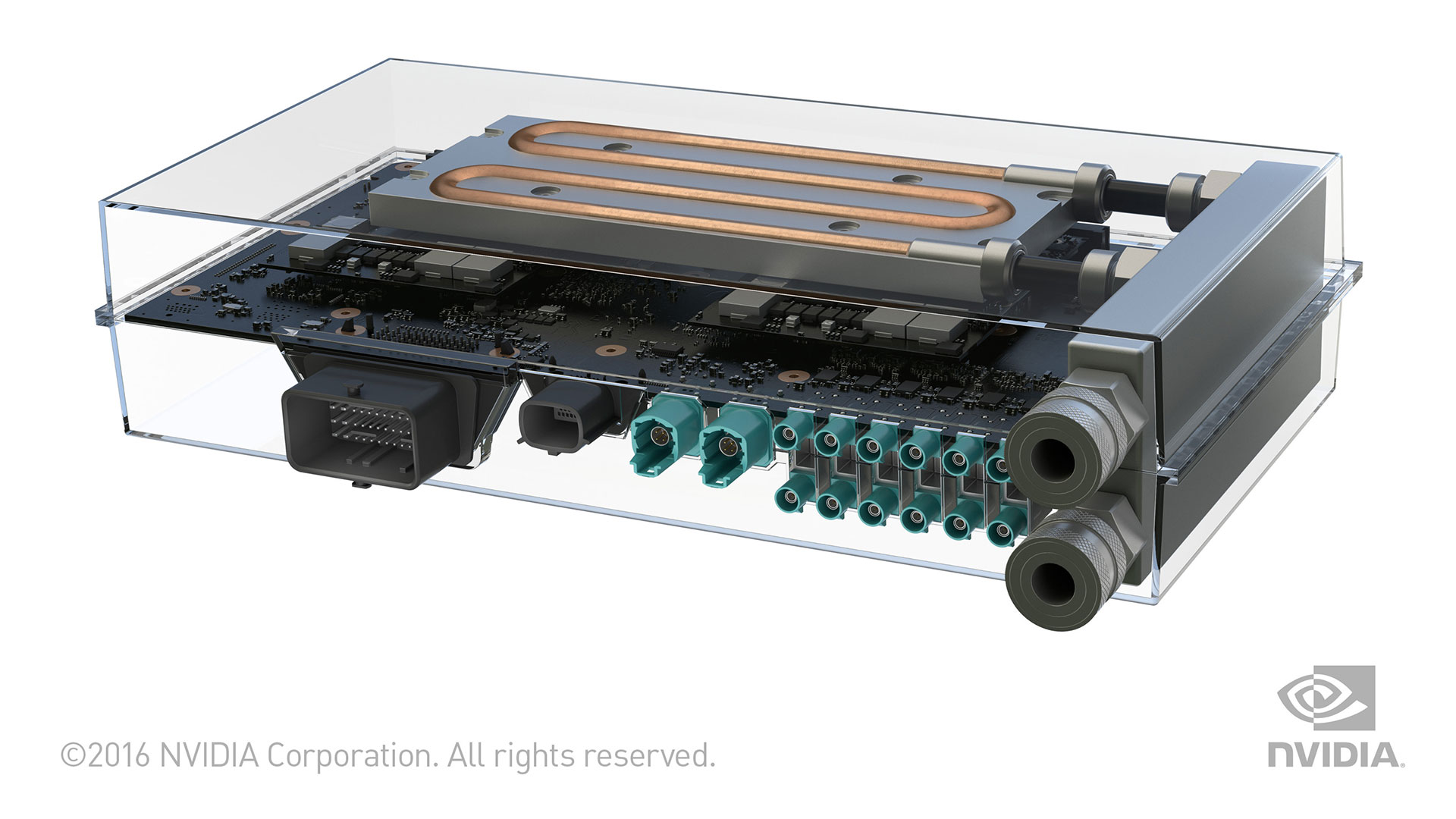

Getting back to the Drive PX 2 hardware, we do know that both the Tegra SoCs and the discrete Pascal GPUs will be manufactured on TSMC’s 16nm FinFET process. AMD revealed that they’re using GlobalFoundries’ (Samsung’s) 14nm FinFET for some of their Polaris GPUs, but they also indicated some GPUs would be made at TSMC as well. The new process should reduce power requirements at the same performance, but Nvidia appears to be going the other route, improving performance quite a bit while drawing more power than the original Drive PX: 250W, to be precise. To that end, the Drive PX 2 will initially launch in a liquid-cooled configuration, in a module roughly the size of a lunch box.

Unfortunately, although Nvidia has given us quite a few performance figures (e.g., 8 TFLOPS / 24 DL TOPS), we ultimately know very little about Pascal and how it will differ from Maxwell. The DL TOPS rating is substantially higher on Pascal, but that may come down to reduced-precision operations or some other factor that may not apply to non-compute scenarios.

The Tegra X1 has only 256 CUDA cores compared to 3,072 in the Titan X, yet Nvidia rates the Tegra X1 at 1.15 TFLOPS compared to 7 TFLOPS for the Titan X, so TFLOPS ratings clearly don’t scale simply with core count. That makes it impossible to say what sort of Pascal GPUs are in the Drive PX 2, or how Pascal will compare with Maxwell on the desktop. Even if the new Tegra SoC is only equal to the X1 in performance, the two SoCs account for about 2.3 of the 8 TFLOPS, which means the two discrete Pascal GPUs deliver at most around 6 TFLOPS combined, but it’s probably closer to 4 TFLOPS. That’s 2 TFLOPS per GPU, somewhere between the GTX 950 and 960 in performance, or about the level of a single GTX 965M. Clearly, the two discrete GPUs on the Drive PX 2 are not high-end GPUs.
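The back-of-the-envelope budget above can be written out as a short Python sketch. It assumes, as the estimate does, that each new Tegra SoC is worth at least the X1's 1.15 TFLOPS; the 4 TFLOPS figure is our guess rather than anything Nvidia has stated, and the variable names are illustrative.

```python
# Peak rating Nvidia quotes for the whole Drive PX 2 board.
drive_px2_total_tflops = 8.0

# Assumption: each next-generation Tegra matches the Tegra X1 rating.
tegra_tflops_each = 1.15
tegra_count = 2
discrete_gpu_count = 2

# Whatever the Tegra SoCs don't supply must come from the discrete GPUs.
tegra_total = tegra_count * tegra_tflops_each
discrete_upper_bound = drive_px2_total_tflops - tegra_total
upper_bound_per_gpu = discrete_upper_bound / discrete_gpu_count

# Our rougher guess: a faster new Tegra leaves about 4 TFLOPS
# for the two discrete GPUs combined.
discrete_estimate = 4.0
estimate_per_gpu = discrete_estimate / discrete_gpu_count

print(f"Upper bound for both discrete GPUs: {discrete_upper_bound:.1f} TFLOPS")
print(f"Upper bound per discrete GPU:       {upper_bound_per_gpu:.2f} TFLOPS")
print(f"Estimated per discrete GPU:         {estimate_per_gpu:.1f} TFLOPS")
```

Either way, each discrete GPU lands well below the 7 TFLOPS of a single Titan X.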

In other words, if the Drive PX 2 is one of the first products to ship with Pascal hardware, much like AMD’s demonstration of working Polaris GPUs, Nvidia will likely launch Pascal more as an entry-level part instead of a GTX 980 Ti killer. No doubt the second batch of Pascal GPUs will double down on the hardware elements. The race may be on for self-driving vehicles, but we’re also waiting to see who can launch the first FinFET GPU.

Jarred's love of computers dates back to the dark ages when his dad brought home a DOS 2.3 PC and he left his C-64 behind. He eventually built his first custom PC in 1990 with a 286 12MHz, only to discover it was already woefully outdated when Wing Commander was released a few months later. He holds a BS in Computer Science from Brigham Young University and has been working as a tech journalist since 2004, writing for AnandTech, Maximum PC, and PC Gamer. From the first S3 Virge '3D decelerators' to today's GPUs, Jarred keeps up with all the latest graphics trends and is the one to ask about game performance.