Nvidia's bringing its AI avatars to games and they can interact with players in real-time. With voiced dialogue. And facial animations

Nvidia announces ACE for Games at Computex 2023.

At its Computex keynote, Nvidia has just announced ACE for Games, a version of its Omniverse Avatar Cloud Engine that animates and gives a voice to in-game NPCs in real time.

CEO Jensen Huang explained that ACE for Games integrates text-to-speech, natural language understanding (or in Huang's words, "basically a large language model"), and an automatic facial animator, all under the ACE umbrella.

Essentially, an AI-created NPC will listen to a player's input, such as a question, then generate an in-character response, say that dialogue out loud, and animate its face as it speaks.
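To make that chain of steps concrete, here is a minimal Python sketch of the listen, respond, speak, animate loop. Nothing in it comes from Nvidia: `respond`, `NPCResponse`, and the three `stub_*` stages are hypothetical names, and the stubs are trivial placeholders standing in for a language model, a text-to-speech engine, and a facial animator. It also assumes the face is driven from the generated audio, which the Audio2Face name suggests but the keynote did not spell out.

```python
from dataclasses import dataclass
from typing import Callable, List


@dataclass
class NPCResponse:
    text: str                 # the in-character reply
    audio: bytes              # speech generated for that reply
    face_frames: List[float]  # one mouth-open value (0.0 to 1.0) per frame


def respond(
    player_input: str,
    generate_reply: Callable[[str], str],
    synthesize_speech: Callable[[str], bytes],
    animate_face: Callable[[bytes], List[float]],
) -> NPCResponse:
    """Run the three stages in order: reply text, then speech, then face."""
    text = generate_reply(player_input)
    audio = synthesize_speech(text)
    face_frames = animate_face(audio)
    return NPCResponse(text, audio, face_frames)


# Hypothetical placeholder stages, not real models.
def stub_reply(player_input: str) -> str:
    return f"You asked: {player_input.strip()} Pull up a stool and I'll explain."


def stub_speech(text: str) -> bytes:
    # Pretend the UTF-8 bytes of the reply are the audio samples.
    return text.encode("utf-8")


def stub_face(audio: bytes) -> List[float]:
    # Map each fake audio sample to a mouth-open value between 0 and 1.
    return [sample / 255 for sample in audio]


if __name__ == "__main__":
    result = respond("What's good here?", stub_reply, stub_speech, stub_face)
    print(result.text)
    print(len(result.face_frames), "animation frames")
```

Because each stage runs only after the previous one finishes, the delay a player notices is roughly the sum of all three, which is why response time matters so much for this kind of system.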

Huang also showed off the technology in a real-time demo crafted in Unreal Engine 5 with AI startup Convai. It has a cyberpunk setting, because of course it does (sorry, Katie), and shows a player walking into a ramen shop and talking to the owner. The owner has no scripted dialogue but responds to the player's questions in real time and sends them off on a makeshift mission.

You can watch the demo for yourself here.

It's pretty impressive, and undoubtedly a look into how games may utilise this technology in the future. As Huang said, "AI will be a very big part of the future of videogames."


Of course, he would say that. Nvidia is the company that stands to gain the most from the sudden surge in AI demand, through sales of its AI accelerators. And we have seen some basic integrations of ChatGPT into games already, like when Chris added it to his Skyrim companion and it failed to solve a simple puzzle. But this new ACE platform does appear a lot more polished and properly real-time.


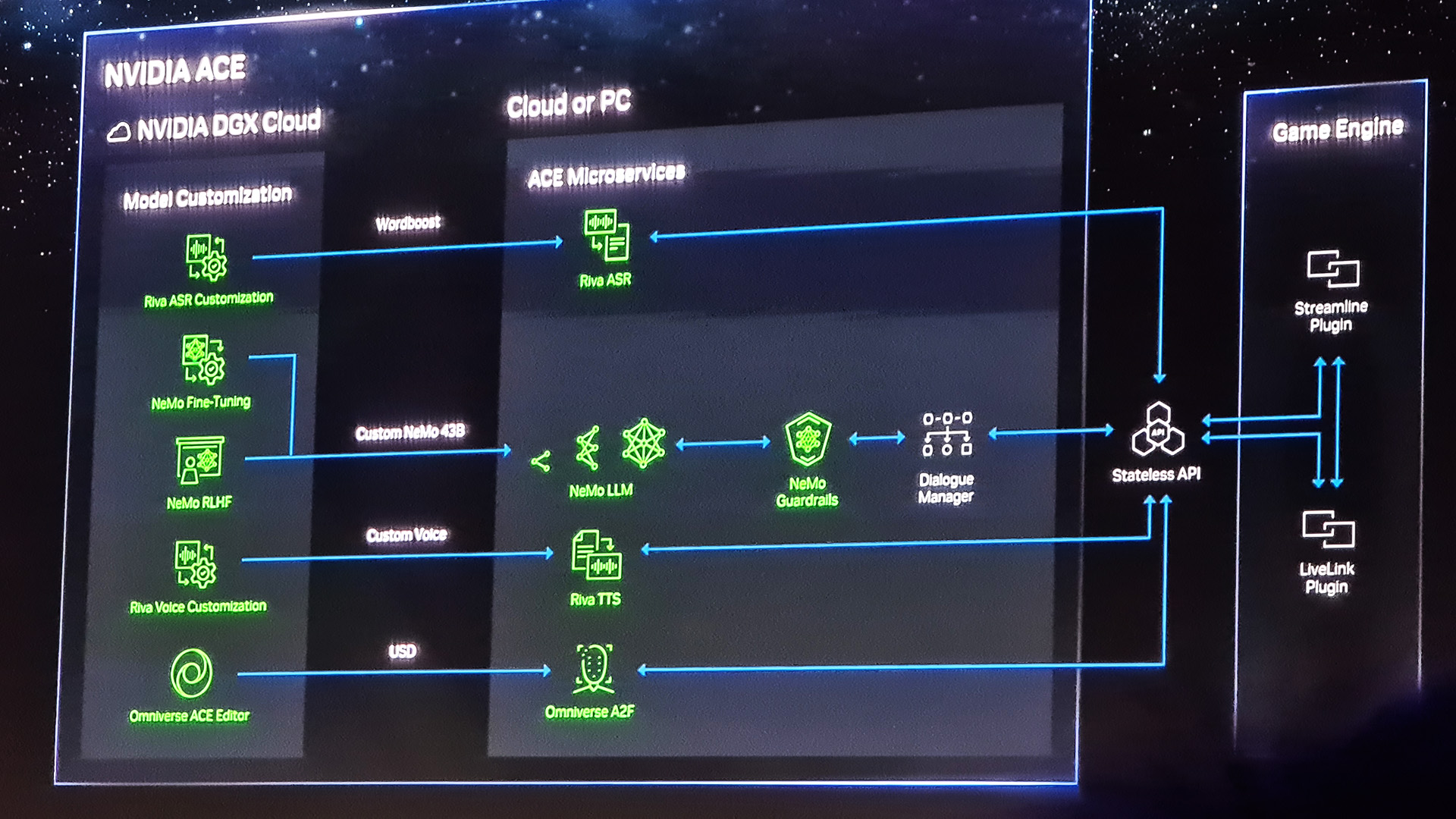

What we don't know is what it took to run the ACE for Games demo, only that it was also running ray tracing and DLSS. It could require more than your average GeForce GPU to run right now, or require a cloud-based component. Huang was a bit light on the details, but I'm sure we'll hear more about this tool once games actually make moves to use it.

"The neural networks enabling NVIDIA ACE for Games are optimized for different capabilities, with various size, performance and quality trade-offs. The ACE for Games foundry service will help developers fine-tune models for their games, then deploy via NVIDIA DGX Cloud, GeForce RTX PCs or on premises for real-time inferencing," Nvidia says.

"The models are optimized for latency—a critical requirement for immersive, responsive interactions in games."

Latency is going to be a big one here. I'd hate to be subjected to the NPC equivalent of an awkward pause as it loads in its response from the cloud.

So far, Nvidia has confirmed two games using Audio2Face, the facial animation component of ACE for Games. Those are S.T.A.L.K.E.R. 2: Heart of Chernobyl and Fallen Leaf, but hopefully we'll get some examples of the whole platform combined. I'd be keen to see the tech in action outside of a demo.

Jacob has been writing about PC hardware and technology for over eight years. He earned his first byline at PCGamesN before joining PC Gamer. He spends most of his time building PCs, running benchmarks, and trying his best to learn Linux.
