Intel reveals ambitious plans to enable the ultimate in path-traced eye candy on integrated graphics

Don't get too excited, it's not exactly imminent...

A new Intel blog post has revealed the company's ambitious plans to enable real-time path tracing on not just its Arc discrete GPUs but eventually its integrated graphics, too.

Intel says it will lay out several new approaches to path tracing via research papers introduced at industry conferences later this year.

"With Intel’s ubiquitous integrated GPUs and emerging discrete graphics, researchers are pushing the efficiency of the most demanding photorealistic rendering, called path tracing," Intel says.

"Across the process of path tracing, the research presented in these papers demonstrates improvements in efficiency in path tracing’s main building blocks, namely ray tracing, shading, and sampling. These are important components to make photorealistic rendering with path tracing available on more affordable GPUs, such as Intel Arc GPUs, and a step toward real-time performance on integrated GPUs."

In very broad terms, path tracing is a higher-fidelity form of ray tracing in which the path of each light ray, including how it reflects off multiple surfaces, is simulated more accurately. Inevitably, it's even more demanding of GPU resources than conventional ray tracing. So far, path tracing has been implemented in games like Quake II RTX and Portal RTX, neither of which is a demanding title without ray tracing involved.
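
For the curious, here's a toy sketch of the core idea: follow many random light paths through several bounces and average what they pick up. It is not Intel's method or anything from the papers. The scene is an assumed "furnace" in which every surface emits the same amount of light and reflects a fixed fraction of it, and the names `trace_path` and `estimate_radiance` are made up for illustration.

```python
import random


def trace_path(albedo, emitted, rng, max_bounces=64):
    """Follow one random light path in a toy 'furnace' scene.

    Assumption: every surface emits `emitted` and reflects a fraction
    `albedo` of incoming light, so geometry can be ignored entirely.
    """
    radiance = 0.0
    throughput = 1.0          # how much light this path still carries
    survive_prob = albedo     # Russian roulette: chance of bouncing again
    for _ in range(max_bounces):
        radiance += throughput * emitted
        if rng.random() >= survive_prob:
            break             # path terminated at this surface
        # Reflection scales the path by albedo; dividing by the survival
        # probability keeps the estimate unbiased (here the two cancel).
        throughput *= albedo / survive_prob
    return radiance


def estimate_radiance(albedo, emitted, num_paths, seed=0):
    """Average many random paths: the Monte Carlo part of path tracing."""
    rng = random.Random(seed)
    total = sum(trace_path(albedo, emitted, rng) for _ in range(num_paths))
    return total / num_paths


if __name__ == "__main__":
    # Analytic answer for this toy scene is emitted / (1 - albedo).
    print(estimate_radiance(albedo=0.5, emitted=1.0, num_paths=20000))
```

The estimate only settles down once thousands of paths are averaged. A real renderer has to do that for every pixel in every frame, which is why path tracing is so much heavier than tracing a handful of rays.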

Intel says one of the papers will propose a novel and efficient method to compute the reflections from what's known as a GGX microfacet surface. Such surfaces are the most widely simulated in the game, animation, and VFX industries and essentially comprise the most common materials in the real world, including textured and rough surfaces.

The idea is that most material surfaces are made up of a large number of microfacets, each with its own particular slope or angle to the viewer or camera. These slopes can be modelled en masse via a facet slope distribution function.
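
To make that a little more concrete, here's a minimal sketch of the standard GGX (Trowbridge-Reitz) distribution function as it appears in graphics textbooks. It is not the new method from Intel's paper, and the function names and the roughness parameter `alpha` are labels chosen for illustration.

```python
import math


def ggx_ndf(cos_theta_h, alpha):
    """Textbook GGX distribution of microfacet normals.

    cos_theta_h: cosine of the angle between a facet's normal and the
                 overall surface normal.
    alpha:       roughness; small values mean a smooth, shiny surface.
    """
    if cos_theta_h <= 0.0:
        return 0.0  # facets facing away from the surface do not count
    a2 = alpha * alpha
    denom = cos_theta_h * cos_theta_h * (a2 - 1.0) + 1.0
    return a2 / (math.pi * denom * denom)


def projected_area_integral(alpha, steps=20000):
    """Sanity check: the facets' projected area should add up to 1.

    Integrates D(h) * cos(theta) over the hemisphere with the midpoint rule.
    """
    d_theta = (math.pi / 2.0) / steps
    total = 0.0
    for i in range(steps):
        theta = (i + 0.5) * d_theta
        c = math.cos(theta)
        total += ggx_ndf(c, alpha) * c * math.sin(theta) * d_theta
    return 2.0 * math.pi * total  # the azimuth angle contributes 2*pi


if __name__ == "__main__":
    for alpha in (0.1, 0.5, 1.0):
        print(alpha, ggx_ndf(1.0, alpha), projected_area_integral(alpha))
```

A lower `alpha` bunches the facets tightly around the surface normal, which gives a sharp, mirror-like highlight, while a higher value spreads them out for a duller, rougher look.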

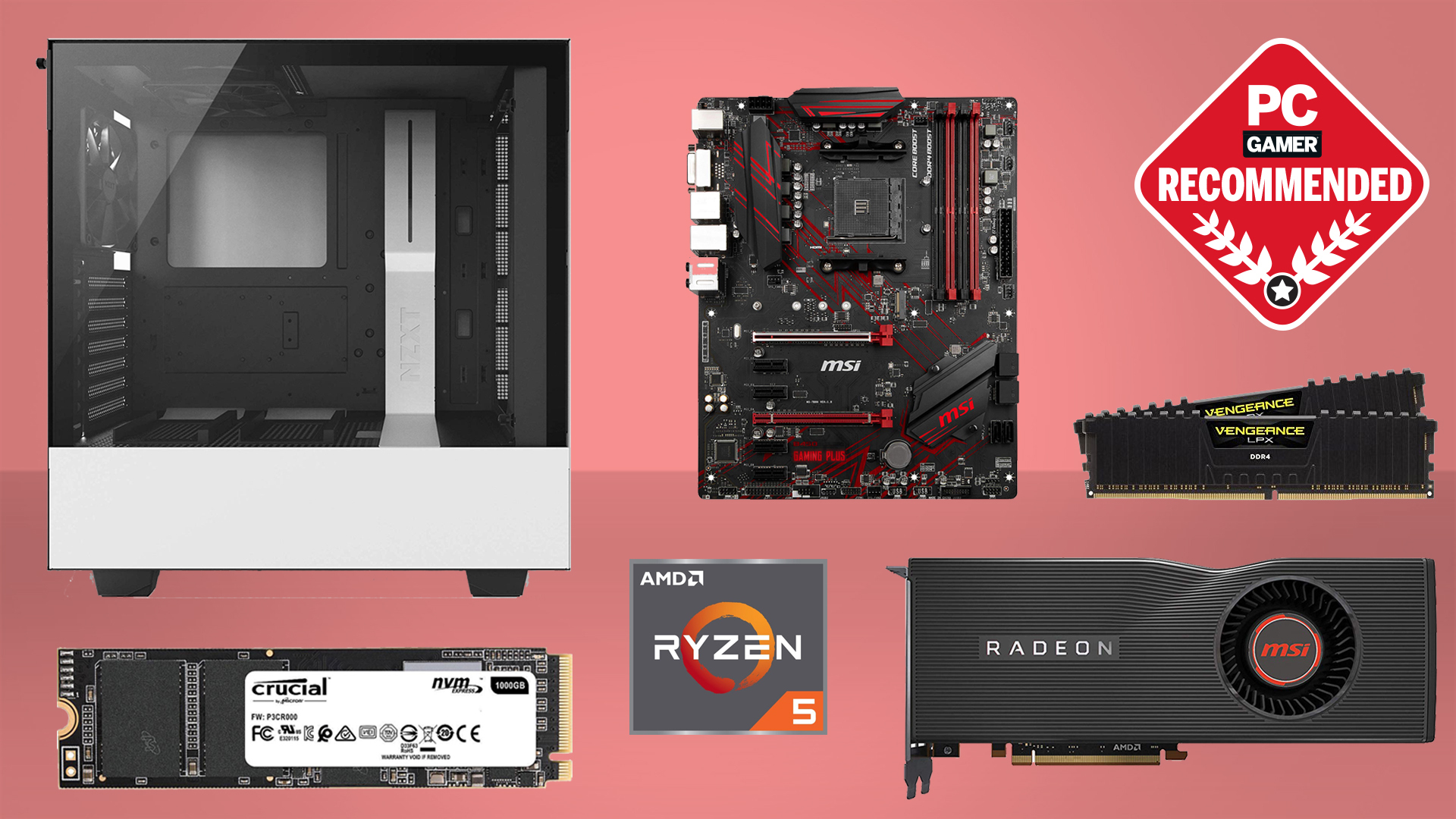

The second paper provides a faster and more stable method to render glinty surfaces, which tend to be avoided especially in game engines due to the high computational cost of simulating them.

Exactly how all of this ties into Intel's graphics isn't clear. Do the papers reflect, no pun intended, upcoming hardware features that will be exclusive to Intel GPUs? Or will the lessons from these initiatives be something that the entire graphics industry can benefit from?

Either way, the general tone of Intel's blog post implies that these are more long-term aspirations than firm targets for the near term. We're not expecting Intel integrated GPUs to be running Cyberpunk with full path tracing at 4K next year, in other words.

But the basic ambition to achieve real-time path tracing on its integrated GPUs and Intel's emphasis on "affordability" are still very welcome. It's certainly a more democratic take on high-fidelity graphics than the one Nvidia and AMD are currently pursuing, in which $300 buys you a low-spec graphics card.

Jeremy has been writing about technology and PCs since the 90nm Netburst era (Google it!) and enjoys nothing more than a serious dissertation on the finer points of monitor input lag and overshoot followed by a forensic examination of advanced lithography. Or maybe he just likes machines that go “ping!” He also has a thing for tennis and cars.