Our Verdict

The Arc A770 Limited Edition is far from perfect but does deliver very playable frame rates at 1440p and with ray tracing enabled. It's certainly not a bad start for Intel Arc. But while it focuses on facing down Nvidia and the popular RTX 3060, it is AMD's RX 6600 XT that simply offers a better alternative right now.

For

- Excellent DX12 and Vulkan performance

- Impressive ray tracing acceleration

- 16GB of GDDR6

- Well-made and good looking cooler shroud

- Affordable

- A good option to have in the entry-level

Against

- Doesn't run as well on DX11 and older APIs

- Resize BAR is essential

- AMD RDNA 2 price drops are tough to beat

- Best at 4K but not quite a genuinely 4K-capable card

- High power draw


This is a moment we've been waiting for. With the Intel Arc A770 Limited Edition arriving from October 12, a third player will have truly entered the gaming graphics card market, and finally with a genuinely competitive product for gaming. That's not something anyone has been able to remark on for a very long time—it's been a mostly competitive two-horse race for decades.

A two-horse race gets you rapid technological progress, but throwing another billion-dollar company in the mix doesn't hurt our chances of getting genuinely affordable GPUs, too. Yet money alone won't build you a successful product, or a good gaming chip, though it probably helps get you some of the way. That said, Intel has plenty of cash, and even the company's CEO, Pat Gelsinger, admits it's still been a bumpy road to launch for Arc.

And, no, I haven't forgotten about the Arc A380 and Arc A310 graphics cards that launched earlier in the year. I'm simply choosing to ignore them for dramatic effect. The Arc A380 was the true card to kick off the Alchemist GPU generation—limping to launch with a quiet China-only release—but with the Arc A770 we're seeing a mightier thrust into gaming graphics than ever before from Intel. It's sure to be the Alchemist GPU we remember if this all goes to plan.

It's about time we got our hands on an Arc GPU ready to game on, anyways. I for one have been patiently waiting for this moment all year. The Arc A770 Limited Edition is a $349 graphics card going up against the entry-level graphics cards from Nvidia and AMD, and while it may not have always been aimed at these more affordable cards, we can be thankful that it is. With enthusiast GPUs from the next-gen already towering over us with price tags in excess of $2,000, it's the budget PC that has a hazy future ahead of it.

The Arc A770 Limited Edition might just help with that. We shouldn't forget that Intel is also releasing the Arc A750 Limited Edition alongside it, which is going for $289.

Arc A770 architecture and specs

What architecture powers the Arc A770?

The underlying architecture powering Intel's Alchemist graphics cards is called Xe-HPG, or High Performance Graphics. It's closely related to Xe-LP, Xe-HP, Xe-HPC—the Xe architecture combined covers everything from integrated graphics to supercomputers. That's actually one reason why Xe-HPG is a little late out of the gate. But Xe-HPG is here now and it is a little different to the few Xe-based GPUs we've seen in the past.

There are numerous changes to make the Xe architecture better suited to our gaming PCs, and the Arc A770 Limited Edition embodies those changes to the fullest.


The Arc A770 uses Intel's ACM-G10 GPU. That's the same GPU as the Arc A750, though that card doesn't offer quite as many Xe-cores. The full G10 GPU within the A770 comes with eight Render Slices, and each Render Slice contains four Xe-cores and four Ray Tracing Units (RTU). So take all those bits, stick them together, and you've got the Arc A770 GPU.

Let's go one level lower into the Xe-core, the building block of the Alchemist GPU. There are 32 Xe-cores in total on the Arc A770, the maximum amount available on the G10 GPU, and these each contain 16 256-bit Vector Engines and 16 1024-bit Matrix Engines. These also share 192KB of low-level cache.
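As a rough sanity check, the unit counts above can be multiplied out. The sketch below uses only the per-slice and per-core figures quoted in this review; the function and class names are made up for illustration, and the A750's seven Render Slices are taken from the spec table further down the page.

```python
from dataclasses import dataclass

# Per-unit counts as described in this review for Intel's ACM-G10 GPU.
XE_CORES_PER_RENDER_SLICE = 4
RTUS_PER_RENDER_SLICE = 4
VECTOR_ENGINES_PER_XE_CORE = 16
MATRIX_ENGINES_PER_XE_CORE = 16  # these are the XMX engines


@dataclass(frozen=True)
class GpuTotals:
    xe_cores: int
    ray_tracing_units: int
    vector_engines: int
    matrix_engines: int


def gpu_totals(render_slices: int) -> GpuTotals:
    """Multiply out the hierarchy: Render Slice -> Xe-core -> engines."""
    xe_cores = render_slices * XE_CORES_PER_RENDER_SLICE
    return GpuTotals(
        xe_cores=xe_cores,
        ray_tracing_units=render_slices * RTUS_PER_RENDER_SLICE,
        vector_engines=xe_cores * VECTOR_ENGINES_PER_XE_CORE,
        matrix_engines=xe_cores * MATRIX_ENGINES_PER_XE_CORE,
    )


a770 = gpu_totals(render_slices=8)  # full G10: 32 Xe-cores, 512 XMX engines
a750 = gpu_totals(render_slices=7)  # cut-down G10: 28 Xe-cores, 448 XMX engines
print(a770)
print(a750)
```

The totals line up with the Xe-core, XMX engine, and ray tracing unit rows in the spec table.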

The Vector Engines are our friend for rasterised rendering, and what's interesting about them is that they come in pairs—Intel says its Vector Engines "run in lockstep" and share a Thread Control with another. If you're keen on GPU architectures, you might notice that's a similar general approach to the one AMD has with RDNA 2—the red team's architecture also locking together two Compute Units.

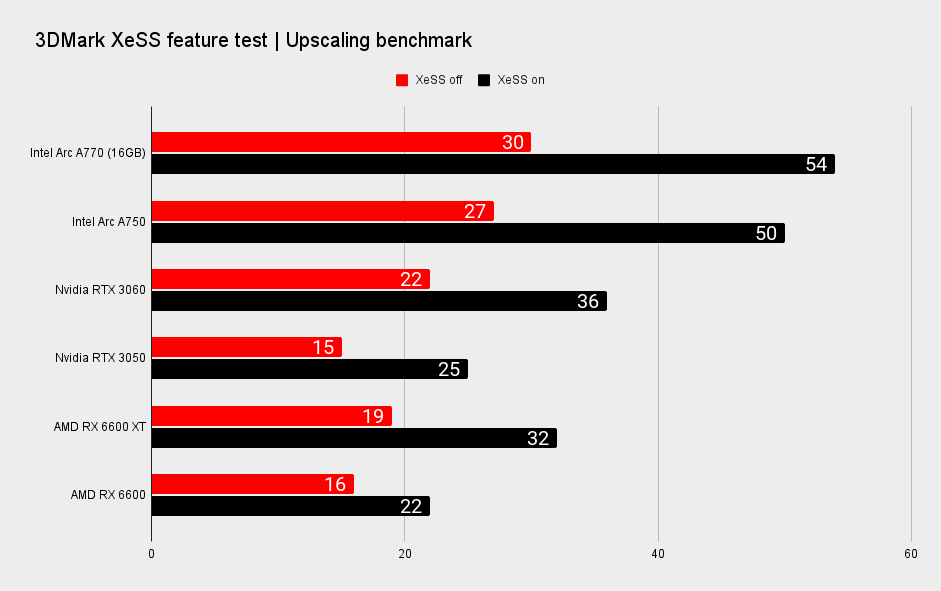

It's the Matrix Engines connected to the Vector Engines that are the more exciting addition. These are the key to accelerating MAC, DP4a, and XMX instructions. The first two are common among more modern graphics cards, though the latter is Intel's own creation for its new GPUs. XMX stands for Xe Matrix Extensions. With proper acceleration, this instruction can be used to provide 16 times more inference compute power than a traditional GPU vector unit. That might not sound like it matters much for gaming, but bear in mind Intel's new XeSS upscaling technology will run on XMX where supported.

And, in our own testing, XMX makes a world of difference to the performance uplift you can expect from XeSS.

Intel has also fitted a Xe media engine for hardware acceleration within popular video software, including streaming apps, such as OBS Studio. The Xe media engine supports VP9, AVC, HEVC, and AV1. The last one is probably going to be a big deal for all manner of streaming content.

Perhaps the single most important thing for gamers to understand about Intel's Xe-HPG architecture is that it's been designed from the ground up for DX12 Ultimate. That has a few knock-on effects for what we can come to expect from Intel's Arc A770, and goes some way to explaining some of the performance disparity we see between DX12 and DX11 games.

DX11 and older APIs do not gel quite so well with the Xe-HPG formula. Destiny 2, Apex Legends, The Witcher 3—these are just a handful of older yet still extremely popular games that will not run as well as a game with a more modern API will on an Arc Alchemist GPU. Generally, the more modern the game, the better performance we're seeing on Arc.

Though most major games today will use DX12 or Vulkan, so you can be fairly sure that the Next Big Thing will run at its best on an Arc A770 graphics card.

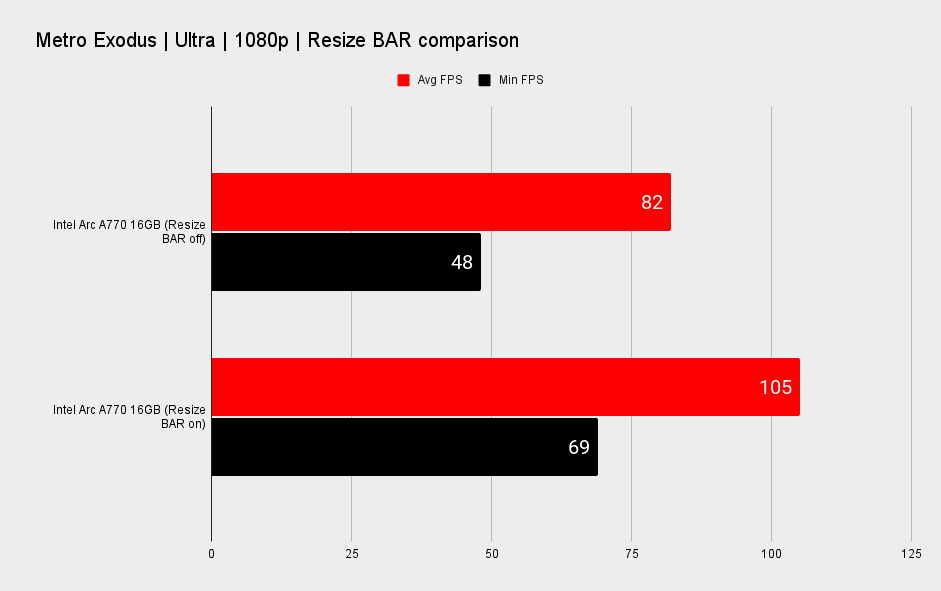

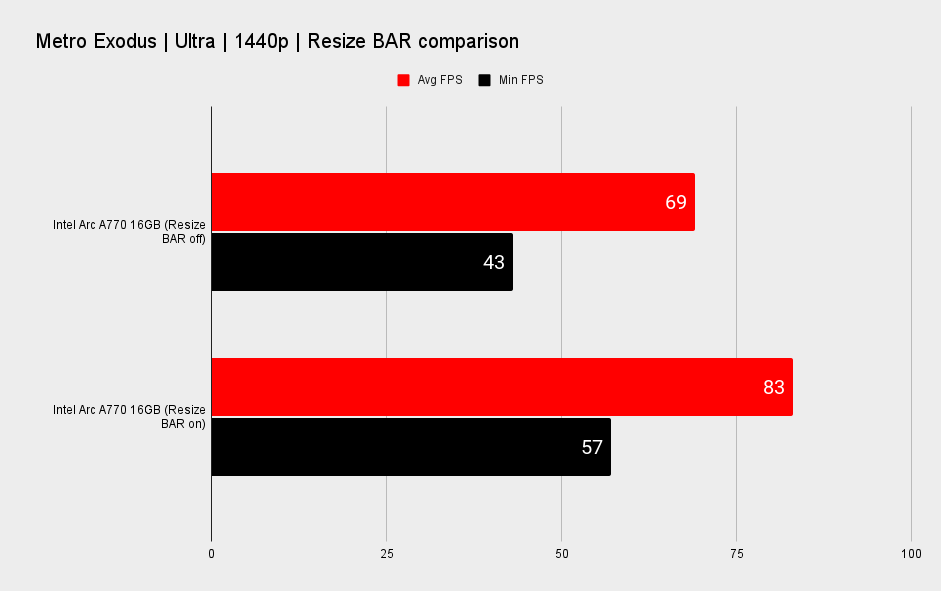

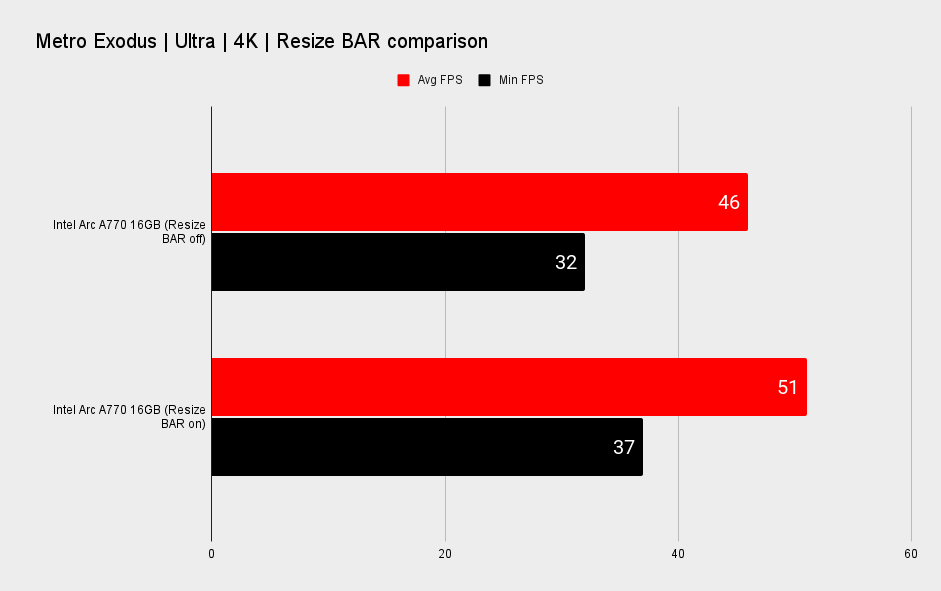

If you thought Intel Arc was already being quite picky, let me tell you all about how it's highly recommended that you switch Resizable BAR (Resize BAR) on with an Intel Arc GPU installed in your machine. In fact, Intel's graphics guru Tom Petersen told journalists in a Q&A in the lead up to Arc's launch that "if you don't have Resize BAR, buy an RTX 3060."

So, yeah, Resize BAR is pretty important.


Resize BAR is a feature that allows your CPU and GPU to better communicate with one another over PCIe. Offering a wider channel of communication, essentially. Most modern CPUs and motherboard chipsets support Resize BAR: AMD Ryzen 3000/5000/7000 and Intel 10th/11th/12th Gen CPUs all work just fine. AMD calls its more proprietary Resize BAR feature, which operates specifically between Ryzen CPU and Radeon GPU, 'Smart Access Memory' (SAM), however, other GPU vendors' cards also work in conjunction with AMD CPUs. Same goes for Intel CPUs and Nvidia or AMD GPUs.

If you're playing games on a PC with an older generation CPU or motherboard, and therefore unable to activate Resize BAR, you might find performance once again slips away from you with an Arc A770 graphics card. I tested this out in Metro Exodus, one of the few games heavily optimised for Arc, and you can expect a dip in frame rates of between 10% and 22% with Resize BAR disabled, depending on the resolution.

Intel does tell me that it hopes to minimise the impact that Resize BAR has on Arc's performance with later driver updates, but I don't have any further information on when that might be or what sort of impact it might have.

The general advice for maximising performance on Arc, then: Run games in DX12 or Vulkan where possible and switch on Resize BAR. I have enabled Resize BAR on every GPU tested for the purposes of performance testing in the section below, not only Intel's.

What's inside the Arc A770?

We've been through the building blocks of the Xe-HPG architecture but let's focus more on this particular card itself: the Intel Arc A770 Limited Edition.

The Intel Arc A770 Limited Edition shroud is one of the card's stronger points. It's relatively quiet, cool, and it looks great—just professional enough for an Intel product but still leaning into that gamer aesthetic we've come to expect from today's more and more extreme graphics cards.

| Header Cell - Column 0 | Intel Arc A770 Limited Edition (16GB) | Intel Arc A750 Limited Edition |

|---|---|---|

| Generation | Alchemist | Alchemist |

| Xe-cores / XMX Engines | 32 / 512 | 28 / 448 |

| Render slices | 8 | 7 |

| Ray tracing units | 32 | 28 |

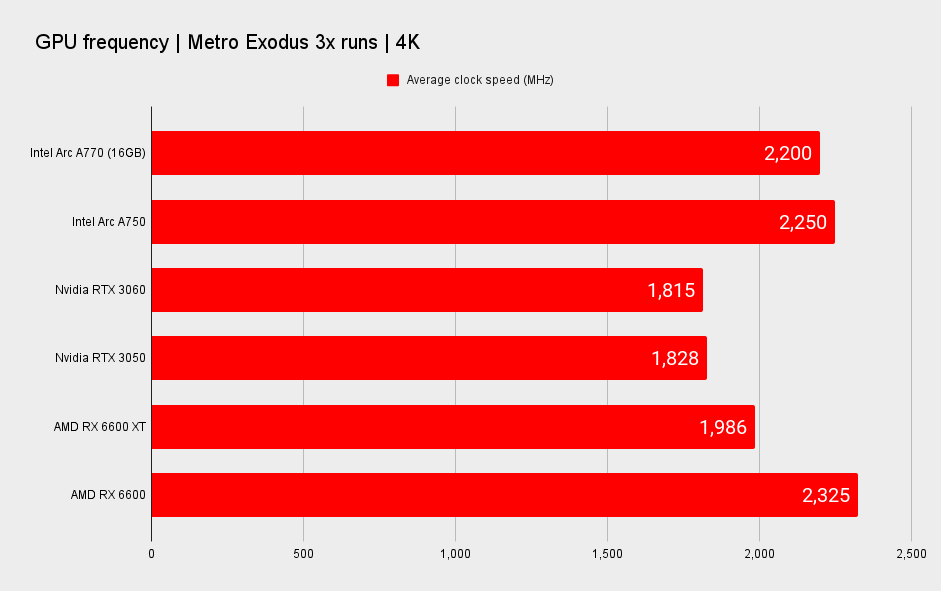

| Graphics clock (MHz) | 2,100 | 2,050 |

| Memory config | 16GB GDDR6 @ 17.5Gbps | 8GB GDDR6 @ 16Gbps |

| Memory interface | 256-bit | 256-bit |

| Memory bandwidth | 560 GB/s | 512GB/s |

| System interface | PCIe Gen 4 x16 | PCIe Gen 4 x16 |

| Power (TBP) | 225W | 225W |

| Power connector | 1x 8-pin, 1x 6-pin | 1x 8-pin, 1x 6-pin |

| HW accelerated media | AV1, HEVC, H.264, VP9 | AV1, HEVC, H.264, VP9 |

| Display outputs | 3x DisplayPort 2.0, 1x HDMI 2.1 | 3x DisplayPort 2.0, 1x HDMI 2.1 |

| Form factor | 10.5-inch length, dual slot | 10.5-inch length, dual slot |

| API support | DirectX 12Ultimate, OpenGL 4.6, OpenCL 3.0, Vulkan 1.3 | DirectX 12Ultimate, OpenGL 4.6, OpenCL 3.0, Vulkan 1.3 |

| OS support | Win 10/11, Ubuntu | Win 10/11, Ubuntu |

| Intel Deep Link Technologies | Yes | Yes |

| Warranty | 3-years | 3-years |

| Price | $349 | $289 |

It's generally quite a tame card, at least compared with what we've come to expect from new graphics cards, but I should've expected as much from Intel. What I hadn't expected was the overflow of RGB lighting on the fans and around the entire outer edge of the shroud, which is quite lovely to look at. It's surprisingly quiet even when running at full whack—the fans seem fairly well-suited to getting the job done here. We'll get to it a bit further down the page, but it's notable how the Arc A770 runs relatively cool for its power demands and relatively slim construction.

Intel's Arc A770 Limited Edition is a great version of a surprisingly decent GPU and, despite what the 'Limited Edition' name suggests, Intel doesn't intend to stop making them so long as there is demand.

I should note that this is the 16GB model of the Intel Arc A770. Intel is making two available: one with 16GB of GDDR6 and another with 8GB of GDDR6. Only the 16GB version will be available in this Intel-made trim, with 8GB models being made available by Intel's partners sometime after the card's release. We don't have a firm date for those, however. There is a cheaper card from Intel on the way, too, the Arc A750, and this will also be available in a Limited Edition look.

Arc A770 performance

How does the Arc A770 perform?

CPU - Intel Core i9 12900K

Motherboard - Asus ROG Strix Z690-F Gaming WiFi

RAM - Trident Z 5 RGB 32GB (2x 16GB) DDR5 @5,600MHz (effective)

CPU cooler - Asus ROG Ryujin II 360mm liquid cooler

PSU - Gigabyte Aorus P1200W

Monitor - Gigabyte M32UC

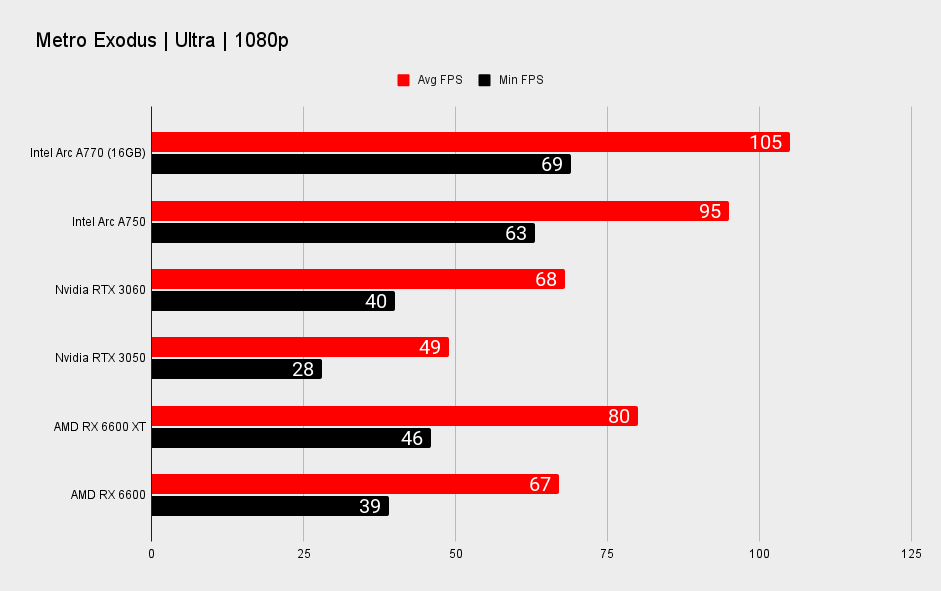

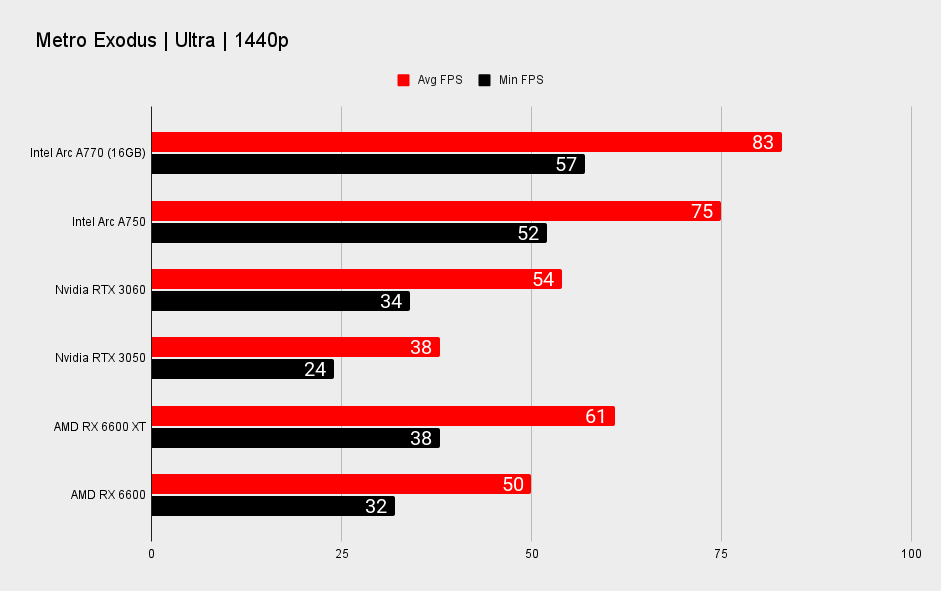

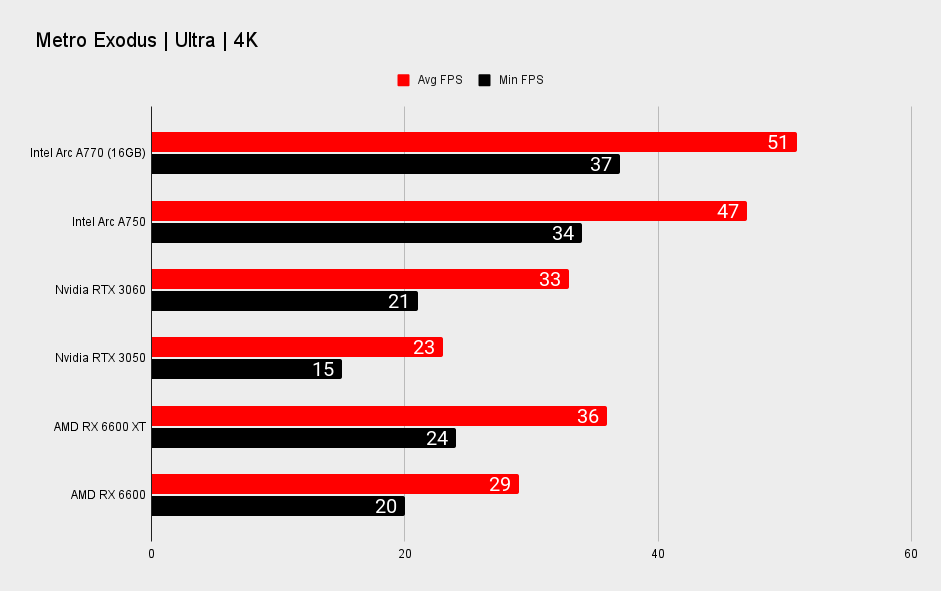

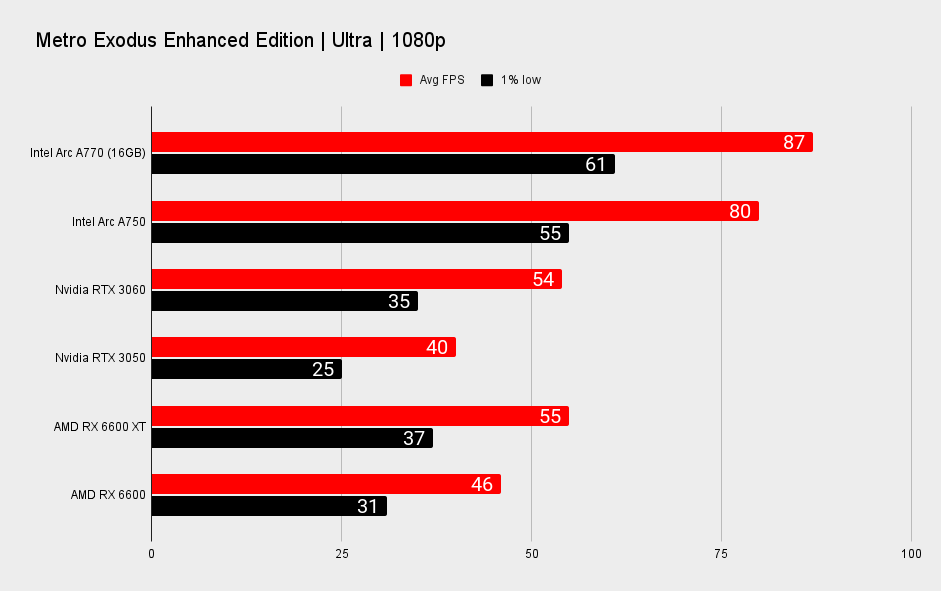

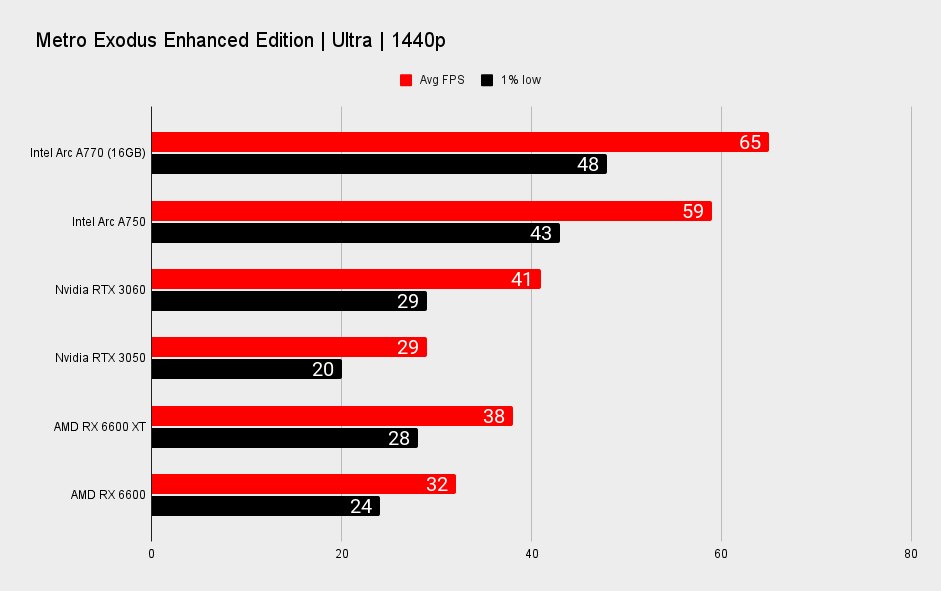

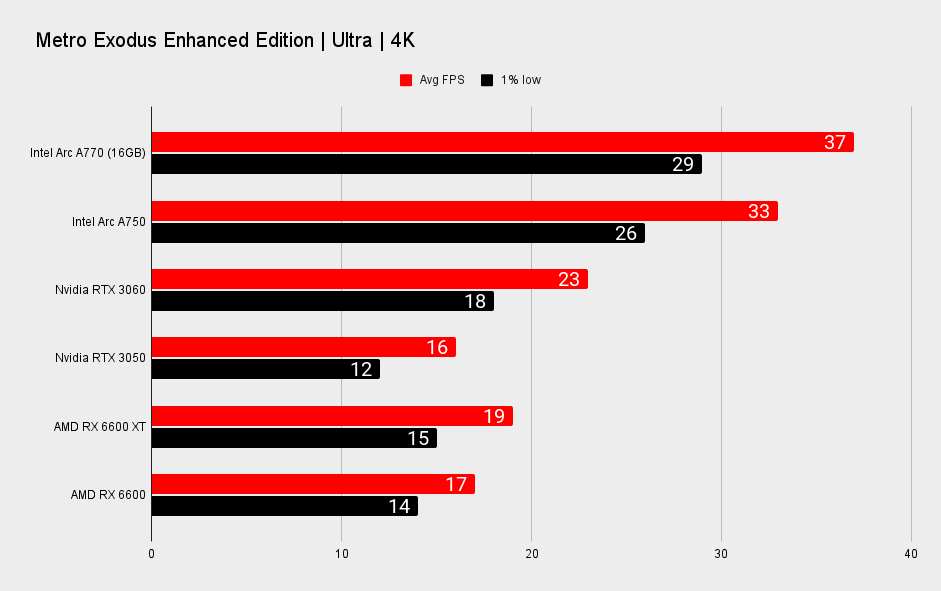

It takes a well-optimised DX12 game to show the Intel Arc A770's best side. Take Metro Exodus, for example. This is a DX12-powered game that's not known for going easy on graphics cards, yet the Arc A770 delivers significantly higher frame rates than Nvidia's RTX 3060 in this title. The Arc A770 is around 54% faster across all resolutions, in fact. You can chalk that up to Metro Exodus being specifically optimised for Intel's Arc GPUs, according to the company, which goes to show what the Arc A770 is able to offer if given the proper attention on a per-game basis.

But it's not always like that.

The optimist in me would like to think that this is the sort of performance that Intel's Arc A770 will eventually reach in all DX12 and Vulkan titles. After all, this is performance encroaching on that RTX 3070-grade we had initially expected, and perhaps Intel had also expected, from Arc earlier in the year.

But let's be completely straight here: You cannot expect that sort of performance out of the Arc A770 at all times, and to do so would require cherry-picking a few good apples from the benchmark bunch. Performance on the Arc A770 is strangely variable and unlike anything we've seen from Nvidia or AMD these past few years.

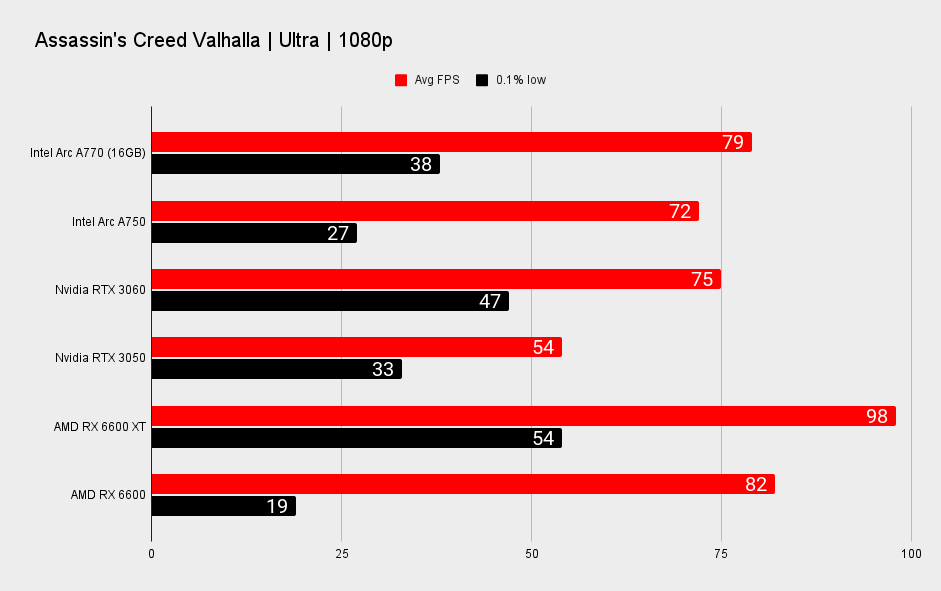

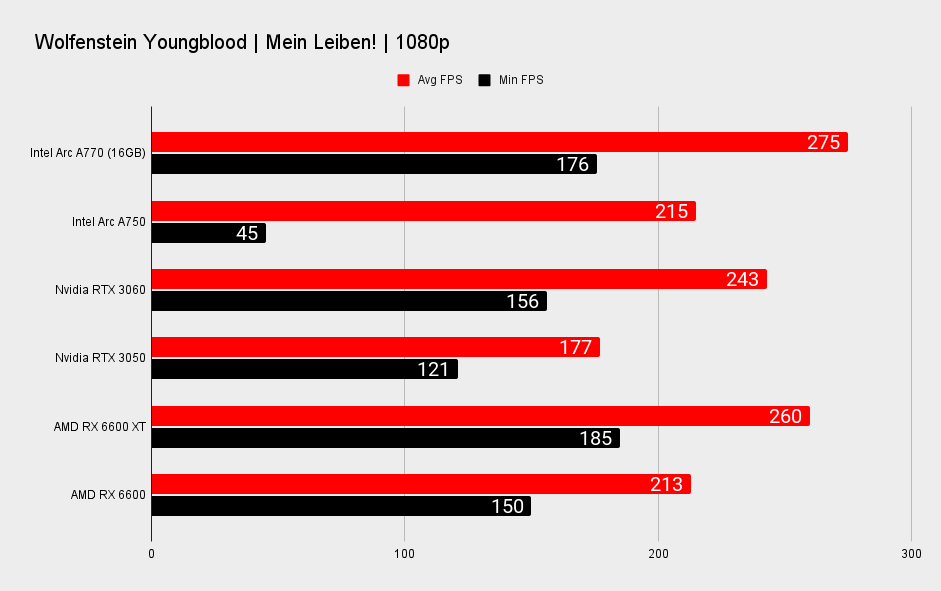

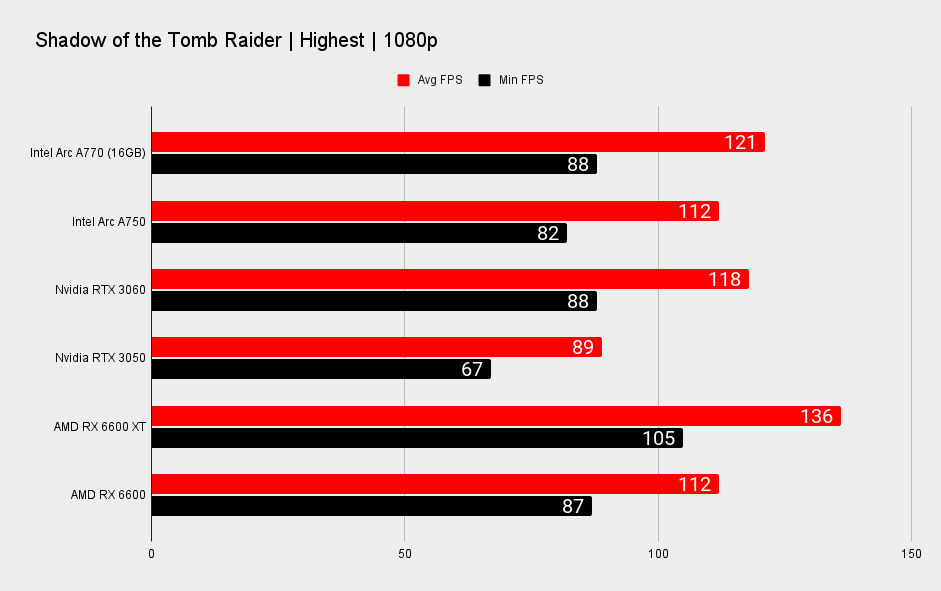

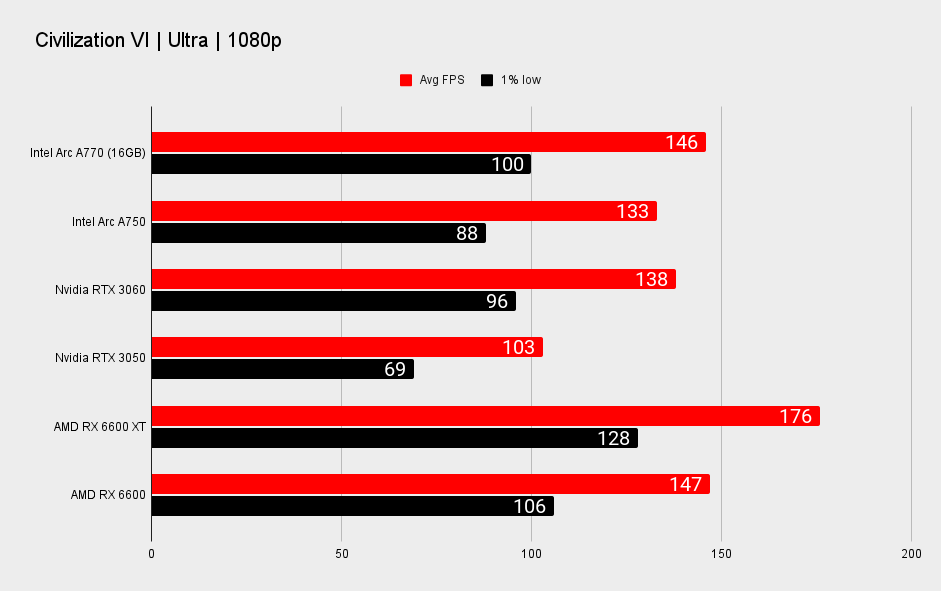

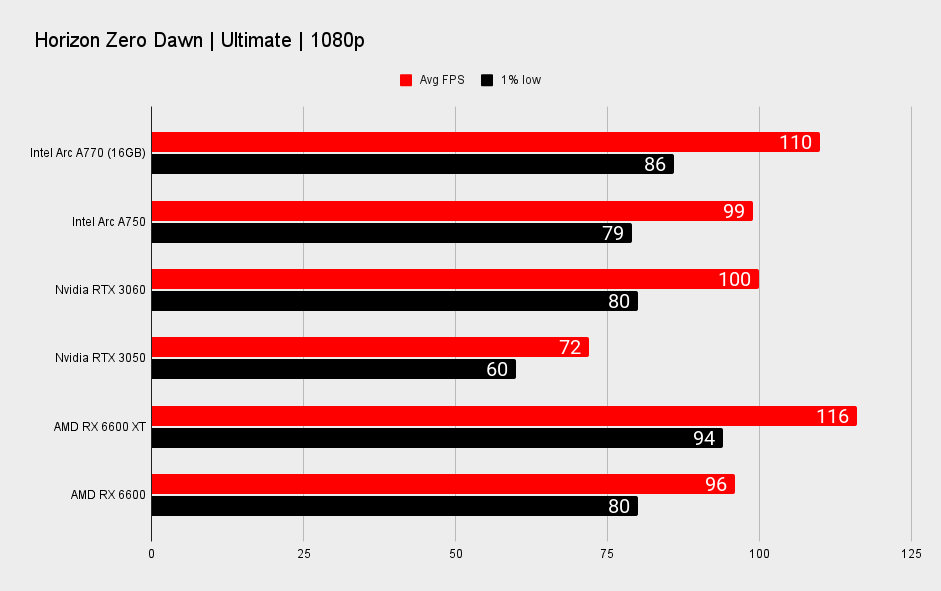

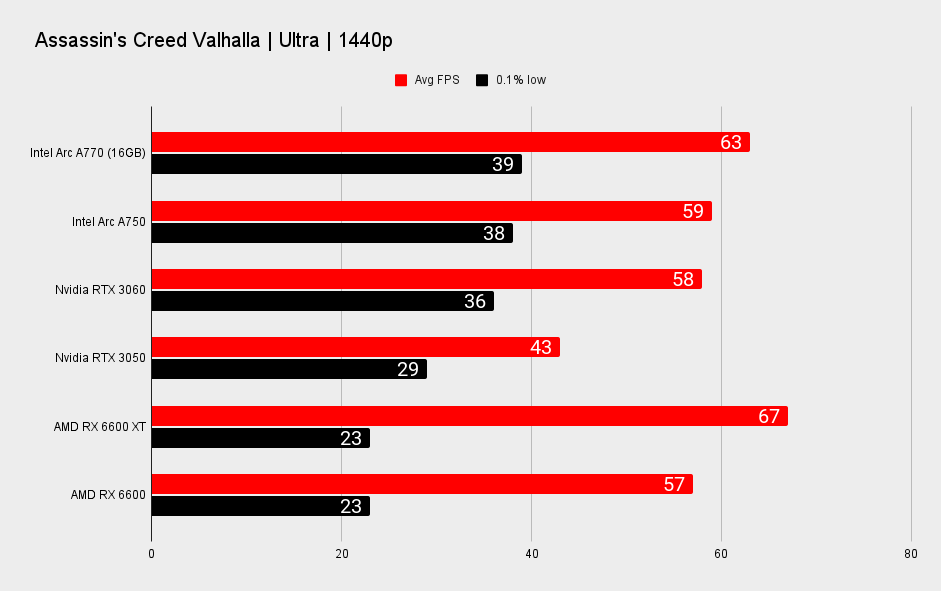

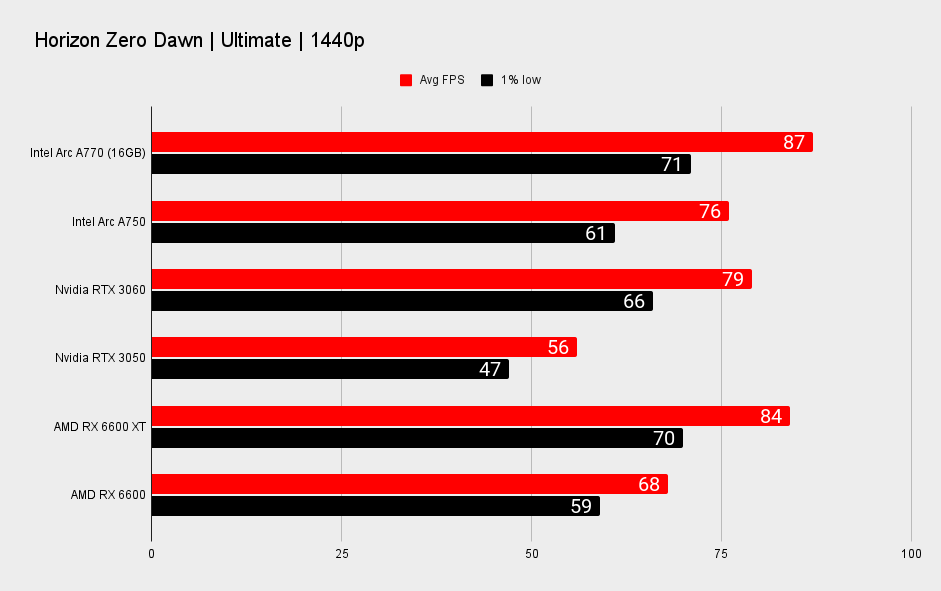

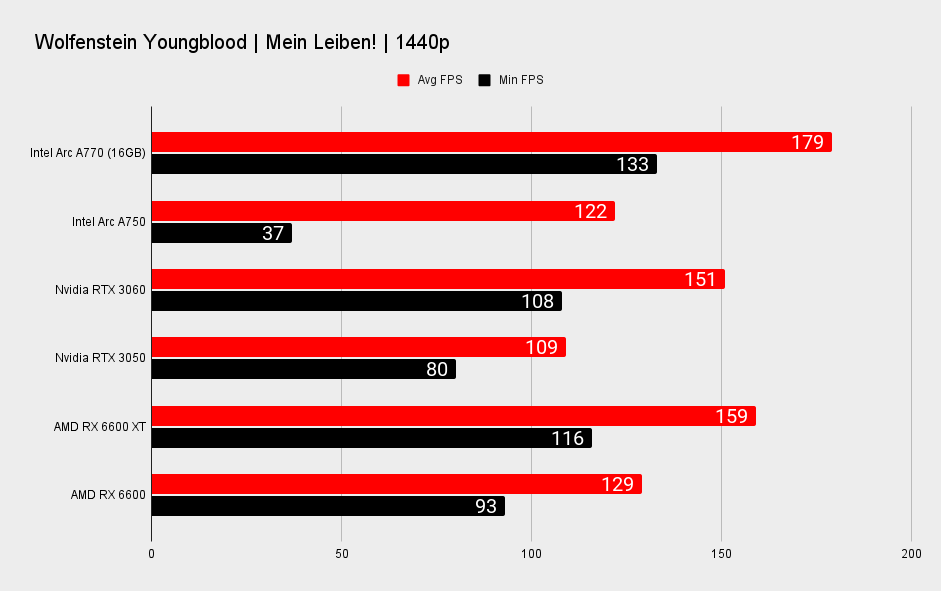

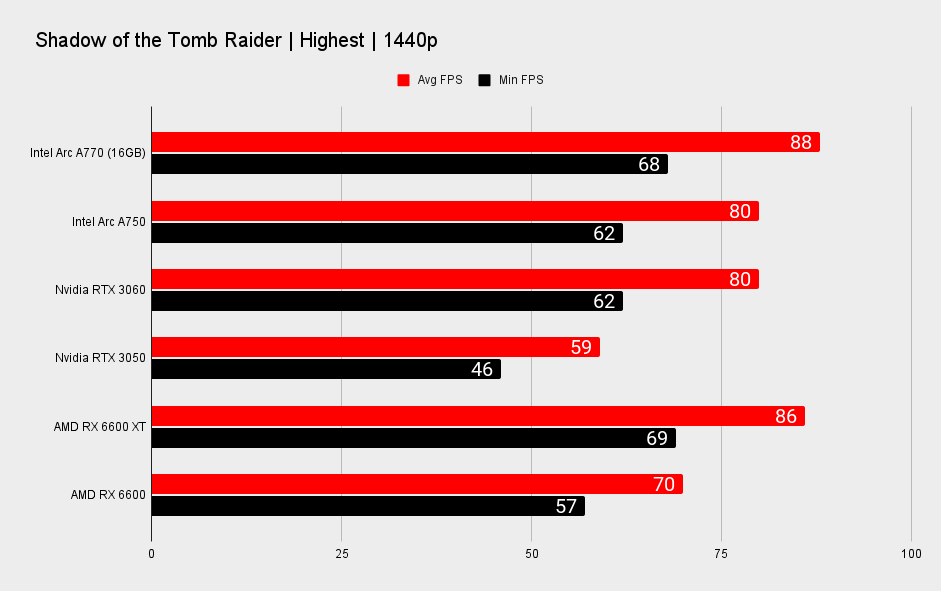

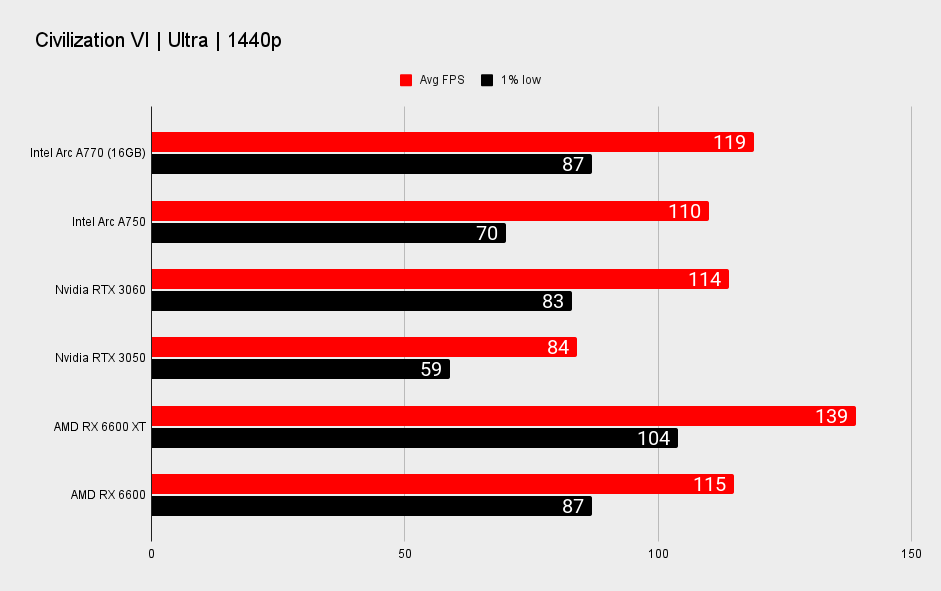

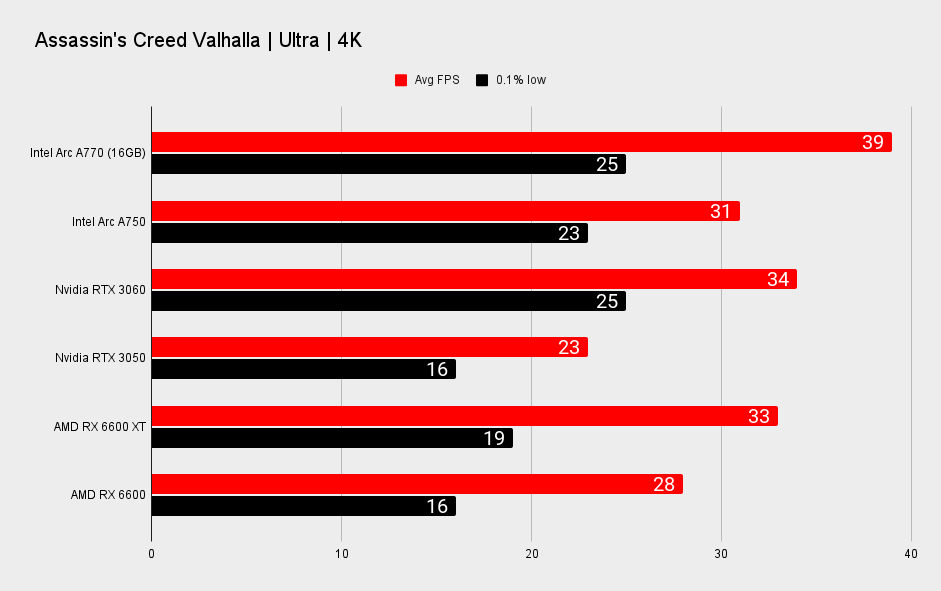

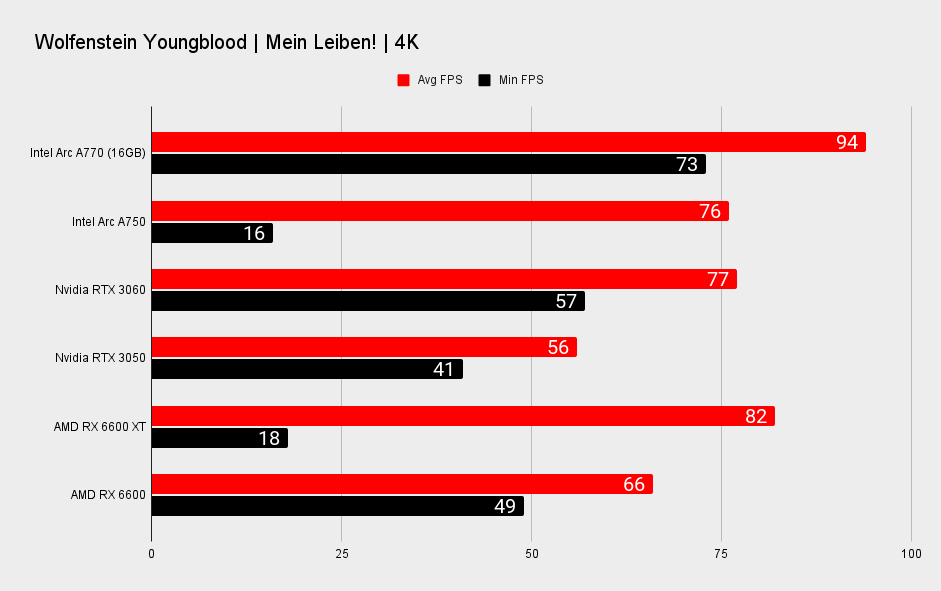

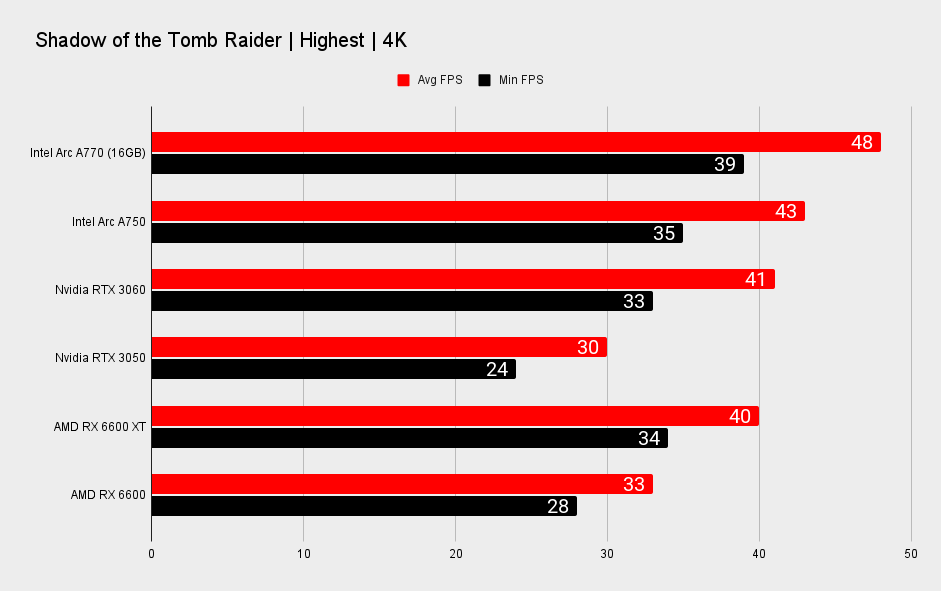

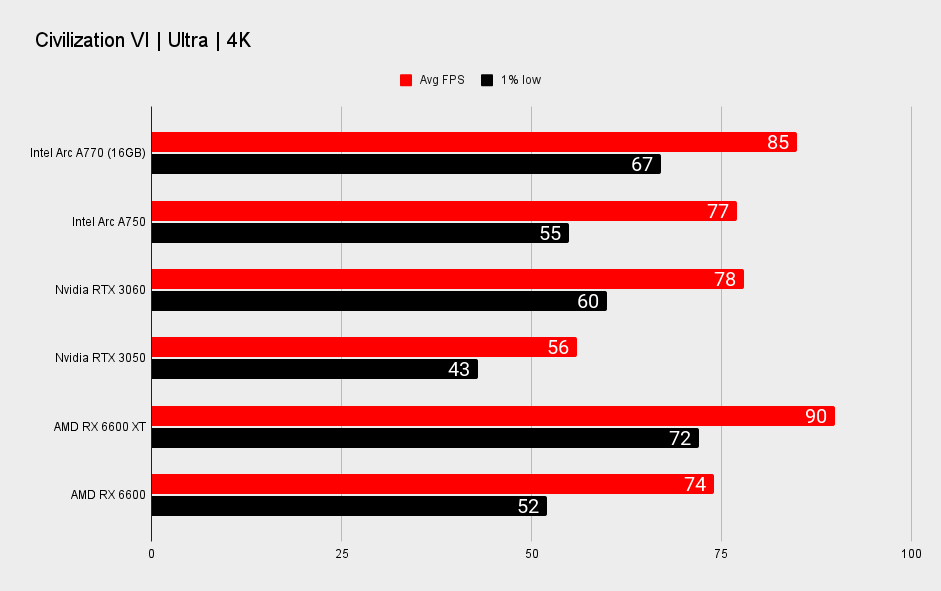

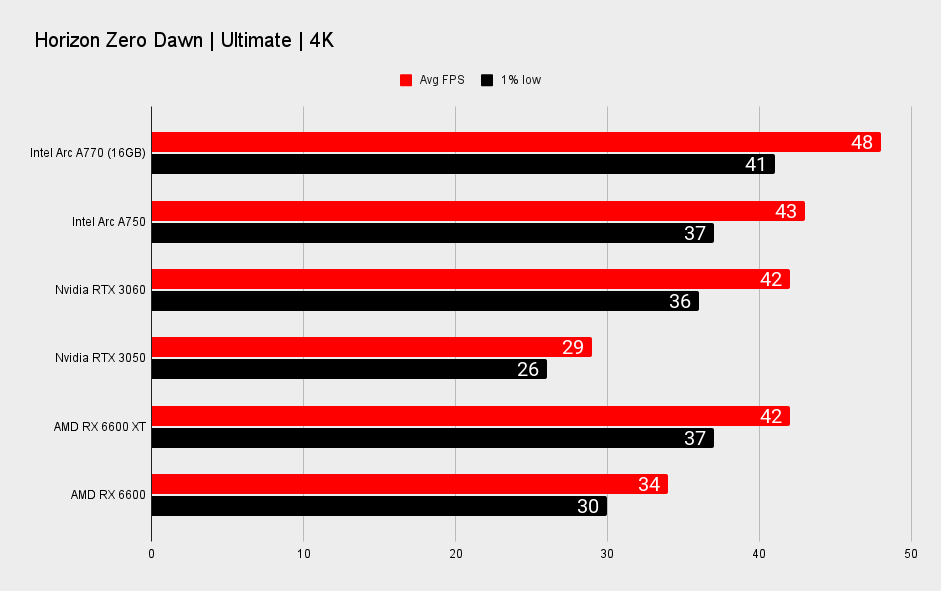

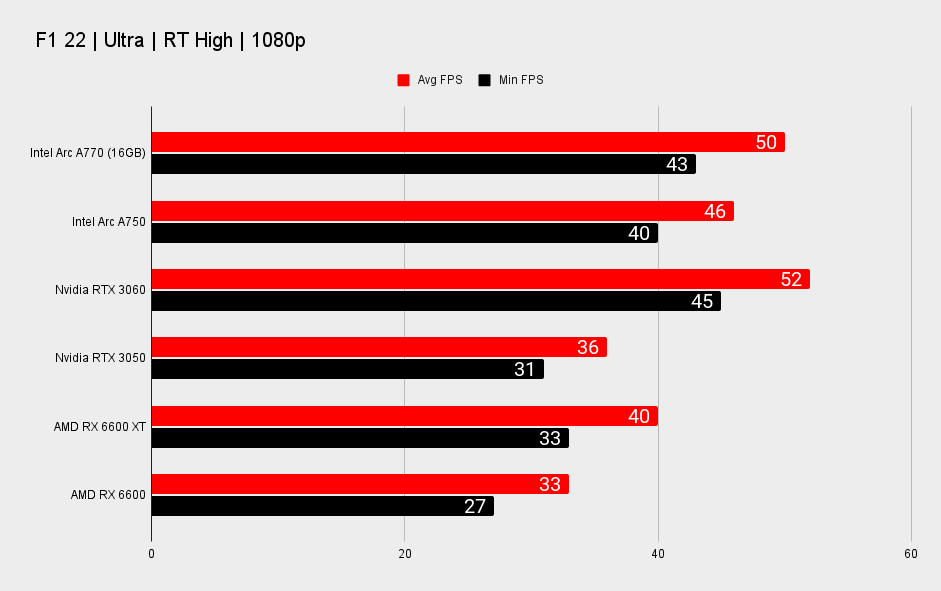

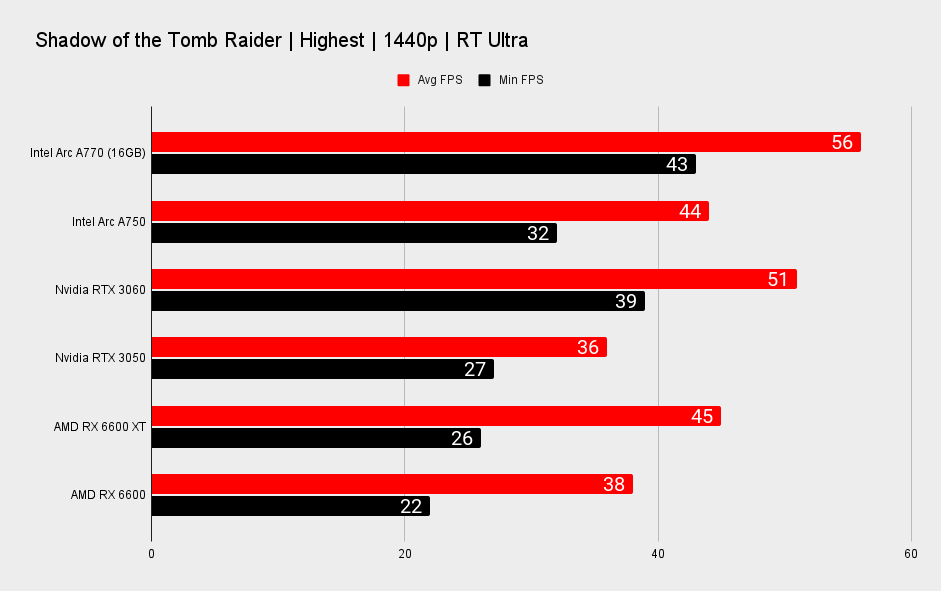

1080p performance

On the one hand: Metro Exodus, Shadow of the Tomb Raider, Horizon Zero Dawn, Civilization VI, Assassin's Creed Valhalla, and Wolfenstein: Youngblood. The Arc A770 is reliably quicker than Nvidia's GeForce RTX 3060 in these titles and able to deliver smooth, consistent frame rates at 1080p and 1440p. It's also beating Nvidia's card at 4K, though generally we expect to see less playable frame rates at this resolution on these more affordable cards.


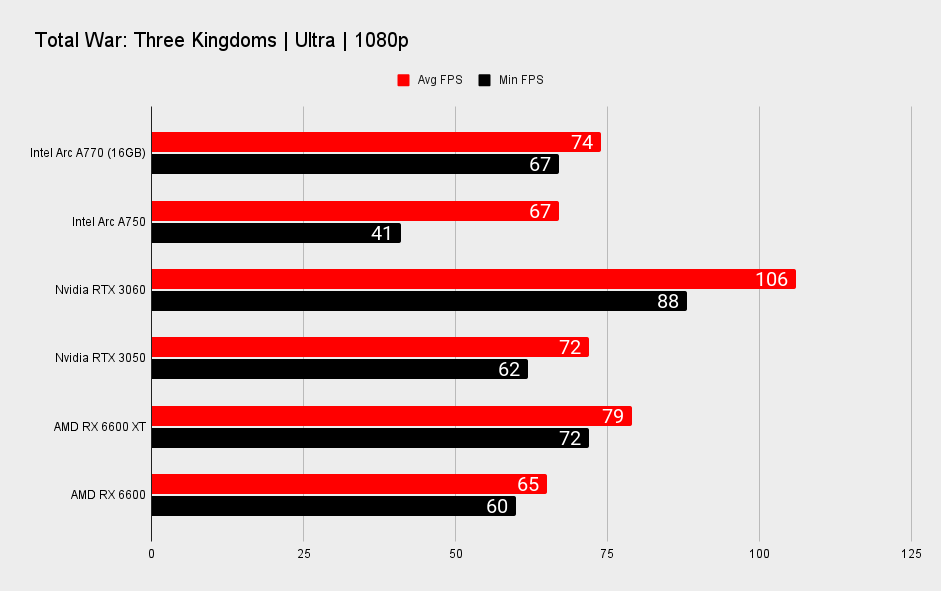

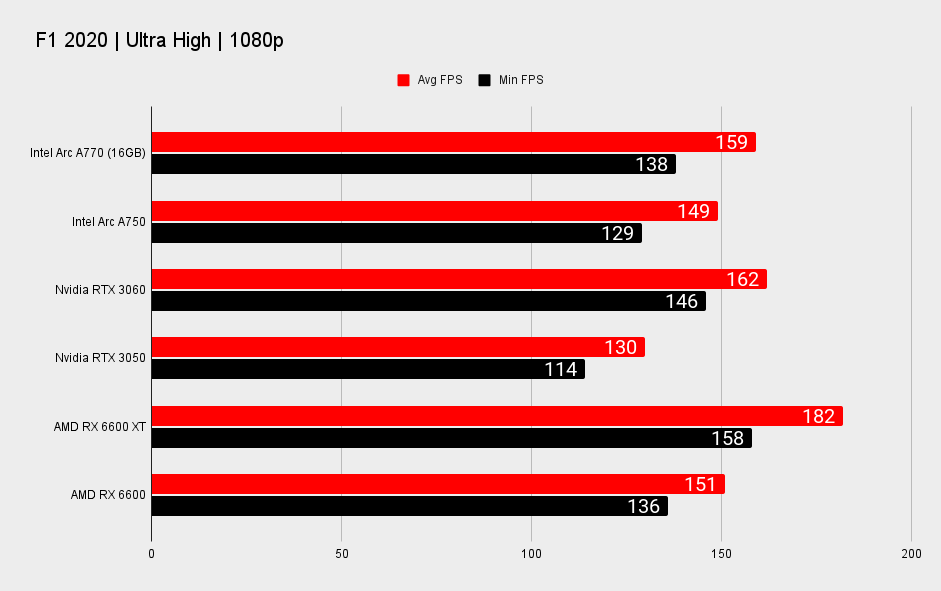

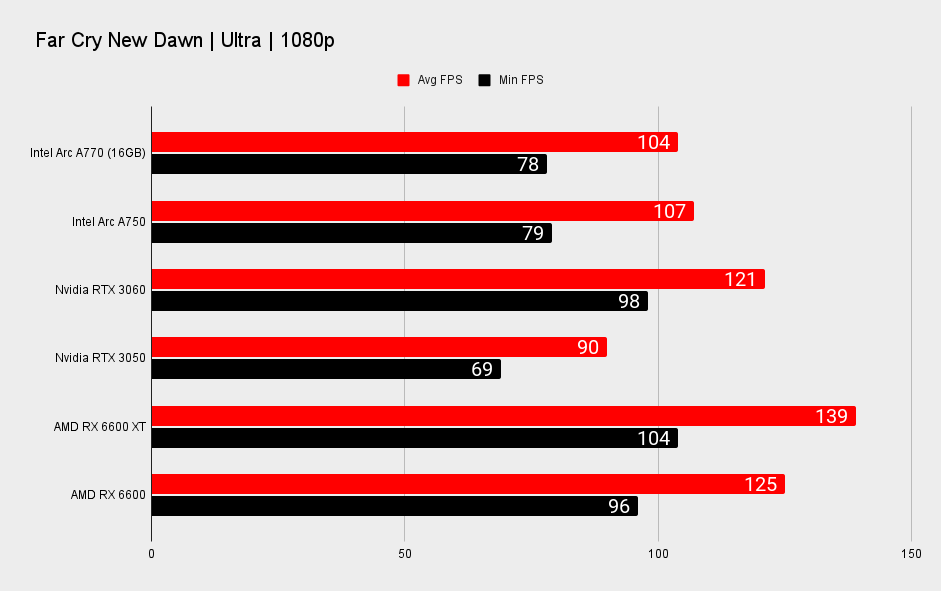

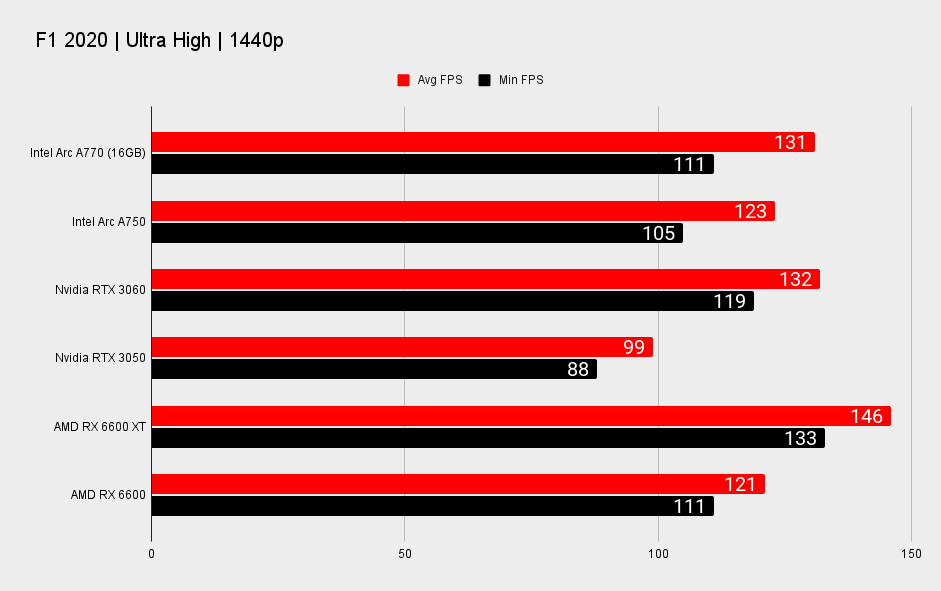

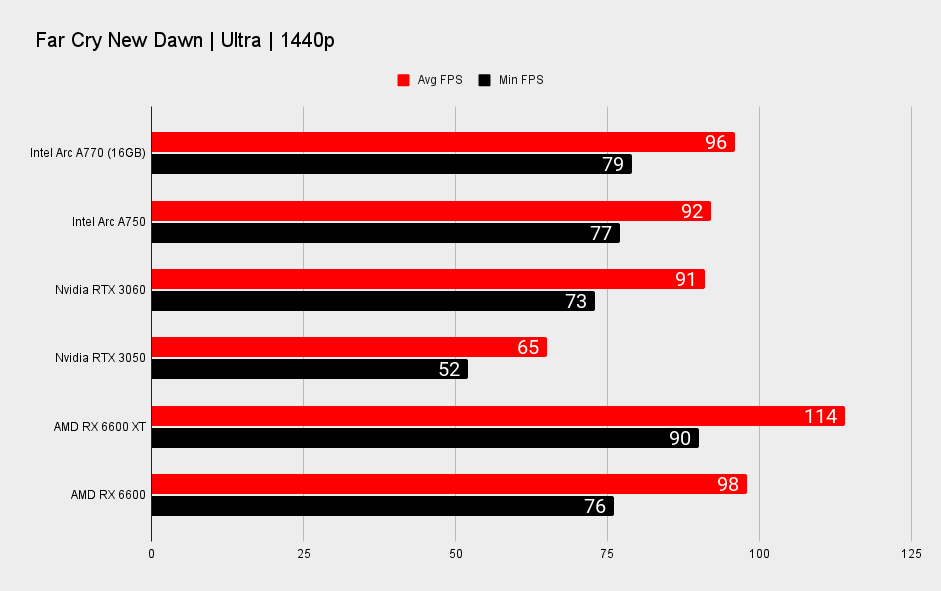

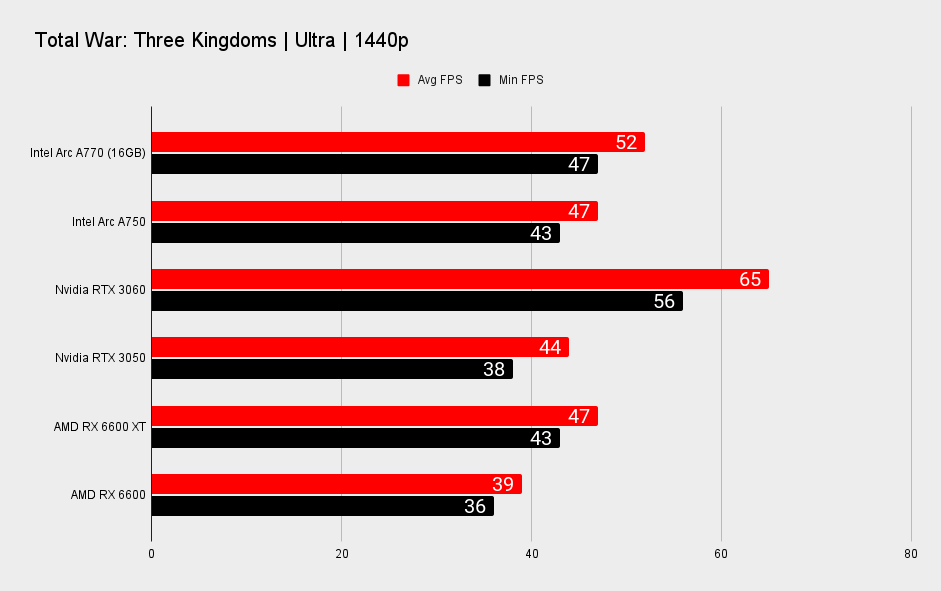

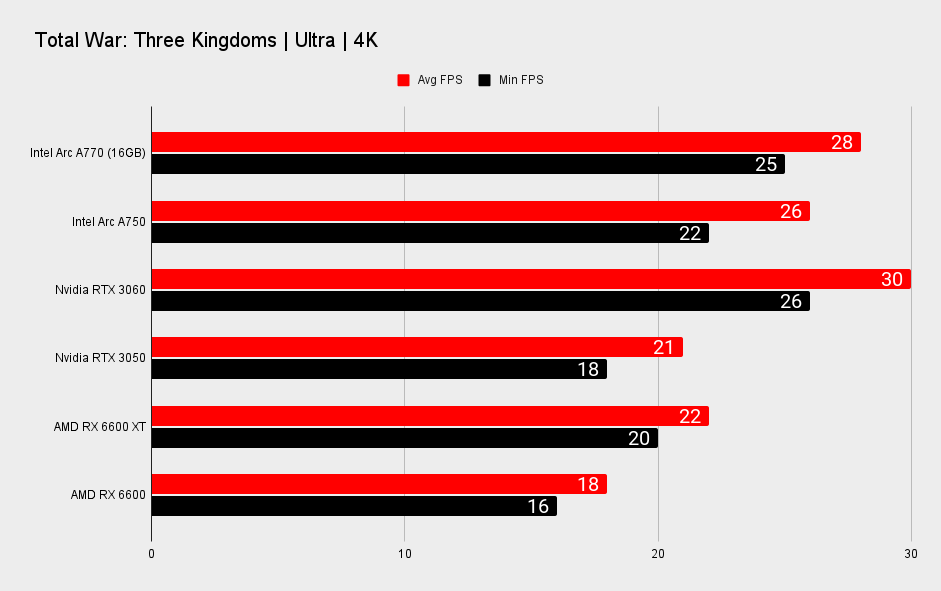

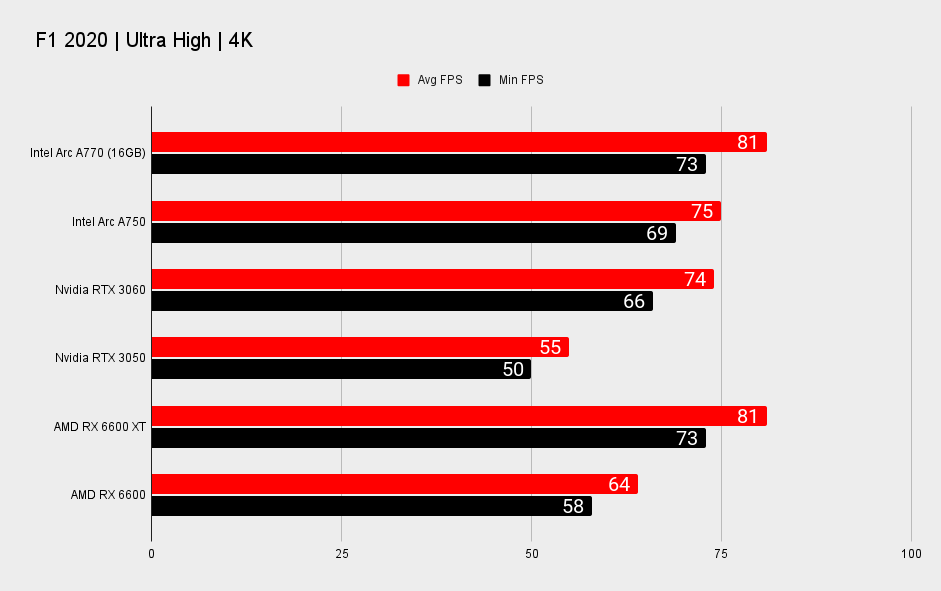

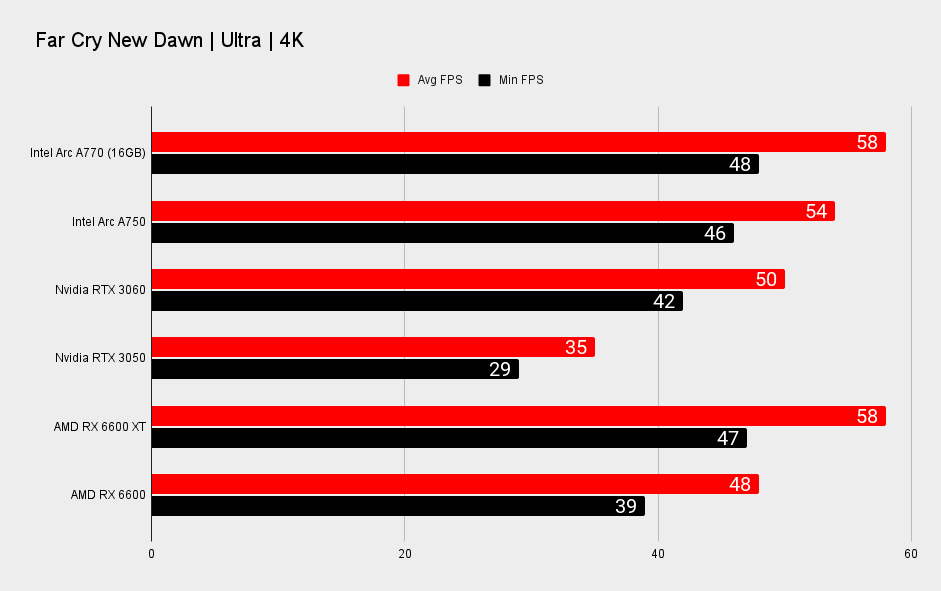

On the other hand, Total War: Three Kingdoms and, to a lesser extent, Far Cry New Dawn and F1 2020. These range from running only a couple points slower on Arc to up to 30% slower than an RTX 3060 in Total War.

Clearly, most of the games that are running better on the Arc A770 are using either the DX12 or Vulkan API.

Generally, DX11 game performance on the Arc A770 wasn't as bad as I was expecting it to be. Though that may have been the luck of the draw with our longstanding choice of games for our benchmarking suite. With just under a week to review both of Intel's new graphics cards, we've had to stick to our standard benchmarking suite, but there will be other cases to uncover, too.

We know that League of Legends, God of War, Destiny 2, and Rocket League don't take particularly well to Intel's GPUs from the company's own benchmarking numbers.

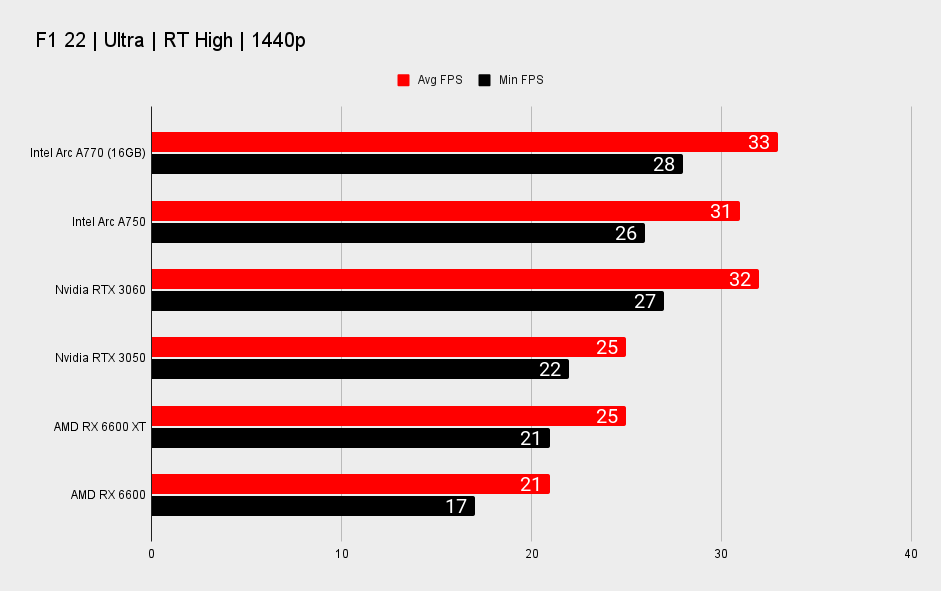

1440p performance

But something I've failed to mention so far, which might be glaringly obvious to anyone flicking through our performance graphs, is AMD's RX 6600 XT. This graphics card is as much a match for the Intel Arc A770 at 1080p, if not faster, and its 1440p performance might also have Intel worried, depending on the game. That's probably why the company has not really made a point of referencing this card in any of its pre-launch performance graphs.

Intel's problem is that the RX 6600 XT, which would have once set you back $379 or more, is now going for less than the Arc A770 Limited Edition. What makes matters worse is that AMD released a minutely faster card in the RX 6650 XT. At the time of writing, one of these is available for just $300. More often, however, you'll find this card going for around $320–340.

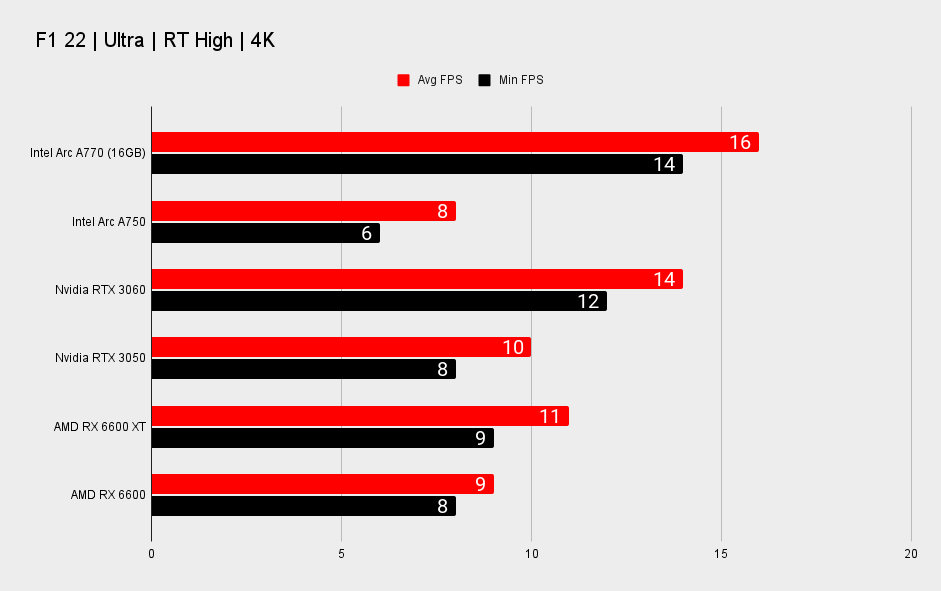

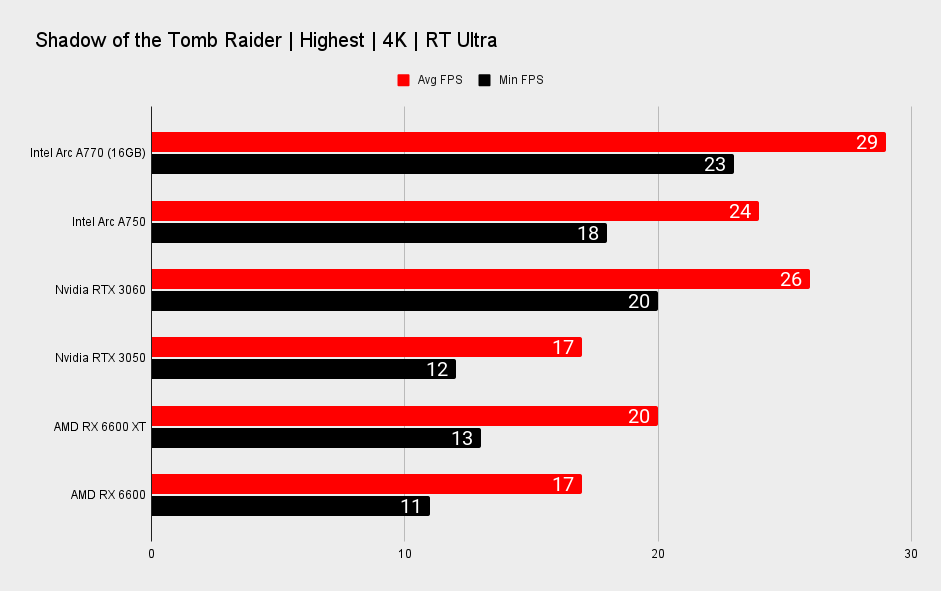

4K performance

The Arc A770 does take an unexpected lead at 4K, however. Now, granted, none of these cards are particularly adept at 4K gaming (there are simply too many pixels buzzing around the screen for them to manage), but as a point of competition it's interesting to wonder why this is the case.

In two out of the three games in which the Arc A770 falls behind an RTX 3060 at 1080p, the Arc graphics card actually comes out on top if you crank up the resolution to 4K. This is likely due to the increased memory capacity and memory bandwidth of Intel's GPU. The Arc A770 delivers memory bandwidth of 560GB/s; the RTX 3060 manages just 360GB/s. That means despite having a chunky 12GB of VRAM, Nvidia's card isn't quite as effective at putting it all to good use.
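For anyone wondering where those bandwidth figures come from, here is a minimal sketch of the usual back-of-the-envelope calculation: bus width in bits, multiplied by the per-pin data rate, divided by eight to get bytes. The Arc inputs are from the spec table above. The RTX 3060's 192-bit bus and 15Gbps memory are not stated in this review and are included here as an assumption, and the function name is purely illustrative.

```python
def memory_bandwidth_gb_per_s(bus_width_bits: int, data_rate_gbps: float) -> float:
    """Peak theoretical bandwidth: bits per transfer x Gbps per pin / 8 bits per byte."""
    return bus_width_bits * data_rate_gbps / 8


# Arc figures come from the spec table in this review.
arc_a770 = memory_bandwidth_gb_per_s(256, 17.5)
arc_a750 = memory_bandwidth_gb_per_s(256, 16.0)

# Assumption: RTX 3060 12GB uses a 192-bit bus with 15Gbps GDDR6 (not from this review).
rtx_3060 = memory_bandwidth_gb_per_s(192, 15.0)

print(f"Arc A770: {arc_a770:.0f} GB/s")
print(f"Arc A750: {arc_a750:.0f} GB/s")
print(f"RTX 3060: {rtx_3060:.0f} GB/s")
print(f"A770 advantage over RTX 3060: {arc_a770 / rtx_3060 - 1:.0%}")
```

These are peak theoretical numbers, so they say nothing about how well each architecture actually uses that bandwidth in a given game.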

The RX 6600 XT, with its relatively low memory bandwidth (partially bolstered by 32MB of Infinity Cache), isn't always able to stretch a lead from the Arc A770 at a 4K resolution either.

Though there are times when it's not quite so rosy for Alchemist. In the worst performing game on Arc, Total War: Three Kingdoms, even the Arc A770's bountiful bandwidth isn't enough to shut the door on Nvidia.

You'll want to consider upscaling algorithms to get higher frame rates out of any one of these affordable graphics cards at 4K, anyways.

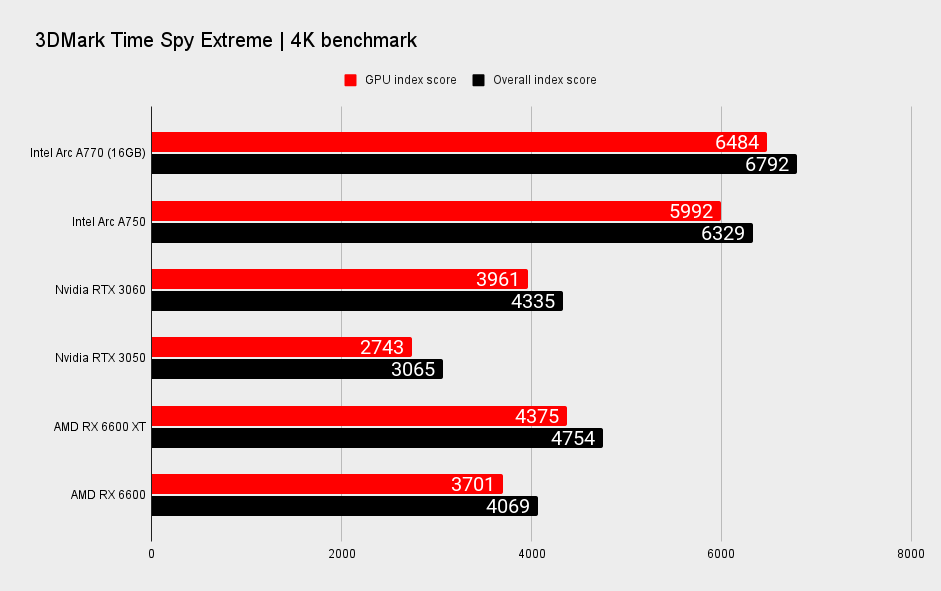

Synthetic benchmark performance

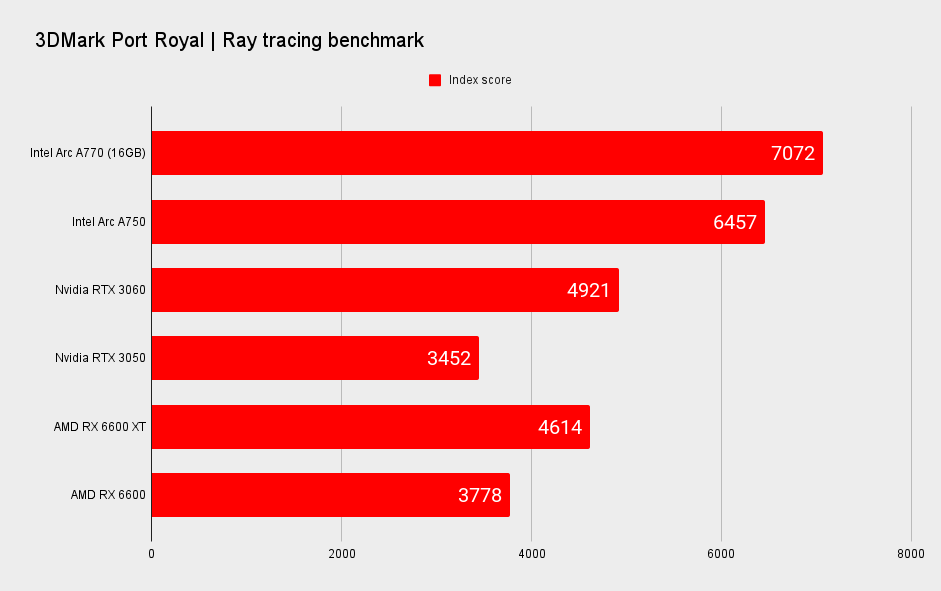

The Arc A770 seems to smash 3DMark benchmarks. A couple of these use ray tracing in some capacity, which might explain why Intel does so well, but Time Spy is also a DX12 benchmark and so plays to the Arc A770's strengths.

The thing to note here is that in 3DMark's new XeSS Feature Test you can see for yourself the impact that XMX acceleration has on XeSS upscaling performance. It's considerable. That could be seriously handy if XeSS takes off with game developers looking for a free boost to performance. It could also help mitigate some of the ray tracing performance hit, though Intel is surprisingly squared away on that front.

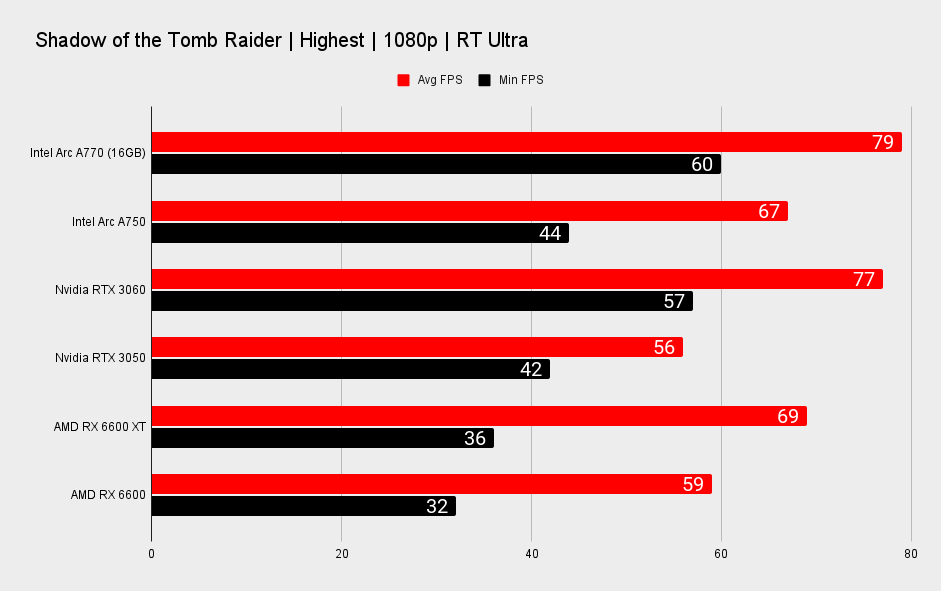

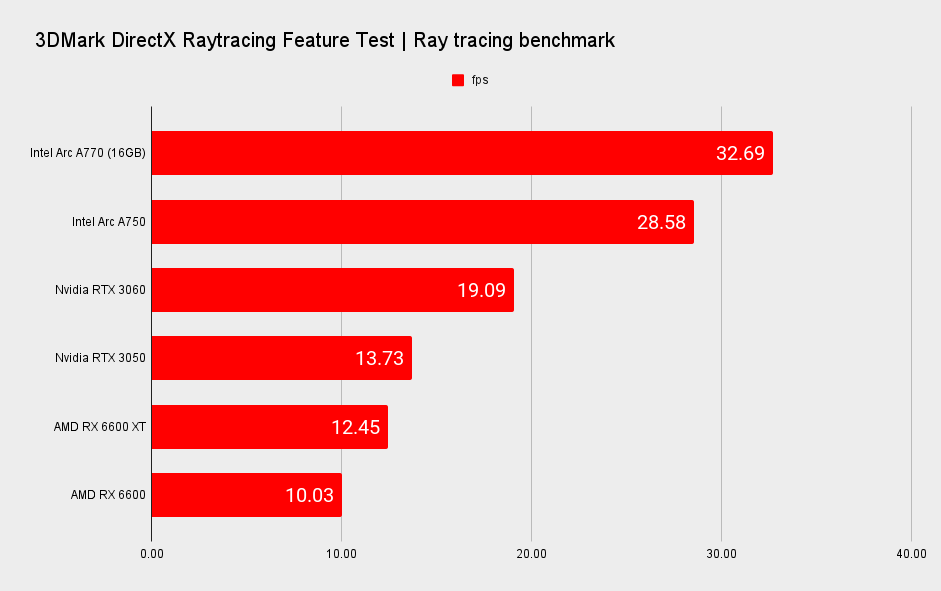

Ray tracing performance

It came as a bit of a surprise to me when Intel talked up its ray tracing acceleration as being competitive with Nvidia's—generally considered the best in the biz for real-time ray tracing—but it shows in-game. The A770 is, generally, on a level with the RTX 3060 for ray tracing performance, if not coming out slightly better in ray-traced games on an even footing.

It's in these ray-traced titles (Metro Exodus, F1 22, and Shadow of the Tomb Raider) that AMD's RX 6600 XT really struggles to keep up. RDNA 2's Ray Accelerators have never been much of a match for Nvidia's RT Cores, so that's no real surprise, but it's also why I am so surprised to see Intel seemingly nailing ray tracing with its first-generation acceleration.

Thermals and power

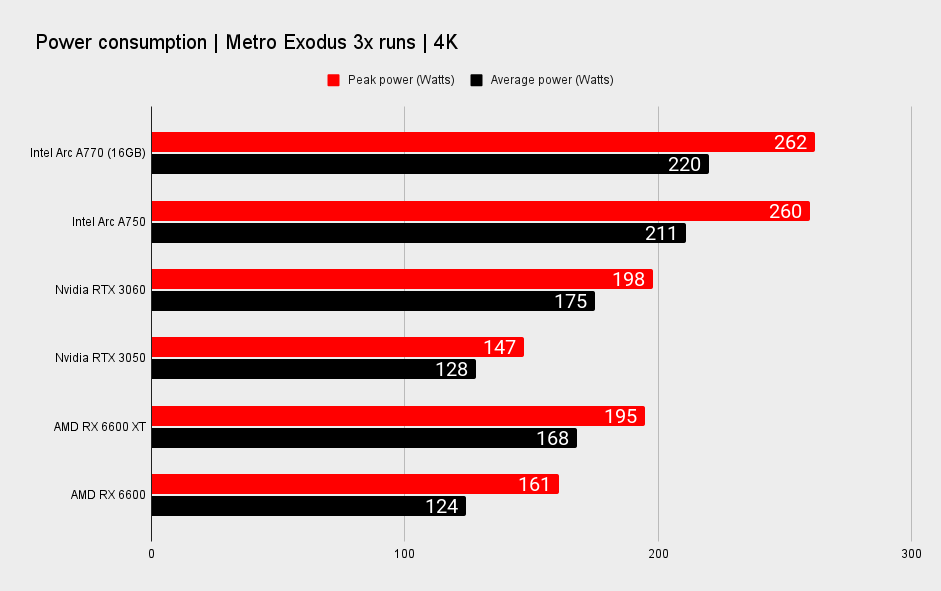

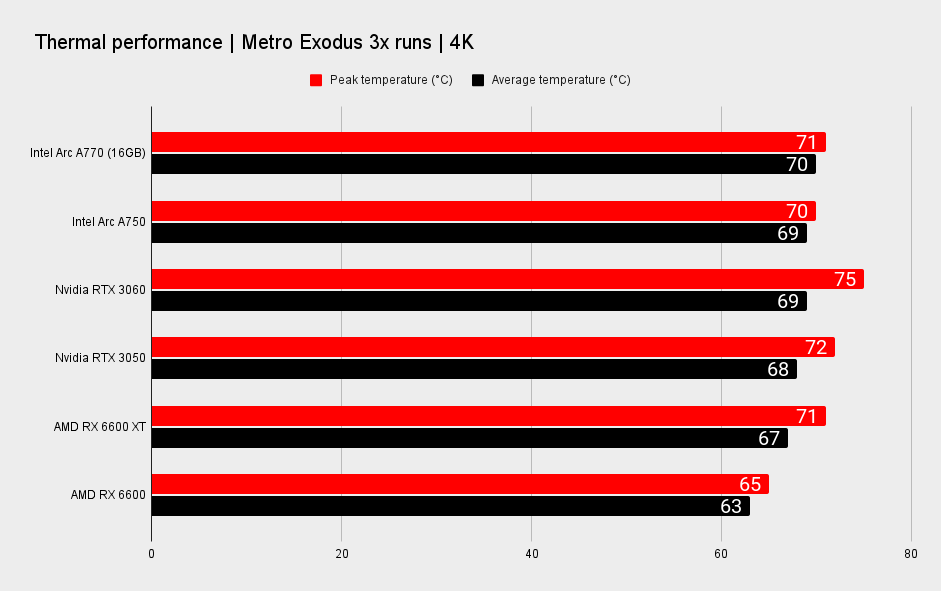

What of power, I hear you cry? The Arc A770 Limited Edition is a power-hungry card, consuming up to 262 watts during our power draw test. It rests around an average of 220 watts, but even then it's still far in excess of our Zotac RTX 3060.

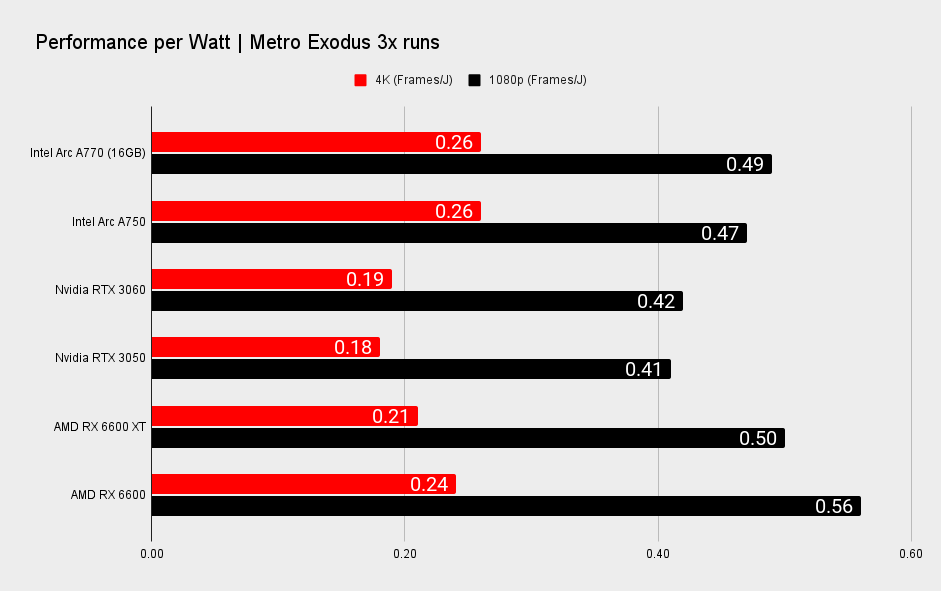

What can be said for the Arc A770 in terms of power is its actual efficiency when used with an optimised game, such as our power benchmark, Metro Exodus. It's not wasting power so much as it is asking for more and doing more with it, and relative efficiency is high for both 1080p and 4K runs. Metro Exodus has been our standard power draw benchmark for a couple of years now, but of course it's also the game that is best optimised for Intel Arc in our benchmarking suite.

The Arc A770 isn't always managing to do as much with its high power draw. If you measure power draw in the worst game of the lot for Arc, Total War: Three Kingdoms, it's a different story. At 4K, the Arc A770 manages just 0.13 Frames/J. At 1080p, it's just 0.39 Frames/J. These results are from the extremes on either end, and you'll likely actually experience something between the two.
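For reference, a Frames/J figure is simply average frame rate divided by average power draw, since a watt is one joule per second. The sketch below shows that arithmetic under that assumption; the function name and the example inputs are hypothetical and are not measurements from this review.

```python
def frames_per_joule(avg_fps: float, avg_power_watts: float) -> float:
    """Frames rendered per joule: (frames/second) / (joules/second)."""
    if avg_power_watts <= 0:
        raise ValueError("average power draw must be positive")
    return avg_fps / avg_power_watts


# Hypothetical inputs for illustration only, not benchmark results.
example = frames_per_joule(avg_fps=60.0, avg_power_watts=200.0)
print(f"{example:.2f} Frames/J")
```

A higher number means the card is doing more rendering work for every joule it pulls, which is why the same GPU can look efficient in one game and wasteful in another.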

Generally the Arc A770 tends to fall on the side of more power hungry than its competitors, but once again Intel's variable performance makes it tough to say exactly where it stands. This graphics card's performance is so dependent on the game being played that your mileage will massively vary.

Also while we can accurately extract power measurements from the card, our usual testing applications couldn't gather power and temperature data from either the Arc A770 or Arc A750. Intel's own graphics software, Arc Control, lets you run an overlay with the information, however, so I had to manually measure these metrics through that app.

Arc A770 analysis

How does the Arc A770 stack up?

Intel has managed to make the Arc A770 Limited Edition a competitive card through its more affordable $349 price tag, but would I recommend someone buy an Arc A770 for their next PC build?

Performance isn't excellent across all APIs and that does make it a harder recommendation. Not just because the odd DX11 game gives the Arc A770 a migraine, but also because I don't want to have to explain all of these caveats to the next person to ask me for help building a PC. At least in the Xe-HPG architecture's happy place, namely DX12 and Vulkan, it delivers excellent performance for 1080p and 1440p gaming. Especially in Metro Exodus. An added bonus is the Arc A770's actually decent ray tracing performance.

So in some respects, yes, I would say it's worth considering. But not before you've weighed up all your options.

I don't enjoy mulling what ifs for reviews, as it quickly gets too speculative, but Intel is betting on the RTX 3060 maintaining an inflated price tag in order to keep its GPUs looking like the much more affordable option. That might be a good call, price drops don't appear to be on the Nvidia CEO's mind right now, but there's definitely going to be some wiggle room for Nvidia on the price of that card. Whether it will choose to even acknowledge Arc, however, I'm not so sure.

The supply of Intel's Alchemist generation will play a role in how much of an impact they have in the market and on existing GPU prices. Nvidia isn't going to be dropping prices of anything unless Intel has a whole lot of these chips ready to go. Statements to this very point from Intel's Tom Petersen suggest Intel will take things slow to begin with.

Regardless, I feel the strongest competition to Intel's Arc A770 is from AMD. The RX 6600, RX 6600 XT, and RX 6650 XT encircle Intel's Arc lineup at launch, and I'm not sure AMD even needs to sweeten the deal any more to win over PC gamers on a budget. The affordable market really is a squeeze right now. Should one of Intel's partners produce a third-party card that's even a touch more expensive than MSRP, I'd probably recommend sticking to the tried and tested driver package of Team Radeon.

In fact, I'd recommend an RX 6600 XT at $329 to pretty much anyone that asked, regardless of whether it's actually any cheaper than Arc.


There are simply more caveats to performance on Intel's Arc Alchemist graphics cards than the rest. There's also the maturity of AMD and Nvidia's drivers that shines through in their performance and software packages. Intel may be able to play catch up—the company does say it plans to reduce its dependence on Resize BAR—but I suspect that there's no catching up with the decades of API driver development you get with Nvidia and AMD. Older APIs may always be left out in the cold in favour of newer ones.

I don't blame Intel for a focus on the latest APIs, though it would be wrong to consider it anything but a negative for customers in this crossover period in game dev. At least in the company's defence, while there once was a lengthy period of time when DX12 had teething issues and DX11 seemed irreplaceable for modern games, that's come and gone now. Some popular service games and competitive titles still use DX11 today, and probably will continue to do so for a long time, but we're seeing a significant and sustained shift from game developers to DX12 and Vulkan.

Ultimately, I don't blame you if you fall into the better safe than sorry camp and buy an RX 6600/XT. The Intel Arc A770 is a riskier buy. That said, I will put it forward for its frame rates in optimised games and its ray tracing chops—you will get the better end of a bargain at least some of the time.

Arc A770 verdict

Should you buy an Arc A770?

For what I had at one time expected to be a pretty brutal graphics card launch, Intel has brought Alchemist back from the brink and turned it into something. Something is better than nothing and the Intel Arc A770 is absolutely one better than that. If there was more stability across frame rates I don't think I'd have qualms in calling Intel's first-generation GPUs a qualified success, but as it stands today it's much tougher to make any sort of sweeping statement.

You just never know exactly how this graphics card is going to perform. It'll either knock the socks off an RTX 3060 or be buried alive by it. It's both praiseworthy and lacklustre, depending on the game you're playing on it. I'm not sure I expected anything more of a first-generation graphics card release. It's less buggy than I had thought it would be, at least.

The card itself is very well put together, too. The Intel Arc A770 Limited Edition looks and feels the part of a modern graphics card and its thermal performance is genuinely solid. On that front Intel, and prospective buyers, can be thoroughly happy.

Intel is also competitive with its price, and I think that's what puts the Intel Arc A770 Limited Edition into any sort of contention today. We're all still feeling the shockwaves of the GPU shortage—let's be honest, an RTX 3060 should be available for under its MSRP by now, even though it's not—and Intel has made an attempt to soothe cries from PC gamers for something, anything, more affordable. It's perhaps a shame Intel couldn't get this card out sooner, when AMD and Nvidia weren't dropping prices for their GPUs. Then again, Intel is using TSMC to manufacture its GPUs, the same chipmaker as AMD for RDNA 2 GPUs, so who knows if its supply would've been any better than the rest.


Ultimately, with the performance of the Arc A770 landing where it has, and not where Intel had hoped it to be, the company faced a decision: either price the card competitively or let the whole discrete gaming GPU project die on the vine. I'm glad it stuck this one out and tried to make the most of it, because it has ended up becoming something I can see making a good few PC gamers happy enough.

I might not choose the Intel Arc A770 for my next PC build—AMD seems to have a better hold on the budget market than anyone else today—but I wouldn't be disappointed if it came bundled with a cheap pre-built PC, either.

I have at times this year feared the worst for Intel's first gaming GPUs, but after this past week of testing, I'm actually more excited about what's to come. This first generation was always going to be a tough launch for Intel, and I think it ended up being even tougher than initially expected. However, if this is the baseline for the next generation, Battlemage, and after that Celestial, Intel appears on the right trajectory towards parity with the top guns in graphics at some point in time. And that's something I think we can all agree would be great for PC gaming.

Bring on Battlemage.


Jacob has been writing about PC hardware and technology for over eight years. He earned his first byline at PCGamesN before joining PC Gamer. He spends most of his time building PCs, running benchmarks, and trying his best to learn Linux.