Console gaming isn't that much simpler than PC gaming anymore

PC gaming is easier than ever, while the new consoles are getting more complex with PC-style graphics settings.

The first time I moved the PlayStation 5 between my desk and my TV, I booted it up to a screen I wasn't expecting: it chastised me for not fully shutting the system off, then quickly checked over its filesystem for corruption. PlayStation has had a similarly grumpy filesystem since the PS3, but on my PC, I can't remember the last time Windows cautioned me that my system hadn't shut down properly and asked to boot into safe mode. We all know what it's like to troubleshoot our PCs (is your RAM all the way in?) and crashy games, but today's reliable hardware and programs like Steam make PC gaming less fiddly than it's ever been. Gaming on a console, meanwhile, is starting to feel a lot more like gaming on a PC.

As consoles grow more powerful and more sophisticated, they also slowly lose that "just plug it in" simplicity that once defined them. Slam a cartridge into a Nintendo 64, turn it on, and you were playing. On the PlayStation 2 and Xbox, those with fancy TVs and video cables could turn on progressive scan or even 720p output to make their games look better, but that was about as complex as it got. Today, though, the conversation around every big new console game is dominated by the same debate players have been having on PC for decades: resolution or framerate? My perpetually stoned college roommate was making the same choice when Crysis came out in 2007, except instead of a binary choice he was turning every graphics option to the max so he could shoot down very detailed trees at 17 frames per second.

Just a few years ago, console games rarely offered any kind of performance settings at all. They became common with the launch of the PS4 Pro and Xbox One X, beefed-up consoles that could use more GPU power to run games at higher resolutions or higher framerates, but rarely both. Now, as a matter of course, console players have to choose between the two. Do they want a smoother, more responsive game, or a better image?

Ray tracing, the hot PC tech of 2018, is making that decision more complicated. It's not just about resolution anymore; it's about lighting, too, and fancy reflections, the kinds of small touches that help games inch closer to photorealism. This new binary choice sounds way simpler than PC gaming and its dozens of sliders and graphics options, but there's one thing the consoles are missing that's a given on PC: transparency.

On my PC, it's trivial to turn on a framerate counter to see how a game is performing. I can adjust some individual graphics settings, even if I don't know exactly what they do, and see how the picture changes. Did my framerate go up or down? Were those higher resolution textures worth the hit? On consoles, it's not necessarily clear what changes between the two settings, or if "performance mode" even guarantees a locked 60 frames per second. Digital Foundry has amassed millions of followers by expertly breaking down how games differ between these modes and between consoles, because it's really hard to scrutinize those differences yourself.
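For a rough sense of what a framerate counter is actually measuring, here's a minimal sketch in Python. It assumes you already have a list of per-frame render times in milliseconds from some capture tool; the function name `summarize_frame_times` and the sample numbers are made up for illustration, not taken from any real game or overlay. The idea is simply that a "locked 60" means every frame lands inside a budget of roughly 16.7 ms, and an average can hide the frames that don't.

```python
def summarize_frame_times(frame_times_ms, target_fps=60):
    """Summarize a capture of per-frame render times (in milliseconds).

    Hypothetical helper for illustration; real overlays gather these
    timings from the graphics driver or the game itself.
    """
    if not frame_times_ms:
        raise ValueError("need at least one frame time")

    # Time each frame is allowed to take if the target is never missed.
    budget_ms = 1000.0 / target_fps

    # Average framerate over the whole capture.
    total_ms = sum(frame_times_ms)
    average_fps = 1000.0 * len(frame_times_ms) / total_ms

    # The single slowest frame, expressed as an instantaneous framerate.
    worst_frame_fps = 1000.0 / max(frame_times_ms)

    # How many frames blew past the budget (the visible stutters).
    missed_frames = sum(1 for t in frame_times_ms if t > budget_ms)

    return {
        "budget_ms": budget_ms,
        "average_fps": average_fps,
        "worst_frame_fps": worst_frame_fps,
        "missed_frames": missed_frames,
    }


# Made-up capture: eight frames on target, two that took twice as long.
sample = [16.0] * 8 + [33.0] * 2
summary = summarize_frame_times(sample)
print(summary)
```

In this invented capture the average still works out to a little over 51 fps, which sounds fine, but two of the ten frames missed the 60 fps budget. That gap between the average and the individual frames is the kind of thing you can't see on a console without someone measuring it for you.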

When Digital Foundry authoritatively says a game runs at a higher resolution or smoother framerate on Xbox or PlayStation, that carries weight, and both console makers have leaned into that, making it a major part of their marketing in a way it wasn't a decade ago. Running older games at 60 or even 120 fps is a core promise from Microsoft, and just this week it said it wants first-party Bethesda games to be "first or better or best" on its platform. The question of which console to buy is no longer just about which one has the games you want to play; it's about which one plays the games you want a little better, too. That sounds an awful lot like buying a new graphics card.

Console players are learning to compromise in the same way most PC players have always had to, unless they could afford the very best hardware. On my GTX 1080, I know a lot of new games can't hit my monitor's 144 Hz refresh rate, but I'll usually find a mix of settings that guarantees my framerate doesn't dip below 60. I have to make peace with lower quality shadows or textures or less anti-aliasing. Console players now have to make peace with what they're missing out on when they choose a picture mode. 4K or 60 fps? Shouldn't my brand new $500 console be able to do both? Nope. Welcome to the world of tough decisions.

It seems like this console generation is poised to hew closer and closer to PC gaming, offering more choices beyond that performance/quality binary. The Xbox Series X version of Bright Memory includes its full graphics options with sliders for things like anisotropic filtering and reflection and shadow quality. Bright Memory was made by a single developer and I doubt it'll be a trendsetter, but more granularity does seem inevitable.

Demon's Souls on the PlayStation 5 lets you adjust motion blur, an option in far too few console games. Many games now offer customization like disabling the HUD or using a minimalist mode, something you could once only do on PC by editing a game's files. Photo modes are commonplace, thanks to the years dedicated screenshotters have spent running their games at 8K and using tools like Cheat Engine to freeze time and get the perfect shot.

And both the PS5 and the Xbox Series X support 120 Hz refresh rates on compatible TVs, which means at some point the conversation shifts from "30 vs. 60" to "what about 120?" In a few years, it might make a lot of sense for developers to offer more choices that let console players push their framerates beyond 60 fps, even if the baseline is still a ray traced 30.

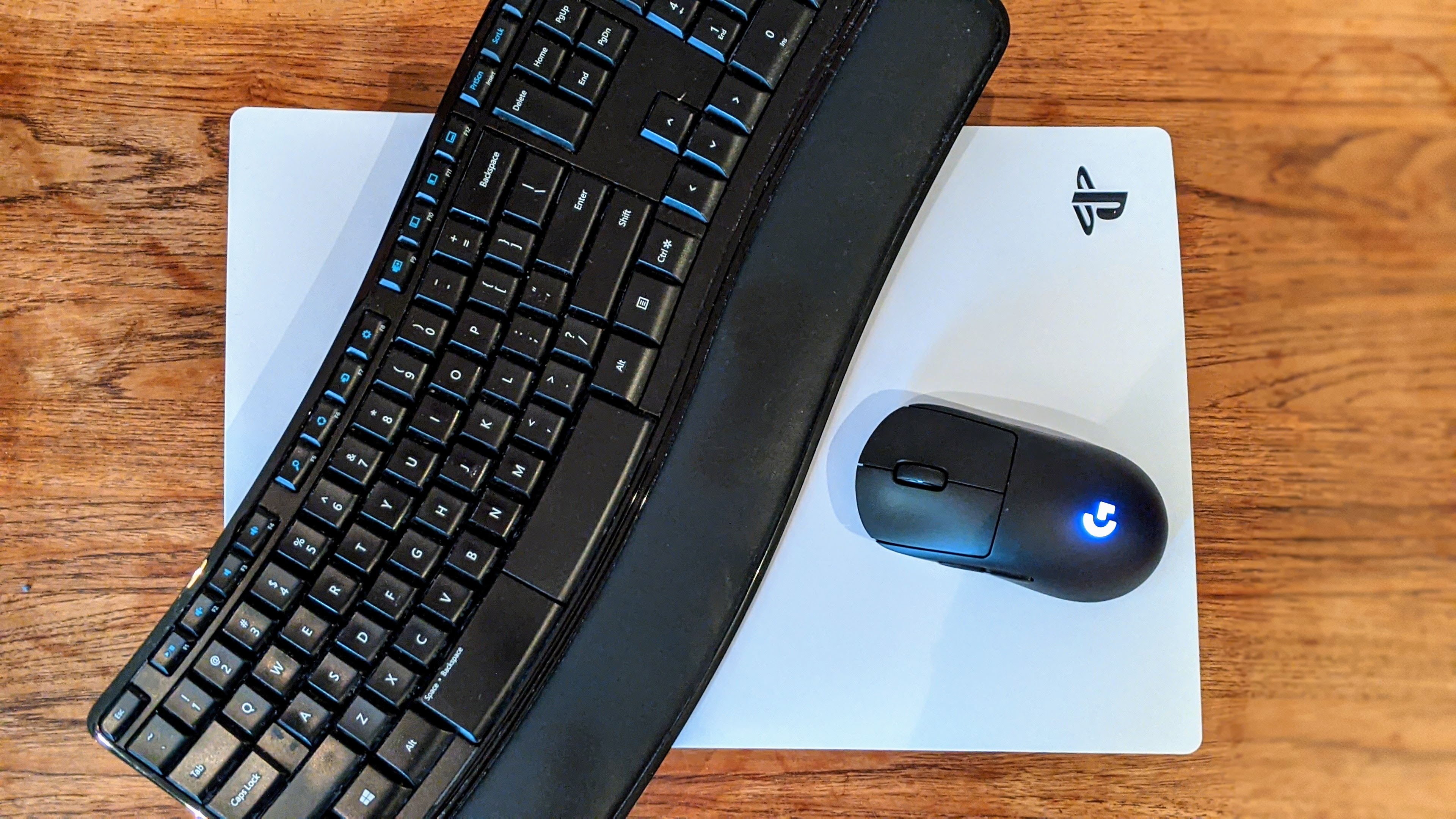

As console technology continues to advance, Sony, Microsoft and game developers will all have to reckon with an audience increasingly invested in how their games perform, and offer as much detail in the user interface as possible for things like HDR and variable refresh rates. If the UI is too simple, that can end up making it harder to do basic tasks (I was briefly baffled that I had to go into the PS5's Settings menu just to see my video files, since it has a whole section on its dashboard called "Media").

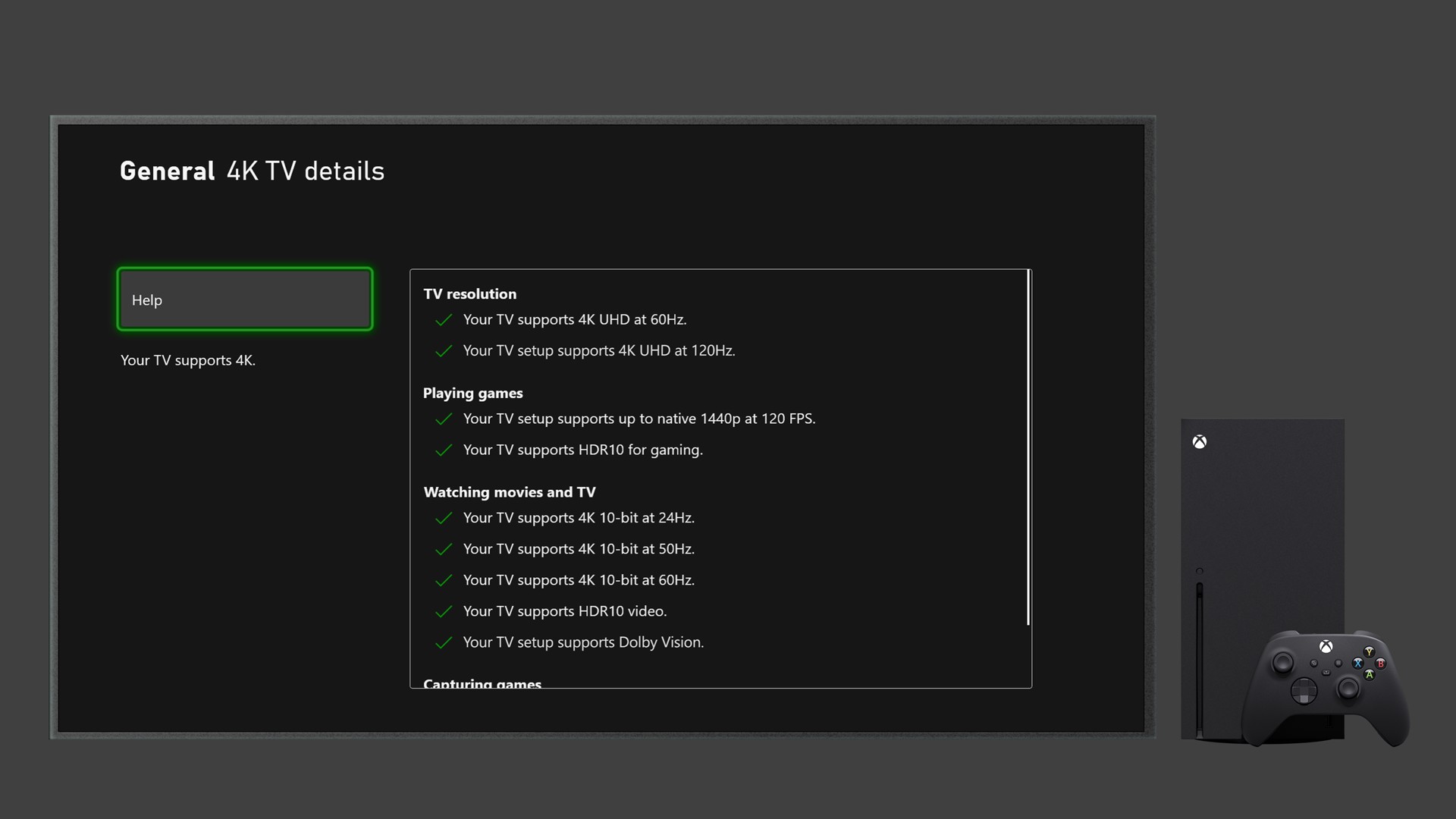

Microsoft is already doing a great job here, with informative network and video settings menus that help explain things to the less tech savvy. Every game with graphics settings should explain what they do, too.

Even though I still mostly game on PC, I'm excited to see the consoles embrace so many of PC gaming's defining characteristics. It should now be obvious to millions more players that, yes, the human eye really can see a difference between 30 fps and 60 fps, that hard drives have been holding back load times for years, and that scrutinizing technical considerations like frame pacing and anti-aliasing between console versions can help you better appreciate just how complex games are. Consoles may only be offering a binary performance option for now, but they've already made millions of players savvier about what that choice entails.

Wes has been covering games and hardware for more than 10 years, first at tech sites like The Wirecutter and Tested before joining the PC Gamer team in 2014. Wes plays a little bit of everything, but he'll always jump at the chance to cover emulation and Japanese games.

When he's not obsessively optimizing and re-optimizing a tangle of conveyor belts in Satisfactory (it's really becoming a problem), he's probably playing a 20-year-old Final Fantasy or some opaque ASCII roguelike. With a focus on writing and editing features, he seeks out personal stories and in-depth histories from the corners of PC gaming and its niche communities. 50% pizza by volume (deep dish, to be specific).
