An ‘ultra-bandwidth’ successor to HBM2e memory is coming but not until 2022

It will be a while before high-end GPUs adopt the next-gen memory standard.

Micron is developing new memory standards to power the graphics cards of tomorrow. A few days ago we covered the revelation that Nvidia's upcoming GeForce RTX 3090 will feature GDDR6X memory at up to 21Gbps and, in the same tech brief we extrapolated that information from, Micron also mentions an ultra-bandwidth HBMnext standard that is in development.

Unfortunately, the tech brief does not go into much detail about what to expect from HBMnext, AKA the next generation of high bandwidth memory, other than when it is coming.

"HBMnext is projected to enter the market toward the end of 2022. Micron is fully involved in the ongoing JEDEC standardization. As the market demands more data, HBM-like products will thrive and drive larger and larger volumes," Micron states.

And that's all we get on the matter, under a section of the tech brief appropriately titled, "A Glimpse Into the Future."

Micron was a bit more forthcoming about its HBM2e memory solution, which it says is fully JEDEC-compliant and on track to arrive this year. It will be available in four-layer 8GB and eight-layer 16GB stacks, with transfer speeds of up to 3.2Gbps per pin. For comparison, the latest revision of HBM2 found on Nvidia's A100 accelerator hits 2.4Gbps.
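To put those per-pin figures in context, here is a rough back-of-the-envelope sketch of peak bandwidth per stack. It assumes the 1,024-bit interface per stack that the JEDEC HBM2 standard defines, which is not a number from Micron's tech brief, and the function name is just for illustration.

```python
def stack_bandwidth_gbytes(pin_rate_gbps: float, bus_width_bits: int = 1024) -> float:
    """Peak bandwidth of one memory stack in GB/s.

    bus_width_bits defaults to 1024, the per-stack interface width of HBM2
    (an assumption taken from the JEDEC standard, not from the tech brief).
    """
    # Each pin moves pin_rate_gbps gigabits per second; divide by 8 for bytes.
    return pin_rate_gbps * bus_width_bits / 8


for label, rate in [("HBM2 at 2.4Gbps", 2.4), ("HBM2e at 3.2Gbps", 3.2)]:
    print(f"{label}: {stack_bandwidth_gbytes(rate):.1f} GB/s per stack")
```

Under that assumption, the jump from 2.4Gbps to 3.2Gbps works out to roughly 307GB/s versus 410GB/s per stack, before multiplying by however many stacks a given accelerator carries.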

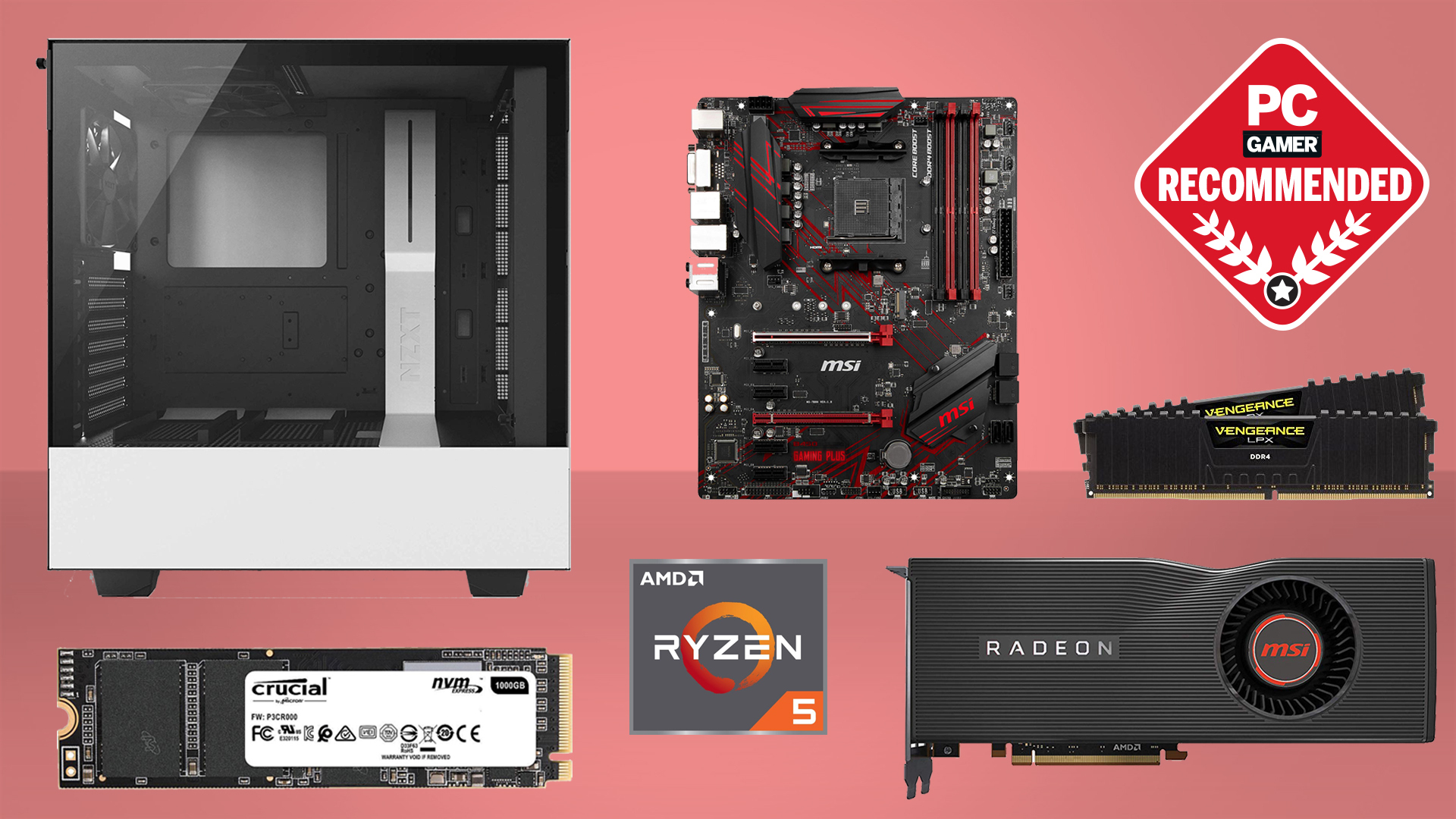

HBM2e is primarily intended for accelerators used for AI training, and Micron is not the only one producing it—so are Samsung and SK Hynix. However, AMD has dabbled with high bandwidth memory on some of its consumer products, and there are rumors that at least one version of its next-generation Navi GPUs will feature HBM2e, though nothing has been announced yet.

The main reason HBM is not featured more prominently in the consumer space is that it is expensive compared with GDDR5 or GDDR6.

"HBM is a powerful version of ultra-bandwidth solutions, but it is a relatively high-cost solution due to the complex nature of the product. HBM is targeted toward very high-bandwidth applications that are less cost sensitive," Micron notes.

This type of memory essentially entails stacking multiple layers of DRAM, which reside on the same silicon interposer as the GPU. The end result is greater density and higher throughput at lower clock rates, but at a far higher price.

Micron has yanked its tech brief offline, though NuLuumo on Reddit posted a mirror link where you can still download and view it as a PDF.

Thanks, Tom's Hardware

Paul has been playing PC games and raking his knuckles on computer hardware since the Commodore 64. He does not have any tattoos, but thinks it would be cool to get one that reads LOAD"*",8,1. In his off time, he rides motorcycles and wrestles alligators (only one of those is true).