Our Verdict

The Radeon RX 5700 XT is faster and more efficient than its predecessors. While it lacks ray tracing support, it's the best GPU AMD currently offers.

For

- More efficient RDNA architecture

- Beats Vega 64 and 2060 Super

- Good 1080p and 1440p performance

Against

- No ray tracing support

- Higher temps and power than Nvidia

- The "dented" shroud looks weird


After months of waiting and speculation, AMD's Radeon RX 5700 XT is finally here, joining the ranks of the best graphics cards. It sports a new RDNA architecture that boosts performance while reducing power requirements, and a price drop keeps AMD competitive with Nvidia's new RTX 2060 Super, which launched last week, so expectations from the faithful have been high. This is AMD's first new GPU architecture since GCN (Graphics Core Next) came out all the way back in 2012. Our primary purpose today is to show how it performs, check out the new features, and determine how it stacks up to the competition, both from Nvidia and from AMD's existing portfolio.

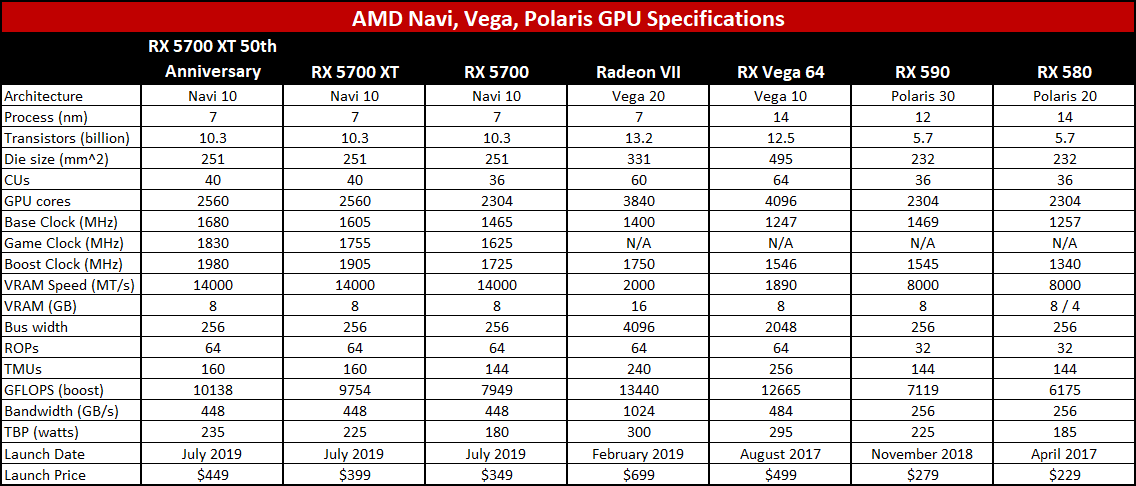

I've previously discussed AMD's new Navi / RDNA architecture in detail, so if you want to find out exactly what makes the Radeon RX 5700 XT tick, that's a good place to start. The short summary is that AMD has focused on improving efficiency, reworked the Compute Units (CUs) to boost utilization and performance, and shifted to TSMC's 7nm process technology. Here's a quick look at the specs, comparing the new RX 5700 models with several of AMD's previous generation GPUs:

All three RX 5700 models use the same Navi 10 GPU, with the 5700 XT cards sporting fully enabled chips while the RX 5700 disables four CUs and 256 cores. Clockspeeds also vary for the GPU, but the GDDR6 memory in all cases runs at 14Gbps (14 GT/s). The new Navi 10 GPUs end up with a die size slightly larger than the previous Polaris GPUs, with only a few extra CUs. However, each CU has been reworked with the new RDNA architecture, and the result as we'll see shortly is that even with fewer cores / CUs the RX 5700 XT easily outperforms the Vega 64—and it does so while using substantially less power.
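The core-count arithmetic is easy to check: four disabled CUs accounting for 256 cores implies 64 cores per CU. The sketch below assumes 40 CUs on a fully enabled Navi 10 and a 256-bit memory bus (neither figure is stated in the text above, so treat both as assumptions), and the helper names are hypothetical. It also shows how the 14Gbps GDDR6 translates into peak memory bandwidth:

```python
# Hypothetical helpers for sanity-checking the Navi 10 configurations.
# Assumptions (not stated in the review text): 64 stream processors per RDNA CU,
# 40 CUs on a fully enabled Navi 10, and a 256-bit GDDR6 memory bus.

SHADERS_PER_CU = 64  # implied by "four CUs and 256 cores"


def shader_count(compute_units: int) -> int:
    """Total stream processors for a given number of enabled CUs."""
    return compute_units * SHADERS_PER_CU


def memory_bandwidth_gbs(data_rate_gbps: float, bus_width_bits: int) -> float:
    """Peak bandwidth in GB/s: per-pin data rate times bus width, over 8 bits per byte."""
    return data_rate_gbps * bus_width_bits / 8


full_navi10 = shader_count(40)     # RX 5700 XT, fully enabled chip (assumed 40 CUs)
cut_navi10 = shader_count(40 - 4)  # RX 5700 disables four CUs

print(full_navi10, cut_navi10, full_navi10 - cut_navi10)
print(memory_bandwidth_gbs(14, 256))  # assumed 256-bit bus
```

Because every RX 5700 model uses the same 14Gbps memory on the same bus, the bandwidth figure is identical across the family; only shader counts and clocks differ.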

I mentioned this previously, but AMD's addition of a new 'Game Clock' muddies the waters, with AMD reporting maximum performance using the boost clock instead of the game clock. The game clock is a relatively conservative estimate of the actual in-game clockspeeds the RX 5700 family will see, meaning games will usually run at higher clockspeeds. That's the same approach Nvidia takes with its boost clock, while AMD's boost clock is (sort of) the maximum clock the GPUs will see (there's actually still some 'silicon lottery' luck involved where some GPUs may actually exceed the stated boost clock).

Anyway, don't get too hung up on comparing GFLOPS as there are architectural differences and in the end it's gaming performance that matters. For example, the RX 5700 XT has theoretical performance of 9754 GFLOPS while the Vega 64 has theoretical performance of 12665 GFLOPS. But the Navi / RDNA architectural changes more than make up for that deficit—just like Nvidia's RTX 2060 Super and its 7181 GFLOPS ends up delivering far better results in most games than the numbers would otherwise suggest. Basically, GFLOPS comparisons across architectures usually end up as apples and oranges.
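The GFLOPS figures above follow the usual formula: shader count × 2 FLOPs per clock (one fused multiply-add) × clock speed. This quick sketch reproduces them, assuming the commonly listed reference core counts, boost clocks, and the RX 5700 XT's 1755MHz game clock (none of which appear in the text above). It also shows how much the game clock versus boost clock choice shifts the headline number:

```python
# Theoretical FP32 throughput: each shader retires one fused multiply-add
# (counted as 2 FLOPs) per clock. Core counts and clocks below are assumed
# reference specs, not figures taken from the review text.


def fp32_gflops(shaders: int, clock_mhz: float) -> float:
    """Peak single-precision GFLOPS = shaders * 2 FLOPs/clock * clock (MHz) / 1000."""
    return shaders * 2 * clock_mhz / 1000


cards = {
    "RX 5700 XT (1905 MHz boost)": (2560, 1905),
    "Vega 64 (1546 MHz boost)": (4096, 1546),
    "RTX 2060 Super (1650 MHz boost)": (2176, 1650),
}

for name, (shaders, clock) in cards.items():
    print(f"{name}: {fp32_gflops(shaders, clock):.0f} GFLOPS")

# The same RX 5700 XT at its 1755 MHz game clock (also an assumed spec).
print(f"RX 5700 XT (1755 MHz game clock): {fp32_gflops(2560, 1755):.0f} GFLOPS")
```

On paper the Vega 64 leads the RX 5700 XT by roughly 30 percent, yet the newer card wins in games. That gap between theory and results is exactly why cross-architecture GFLOPS comparisons mislead.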

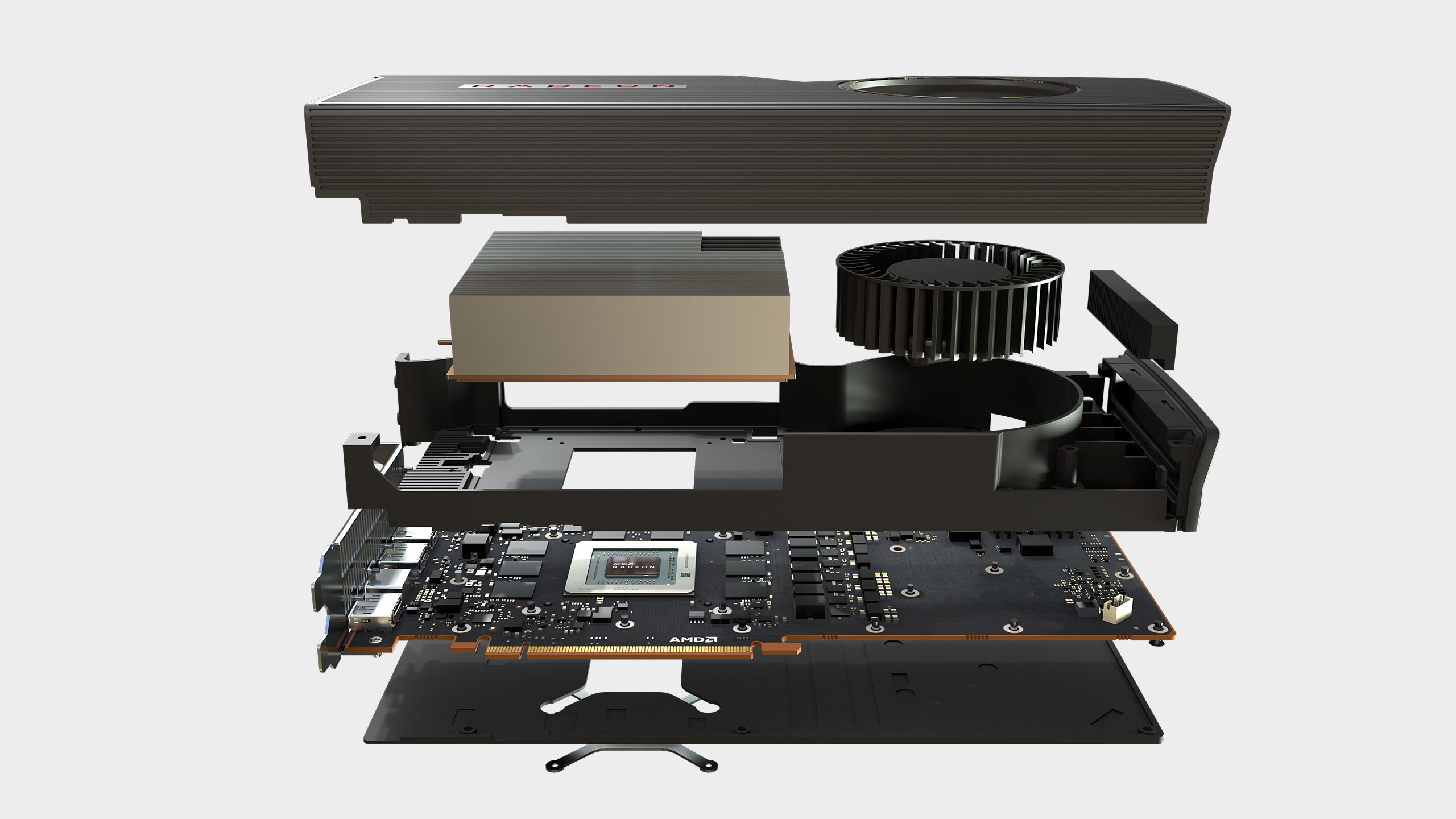

AMD provided reference models of the RX 5700 XT and RX 5700 (but not the 50th anniversary limited edition) for testing. While the circuit boards, memory, and GPU are the same, there are some design differences for the cooling shroud. Specifically, the RX 5700 XT has a grooved shroud with a curved section that has resulted in some "dented" jokes. The idea is that the indent provides for additional airflow, particularly in confined spaces—like if you're running CrossFire. I'm not sure how much it really matters, and often the curviness looks more like a weird manufacturing defect than something truly necessary, but it ends up mostly as an aesthetic opinion. Some may like it, some won't, and there will inevitably be custom cards from AMD's partners that ditch the blower cooling and go with two or three fans.

AMD says the new cooling is better than on its previous generation cards, though I'm not fully convinced. It's not extremely loud, a far cry from the cooling on the Vega cards, but that probably has more to do with the lower power requirements than any massive change in the cooling design. Idle noise and thermals tend to be about the same for most GPUs, but playing a game is a different matter. Compared to the RTX 2060 Super, the RX 5700 XT runs just as quietly, but it uses about 25W more power at the outlet and runs about 11C hotter. It's not the end of the world, but objectively Nvidia wins this comparison.

Speaking of Nvidia, it muddied the waters last week with its launch of the RTX 2060 Super and RTX 2070 Super—models with more cores than the non-Super variants, plus 2GB extra VRAM for the 2060 Super. AMD initially targeted pricing and performance that would put the RX 5700 XT ahead of the RTX 2070, but the RTX 2070 Super changed the GPU landscape. Rather than going after the 2070 Super, AMD responded by announcing new pricing for the RX 5700 family, dropping the price on the 5700 XT by $50 and matching Nvidia's price on the RTX 2060 Super. It was a smart move, because while $449 would have been difficult to justify, $399 is a good fit for the 5700 XT.

The reduction in price was also absolutely necessary (and perhaps even premeditated). I'll look at the value proposition later, but $400 is less of a mental barrier than $450 or $500. With competition between AMD and Nvidia heating up, anyone looking to buy a new graphics card will benefit. The lack of a viable RTX competitor last year is arguably a major factor in the high launch prices for the RTX line. Now that Navi is here, Nvidia has dropped the price you'll pay for relatively similar performance: the 2060 Super performs nearly as well as the 2070, and the 2070 Super lands relatively close to the 2080.

Just because pricing is the same on the RX 5700 XT and RTX 2060 Super doesn't mean performance, features, and API support are the same. AMD has opted to not support ray tracing (either DirectX Raytracing, aka DXR, or Vulkan-RT) with its Navi GPUs. There's no hardware level RT acceleration and no Tensor processing clusters for AI and machine learning. And just as critically, even though it's possible to support DXR via drivers and shader calculations, AMD isn't doing that either (at least not yet). If you want an AMD GPU with hardware DXR support, you'll need to wait for Navi 20, which will also feature in the next generation Xbox and PlayStation consoles.

Let's get to the testing, where we're using our standard GPU test bed. The overclocked Core i7-8700K running at 5.0GHz helps ensure the CPU isn't a bottleneck, and the DDR4-3200 CL14 memory and fast SSD storage are there for the same reason. CPU bottlenecks are less of a concern with midrange and budget GPUs, but for the RX 5700 and Nvidia's RTX cards, CPU performance can be a factor, particularly at 1080p.

We've benchmarked using the latest drivers available at the time of testing, including retesting older GPUs to ensure our results are up to date. All Nvidia GPUs were tested with the 430.86 drivers, except for the two Super cards which used 431.16. For AMD, we used the 19.16.2 drivers on the previous generation GPUs, with new drivers for the RX 5700 cards. Ideally, we'd retest all GPUs with the latest drivers, but that requires about one day per GPU. Sadly, there simply isn't enough time to do that, especially when new drivers arrive every few weeks.

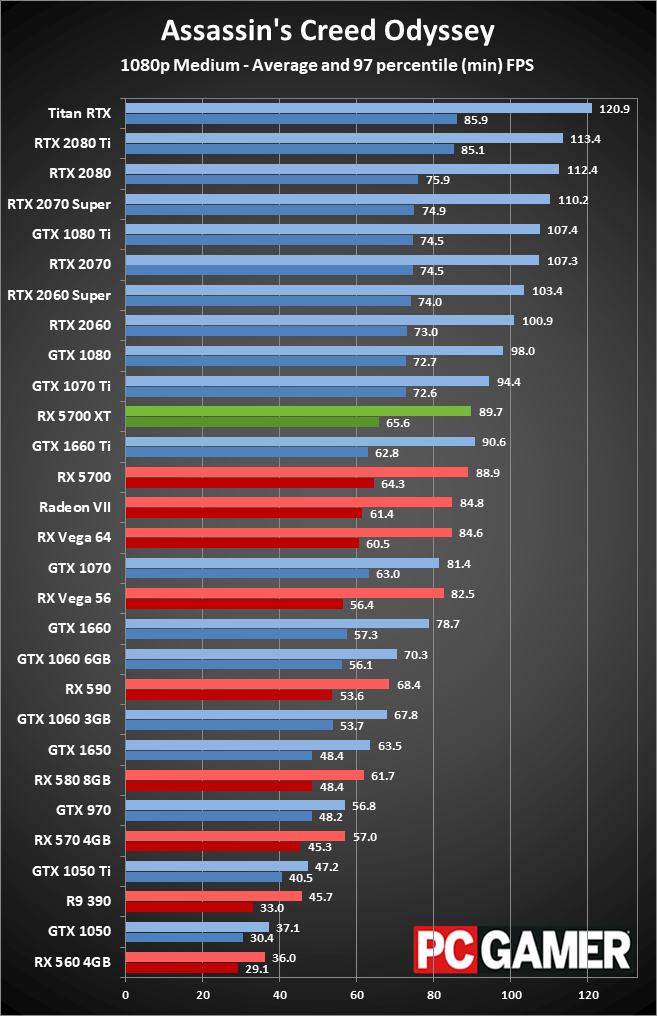

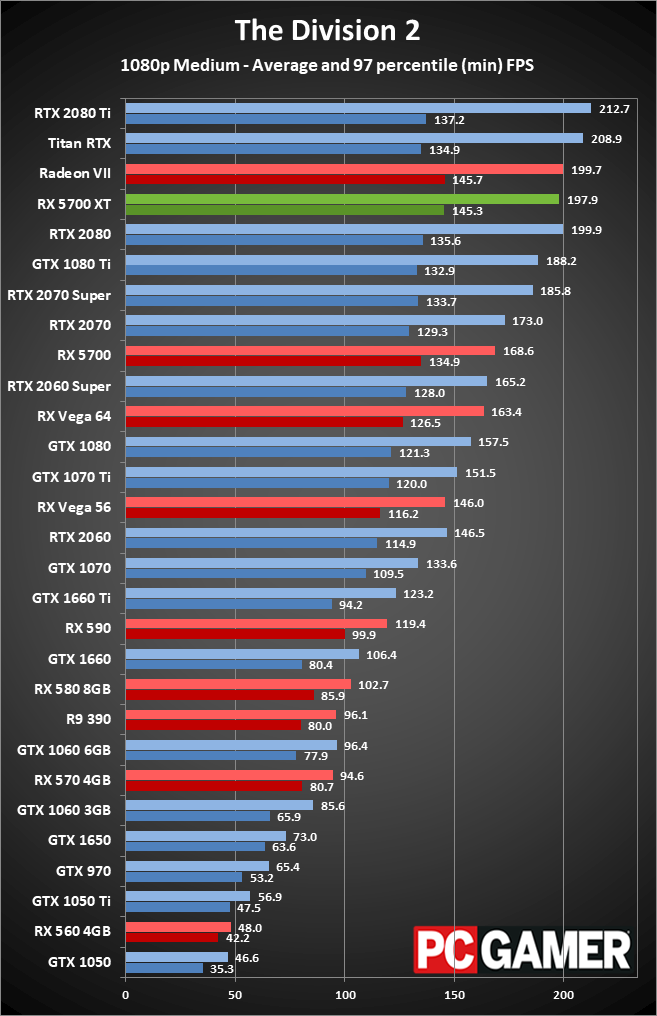

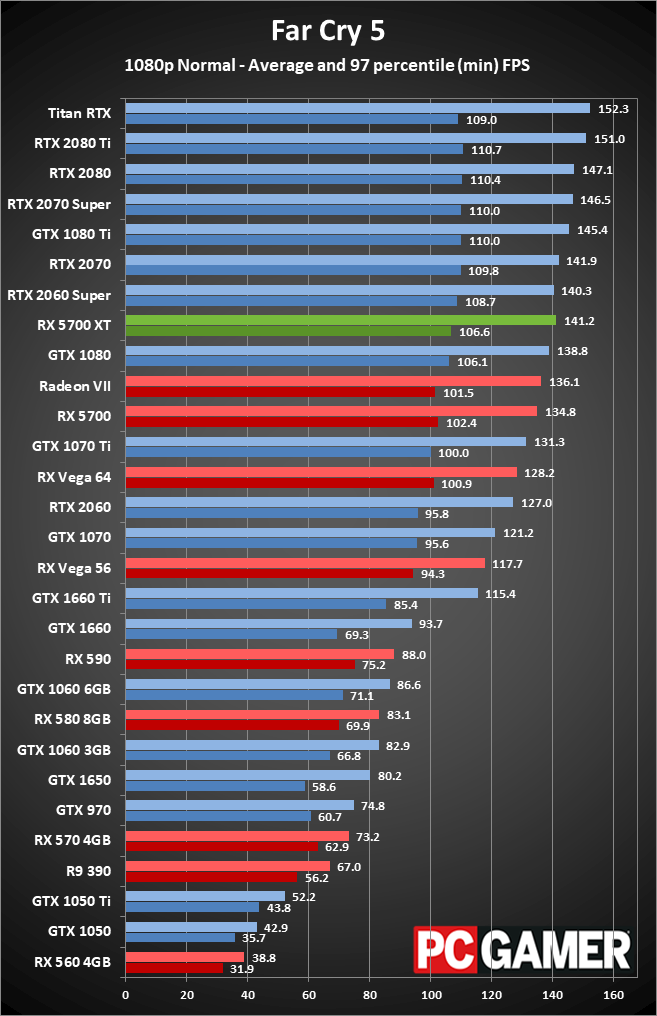

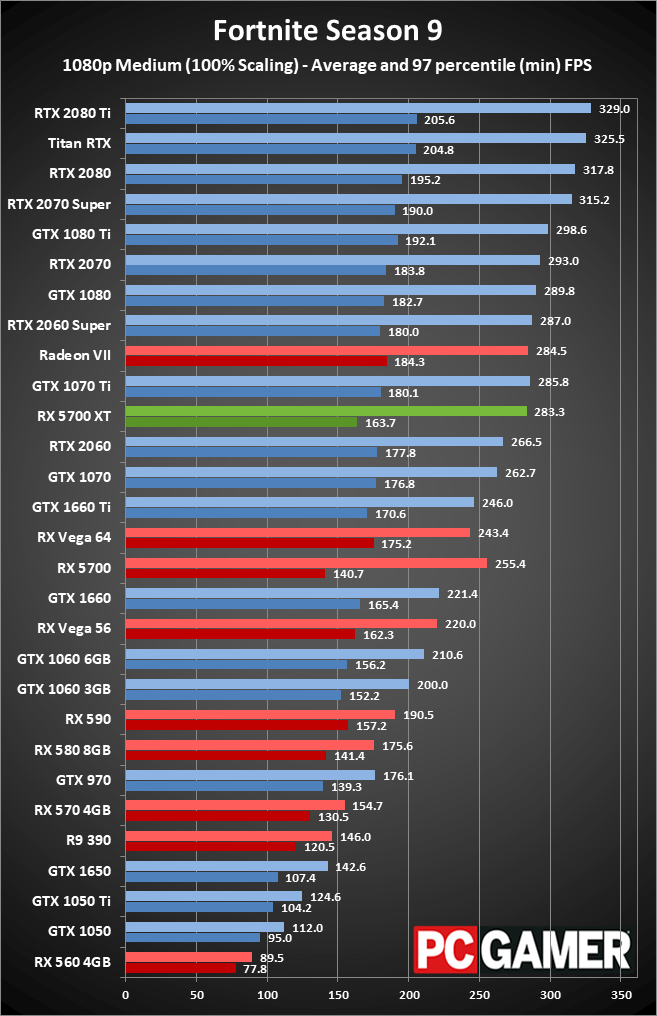

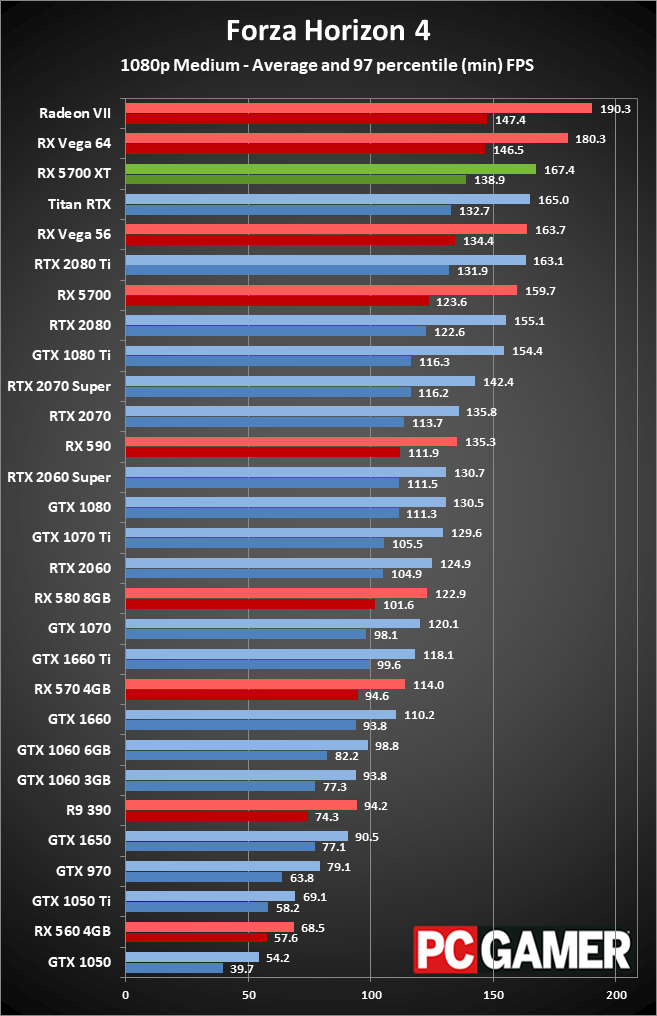

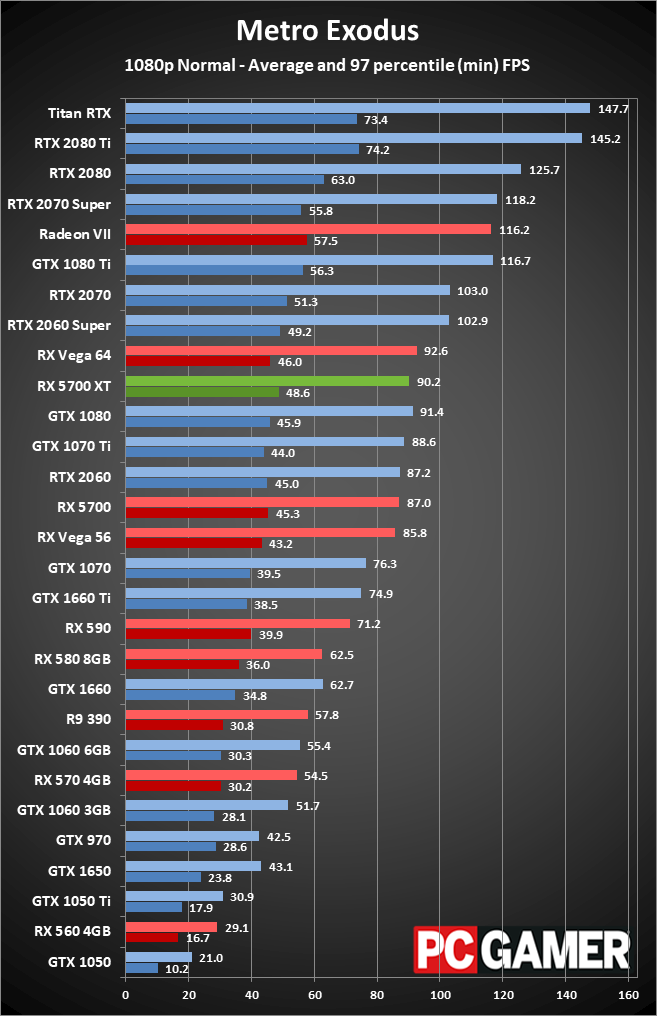

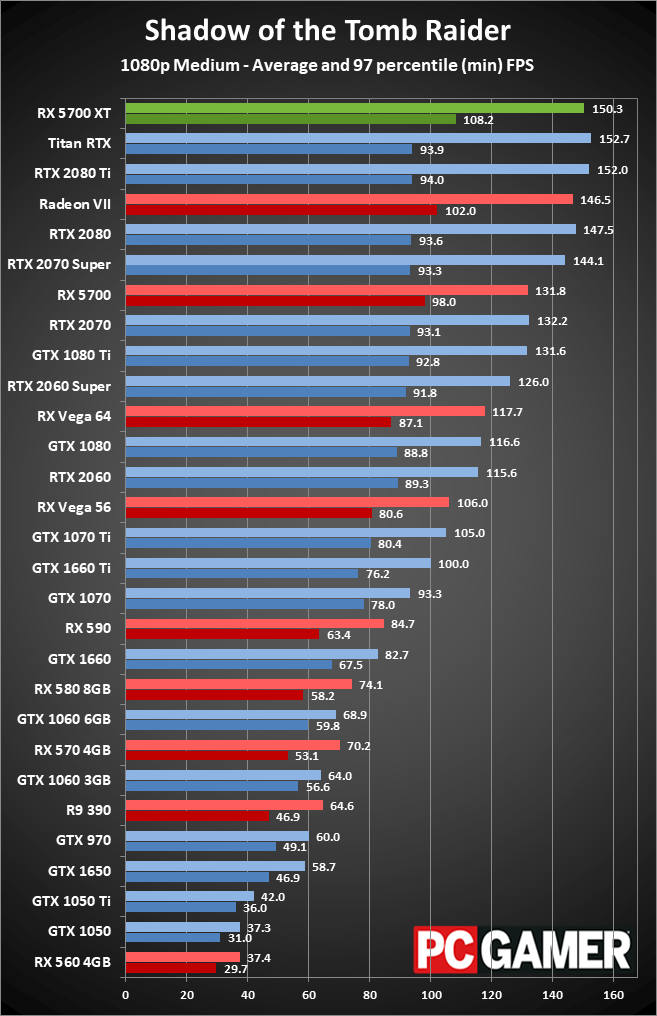

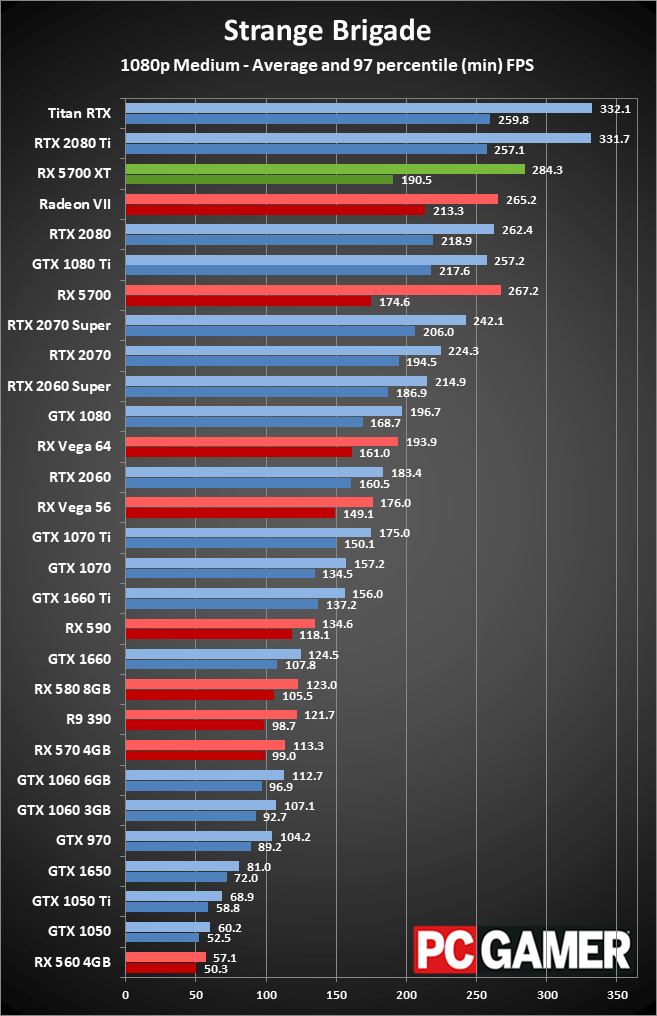

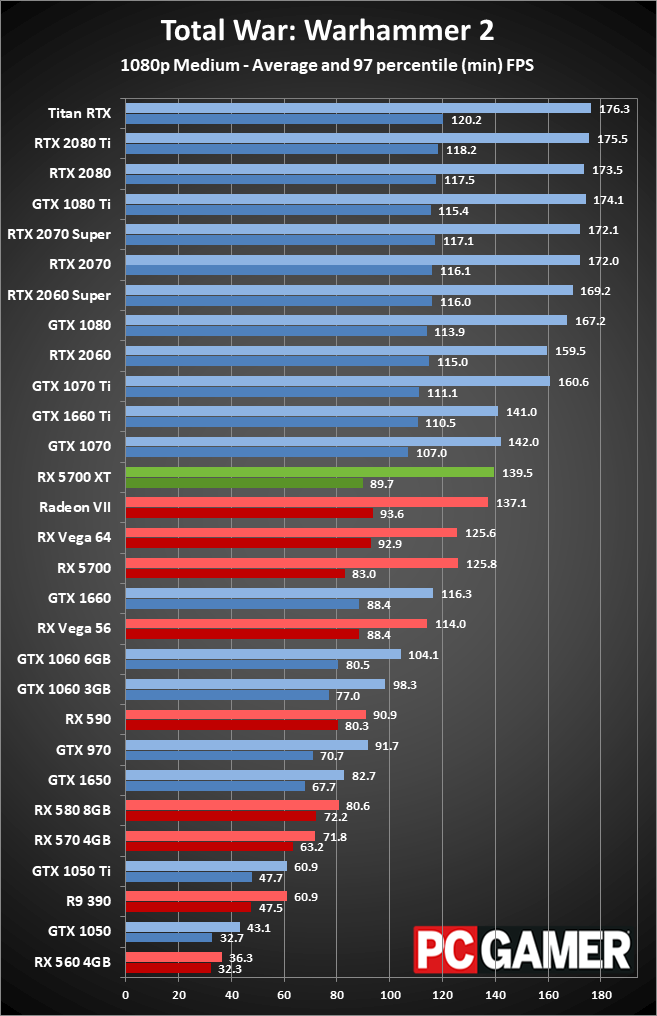

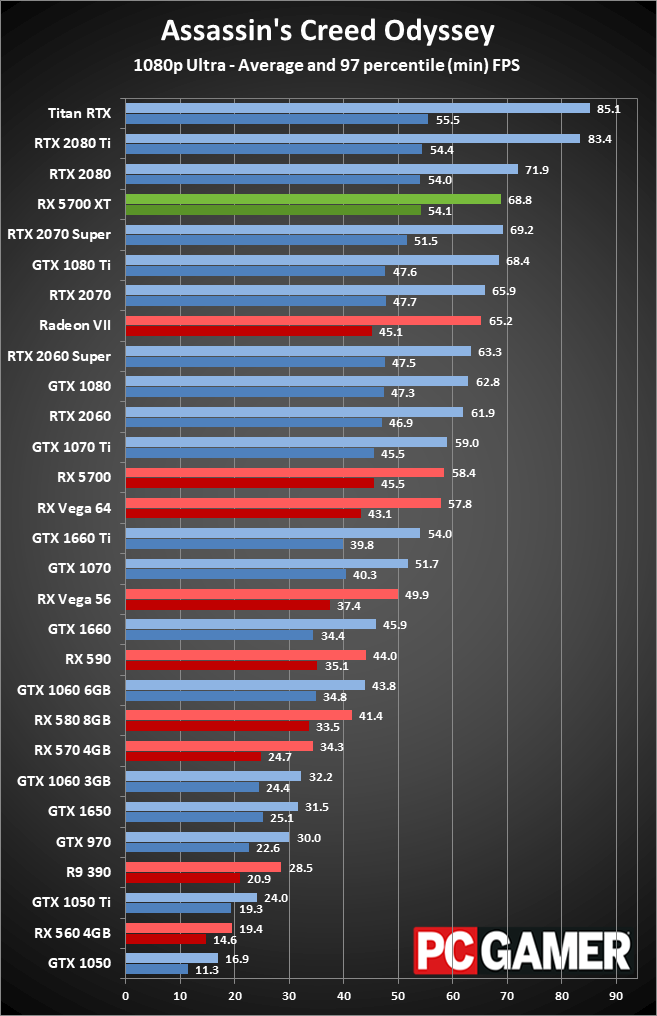

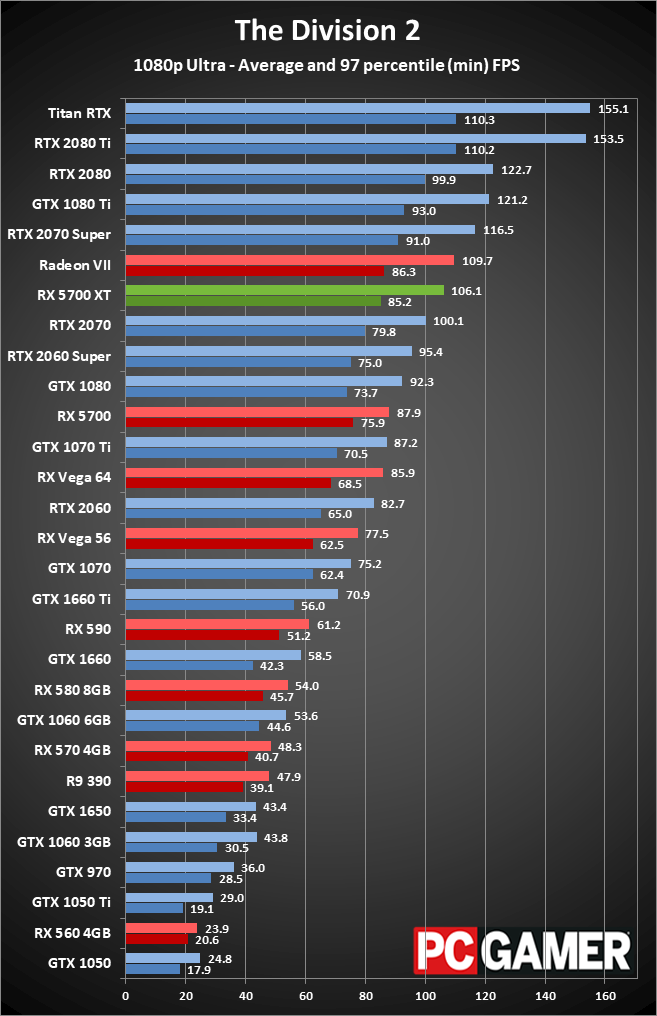

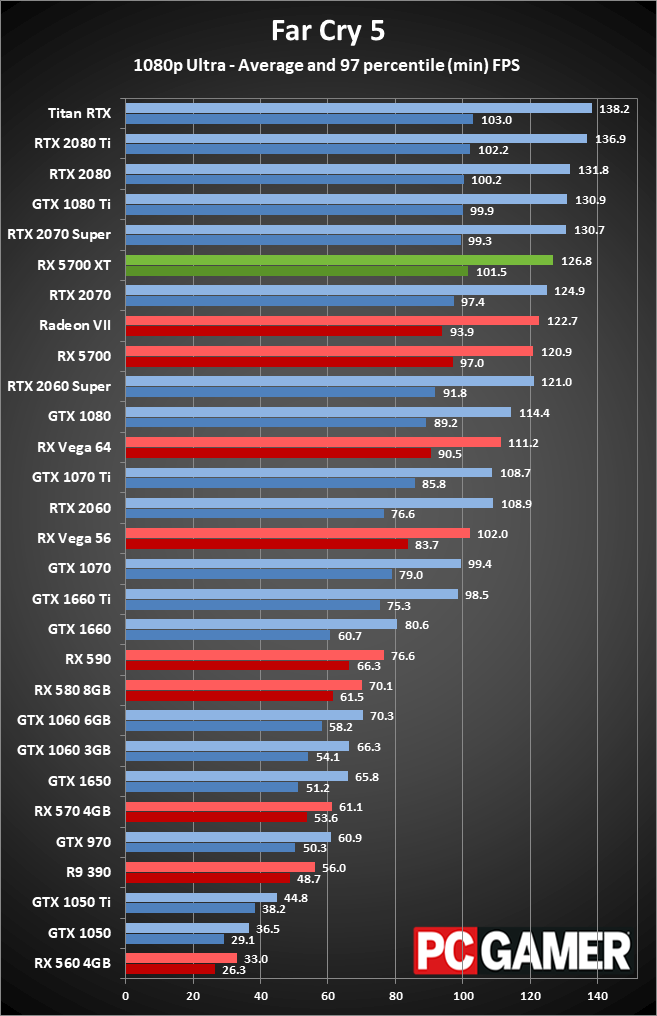

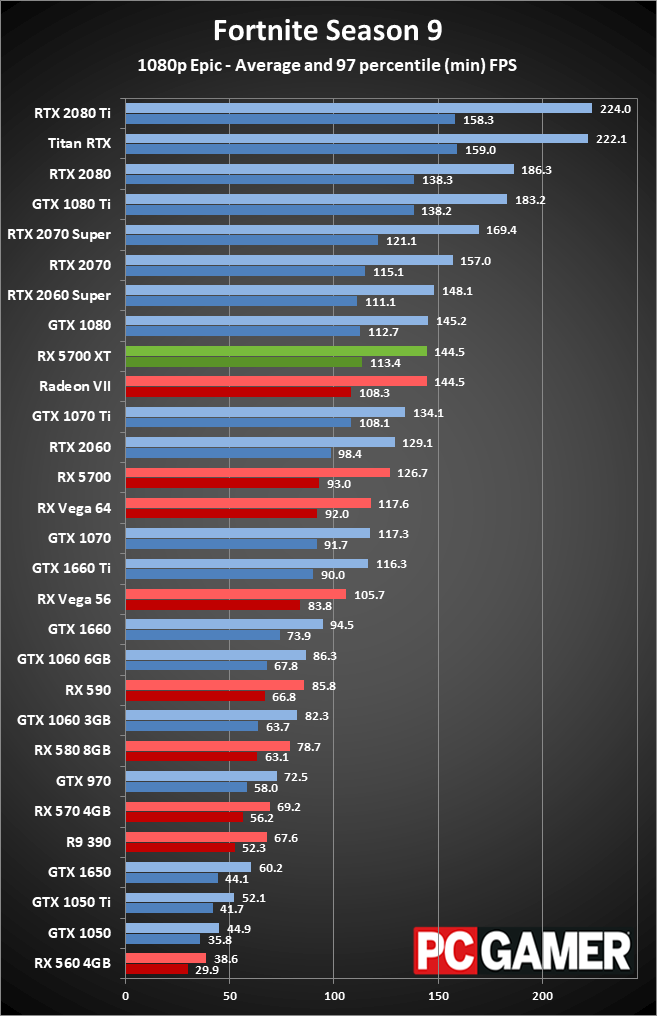

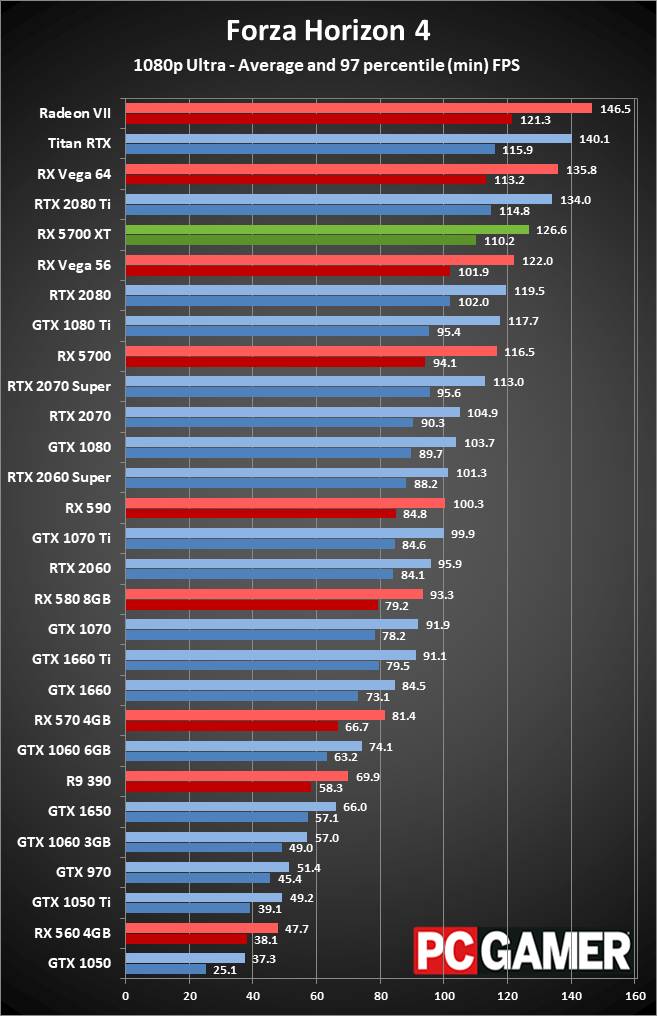

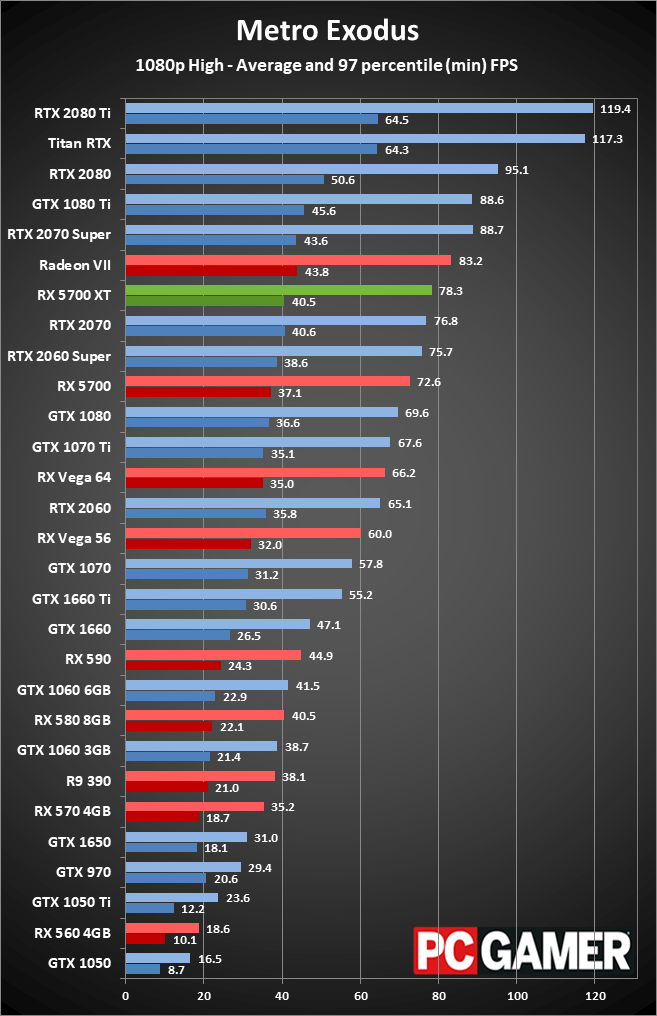

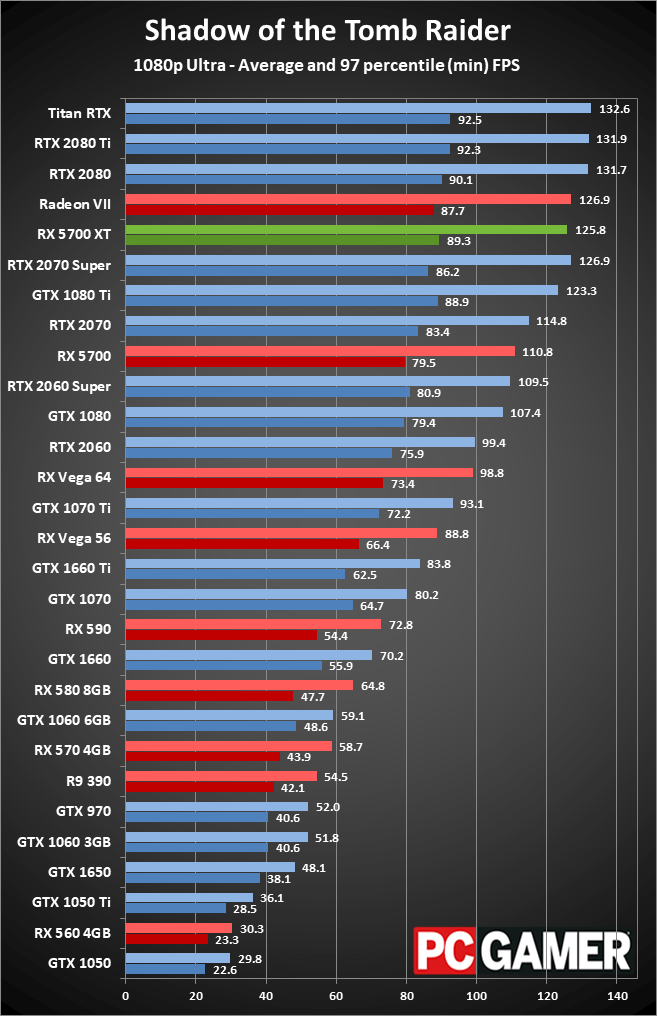

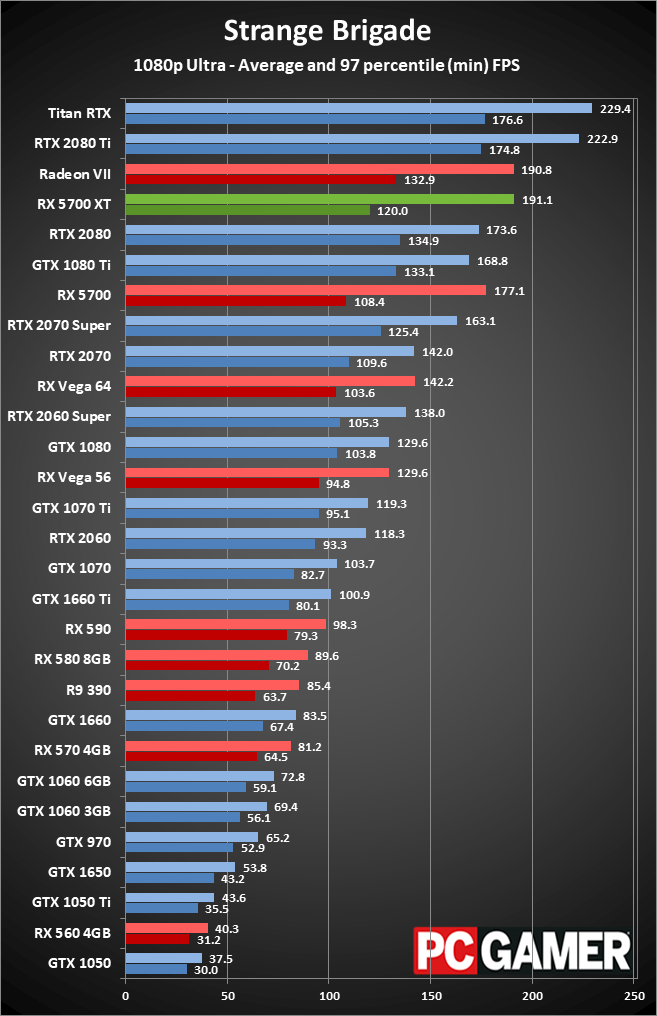

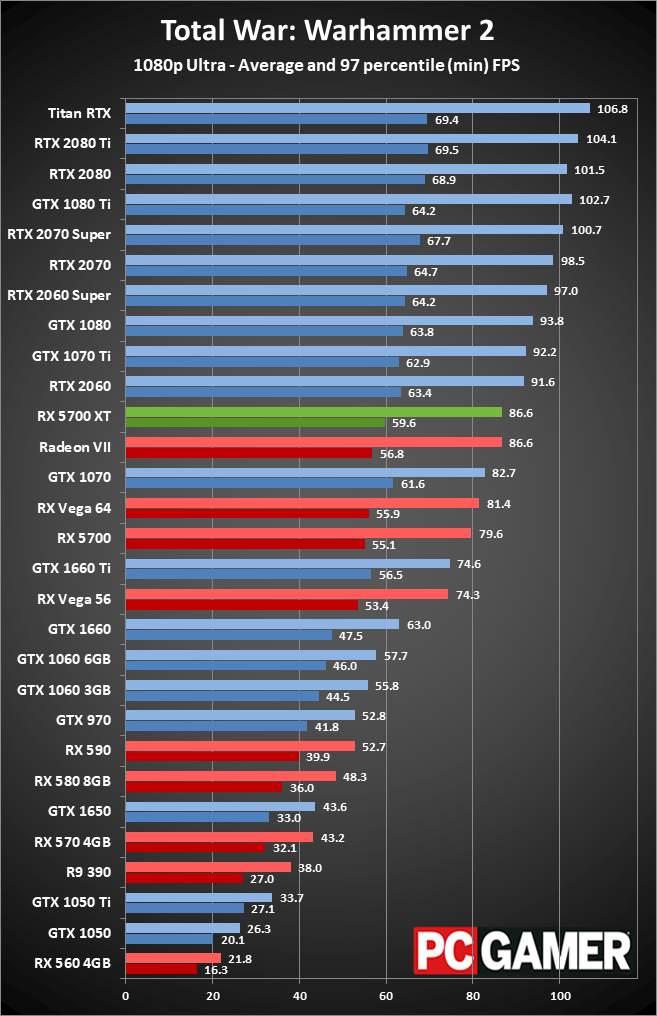

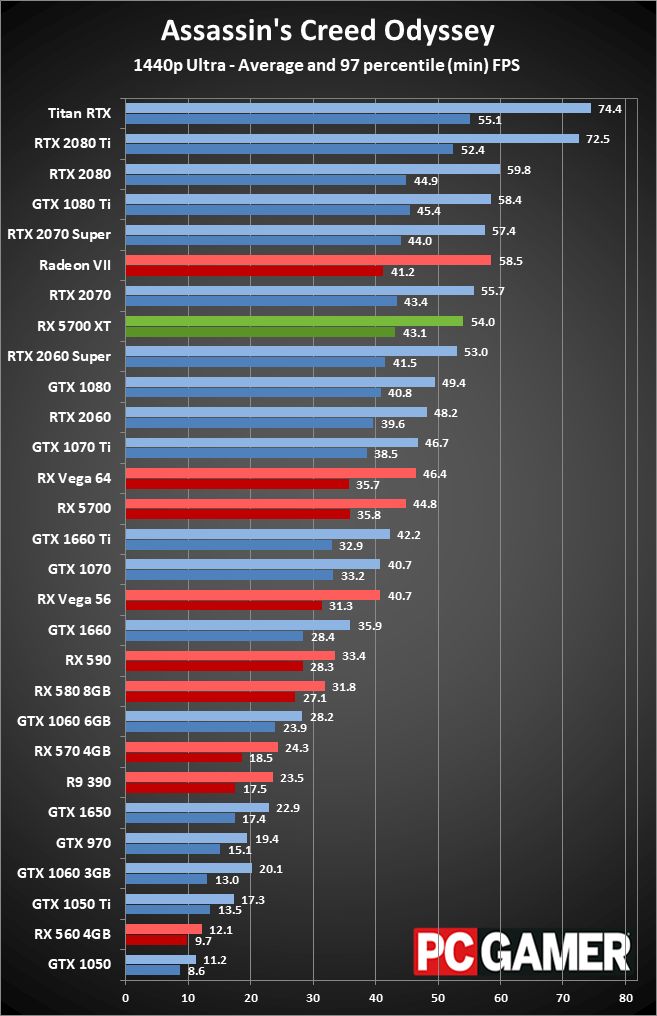

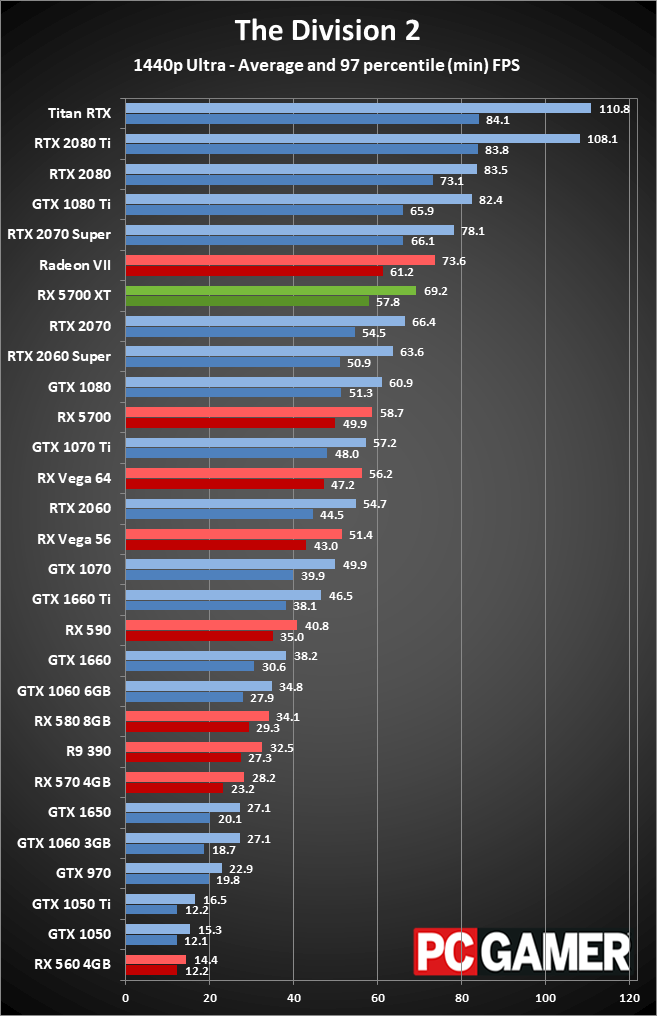

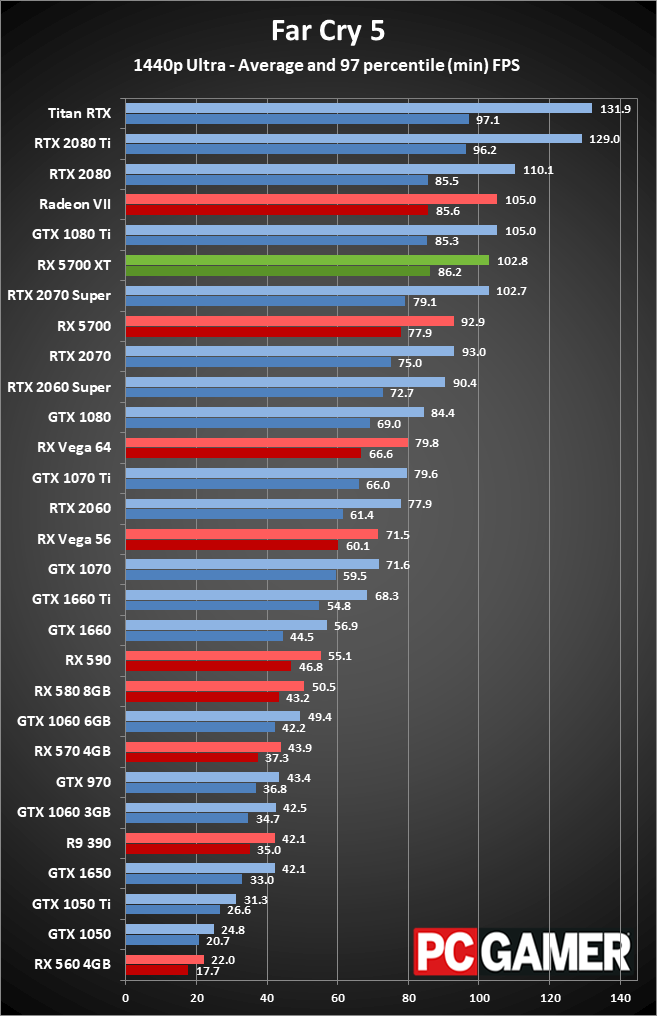

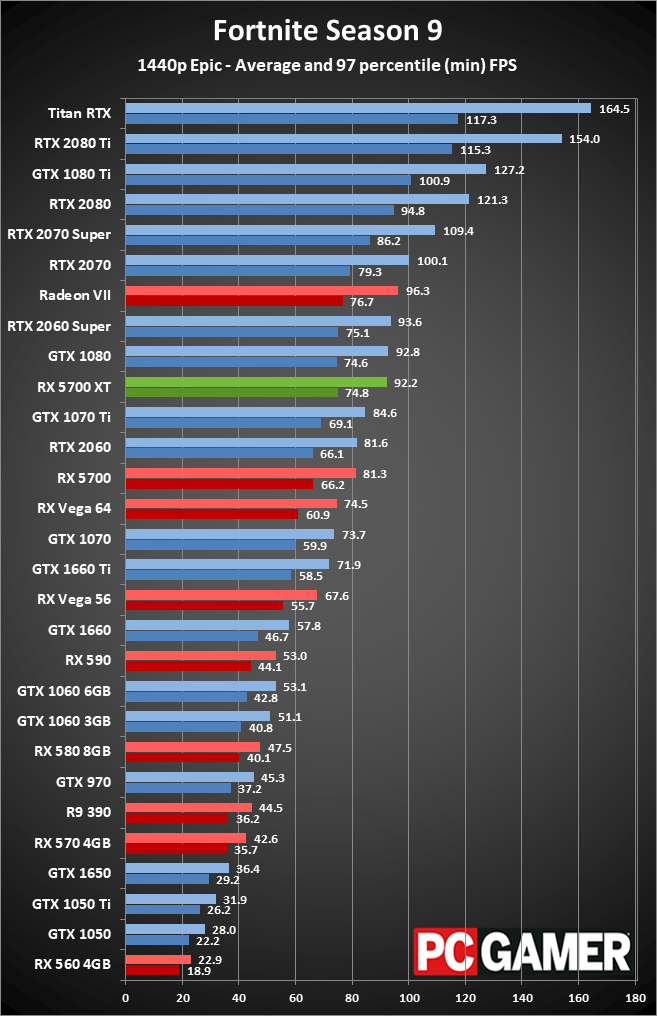

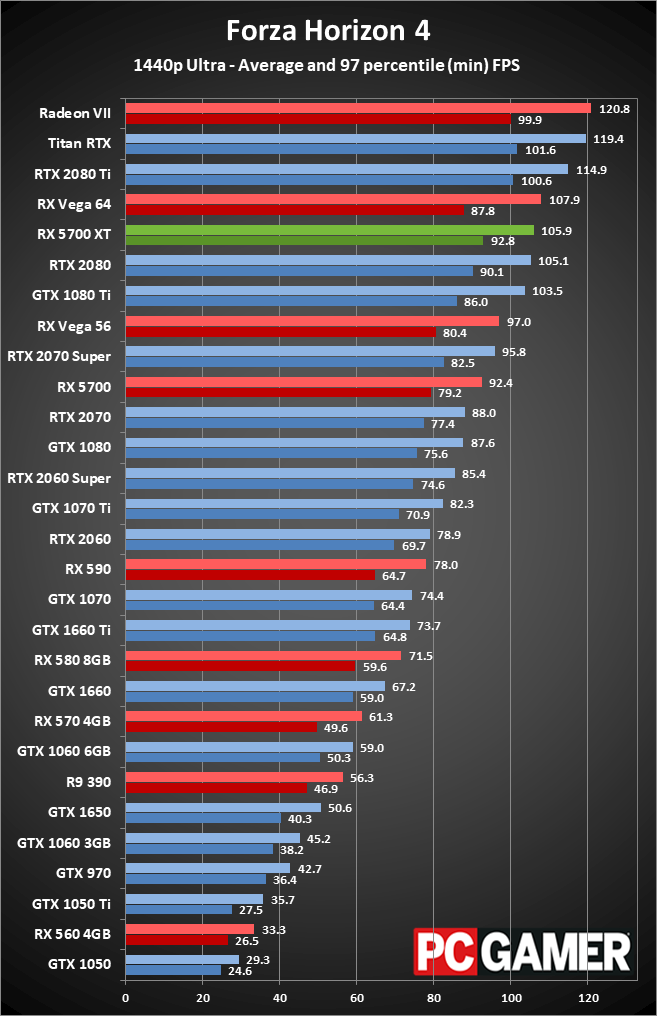

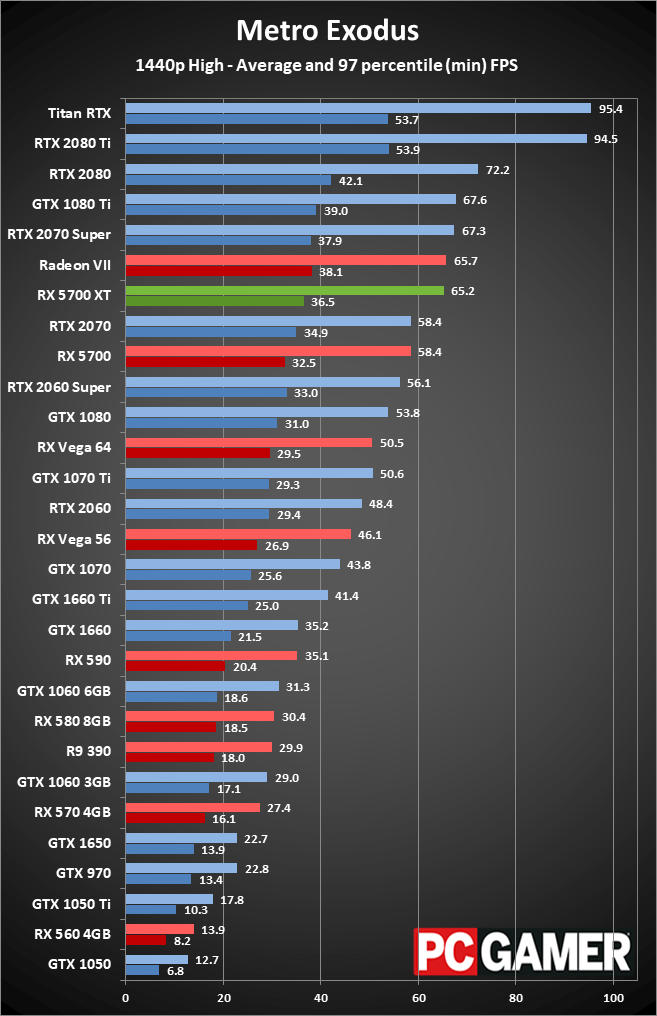

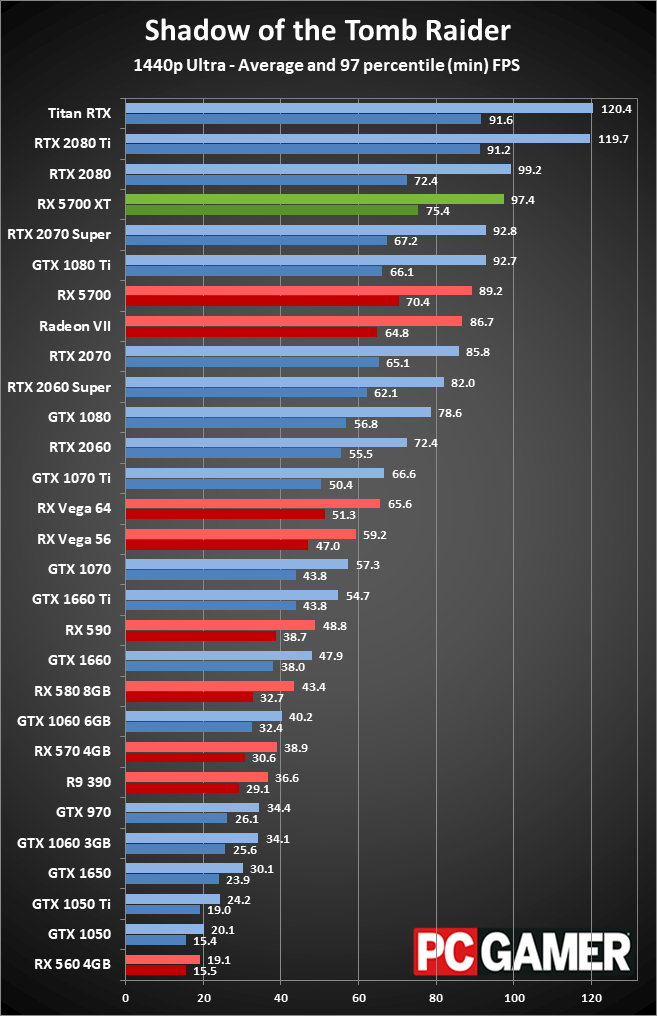

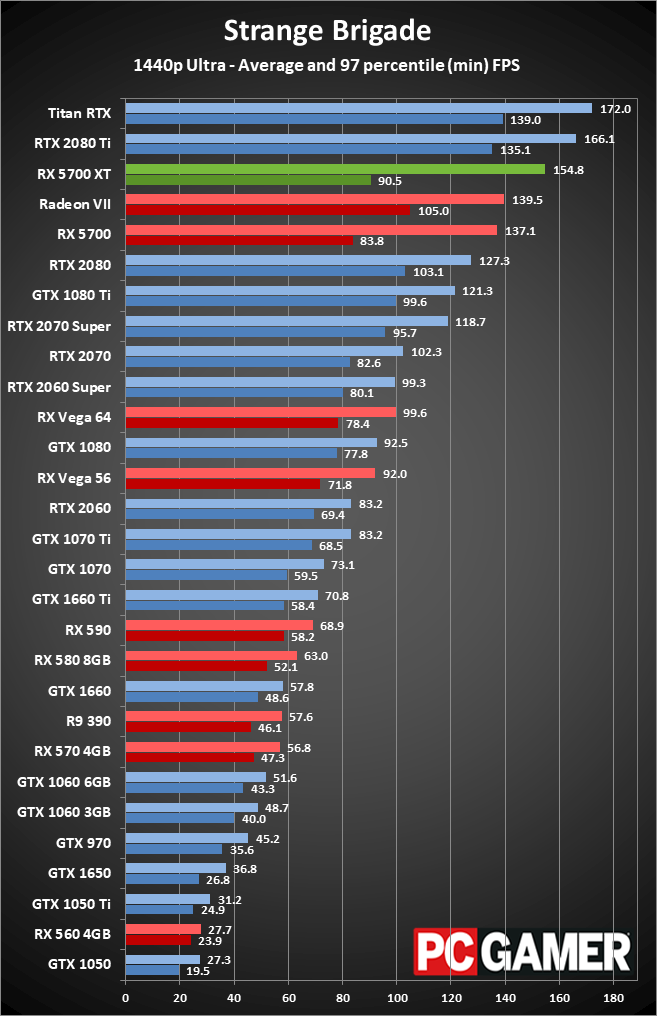

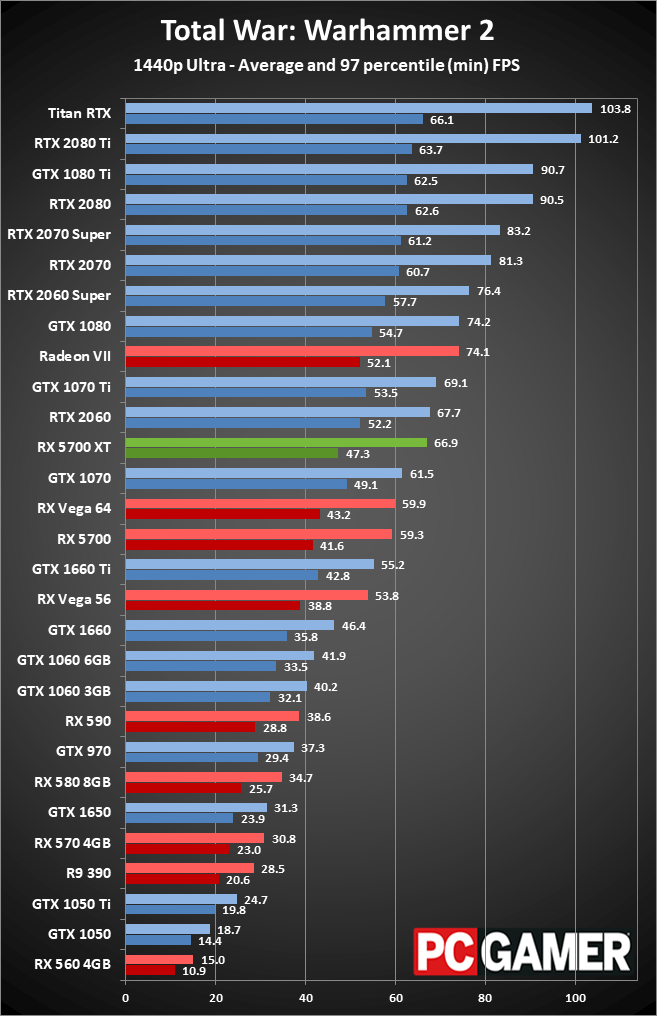

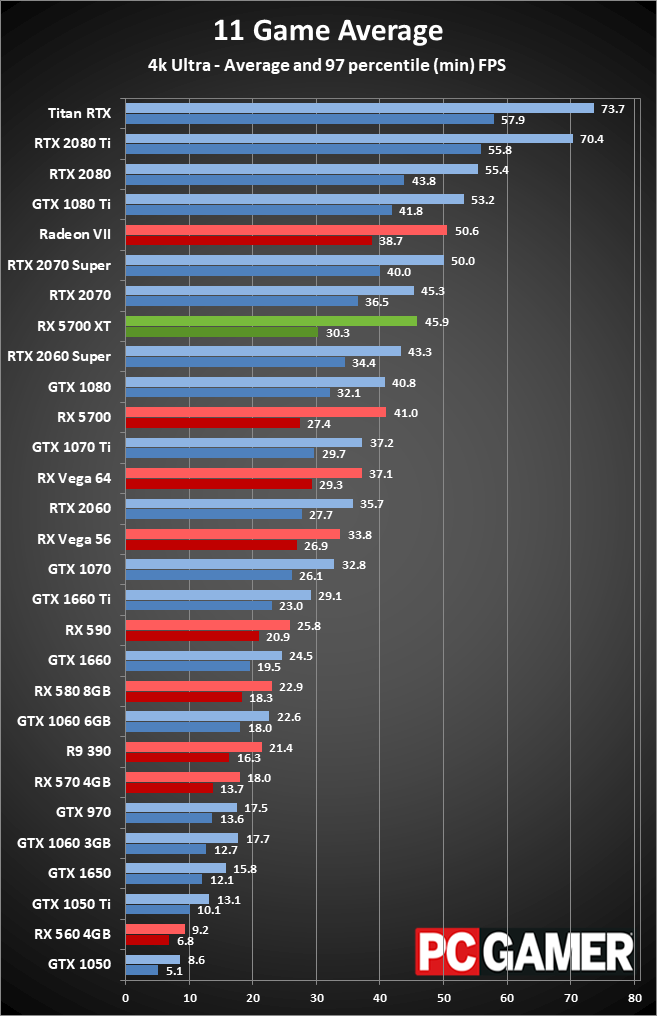

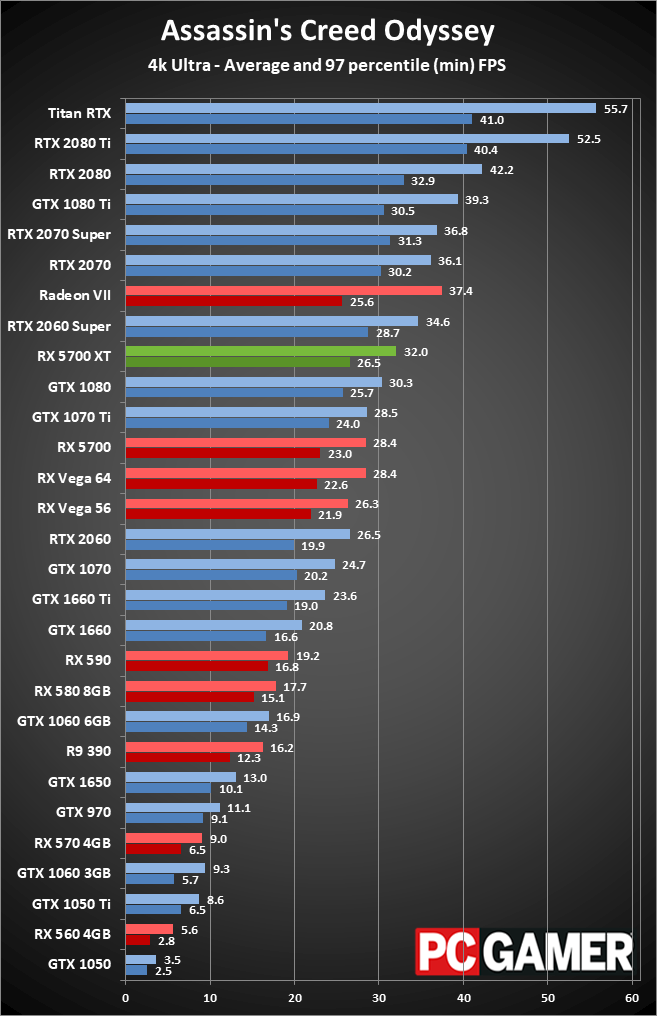

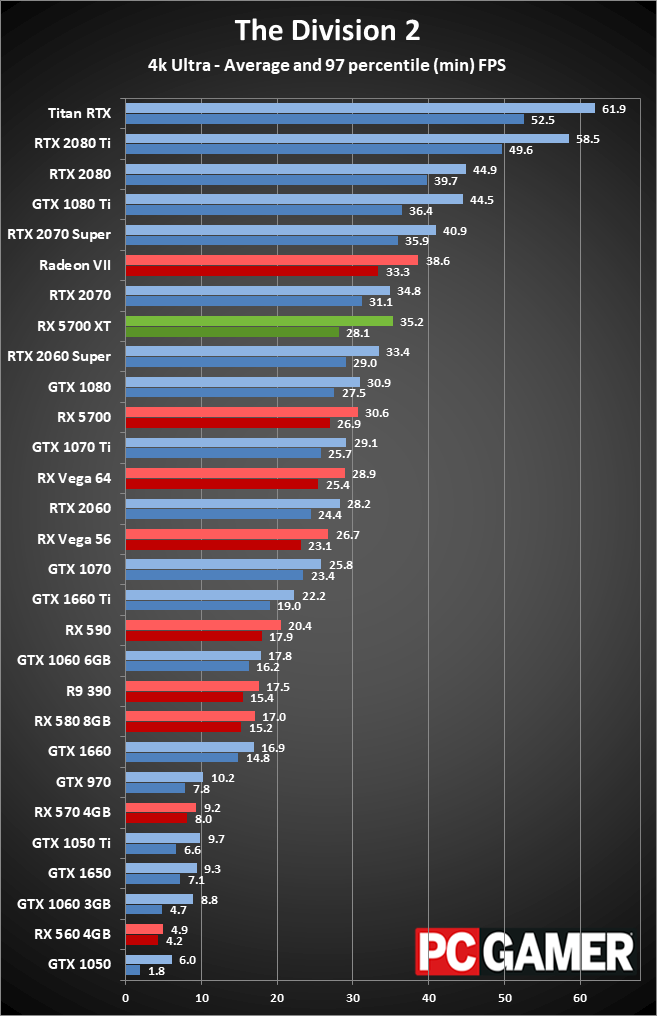

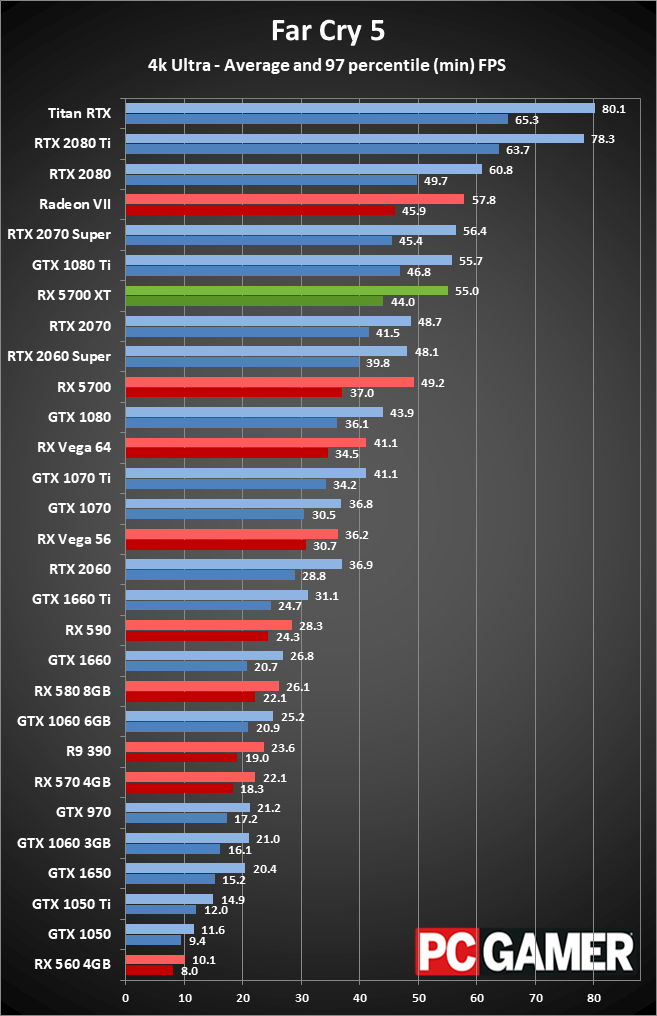

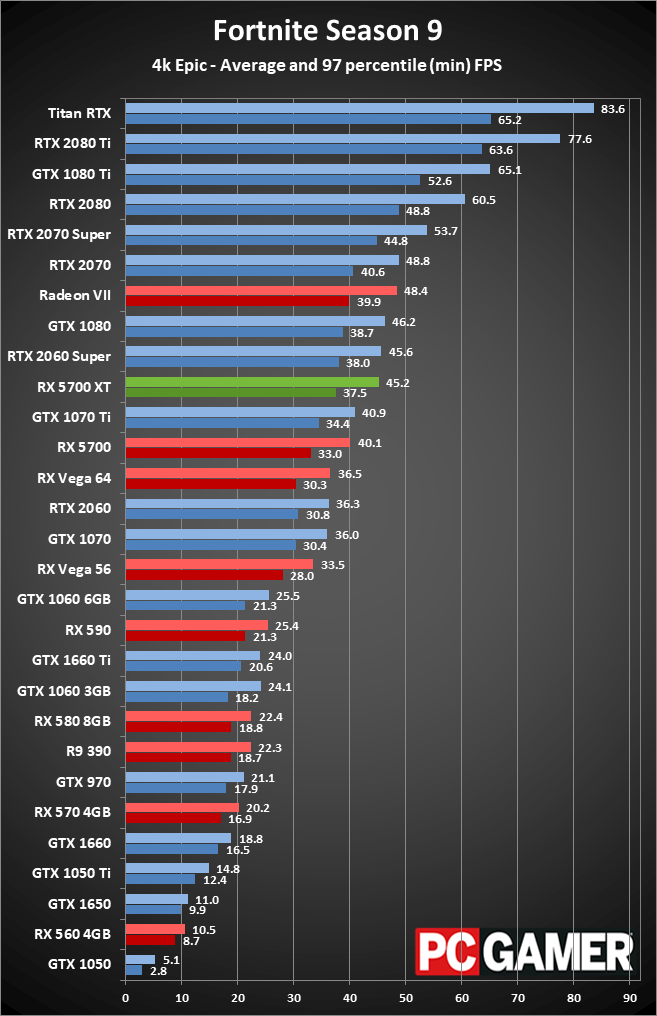

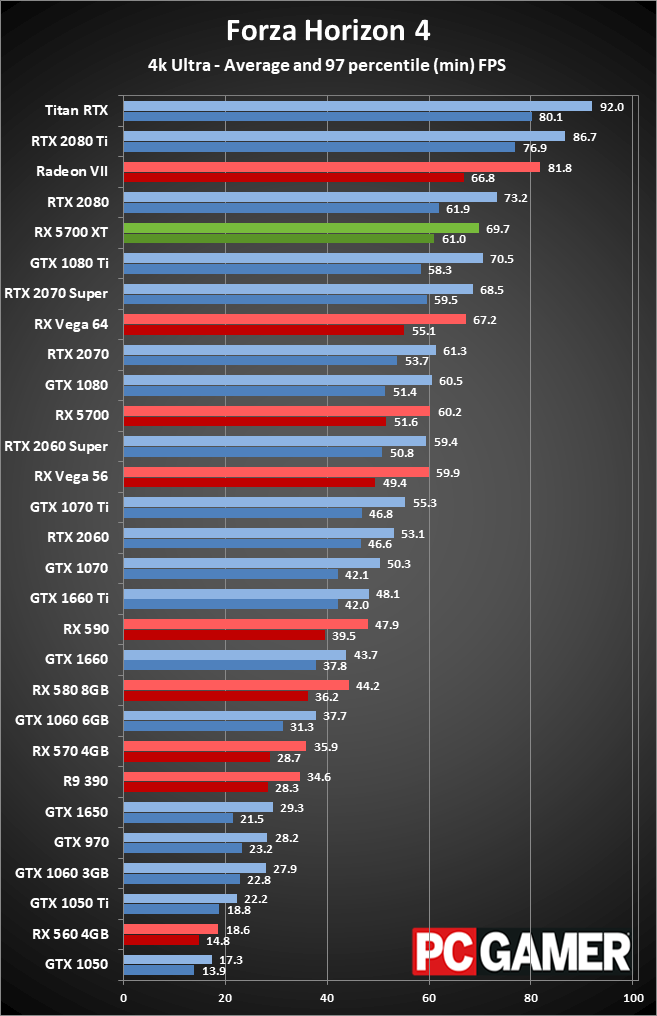

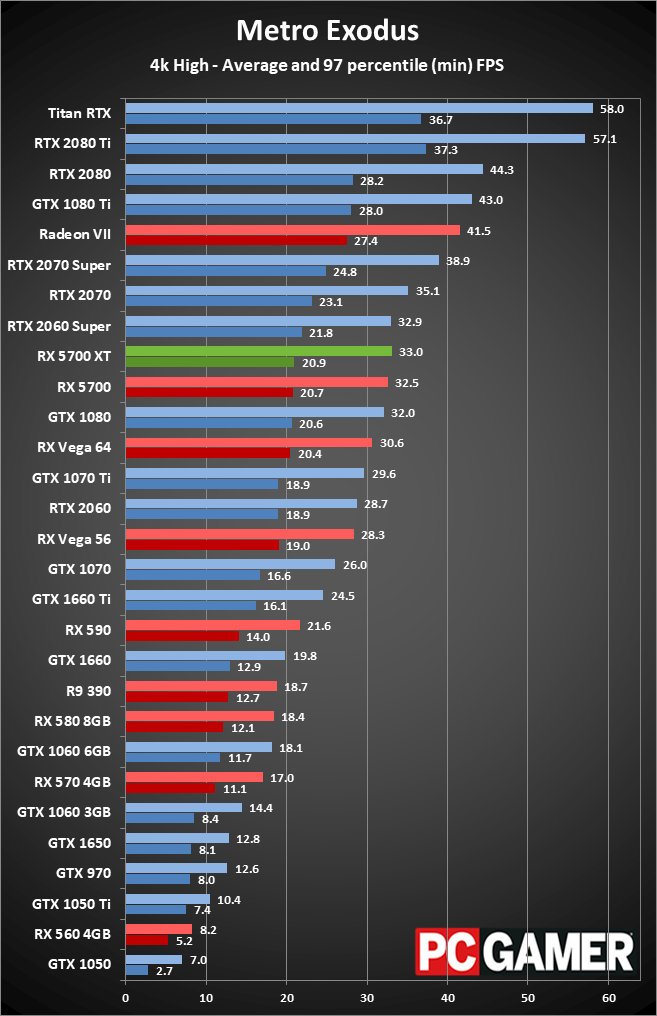

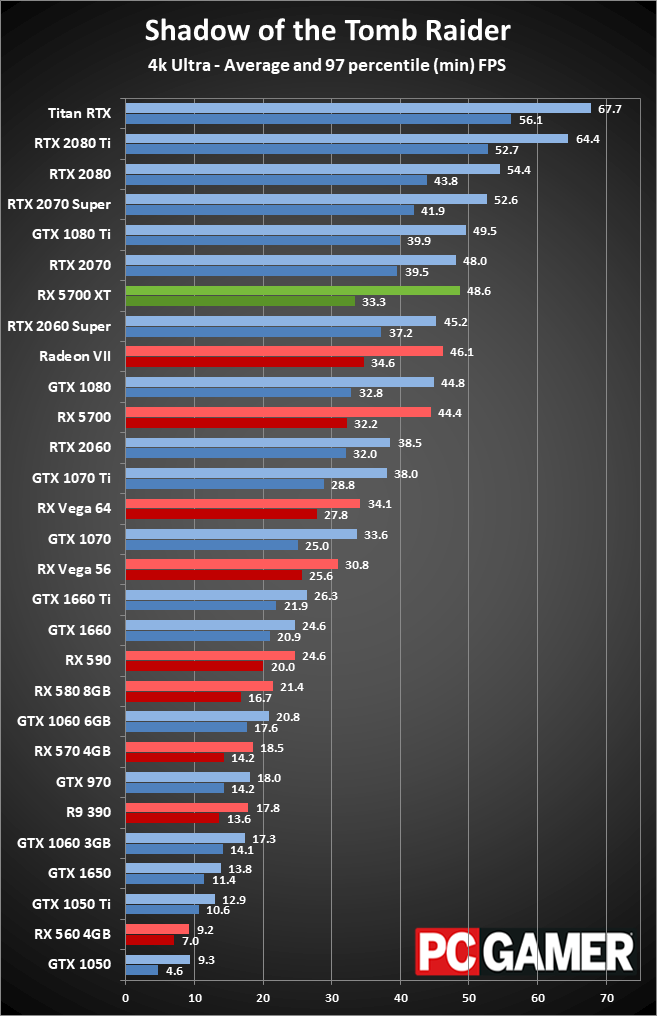

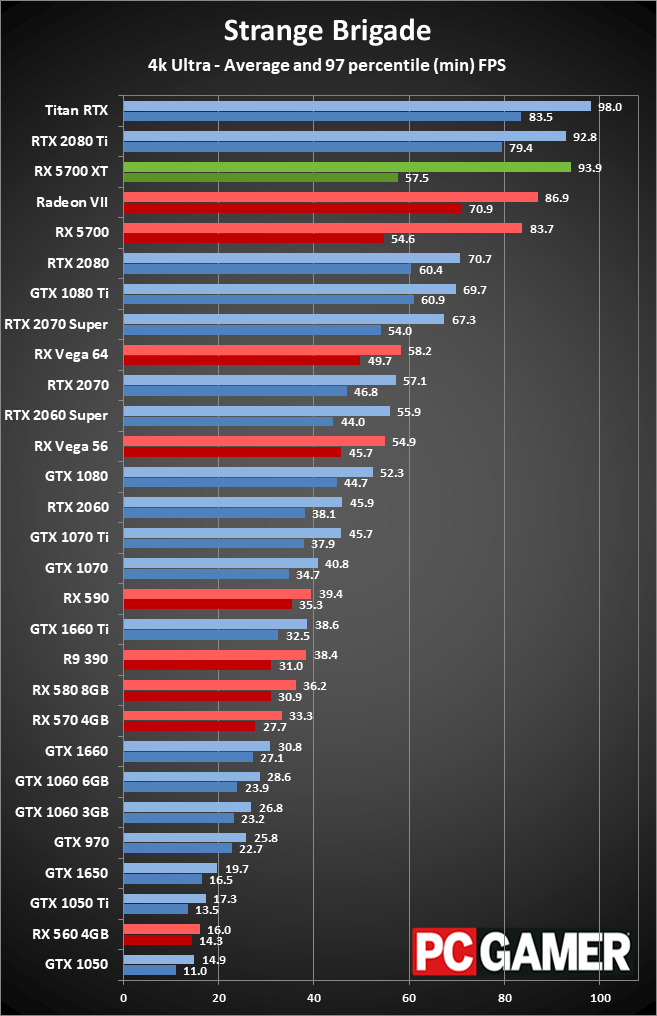

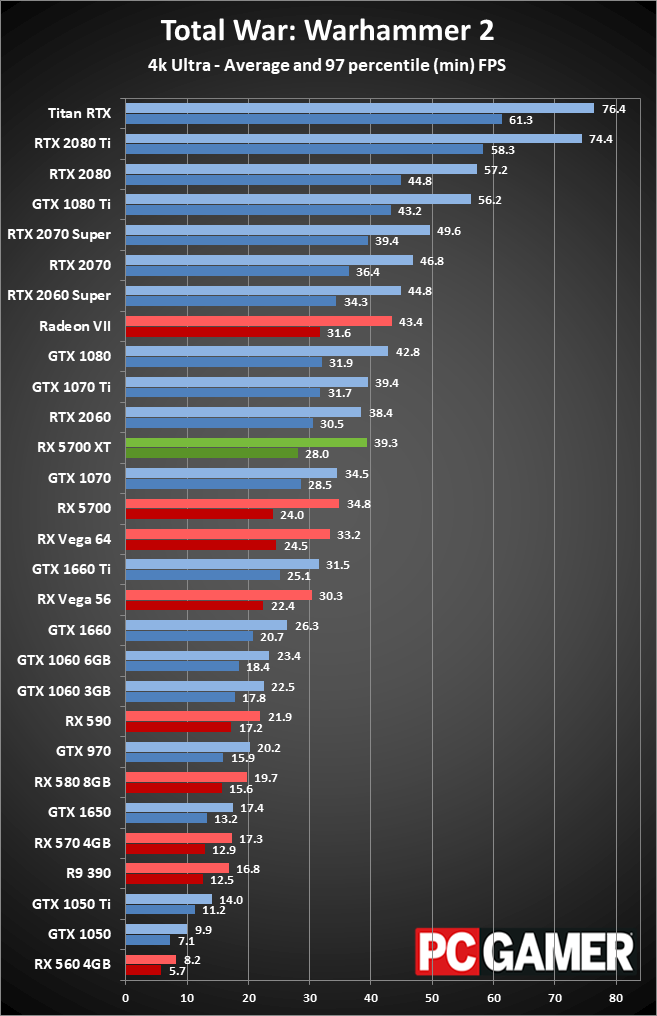

The selection of games we're using for GPU testing has been updated, and we don't run DXR or DLSS for any of the benchmarks. That allows for meaningful comparisons between the various GPUs, since AMD has no support for DXR at present. The 11 games we're using consist of a pretty even mix of AMD and Nvidia promoted titles—The Division 2, Far Cry 5, Strange Brigade, and Total War: Warhammer 2 sport AMD branding, while Assassin's Creed Odyssey, Metro Exodus, and Shadow of the Tomb Raider are promoted by Nvidia. DirectX 12 is utilized in most cases where available, with the exception of Total War: Warhammer 2 where the "DX12 Beta" performance is particularly weak on Nvidia GPUs. We also checked Vulkan performance (in Strange Brigade), and found that the DX12 implementation is currently a bit faster so we stuck with that.

Each card is tested at four settings: 1080p medium (or equivalent) and 1080p/1440p/4k ultra (unless otherwise noted). Every setting is tested multiple times to ensure the consistency of the results, and we use the best score. Minimum FPS is calculated by taking all frametimes above the 97th percentile (the slowest 3 percent of frames), averaging them, and converting that average frametime back to fps, so it's the "average minimum fps" rather than an absolute minimum. That makes it a reasonable representation of the lower end of the performance scale, rather than looking only at the single worst framerate from a benchmark run.
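The "average minimum fps" metric is straightforward to reproduce from a frametime log. This is a minimal sketch, not PC Gamer's actual tooling: the function names are hypothetical, and it assumes frametimes in milliseconds and that "above the 97th percentile" means the slowest 3 percent of frames after sorting.

```python
from typing import Sequence


def average_fps(frametimes_ms: Sequence[float]) -> float:
    """Average fps over a run: frames rendered divided by total elapsed time."""
    return len(frametimes_ms) * 1000.0 / sum(frametimes_ms)


def average_minimum_fps(frametimes_ms: Sequence[float], percentile: int = 97) -> float:
    """Mean of the frametimes above the given percentile (the slowest 3 percent
    by default), converted back to fps. The cutoff convention is an assumption;
    integer arithmetic avoids float rounding when computing the cutoff index."""
    if not frametimes_ms:
        raise ValueError("need at least one frametime")
    if not 0 <= percentile < 100:
        raise ValueError("percentile must be in [0, 100)")
    ordered = sorted(frametimes_ms)            # fastest (shortest) frames first
    cutoff = len(ordered) * percentile // 100  # index of the first "slow" frame
    slowest = ordered[cutoff:]                 # never empty when percentile < 100
    return 1000.0 / (sum(slowest) / len(slowest))


# Hypothetical run: 97 frames at 10 ms plus three 20 ms hitches.
run = [10.0] * 97 + [20.0] * 3
print(round(average_fps(run), 1))  # 97.1
print(average_minimum_fps(run))    # 50.0
```

The three hitches barely move the average (97.1fps versus 100fps without them) but halve the minimum figure, which is why the two numbers are worth reporting together.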

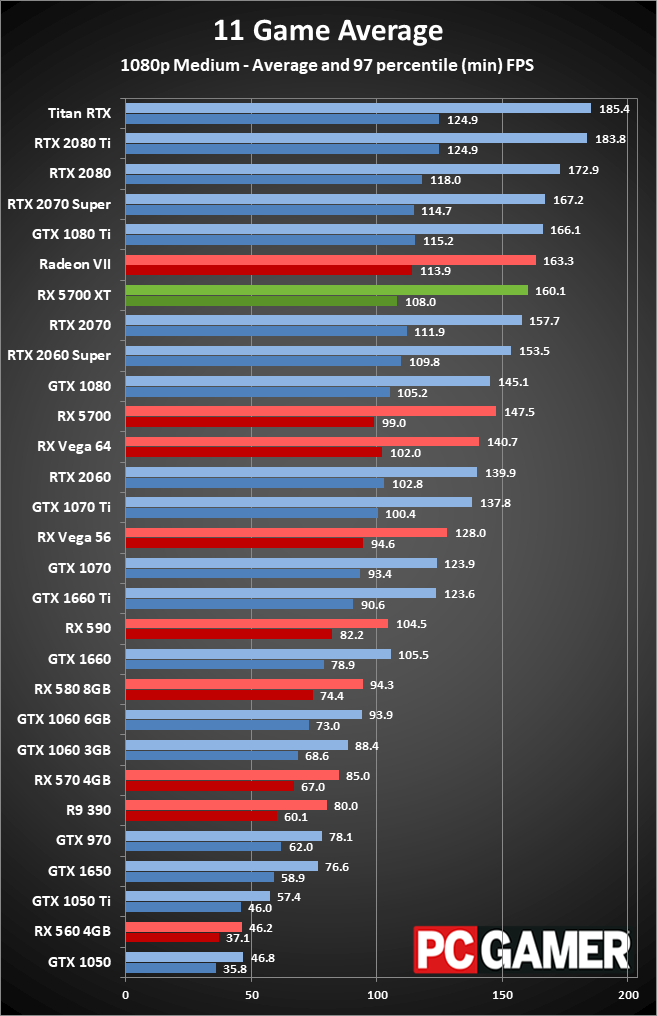

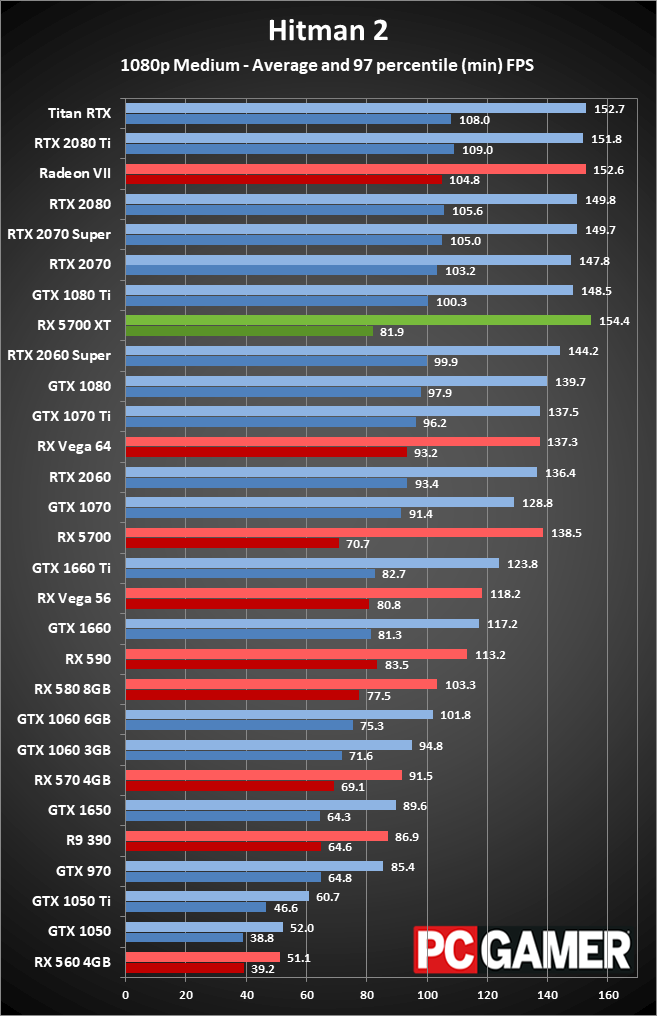

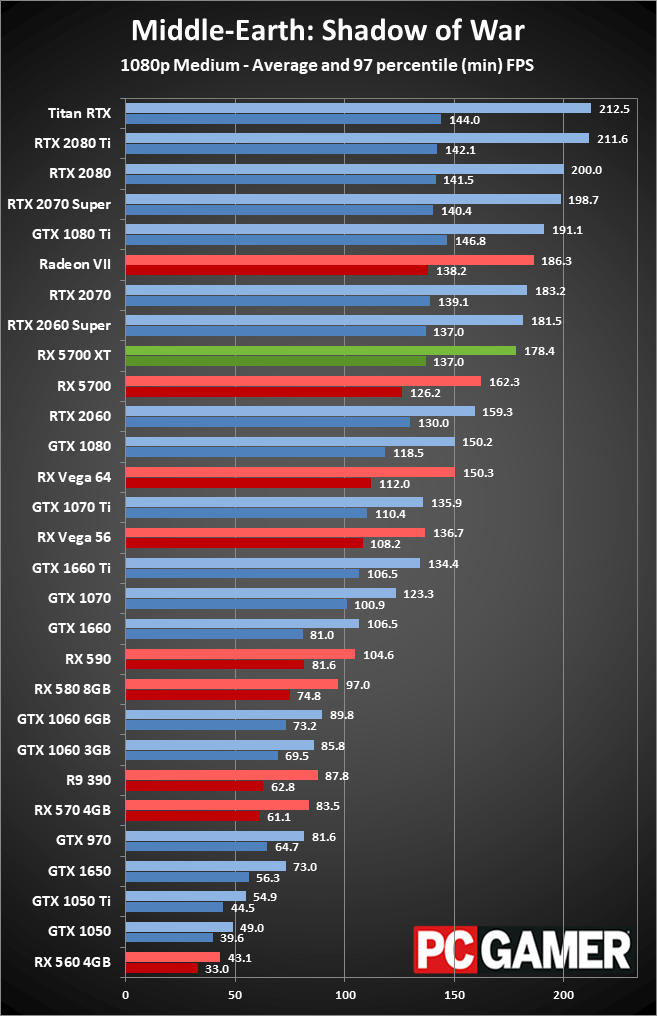

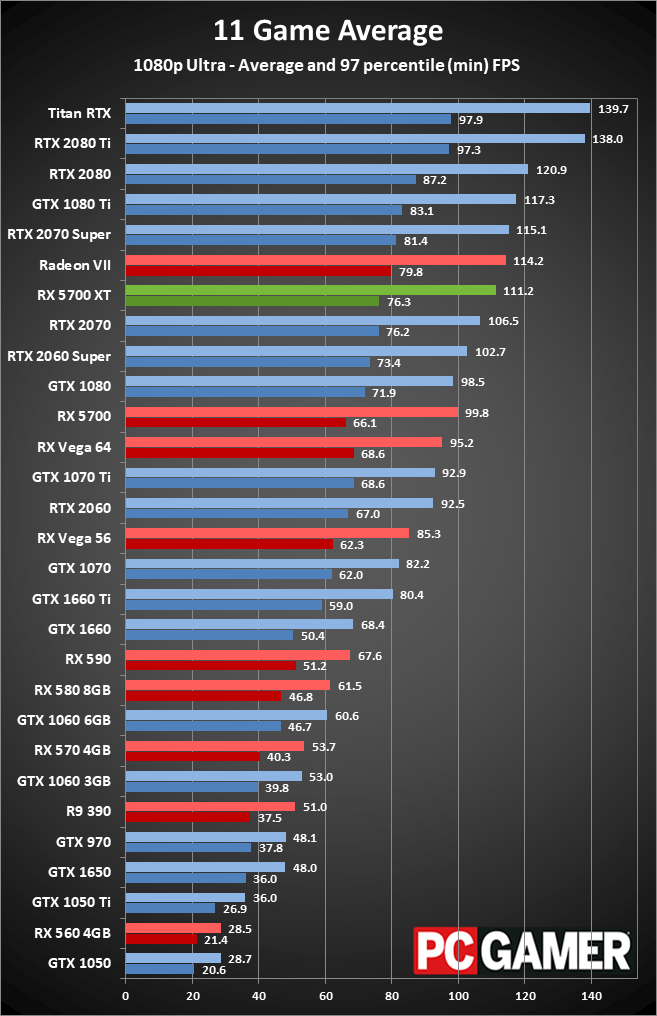

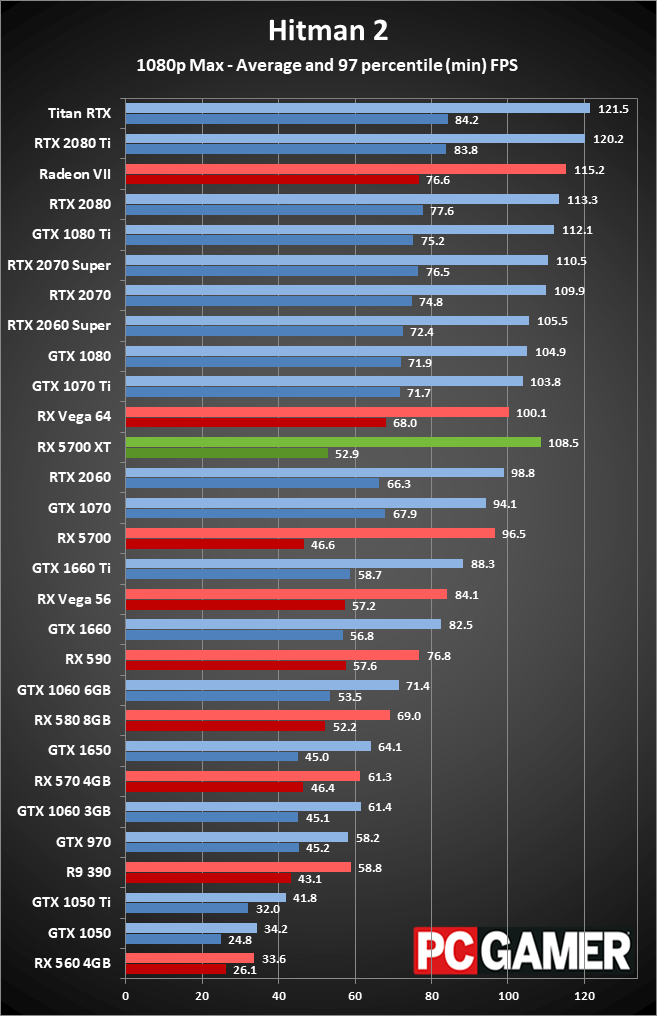

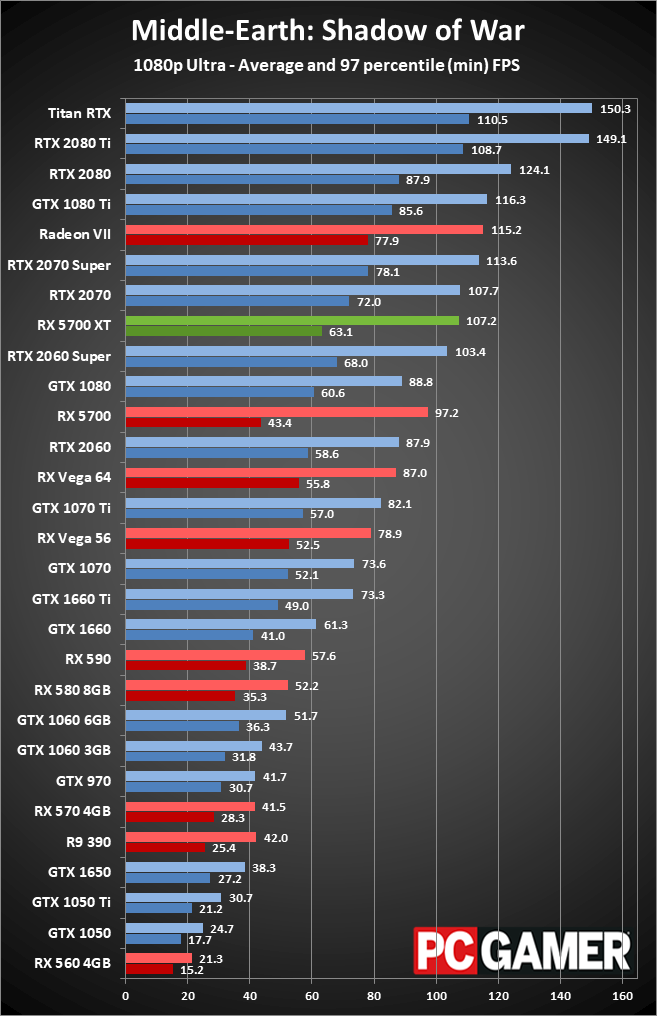

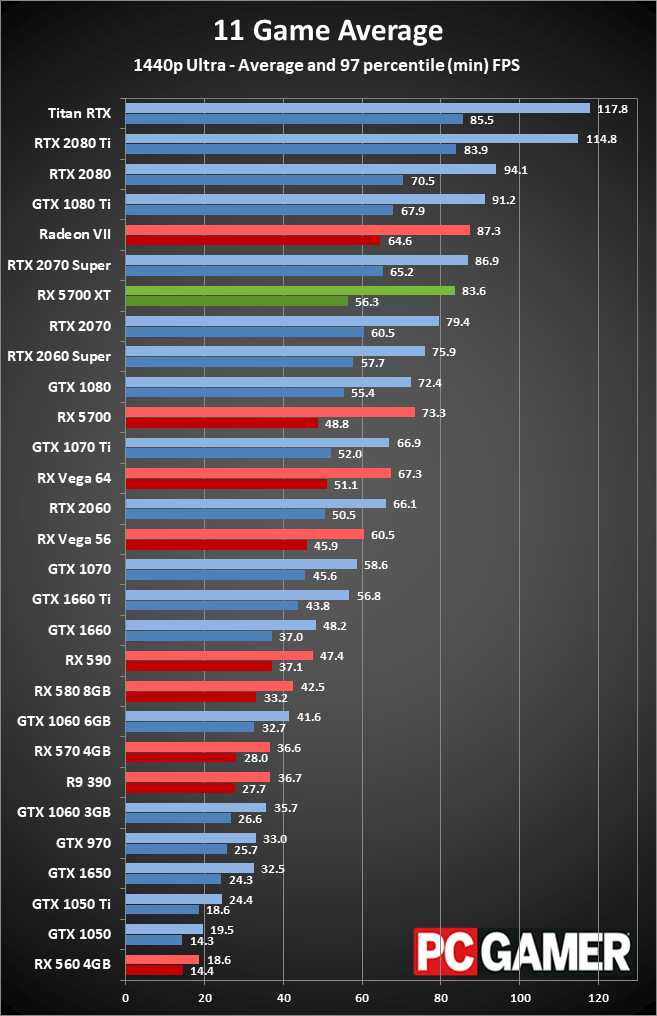

Here are the results, starting with 1080p. You might think that's not the primary target for a $400 graphics card, but if you're hoping to max out the capabilities of a 144Hz monitor, 1080p makes that easier than 1440p.
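The arithmetic behind that is simple. A 144Hz target leaves under 7ms to render each frame, and 1440p has roughly 1.78 times as many pixels to shade as 1080p. This sketch assumes the standard 1920x1080 and 2560x1440 resolutions and makes both numbers concrete:

```python
# Per-frame time budget for a target refresh rate, and how many more pixels
# 1440p pushes than 1080p. Pure arithmetic; nothing here is measured data.


def frame_budget_ms(refresh_hz: float) -> float:
    """Milliseconds available to render each frame to keep up with the display."""
    return 1000.0 / refresh_hz


RESOLUTIONS = {"1080p": (1920, 1080), "1440p": (2560, 1440)}
pixels = {name: w * h for name, (w, h) in RESOLUTIONS.items()}

print(f"60Hz budget: {frame_budget_ms(60):.2f} ms, 144Hz budget: {frame_budget_ms(144):.2f} ms")
print(pixels)  # {'1080p': 2073600, '1440p': 3686400}
print(f"1440p has {pixels['1440p'] / pixels['1080p']:.2f}x the pixels of 1080p")
```

Performance doesn't scale perfectly with pixel count, since CPU work per frame stays roughly the same at any resolution. Even so, the ratio explains why 144fps is far easier to reach at 1080p.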

Radeon RX 5700 XT performance

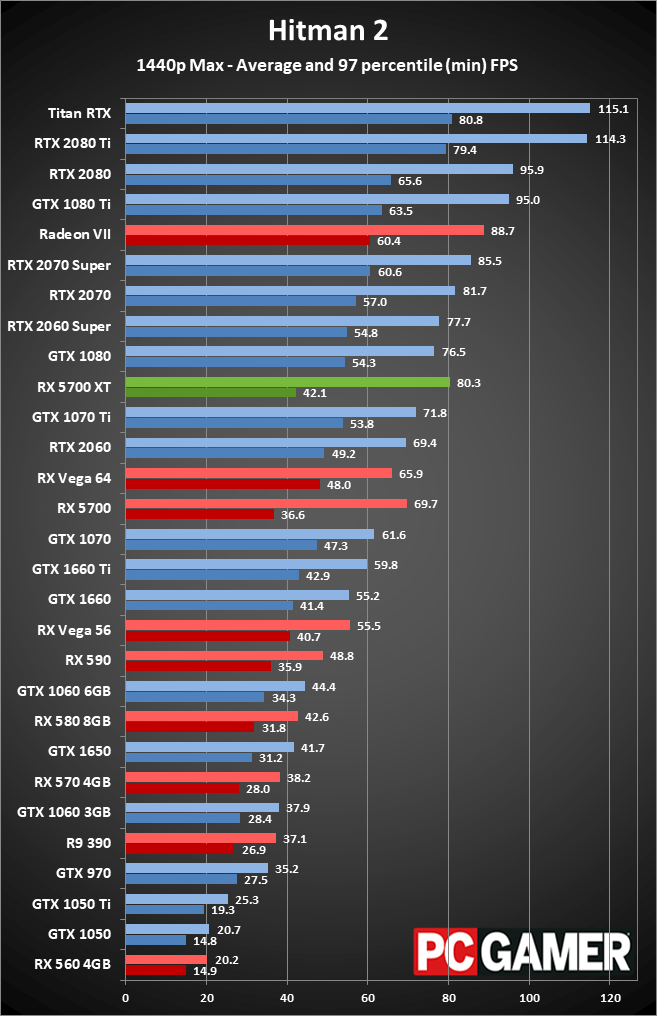

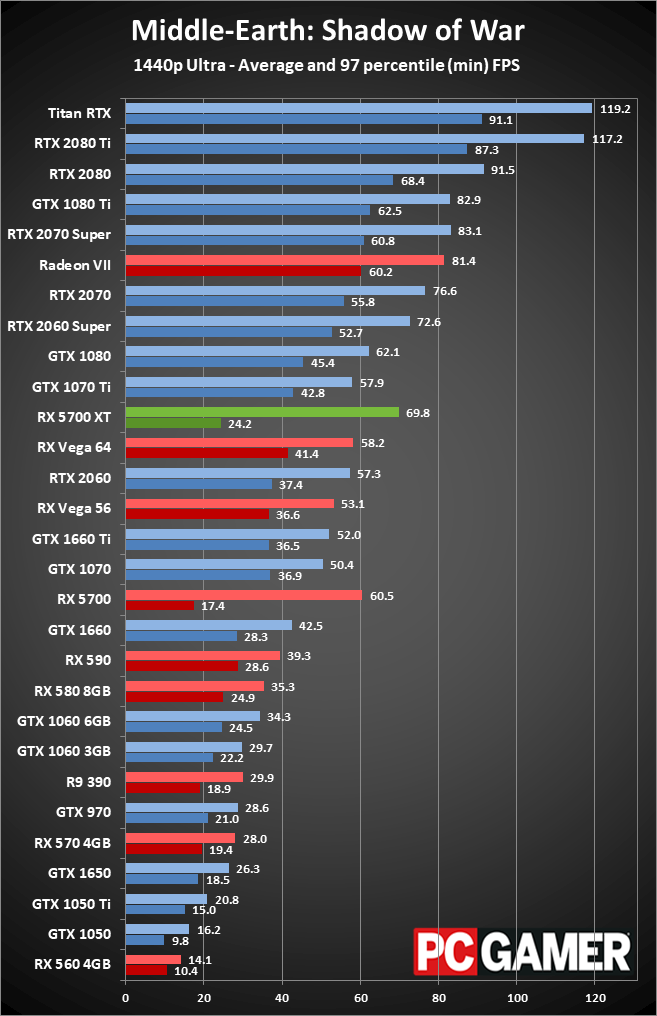

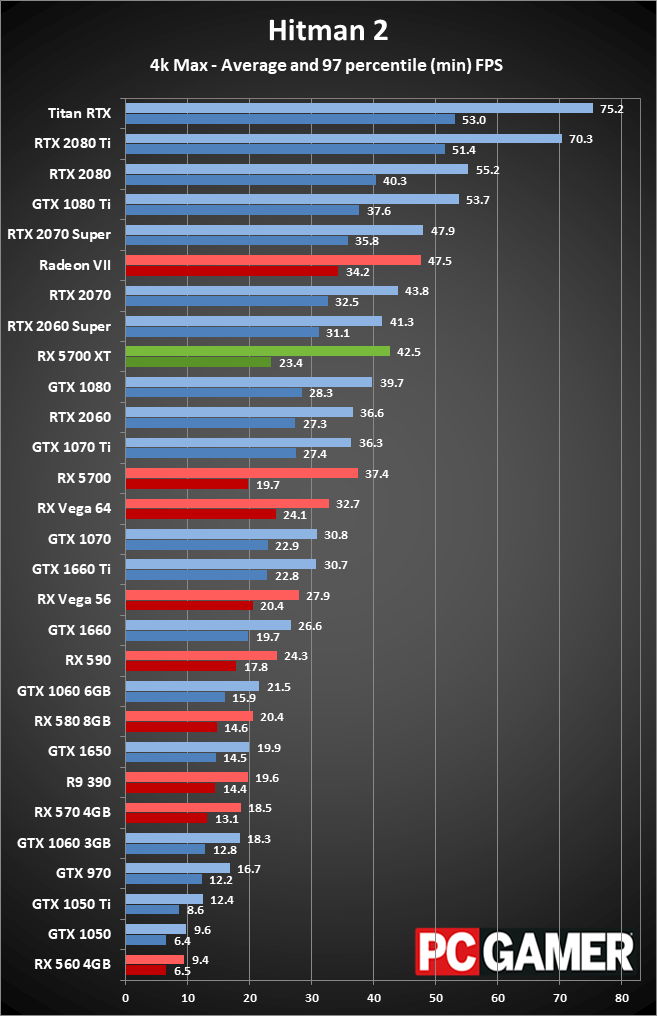

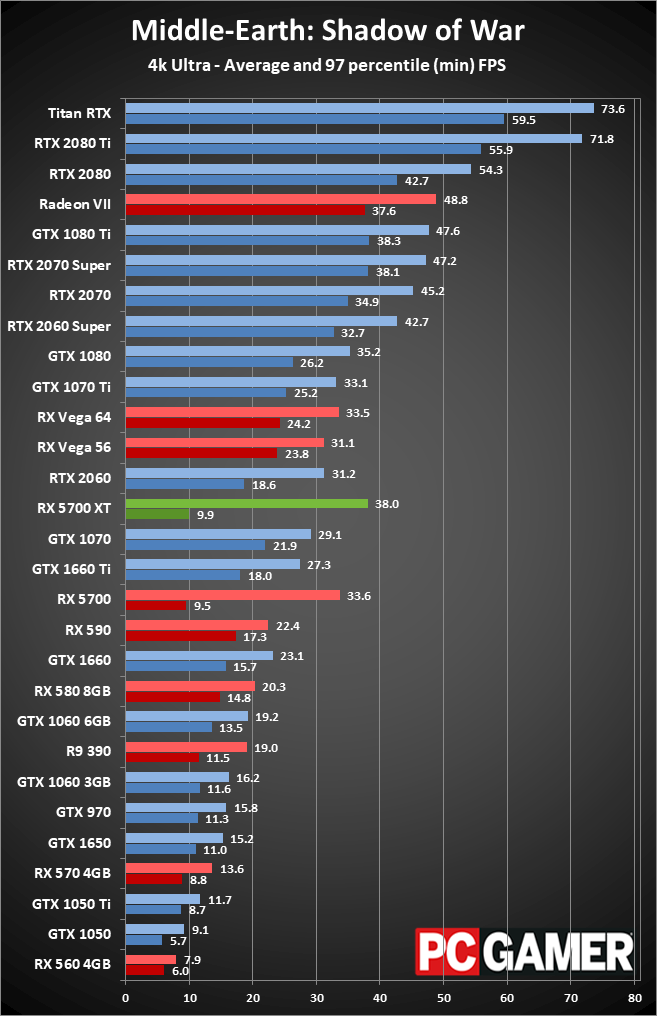

I'm not going to spend a ton of time analyzing the individual results, as you can flip through the charts. There are some performance anomalies I'm still trying to track down, but overall the performance and positioning of the various GPUs makes sense. The RX 5700 XT has some poor minimum fps results in several games (Hitman 2, Shadow of War, and Warhammer 2 specifically), but that's likely a result of the new architecture and launch drivers. I'll be retesting and updating the charts over the coming days, but in general it looks like the RX 5700 XT is around 5-10 percent faster than the RTX 2060 Super.

If anything, that gap is likely to grow as AMD irons out any remaining driver issues. Or maybe not, as Strange Brigade performance is quite a bit higher than expected and updated drivers might change that. Either way, performance is close enough that it shouldn't be the overriding factor when choosing between AMD and Nvidia. AMD is slightly faster and uses slightly more power, while Nvidia counters with features like DXR support and DLSS that AMD currently chooses to ignore.

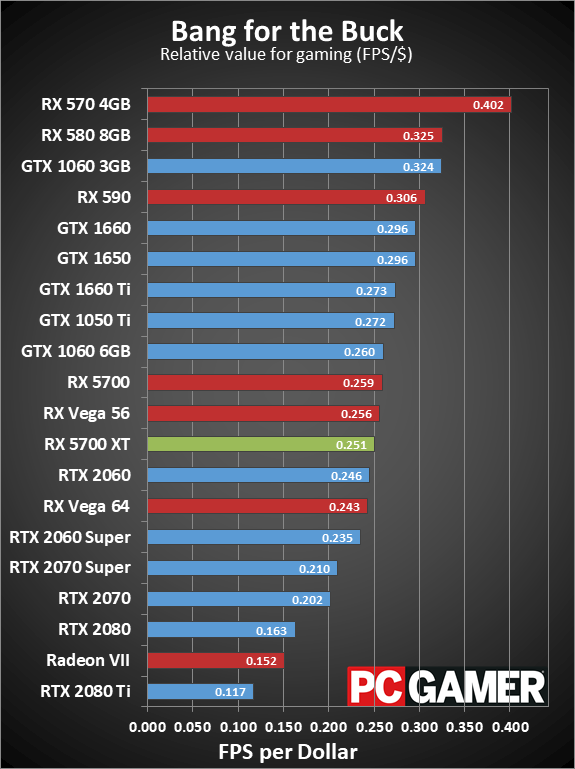

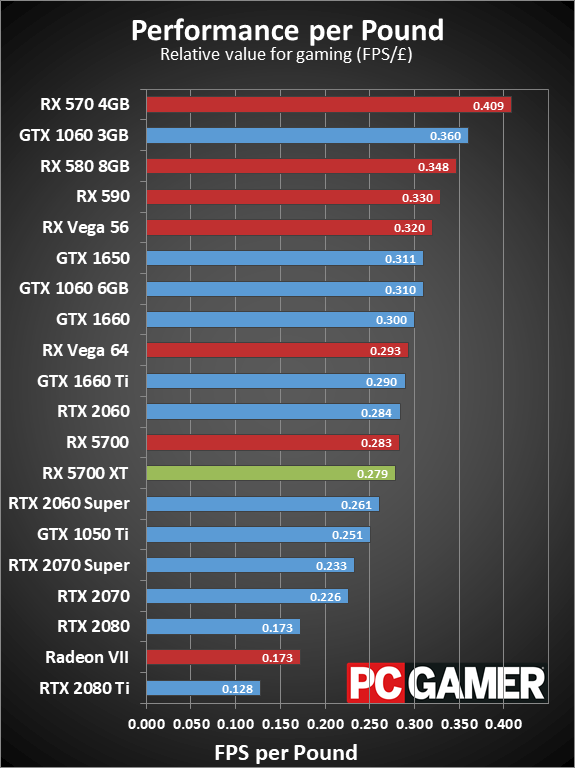

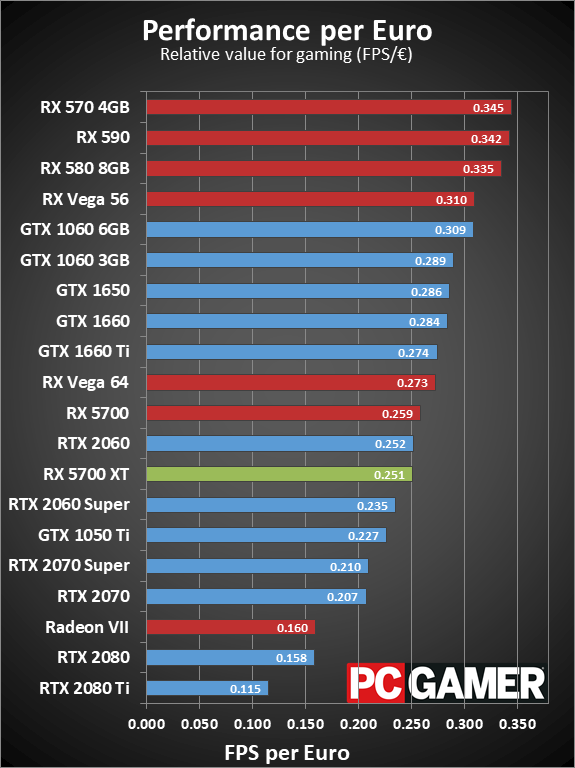

From a value perspective, there's still no beating the RX 570. I don't know if AMD is even manufacturing more Polaris GPUs at this point (probably not), but the 570 has had clearance pricing for pretty much all of 2019. The problem is that the 570 is really only good for 1080p medium to high quality gaming; there are many games where 1080p ultra at 60fps just isn't happening on that card. The RX 5700 XT, on the other hand, easily handles 1080p ultra and even 1440p ultra. There may be individual games where settings will have to be dropped if you want a steady 60fps at 1440p (e.g., Assassin's Creed Odyssey), but in most other games the 5700 XT is hitting 60fps, and in some cases even 100fps or more is viable. Pair up the RX 5700 XT with a FreeSync display, and any game that can hit 50fps or more will feel smooth.

That's pretty much all of the games in my test suite, which brings up another accolade for AMD. After several years of marketing and debate, Nvidia has started supporting FreeSync displays on its GTX and RTX cards. Unfortunately, AMD cards can't use FreeSync on a G-Sync display, and Nvidia has conducted testing and says most FreeSync displays don't meet its standards for official G-Sync Compatible certification, but it certainly feels like a net win for AMD and the open FreeSync (Adaptive Sync) standard.

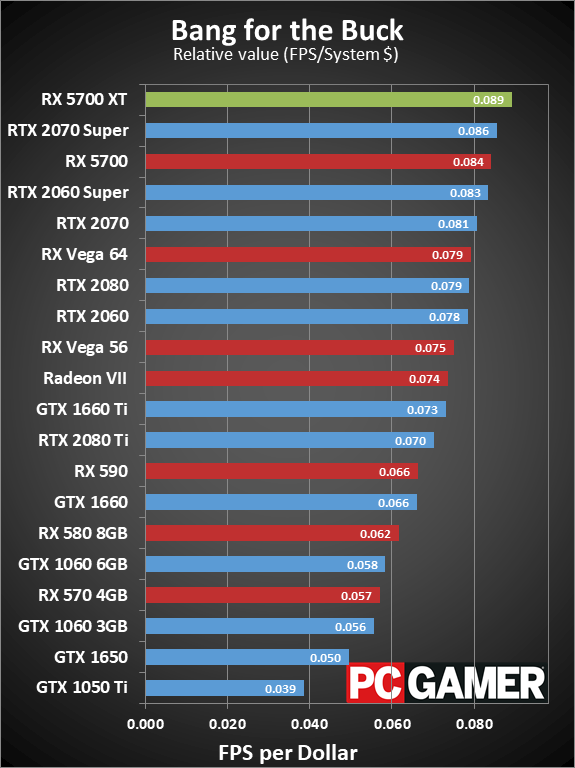

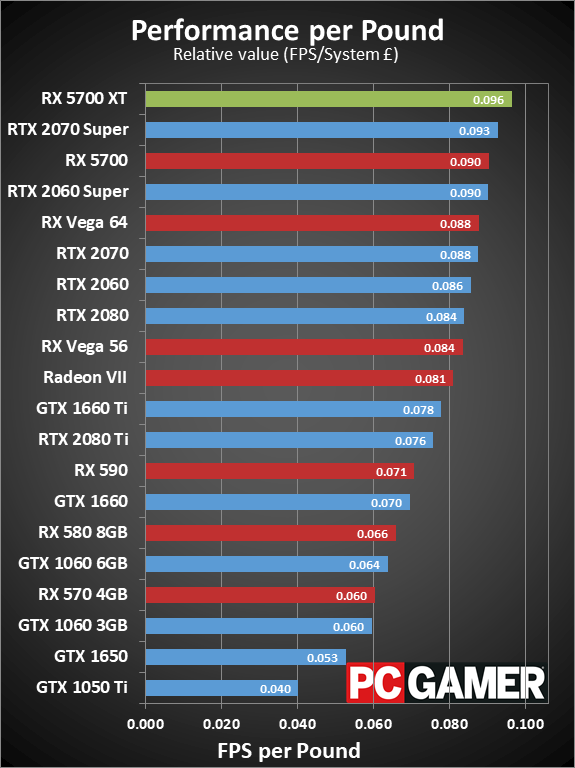

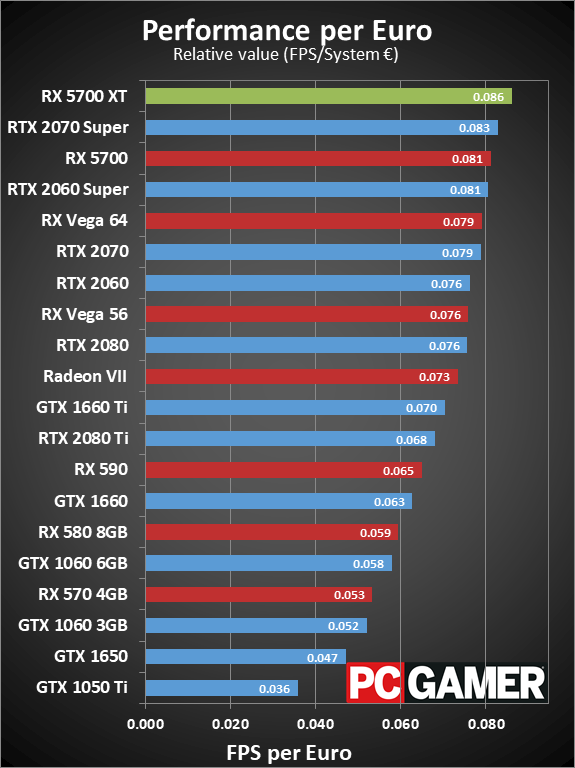

The other aspect of value is for building a completely new PC, and this is where the RX 5700 XT really looks good. For all three regional markets I checked, the RX 5700 XT ranks at the top of our system value charts, just ahead of the 2070 Super, RX 5700, and 2060 Super. If you're building or planning to build a new gaming PC any time soon, outside of the budget realm (i.e., less than $750 for the whole PC), I'd strongly advise going for a high-end GPU like AMD's RX 5700 XT or one of Nvidia's new RTX Super cards.

Radeon RX 5700 XT is a welcome addition to the GPU market

There's plenty to like with AMD's new Navi GPUs and the RDNA architecture. Performance and efficiency are substantially improved relative to the previous generation Vega and Polaris cards. Some of that is thanks to the 7nm manufacturing process, a good portion is thanks to the architectural improvements, and the reduced launch price is certainly a step in the right direction.

Incidentally, at the AMD event during E3 when pricing was originally revealed, I told AMD as much. Paraphrasing, I said, "You know that as soon as you announce pricing for the 5700 cards, Nvidia is going to respond, right? $399 for 2070 performance is a good deal, $450 would be high, and $500 is going to price the 5700 XT right out of the competition." I suspect that at some level, AMD always intended to drop the price. Starting at $450 meant Nvidia could drop to $400, which AMD could then match. Neither company wants the price wars to get too bloody.

Overall performance looks good, especially if you ignore the outliers that cropped up. Comparing the RX 5700 XT with the RTX 2060 Super, from a pure performance perspective AMD comes out ahead. However, you're still giving up on ray tracing if you go with AMD. Maybe it won't matter, but there are enough games coming down the pipeline with ray tracing support that I would rather have it than not, especially for relatively similar performance. (Hello, Cyberpunk 2077.)

AMD does have some features that could be worth exploring as well, including Radeon Anti-Lag, FidelityFX, and Radeon Image Sharpening (for games that don't directly include FidelityFX support). I haven't had time to fully investigate those features, or overclocking of the RX 5700 XT, but none of those would radically change what I think of the new graphics cards.

The good news is that AMD is now competitive with Nvidia's lower tier RTX cards, in both performance and pricing. It still can't touch the RTX 2080 and 2080 Ti, but it doesn't really need to right now. Some people might be willing to spend $700 or $1,200 on an extreme graphics card, but far more people will be interested in a $350-$400 high-end graphics card that can push 60-144fps at 1080p/1440p.

For those users, AMD's Radeon RX 5700 XT is a welcome addition, and getting three months of free access to Microsoft's Xbox Game Pass (including Gears 5 when it launches in September) as part of the package helps sweeten the deal. You may not get fancy ray tracing support, but if you've been hoping for a new graphics card upgrade that doesn't require supporting Nvidia, this is currently the best AMD has to offer and it's definitely worth considering.


Jarred's love of computers dates back to the dark ages when his dad brought home a DOS 2.3 PC and he left his C-64 behind. He eventually built his first custom PC in 1990 with a 286 12MHz, only to discover it was already woefully outdated when Wing Commander was released a few months later. He holds a BS in Computer Science from Brigham Young University and has been working as a tech journalist since 2004, writing for AnandTech, Maximum PC, and PC Gamer. From the first S3 Virge '3D decelerators' to today's GPUs, Jarred keeps up with all the latest graphics trends and is the one to ask about game performance.