Samsung's new GDDR6W graphics memory doubles performance and capacity

But it won't necessarily make for significantly faster graphics cards.

Samsung has announced its next-gen memory tech for high-performance graphics cards: GDDR6W. Claimed to offer double the capacity and performance of conventional GDDR6 memory, GDDR6W is said to be comparable with HBM2E for outright performance and will enable overall graphics memory bandwidth of 1.4TB/s. For reference, Nvidia's beastly GeForce RTX 4090 currently tops out at 1TB/s using GDDR6X.

Samsung is bigging up the new tech as being key to enabling "immersive metaverse experiences." However, the new tech probably won't enable more memory bandwidth or faster graphics cards in the short term. But more on that in a moment.

Samsung says the new memory spec boosts per-pin bandwidth to 22Gbps, up from the maximum 16Gbps spec of GDDR6 (GDDR6X tops out at 21Gbps per pin). More significantly, GDDR6W doubles overall bandwidth per memory chip package from 24Gbps for GDDR6 to 48Gbps, chiefly thanks to doubling the number of pins on each memory package.

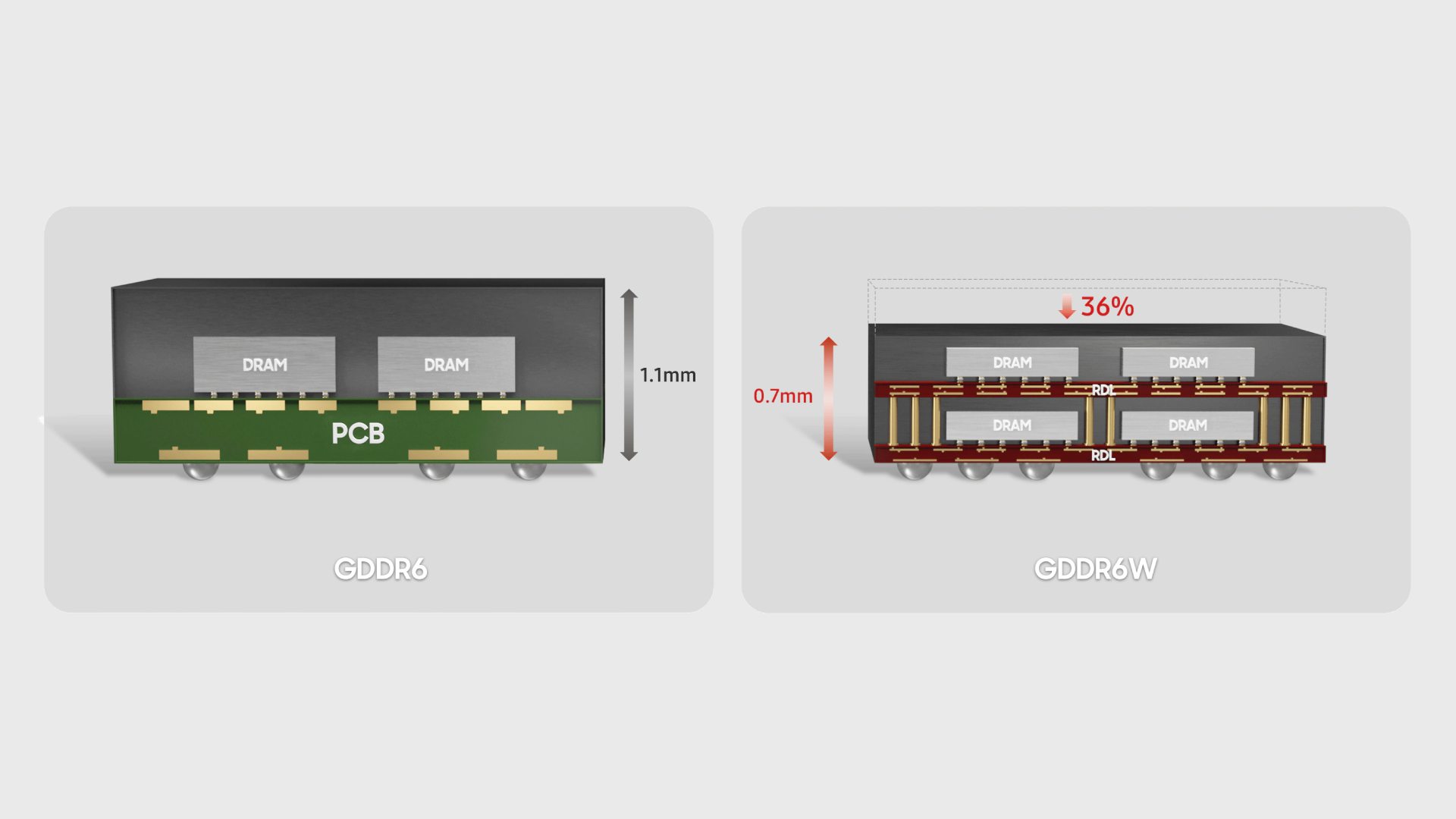

Chip capacity has also doubled from 16Gb to 32Gb. Samsung has achieved all that while maintaining exactly the same physical footprint as GDDR6 and GDDR6X. Perhaps most impressively of all, this has been done courtesy of double stacking memory chips in the package while actually reducing overall package height by 36 percent.

It's worth noting that the 1.4TB/s overall memory bandwidth claim relates to a 512-bit wide memory interface with eight GDDR6W packages totalling 32GB of graphics memory. An RTX 4090 24GB card uses 12 GDDR6X packages over a 384-bit bus. Given the same bus width, GDDR6X would only be slightly slower than the new GDDR6W standard.
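To see where those headline figures come from, here is a minimal sketch of the arithmetic, assuming peak bandwidth is simply bus width multiplied by per-pin data rate, divided by eight to convert bits to bytes. The function name `bus_bandwidth_gb_per_s` is our own for illustration, and the numbers are the bus widths and pin speeds quoted above.

```python
def bus_bandwidth_gb_per_s(bus_width_bits: int, pin_rate_gbps: float) -> float:
    """Peak memory bandwidth in GB/s for a given bus width and per-pin rate.

    Assumes every pin runs at the quoted rate; divides by 8 to go from
    gigabits to gigabytes.
    """
    return bus_width_bits * pin_rate_gbps / 8


# Samsung's 1.4TB/s claim: 512-bit bus at 22Gbps per pin
print(bus_bandwidth_gb_per_s(512, 22))  # 1408.0

# RTX 4090: 384-bit bus with GDDR6X at 21Gbps per pin
print(bus_bandwidth_gb_per_s(384, 21))  # 1008.0

# Hypothetical GDDR6X card on the same 512-bit bus
print(bus_bandwidth_gb_per_s(512, 21))  # 1344.0
```

On a like-for-like 512-bit bus, the gap between the two is 1,408GB/s versus 1,344GB/s, or a little under five percent.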

So, the critical point to note here is that Samsung is making direct performance comparisons with GDDR6 rather than GDDR6X, no doubt because GDDR6X is only produced by Micron.

Where GDDR6W has a clear advantage, however, is in capacity. With double the capacity of both GDDR6 and GDDR6X, only half the number of chips are required for a given amount of total memory, opening up the possibility for graphics cards with even more VRAM.

Sticking with the RTX 4090 comparison, had the card been based on GDDR6W, it would have needed only six memory packages rather than 12 to achieve the same 24GB capacity and roughly 1TB/s of bandwidth.

So, here's the critical takeaway: With GDDR6W you get double the performance (actually slightly more than double, thanks to that 22Gbps per pin versus 21Gbps for GDDR6X) and double the capacity per memory package. However, for any given capacity, you only need half the number of packages. In the end, then, the actual memory bandwidth available to the GPU is essentially the same as GDDR6X at any given capacity.
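That trade-off can be sketched in a few lines. This is an illustration only, assuming 32 data pins and 16Gb per GDDR6X package and 64 pins and 32Gb per GDDR6W package (figures implied by the package counts and bus widths quoted in this article). The `Package` class and `board_config` helper are hypothetical names of our own.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Package:
    name: str
    capacity_gbit: int    # capacity per package, in gigabits
    io_pins: int          # data pins per package (assumed)
    pin_rate_gbps: float  # per-pin data rate


GDDR6X = Package("GDDR6X", capacity_gbit=16, io_pins=32, pin_rate_gbps=21)
GDDR6W = Package("GDDR6W", capacity_gbit=32, io_pins=64, pin_rate_gbps=22)


def board_config(pkg: Package, target_capacity_gb: int):
    """Return (package count, bus width in bits, bandwidth in GB/s)."""
    # Ceiling division: enough packages to reach the target capacity
    packages = -(-target_capacity_gb * 8 // pkg.capacity_gbit)
    bus_width_bits = packages * pkg.io_pins
    bandwidth = bus_width_bits * pkg.pin_rate_gbps / 8
    return packages, bus_width_bits, bandwidth


print(board_config(GDDR6X, 24))  # (12, 384, 1008.0)
print(board_config(GDDR6W, 24))  # (6, 384, 1056.0)
```

Under these assumptions, both configurations end up on a 384-bit bus at 24GB, so the only bandwidth difference comes from the slightly higher pin speed. The same helper suggests a 48GB card would need 24 GDDR6X packages but only 12 GDDR6W packages.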

What GDDR6W really offers then is the option of using fewer packages to achieve the same capacity and performance, very likely at lower cost. Or else upping the capacity and performance to levels not yet seen. If you look at a 24GB RTX 4090 board, for instance, there's very limited space around the GPU for more memory packages.

A 48GB RTX 4090 with double the memory capacity simply wouldn't be possible with GDDR6X, even if that amount of memory would almost certainly be very silly indeed. The point is that GDDR6W opens up possibilities for the future. GDDR6W also looks particularly interesting for laptops. Fewer memory packages will always be a good thing for mobile.

Nvidia currently favours Micron's GDDR6X while AMD is sticking with GDDR6 for its latest graphics cards. Neither has indicated any plans to jump on Samsung's new GDDR6W technology. Indeed, it's not entirely clear whether any existing GPUs, including Nvidia's latest RTX 40 Series and AMD's new Radeon RX 7000, support GDDR6W. However, we think support is likely given GDDR6W essentially amounts to a new packaging tech for GDDR6 rather than a new memory tech per se. Watch this space...

Jeremy has been writing about technology and PCs since the 90nm Netburst era (Google it!) and enjoys nothing more than a serious dissertation on the finer points of monitor input lag and overshoot followed by a forensic examination of advanced lithography. Or maybe he just likes machines that go “ping!” He also has a thing for tennis and cars.