Gaming performance of Ryzen 7 vs. Core i7 with GeForce GTX 1080 Ti

Overclocked R7 1700 vs. i7-5930K with the fastest graphics card on the planet.

The GTX 1080 Ti review covers a lot of the details for Nvidia's latest halo product. $700 is a lot of money to spend on a graphics card, sure, but it's also a lot less than the former champion Titan XP. With a new GPU champion, I wanted to take a moment to quickly revisit the topic of gaming performance for AMD's new Ryzen 7 processors.

I didn't stick with stock CPU clocks either; that's boring, and it's not the sweet spot for the Ryzen 7 1700. Since all of AMD's Ryzen processors are multiplier unlocked, and I was able to run the 1700 at 3.9GHz on all eight cores, I used that as my test platform for AMD. I also ran all of the benchmarks with SMT disabled in the BIOS, which improves gaming performance by about five percent. It's a lousy workaround that hopefully won't be necessary at some point in the future, but for now it's a way to improve gaming performance at the cost of multi-threaded performance.

AMD System:

- Ryzen 7 1700 3.9GHz
- Asus Crosshair VI Hero
- Kingston HyperX 16GB DDR4-3000
- Samsung 960 Evo 500GB
- Samsung 850 EVO 2TB
- Rosewill Quark 750W
- Noctua NH-U12S SE-AM4
- Rosewill Cullinan

Intel System:

- Intel Core i7-5930K 4.2GHz
- Gigabyte GA-X99-UD4
- G.Skill Ripjaws 16GB DDR4-2666
- Samsung 950 Pro 512GB
- Samsung 850 EVO 2TB
- EVGA SuperNOVA 1300 G2
- Cooler Master Nepton 280L
- Cooler Master CM Storm Trooper

With an overclocked Ryzen 7 1700 as the AMD contender, pitting it against my regular GPU testbed makes for a nearly even fight. My Intel testbed runs an i7-5930K overclocked to 4.2GHz. I could push it to 4.5GHz thanks to the liquid cooling, but I think 4.2GHz, which is achievable with a decent air cooler (like the one in the AMD Ryzen system), makes for a fairer fight. Since this is a 6-core/12-thread Intel part and I'm only running a single GPU, it's also a virtual stand-in for the i7-5820K, i7-6800K, and i7-6850K; in gaming, all four processors running at 4.2GHz perform within two percent of each other.

Both systems otherwise use similar components, including 16GB of DDR4 memory. The Intel platform is 'only' running DDR4-2666 while the AMD platform runs DDR4-2933, but the Intel platform has quad-channel memory versus dual-channel on AMD, and in my own testing I haven't seen a huge benefit from higher memory clocks in gaming. All the games are installed on a Samsung 850 EVO 2TB SSD, and no extra software (streaming utilities, video capture, etc.) runs during the benchmarking process.

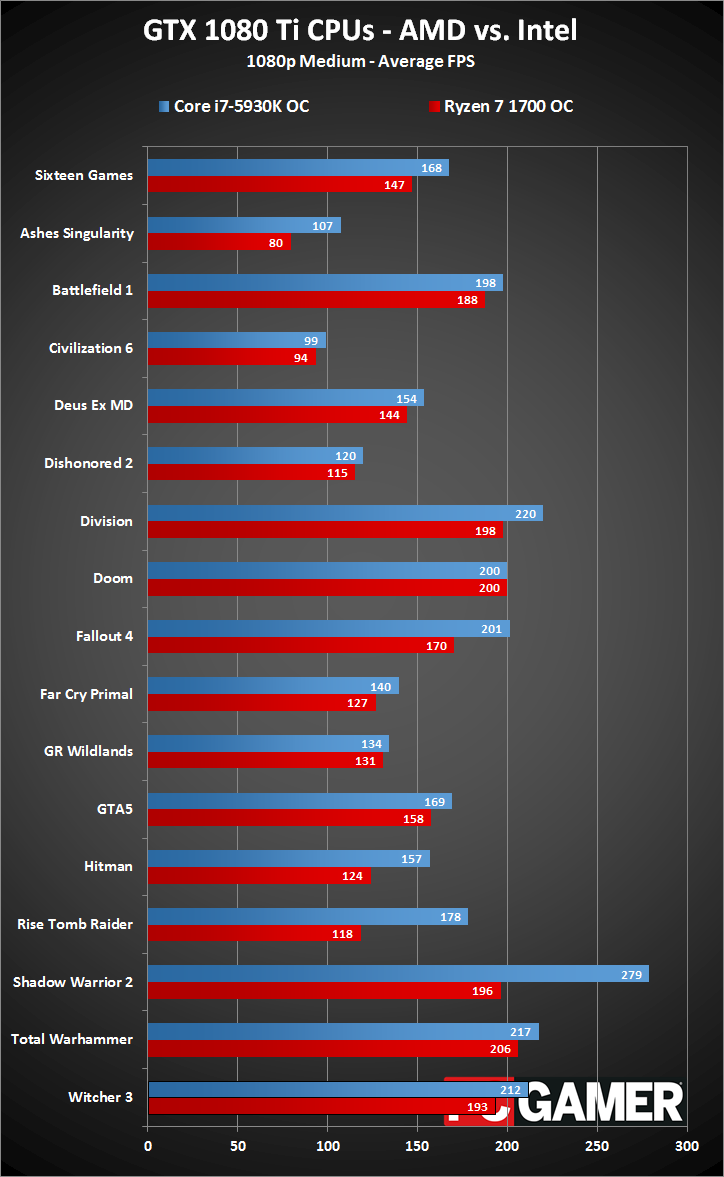

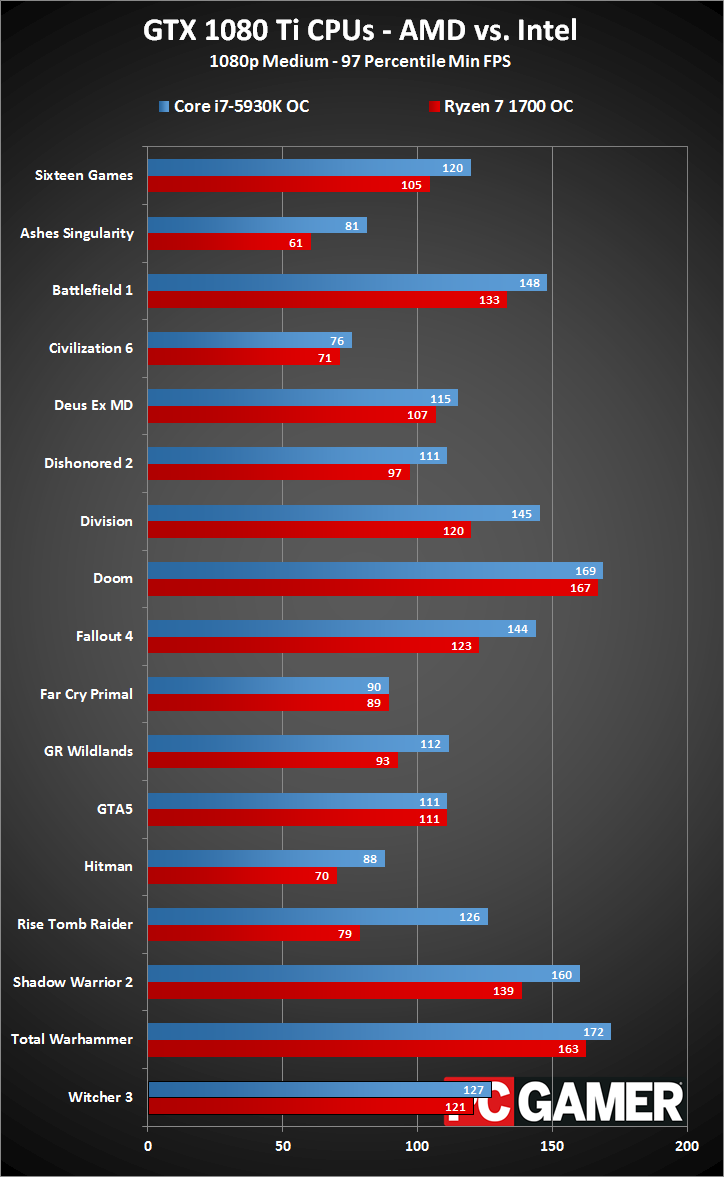

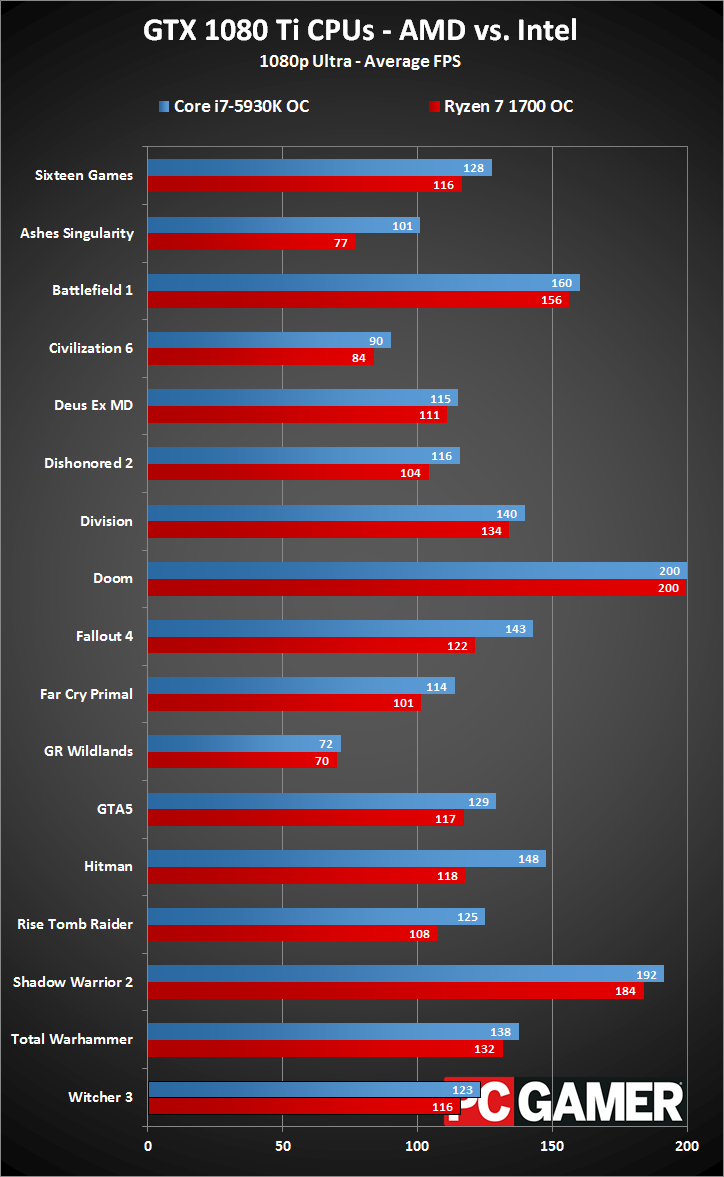

This time, rather than confining testing to just 1080p ultra, I've run the full suite of gaming benchmarks on Ryzen 7. I don't think 1080p medium quality is particularly important on a GPU like this, but I wanted to see whether CPU scaling was any better or worse at lower quality settings. Obviously, 1440p and 4K testing push the bottleneck over to the GPU, so the CPU gap should shrink. Here are the results of the testing; if you want to know the specific settings used for each game, please refer back to the full GTX 1080 Ti review and check the text below each chart.

Charts: 1080p medium minimum fps and average fps.

Starting with 1080p medium testing, the difference in performance between the two CPUs is 10 percent or less in ten of the games. Doom ends up as a tie, thanks to its 200 fps framerate cap. Two other games also have caps: Battlefield 1 (200 fps) and Dishonored 2 (120 fps). Both CPUs get close to those limits, with periodic dips in framerate, but the Intel processor maintains a higher minimum framerate (see the second chart in the gallery above).
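A framerate cap hides CPU differences because neither system can deliver frames faster than the limit. Here's a minimal sketch of that effect. It assumes the cap acts as a floor on each frame time (real engines implement limiters in different ways), and the function name and frame times are hypothetical, not measured data:

```python
def capped_avg_fps(frame_times_ms: list[float], cap_fps: float) -> float:
    """Average fps when no frame can finish faster than the cap allows."""
    floor_ms = 1000.0 / cap_fps  # a 200 fps cap means a 5 ms minimum frame time
    capped = [max(t, floor_ms) for t in frame_times_ms]
    return 1000.0 * len(capped) / sum(capped)

# Hypothetical uncapped frame times: 250 fps vs. about 222 fps.
fast_cpu = [4.0] * 100
slower_cpu = [4.5] * 100
print(capped_avg_fps(fast_cpu, 200.0), capped_avg_fps(slower_cpu, 200.0))  # 200.0 200.0
```

In this sketch, once both CPUs exceed the cap, the measured averages are identical, even though the uncapped gap is 12.5 percent.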

The remaining six games show double-digit percentage leads for Intel. The two smallest are The Division (11 percent) and Fallout 4 (18 percent), but the other four are far more significant: Ashes of the Singularity (35 percent), Hitman (27 percent), Rise of the Tomb Raider (51 percent), and Shadow Warrior 2 (42 percent) favor Intel's CPU by large margins. Across the tested games, the overclocked 5930K ends up 14 percent faster on average at 1080p medium.
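The article doesn't say how the per-game results are combined into one overall figure. The sketch below assumes an arithmetic mean of per-game percentage leads and, for comparison, shows a geometric mean of fps ratios, which is a common alternative. The function names and fps pairs are hypothetical, not the measured results.

```python
from math import prod

def percent_lead(intel_fps: float, amd_fps: float) -> float:
    """Percentage by which the Intel result exceeds the AMD result."""
    return (intel_fps / amd_fps - 1.0) * 100.0

def mean_lead(pairs: list[tuple[float, float]]) -> float:
    """Arithmetic mean of per-game percentage leads (an assumed method)."""
    leads = [percent_lead(intel, amd) for intel, amd in pairs]
    return sum(leads) / len(leads)

def geomean_lead(pairs: list[tuple[float, float]]) -> float:
    """Geometric mean of per-game fps ratios, expressed as a percentage lead."""
    ratios = [intel / amd for intel, amd in pairs]
    return (prod(ratios) ** (1.0 / len(ratios)) - 1.0) * 100.0

# Hypothetical (Intel fps, AMD fps) pairs, NOT the article's measurements:
# two CPU-bound games and one game pinned at a 200 fps cap.
games = [(135.0, 100.0), (127.0, 100.0), (200.0, 200.0)]
print(f"arithmetic mean lead: {mean_lead(games):.1f}%")  # 20.7%
print(f"geometric mean lead: {geomean_lead(games):.1f}%")  # 19.7%
```

With these made-up numbers the two methods differ by about one percentage point, so the choice of method matters little for a headline figure like "14 percent faster."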


Looking quickly at the 97th percentile minimum fps, Intel's overall lead is about the same (15 percent), but it gets there in a different fashion. This time, nine of the games show double-digit percentage gains. Ashes still gives Intel a 34 percent lead, and Rise of the Tomb Raider gives it a 60 percent lead. Ghost Recon Wildlands (20 percent) shows a big gap in minimum fps even though its average fps was practically the same on both CPUs. Other noteworthy differences: Battlefield 1 (11 percent), Dishonored 2 (14 percent), The Division (21 percent), Fallout 4 (17 percent), Hitman (25 percent), and Shadow Warrior 2 (16 percent).
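The article doesn't spell out how the 97th percentile minimum is calculated. This sketch assumes one common approach: average the slowest 3 percent of frame times, then convert that average back to fps. The function names and the frame-time capture are invented for illustration.

```python
def avg_fps(frame_times_ms: list[float]) -> float:
    """Average fps over a run: total frames divided by total time."""
    return 1000.0 * len(frame_times_ms) / sum(frame_times_ms)

def p97_min_fps(frame_times_ms: list[float]) -> float:
    """Average the slowest 3% of frame times (at least one frame), then convert to fps."""
    slowest_first = sorted(frame_times_ms, reverse=True)
    count = max(1, (len(slowest_first) * 3 + 99) // 100)  # ceil(3% of frames) with integer math
    tail = slowest_first[:count]
    return 1000.0 / (sum(tail) / count)

# Hypothetical capture: 97 frames at 10 ms (100 fps) plus 3 hitches at 25 ms.
frames = [10.0] * 97 + [25.0] * 3
print(f"average fps: {avg_fps(frames):.1f}")  # average fps: 95.7
print(f"97th percentile min: {p97_min_fps(frames):.1f}")  # 97th percentile min: 40.0
```

Three stutters barely move the average but dominate the percentile figure, the same kind of pattern that shows up in Ghost Recon Wildlands.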

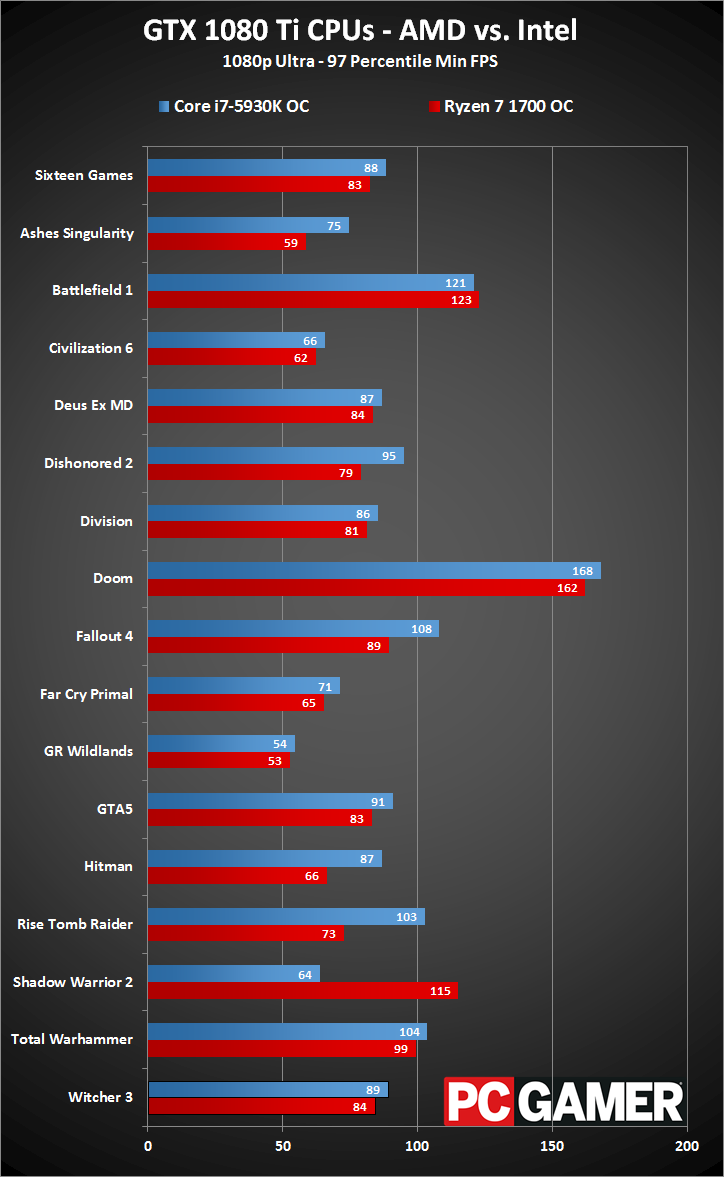

Charts: 1080p ultra minimum fps and average fps.

Moving from medium to ultra quality changes the dynamic quite a bit. Intel maintains a ten percent lead on average, with a seven percent lead in minimum fps. However, there are a few anomalies, like the massive drop in minimum fps in Shadow Warrior 2 on the Intel system. (This appears to be an Nvidia driver bug, though I'm surprised it only showed up on Intel's CPU.) Ashes of the Singularity (30 percent), Fallout 4 (18 percent), Hitman (26 percent), and Rise of the Tomb Raider (17 percent) continue to heavily favor Intel, and minimum fps likewise favors Intel in those four games by 20-40 percent. In the other games, however, the gap shrinks to about 10 percent or less, and seven games show less than a five percent difference.

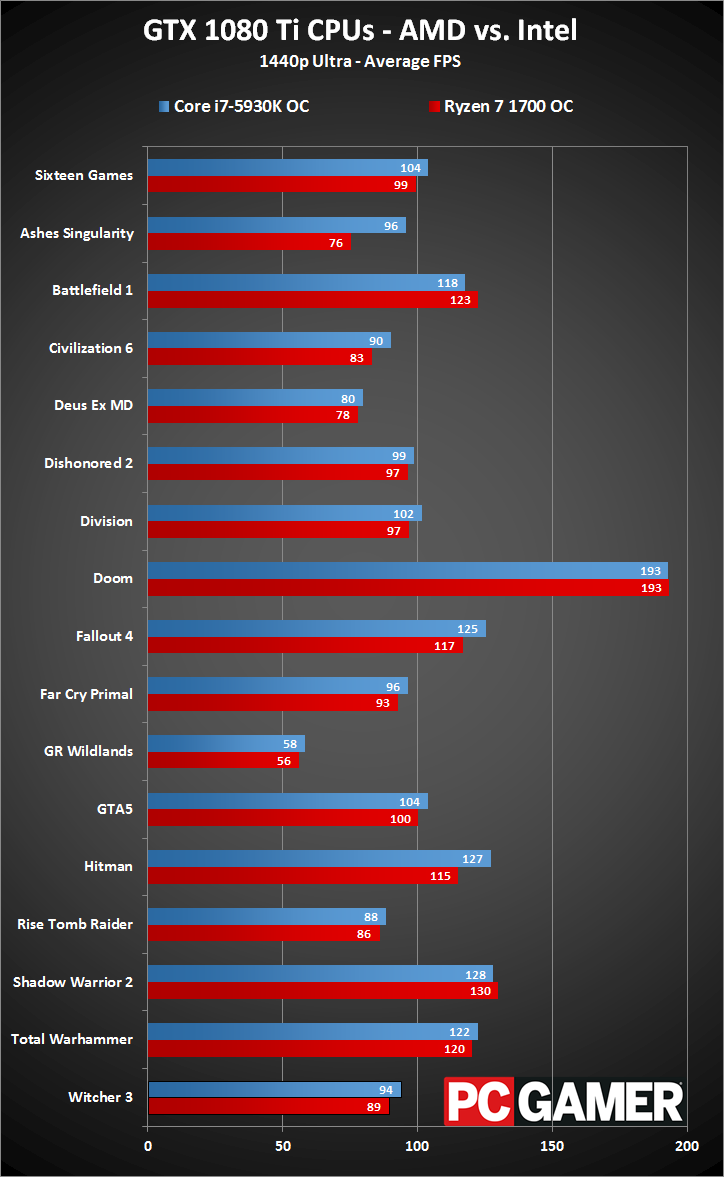

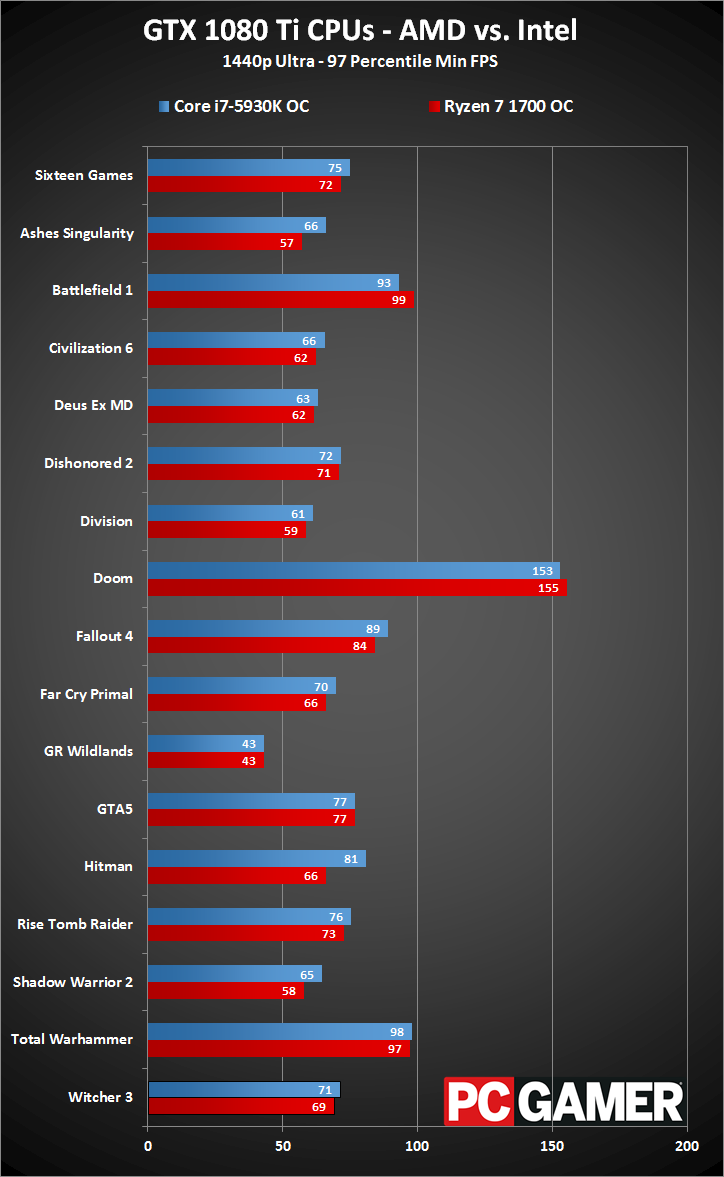

Charts: 1440p ultra minimum fps and average fps.

At 1440p ultra, nearly all of the games drop below a ten percent difference, and Intel's overall average lead is only five percent. Ashes of the Singularity is the big exception, with Intel still leading by 27 percent in average fps and 15 percent in minimum fps. Hitman also gives Intel a 10 percent lead in average framerates and a 23 percent lead in minimum fps. Otherwise, we're getting very close to parity.

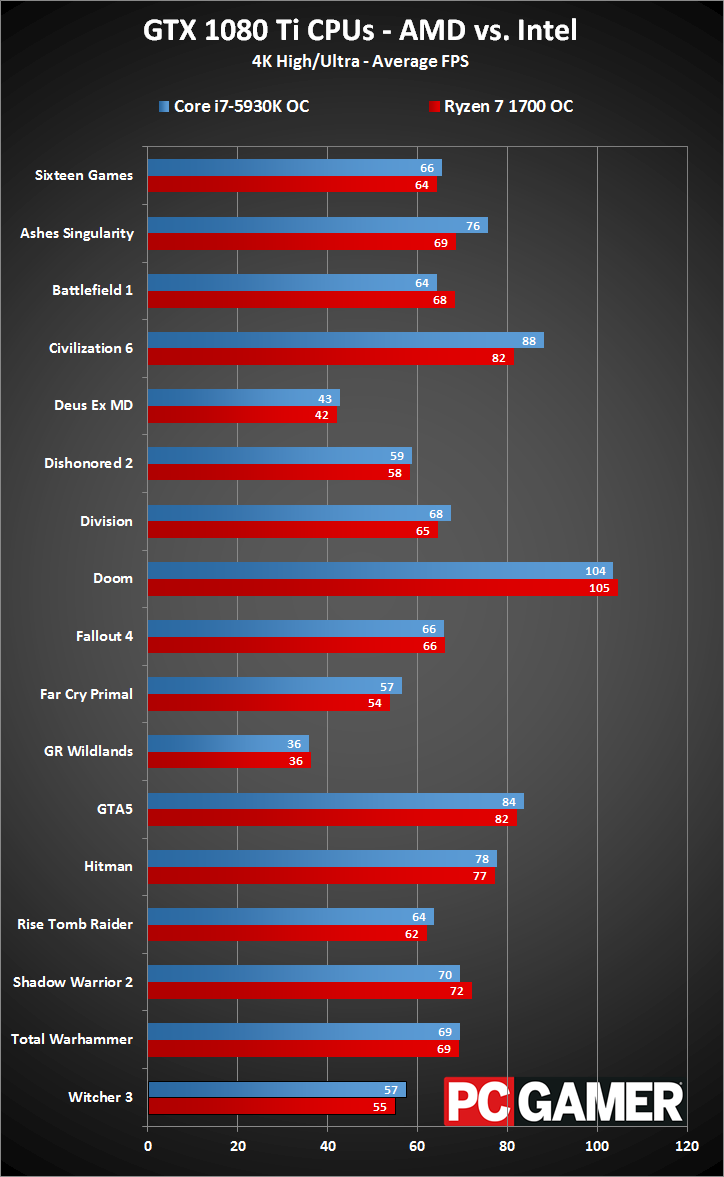

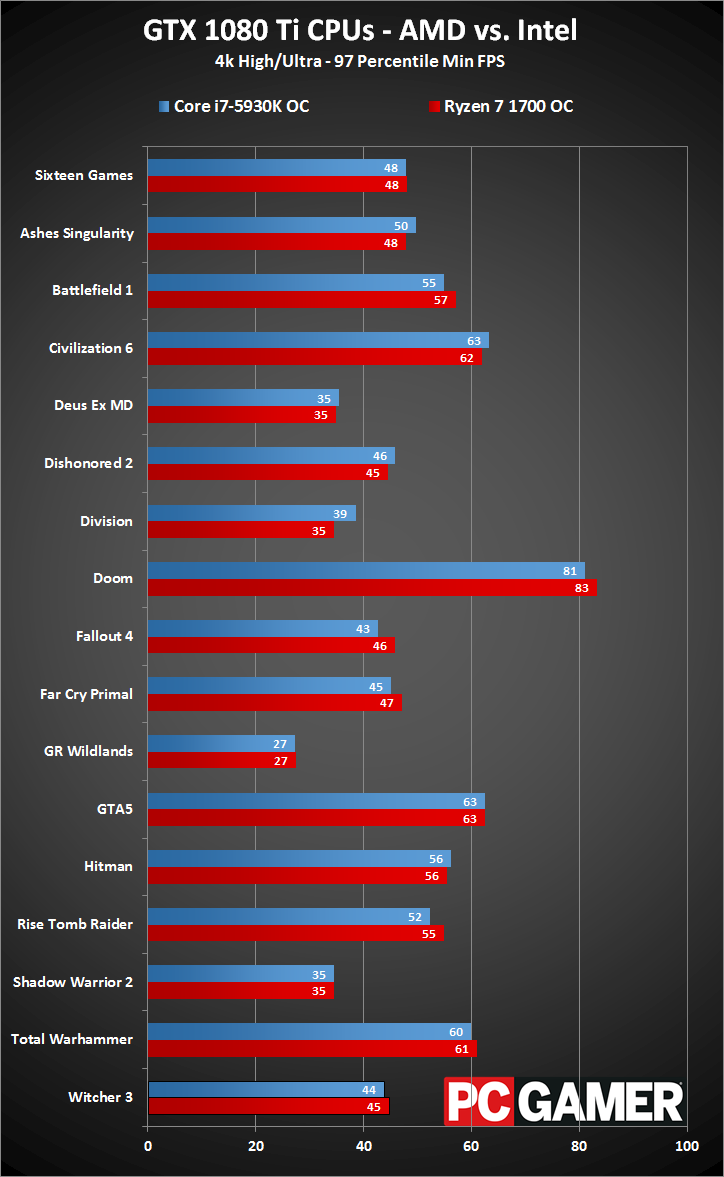

Charts: 4K high/ultra minimum fps and average fps.

Finally, at 4K high/ultra settings (depending on the game), Ryzen starts to claim a few wins. Most of these are within the margin of error, but interestingly there are a few cases where Ryzen posts higher minimum fps, giving it a minuscule overall lead in that category. Intel still has a tenuous two percent lead in average framerates (and a ten percent lead in Ashes), but for all intents and purposes, gaming at 4K is almost entirely GPU limited, and the result is a tie.

GTX 1080 Ti CPU scaling

In the most CPU bound testing I did, the overall difference between Intel's Core i7 parts and Ryzen 7 ranges from negligible to as much as 50 percent. And with SMT enabled, Ryzen 7 would drop another 5-10 percent on average. The i7-7700K overclocked to 5.0GHz should be a bit faster than even the 5930K, though I haven't had a chance to fully test this yet. (And I'm traveling again, so it will be a week or more—sorry. Check back in a couple of weeks and I'll see about adding overclocked i7-7700K results to the charts.)
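To see how the SMT penalty stacks on top of an existing gap, here's a quick worked example. It assumes, hypothetically, that enabling SMT lowers Ryzen's fps uniformly by 5 or 10 percent while Intel's result stays fixed. Under that assumption a 50 percent lead compounds rather than simply adding; the function name is illustrative.

```python
def lead_with_smt_penalty(lead_pct: float, smt_penalty_pct: float) -> float:
    """Intel's percentage lead after Ryzen loses smt_penalty_pct of its fps."""
    intel = 1.0 + lead_pct / 100.0         # Intel fps relative to Ryzen with SMT off
    ryzen = 1.0 - smt_penalty_pct / 100.0  # Ryzen fps with SMT on, relative to SMT off
    return (intel / ryzen - 1.0) * 100.0

for penalty in (5.0, 10.0):
    print(f"{penalty:.0f}% SMT penalty -> {lead_with_smt_penalty(50.0, penalty):.1f}% Intel lead")
# 5% SMT penalty -> 57.9% Intel lead
# 10% SMT penalty -> 66.7% Intel lead
```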

Gaming remains a somewhat perplexing story for AMD's Ryzen 7 parts, and I still hope things will improve over time. The good news is that at higher quality settings and higher resolutions, the gap narrows tremendously, but I'm not convinced people buying a $700 graphics card will want to risk lowered gaming performance at all.

The wildcard is future optimizations and multi-core utilization, which could start favoring Ryzen 7 over the i7-7700K. Developer Oxide has said they're working on a Ryzen-specific update to improve performance in Ashes of the Singularity, for example.

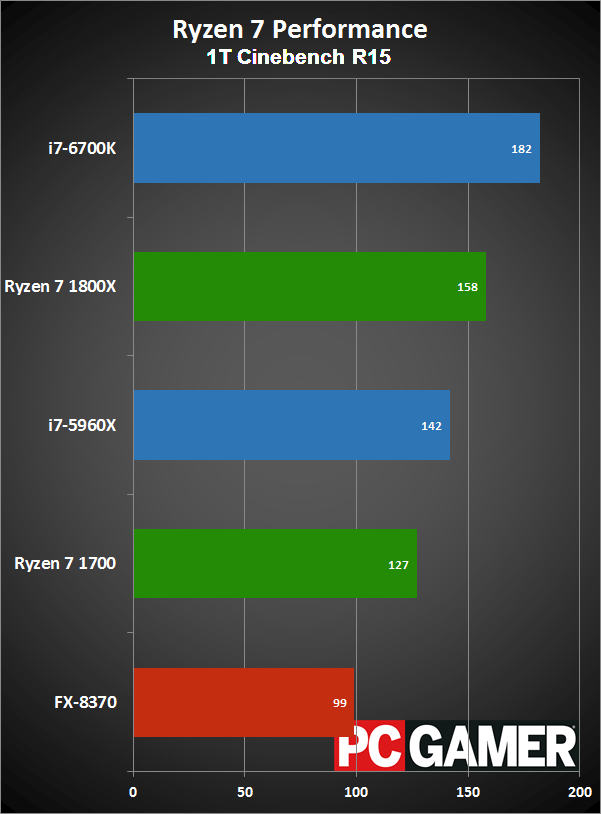

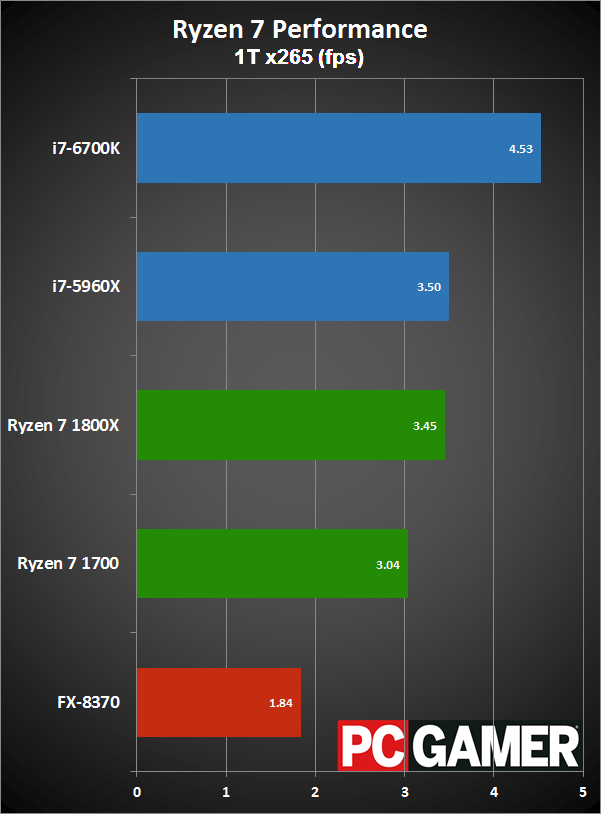

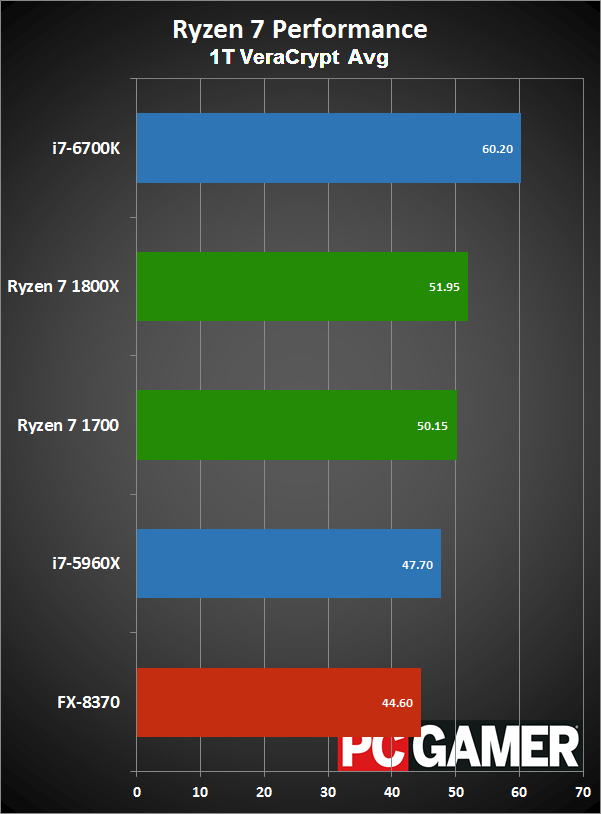

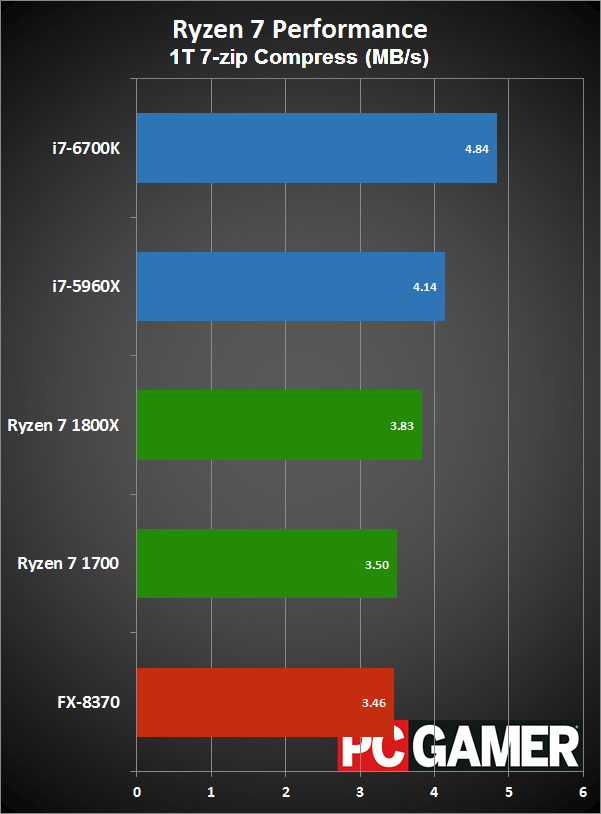

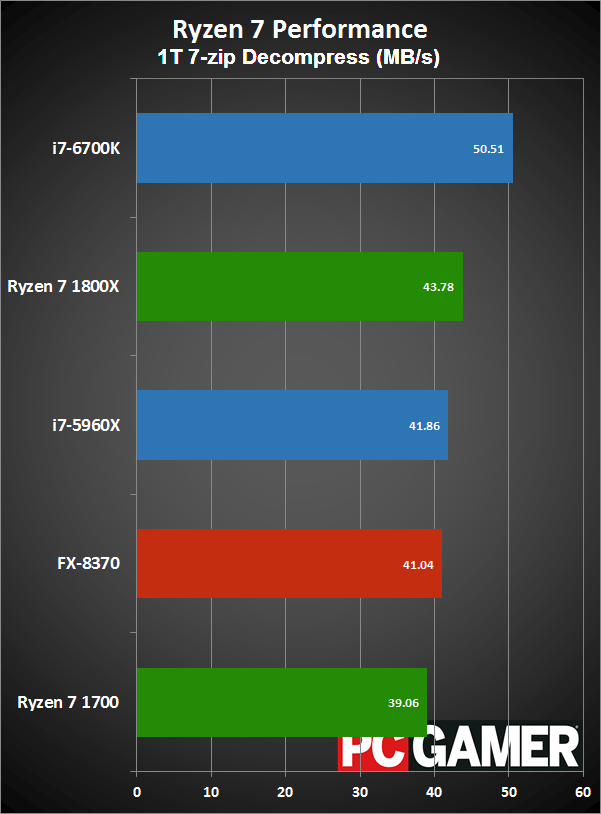

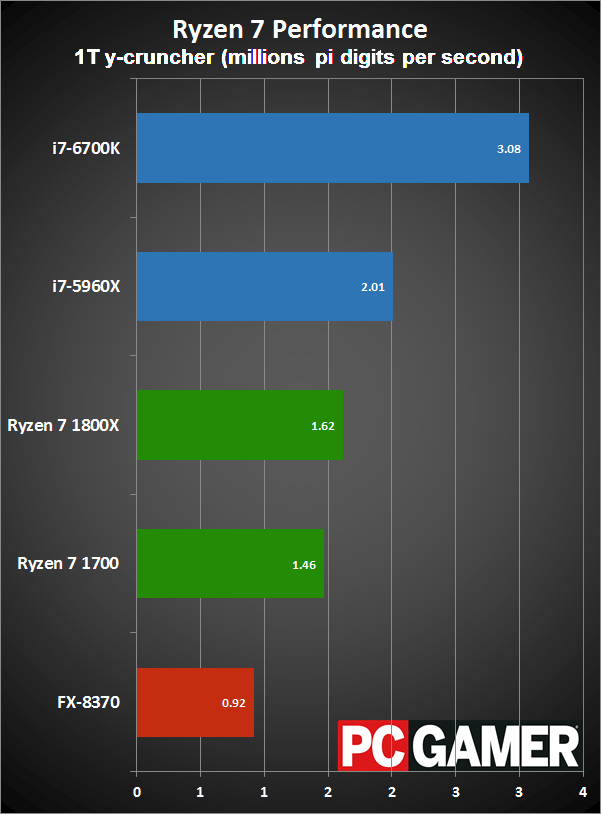

Note that y-cruncher makes heavy use of AVX instructions, which favors Intel's CPUs.

The true oddity in all of this is that Ryzen's multi-threaded and even single-threaded performance actually matches up quite nicely with Intel's Haswell/Broadwell CPUs. The charts above all run on a single thread at stock clocks, and other than in y-cruncher with its use of AVX, Ryzen is competitive with and even slightly faster than Intel's X99 chips (though the higher clockspeeds of Skylake/Kaby Lake are another matter). I'm not sure what games are doing at a low level, in the instruction stream, that causes such a change.

Hopefully AMD can sort out Ryzen's gaming performance before RX Vega comes out, which gives them about two months. As Picard would say, make it so.

But that's purely looking at things from a gaming perspective, and many people do a lot of other things on their PCs. If you ever do video encoding or editing, the Ryzen 7 1700 suddenly becomes extremely attractive compared to just about any Intel CPU. It costs slightly less than a 7700K, overclocks nearly as well (in my limited sampling) as the more expensive Ryzen 7 parts, and doubles the core and thread counts relative to Intel's Kaby Lake. And if you're running an uber-GPU like the 1080 Ti, you're probably doing so at 1440p or 4K, in which case sacrificing a bit of gaming performance for the additional cores makes a lot of sense.

My general recommendation for gamers is to spend about two to three times as much on the graphics card as on the processor, which means the 1080 Ti is a good pairing for the 7700K and the Ryzen 7 1700, and the i7-5820K and i7-6800K are pretty close as well. For heavy multitaskers and people doing computationally intensive work outside of games, Ryzen 7 is worth a very serious look. And if you're thinking about running a pair of GTX 1080 Ti cards in SLI, for now I'd lean toward the extra PCIe lanes and overclocking capability of the i7-6850K. It won't be faster than Ryzen 7 in non-gaming workloads, but anyone running 1080 Ti SLI likely won't care about that.
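The two-to-three-times rule of thumb is simple arithmetic. In this minimal sketch the function names and the $330 and $500 CPU prices are illustrative placeholders. Only the $700 GTX 1080 Ti price comes from this article.

```python
def fits_rule_of_thumb(gpu_price: float, cpu_price: float,
                       low: float = 2.0, high: float = 3.0) -> bool:
    """True if the GPU costs between `low` and `high` times as much as the CPU."""
    return low <= gpu_price / cpu_price <= high

def cpu_budget(gpu_price: float, low: float = 2.0, high: float = 3.0) -> tuple[float, float]:
    """CPU price range that pairs with a given GPU under the rule."""
    return gpu_price / high, gpu_price / low

gtx_1080_ti = 700.0
lo, hi = cpu_budget(gtx_1080_ti)
print(f"CPU budget: ${lo:.0f} to ${hi:.0f}")  # CPU budget: $233 to $350
print(fits_rule_of_thumb(gtx_1080_ti, 330.0))  # True (ratio of about 2.1)
print(fits_rule_of_thumb(gtx_1080_ti, 500.0))  # False (ratio of 1.4)
```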

Jarred's love of computers dates back to the dark ages when his dad brought home a DOS 2.3 PC and he left his C-64 behind. He eventually built his first custom PC in 1990 with a 286 12MHz, only to discover it was already woefully outdated when Wing Commander was released a few months later. He holds a BS in Computer Science from Brigham Young University and has been working as a tech journalist since 2004, writing for AnandTech, Maximum PC, and PC Gamer. From the first S3 Virge '3D decelerators' to today's GPUs, Jarred keeps up with all the latest graphics trends and is the one to ask about game performance.
