Warhammer: Vermintide 2 settings guide: demanding for both CPU and GPU

We've tested a huge number of configurations and hardware to see how the game runs.

Warhammer: Vermintide 2 (which scored 80 in our review) is the latest incarnation of the Left 4 Dead style of multiplayer co-op. Developed by Fatshark, the focus is on gritty melee combat, which means you’ll be up close and personal when it comes time to dismember the hordes of Skaven and Rotblood Raiders. I've run a ton of benchmarks to show how the game performs on a variety of hardware, though getting repeatable and useful benchmarks is tricky.

The worst-case scenario for performance typically happens when a horde of Skaven or Rotblood attacks. Unfortunately, using one of those moments as a benchmark is impractical since the number and location of the enemies are basically randomized. I want repeatable results, so I’ve compromised by using the first part of the Empire in Flames level, which is the most demanding level opening I've found in the collection of maps. The caveat is that framerates during horde attacks drop substantially compared to the average fps I’m showing, in some cases by more than half. Those taxing horde sequences also tend to strain the CPU more than the GPU, which is something else to consider, but more on that in a moment.

The good news is that the combination of co-op and melee combat favors broadly swinging your sword to hit multiple enemies, so maintaining an extremely high framerate isn’t as critical as in competitive shooters where aiming is more nuanced. However, if you're playing the elf Kerillian, or the fire mage Sienna, aiming ranged attacks can become more of a factor. Regardless, 60 fps and above is sufficient, 30-60 fps is still playable, and it's only when you drop below 30 fps that things really start to bog down.

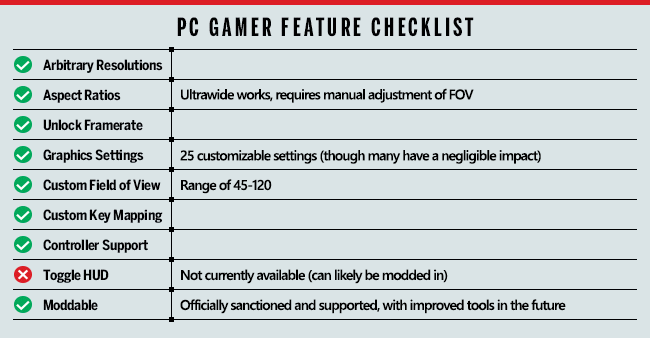

Before going any further, let's look at the core features list.

Vermintide 2 includes all the key features we like to see in modern games, with the only real omission being the ability to disable the HUD. That's a bit odd, considering the promotional trailer was clearly created using exactly such an option. Thankfully, with the promised mod support, someone should be able to fix this, but out of the box you're stuck with the HUD: the only things you can disable are the crosshair and subtitles.

Mod support won't officially arrive until about a month after release, according to the roadmap, and once it's available we still have to wait and see how many modders are interested in tinkering with the game.

Vermintide 2 also features a ton of graphics options, but as is often the case, many of the settings don't drastically change the way the game looks. There's also an FOV slider with a wide range of 45-120 degrees. Oddly, the FOV isn't altered when you choose an ultrawide setting, so you have to manually tweak things to get something that looks right to you.
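If you'd rather calculate a starting point than eyeball it, a common approach is to hold the vertical FOV constant and widen the horizontal FOV to match the new aspect ratio. The sketch below assumes the slider value is a horizontal FOV chosen for a 16:9 display, which is an assumption rather than something the game documents; if the slider is actually a vertical FOV, this conversion doesn't apply. The function name and the 2560x1080 target are purely illustrative.

```python
import math

def widen_hfov(hfov_deg, old_aspect=16 / 9, new_aspect=2560 / 1080):
    """Return the horizontal FOV that keeps the same vertical FOV at a wider aspect ratio.

    Assumes hfov_deg is a horizontal FOV picked for old_aspect (an assumption about the slider).
    """
    half_h = math.radians(hfov_deg) / 2
    # Recover the tangent of the vertical half-angle from the horizontal one.
    tan_half_v = math.tan(half_h) / old_aspect
    # Re-expand horizontally for the new aspect ratio.
    new_half_h = math.atan(tan_half_v * new_aspect)
    return math.degrees(new_half_h) * 2

print(round(widen_hfov(90), 1))  # 90 degrees at 16:9 works out to about 106.3 at 2560x1080
```

Treat the result as a first guess and adjust from there, since the slider tops out at 120 degrees and personal preference matters more than the math.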

Vermintide 2 settings overview

As our partner for these detailed performance analyses, MSI provided the hardware we needed to test Vermintide 2 on a bunch of different AMD and Nvidia GPUs; see below for the full list. Our test equipment and methodology are detailed in our Performance Analysis 101 article. Thanks, MSI!

All the options for tweaking your graphics settings appear in the Video menu, including resolution, V-sync, FOV, and even a framerate cap. The framerate cap can sometimes be useful if you're sensitive to microstutter—set it to 60 fps as an example and the game won't render more than that many frames per second, which can create a smoother overall experience.
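To make the idea behind a framerate cap concrete, here's a minimal sketch of a generic frame limiter: each frame gets a fixed time budget (about 16.7 ms at 60 fps), and any time left over is spent waiting. This is not how Vermintide 2 implements its cap; `run_capped` and the do-nothing renderer are hypothetical stand-ins for illustration.

```python
import time

def run_capped(render_frame, cap_fps=60, frames=5):
    """Call render_frame repeatedly, waiting so no more than cap_fps frames start per second."""
    budget = 1.0 / cap_fps  # seconds available per frame (about 16.7 ms at 60 fps)
    durations = []
    for _ in range(frames):
        start = time.perf_counter()
        render_frame()
        spare = budget - (time.perf_counter() - start)
        if spare > 0:
            time.sleep(spare)  # the frame finished early, so wait out the rest of the budget
        durations.append(time.perf_counter() - start)
    return durations

# A stand-in "renderer" that finishes almost instantly still gets held to roughly 16.7 ms per frame.
times = run_capped(lambda: None, cap_fps=60, frames=5)
print([round(t * 1000, 1) for t in times])
```

The even spacing between frames is what can make a capped game feel smoother than one swinging between, say, 70 and 110 fps.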

There are five graphics presets (plus 'custom'), ranging from lowest to extreme. With a GTX 1060 6GB at 1080p, the presets yield the following results (with the performance increase relative to the extreme preset):

- Extreme: 73.2 fps

- High: 87.3 fps (19% faster)

- Medium: 138.0 fps (88% faster)

- Low: 217.8 fps (198% faster)

- Lowest: 267.7 fps (266% faster)

That's a pretty massive range, though image quality can also vary quite a bit—particularly on the lowest preset where virtually all the extra effects are disabled. Note also that those potential increases largely assume that you're not hitting CPU bottlenecks, which likely isn't the case for many PCs.
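If you want to run the same comparison on your own results, the percentages are just each preset's average fps divided by the extreme preset's fps. The short sketch below uses the rounded fps figures from the list above, so its output can differ from the quoted percentages by a point or two; the variable and function names are my own for illustration.

```python
# Average fps per preset for the GTX 1060 6GB at 1080p, copied from the list above.
preset_fps = {
    "Extreme": 73.2,
    "High": 87.3,
    "Medium": 138.0,
    "Low": 217.8,
    "Lowest": 267.7,
}

def percent_faster(fps, baseline):
    """Percentage increase of fps over baseline."""
    return (fps / baseline - 1) * 100

baseline = preset_fps["Extreme"]
gains = {name: percent_faster(fps, baseline) for name, fps in preset_fps.items()}
for name, gain in gains.items():
    print(f"{name}: {preset_fps[name]:.1f} fps ({gain:.1f}% faster than Extreme)")
```

Swap in your own averages to see how much each preset buys you on your hardware.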

Vermintide 2 has a wealth of graphical options you can configure, perhaps even too many. There are more than 25 individual settings, many of which only have a very small impact on visuals and performance. Of course, some of the settings will have a larger impact on performance only in certain areas—particle effects for example. But for now, I've done my best to estimate the impact on performance using an easy-to-reach area at the start of the Empire in Flames level.

To help you prioritize where to tune your settings, rather than listing these in order, I'm going to group the settings into three categories: those that have a significant impact on framerates (10 percent or more), those that have a modest effect (around five percent), and those that have little effect (less than three percent).

Settings that cause a substantial change to framerates

Sun Shadows: Enables/disables and sets the quality of sun shadows. This is one of the more immediately visible changes and performance can drop by 10 percent or more compared to off, though I recommend at least a setting of low so the world doesn't look bland.

Volumetric Fog Quality: Allows fog to reflect light to appear more realistic. While that sounds mostly benign, this ended up being one of the biggest influencers of framerate, but the visuals don't appear to change much. You can turn this to off or low for up to a 15 percent boost to fps.

Anti-Aliasing: Sets the AA mode to either off, FXAA, or TAA (Temporal AA). TAA removes the most jaggies but can make the final image look soft. FXAA is only 'approximate' and improves performance by around five percent over TAA. Turning AA completely off can boost fps by about 15 percent.

SSAO: Sets screen space ambient occlusion quality, which makes finer shadow details look better. Turning this down/off can improve performance up to 15 percent.

Settings that cause a modest change to framerates

Bloom: Enables/disables the bloom (ie, overexposure) effect caused by lights. Turning this off can improve performance by 5-7 percent.

Screen Space Reflections: Turns on/off screen space reflections. This can make reflections on some surfaces, including water, look a bit better, but I rarely notice the difference when playing the game. Turning this off can improve performance by 5-8 percent, and I recommend doing so.

Settings that cause little to no change in framerate

Max Stacking Frames: Controls the number of frames that the game can render ahead, which can smooth out framerates at the cost of increased latency. All my testing was done with the Auto setting, and this didn't seem to affect performance at all.

Character Texture Quality: Sets the size of the textures used for characters, with negligible impact on performance unless your GPU lacks VRAM. Note that the Extreme setting will only make a visible difference at resolutions above 1080p. (Game restart required to take effect.)

Environment Texture Quality: Sets texture resolution for the environment. Again, this doesn't affect performance much unless you run out of VRAM, and the Extreme setting is only intended for higher than 1080p resolutions. (Game restart required to take effect.)

Particle Quality: Sets the quality of particle rendering. The menu says this has a 'high' effect on GPU performance, but in testing I didn't see much difference.

Transparency Resolution: Sets the resolution for transparency effects (eg, tree branches and leaves). Has a slight 2-3 percent influence on performance.

Scatter Density: Sets the density of decoration geometry (grass, rubble, etc.), and didn't appear to affect performance much if at all.

Blood Decal Amount: Sets the maximum number of blood decals, from 0 to 500. Doesn't appear to cause a significant change in performance, though in horde battles it might be more important.

Local Light Shadow Quality: Enables/disables and sets the quality of shadow casting local lights. While the name sounds interesting, it didn't affect performance much in testing.

Max Shadow Casting Lights: Sets the maximum number of active shadow casting lights. This can be set to anything from 1 to 10, though it didn't appear to affect performance much. (It might have a greater effect during battles.)

Ambient Light Quality: Controls the quality of ambient light in near proximity to the player. Negligible impact on framerates.

High Quality Fur: What the name says—causes fur (eg, on Skaven) to look more realistic. It's difficult to measure the impact of this as battles are pretty frenetic, so I'd turn this off if you're looking to improve performance.

Sharpness Filter: A sharpening filter that basically works as the opposite of AA, attempting to make scenes less blurry. Negligible impact on performance, and I'd leave it on if you're using TAA.

Skin Shading: Enables/disables advanced skin shading. Turning this off improves framerates by 1-2 percent.

Depth of Field: Enables/disables the depth of field effect (eg, distant objects that aren't the focus of attention can look blurry, an effect that's applied when you aim certain ranged weapons). Turning this off improves framerates by up to 3-4 percent.

Light Shafts: Enables/disables light shafts from the sun, at least in theory. In practice this seems to do little, and the volumetric fog setting controls the presence of light shafts (aka God rays). Turning this off can improve performance by up to three percent.

Lens Flare: Enables/disables lens flare from the sun (eg, when you look at the sun). Negligible impact on performance.

Color and Lens Distortion: Enables/disables chromatic aberration and lens distortion. Negligible impact on performance.

Motion Blur: Enables/disables motion blur. Negligible impact on performance. Turn it off.

Physics Debris: Enables/disables physically simulated dynamic debris. This didn't appear to affect performance in my testing, but in battles it could be a larger influencer of framerates. (Game restart required to take effect.)

Animation LOD Distance: Controls the amount of detail in the animation—0 for no face and finger animation, 1 for full face/finger animation up to 8 meters, in steps of 0.1. Negligible impact on performance.

That's a ton of settings, but as you can see, only about six of them have more than a minor impact on performance. If you combine all the low impact items and set them to max, it does cause a modest drop in performance of around 15 percent, but most GPUs should be okay with leaving these alone—use the medium or high preset and then focus your tuning on the top six settings if you're looking to improve performance.
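As a quick way to see where the tuning effort should go, the sketch below sorts the settings that had a measurable effect into the three groups used above. The gains are the approximate upper-bound figures quoted in the descriptions, and the cutoffs (10 percent and 5 percent) are assumed boundaries chosen for this example, since the groupings in the text leave some gaps between them.

```python
# Approximate upper-bound fps gains (percent) quoted above for turning each setting down or off.
quoted_gain = {
    "Sun Shadows": 10,
    "Volumetric Fog Quality": 15,
    "Anti-Aliasing": 15,
    "SSAO": 15,
    "Bloom": 7,
    "Screen Space Reflections": 8,
    "Depth of Field": 4,
    "Transparency Resolution": 3,
    "Light Shafts": 3,
    "Skin Shading": 2,
}

def bucket(gain_percent):
    """Assumed cutoffs: 10+ is substantial, 5 to under 10 is modest, anything less is minor."""
    if gain_percent >= 10:
        return "substantial"
    if gain_percent >= 5:
        return "modest"
    return "minor"

# Sort so the settings worth tuning first come out on top.
ranked = sorted(quoted_gain.items(), key=lambda item: item[1], reverse=True)
for name, gain in ranked:
    print(f"{bucket(gain):<12}{name} (up to ~{gain}%)")

top_six = [name for name, gain in ranked if bucket(gain) != "minor"]
```

Keep in mind these gains don't simply add up, and several settings may matter more in horde battles than in the test scene.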

MSI provided all the hardware for this testing, consisting mostly of its Gaming/Gaming X graphics cards. These cards are designed to be fast but quiet, though the RX Vega cards are reference models and the RX 560 is an Aero model. I gave both the Vega cards and the 560 a slight overclock to level the playing field, so all the cards represent factory OC models.

My main test system uses MSI's Z370 Gaming Pro Carbon AC with a Core i7-8700K as the primary processor, and 16GB of DDR4-3200 CL14 memory from G.Skill. I also tested performance with Ryzen processors on MSI's X370 Gaming Pro Carbon, also with DDR4-3200 CL14 RAM. The game is run from a Samsung 850 Pro 2TB SATA SSD for these tests, except on the laptops where I've used their HDD storage.

For these tests, I’m using the Nvidia 391.01 and AMD 18.3.1 drivers. Both sets of drivers are Game Ready for Vermintide 2, though in limited testing it doesn’t appear that performance on most parts is substantially changed from other recent drivers (eg, AMD 17.2.3 performance looks the same in my testing).

Something else to mention is that Vermintide 2 supports DirectX 11 and DirectX 12, but after checking performance with both APIs on several AMD and Nvidia cards, I found that DX11 was universally faster (by around 5-10 percent). That may change in the future, as the developers spend more time optimizing the DX12 code, but the results shown here use DX11.

At lower settings and resolutions, just about any modern graphics card will suffice, and while Nvidia GPUs have a slight advantage for now over their AMD counterparts, it's not enough to be a serious concern. Your CPU on the other hand could be a problem, particularly if it's only a dual-core model or one of AMD's older Piledriver (FX-series) parts. You can still play the game, but you'll encounter more frequent stuttering, particularly in large battles.

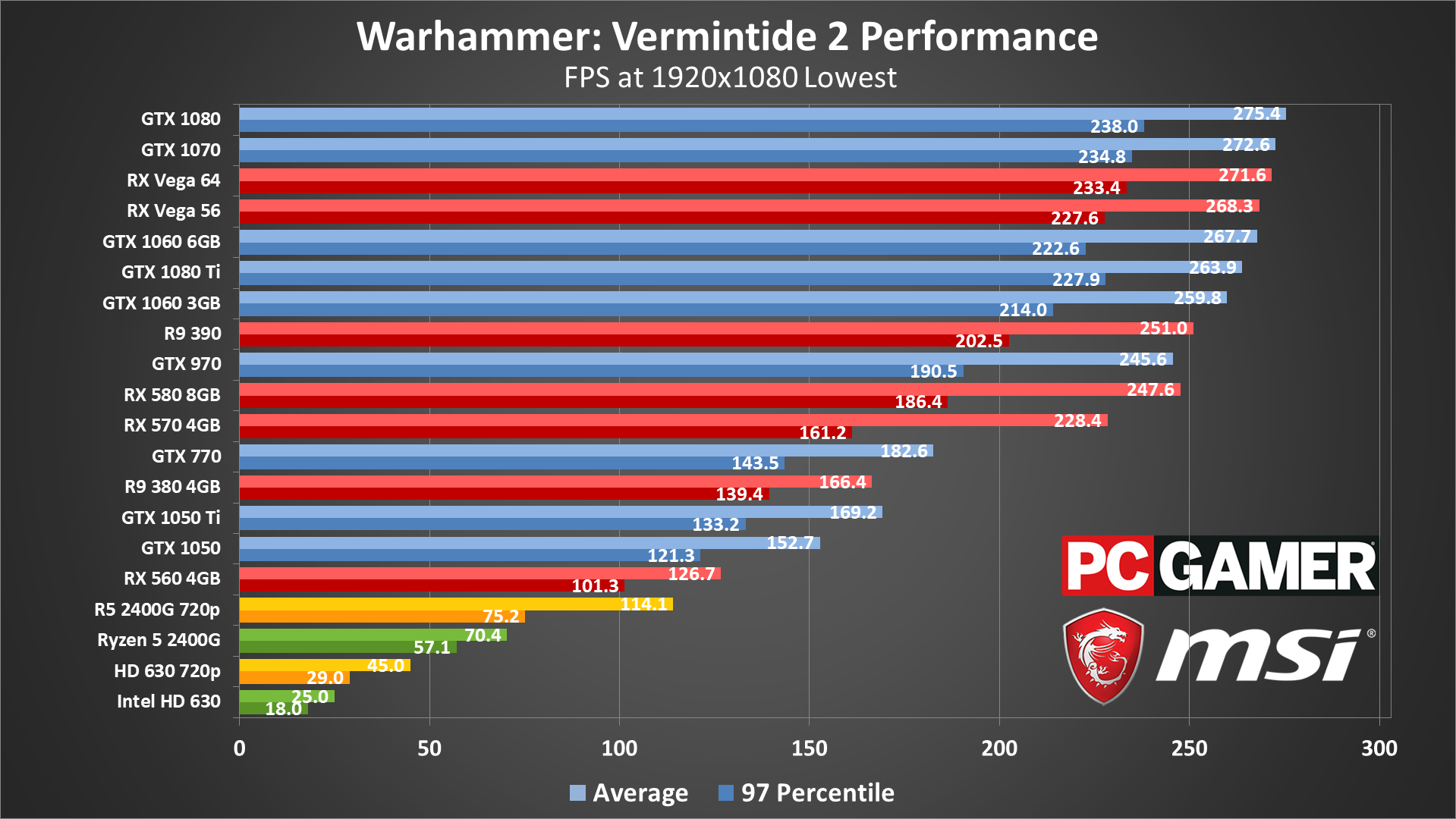

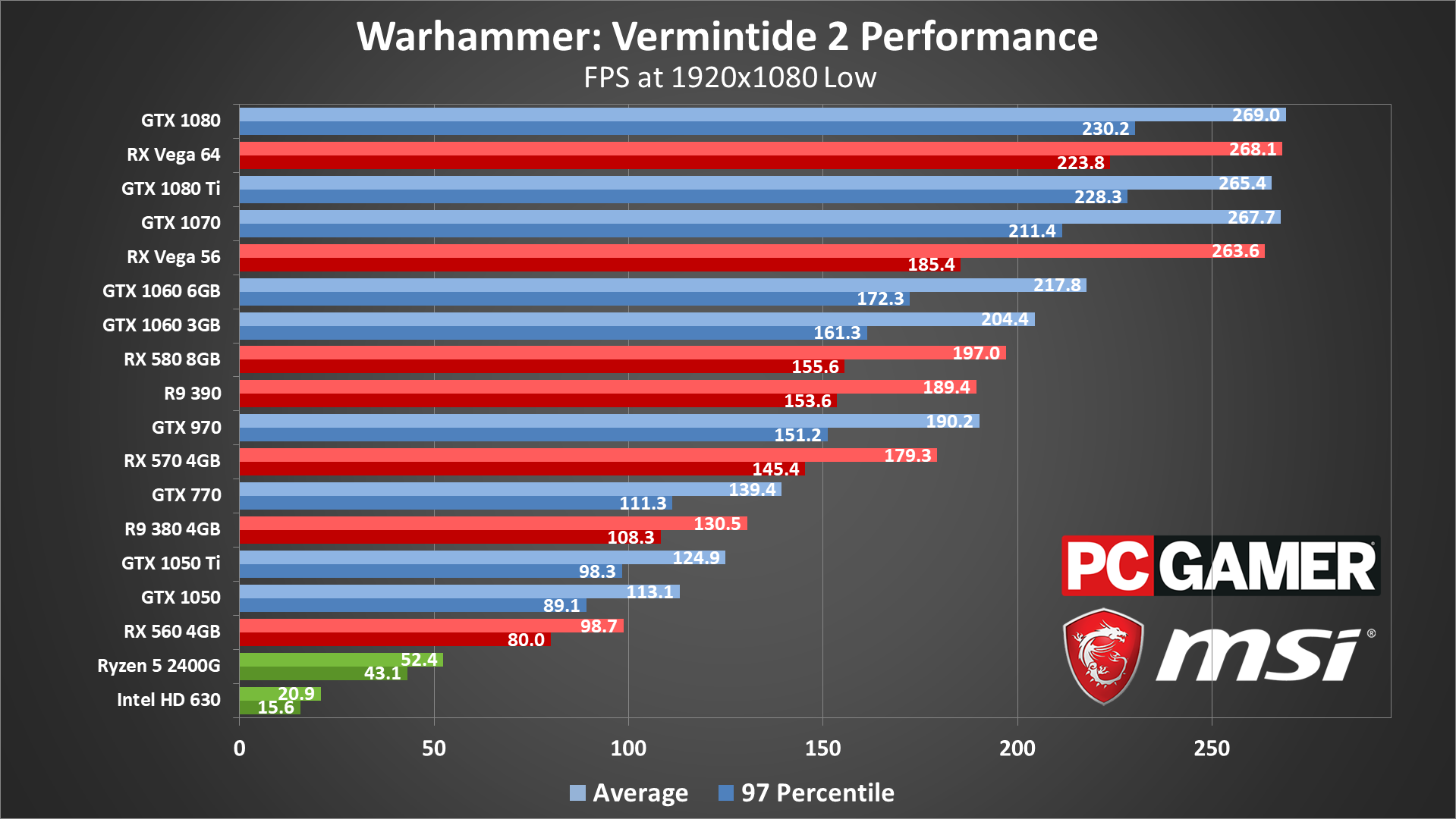

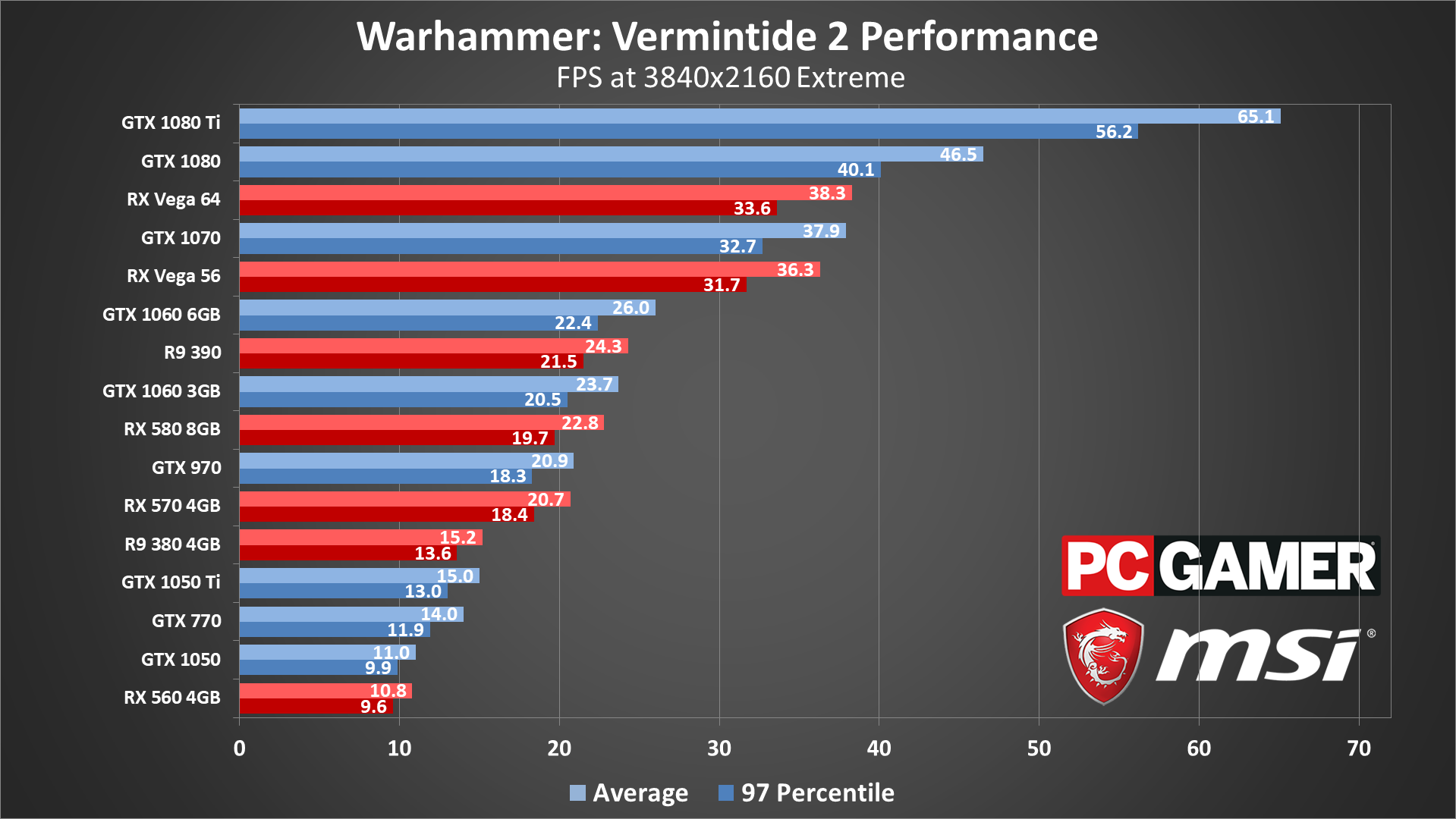

Vermintide 2 graphics card benchmarks

The difference between the lowest preset and the low preset is pretty substantial, with low dropping performance by 25-30 percent on many of the budget and midrange offerings. However, the change in image quality is also significant—the lack of most shadows on the lowest preset makes the game look very flat and dated. Since this isn't a competitive shooter where framerate reigns supreme—half the fun is the visual carnage—I'd suggest avoiding the lowest preset if possible.

The faster dedicated graphics cards—basically GTX 1070 and RX Vega 56 and above—all end up primarily CPU limited at the low and lowest presets. I'll discuss the impact of your CPU on performance more below.

If you're running on integrated graphics, AMD's Ryzen 5 2400G manages 30-60 fps throughout the game at 1080p lowest, but Intel's HD 630 drops well below 30 fps, especially in horde attacks. 720p lowest can double your performance, which makes the HD 630 mostly playable in a pinch.

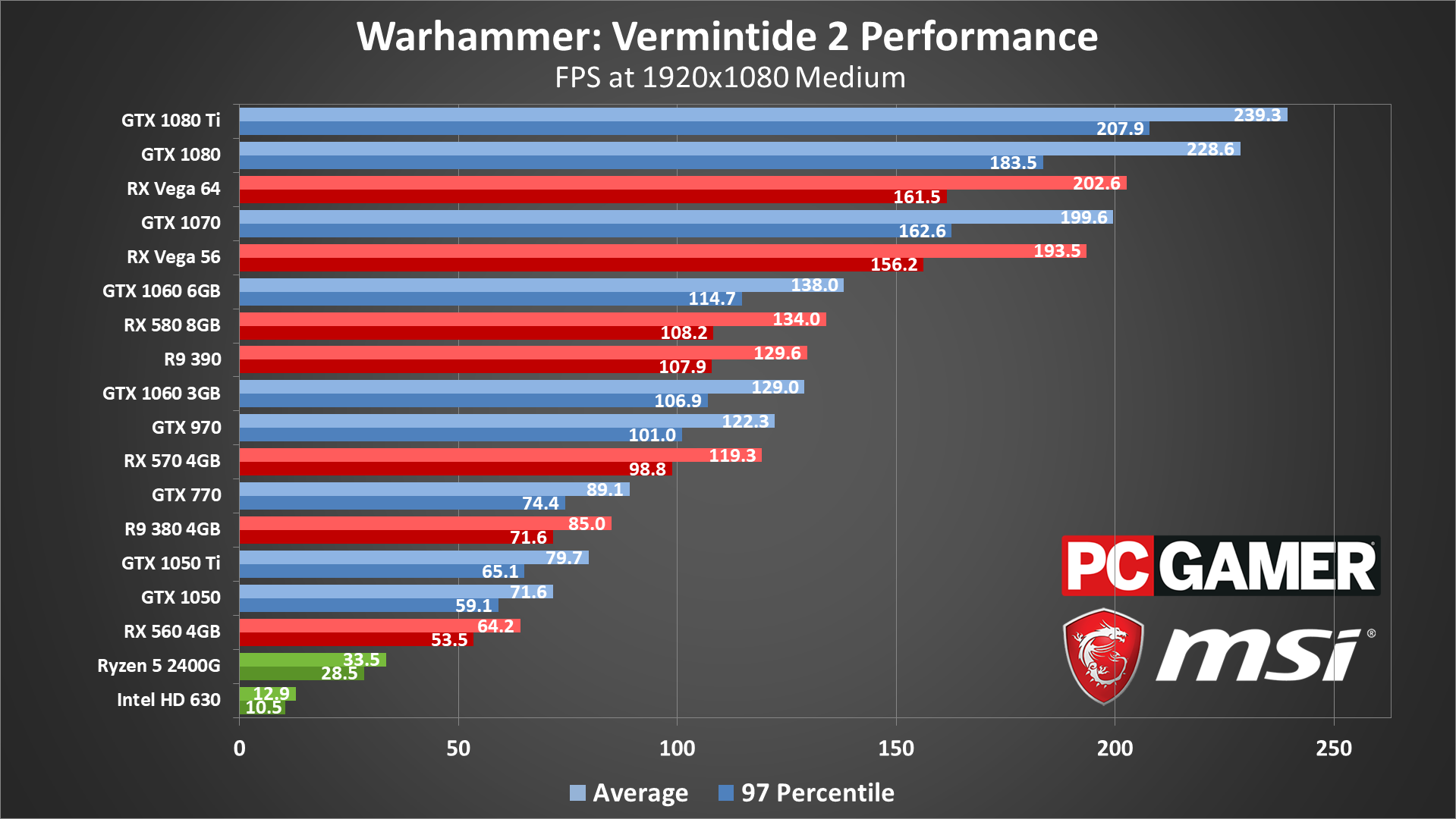

Moving from the low to medium preset at 1080p drops performance 25-35 percent on most GPUs—only the 1080 Ti and 1080 don't take quite that big of a hit. All the mainstream GPUs, even including older cards like a GTX 770, still average more than 60 fps, but that's mostly by virtue of the test scene.

Limited playing on some of the cards shows that horde attacks can drop framerates to about half of what I show in these charts—and that's only with a potent CPU. Realistically, you'll want at least a 4-core/8-thread CPU and a GTX 970 or similar GPU to stay above 60 fps at 1080p medium, though in less demanding scenes you'll see much higher framerates.
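That "about half" rule of thumb is easy to turn into a rough headroom check. The sketch below is only an estimate under that assumption: the 0.5 factor comes from the limited play testing described above, it will vary with your CPU, and the function names are hypothetical.

```python
def horde_fps(chart_fps, horde_factor=0.5):
    """Rough worst-case fps in a horde, assuming framerates fall to about half the charted average."""
    return chart_fps * horde_factor

def chart_fps_needed(target_fps, horde_factor=0.5):
    """Charted average needed so the horde estimate still meets target_fps."""
    return target_fps / horde_factor

print(chart_fps_needed(60))  # 120.0: charted average needed to hold 60 fps in a horde
print(horde_fps(138.0))      # 69.0: GTX 1060 6GB at 1080p medium, using the preset figure from earlier
```

In other words, a card needs to average roughly double your target in the charts before you can expect it to hold that target when the Skaven show up.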

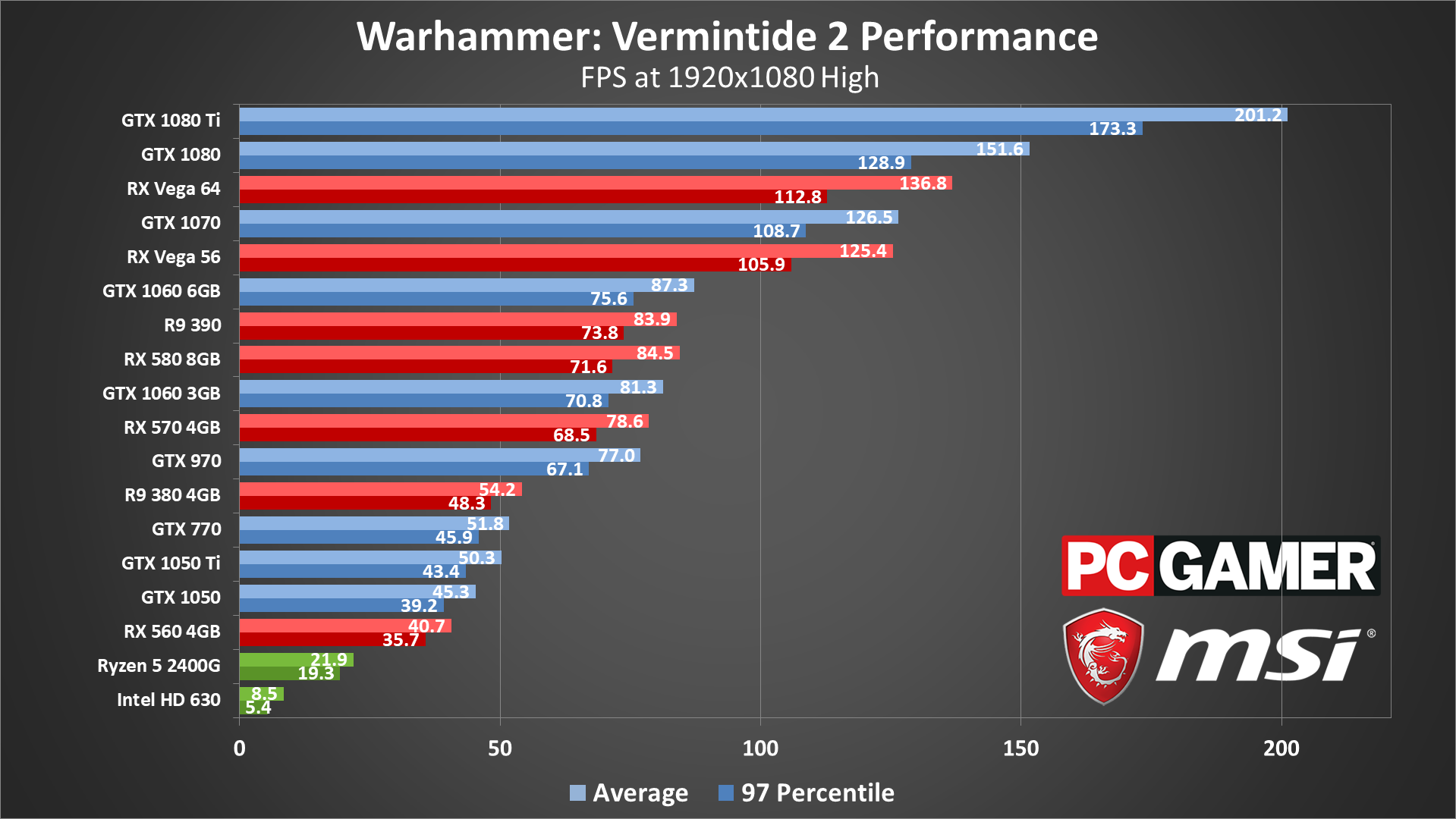

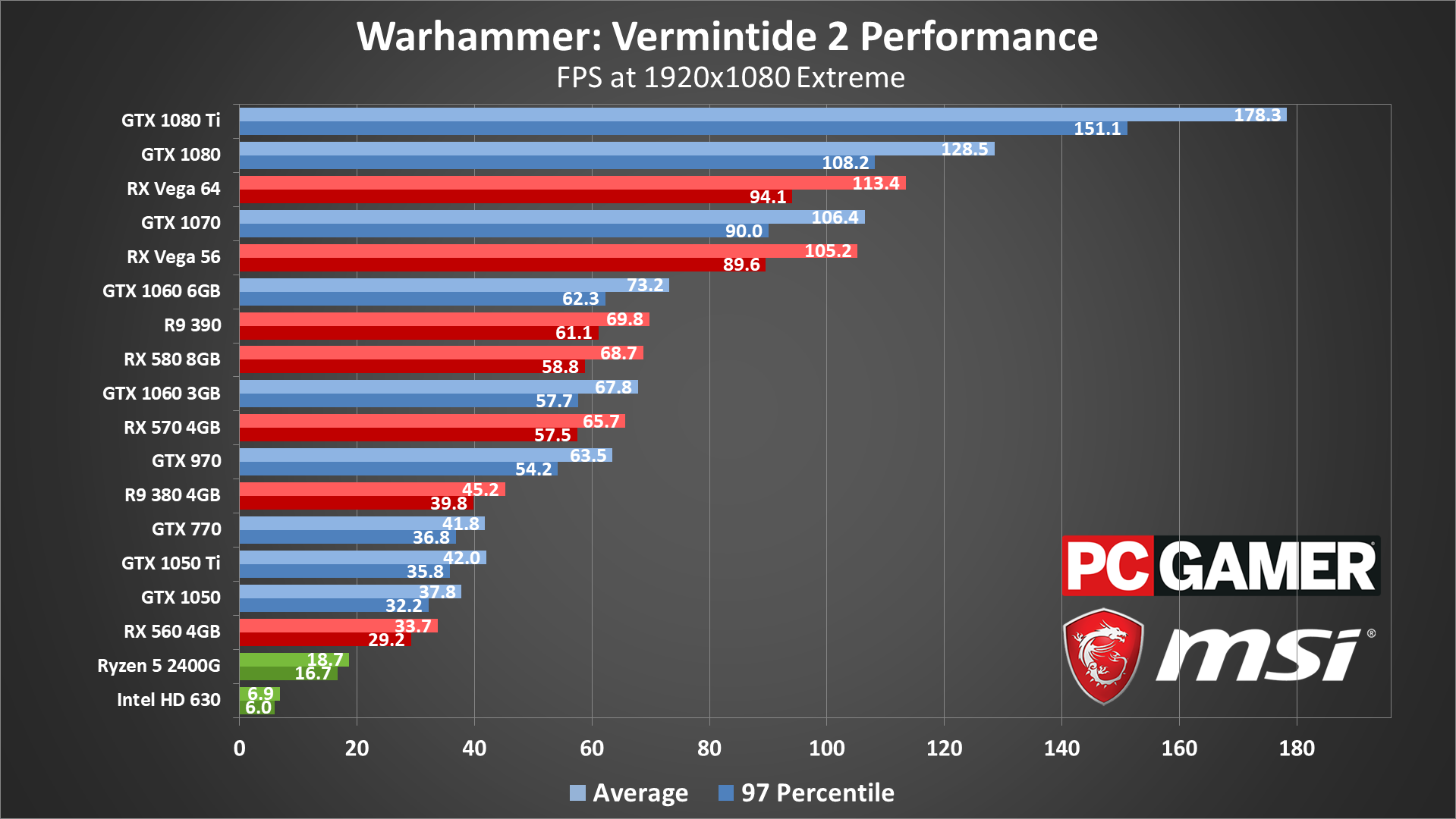

Going from the medium to high presets drops performance another 35 percent or so on most GPUs (a bit less on the 1080/1080 Ti), and moving to the extreme preset knocks off another 10-15 percent. However, there's almost no difference in image quality between the high and extreme presets, particularly at 1080p. Unless you're after bragging rights (or you want to stress test hardware like I'm doing), I'd suggest skipping the extreme preset.

The same rules as before apply: the large mobs of enemies that periodically swarm the party can drop framerates to about half of what I'm showing in the charts, though usually only for 30 seconds or so at a time. If you're looking to maintain a smooth 60 fps or more, you'll want an RX Vega 56 or GTX 1070 or better graphics card. (For reference, the GTX 980 Ti performs about the same as the 1070 in this game.)

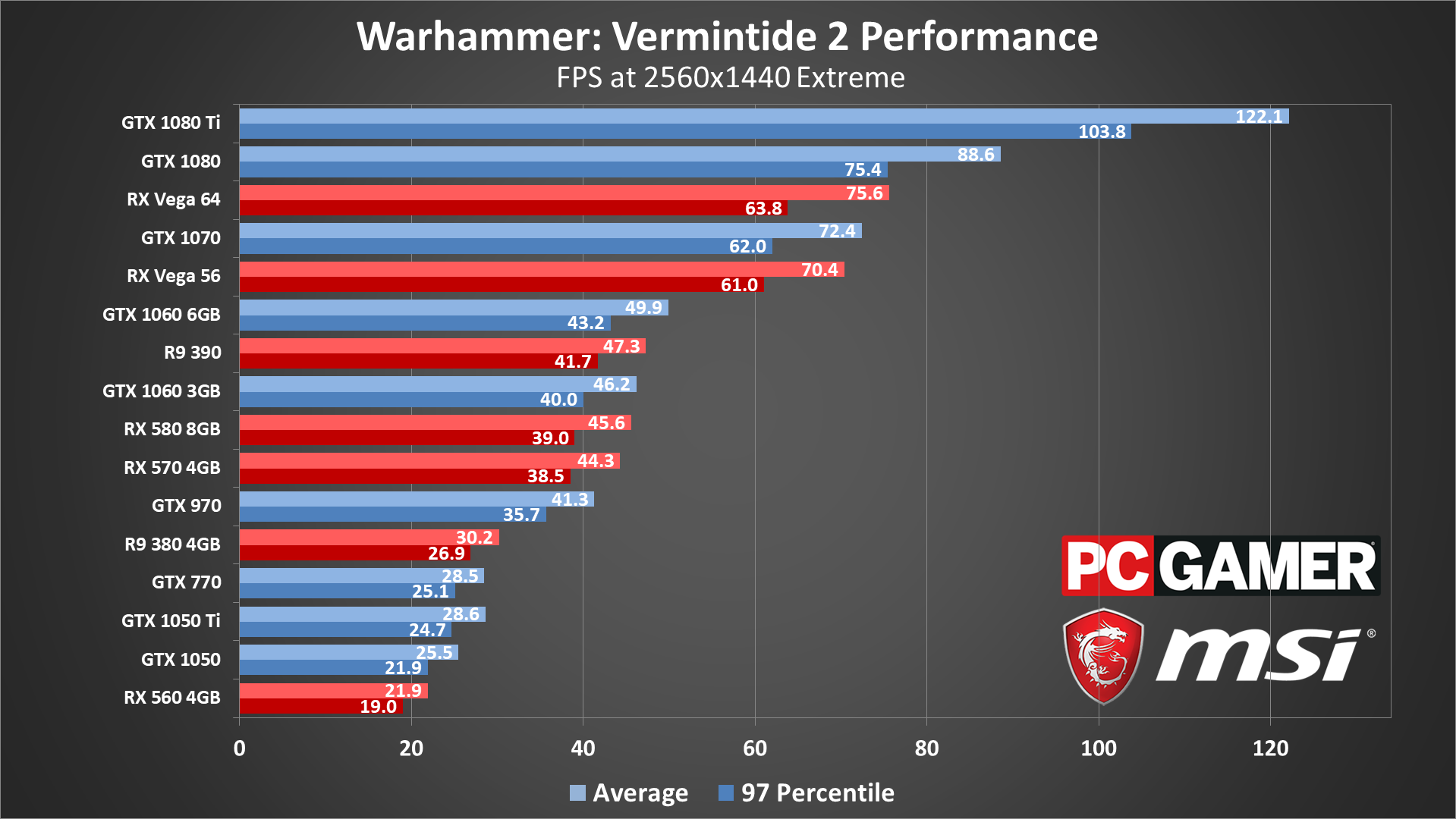

You can improve performance by 20 percent just by using the high preset instead of extreme, and at 1440p most GPUs will need the help—and maybe drop a few other settings down to medium quality for good measure. With those tweaks, the Vega and 1070/980 Ti and above mostly stay above 60 fps, with dips into the 40-50 fps range during swarm attacks. The GTX 1080 Ti is the only card that really has a chance at running extreme quality at 1440p without the occasional dip below 60, and that's only if your CPU is up to the task.

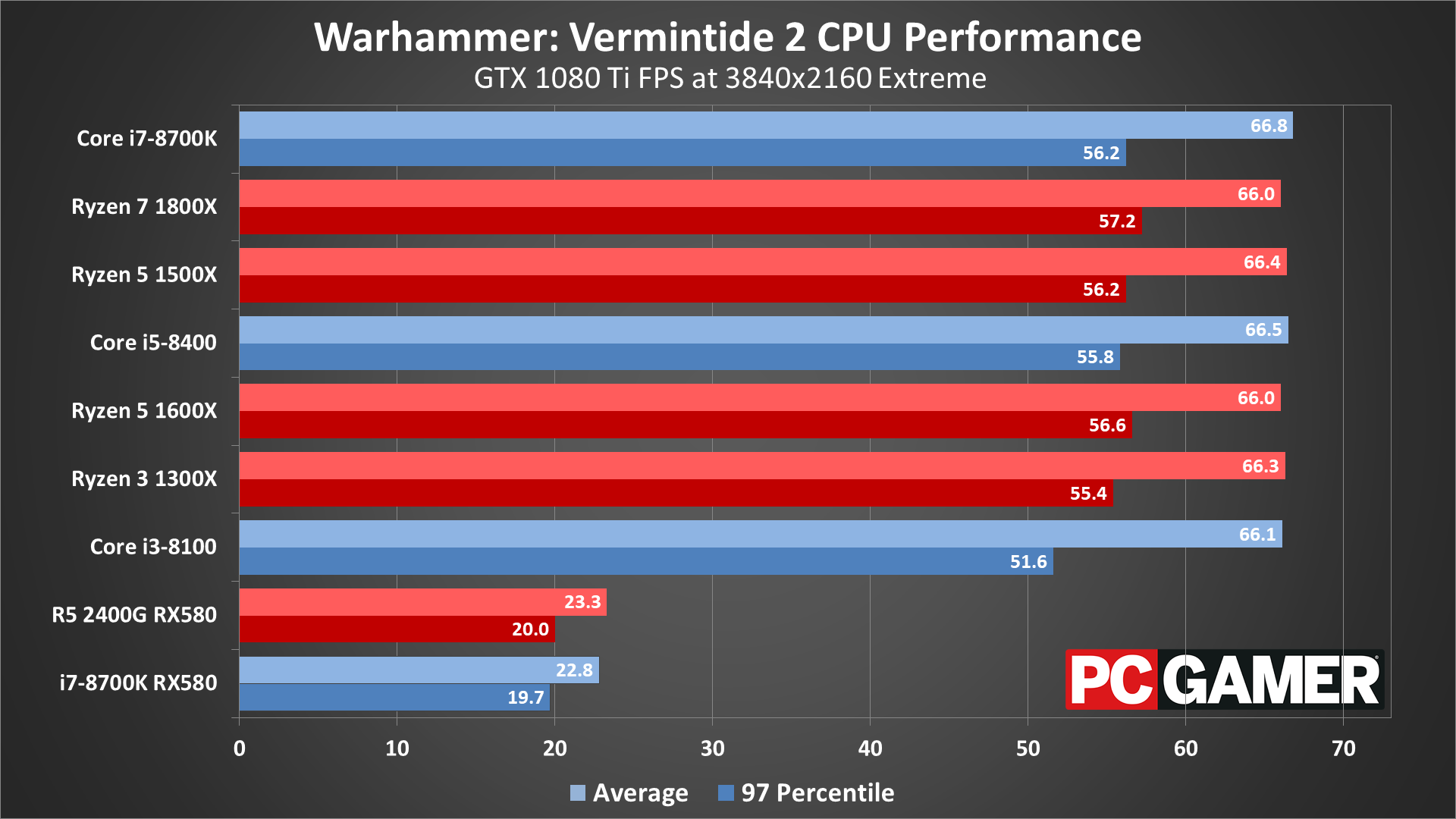

4k gaming is mostly about bragging rights, just like the extreme setting. Combine the two in Vermintide 2 and few cards will average anything close to 60 fps—even the 1080 Ti frequently drops below that mark in the midst of a battle. But as a GPU stress test, this shows the Nvidia cards leading by a small amount over their relative peers on the AMD side—so the 1070 just edges past the Vega 56, while the 1060 6GB holds a more significant lead over the RX 580.

Vermintide 2 CPU performance

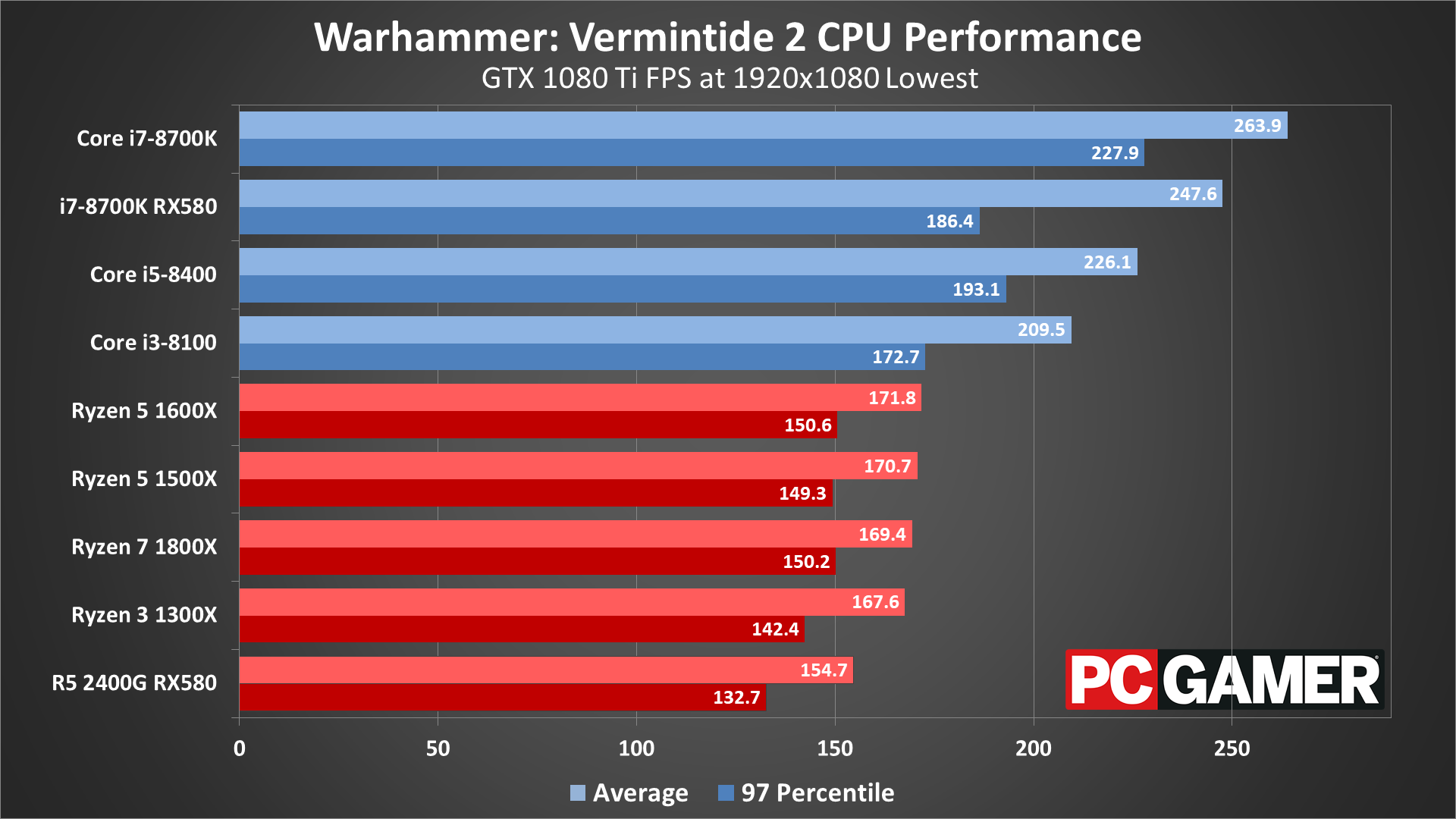

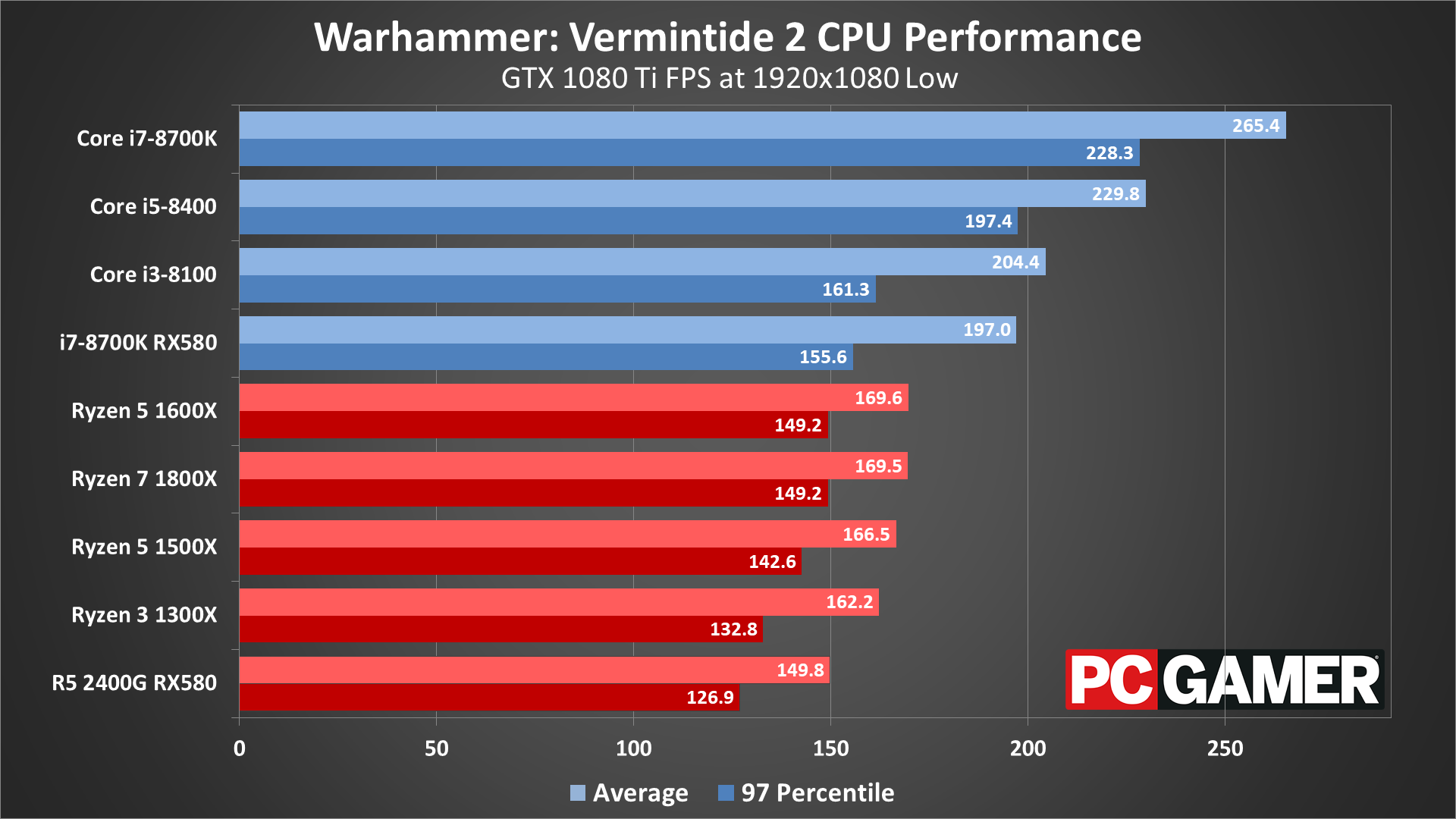

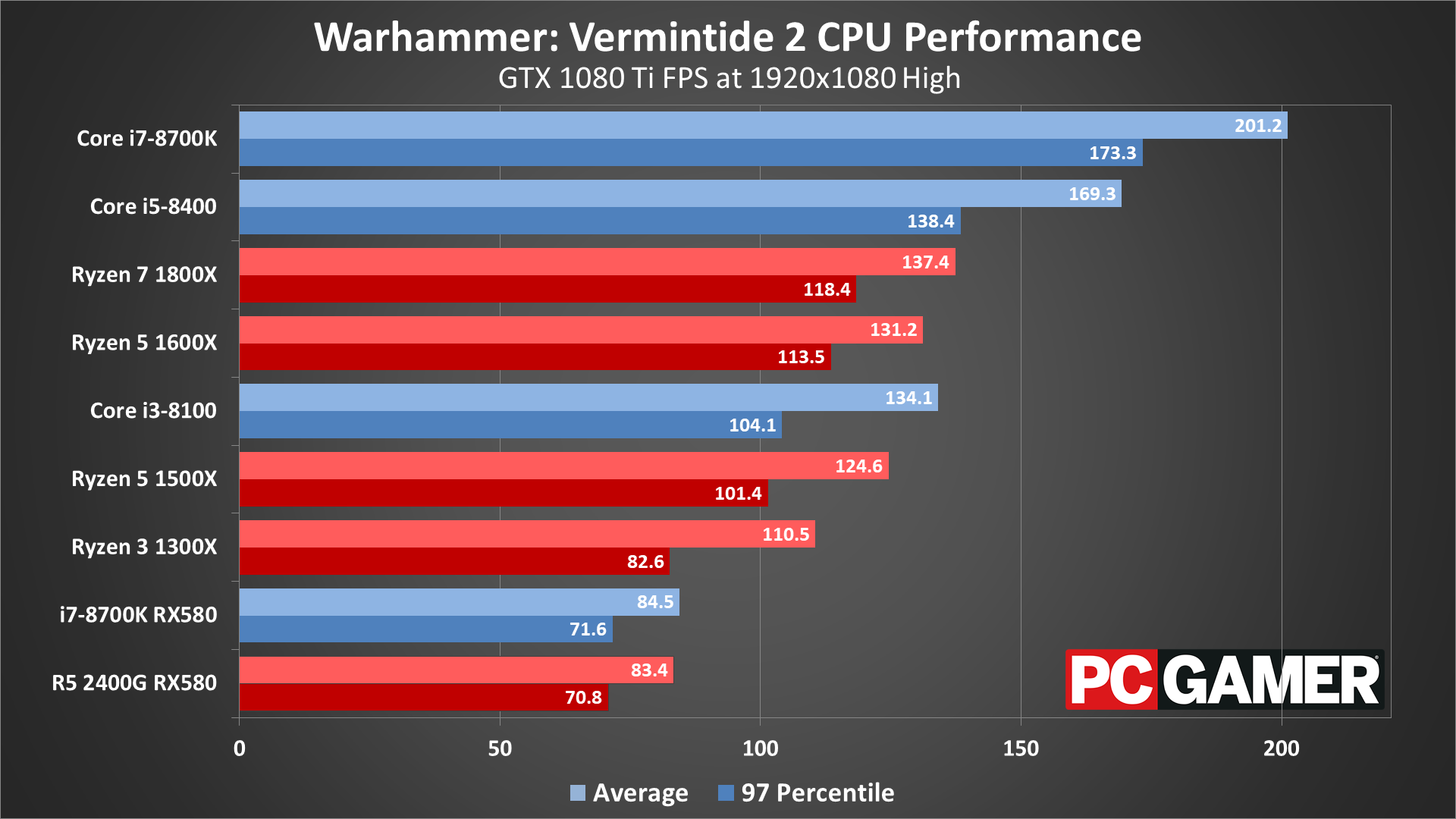

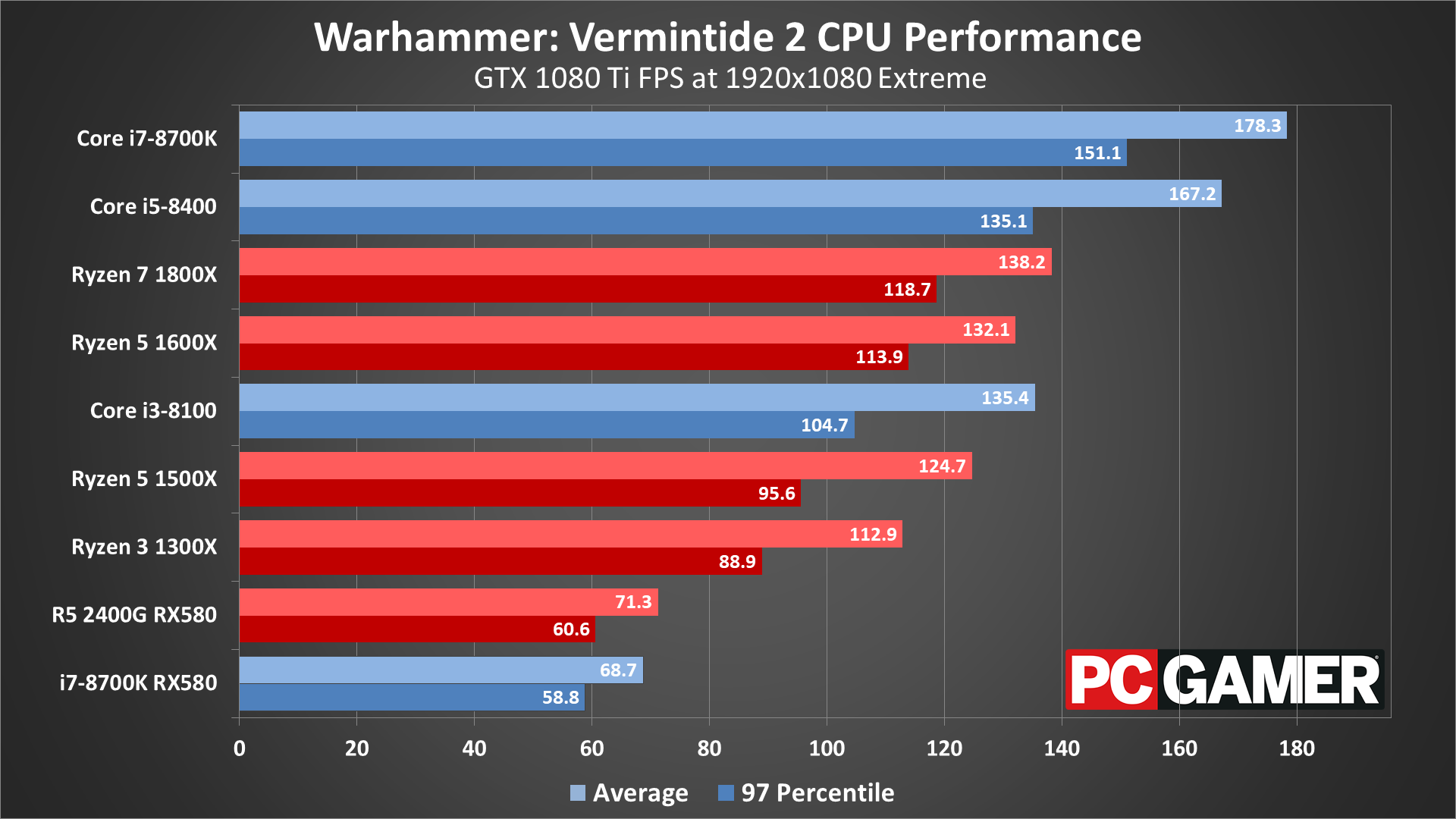

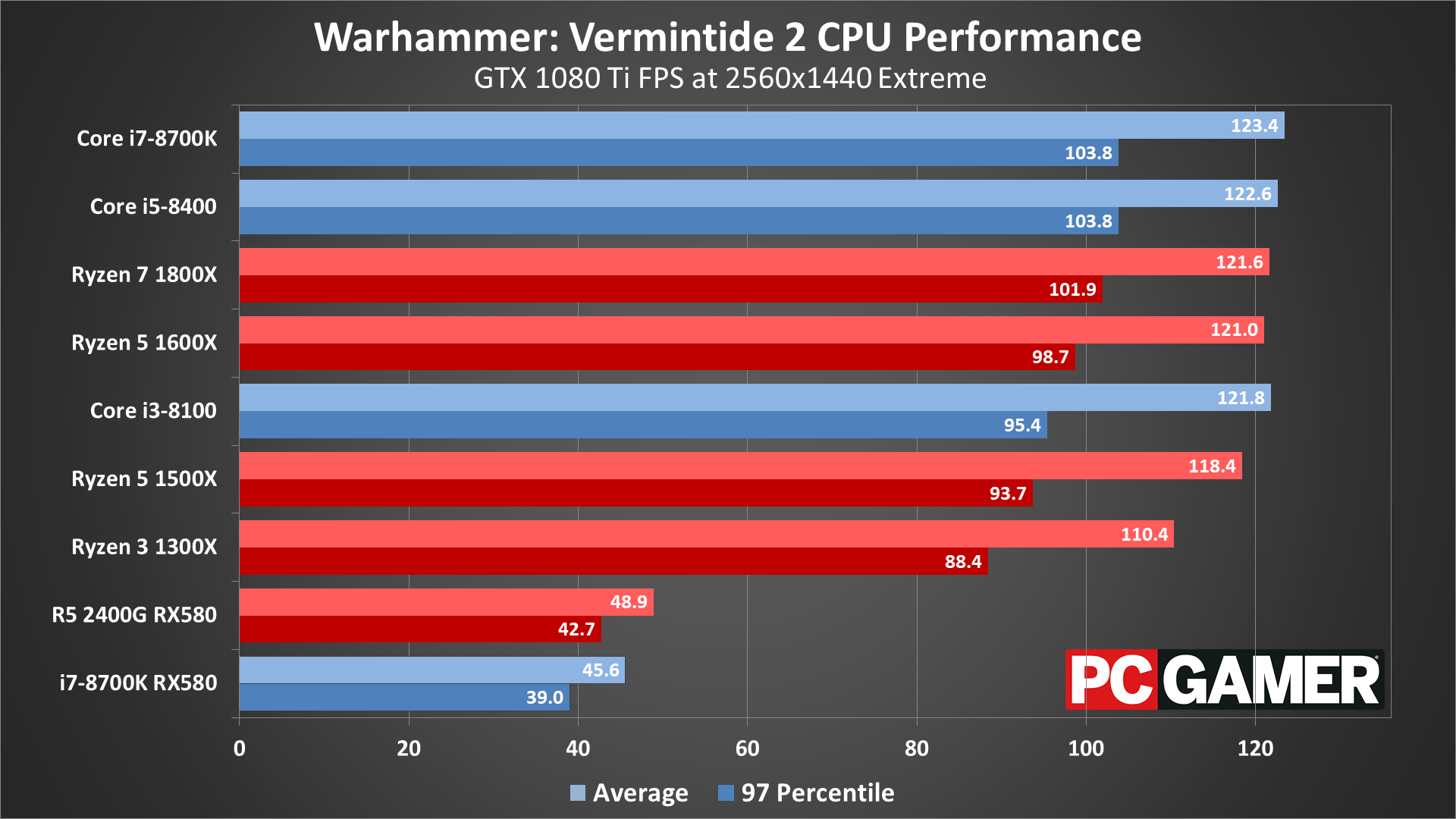

For CPU testing, I've used the GTX 1080 Ti on all the processors. This is to try and show the maximum difference in performance you're likely to see from the various CPUs—running with a slower GPU will greatly reduce the performance gap. Unlike other games, however, Vermintide 2 can hit your CPU pretty hard, even in less demanding scenes. To illustrate this, I've also run the RX 580 8GB on the i7-8700K and on the R5 2400G (which is basically equal to the Ryzen 5 1500X).

At lower quality settings, CPU bottlenecks are clearly a concern, and Intel’s processors easily surpass AMD’s Ryzen parts. Even the RX 580 with an Intel 8700K ends up beating any Ryzen processor with a 1080 Ti. As the quality settings increase, however, the bottleneck starts to shift toward the GPU—though again, large battles tend to tax the CPU more, thanks to the additional AI calculations.

I did a bit more testing of CPUs, playing through the Empire in Flames mission and recording frametimes during intense horde battles. These are definitely not apples-to-apples figures, but using the 1080p medium preset running on a GTX 1080, what I found is that the i7-8700K (overclocked to 4.8GHz) can dip down to 70 fps at times, with battles averaging 100-120 fps. That's a pretty stark contrast to the 220 fps measured in the graphics benchmark!

With the Core i3-8100 and Core i5-8400, not surprisingly things get even worse. The i5-8400 averages around 100-120 fps as well, with dips into the low 60s, while the i3-8100 averages just 80-100 fps and dips into the mid-40s at times! On Ryzen systems, the best AMD can do is to roughly match the i3-8100, with sometimes higher average fps (on the 1800X and 1600X), but all of the Ryzen CPUs dip well below 60 fps at times.

Even at 1080p extreme, your CPU can have a significant influence on framerates, particularly if you're running a GTX 1070/Vega 56 or higher. The 4-core/4-thread i3-8100 and Ryzen 3 1300X struggle at extreme quality, showing that Vermintide 2 benefits from both higher CPU clockspeeds and additional cores and threads.

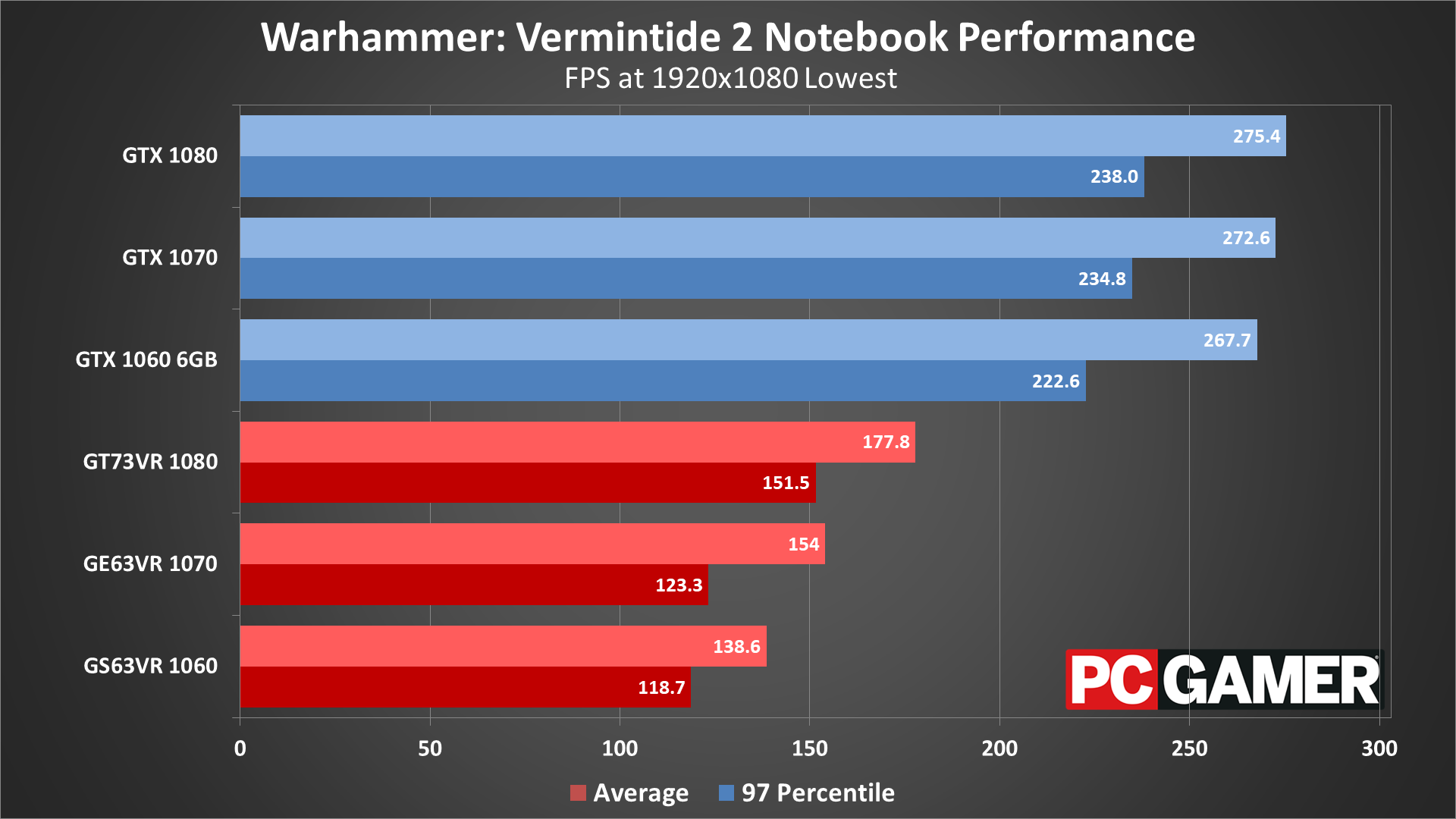

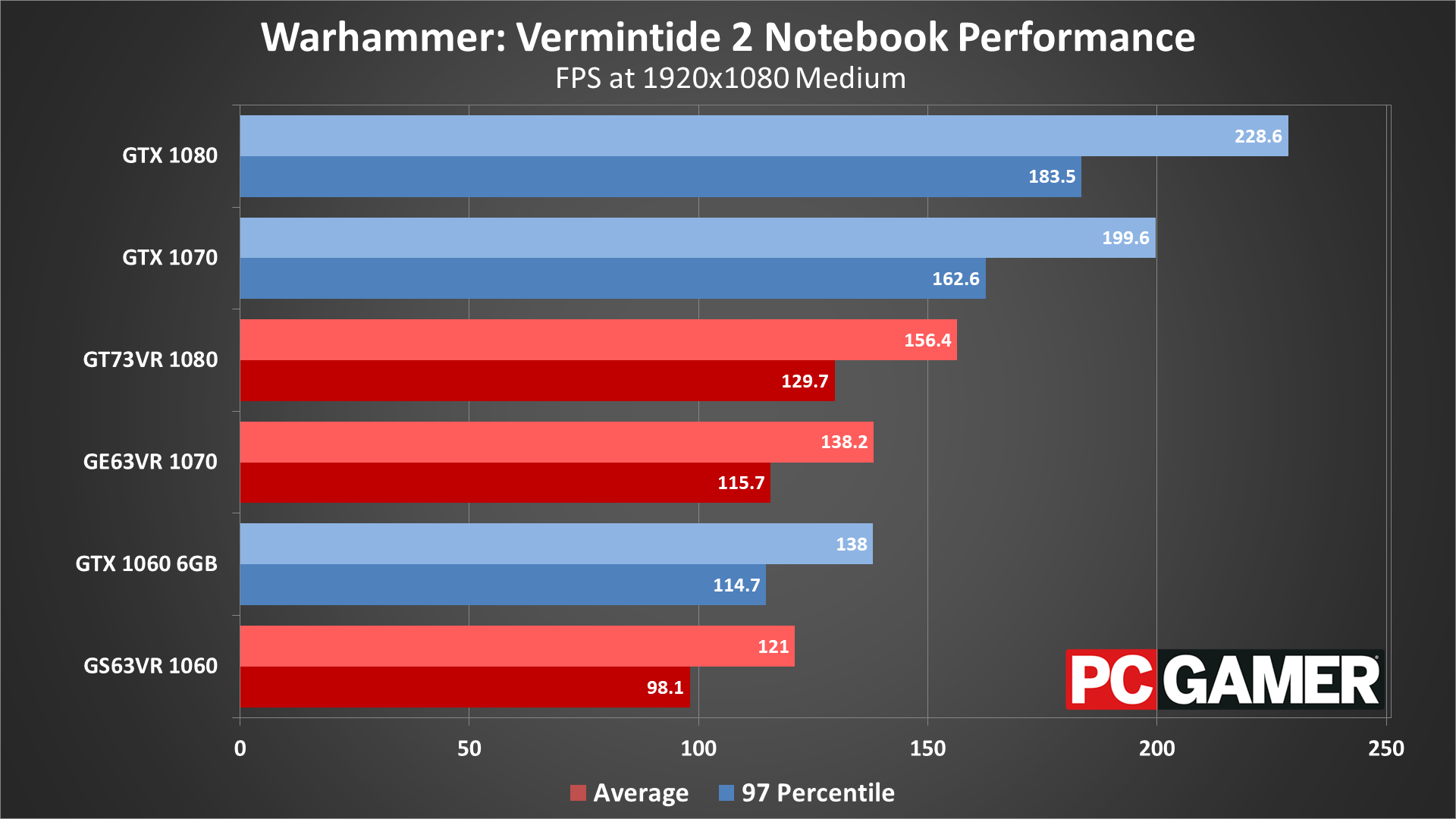

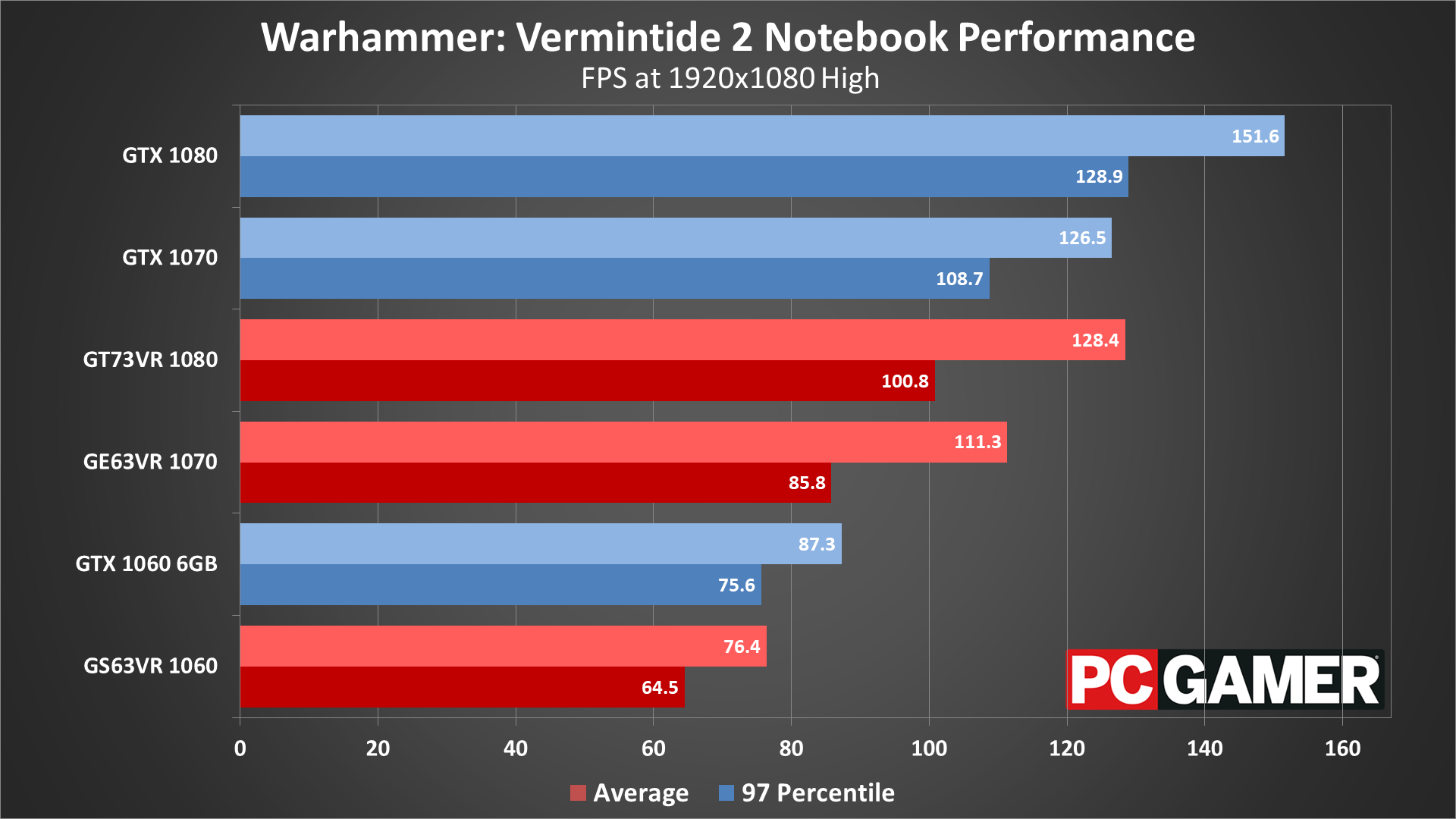

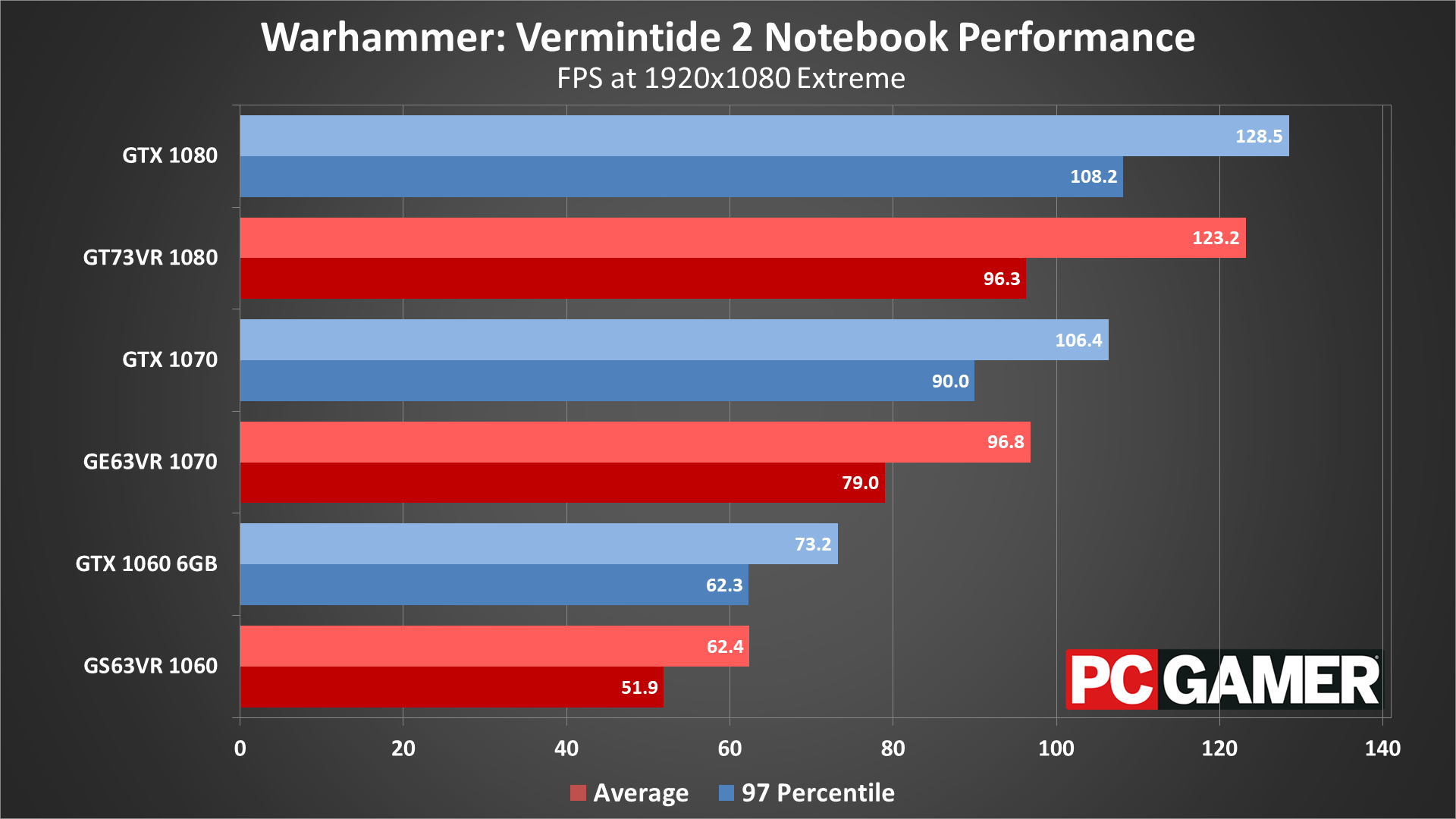

Vermintide 2 notebook performance

Given what I just said concerning CPU performance impacting framerates, moving from a 6-core/12-thread overclocked desktop CPU to a 4-core/8-thread mobile processor can put the brakes on the faster GPUs, particularly at lower quality settings. The desktop 1060 6GB is faster than all the tested notebooks at the low and lowest presets. Things start to return to 'normal' at 1080p medium, but it’s not until maximum quality that the GPUs start to fall more or less where I’d expect.

Keeping in mind that worst-case framerates can drop to about half of what I'm showing in these charts, the notebooks just barely manage to sustain 60 fps at 1080p medium. The GS63VR is hit particularly hard, since its thinner chassis and reduced cooling potential result in lower CPU clocks. At 1080p extreme, only the GT73VR consistently stays above 60 fps when a Skaven swarm shows up.

Desktop PC / motherboards

MSI Aegis Ti3 VR7RE SLI-014US

MSI Z370 Gaming Pro Carbon AC

MSI X299 Gaming Pro Carbon AC

MSI Z270 Gaming Pro Carbon

MSI X370 Gaming Pro Carbon

MSI B350 Tomahawk

The GPUs

MSI GTX 1080 Ti Gaming X 11G

MSI GTX 1080 Gaming X 8G

MSI GTX 1070 Ti Gaming 8G

MSI GTX 1070 Gaming X 8G

MSI GTX 1060 Gaming X 6G

MSI GTX 1060 Gaming X 3G

MSI GTX 1050 Ti Gaming X 4G

MSI GTX 1050 Gaming X 2G

MSI RX Vega 64 8G

MSI RX Vega 56 8G

MSI RX 580 Gaming X 8G

MSI RX 570 Gaming X 4G

MSI RX 560 4G Aero ITX

Gaming Notebooks

MSI GT73VR Titan Pro (GTX 1080)

MSI GE63VR Raider (GTX 1070)

MSI GS63VR Stealth Pro (GTX 1060)

If you'd like to compare performance to my results, watch the video included at the top of this article. Basically, I'm just running up the path at the start of the Empire in Flames mission, then turning around after about 15 seconds and running back to the starting point. There are no enemies present, which makes the benchmark easily repeatable and gives consistent results, and all the fog and volumetric lighting keep the sequence at least moderately demanding.

I collect frametimes using FRAPS or OCAT, and then calculate the average and 97th percentile minimums using Excel. The 97th percentile minimums are calculated by finding the 97th percentile frametime (the point where the frametime is higher than that of 97 percent of frames), then averaging all frames with a worse result. The real-time overlay graphs in the video are generated from the frametime data, using custom software that I've created.
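If you'd rather not use a spreadsheet, the same calculation can be sketched in a few lines of Python. This is an approximation of the method described above, not the actual spreadsheet: it assumes frametimes in milliseconds, averages the slowest three percent of frametimes (including the frame at the cutoff) before converting to fps, and the function name and sample data are made up for illustration.

```python
def summarize(frametimes_ms, percentile=97):
    """Return (average fps, percentile-minimum fps) from a list of frametimes in milliseconds."""
    n = len(frametimes_ms)
    if n == 0:
        raise ValueError("need at least one frametime")
    # Average fps is total frames divided by total elapsed time.
    avg_fps = n / (sum(frametimes_ms) / 1000.0)
    # Sort ascending so the slowest frames (longest frametimes) sit at the end.
    ordered = sorted(frametimes_ms)
    cutoff = min((n * percentile) // 100, n - 1)  # index where the slowest (100 - percentile)% begins
    worst = ordered[cutoff:]
    # Average the slow tail, then convert that frametime to fps.
    min_fps = 1000.0 / (sum(worst) / len(worst))
    return avg_fps, min_fps

# Made-up sample: 97 frames at 10 ms plus three 20 ms hitches.
sample = [10.0] * 97 + [20.0] * 3
avg, low = summarize(sample)
print(f"average: {avg:.1f} fps, 97th percentile minimum: {low:.1f} fps")
```

Averaging the slow tail rather than reporting the single worst frame keeps one random hitch from dominating the "minimum" figure.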

Thanks again to MSI for providing the hardware. These test results were collected March 8-12, 2018, using the latest version of the game and the graphics drivers available at the start of testing (Nvidia 391.01 and AMD 18.3.1).

Vermintide 2 is interesting in that the AI director and large-scale melee combat make your CPU nearly as important as your graphics card—at least up to a certain point. The game can certainly run on lower spec PCs, and thankfully 30-60 fps is quite playable, but even at 1080p medium you'll need a faster CPU to consistently stay above 60 fps.

I haven't seen much in the way of changes immediately following the retail launch, but there’s clearly potential for improvements in performance over time, both via patches as well as through driver updates. DirectX 12 when properly optimized can often improve performance on AMD graphics cards, sometimes by 20 percent or more relative to DX11 code.

If that happens at some point (as it eventually did with The Division and Rise of the Tomb Raider), AMD GPUs could claim the lead, but for now Nvidia remains the overall leader at all price points. Not that most GPUs are worth buying right now, as prices remain inflated, but hopefully things return to normal soon. The more pertinent question in the meantime is whether Vermintide 2 will have staying power as a multiplayer co-op game.

Jarred's love of computers dates back to the dark ages when his dad brought home a DOS 2.3 PC and he left his C-64 behind. He eventually built his first custom PC in 1990 with a 286 12MHz, only to discover it was already woefully outdated when Wing Commander was released a few months later. He holds a BS in Computer Science from Brigham Young University and has been working as a tech journalist since 2004, writing for AnandTech, Maximum PC, and PC Gamer. From the first S3 Virge '3D decelerators' to today's GPUs, Jarred keeps up with all the latest graphics trends and is the one to ask about game performance.
