These are the best Nvidia gaming laptop features you're not using

A guide to the different Max Q features in the latest Nvidia gaming laptops.

If you've managed to bag yourself one of the latest gaming laptops with a Max Q Nvidia RTX 3080, or other 30-series GPU, you may have noticed some of the fancy features Nvidia has been integrating recently. But what do they do and should you be bending over backwards to get them running in your shiny new laptop?

With such a slew of features, it's no wonder some of us are feeling overwhelmed by the sheer breadth of toggles and tweaks now laid before us. So we've created this handy guide so you know what they do, when to tamper with the settings, and what to expect from each feature.

While many of us will happily go about installing our favourite games on our new laptops straight out of the box, without a second thought for these (often convoluted) configuration settings, Nvidia has added some features we think are definitely worth trying out for yourself.

Some even utilise AI to automatically tune your machine as and when necessary, which is at least worth a look, right?

There has been some confusion recently about the Max Q branding, with the listing of GPU specs, and whether laptops are labelled as Max Q at all, now being up to the manufacturer. So you may find yourself wondering what you're actually getting when you buy a new laptop.

It's all about the different max GPU power levels of the individual chips. One RTX 3080 machine may perform very differently compared to another RTX 3080. Make sure to check the product support page for any prospective purchases, before dropping large sums of money—an 85W RTX 3080 will almost always be slower than a 110W version, for example.

Cooling is the big thing, with performance being so dynamic within GPUs today, and so if you're picking up a thin and light machine it will perform differently to a more chunky laptop with the same graphics card.


With that out of the way, let's go through a quick overview of each of these latest features and when's best to turn each one on, what the prime setting configurations are, as well as whether switching them on is actually worth it at all.

Nvidia Whisper Mode 2.0

Whisper mode is an Nvidia feature for the considerate souls among us. Say you want to take your laptop on a train, or are otherwise trying to sneak in a gaming session while others around you are trying to concentrate. When pushed to its limits, a Max Q laptop might exceed 30 decibels—that might be the level of noise a light smattering of rain registers at, but is still noticeable. Yet with Whisper Mode 2.0 enabled you can shave a good 10 decibels off that, bringing it down to the level of, well... a whisper.

The pitch might still upset your pets, but at least you can hear yourself think and the turbine whirring of your fans won't anger people around you.

Of course, there's an inevitable trade-off when you switch this mode on. First there's the build-up of heat as a result of suppressing the cooling system. In our MSI test machine we were still looking at a maximum GPU temp of around 77°C (170.6°F), though what that looks like for you depends on your laptop's ability to dissipate heat. Whisper Mode is designed to deal with that heat, but it does so by reining in performance, so you also have reduced frame rates to deal with.

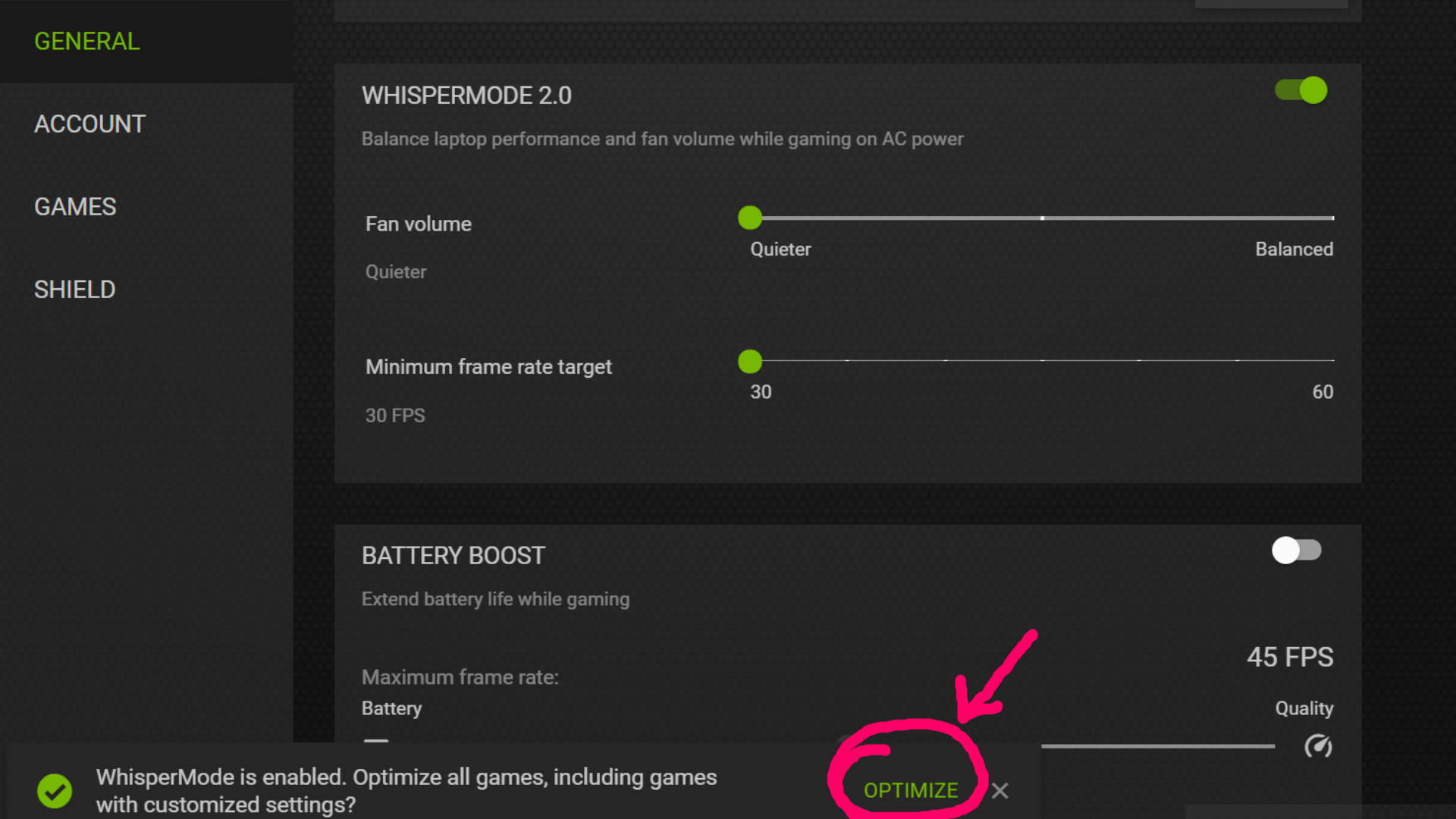

When you toggle the feature on in the GeForce Experience app it asks if you'd like to optimise your games for this mode (make sure you click optimise, it's easy to miss). The caveat being that not only does it override your current system settings, it may also knock your resolution down a notch. Depending on the game, Whisper Mode 2.0 will also generally nerf your other graphics settings in favour of performance and low noise.

There are two sliders to play with: the 'Fan volume' and 'Minimum frame rate target'. The first is a very simple three step slider from 'Quieter' to 'Balanced' and the second allows you to select a target fps level for your games.

Whisper Mode 2.0 then uses those sliders to optimise your installed games to ensure quiet gaming that still hits those frame rates. Dragging the fan slider to the quietest setting and pushing the fps target up to 60 fps will result in the game settings being dropped more considerably than if you were just chasing 45 fps, for example.

You may not have the in-game fidelity of an unleashed laptop, but you will still be able to play at decent frame rates without having your machine sound like you have a hovercraft atop your desk. And it means you'll be able to game around other humans without punishing their ears.

It's an impressive feature, and is incredibly simple and effective. The one-touch optimisation takes seconds from when you enable Whisper Mode 2.0 and will put everything back the way it was when you disable it too.

The only real drawback is that it can only be used when you're plugged into the wall for power, otherwise you only have Battery Boost's own dumb frame rate limiter to keep things moving away from the plug.

We're big fans (pun 100 percent intended) of Whisper Mode 2.0, but it's not the sort of feature you would want to use all the time. If you've spent big on an RTX 3080 gaming laptop you want to use that power, and sometimes you just have to sacrifice a little noise for the high-end ray traced pretties. But when you want to remain stealthy, and still game at high frame rates, Whisper Mode 2.0 is ace.

Battery Boost

Battery Boost hasn't seen an overhaul with the new Max-Q setup for Ampere gaming laptops, but it's still something to keep in mind as a feature that can extend your gaming life away from the plug.

Laptop batteries and discrete graphics cards do not make the greatest of bedfellows, but with Battery Boost enabled you can at least squeeze a little bit of extra game time out of your powerful machine. Sadly it's not quite as smart a system as Whisper Mode 2.0, in that it doesn't adjust your in-game settings, but it does still offer a simple frame rate limiter which can lower power demands on the system.

From within GeForce Experience you can set a frame rate limit in 5 fps increments from 30 fps to 60 fps, where the bottom end of the slider will deliver greater battery life.
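To see why a lower cap saves power, it helps to picture what a frame rate limiter actually does. The sketch below is our own illustration in Python, not Nvidia's code: the function names `frame_budget_seconds` and `run_capped` are made up for the example, and the only detail taken from the slider is the 30 to 60 fps range in steps of 5. Each frame gets a fixed time budget, and whatever is left of that budget after rendering is spent idle.

```python
import time

# Slider positions described above: 30 to 60 fps in steps of 5.
ALLOWED_LIMITS = list(range(30, 61, 5))


def frame_budget_seconds(fps_limit):
    """Return how long each frame is allowed to take at the given cap."""
    if fps_limit not in ALLOWED_LIMITS:
        raise ValueError(f"limit must be one of {ALLOWED_LIMITS}")
    return 1.0 / fps_limit


def run_capped(render_frame, fps_limit, frame_count):
    """Call render_frame() frame_count times, idling out the rest of each budget.

    Hypothetical helper for illustration only. The idle time is where the
    saving would come from: the next frame is never started early.
    """
    budget = frame_budget_seconds(fps_limit)
    for _ in range(frame_count):
        start = time.perf_counter()
        render_frame()
        elapsed = time.perf_counter() - start
        if elapsed < budget:
            time.sleep(budget - elapsed)
```

At a 30 fps cap each frame has twice the time budget it gets at 60 fps, so a machine that could comfortably hit 60 spends around half of every frame doing nothing, which is the whole point when you're away from the plug.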

In many machines Battery Boost is enabled by default, but it's something to keep in mind if you're trying to figure out why you're only getting 30 fps out of your RTX 3080 machine when you pull the cable out.

Honestly, gaming on a laptop battery is rarely a positive move in terms of gaming performance, but Battery Boost can be useful if you're playing a less graphically intensive game, such as a turn-based strategy.

Nvidia DLSS 2.0

DLSS, or Deep Learning Super Sampling, uses a deep neural network that intelligently extracts 'multidimensional features' of a scene, then combines multiple frame details into a final image, per frame.

Sounds like Voodoo, but it's one of Nvidia's most impressive recent GPU features and means your system will run games at a lower resolution, while using AI power to translate each frame into something that looks higher resolution than it actually is. It sharpens each pixel, allowing performance to take priority without much loss of detail.
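The performance side of that is simple arithmetic, and a quick sketch makes it concrete. This is just our own illustration: `internal_resolution` and `pixel_fraction` are hypothetical helper names, and the render scale is a number we've picked for the example rather than the setting any particular DLSS mode uses.

```python
def internal_resolution(output_width, output_height, scale):
    """Return the (width, height) rendered before upscaling.

    scale is a per-axis render scale, so 0.5 means half the width and
    half the height of the final image. The value is an assumption for
    illustration, not a documented DLSS setting.
    """
    if not 0 < scale <= 1:
        raise ValueError("scale must be greater than 0 and at most 1")
    return round(output_width * scale), round(output_height * scale)


def pixel_fraction(output_width, output_height, scale):
    """Fraction of the final image's pixels that are actually rendered."""
    width, height = internal_resolution(output_width, output_height, scale)
    return (width * height) / (output_width * output_height)


# A 1440p laptop panel with a per-axis scale of 0.5:
print(internal_resolution(2560, 1440, 0.5))  # (1280, 720)
print(pixel_fraction(2560, 1440, 0.5))       # 0.25
```

Because the scale applies to both axes, halving it means the GPU only has to shade a quarter of the pixels, and the upscaler fills in the rest.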

And it's the feature which makes the performance hit of enabling ray traced visuals one that's actually palatable.

It uses the AI-focused Tensor cores from within RTX GPUs to enhance the look of your games, but does still need to be trained from the get-go. So DLSS is something that has to be enabled by game developers and isn't something that will magically work on every title in your Steam library.

But where it works, it works incredibly well. The first implementation had a tendency to muddy the image a little, but with the DLSS 2.0 update we're now at the point where an upscaled image can actually look better than at native resolution. Yes, the robots are getting that good.

It was initially something tied into ray tracing, but we're seeing more and more games enable the feature on its own in order to boost gaming frame rates for normal rendering.

I've found that in most cases, turning DLSS on does provide a good boost in frame rates, in plenty of top games, and manages to seriously improve rendering scores in real-time, ray-traced environments. I recommend trying it out where you can.

Advanced Optimus

Advanced Optimus is the evolution of Optimus mode. It automatically switches the display connection from GPU to your machine's integrated graphics using a 'dynamic hardware switch' to reduce the load on your GPU when unnecessary. The idea is to reduce system overhead and increase performance, while supposedly increasing battery life.

In Nvidia's original implementation of Optimus mode, the GPU was not directly connected to the display, meaning it didn't work with G-Sync. That restriction has since been fixed with Advanced Optimus so, as long as your FPS doesn't exceed your monitor's refresh rate, you can enable G-Sync for a smoother experience.

It's also necessary for the new wave of high refresh rate 1440p laptop panels. Without it you're not going to get 240Hz out of your high-res display.

Not only does Advanced Optimus give you access to G-Sync capability, it should help make games playable for longer as your battery wanes. And in combination with DLSS, those numbers might give you a fighting chance as you battle through those last dregs of juice.

And, plugged in, it makes your beautiful high refresh rate screen shine. So why not give it a try?

To turn it on, simply right click your desktop and enable it in the Nvidia Control Panel, under the 'manage display mode' tab. Strangely it's not actually listed as 'Advanced Optimus,' instead it's labelled as 'Automatic Select' with the original 'Optimus' option still present, which looks like the default.

I would recommend making sure you dive into the Nvidia Control Panel and ensure that you have the 'Automatic Select' option enabled instead of the old-school version of Optimus. Way to make things clear, Nvidia...

Nvidia Reflex

Nvidia Reflex is supposed to improve latency to—as the title suggests—bolster your in-game reflexes. Though it's only available for certain games, and works best when paired with Reflex compatible mice such as the Razer Deathadder V2, or SteelSeries Rival 3. With a focus on fast-paced, competitive games such as those played in esports, this mode seeks to reduce the trade-off between high graphic settings and low latency, so you shouldn't have to sacrifice looks for frames, in theory.

It essentially has the power to boost GPU clocks, and give the CPU a significant hand in reducing the render queue. Since it works by controlling CPU render submissions through the game engine, Reflex produces better results than just the "Ultra Low Latency" tech hosted within the drivers.

In aiming, particularly in fast paced games that involve a ton of flick shots, higher latencies mean more variance in accuracy, as you're unable to predict where your flicks are going to land. So by reducing latency, your accuracy should improve too, right?

I'm too bad at competitive FPS to test accuracy with any authority, but I found Reflex was able to shave a good dozen or more milliseconds off the machine's latency scores, so it should, in theory, make a difference. Though, I couldn't quite get the 33 percent reduction Nvidia claims it can achieve over having Reflex turned off. Combining Reflex with DLSS and Optimus, however, is likely to yield better results, especially when paired with a good Reflex-compatible mouse.

There's something to be said about the innovation of this feature. And it does reduce latency, but there are a lot of factors that can contribute to your terrible performance in game as well, so don't think this is going to solve all your problems. Bad aim might just be the issue.

Nvidia Dynamic Boost 2.0

This one replaces outdated, fixed, static GPU and CPU power budgets with an AI-operated power balancing system. Nvidia claims Dynamic Boost can optimise your gaming experience on a frame-by-frame basis, with version 2.0 now able to intelligently shift power between the CPU and GPU. Previous versions only allowed power to be routed from the CPU to the GPU but now it works both ways, intelligently diverting power in order to keep the overall power consumption at a consistent level.
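The core idea, a fixed total budget that gets split differently depending on who needs it, can be sketched in a few lines. To be clear, this is a toy: Nvidia describes its system as AI-driven, whereas the example below swaps in a plain proportional rule, and the function name `rebalance`, the load figures, and the wattages are all made up for illustration.

```python
def rebalance(total_watts, cpu_load, gpu_load, min_cpu_watts, min_gpu_watts):
    """Split a fixed power budget between CPU and GPU in proportion to load.

    Toy stand-in for illustration only, not Nvidia's algorithm. Each chip
    keeps a minimum floor, and the spare power above those floors is shared
    out according to how busy each chip is. The two results always add up
    to total_watts, so overall consumption stays constant.
    """
    if min_cpu_watts + min_gpu_watts > total_watts:
        raise ValueError("floors exceed the total budget")
    spare = total_watts - min_cpu_watts - min_gpu_watts
    total_load = cpu_load + gpu_load
    # With nothing running, split the spare power evenly.
    gpu_share = 0.5 if total_load == 0 else gpu_load / total_load
    gpu_watts = min_gpu_watts + spare * gpu_share
    cpu_watts = total_watts - gpu_watts
    return cpu_watts, gpu_watts


# Hypothetical 115W machine: a GPU-heavy frame, then a CPU-heavy one.
print(rebalance(115, cpu_load=20, gpu_load=80, min_cpu_watts=15, min_gpu_watts=60))
print(rebalance(115, cpu_load=80, gpu_load=20, min_cpu_watts=15, min_gpu_watts=60))
```

In the first call most of the spare power lands on the GPU, and in the second it swings back towards the CPU, while the total stays at the same 115W either way. That two-way movement is what version 2.0 adds.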

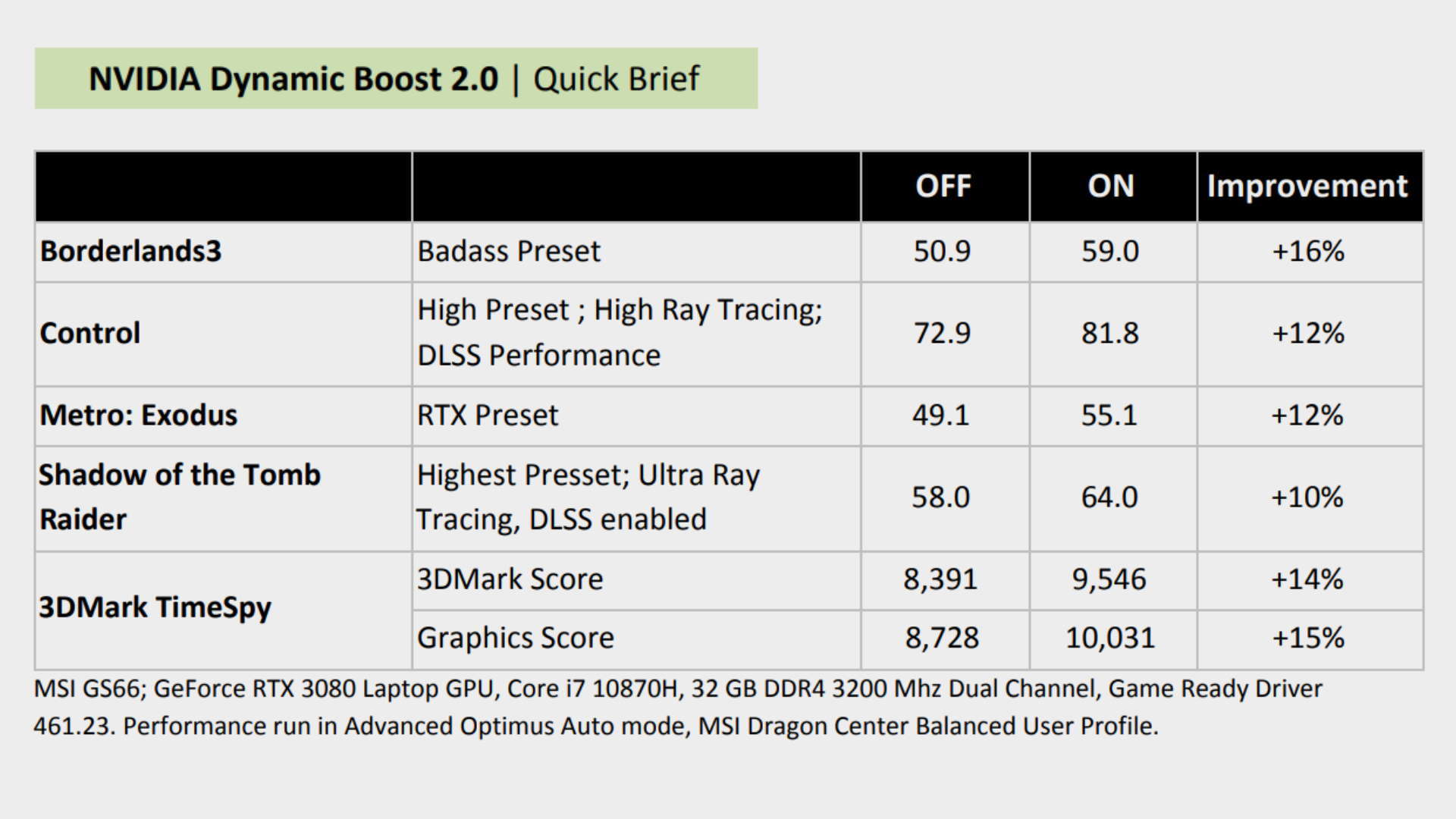

The tech is powered by multiple AI networks, which makes it sound like it'll solve all our problems, but is it really doing your machine any good? Nvidia has it pegged with the potential to increase performance by upwards of 16 percent according to the documentation.

Unfortunately, there's no way to turn Dynamic Boost off for testing, but Nvidia listed some of its own FPS averages and scores before enabling it. We'll just have to take the company's word for it on this one. And it's not like you can turn it off, anyway so... er, deal with it?

But it should help boost performance without you having to lift a finger. Which is always nice.

Nvidia Resizable BAR

Resizable BAR (Base Address Register) is Nvidia's name for the PCI Express feature that AMD brands as Smart Access Memory, or SAM for short. This one's on by default, so there's no need to fiddle around in the settings to switch it on.

Resizable BAR basically widens the window between your machine's CPU and GPU. Where previously the CPU could only reach the GPU's memory through a window of just 256MB at a time, Resizable BAR removes that limit. Rather than exchanging data little and often, which can cause latency issues, your components can communicate freely as needed, which means that the larger frame buffers of modern GPUs can actually be fully utilised.
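A bit of back-of-the-envelope maths shows how small that 256MB figure is next to a modern frame buffer. The sketch below is our own illustration: `windows_needed` is a hypothetical helper, and the 8GB and 16GB VRAM sizes are example figures rather than anything measured.

```python
MIB = 1024 * 1024
GIB = 1024 * MIB
LEGACY_WINDOW_BYTES = 256 * MIB  # the 256MB limit mentioned above


def windows_needed(vram_bytes, window_bytes=LEGACY_WINDOW_BYTES):
    """How many window-sized pieces it takes to cover all of the VRAM.

    Uses integer ceiling division so a partly filled final window
    still counts as one.
    """
    if vram_bytes <= 0 or window_bytes <= 0:
        raise ValueError("sizes must be positive")
    return -(-vram_bytes // window_bytes)


print(windows_needed(8 * GIB))             # 32
print(windows_needed(16 * GIB))            # 64
print(windows_needed(16 * GIB, 16 * GIB))  # 1
```

With the old limit, a 16GB frame buffer can only be seen 1/64th at a time. With the window resized to match the VRAM, that drops to a single piece.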

This has the potential to give your machine a passive advantage that speeds up the data retrieval process, which in turn can bolster performance in certain games.

There have been only a few games that really sing with this new technology in place, though, with most upticks in framerate being relatively negligible—most are seeing performance increases under 10 percent. Still, every little helps.

Having been obsessed with game mechanics, computers and graphics for three decades, Katie took Game Art and Design up to Masters level at uni and has been writing about digital games, tabletop games and gaming technology for over five years since. She can be found facilitating board game design workshops and optimising everything in her path.
