MSI prototypes for the future of graphics card cooling look impressive and expensive

Graphics cards are already huge, so manufacturers are going to have to get a bit more inventive with how to cool them.

Assuming the next generation of graphics cards will be more power-hungry than the last, we could end up in a pickle trying to keep them cool. I mean, look at the RTX 4090: it's already massive. Our PC cases can't take much more. But there's more to cooling than bigger and bigger heatsinks, and over at Computex 2023, MSI has shown off a couple of concepts it's looking into that can drop GPU temps by as much as 10 degrees Celsius.

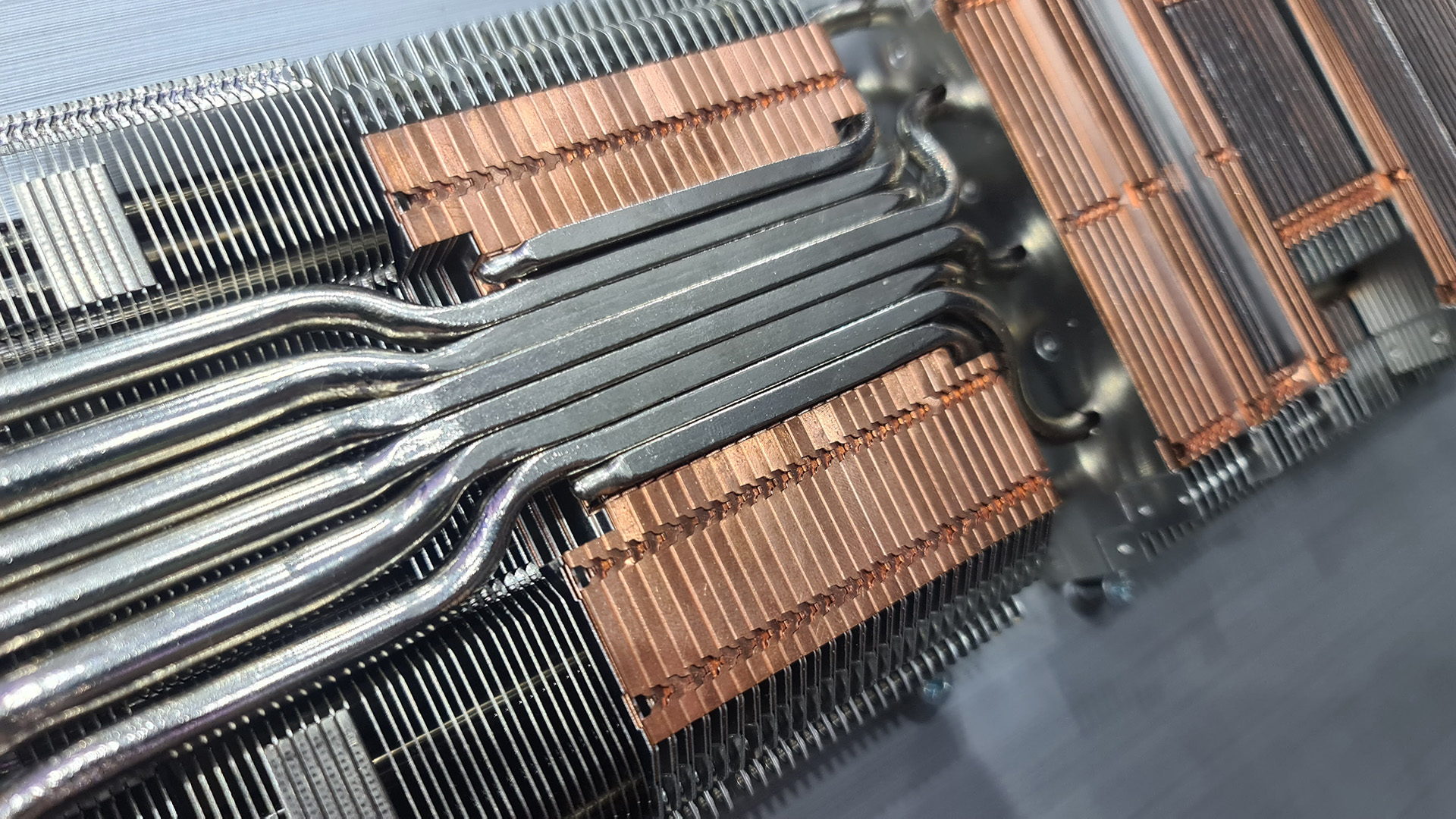

The first new cooling method is called Dynamic Bimetallic Fins, and it's essentially a fin sandwich. The bread, so to speak, is made up of two aluminium sheets, and the tasty filling is a copper sheet. The combined fins end up being around 1mm thick, which is roughly 3-4x thicker than your regular single fin, but the mixing of metals helps dissipate heat better—dropping temperatures by 3 degrees Celsius according to MSI.

The one downside is sure to be the cost of such a creation. Triple the fins, triple the materials. Not to mention manufacturing costs for a more complex process are likely higher. Though MSI did tell me that in theory you could use a much smaller heatsink than your usual aluminium one found in today's graphics cards and still get decent temps.

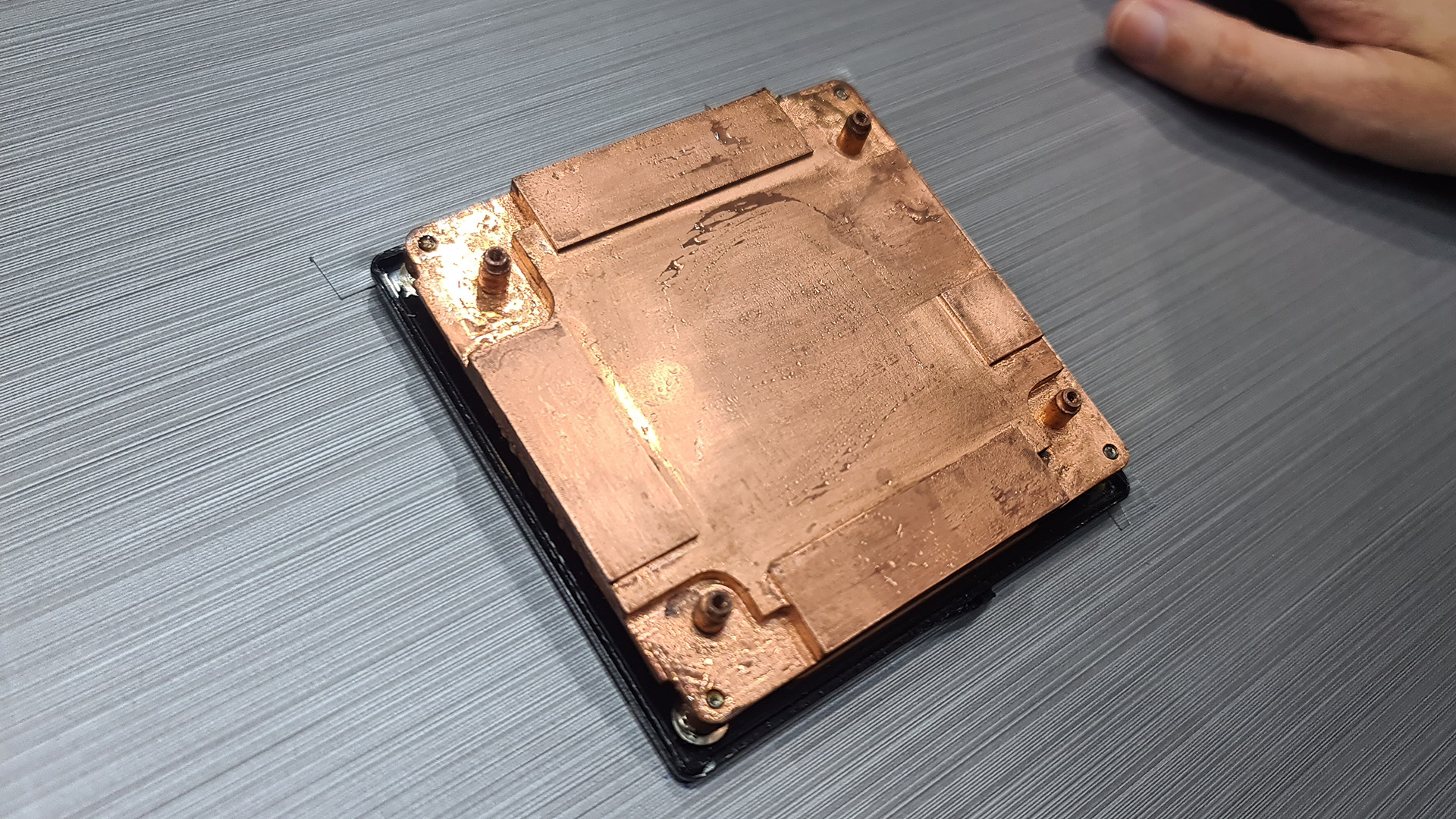

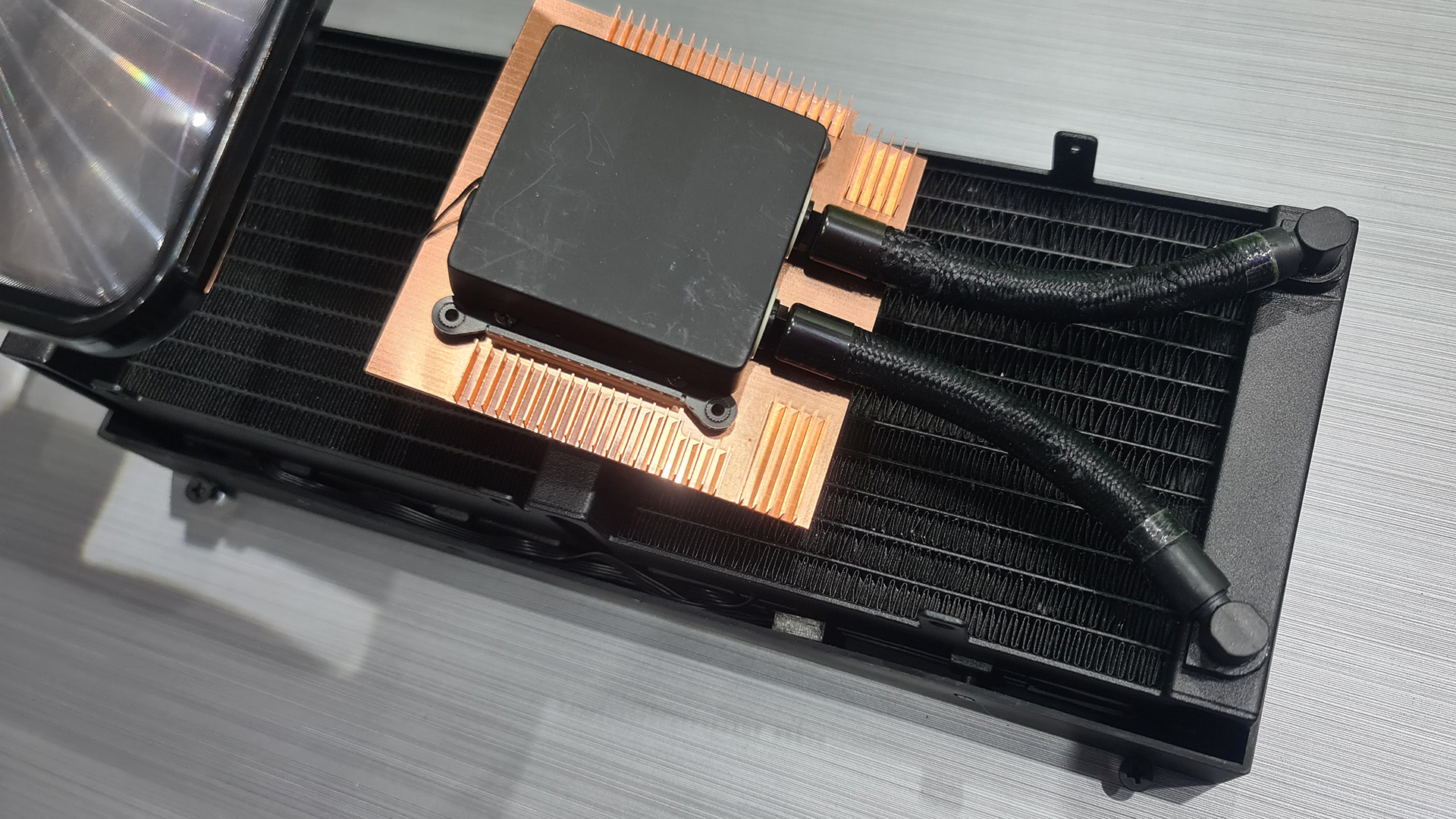

The next concept, called Arctic Blast, uses a TEC, or thermoelectric cooler. The TEC plate covers the GPU and memory, and liquid coolant transfers the heat away to a radiator. There's potentially the issue of dealing with the condensation that often occurs with this sort of cooler, but CPU TEC blocks deal with that part pretty well, so that might work out fine. In return, this option offers the benefit of sub-ambient temperatures, which is better than any heatsink can manage, though it does require a fair chunk of power to operate.

MSI lists a few benefits to a TEC design, namely high cooling capacity in even a small form factor and overclocking potential. I have to say this concept has me intrigued, but I don't foresee it being in any way affordable if it ever actually makes it to market. TEC blocks aren't cheap and neither are graphics cards—this is sure to be a mighty expensive combo.

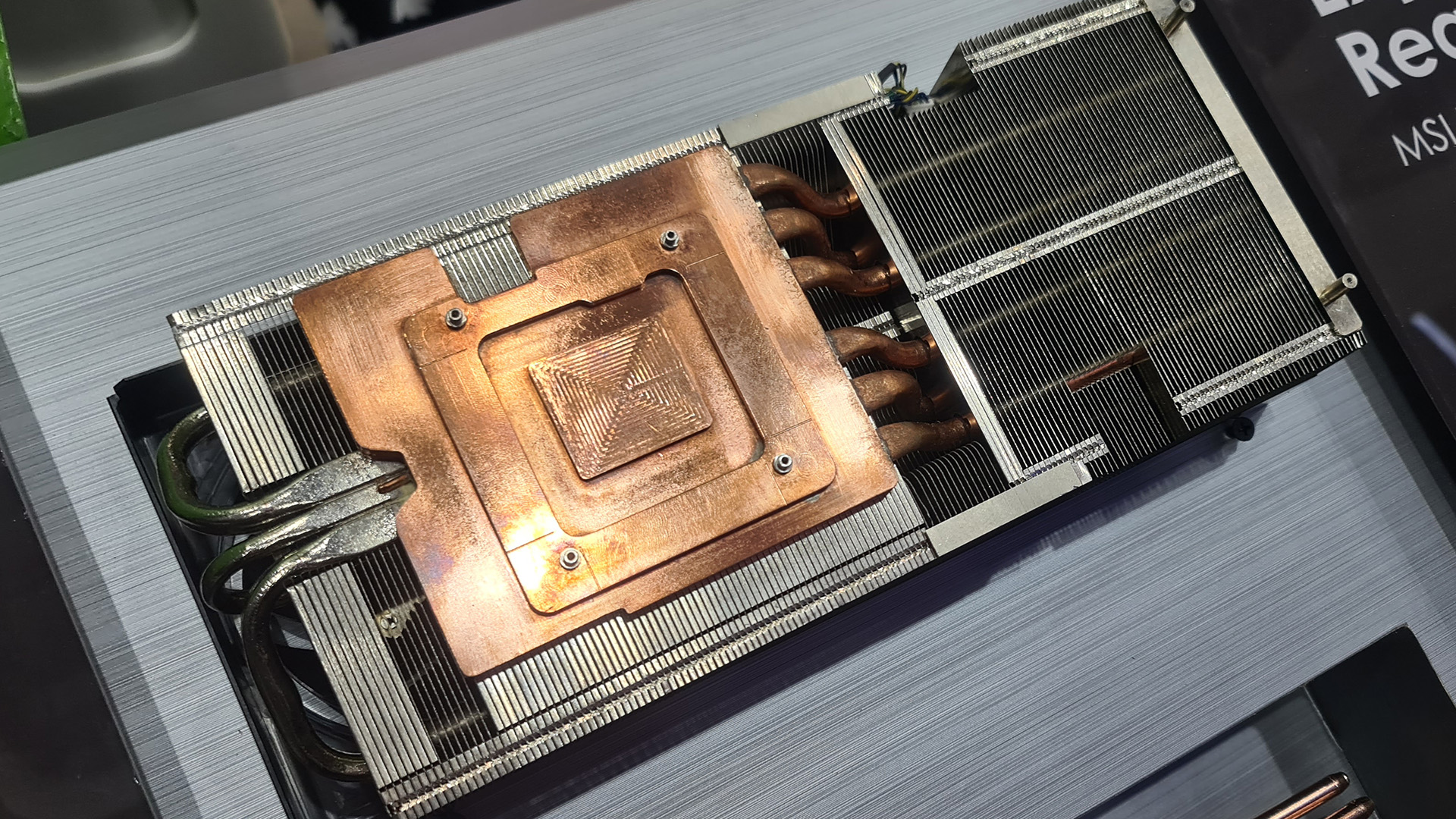

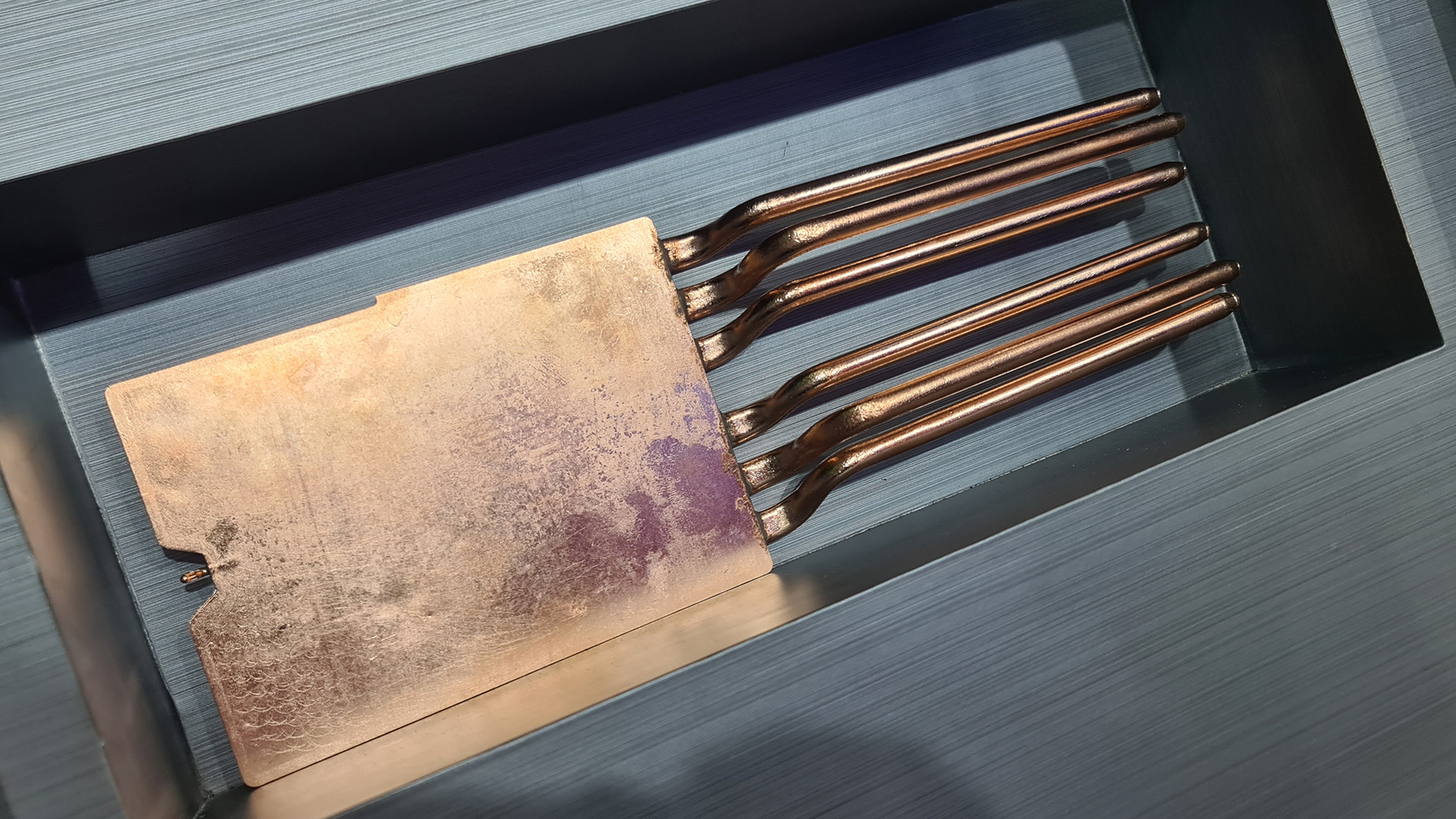

The potentially cheaper and more likely option is a 3D vapour chamber. MSI calls them MSI DynaVC. It's basically the graphics card's vapour chamber and heat pipes folded into one, and that means there's no need for any soldering. According to MSI, this reduces the heat transfer distance, and allows heat to travel more effectively between the main GPU base and heat pipes.

Only thing is, MSI tells me its original tests are yet to show a marked improvement in thermals with the new 3D vapour chambers, but it's still investigating the concept's potential.

Then there's FushionChill, a concept that takes the few high-end all-in-one-cooled graphics cards today and reimagines a smaller, smarter version. Essentially, FushionChill is a liquid-cooled GPU with every part—including the radiator that usually comes connected via tubing on today's cards—within the graphics card shroud.

The key to FushionChill is an advanced radiator that's deeper and has an increased fin pitch. The net result, MSI expects, is the best cooling of any of the aforementioned concepts—as much as a 10 degree Celsius reduction.

The important thing to remember is that, in theory, some of these designs could be combined into a single graphics card. MSI told me it's open to the idea, and I'd guess that the Dynamic Bimetallic Fins and 3D vapour chambers could work together. I did ask if MSI planned to use any of these designs for its next-gen cards, but a representative for the company wouldn't say, only that they could be used to reduce the size of existing shrouds or be used for even more powerful cards.

Graphics cards will get bigger, more powerful, and more power-hungry, so at some point we might see GPU manufacturers getting even more creative with how they cool them. These are only concepts today, but perhaps one of these options will become a little more mainstream.

An MSI TEC RTX 5090, perhaps?

Jacob has been writing about PC hardware and technology for over eight years. He earned his first byline at PCGamesN before joining PC Gamer. He spends most of his time building PCs, running benchmarks, and trying his best to learn Linux.
