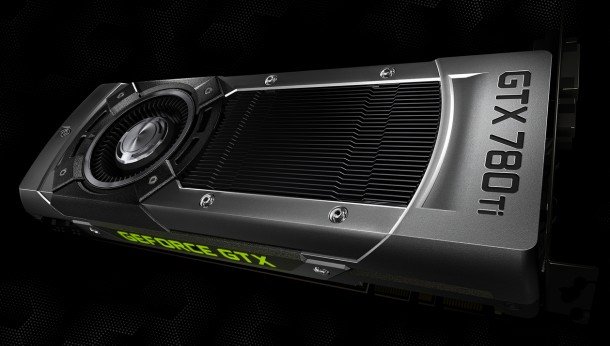

Nvidia GTX 780 Ti: what do we expect to see?

Nvidia are hoping to spoil AMD's party, having dropped a bomb on the press gathered in Montreal last week: the GeForce GTX 780 Ti. If the rumours are true and the incoming AMD Radeon R9-290X can beat a GTX Titan in a stand-up gaming fight, then Nvidia are going to need some sort of riposte. But what exactly?

AMD have repeatedly assured the public that the brand new Radeon R9-290X will be released this month, and there's not much of October left. So it's coming soon, and I don't reckon the new GTX 780 Ti is going to be far behind.

The rumours and leaked benchmarks floating around the interwebs peg the new Hawaii GPU of the R9-290X at around the same sort of performance level as the darling of the ultra-enthusiast graphics card crowd, the GTX Titan. Until now the Titan has been the fastest single-GPU graphics card out in the wild, and Nvidia have surely grown quite attached to that label for their top-end card. The GTX 780 Ti, then, has got to have some serious performance chops to stand out.

So what do we expect to see from Nvidia's new card? Well, the obvious thing to do would be to take the GK110 GPU from the Titan, boost its core clockspeed a touch and give it a new badge. But the 780 Ti is going to have to come in at the same sort of price as the existing GTX 780, or less, to have a chance against the touted $600-odd pricing of the Radeon R9-290X. That makes using the full Titan-spec chip a little less likely.

There is a bit of a gap between the 780 and the Titan, though: twelve SMX units in the former against fourteen in the higher-spec card. The GTX 780 Ti could then swan in with a healthy thirteen SMX units and some 2,496 CUDA cores.
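Each Kepler SMX unit in GK110 carries 192 CUDA cores, so the core counts fall straight out of the SMX count. The middle line assumes the rumoured thirteen-SMX configuration, which is speculation rather than a confirmed spec:

$$
\begin{aligned}
\text{GTX 780: } & 12 \times 192 = 2{,}304 \text{ CUDA cores} \\
\text{GTX 780 Ti (speculative): } & 13 \times 192 = 2{,}496 \text{ CUDA cores} \\
\text{GTX Titan: } & 14 \times 192 = 2{,}688 \text{ CUDA cores}
\end{aligned}
$$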

Again, Nvidia could push the clock beyond the GTX Titan's relatively conservative 836MHz base clockspeed and give the Titan a shoeing in the gaming performance stakes.

I'd also expect Nvidia to make a bit more of an effort on memory capacity. The GTX 780's 3GB isn't going to hold up as well on the road to 4K gaming as the GTX Titan's 6GB. I doubt they'd go all the way up to a 6GB framebuffer, but 4GB wouldn't be out of the question, would it?


With all the noises Nvidia have been making about 4K recently, with their BattleBox promotions and the like, they'll need to put their hardware where their mouth is. After all the recent rebranding exercises, I'm looking forward to actually talking about new cards and new GPUs for a change, and I don't think it'll be long before we know who's taking the Christmas number one GPU spot.

Dave has been gaming since the days of Zaxxon and Lady Bug on the Colecovision, and code books for the Commodore Vic 20 (Death Race 2000!). He built his first gaming PC at the tender age of 16, and finally finished bug-fixing the Cyrix-based system around a year later. When he dropped it out of the window. He first started writing for Official PlayStation Magazine and Xbox World many decades ago, then moved on to PC Format full-time, then PC Gamer, TechRadar, and T3 among others. Now he's back, writing about the nightmarish graphics card market, CPUs with more cores than sense, gaming laptops hotter than the sun, and SSDs more capacious than a Cybertruck.