Cyberpunk 2077 GPU performance: AMD RX 6800 XT vs. Nvidia RTX 3080

With ray tracing off the table for Radeon cards, how do AMD and Nvidia's latest flagship GPUs fare in the biggest game of the year?

Cyberpunk 2077 is here, landing as we hit the next generation of gaming hardware from both sides of the GPU divide. Forgetting the over-priced top-end chips, the AMD RX 6800 XT and Nvidia RTX 3080 are the flagship cards from the red and green teams, and we're here to see how they perform head-to-head in the biggest game of the year.

This is next-gen hardware, and this is next-gen gaming. Floating trans-humans, stiletto cops, missing hair, missing faces, vehicles popping into existence out of nowhere, and a million other interesting features we'd never have expected to appear in one of the most anticipated games of the last five years.

I'm sure at some point in the future we'll start to recognise what we currently see as bugs or glitches as really smart bits of gameplay design from CDPR. Probably. Hell, we might even be able to buy one of these new GPUs by then too. But for now, no matter what the bugs are doing to your game, with Day One driver packages from AMD and Nvidia, Cyberpunk 2077 is at least performing properly.

In that it's really punishing even the latest PC gaming hardware. Spare a thought for the poor PlayStation 4 Pro and Xbox One X peops out there. Solidarity, sisters and brothers.

Sadly, for us tech folk, there is no built-in benchmark in Cyberpunk 2077, though I've heard rumours one might be forthcoming in the future. In the meantime we've had to create our own test run. Our 60-second run includes an in-vehicle section, which takes in the ray traced finery of Night City (at night, obvs.) and ends with a first-person firefight to give a good mix of what the user will experience in the game.
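For anyone rolling their own run in the same way, here's a rough sketch of how an average frame rate can be pulled out of a frame-time capture. It's an illustration only, not our actual tooling: the `average_fps` helper and the sample numbers are made up, and it assumes your capture tool gives you one frame time in milliseconds per rendered frame.

```python
def average_fps(frame_times_ms):
    """Average fps over a run: frames rendered divided by seconds elapsed.

    Dividing total frames by total time weights every second of the run
    equally, which is not the same as averaging per-frame fps values.
    """
    if not frame_times_ms:
        raise ValueError("no frames captured")
    total_seconds = sum(frame_times_ms) / 1000.0
    return len(frame_times_ms) / total_seconds


# Hypothetical capture: 99 smooth frames and one 40 ms hitch.
sample_frame_times_ms = [16.0] * 99 + [40.0]
run_average = average_fps(sample_frame_times_ms)
print(f"{run_average:.1f} fps")
```

Run the same route a few times and compare the results; a manual run through traffic and a firefight will never be perfectly repeatable.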

And we've also had to wait for the final release version of the game to do proper real-world testing as the pre-release review version was DRM'd up to its cybernetic eyeballs which, according to Nvidia when we spoke to them, was tanking frame rates by anything up to 10fps. And when you're working with such a demanding game, that can make a big difference.

With the two flagship AMD and Nvidia GPUs going up against each other the big question is: What's the ray tracing like? Unfortunately we're still a little way off seeing what AMD's Big Navi GPUs are capable of with the DirectX Raytracing (DXR) API built into Cyberpunk 2077. At launch there is no AMD DXR support, as there isn't in either of the AMD-powered next-gen console versions as yet.

We've heard rumours AMD's engineers have been on site with CDPR for a good while now, working deeply with the devs on implementing the fancy lighting tech for AMD GPUs, and I think that support is likely to appear alongside the red team's version of DLSS when that arrives. DLSS, or Deep Learning Super Sampling, is the AI-powered, performance enhancing, image enhancing, upscaling feature that makes Nvidia's own ray tracing frame rates actually playable.

Indeed, DLSS not only makes ray tracing playable, but you can enable it independently of ray tracing to boost high-resolution performance from your GeForce GPU.

Right now all AMD can offer on that front is its FidelityFX CAS in both Dynamic and Static trim. That's a feature which lowers the render resolution of a scene to deliver higher frame rates, then upscales it and applies Contrast Adaptive Sharpening (the CAS in the name) to enhance the final image. It's not as slick as DLSS, nor as powerful a tool. You can absolutely see where AMD's lowering the scene fidelity, while that's a whole lot tougher with Nvidia's solution.

But, outside of that, how do the two GPU heavyweights stack up in terms of straight rasterizing performance?

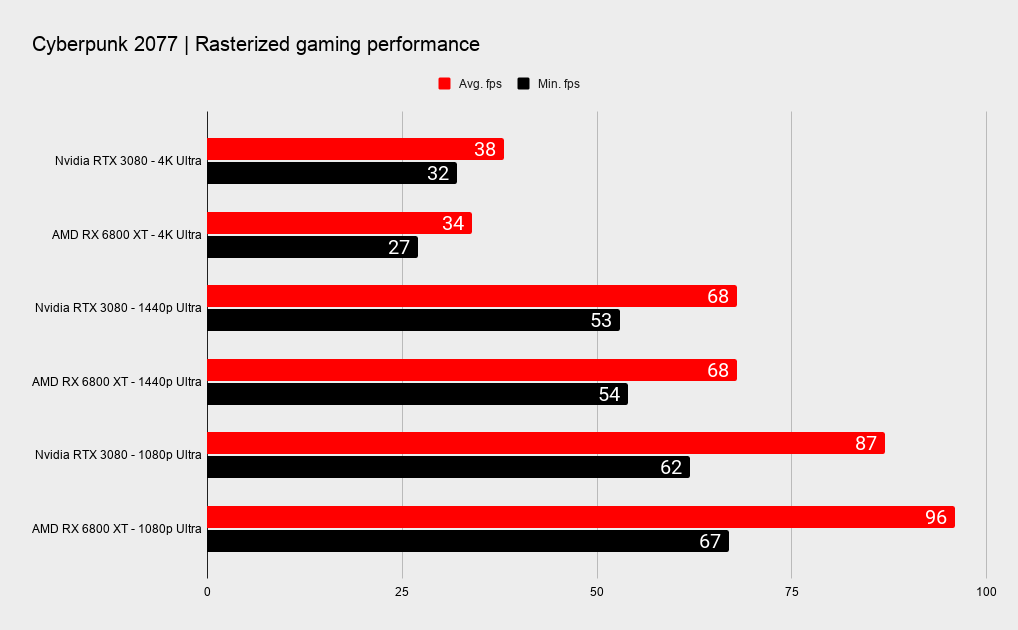

AMD RX 6800 XT vs. Nvidia RTX 3080: rasterized performance

It's close. Real close. That's one of the main reasons we've been so impressed with AMD's Big Navi GPU; the way it manages to keep pace with Nvidia's flagship card for a shade less cash. We've been testing both cards at the punishing Ultra setting, and indeed at 1080p the RX 6800 XT outperforms the RTX 3080, and by a ten percent margin too.

That's an interesting data point, but in the harsh neon glare of the real world doesn't really amount to much. If you're looking at dropping $649 or $699 on a graphics card, you're not going to be feeding it games at 1080p.

But even at 1440p it's a dead heat, with the AMD card getting the nod ahead of Nvidia on pure efficiency alone. In terms of average wattage, at 300W the RX 6800 XT draws a little less than the 316W of the RTX 3080.
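To put a number on that efficiency nod, here's a quick back-of-the-envelope sketch using the two average wattage figures above. The helper names are made up for illustration, and it assumes "dead heat" means exactly equal frame rates, which is a simplification.

```python
def percent_less(a, b):
    """How much smaller a is than b, as a percentage of b."""
    return (b - a) / b * 100.0


def perf_per_watt_ratio(fps_a, watts_a, fps_b, watts_b):
    """Ratio of card A's frames-per-watt to card B's."""
    return (fps_a / watts_a) / (fps_b / watts_b)


RX_6800_XT_WATTS = 300.0  # average draw quoted in the article
RTX_3080_WATTS = 316.0    # average draw quoted in the article

power_saving = percent_less(RX_6800_XT_WATTS, RTX_3080_WATTS)

# Assumption: identical frame rates at 1440p, so the fps values cancel out.
efficiency_edge = perf_per_watt_ratio(1.0, RX_6800_XT_WATTS, 1.0, RTX_3080_WATTS)

print(f"RX 6800 XT draws {power_saving:.1f}% less power")
print(f"Frames per watt: {efficiency_edge:.3f}x the RTX 3080")
```

That works out to roughly five percent in AMD's favour, so it's a nod rather than a knockout.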

At 4K Ultra, however, the switcheroo is complete, with the RTX 3080 taking a 12 percent frame rate delta to the bank. Though again it's doing so while chowing down on a chunk more power than the RDNA 2 chip.

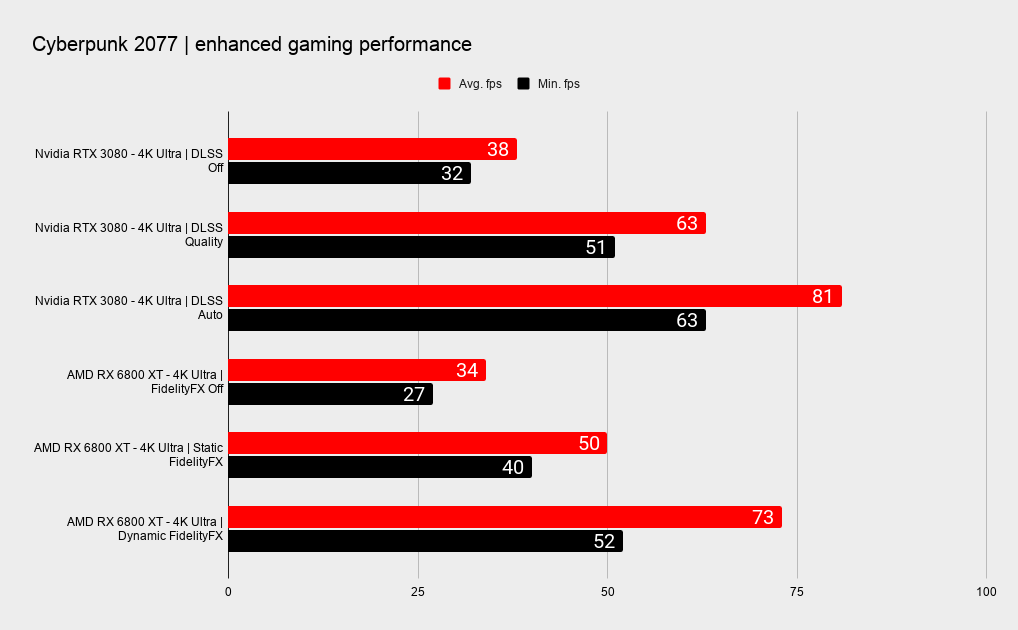

AMD RX 6800 XT vs. Nvidia RTX 3080: enhanced performance

Straight rasterized gaming performance is becoming far less important as more games release employing Nvidia's DLSS tech. And once AMD's own version starts to appear—and I'm betting Cyberpunk 2077 will eventually mark its first outing—getting a bead on standard GPU-based frame rates will be much harder. But, in the end, potentially irrelevant. After all, who's going to want to turn that stuff off?

The joy of DLSS right now, especially since the blurred edges have been sharpened up in the evolution from version one to this second-gen DLSS implementation, is that it is almost a magic lever that instantly gives you higher gaming performance for free. The fidelity issue is seemingly a non-starter now; in fact its AI smarts sometimes offer sharper, more detailed images than you'd get with a native resolution paired with some aggressive anti-aliasing.

There is no direct head-to-head really possible between AMD and Nvidia on this one, though, with DLSS necessarily restricted to GeForce GPUs of the 20-series and up. But it's possible to get a kind of apples vs. oranges comparison jury-rigged together thanks to the FidelityFX CAS upscaling settings in Cyberpunk 2077.

We've used the Auto and Quality settings of DLSS, while running the game at 4K Ultra settings, and without any ray tracing enabled. Auto is designed to offer the best version of DLSS for your chosen resolution and settings, while Quality aims at simply delivering the highest fidelity.

On the AMD side we had the Static FidelityFX CAS feature set at 80 percent of the 4K resolution with upscaling on the final image and, to compete with the Auto DLSS setting, we also ran the benchmark using Dynamic FidelityFX CAS set to target 80fps (as that is what the RTX 3080 managed with Auto) and allowed it to go as low as 50 percent for the render resolution.
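To make those two modes a bit more concrete, here's a minimal sketch of what they boil down to. This is not AMD's or CDPR's actual algorithm: `render_resolution` and `next_scale` are hypothetical helpers, the sketch assumes the percentage applies to each axis of the resolution, and it assumes GPU cost scales roughly with pixel count. The 80fps target and 50 percent floor are the settings we used; the 100 percent ceiling is an assumption.

```python
NATIVE_4K = (3840, 2160)


def render_resolution(scale, native=NATIVE_4K):
    """Internal render size for a per-axis scale factor (assumed per-axis)."""
    width, height = native
    return round(width * scale), round(height * scale)


def next_scale(scale, measured_fps, target_fps=80.0,
               min_scale=0.5, max_scale=1.0):
    """One step of a simple dynamic-resolution controller (illustrative).

    Pixel count goes with scale squared, so if frame rate is roughly
    inversely proportional to pixels, scale should move by the square
    root of measured/target. The result is clamped to the allowed range.
    """
    proposed = scale * (measured_fps / target_fps) ** 0.5
    return max(min_scale, min(max_scale, proposed))


# Static mode at 80 percent of 4K.
static_width, static_height = render_resolution(0.8)
pixel_share = (static_width * static_height) / (NATIVE_4K[0] * NATIVE_4K[1])
print(static_width, static_height, f"{pixel_share:.0%} of native pixels")

# Dynamic mode: a heavy scene running at a hypothetical 20fps at full
# resolution pushes the scale straight down to the 50 percent floor.
print(next_scale(1.0, measured_fps=20.0))
```

Under the per-axis assumption, the Static 80 percent setting renders only about 64 percent of the native pixel count, which goes some way to explaining the frame rate gain.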

This is where you'd absolutely feel like you were on the losing side if you'd picked up the RX 6800 XT. With the RTX 3080 utilising the Auto setting you're getting higher frame rates, and higher fidelity, than anything AMD's poor FidelityFX CAS feature can offer. In fact you're getting higher performance at 4K Ultra than the RX 6800 XT can manage at straight 1440p Ultra.

You would also be hard pressed to tell the difference between the 4K Ultra DLSS Quality image and the 4K Ultra AMD shot. Though it does look like the AMD version is using a different road texture, or map. The details are different, and the reflections more aggressive, as though it's trying to fake ray tracing into the scene like a GTA V injector mod.

Either way, without ray tracing finery the DLSS Quality setting of the RTX 3080 posts frame rates almost twice that of the vanilla AMD 4K Ultra run. And looks great doing it too.

In static images, you could say the FidelityFX CAS shots look good too, and the dynamically adjusting version gets close to its 80fps target. But those stills don't tell the whole story; when you're actually shifting around Night City it becomes crystal clear when the render resolution is being dropped to keep the frame rates up. Well, becomes fuzzy and muddy actually, but you get my drift.

For DLSS you can see a slight loss in hard detail in the middle distance in a still image, but conversely you'd never notice any difference in a moving scene. And that makes DLSS, whether you're running ray tracing or not, an absolute must for Cyberpunk 2077. Honestly, it's a must for whatever game supports it right now.

It's important for the RTX 3080, the high-end Nvidia flagship, at 4K, but is going to be vital for decent performance for RTX 30-series cards lower down the stack. Think about something like an RTX 3050 with support for DLSS and the performance improvement you'll see over rival cards in the same price bracket. It could be a real game-changer.

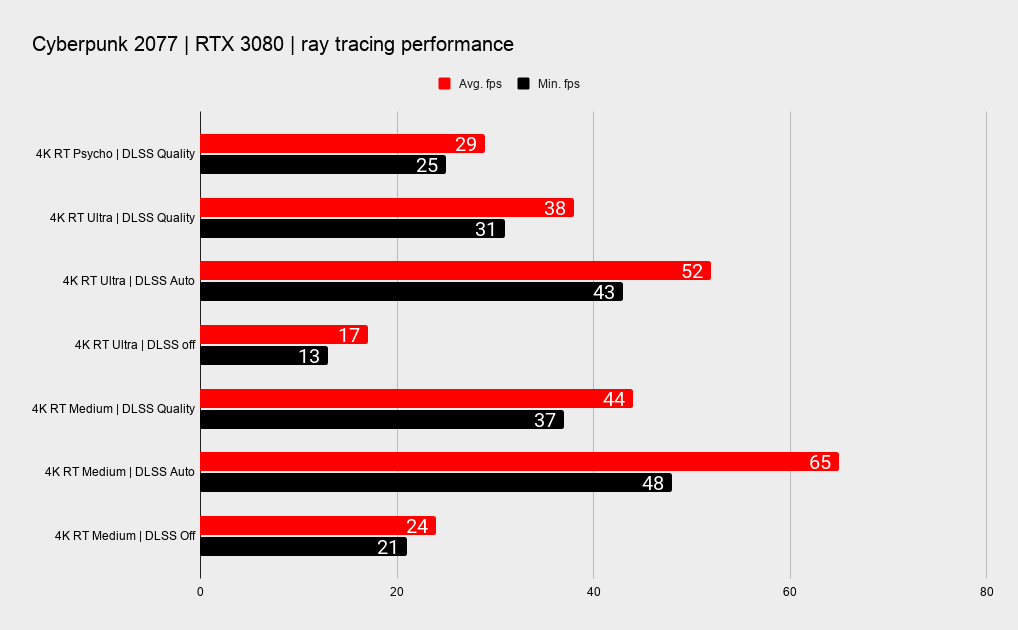

Nvidia RTX 3080: ray tracing performance

We couldn't leave without noting the ray tracing performance of the RTX 3080 in Cyberpunk 2077: It's intense. Stick ray tracing on its top setting at 4K and the flagship Ampere GPU will only deliver 17 frames per second without DLSS to give it a leg up. So yeah, again it's the AI to the rescue.

That's only brought into the light more by the fact that you get essentially the exact same 4K Ultra performance with ray tracing and DLSS enabled as you do with the RTX 3080 only taking on the rasterizing-only challenge of Cyberpunk 2077 without ray tracing or DLSS. That essentially means you're not really losing anything by enabling ray tracing… you'd just get more performance enabling DLSS on its own.

But then you'd be missing some of the loveliest ray traced lighting we've yet seen. But also some of the worst instances too. My V started her journey in the high-res environs of the Arasaka Corp. building, and that is absolutely not the stunning polished-floored, glass-walled nirvana of the Oldest House in Control. It's a frankly weird-looking world of oddly reflective metal, showing us hovering representations of NPCs, but nothing of ourselves.

Thankfully the wider world outside of the Night City Arasaka HQ is far better-looking, and not as over-done as I feared it might be, especially given its positioning as next-gen poster boi for ray tracing.

Even so, on the Quality DLSS preset the RTX 3080 will only give up 38fps on average at 4K Ultra settings. Give it the option to use the Auto setting instead and it edges closer to the magic 60fps mark, though still a way short of it.

Given how demanding Cyberpunk 2077 is of the RTX 3080 and its second-gen RT Cores and third-gen Tensor Cores, when AMD does decide to pull the switch on enabling ray tracing on its own GPUs, it's got some work to do to ensure vaguely playable frame rates from even the best of Big Navi.

It's going to be interesting to see how that shakes out... and we're starting to see why AMD has delayed getting Cyberpunk rays traced until after launch. It is just using Microsoft's DXR after all, so in theory AMD could flick a driver switch and you'd be running your RX 6800 XT with DXR support. Until the red team has something that can give it a bit of a performance leg up, ray tracing in Cyberpunk won't be coming near a Radeon GPU in PC or console.

Dave has been gaming since the days of Zaxxon and Lady Bug on the Colecovision, and code books for the Commodore Vic 20 (Death Race 2000!). He built his first gaming PC at the tender age of 16, and finally finished bug-fixing the Cyrix-based system around a year later. When he dropped it out of the window. He first started writing for Official PlayStation Magazine and Xbox World many decades ago, then moved onto PC Format full-time, then PC Gamer, TechRadar, and T3 among others. Now he's back, writing about the nightmarish graphics card market, CPUs with more cores than sense, gaming laptops hotter than the sun, and SSDs more capacious than a Cybertruck.