Stable Diffusion VR is a startling vision of the future of gaming

A glimpse into "Real-time immersive latent space."

A while ago I spotted someone working on real-time AI image generation in VR, and I had to bring it to your attention because, frankly, I cannot express how majestic it is to watch AI-modulated AR shift the world before us into glorious, emergent dreamscapes.

Applying AI to augmented or virtual reality isn't a novel concept, but there have been limitations in applying it, with computing power being one of the major barriers to practical use. Stable Diffusion, however, is an image generation model lightweight enough to run on consumer-level hardware, and it has been released under the CreativeML OpenRAIL-M licence. That means not only can developers use the tech to create and launch programs without renting huge amounts of server silicon, but they're also free to profit from their creations, within the use restrictions the licence sets out.

Scottie Fox, who posts as ScottieFoxTTV, is one creator who's been showing off his work with the algorithm in VR on Twitter. "I was awoken in the middle of the night to conceptualize this project," he says. As a creator myself, I understand that the Muses enjoy striking at ungodly hours.

What they brought him was Stable Diffusion VR, an amalgamation of Stable Diffusion and the TouchDesigner app-building engine, the results of which he refers to as "Real-time immersive latent space." That might sound like hippie nonsense to some, but latent space is a concept that is fascinating the world right now.

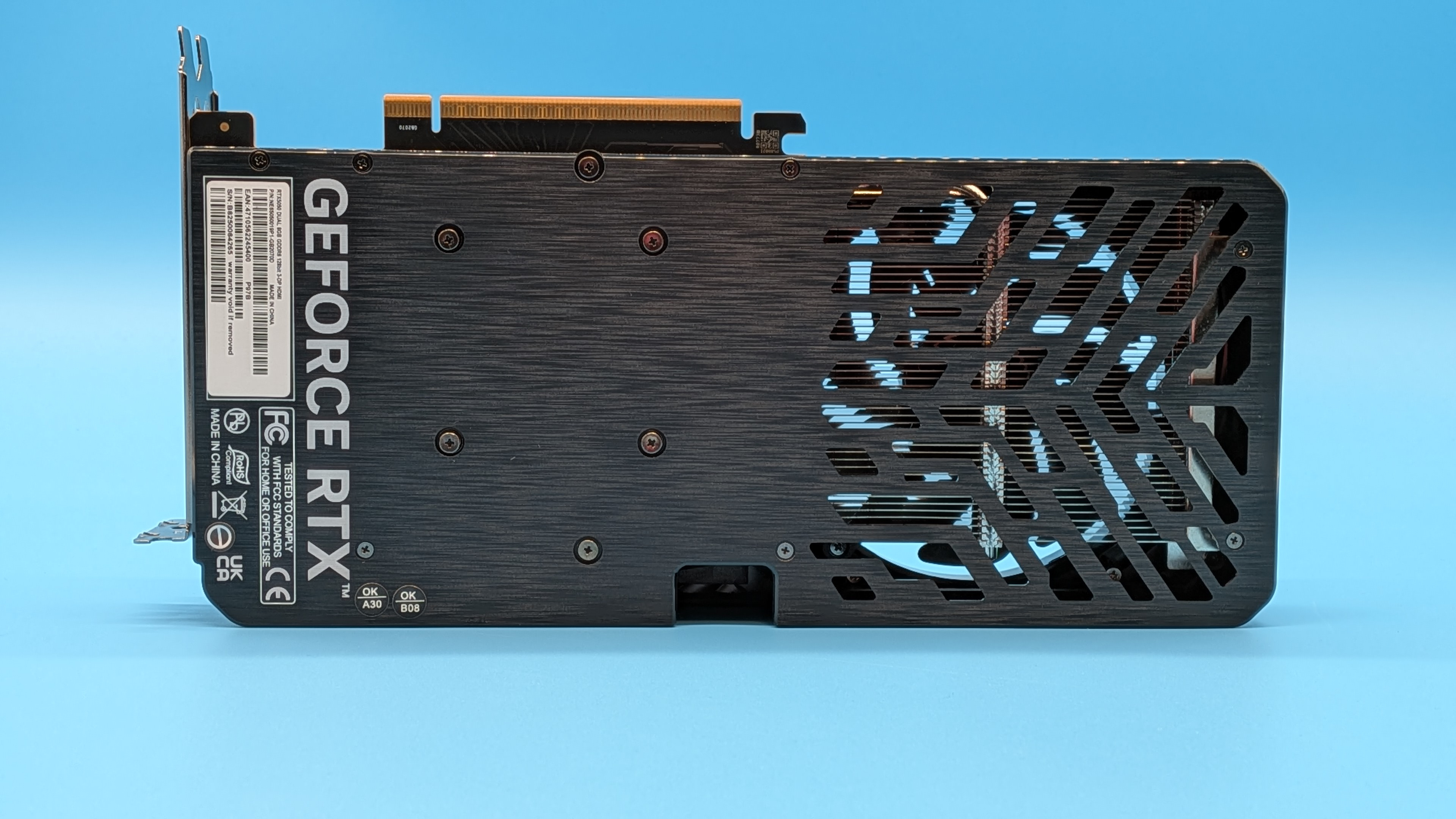

In machine learning, latent space is the compressed representation a model works in (Stable Diffusion generates its images there rather than pixel by pixel), but in this context the phrase describes the swelling potential that artificial intelligence brings to augmented reality as it pulls ideas together from the vastness of the unknown. It's an interesting concept, but one for a feature at a later date. Right now I'm interested in how Stable Diffusion VR manages to work so well in real time without turning a consumer GPU (even the recent RTX 4090) into a smouldering puddle.

> Stable Diffusion VR
>
> Real-time immersive latent space. 🔥
>
> Small clips are sent from the engine to be diffused. Once ready, they're queued back into the projection.
>
> Tools used: https://t.co/UrbdGfvdRd https://t.co/DnWVFZdppT
>
> #aiart #vr #stablediffusionart #touchdesigner #deforum pic.twitter.com/x3QwQDkapT
>
> October 11, 2022

"Diffusing small pieces into the environment saves on resources," Scottie explains. "Small clips are sent from the engine to be diffused. Once ready, they're queued back into the projection." The blue boxes in the images here show the parts of the scene the algorithm is working on at any one time. Processing a few small sections at once, rather than regenerating the whole view, is what makes it efficient enough to run in real time.
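Scottie hasn't published his pipeline here, so the following is only a minimal Python sketch of the general idea, assuming a frame can be cut into square tiles and that a hypothetical `fake_diffuse` function (not Stable Diffusion's actual API) stands in for the real diffusion step. Tiles are submitted to a worker pool, and whichever finish first are pasted back into the projection, so the view updates piece by piece instead of waiting on a whole frame.

```python
from concurrent.futures import ThreadPoolExecutor, as_completed

Frame = list[list[int]]  # a toy greyscale frame: rows of 8-bit pixel values


def split_into_tiles(frame: Frame, size: int) -> list[tuple[int, int, Frame]]:
    """Cut the frame into size x size tiles, remembering each tile's top-left corner."""
    tiles = []
    for top in range(0, len(frame), size):
        for left in range(0, len(frame[0]), size):
            tile = [row[left:left + size] for row in frame[top:top + size]]
            tiles.append((top, left, tile))
    return tiles


def fake_diffuse(tile: Frame) -> Frame:
    """Hypothetical stand-in for the diffusion step: it just inverts pixel values."""
    return [[255 - px for px in row] for row in tile]


def paste_tile(frame: Frame, top: int, left: int, tile: Frame) -> None:
    """Write a finished tile back into the projection at its original position."""
    for dy, row in enumerate(tile):
        frame[top + dy][left:left + len(row)] = row


def run_pipeline(frame: Frame, size: int = 2, workers: int = 4) -> Frame:
    """Send small tiles off to be processed and queue each result back as it finishes."""
    projection = [row[:] for row in frame]  # what the viewer sees, updated tile by tile
    with ThreadPoolExecutor(max_workers=workers) as pool:
        futures = {
            pool.submit(fake_diffuse, tile): (top, left)
            for top, left, tile in split_into_tiles(frame, size)
        }
        # Tiles are pasted in the order they complete, not the order they were sent.
        for future in as_completed(futures):
            top, left = futures[future]
            paste_tile(projection, top, left, future.result())
    return projection


if __name__ == "__main__":
    frame = [[10 * r + c for c in range(4)] for r in range(4)]
    for row in run_pipeline(frame):
        print(row)
```

Because each tile is composited as soon as it returns, a slow tile only delays its own patch of the scene. A real system would likely also need to blend the seams between tiles and keep results in step with the viewer's head movement, both of which this sketch ignores.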

Anyone who's used an online image generation tool will know that a single image can take up to a minute to create. Even if each individual section takes a little while, the results still feel immediate, because you're not sitting and waiting for a single image to finish diffusing. And although the results aren't yet as photorealistic as they may one day be, the videos Scottie has been posting are utterly breathtaking.


Flying fish in the living room, ever-shifting interior design ideas, lush forests and nightscapes evolving before your eyes. With AI able to make projections onto our physical world in real time, there is so much potential for use in the gaming space.

Midjourney CEO David Holz describes the potential for games to one day be "dreams", and it certainly feels like we're moving rapidly in that direction. The important next step, though, is navigating the minefield of copyright and data protection issues arising from the datasets that algorithms like Stable Diffusion were trained on.

Having been obsessed with game mechanics, computers and graphics for three decades, Katie took Game Art and Design up to Masters level at uni and has been writing about digital games, tabletop games and gaming technology for over five years since. She can be found facilitating board game design workshops and optimising everything in her path.
