New research shows bots beat people at convincing computers they're not bots

Confusing. But not surprising.

The PC Gamer massive just collectively died of not-surprise. It turns out bots are both faster and more accurate at completing CAPTCHA tests widely used by websites to filter out the machines from human users.

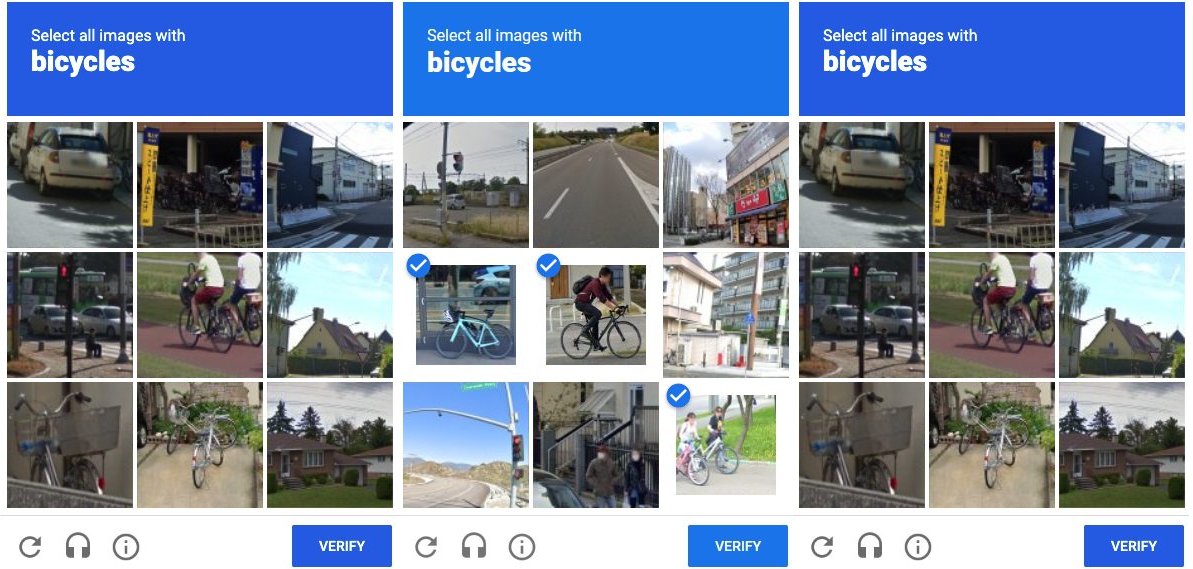

Yup, bots know better than you what a low-res bicycle looks like leaning up against a rusty fence.

The upsides to human verification tests, including the various CAPTCHA implementations (that's Completely Automated Public Turing test to tell Computers and Humans Apart, if you were wondering), are obvious enough. They prevent the bot hordes from scraping content, posting fake comments and dodgy links, setting up fraudulent accounts and all that bad stuff.

Obviously there's a trade-off in the inconvenience of repeatedly pecking away at often confusing images of buses, bicycles, fire hydrants and crosswalks, or deciphering and then transcribing squiggly text. But it's worth it to keep our machine overlords at bay.

Of course, it's only worth it if it works. Which, apparently, it doesn't. So says new research conducted by Gene Tsudik and colleagues at the University of California, Irvine.

According to the New Scientist, Tsudik and co. surveyed the world's 200 most popular websites and found 120 of them using CAPTCHA tests. Next, they recruited 1,000 human candidates of varied age, sex, location and education to take 10 CAPTCHA tests each.

When the researchers compared the performance of those humans with that of various bots coded by researchers and published in journals, it was the bots that scored higher.

Humans in the UC Irvine tests were between 50 and 84 percent accurate in the distorted text test, taking between nine and 15 seconds to complete the task. The bots? They were 99.8 percent accurate and did the job in less than a second.

With the latest large machine learning models capable of gleaning thoroughly abstract information, such as humour and emotion, from images, it's hardly a surprise to find the bots can now read slightly squiggly text or spot a bicycle.

And once a computer can do something, it's pretty much inevitable that it will do it faster than any human. So, the question that immediately follows is whether any of these CAPTCHA tests should continue to be used.

It probably depends on the extent to which bad actors have access to sufficiently advanced AI tools to make the tests useless. If it isn't already the case that all the bad guys have access to such tools, it surely won't be long.

So, the next conundrum is what should replace existing CAPTCHA tests. Shujun Li at the University of Kent in the UK told the New Scientist, "new approaches are needed, like more dynamic approaches using behavioural analysis."

Meanwhile, Andrew Searles, a team member at UC Irvine, thinks the answer may be a shift to running algorithms in the background to identify and weed out bot interactions on websites, rather than relying on actively testing users.

For sure, using formal tests gives the bots a clearly defined target for their learning. Presumably, they'll have a harder time adjusting to algorithms the scope and parameters of which would be entirely invisible.
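For a rough flavour of what background behavioural analysis could mean, here's a toy sketch in Python. To be clear, this is our own illustration and not anything described by the UC Irvine team or Shujun Li. The function names, the thresholds and the whole idea of judging a visitor purely by the timing of their clicks or keypresses are assumptions made for the example. Real systems would presumably draw on far more signals.

```python
from statistics import mean, pstdev


def interval_stats(timestamps_ms):
    """Return (mean, standard deviation) of the gaps between consecutive events."""
    gaps = [later - earlier for earlier, later in zip(timestamps_ms, timestamps_ms[1:])]
    return mean(gaps), pstdev(gaps)


def looks_automated(timestamps_ms, min_mean_gap_ms=50.0, min_jitter_ms=5.0):
    """Flag an interaction whose events are implausibly fast or implausibly regular.

    Both thresholds are hypothetical values chosen for illustration only.
    """
    if len(timestamps_ms) < 3:
        return False  # too few events to judge either way
    avg_gap, jitter = interval_stats(timestamps_ms)
    return avg_gap < min_mean_gap_ms or jitter < min_jitter_ms


# A script firing an event every 10 ms, versus a person with uneven pauses.
scripted = [0, 10, 20, 30, 40]
person = [0, 180, 420, 530, 900]
print(looks_automated(scripted), looks_automated(person))
```

The user never sees a test here, so there's no fixed puzzle to train against. The obvious catch is that a bot can add random delays, which is why a single heuristic like this would never be enough on its own.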

In the very long run, it seems pretty unlikely that any measure could weed out a truly advanced AI pretending to be human. But so long as the bots are even bothering to fool us, well, that implies the machines haven't entirely won.

Jeremy has been writing about technology and PCs since the 90nm Netburst era (Google it!) and enjoys nothing more than a serious dissertation on the finer points of monitor input lag and overshoot followed by a forensic examination of advanced lithography. Or maybe he just likes machines that go “ping!” He also has a thing for tennis and cars.
