Intel has launched its first wee discrete Arc GPUs, but desktop graphics cards are still a way off

From small acorns and all that...

Intel has taken the best bits of AMD and the best of Nvidia, and is preparing to make a splash in the discrete graphics card market. And I'm not just talking about the number of engineers, designers, and marketeers Intel has poached from the big two in the GPU game, either. From the design of the new Alchemist architecture itself, to the software, to the upscaling solution, it's clear Intel is learning from the best.

That's not to say Intel isn't bringing its own style to the party as it launches its mobile-first Arc GPUs, but when you're trying to muscle your way into a market that's been dominated by only two players for decades, you need to pay attention to what works and not reinvent the wheel.

Before you get too excited about a third player in the GPU market, we're not yet talking about Intel's discrete desktop graphics cards. Intel has just announced its Arc 3 GPUs, which are going into thin and light laptops from early April. Following that, in the early summer, will be Arc 5 and Arc 7 GPUs: the discrete silicon going in to power all-Intel gaming laptops.

There's naught but a tease about the desktop versions, however, and so the wait goes on… But it's worth stating that the core design is going to be identical from the lowliest laptop GPU, to the beefiest desktop chip.

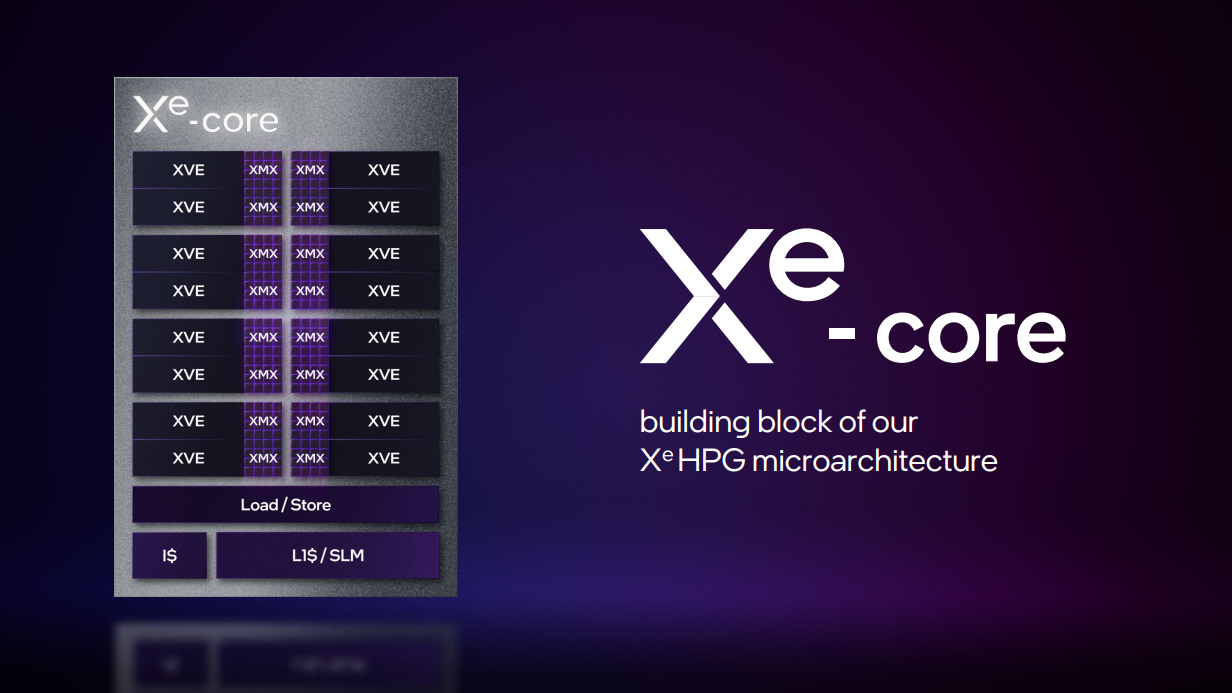

So, up first we have Arc 3 in A350M and A370M guises, with six and eight Xe-cores (think SM in Nvidia parlance) respectively. Then we'll get the bigger bois, the Arc 5 A550M with 16 Xe-cores, and then a pair of higher-end Arc 7 GPUs, the A730M and A770M, with 24 and 32 Xe-cores.

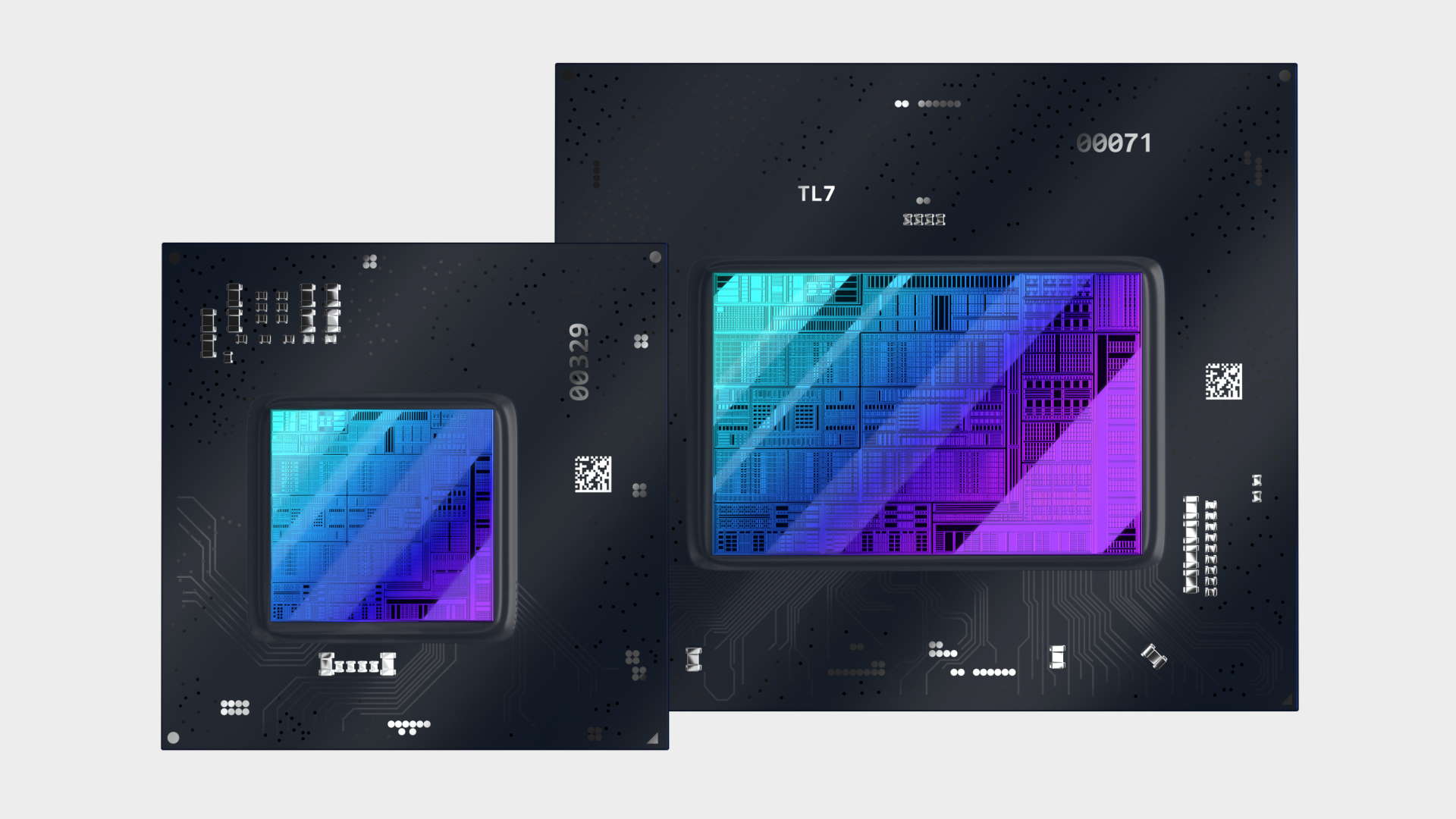

Intel is building these graphics cards from a pair of A-Series SoCs for mobile, the ACM-G11 with up to 8 Xe-cores for the Arc 3, and the ACM-G10 with up to 32 Xe-cores for the Arc 5 and 7 cards.

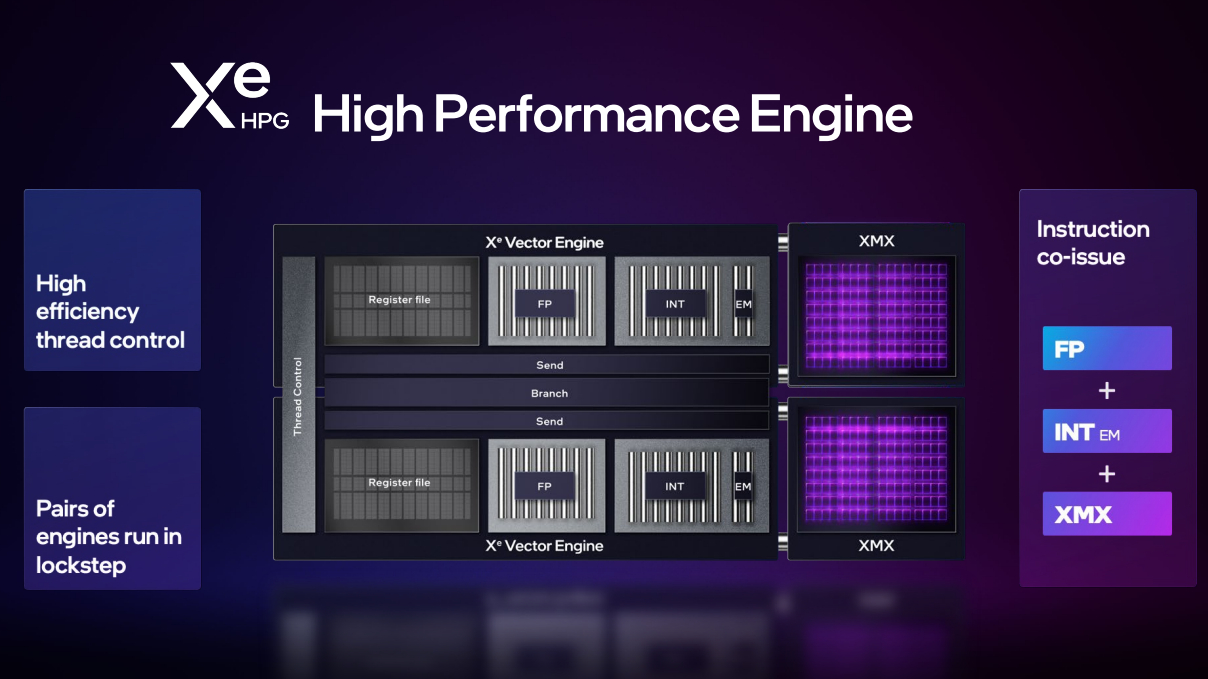

We've spoken before about these designs, from when they were first leaked many moons ago, in terms of their Execution Unit (EU) counts of 96, 128, 256, 384, and 512 EUs. The EU was the building block of Intel's older graphics chips, but the units are now known as Xe vector engines (XVE), and look a lot like AMD's RDNA-based dual-workgroup design.
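If you want to see how those leaked EU numbers line up with the new Xe-core counts, here's a quick back-of-the-envelope sketch. The 16 vector engines per Xe-core figure is an assumption inferred from the two sets of numbers in this article (96 divided by six, 128 divided by eight, and so on), and the names `XE_CORES`, `XVE_PER_XE_CORE`, and `vector_engines` are made up purely for illustration.

```python
# Xe-core counts for each mobile part, as listed in this article.
XE_CORES = {
    "A350M": 6,
    "A370M": 8,
    "A550M": 16,
    "A730M": 24,
    "A770M": 32,
}

# Assumption: 16 Xe vector engines (the old EUs) per Xe-core,
# inferred from the leaked EU counts rather than quoted from Intel.
XVE_PER_XE_CORE = 16


def vector_engines(xe_cores: int) -> int:
    """Return the vector engine (EU) count for a given number of Xe-cores."""
    return xe_cores * XVE_PER_XE_CORE


eu_counts = {name: vector_engines(cores) for name, cores in XE_CORES.items()}

for name, eus in eu_counts.items():
    print(f"{name}: {XE_CORES[name]} Xe-cores -> {eus} vector engines")
```

Under that assumption, the five mobile parts map neatly onto the five leaked EU counts, from the A350M at the bottom to the A770M at the top.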


But attached to each of the XVEs is an XMX matrix engine that looks a whole lot like a mini Nvidia Tensor Core, and each Xe-core comes with a dedicated ray tracing unit. It's notable that even the lowest spec GPU will come with ray tracing hardware, and it's going to be interesting, given the traditional demands of RT features, to see just how those units are utilised.

The fact those top two mobile graphics cards come equipped with 12GB and 16GB of GDDR6 memory, attached to 192-bit and 256-bit memory buses, also shows how serious Intel is about making high-end GPUs for mobile devices.
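Those capacities and bus widths fit together in a tidy way, which the rough sketch below illustrates. It leans on two things not stated in this article: that each GDDR6 chip has a 32-bit interface, and that Intel is using 2GB (16Gb) chips. The second of those is an assumption, not a confirmed spec, and the constant and function names are hypothetical.

```python
# Each GDDR6 memory chip connects over a 32-bit interface.
GDDR6_CHIP_BUS_BITS = 32

# Assumption: 2GB (16Gb) chips, which is not confirmed for these GPUs.
ASSUMED_CHIP_CAPACITY_GB = 2


def memory_capacity_gb(bus_width_bits: int) -> int:
    """Estimate total VRAM from bus width, with one chip per 32-bit slice."""
    chips = bus_width_bits // GDDR6_CHIP_BUS_BITS
    return chips * ASSUMED_CHIP_CAPACITY_GB


for bus in (192, 256):
    print(f"{bus}-bit bus -> {memory_capacity_gb(bus)}GB")
```

With those assumptions, a 192-bit bus works out to six chips and 12GB, and a 256-bit bus to eight chips and 16GB, matching the figures for the top two mobile parts.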

Looking forward to the desktop versions, you can expect a certain amount of parity between the designs of these mobile chips and their non-mobile siblings. I would expect the core counts to align (though we likely won't see a six Xe-core desktop chip), but they'll come with higher clock speeds and more extreme power demands as a consequence.

Again, showing how Intel has learned from the other two, the Xe Super Sampling (XeSS) feature utilises the XMX AI engine of the Arc design to power the temporal upscaler, much in the same way as Nvidia's DLSS uses the Tensor Cores of Ampere.

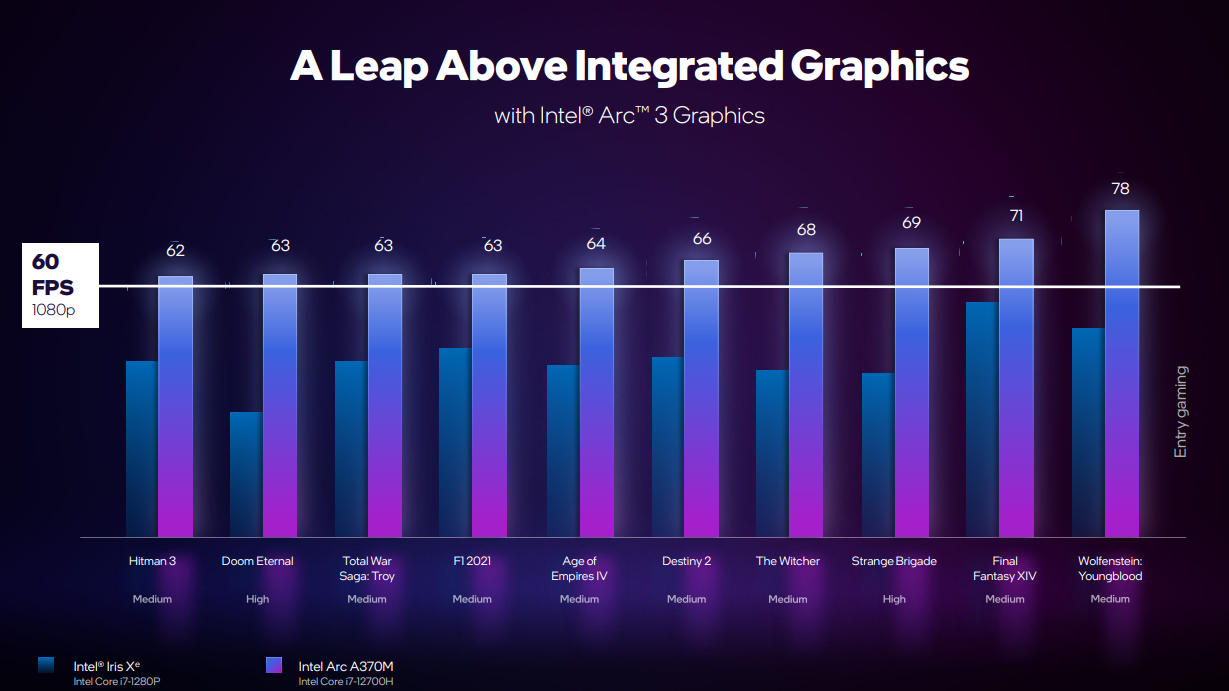

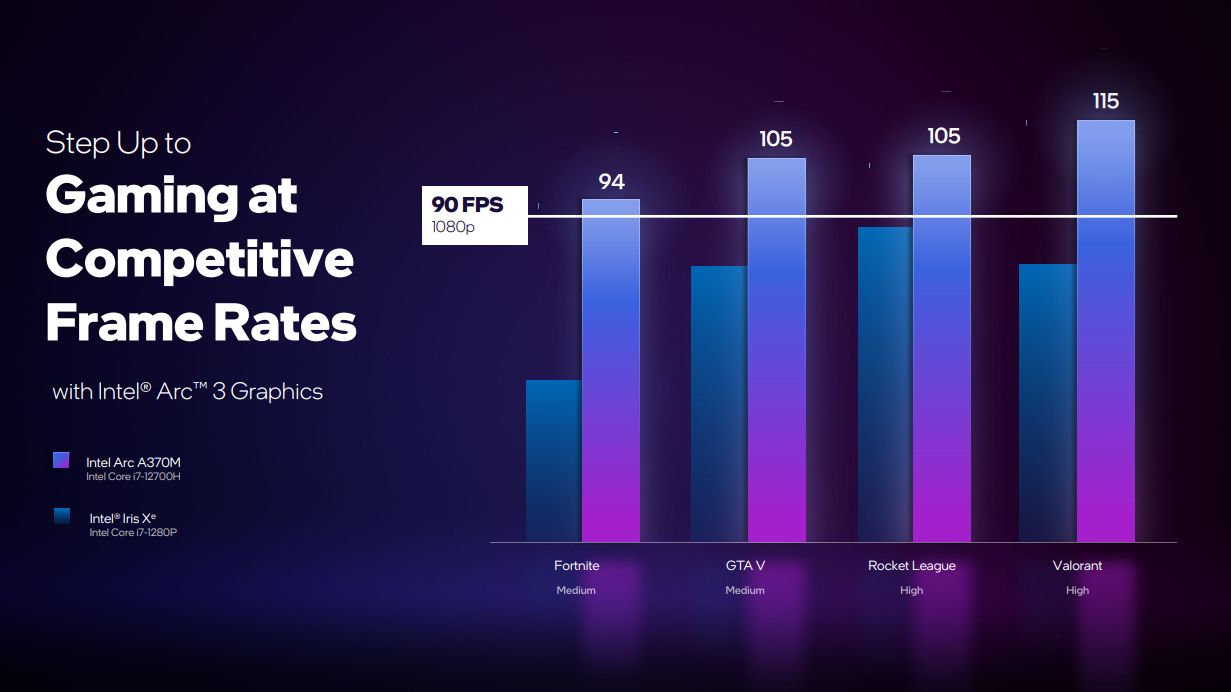

In terms of actual performance, we're still a little in the dark on the higher-end GPUs, but Intel has at least shared the level of mainstream gaming frame rates you can expect from the Arc A370M GPU. Basically, you can expect 1080p gaming from your new ultra-mobile laptop at a steady 60 fps. How that compares with AMD's latest integrated graphics we'll have to wait and see until we get comparable notebooks in for testing. But from the looks of things the eight Xe-core chip can outperform AMD's Radeon 680M.

Which ought to also place it above the Nvidia MX 450 at the lower end of Nvidia's GPU stack.

It's an exciting time, to finally be on the verge of a third entrant into the previously two-horse GPU race. And, while the low-end mobile graphics chips aren't necessarily the ones we really want to get our techie mitts on, they may lay a firm foundation for Intel to build from. And I'm looking forward to testing them when I finally get hands on next month.

Dave has been gaming since the days of Zaxxon and Lady Bug on the Colecovision, and code books for the Commodore Vic 20 (Death Race 2000!). He built his first gaming PC at the tender age of 16, and finally finished bug-fixing the Cyrix-based system around a year later. When he dropped it out of the window. He first started writing for Official PlayStation Magazine and Xbox World many decades ago, then moved onto PC Format full-time, then PC Gamer, TechRadar, and T3 among others. Now he's back, writing about the nightmarish graphics card market, CPUs with more cores than sense, gaming laptops hotter than the sun, and SSDs more capacious than a Cybertruck.
