Apple's new M1 Ultra chip isn't a PC killer, it's just a sign of great things to come

Credit where credit's due—Apple's M1 Ultra chip is a modern monster.


Nvidia, AMD, and Intel have all been in something of a race to multi-die GPUs. The theory is if you take one powerful chip and glue it seamlessly to another, you'll end up with something twice as good. Simple, right? Well, it's not quite that easy, and while AMD has managed to make this concept work for its high-end supercomputer compute MI200 accelerator, no one else has had anything more to share as of yet.

Well, until Apple just rolled in with its new M1 Ultra System-on-Chip (SoC).

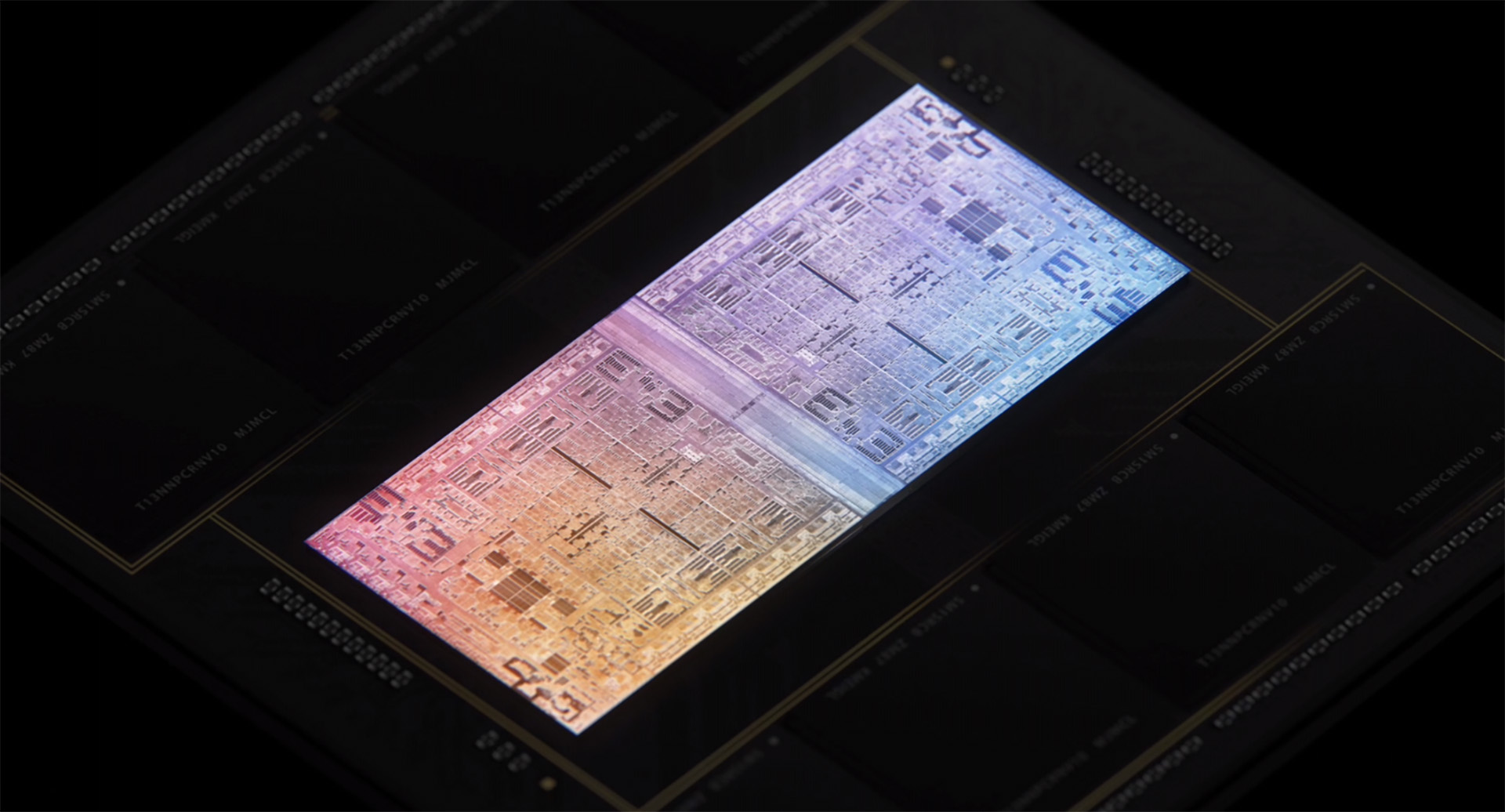

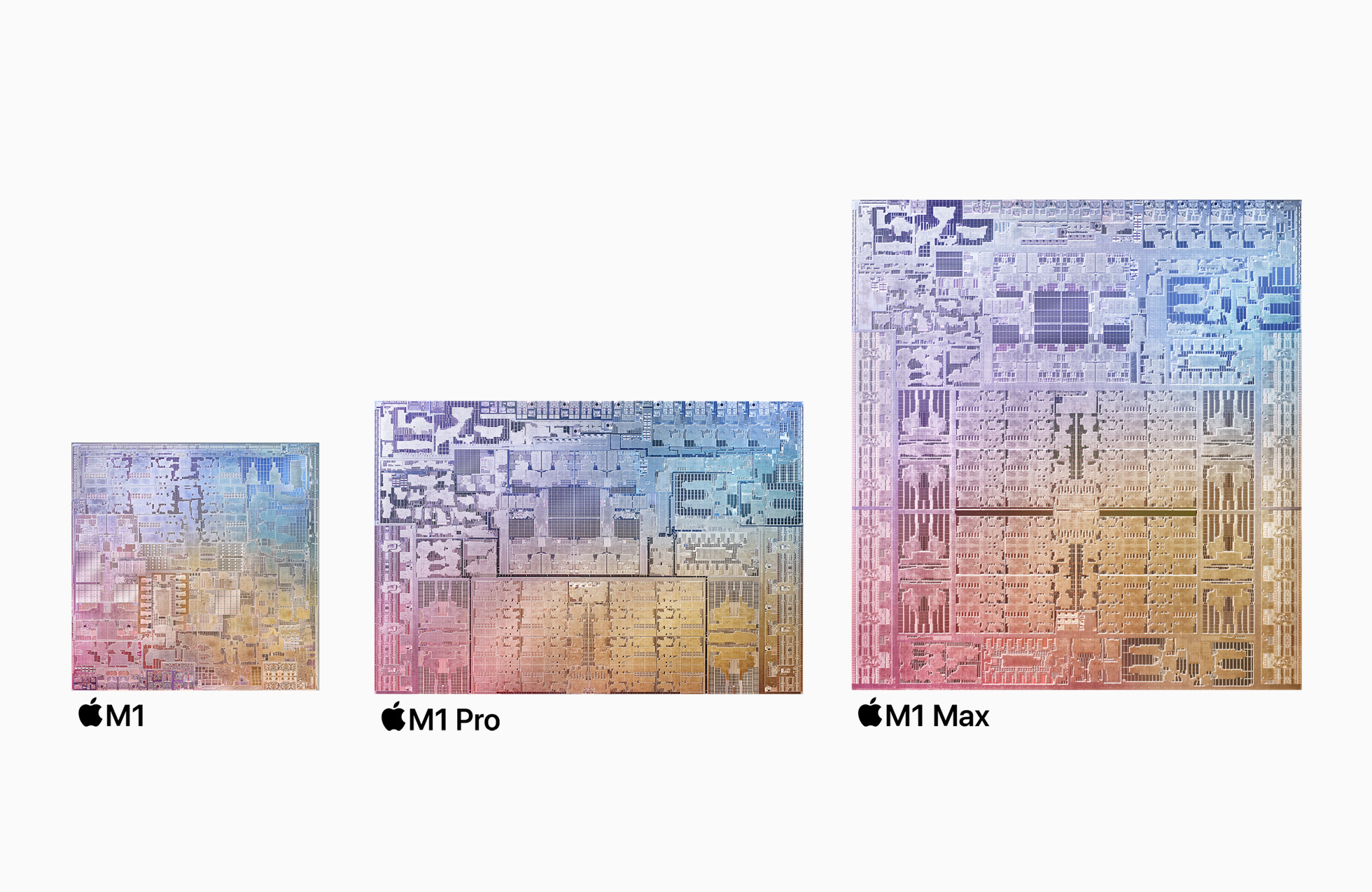

Combining two M1 Max SoCs, which launched late last year, the new Apple M1 Ultra brings their CPU and GPU cores together in a single package. That makes for a 20-core Arm-based CPU, a 64-core GPU, and a 32-core Neural Engine under one roof, with 114 billion transistors in total. It can then be configured with up to 128GB of unified memory.

Just for a point of reference, the Nvidia GeForce RTX 3090 features 28.3 billion transistors in total. Granted, the Apple M1 Ultra is CPU, GPU, and I/O all in one package, and then doubled through an interconnect, but effectively Apple has thrown a whole lot of transistors at the compute problem to make it go away.

The key to the Ultra chip is what Apple calls "UltraFusion", its new packaging architecture. It's effectively a bond of 10,000 signals along the edge of each chip, put in place during the packaging process. This allows for high-speed communication between the two connected chips at up to 2.5TB/s, which is a big number by any measure.
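To put that bandwidth figure in perspective, here's a rough back-of-the-envelope sketch in Python that divides the quoted 2.5TB/s across the quoted 10,000 signals. It assumes decimal units (1TB = 10^12 bytes) and that the bandwidth is spread evenly over every signal, neither of which Apple has confirmed, and the function name is just for illustration.

```python
# Figures quoted in the article for Apple's UltraFusion interconnect.
TOTAL_BANDWIDTH_TB_PER_S = 2.5   # die-to-die bandwidth, in terabytes per second
SIGNAL_COUNT = 10_000            # signals along the edge of each die


def average_gbit_per_signal(total_tb_per_s: float, signals: int) -> float:
    """Average per-signal data rate in Gbit/s.

    Assumptions (not confirmed by Apple): decimal units, and the total
    bandwidth shared evenly across all signals.
    """
    total_bits_per_s = total_tb_per_s * 1e12 * 8  # bytes/s -> bits/s
    return total_bits_per_s / signals / 1e9       # bits/s per signal -> Gbit/s


per_signal = average_gbit_per_signal(TOTAL_BANDWIDTH_TB_PER_S, SIGNAL_COUNT)
print(f"Roughly {per_signal:.1f} Gbit/s per signal on average")
```

Under those assumptions, the headline number comes from a very wide link running at a modest average per-signal rate, rather than a handful of extremely fast lanes.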

The interconnect itself is not an entirely new concept, and Intel and AMD have their own high-bandwidth interconnects to match, but Apple's version definitely sees it throwing everything it can to keep abreast of the latest from the other major players in chip building.


"M1 Ultra is another game-changer for Apple silicon that once again will shock the PC industry. By connecting two M1 Max die with our UltraFusion packaging architecture, we're able to scale Apple silicon to unprecedented new heights," said Johny Srouji, Apple's senior vice president of Hardware Technologies. "With its powerful CPU, massive GPU, incredible Neural Engine, ProRes hardware acceleration, and huge amount of unified memory, M1 Ultra completes the M1 family as the world's most powerful and capable chip for a personal computer."


Now Apple's M1 Ultra chip isn't a game-changer in the sense of changing games, at all, really. You can run games on an Apple machine, of course, but that's not what this GPU is in any way built to run.

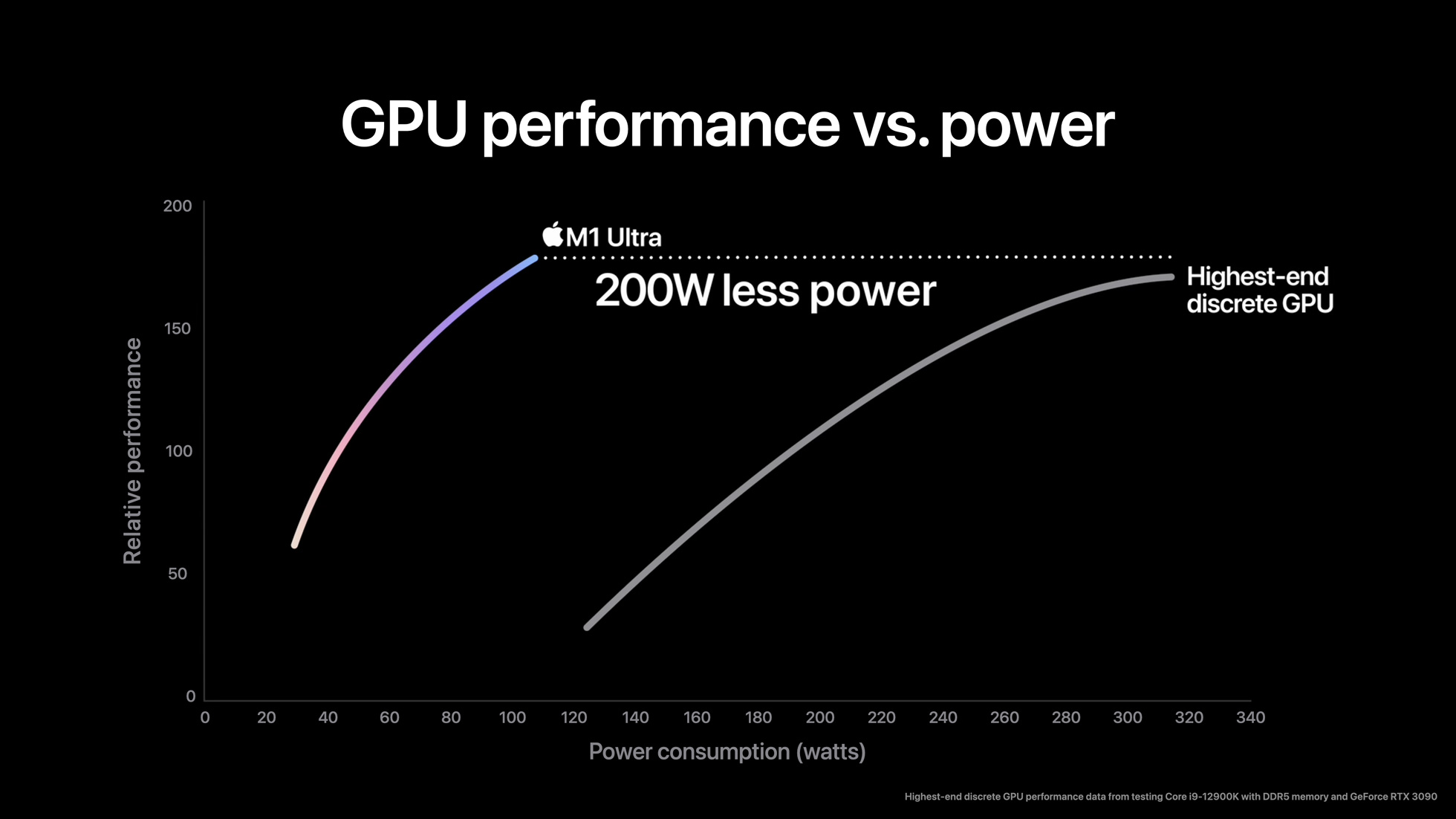

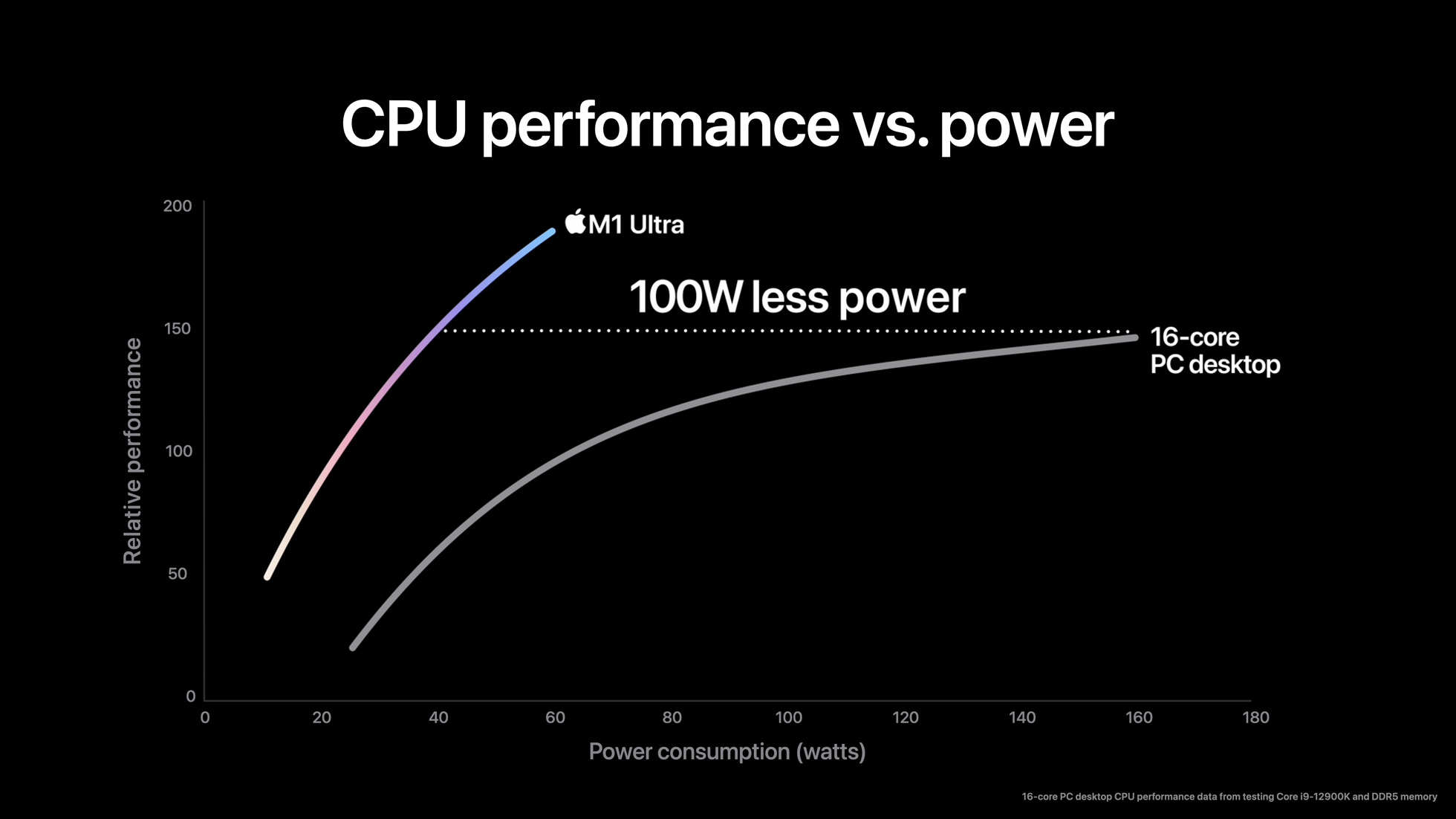

The company is also once again being incredibly cagey about the exact benchmarks it's used in order to show its relative performance/Watt here—all we know is that it used "select industry‑standard benchmarks" and that its "popular discrete GPU performance data tested from Core i9-12900K with DDR5 memory and GeForce RTX 3060 Ti. Highest-end discrete GPU performance data tested from Core i9-12900K with DDR5 memory and GeForce RTX 3090."

Still, Apple claims that this chip is able to surpass Nvidia's GeForce RTX 3090—technically Nvidia's top card as the RTX 3090 Ti is currently a no-show—under certain conditions and with far less energy consumption.

Now that's one heck of a claim, but as we saw with the M1 Max, which was roughly purported to be as good as Nvidia's GeForce RTX 3080, the reality of it is that there are caveats to everything. That's especially true if you're looking on as a PC gamer with expectations of gaming performance. While Apple's chip will be damn good at a lot, gaming is really not what it's designed for. Whereas Nvidia's Ampere architecture more or less is.

Even by a more generalist gauge of performance, TFLOPs, the M1 Ultra is still well short of the RTX 3090's 35.58 TFLOPs FP32. The M1 Max was rated at roughly 10.4 TFLOPs, and if you were to exactly double that (as the M1 Ultra's two connected M1 Max dies would suggest), you'd hit 20.8 TFLOPs. That's quite a bit lower, even if you account for TFLOPs not being a direct measure of actual performance.
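That doubling is simple enough to sketch in a few lines of Python. This is only an estimate using the figures quoted above: it assumes perfect two-die scaling, and the `scaling_efficiency` parameter is a hypothetical knob added here for illustration, not anything Apple has published.

```python
# Peak FP32 throughput figures quoted in the article, in TFLOPs.
M1_MAX_TFLOPS = 10.4
RTX_3090_TFLOPS = 35.58
DIE_COUNT = 2  # the M1 Ultra joins two M1 Max dies


def scaled_tflops(per_die: float, dies: int, scaling_efficiency: float = 1.0) -> float:
    """Estimate multi-die peak throughput.

    scaling_efficiency is a hypothetical factor (1.0 = perfect scaling).
    """
    return per_die * dies * scaling_efficiency


m1_ultra_tflops = scaled_tflops(M1_MAX_TFLOPS, DIE_COUNT)
ratio = m1_ultra_tflops / RTX_3090_TFLOPS

print(f"Estimated M1 Ultra: {m1_ultra_tflops:.1f} TFLOPs")
print(f"That is {ratio:.0%} of the RTX 3090's peak FP32 figure")
```

Even with perfect scaling, the estimate lands at a bit under 60 percent of the RTX 3090's peak figure, and any real-world scaling loss would only widen that gap.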

That power efficiency is very impressive though. Apple is once again rolling out TSMC's 5nm process here, which is another feather in the company's cap and undoubtedly propelling it into new territory in energy efficiency. Intel, AMD, and Nvidia have all yet to use a comparable process node at scale.

| Specification | M1 | M1 Pro | M1 Max | M1 Ultra |
|---|---|---|---|---|
| Transistors | 16B | 33.7B | 57B | 114B |
| Process node | 5nm | 5nm | 5nm | 5nm |
| CPU cores (high-performance + high-efficiency) | 4+4 | Up to 8+2 | 8+2 | 16+4 |
| GPU cores | Up to 8 | Up to 16 | Up to 32 | Up to 64 |
| GPU ALUs | 1,024 | 2,048 | 4,096 | 8,192 |
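As a quick sanity check on the table's figures, the short Python sketch below works out the ALUs per GPU core for each chip and compares the M1 Ultra against the M1 Max. It uses the "up to" maximum core counts and assumes the ALU figures correspond to those fully enabled configurations.

```python
# Figures copied from the table: (GPU cores, GPU ALUs, transistors in billions).
# GPU core counts are the "up to" maximums for each chip.
CHIPS = {
    "M1": (8, 1024, 16.0),
    "M1 Pro": (16, 2048, 33.7),
    "M1 Max": (32, 4096, 57.0),
    "M1 Ultra": (64, 8192, 114.0),
}

# ALUs per GPU core for every chip in the family.
alus_per_core = {name: alus // cores for name, (cores, alus, _) in CHIPS.items()}

# Ratio of each M1 Ultra figure to the matching M1 Max figure.
ultra_vs_max = tuple(u / m for u, m in zip(CHIPS["M1 Ultra"], CHIPS["M1 Max"]))

for name, per_core in alus_per_core.items():
    print(f"{name}: {per_core} ALUs per GPU core")
print("M1 Ultra / M1 Max (cores, ALUs, transistors):", ultra_vs_max)
```

Every chip works out to the same number of ALUs per GPU core, and every M1 Ultra figure is exactly twice the M1 Max's, which is what you'd expect from two M1 Max dies bonded together.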

And if Apple can get its dual-GPU SoC to be seen by a system as one singular chip, that's mighty impressive too. That's the real difficulty in creating a multi-die GPU: it's been exceptionally difficult to make these discrete chips appear as one to a system and not require any bespoke programming. At least for anything that isn't just doing raw compute tasks.

We don't want just another SLI/CrossFire situation here—where game developers or Nvidia/AMD are largely responsible for getting multiple GPUs working in tandem—multi-die GPUs need to be seen as one and work as one for all intents and purposes.

As for CPU performance, Intel and Apple have the company equivalent of a blood feud now, so you can imagine there's no love lost on either side. Apple has focused on comparing against the Intel Core i9 12900K with its unspecified benchmark results here (which are about as useful as a lead bouncy castle), but it claims nearly double the performance at 60 watts. It's certainly likely that the M1 Ultra's 16 high-performance cores and four power-efficient cores are capable of showing Intel what for in some benchmarks, though further digging is required to really see how these two chips shake out performance-wise.

The M1 Ultra is a chip that no doubt looks great on paper, and will likely look great with those workloads that Apple has designed it for—those in the workstation creative space. We'll have to see how it fares in real-world benchmarks (where the benchmarks and test conditions are actually specified), however.

That said, I think you can look at what Apple's managed to do with a multi-die SoC of its own design as a very promising sign of what's to come for PC gaming. Intel is working on tiled SoC designs that combine interconnected chiplets of Arc graphics and next-gen CPU architectures, starting with Meteor Lake in 2023. AMD, meanwhile, apparently has stacked-cache CPUs and multi-die GPUs just around the corner. Nvidia, too, is said to be gearing up for a major uplift in transistor count (and power) with its Lovelace and Hopper architectures.

We're on the precipice of a very exciting time in GPU development, and Apple's M1 Ultra is a glimpse of what's to come from heaps of companies now all fighting for performance dominance with intricate designs and cutting-edge process nodes.

And it'd be remiss of me not to discuss price in relation to Apple's M1 Ultra chip. Apple's chip comes in the Mac Studio, Apple's new fancy desktop box. With the full-fat M1 Ultra inside and 128GB of memory, you're looking at a $5,799 package. That's with just a 1TB SSD, too. It's $7,999 for an 8TB model. You can shave that price down to $3,999 if you ditch the top-tier M1 Ultra for a 48-core GPU model and go for only 64GB memory.

So consider the M1 Ultra as high-end a processor as they come. Apple also recently added height adjustment to its monitor stand and slapped another $400 onto its price tag for the privilege. Some things never change.

Jacob has been writing about PC hardware and technology for over eight years. He earned his first byline at PCGamesN before joining PC Gamer. He spends most of his time building PCs, running benchmarks, and trying his best to learn Linux.
