3D audio is back, and VR needs it

Real immersion

If there's been one area of gaming that's been overlooked for years, it's audio. We've been hard at work trying to bring greater awareness to audio recently, and it's more important now than ever. End users are now getting their hands on the Rift and Vive VR headsets, and it's only a matter of time before all the new games start hitting the virtual shelves. But nearly all the development effort in recent years has gone into visuals. It's time for audio to take a front-row seat.

Put on a pair of headphones for the video above (and below).

Back in the late '90s, a company called Aureal 3D stunned gamers with its immersive A3D technology. Aureal was able to get puny desktop speakers to produce real 3D positional audio without you having to set up three or more extra speakers. With A3D, you were able to identify sounds coming from behind you, above you, below you, and by your sides. It was cool. Diamond Multimedia was the first to launch an A3D card, called the Monster Sound 3D. At the time, gamers everywhere questioned Creative Labs' lifespan. Soon, Aureal died. (Creative Labs is still alive and kicking today.)

How did Aureal achieve 3D positional audio? First, we need to talk about how the ears and brain work in determining sound location.

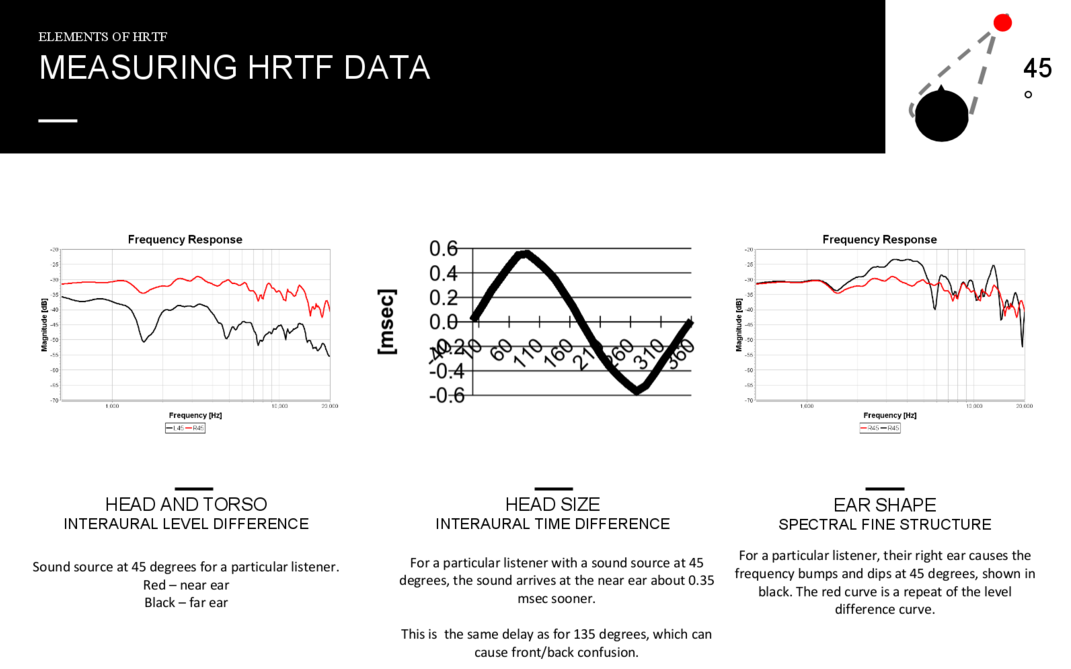

Our brains are masterful at identifying the location of sounds, but only with transients, or higher frequency sounds. Lower frequency sounds are a lot more difficult to locate. The brain uses three cues to identify a sound's location: the filtering caused by the shape of the ear, the difference in arrival time between the two ears, and the difference in sound pressure level between the two ears.

Your ears act as a sound filter, causing sounds to be modified differently depending on whether they originate from behind or in front of the ear. If they're coming from behind you, the sound waves will be filtered through your ear walls before entering your ear canal. The same happens for other directions.

The distance between your ears plays a pivotal role in sound localization too. Depending on the origin of the sound, the waves will arrive at each ear at slightly different times. Your head size also affects the amount of delay between the ears, and your head diffuses the sound as it passes. Sound that arrives later at one side of your head will also have a different pressure level. All of the above factors allow the brain to pinpoint direction with incredible accuracy.
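
To put rough numbers on that arrival-time difference, here is a minimal Python sketch. It is not anything Aureal shipped; it assumes the head is a rigid sphere and uses Woodworth's classic approximation, with an assumed average head radius of 8.75 cm and a speed of sound of 343 m/s. The function and constant names are made up for illustration.

```python
import math

SPEED_OF_SOUND_M_S = 343.0      # assumption: dry air at roughly 20 degrees C
DEFAULT_HEAD_RADIUS_M = 0.0875  # assumption: a commonly quoted average head radius


def interaural_time_difference(azimuth_deg, head_radius_m=DEFAULT_HEAD_RADIUS_M):
    """Approximate arrival-time difference between the ears, in seconds.

    Uses Woodworth's spherical-head formula for a distant source.
    azimuth_deg: 0 is straight ahead, 90 is directly to one side.
    Only azimuths between -90 and 90 degrees are handled.
    """
    if not -90.0 <= azimuth_deg <= 90.0:
        raise ValueError("azimuth_deg must be within [-90, 90]")
    theta = math.radians(azimuth_deg)
    return (head_radius_m / SPEED_OF_SOUND_M_S) * (theta + math.sin(theta))


if __name__ == "__main__":
    for angle in (0, 30, 60, 90):
        itd_us = interaural_time_difference(angle) * 1e6
        print(f"{angle:>3} degrees: {itd_us:6.1f} microseconds")
```

Under these assumptions the delay tops out at well under a millisecond for a sound directly to one side, and a larger head radius stretches it further, which is one reason head size matters.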


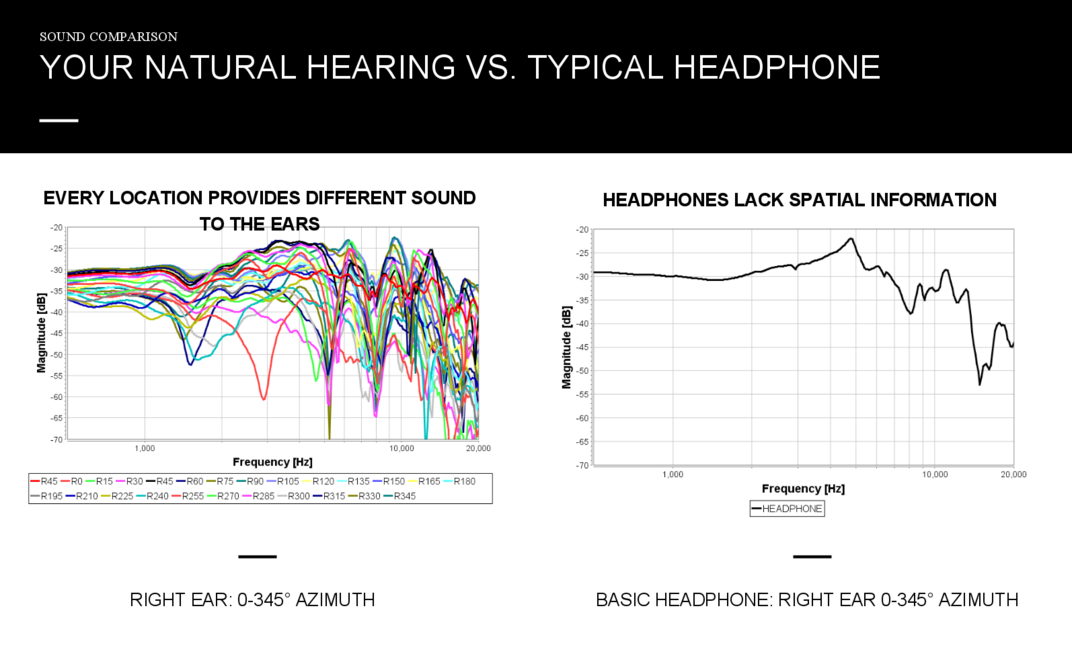

We can digitally model the above properties as something called a head-related transfer function, or HRTF. Since everyone has a unique head and set of ears, and HRTFs are calculated according to a person's body, HRTFs differ from person to person.

Calculating a complete HRTF model for every individual in real time is not possible, since the data is primarily collected by placing microphones inside your ears as close to the canal as possible. And even then, to accurately generate an HRTF model unique to someone, the whole procedure ideally needs to be done in an anechoic chamber. This is obviously impractical, but general HRTF models can be used, which is what Aureal 3D did with A3D. In fact, in the original Aureal driver, you were asked to select your head size.

When sounds are played through the HRTFs, your brain gets tricked into identifying sound locations because the sound cues are modeled on how they would have sounded coming from different directions. For a demo, put on a pair of headphones and play these demo videos from the original Aureal 3D driver disk:

Videos were uploaded by Toni Schnieder
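
For the curious, "playing a sound through an HRTF" boils down to filtering one mono signal with a separate impulse response for each ear. The Python sketch below shows only that step. The impulse responses are toy values invented for illustration (a delay and a volume drop for the far ear), not measured HRTF data, and the function names are hypothetical rather than taken from any real audio API.

```python
def convolve(signal, impulse_response):
    """Direct-form discrete convolution of two lists of samples."""
    out = [0.0] * (len(signal) + len(impulse_response) - 1)
    for i, s in enumerate(signal):
        for j, h in enumerate(impulse_response):
            out[i + j] += s * h
    return out


def render_binaural(mono, hrir_left, hrir_right):
    """Filter one mono signal through a left/right impulse-response pair."""
    return convolve(mono, hrir_left), convolve(mono, hrir_right)


# Toy impulse responses for a source on the listener's left (NOT measured data):
# the left ear hears the sound immediately at full level, while the right ear
# hears it three samples later and at half the level.
TOY_HRIR_LEFT = [1.0, 0.0, 0.0, 0.0]
TOY_HRIR_RIGHT = [0.0, 0.0, 0.0, 0.5]

click = [1.0, 0.0, 0.0]  # a single-sample transient
left, right = render_binaural(click, TOY_HRIR_LEFT, TOY_HRIR_RIGHT)
print("left ear: ", left)
print("right ear:", right)
```

A real HRTF set holds a pair of much longer, measured responses for many directions, and a game engine would pick or blend the pair that matches each sound's position relative to your head.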

So if creating accurate HRTFs is the way to go, how can Aureal and other 3D audio companies produce convincing sound based on averaged HRTFs? It turns out that the brain is pretty good at adapting. Initially, a person listening to audio using someone else's HRTFs won't be able to locate sounds. But after some time, the brain adapts and learns the cues necessary to process direction.

So why do we need 3D audio to come back? Because of VR. With VR, you're no longer in front of an environment being displayed on a 2D screen; you're literally in the environment. Environmental sounds are supposed to be all around you now.

A few weeks ago I had the chance to visit Ossic VR's lab in downtown San Francisco. While there, I was given the chance to wear a prototype headphone that measured the properties of my head in order to generate a custom HRTF for me specifically.

Then, I strapped on an HTC Vive and played through a DOTA demo from Valve. The Vive was chosen specifically because of the ability to walk around in the virtual environment. In this way, you could really hear the sounds change in direction, volume, etc., as you moved around the room.

As the demo ran, I was clearly able to identify not only where sounds were coming from, but how close they were to me. Put simply, I felt much more immersed in the experience than I did with normal stereo sound. Immersion is a term that gets thrown around rather liberally, but in this case, it's completely legitimate.

Near the end of the demo, I heard a rumbling and cracking sound from behind and above where I was standing. When I turned around and looked up, the ceiling of the room began breaking apart. Moments later, I found myself taking a few steps back to avoid the monster breaking through the breach. I heard the event before I saw it. Without HRTFs, I would have heard the sounds coming from my side instead.

During a Steam Dev Days event in 2014, Oculus chief scientist Michael Abrash, who was with Valve at the time, spoke at length about "presence": the critical threshold at which one is convinced of the realism of VR and of "being" in it. Although Abrash spoke of presence in terms of the visual aspect of VR, it's clearly just as important to achieve the same "presence" in audio.

In case you're wondering, the Oculus Rift fully supports 3D audio as part of its API. It's clear that Oculus knows 3D audio is a critical part of VR immersion and presence, and we're hyped about how games are going to take advantage of this.

In a horror film, ambient sounds and creepy noises are half the movie. Have you ever tried watching a horror film in silence? It's not nearly as frightening. However, in a completely dark room where you're unable to see anything, hearing creepy sounds can really spook you, especially if they're coming from behind you.

There's no denying that 3D audio, when implemented with correct HRTFs, works really well. For the desktop gaming experience, it's an area that's been lacking attention for years, but it's coming back strong. Even though my experience was just a demo, the stimulus tricked me into behaving instinctively. In a VR game, that reaction could mean the difference between life and death.