Six lessons Valve has learned about VR

Deep in Valve's labs, engineers have been building improvised virtual reality and augmented reality systems for years. Hardware experts and game designers have been puzzling over the scope and limitations of this new technology, and are ready to share their knowledge with the world. At GDC this year Valve's Alex Vlachos gave a talk about advanced VR rendering that covered some of the important lessons the Valve hardware team has learned. Here are a few nuggets from amid the rendering pipeline talk.

Good latency is "fundamental"

VR developers have been vocal about this for a while. Former Valve VR tinkerer Michael Abrash (now Oculus' chief scientist) has blogged about the importance of latency. "If too much time elapses between the time your head starts to turn and the time the image is redrawn to account for the new pose, the virtual image will drift far enough so that it has clearly wobbled," he writes, before laying out the target latency for effective VR and augmented reality (AR). "I can tell you from personal experience that more than 20 ms is too much for VR and especially AR, but research indicates that 15 ms might be the threshold, or even 7 ms."

That's much easier said than done, but Valve claims to have cracked it with the Vive headset. Gabe Newell told the New York Times that "zero percent of people" experience nausea using the Vive. Wes has tried it, so check out his account from GDC.

Even the smallest objects matter

Typically developers devote fewer resources to objects and textures that the player won't pay much attention to. According to Valve, in virtual reality players are more likely to pay close attention to everything. The low-poly mug a dev might deploy to save resources will stand out horribly if the player wanders near it.

“If it's in your tracked volume, it must be high fidelity,” warns Valve's Alex Vlachos. “Even your floors need to be higher fidelity than we have traditionally authored.” That affects the scope of VR projects, as the effort required to produce quality art assets will be significantly greater per cubic metre of game space.

Aliasing is a big deal

Valve suggests that VR users really notice “sparkling” if object edges aren't smoothed out by decent anti-aliasing because the camera (your head) never stops moving. “We must increase the quality of our pixels,” Valve says. Good, fast anti-aliasing should help. Vlachos' talk indicates that 4xMSAA is the absolute minimum requirement, and he prefers to push it up to 8xMSAA if performance allows.

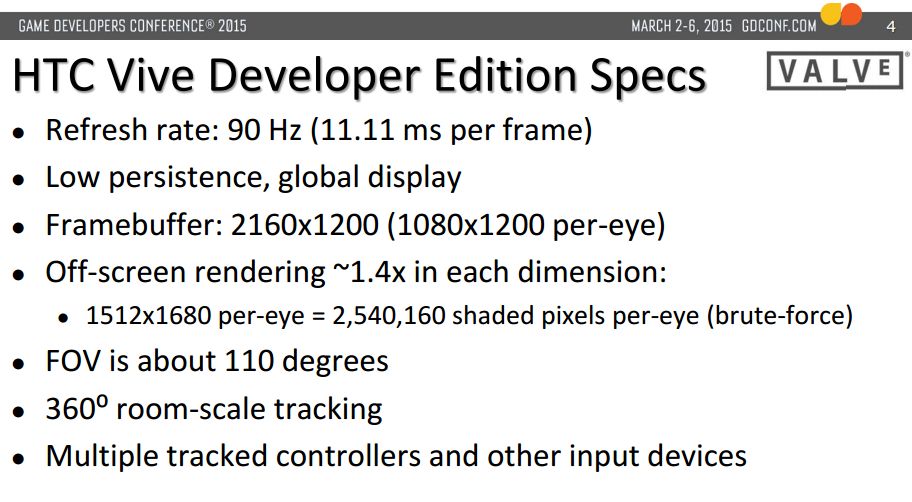

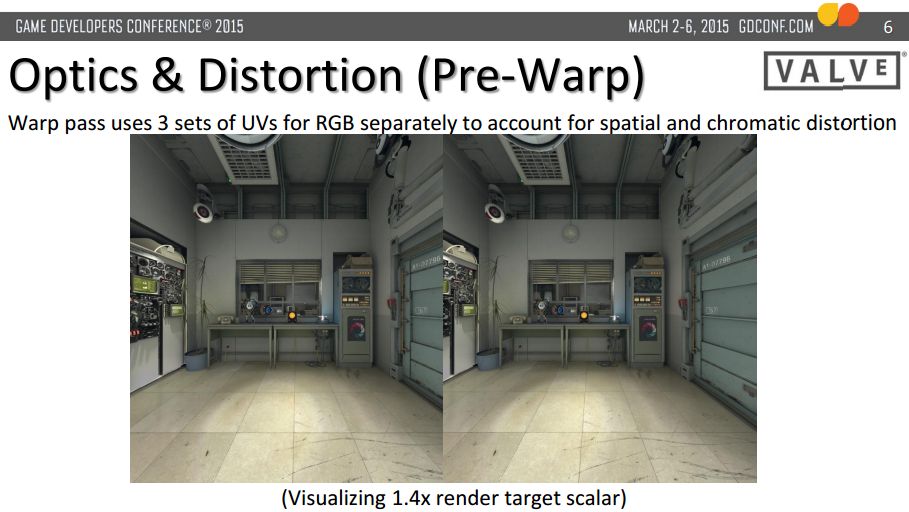

There are a lot of pixels to handle. One of Vlachos' slides highlights the disparity between traditional displays and VR headsets. A 60Hz 1080p monitor puts out 124 million shaded visible pixels per second. A VR headset with a pair of 1512x1680 screens at 90Hz (the bare minimum refresh rate to achieve “presence”, Valve reckons) puts out 457 million pixels a second, though that can be reduced to 378 million pixels/sec using technical tricks.
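Those figures are easy to sanity-check. The Python sketch below simply multiplies width, height, screen count and refresh rate. The function name `pixels_per_second` is our own invention for illustration, and the 378 million figure is taken as quoted from the talk rather than derived, since the article doesn't say how the saving is achieved.

```python
def pixels_per_second(width, height, refresh_hz, screens=1):
    """Raw pixel throughput, assuming every pixel is shaded once per frame."""
    return width * height * screens * refresh_hz


# A 1080p monitor at 60Hz versus a headset with two 1512x1680 screens at 90Hz.
monitor = pixels_per_second(1920, 1080, 60)
headset = pixels_per_second(1512, 1680, 90, screens=2)

# Quoted figure after "technical tricks"; not derived here.
reduced = 378_000_000

print(f"1080p monitor at 60Hz: {monitor / 1e6:.0f} million pixels/sec")
print(f"VR headset at 90Hz:    {headset / 1e6:.0f} million pixels/sec")
print(f"Headset vs monitor:    {headset / monitor:.1f}x")
print(f"Saving from the quoted tricks: {1 - reduced / headset:.0%}")
```

By this rough count the headset has to shade about 3.7 times as many pixels per second as the monitor, before any anti-aliasing cost is added.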


Minimum specs for VR games are an issue

So, VR environments need to be rendered at a higher fidelity than typical game environments, and scenes must be rendered at 90Hz with at least 4xMSAA on hardware that's pushing more than 370 million pixels a second. Right now it sounds like you'll need a Large Pixel Collider to support even basic VR environments.
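To put rough numbers on that workload, here is a back-of-envelope sketch in Python. The helper names `frame_budget_ms` and `samples_per_second` are hypothetical, and counting one sample per pixel per MSAA level is a simplification: it says nothing about how a GPU actually shades multisampled pixels.

```python
def frame_budget_ms(refresh_hz):
    """Time available to render one frame, in milliseconds."""
    return 1000.0 / refresh_hz


def samples_per_second(pixels_per_sec, msaa_level):
    """Naive sample count: one sample per pixel per MSAA level (an assumption)."""
    return pixels_per_sec * msaa_level


print(f"Frame budget at 60Hz: {frame_budget_ms(60):.1f} ms")
print(f"Frame budget at 90Hz: {frame_budget_ms(90):.1f} ms")

# Uses the 378 million pixels/sec figure quoted from the talk.
samples = samples_per_second(378_000_000, 4)
print(f"Samples at 4xMSAA: {samples / 1e9:.2f} billion per second")
```

At 90Hz each frame has roughly 11 ms to render, compared with nearly 17 ms at 60Hz, which goes some way to explaining why the hardware bar looks so high.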

Valve is keenly aware of this, too. It's aiming for the lowest GPU minimum spec possible for VR, for obvious reasons. “The lower the min spec, the more customers we have.” It sounds like there's still optimisation to be done on the VR software side, but top-end PC hardware will surely be a requirement in VR's early days. Nvidia is looking to capitalise with the recently announced Titan X, which houses 12 GB of VRAM and 8 billion transistors. “This GDC is about VR,” said Epic founder Tim Sweeney. “It needs an amazing GPU.”

VR is hard

Valve began research more than three years ago, assembling charmingly low-fi prototypes in their studio chop shop. The many stages of the headset's evolution were proudly displayed at GDC this year, and Wes took some shots. It's a cool insight into the work that's gone into rescuing virtual reality, considered a pointless pipe dream a couple of years ago. Now we're at a stage where the requirements for VR are understood, but engineering is needed to meet those requirements.

Michael Abrash describes the challenge on the Oculus blog. "It's engineering, not research; hard engineering, to be sure, but clearly within reach. For example, there are half a dozen things that could be done to display panels that would make them better for VR, none of them pie in the sky. However, it's expensive engineering. And, of course, there's also a huge amount of research to do once we reach the limits of current technology, and that's not only expensive, it also requires time and patience—fully tapping the potential of VR will take decades."

Sharing tech is good for everyone

Valve has shared a lot of its tracking technology expertise with Oculus in the last year or so, while also developing a rival headset. Valve is also pushing its OpenVR APIs, which are "free to use" and don't require integration with Steam. Newell also told the NYT that Valve will give away its Lighthouse tracking system for free to VR hardware developers who want it. Many companies would keep their proprietary tech to themselves, hoping to retain a unique advantage in the market, but Valve has its own logic: if the market grows, everyone involved benefits.

The scary thing about VR is that there is no market yet. All of the expensive R&D that's going into headsets could be a waste of time if the hardware isn't quite good enough, or if no one makes software for it. The hardware we've had the opportunity to try is increasingly convincing, but it all seems unreal somehow. Are we witnessing another passing fad, or the birth of, as Abrash puts it, "The Final Platform—the platform to end all platforms"? What do you think?

Part of the UK team, Tom was with PC Gamer at the very beginning of the website's launch—first as a news writer, and then as online editor until his departure in 2020. His specialties are strategy games, action RPGs, hack ‘n slash games, digital card games… basically anything that he can fit on a hard drive. His final boss form is Deckard Cain.