How netcode works, and what makes 'good' netcode

Arm yourself with what you need to know about netcode, from tick rates to dedicated servers and peer-to-peer systems.

Netcode is a layman's term, used by gamers and developers alike, to talk about a broad and complicated topic: the networking of online games. You can think of it as the foundation of every multiplayer game, and when that foundation isn’t rock solid, nothing else matters: playing online won't be any fun. When netcode is "good," you probably don't even think about it. But when netcode is "bad," what do people really mean? What issues are they talking about?

Netcode is the umbrella that encompasses many aspects of network gaming. This guide will break down those component parts and help you understand what goes into good and bad netcode. To start, here are some of the most important terms that are likely to come up in deeper netcode discussions.

For some common misconceptions about netcode and a summary of the components that make up good network gaming, jump over to page two.

Netcode terms you should know

Ping

Have you seen the movie The Hunt for Red October, where a "ping" (an active sonar pulse) is used to check the distance between the Russian and US submarines?

The ping that online games show us in the scoreboard or the server browser is more or less the same thing.

When a PC or console "pings" a server in the classic sense, it sends an ICMP (Internet Control Message Protocol) echo request to the server, which answers by returning an ICMP echo reply. Many games measure the same round trip with their own game packets instead, but the principle is identical.


The time between sending the request and receiving the answer is your ping to the game server. Because ping measures the round trip, a ping of 20ms means it takes data roughly 10ms to travel from the client to the server, assuming the route is equally fast in both directions.

Higher ping values mean that there is more delay or lag, which is why you want to play on servers with very low pings, as that is the basic prerequisite for games to feel snappy and responsive.
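To make the round-trip idea concrete, here is a minimal Python sketch that measures ping with ordinary UDP packets, similar in spirit to how a game can time its own packets instead of using ICMP. The echo server is a stand-in for a game server and runs on localhost, so the measured numbers will be tiny; the function names are hypothetical.

```python
import socket
import threading
import time


def start_echo_server(host: str = "127.0.0.1") -> tuple[socket.socket, int]:
    """Start a tiny UDP echo server on a free port (a stand-in for a game server)."""
    sock = socket.socket(socket.AF_INET, socket.SOCK_DGRAM)
    sock.bind((host, 0))  # port 0: let the OS pick a free port
    port = sock.getsockname()[1]

    def serve() -> None:
        while True:
            try:
                data, addr = sock.recvfrom(1024)
                sock.sendto(data, addr)  # answer by echoing the payload back
            except OSError:
                return  # the socket was closed

    threading.Thread(target=serve, daemon=True).start()
    return sock, port


def measure_rtt_ms(host: str, port: int, timeout: float = 1.0) -> float:
    """Send one datagram and time how long the echo takes to come back."""
    with socket.socket(socket.AF_INET, socket.SOCK_DGRAM) as sock:
        sock.settimeout(timeout)
        start = time.perf_counter()
        sock.sendto(b"ping", (host, port))
        sock.recvfrom(1024)  # raises socket.timeout if the packet or reply is lost
        return (time.perf_counter() - start) * 1000.0


if __name__ == "__main__":
    server, port = start_echo_server()
    rtt = measure_rtt_ms("127.0.0.1", port)
    print(f"ping: {rtt:.3f} ms, one way (if symmetric): about {rtt / 2:.3f} ms")
    server.close()
```

Halving the round trip only gives the one-way time if both directions take equally long, which real internet routes don't guarantee.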

Routing

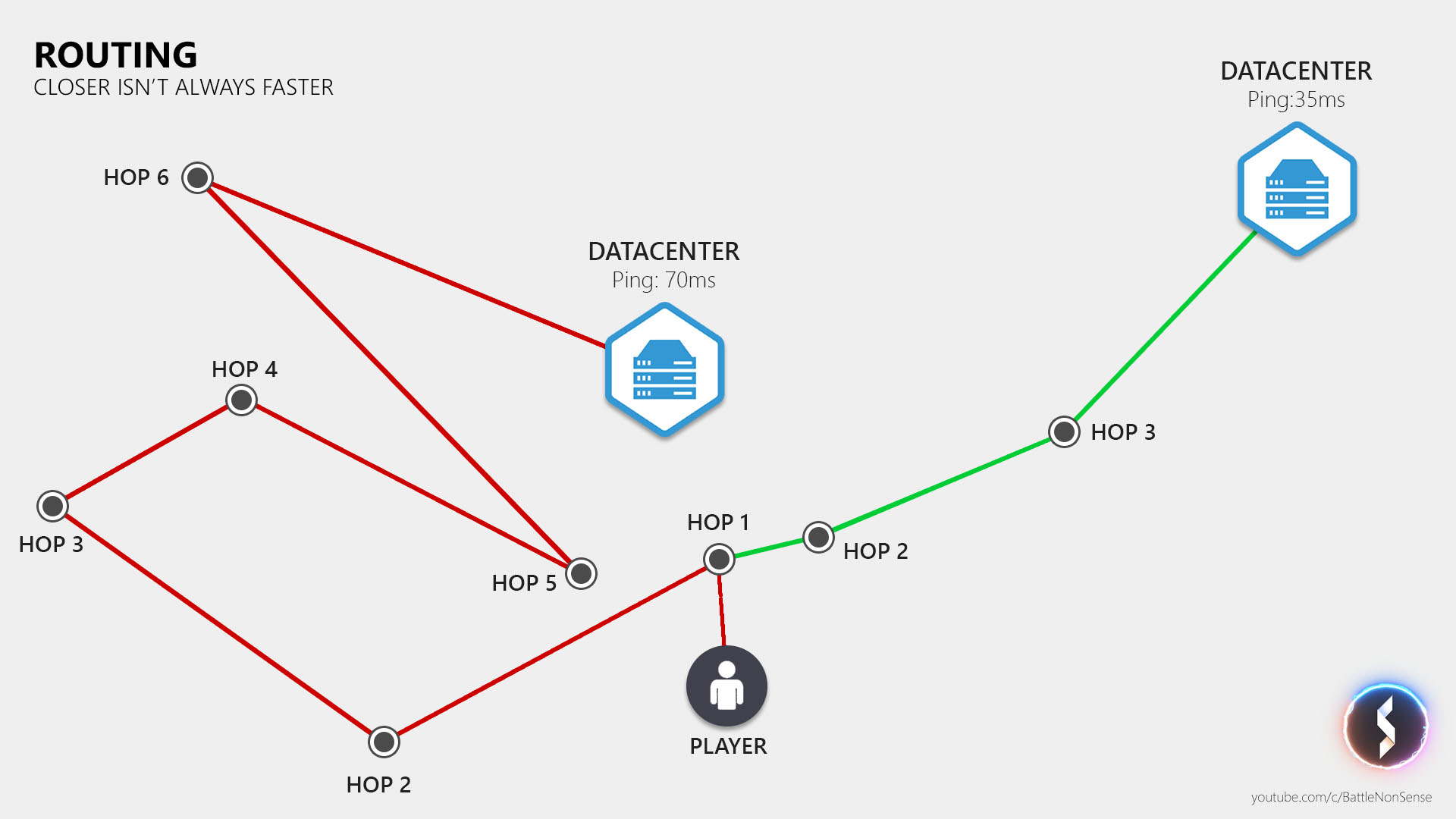

A data packet travels at a more or less fixed speed, so a player's ping is directly affected by the distance between the player and the server. However, the copper and fiber optic cables do not take a direct path to the data center, so the path, or route, to a data center that's physically farther away could actually end up being shorter than the route to a data center that's physically closer to you.
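Because signal speed in a cable is roughly fixed, the length of the route sets a hard floor on ping. The sketch below assumes light in optical fiber covers about 200 km per millisecond (roughly two thirds of its speed in a vacuum) and ignores routers, queues and copper segments; the two routes are made-up examples of a physically closer data center that sits behind a longer cable path.

```python
# Assumption: light in optical fiber covers roughly 200,000 km/s,
# i.e. about 200 km per millisecond. Router and queuing delays are ignored.
FIBER_KM_PER_MS = 200.0


def min_rtt_ms(route_km: float) -> float:
    """Lower bound on ping for a cable route of the given length."""
    one_way_ms = route_km / FIBER_KM_PER_MS
    return 2 * one_way_ms  # ping is a round trip


# Made-up routes: the physically closer data center sits behind a longer cable path.
routes = {"closer data center": 1500.0, "farther data center": 900.0}
for name, km in routes.items():
    print(f"{name}: {km:.0f} km of cable -> at least {min_rtt_ms(km):.1f} ms ping")
```

Under these assumptions, the closer data center can never beat about 15ms, while the farther one could get down to about 9ms.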

Another factor that affects the data travel time is the number of stops (or hops) that your data packet must make on its way. Every additional hop also increases the risk that you lose a data packet.
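Assuming, for simplicity, that each hop drops packets independently, the chance that a packet survives the whole path is the product of the per-hop survival chances. This sketch uses a made-up loss figure to show why more hops means more exposure to loss:

```python
from math import prod


def delivery_probability(per_hop_loss: list[float]) -> float:
    """Chance that a packet survives every hop, assuming hops drop packets independently."""
    return prod(1.0 - p for p in per_hop_loss)


# Made-up example: every hop loses 0.1% of packets.
for hops in (5, 10, 20):
    lost = 1.0 - delivery_probability([0.001] * hops)
    print(f"{hops:2d} hops -> about {lost:.2%} of packets lost")
```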

There are tools like WTFast which try to provide you with "faster" (shorter) routes that have fewer hops. However, the effectiveness of these services depends on your location and your Internet Service Provider. If your ISP already provides very fast/short routes, then these services will not only be ineffective, they might even increase your latency. So, before you sign up for a paid subscription you should test if that service will decrease the latency of your connection.

Packet loss — where did my data go?

There's no guarantee that your packet will actually reach its destination. When a packet disappears, this is called packet loss, which is a big problem for real-time applications such as online games, as re-sending that data increases the delay.
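Games that send their data over UDP commonly stamp each packet with an increasing sequence number, so the receiver can spot gaps. Here is a minimal sketch of that idea under simplifying assumptions (no sequence-number wraparound, late packets are simply ignored); the class name is hypothetical.

```python
class LossTracker:
    """Counts missing packets from gaps in sequence numbers (no wraparound handling)."""

    def __init__(self) -> None:
        self.highest_seen = -1
        self.received = 0
        self.lost = 0

    def on_packet(self, seq: int) -> None:
        if seq <= self.highest_seen:
            # Late or duplicate packet. It was already counted as lost when the
            # gap appeared; a real protocol might reorder it, this sketch ignores it.
            return
        # Every sequence number we skipped over is presumed lost.
        self.lost += seq - self.highest_seen - 1
        self.highest_seen = seq
        self.received += 1

    def loss_percent(self) -> float:
        total = self.received + self.lost
        return 100.0 * self.lost / total if total else 0.0


tracker = LossTracker()
for seq in (0, 1, 2, 4, 5, 7):  # packets 3 and 6 never arrive
    tracker.on_packet(seq)
print(f"lost {tracker.lost} packets ({tracker.loss_percent():.1f}%)")
```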

So what causes packet loss?

- The issue could originate in your local network (LAN)

- Your PC could have a faulty network interface, or broken drivers

- Your WiFi or powerline could suffer from interference or congestion

- Or you could have a broken network port or network cable somewhere

Packet loss can also be caused by your router when it has a firmware issue, a hardware problem or when you are running out of upstream or downstream bandwidth.

What might help here is a firmware upgrade or a simple power cycle of the router. If your issues are caused by someone else maxing out your entire upstream or downstream bandwidth, you might want to invest in a router that prioritizes data from real-time applications, like the EdgeRouter X from Ubiquiti.
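Prioritizing real-time traffic boils down to letting latency-sensitive packets jump the queue. Here is a toy strict-priority scheduler that illustrates the idea; the traffic classes and their priorities are assumptions for the example, and this is not how the EdgeRouter X or any particular router implements its quality-of-service features.

```python
import heapq
from itertools import count
from typing import Optional

# Assumed traffic classes for the example: a lower number is sent first.
PRIORITY = {"game": 0, "voice": 0, "video": 1, "download": 2}


class PriorityScheduler:
    """Toy strict-priority queue: real-time packets always leave before bulk traffic."""

    def __init__(self) -> None:
        self._heap: list = []
        self._arrival = count()  # tie-breaker that keeps FIFO order within a class

    def enqueue(self, traffic_class: str, payload: bytes) -> None:
        entry = (PRIORITY[traffic_class], next(self._arrival), traffic_class, payload)
        heapq.heappush(self._heap, entry)

    def dequeue(self) -> Optional[tuple[str, bytes]]:
        if not self._heap:
            return None  # nothing waiting to be sent
        _, _, traffic_class, payload = heapq.heappop(self._heap)
        return traffic_class, payload


scheduler = PriorityScheduler()
scheduler.enqueue("download", b"chunk 1")
scheduler.enqueue("download", b"chunk 2")
scheduler.enqueue("game", b"player input")  # arrives last, but is sent first
print(scheduler.dequeue())
```

A pure strict-priority queue can starve bulk traffic entirely, which is why real routers usually mix priorities with bandwidth limits or weighted queues.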

If packet loss happens outside your home network, then all you can do is contact your Internet Service Provider's support and see if they can help.

Update rate

On top of the travel time of our data (ping), extra delay is added by how frequently a game sends and receives that data.

When a game sends and receives updates at 30Hz (30 updates per second), then there is more time between updates than when it sends and receives updates at 60Hz.

By increasing the update rates you can decrease the additional delay that is added on top of the travel time of your data.
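The arithmetic behind this is simple. At a rate of f updates per second, updates are 1000 / f milliseconds apart, so an event that happens at a random moment waits about half an interval on average, and up to a full interval in the worst case, before it can be sent. A small sketch (the function names are hypothetical):

```python
def update_interval_ms(rate_hz: float) -> float:
    """Time between two consecutive updates."""
    return 1000.0 / rate_hz


def added_delay_ms(rate_hz: float) -> tuple[float, float]:
    """(average, worst-case) extra wait before an event can go out with the next update.

    Assumes events happen at random moments, so on average they wait half an interval.
    """
    interval = update_interval_ms(rate_hz)
    return interval / 2, interval


for hz in (10, 30, 60):
    avg, worst = added_delay_ms(hz)
    print(f"{hz:>2}Hz: updates every {update_interval_ms(hz):.1f} ms, "
          f"added delay about {avg:.1f} ms on average, {worst:.1f} ms worst case")
```

At 30Hz that is roughly 16.7ms on average and 33.3ms in the worst case; at 60Hz it drops to roughly 8.3ms and 16.7ms.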

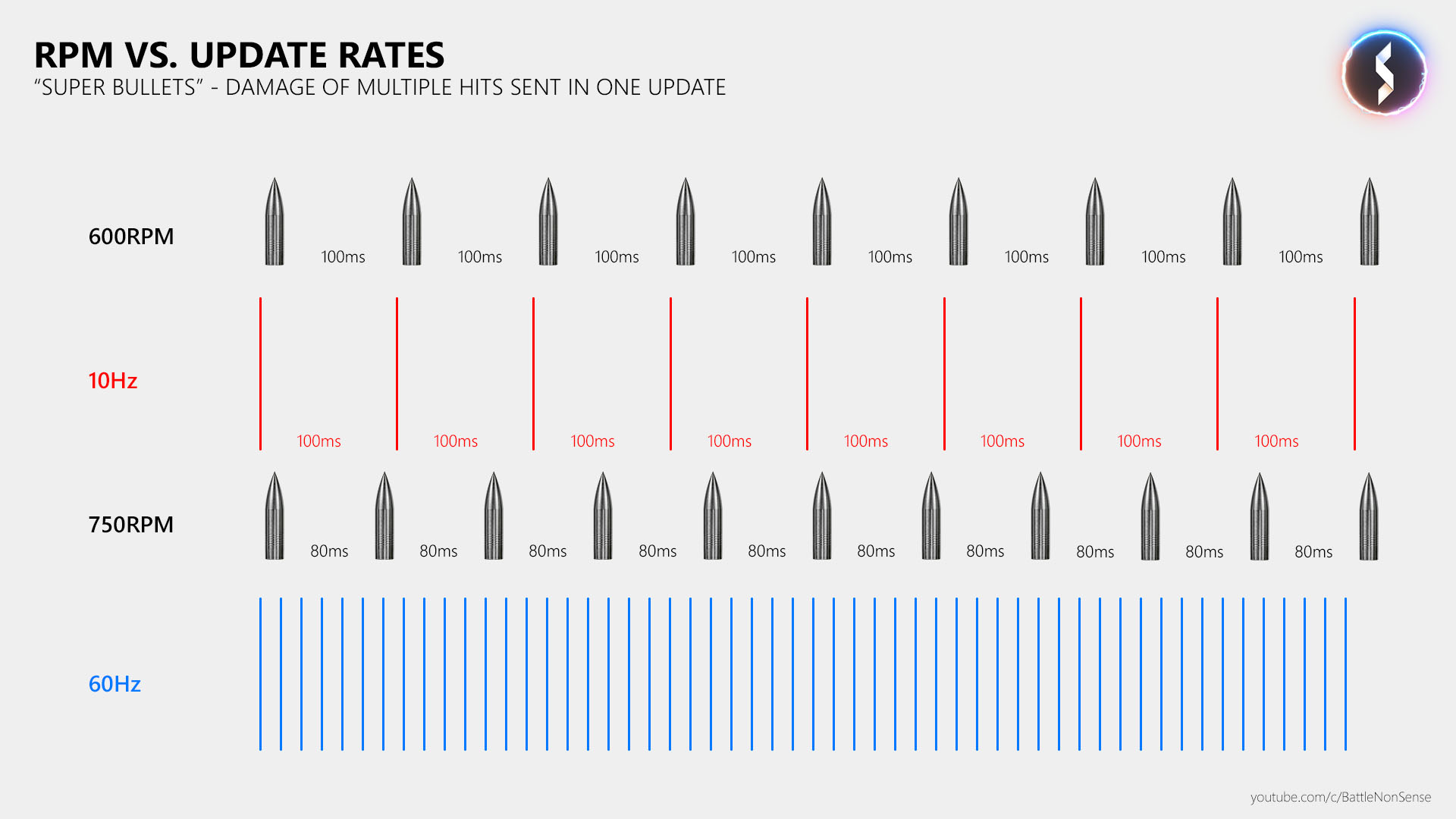

Low update rates do not only affect the network delay; they also cause issues like "super bullets," where a single hit from a gun deals more damage than it should be able to deal. This is also sometimes referred to as "1 frame death." Let me explain why this happens.

Let’s say that the game server sends 10 updates per second, like many Call of Duty games do when a client is hosting the match. At this update rate we have 100ms between the updates, which is the same time that we have between two bullets when a gun fires 600 rounds per minute.

But many shooters, including Call of Duty, have guns which fire 750 rounds per minute or more. As a result, we then run out of updates and so the game has to send multiple bullets with one update.

So if 2 bullets hit a player, then the damage of these 2 hits will be sent in a single update, and so the receiving player will get the experience that he got hit by a "super bullet" that dealt more damage than a single hit should be able to deal.
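You can reproduce the "super bullet" effect with a few lines of Python: fire shots at a fixed cadence, sort them into update windows, and count how many land in the same window. This is a simplified model (hits are assumed to go out only at update boundaries, with no packet loss or jitter), and the function name is hypothetical.

```python
from collections import Counter
from fractions import Fraction


def max_shots_per_update(rpm: int, update_hz: int, shots: int = 30) -> int:
    """Largest number of shots that fall into a single update window.

    Shots are fired at a fixed cadence starting at t = 0 ms; update window k
    covers the time span [k * T, (k + 1) * T), where T is the update interval.
    Fractions keep the timing exact (no floating-point rounding at boundaries).
    """
    shot_interval = Fraction(60_000, rpm)         # ms between two shots
    update_interval = Fraction(1_000, update_hz)  # ms between two updates
    windows = Counter((i * shot_interval) // update_interval for i in range(shots))
    return max(windows.values())


for rpm, hz in ((600, 10), (750, 10), (750, 60)):
    print(f"{rpm} RPM at {hz}Hz -> up to {max_shots_per_update(rpm, hz)} shot(s) per update")
```

With these assumptions, a 600 RPM gun at 10Hz never puts more than one shot into an update, a 750 RPM gun regularly puts two shots into one update, and at 60Hz the same 750 RPM gun is back to one shot per update.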

This should make clear why high update rates are not only required to keep the network delay short, but also to get a consistent online experience. A little bit of packet loss is also less of an issue at high update rates, while at low rates it can lead to major issues for both the player who is suffering from packet loss, as well as for the player who is on the receiving end of that gun. The fewer packets you get, the more important each one is.

Tick rate

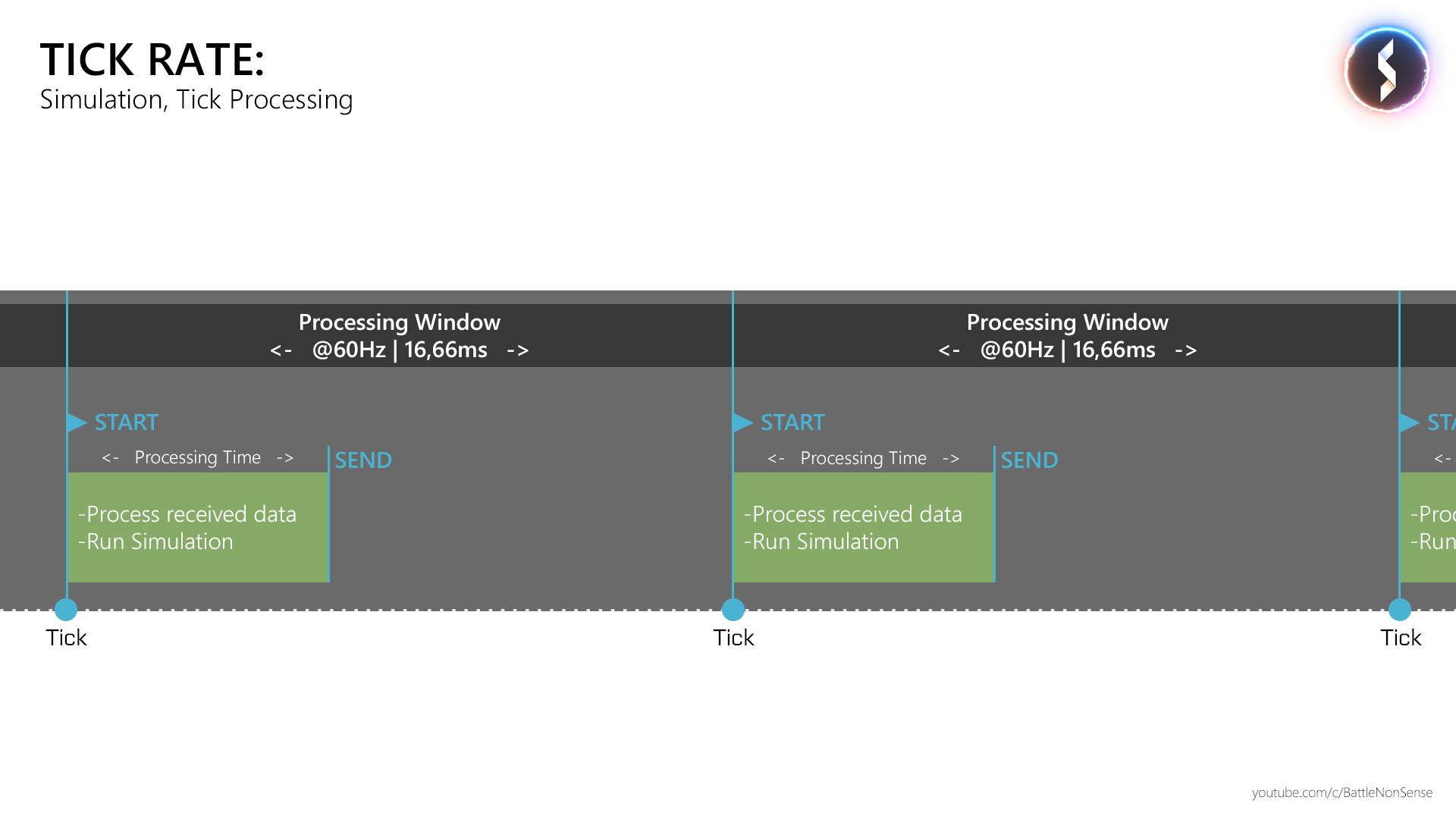

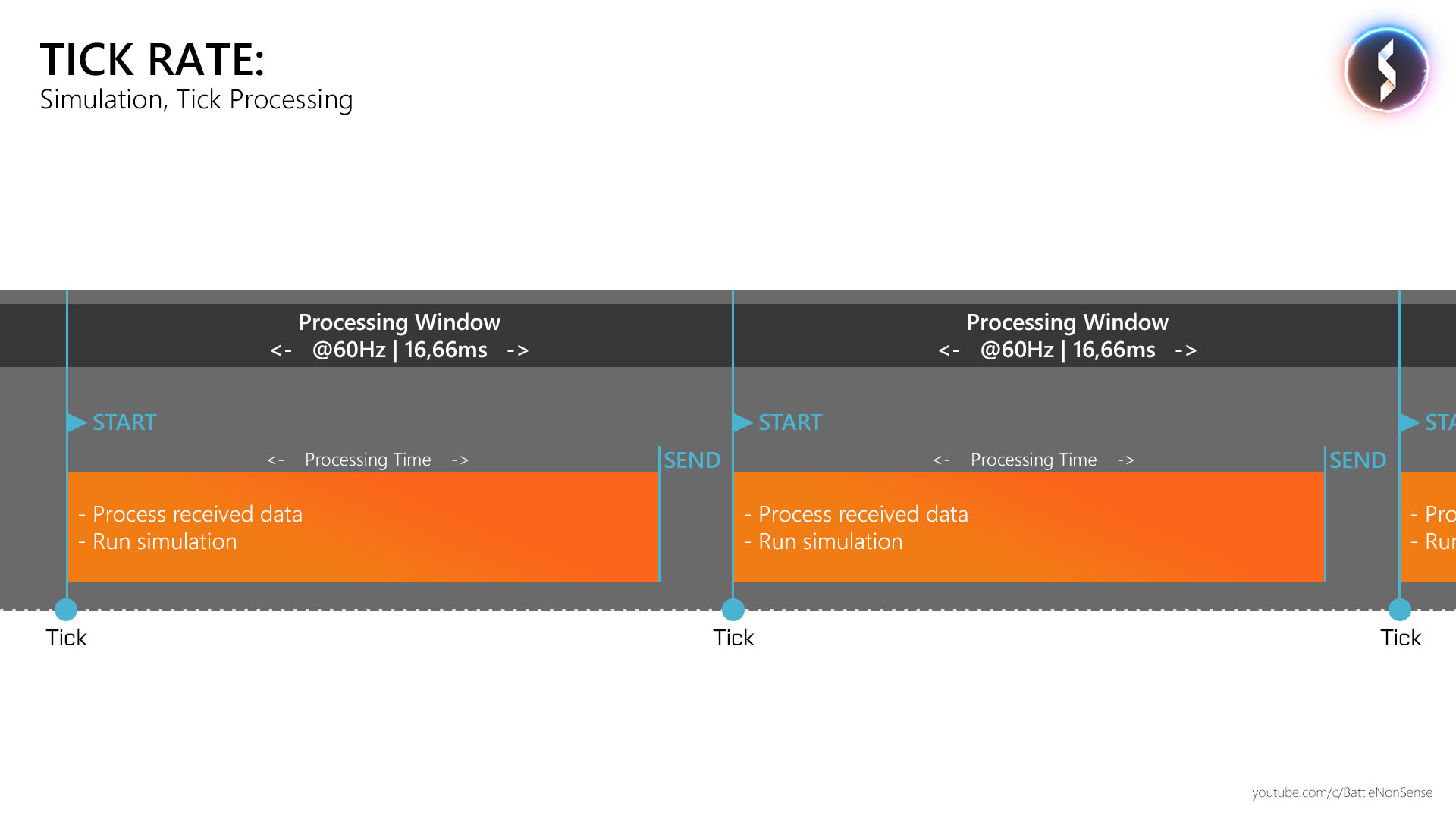

The tick rate, or simulation rate, tells us how many times per second the game produces and processes data.

At the beginning of a tick, the server starts to process the data it received and runs its simulations. Then it sends the result to the clients and sleeps until the next tick happens. The faster the server finishes a tick, the earlier the clients will receive new data from the server, which reduces the delay between player and server and leads to more responsive hit registration.

A tick or simulation rate of 60Hz will cause less delay than a tick rate of 30Hz, as it halves the time between simulation steps (about 16.7ms instead of 33.3ms). A tick rate of 60Hz also allows the server to send 60 updates per second, which compared to 30Hz reduces the round-trip delay between the client and the server by up to about 33ms (roughly 16.7ms less from the client to the server, and another 16.7ms less from the server to the client).

At a tick rate of 60Hz, the server only has a processing window of about 16.67ms (1000ms / 60) in which it must finish a simulation step. So for servers running at a fixed tick rate, it’s imperative that each simulation step finishes as fast as possible, or at least inside the processing window given by the tick rate.

When a server gets close to the limit, or even fails to process a tick inside that timeframe, then you will instantly notice the results: all sorts of strange gameplay issues like rubber banding, players teleporting, hits getting rejected, and physics failing.
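The tick cycle described above can be sketched as a fixed-rate loop: run the simulation step, check whether it fit inside the 1 / tick-rate budget, then sleep until the next tick is due. The `simulate` callback stands in for receiving inputs, stepping the world and sending updates; it is hypothetical, as is the choice to resync rather than catch up after an overrun.

```python
import time
from typing import Callable


def run_server(tick_hz: float, ticks: int, simulate: Callable[[int], None]) -> list[bool]:
    """Run a fixed-rate tick loop and report which ticks blew their time budget.

    Each tick runs one simulation step, then sleeps until the next tick is due.
    """
    budget = 1.0 / tick_hz  # seconds per tick, e.g. about 16.67 ms at 60 Hz
    next_tick = time.perf_counter()
    overruns = []
    for tick in range(ticks):
        start = time.perf_counter()
        simulate(tick)  # hypothetical: process inputs, step the world, send results
        elapsed = time.perf_counter() - start
        overruns.append(elapsed > budget)
        next_tick += budget
        delay = next_tick - time.perf_counter()
        if delay > 0:
            time.sleep(delay)  # idle until the next tick is due
        else:
            next_tick = time.perf_counter()  # fell behind: resync instead of bursting to catch up
    return overruns


if __name__ == "__main__":
    results = run_server(60, 5, lambda tick: None)
    print(f"{sum(results)} of {len(results)} ticks overran the budget")
```

Real servers differ in how they react to overruns; some run several simulation steps back to back to catch up, which trades a burst of extra work for keeping game time in sync.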

Network models

With that key terminology under our belts, let's look at the structure of how most online games work. Put simply, there are three different network models which developers can choose for a multiplayer game, each with its own pros and cons.

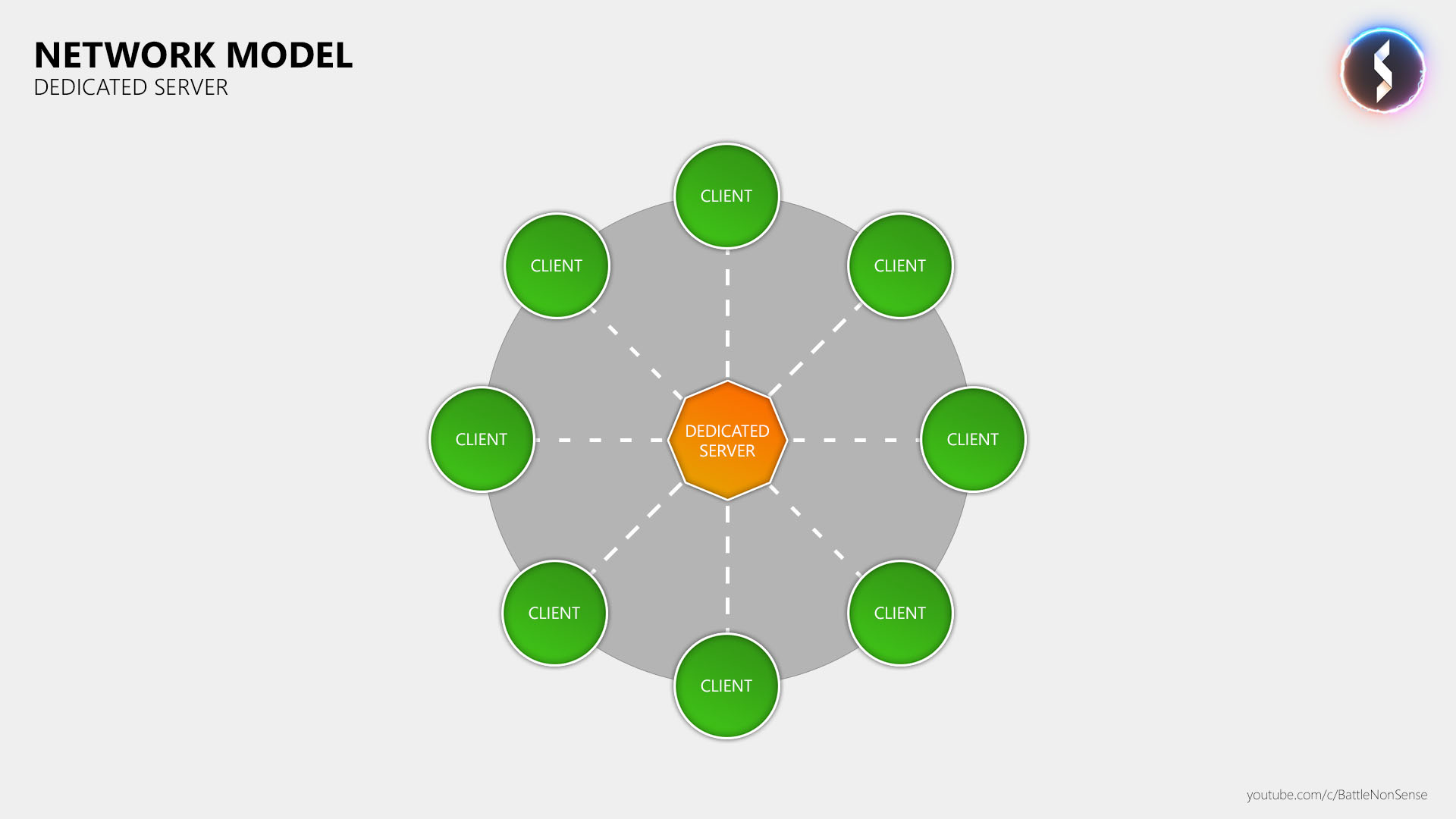

1. Dedicated game server

In this network model, developers can contract companies like i3D.net, or use cloud services like Amazon’s AWS, Microsoft’s Azure or Google Cloud, to host dedicated game server instances that players connect to.

In this network model the game server runs on powerful hardware, and the datacenter provides enough bandwidth to handle all players that connect to it. Anti-cheat and the effect that players with high pings have on the hit registration are easier to control in this network model due to its centralized design.

The downside of dedicated servers is that unless your game is built around the idea of the community running (paying for) these servers, the publisher or game studio must pay for them, which is quite expensive.

Another challenge is that if you release your game worldwide, then you must also make sure that all players who buy the game also get access to low latency servers. If you don't, you force a lot of people to play at very high pings, which causes problems for the entire community.

Bottom line:

The dedicated game server network model is the best choice for fast paced online games with more than 2 players, but also the most expensive, as professional companies provide the processing power, bandwidth and hosting locations required for a great online experience.

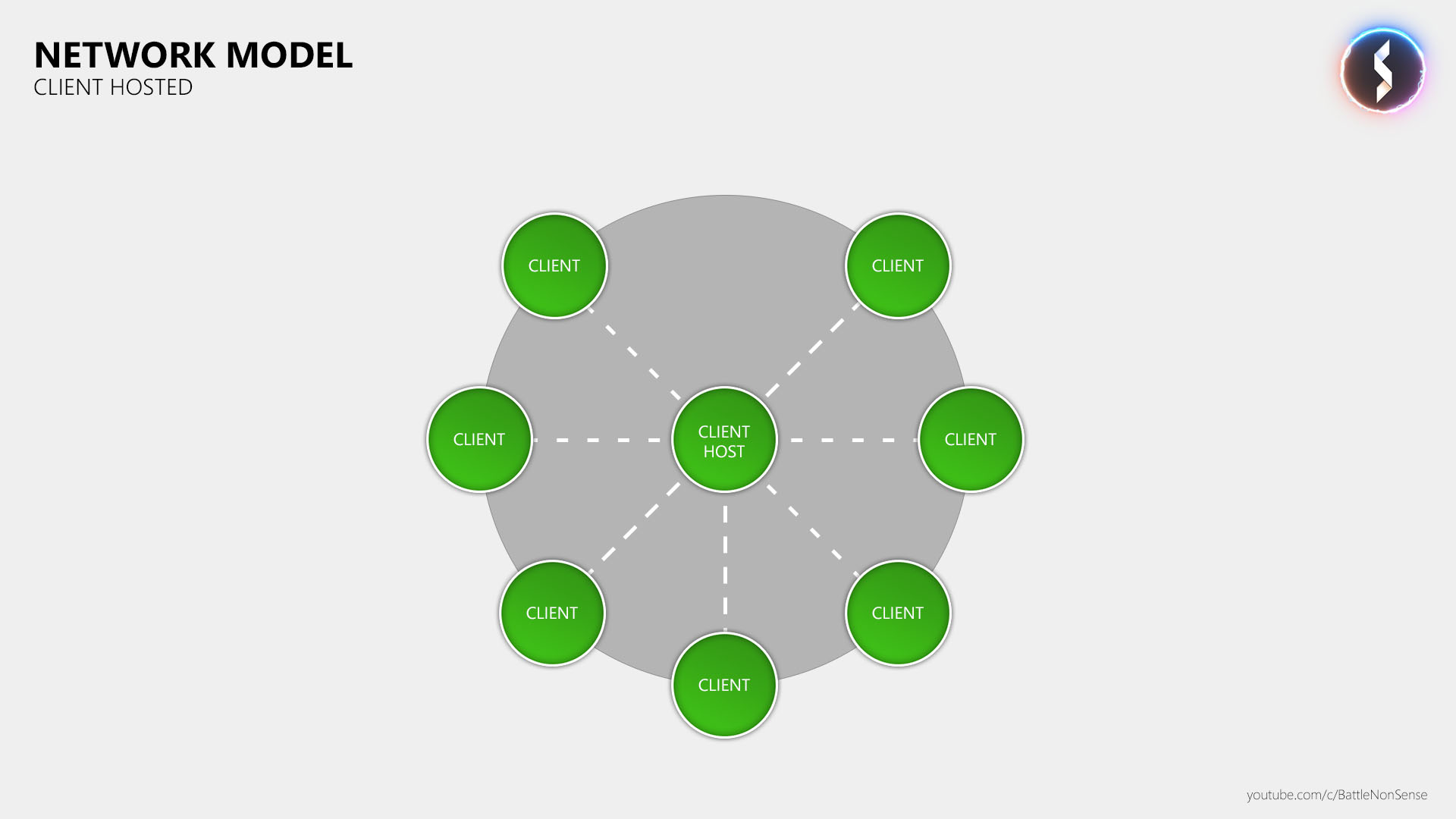

2. Client hosted (Listen server)

A different approach, which many people falsely refer to as peer-to-peer, is to use the PC or console of one of the players to host the game, making them player and server at the same time.

With this model, the game studio does not have to pay for expensive dedicated servers.

It also allows players located in remote regions to play with their friends at a relatively low latency (if they all live nearby).

One of the many downsides of this network model is that the player who is also the server gets an advantage, because they have zero lag. They can see you before you see them and fire first. The host is also able to cheat by artificially increasing the latency for all other players, which increases their advantage even further (this is called lag switching, or standbying).

This network model also suffers from the problem that all players typically connect to the host through a residential internet connection. Worst case, the host PC is connected over Wi-Fi. This frequently results in a lot of lag, packet loss, rubber banding and an unreliable hit registration. It also limits the update rates of the game as most residential internet connections can’t handle the stress of hosting a 10+ player match at 60Hz.
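A back-of-the-envelope estimate shows why upload bandwidth is the bottleneck for a listen server: the host has to send its own stream of updates to every other player. The snapshot size below is an assumption for illustration (real games vary widely and usually compress their data); only the 28 bytes of IPv4 and UDP header overhead per packet are fixed.

```python
IP_UDP_HEADER_BYTES = 28  # 20-byte IPv4 header + 8-byte UDP header per packet


def host_upload_kbps(players: int, update_hz: float, snapshot_bytes: int) -> float:
    """Upload a listen-server host needs to send one update per tick to every other player."""
    remote_players = players - 1  # the host doesn't send packets to itself
    bytes_per_second = remote_players * update_hz * (snapshot_bytes + IP_UDP_HEADER_BYTES)
    return bytes_per_second * 8 / 1000  # bytes/s -> kilobits/s


# Assumption: a 500-byte snapshot; real sizes depend on the game and grow with player count.
for hz in (10, 60):
    print(f"12 players at {hz}Hz: about {host_upload_kbps(12, hz, 500):,.0f} kbit/s upload")
```

With 12 players, 60 updates per second and the hypothetical 500-byte snapshot, the host needs roughly 2.8 Mbit/s of sustained upload just for game traffic, six times what the same match needs at 10Hz.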

The host can see the WAN IP addresses of all other players, which allows them to throttle or block these connections, as well as potentially DDoS their internet connection. And anti-cheat is also a big concern as a client runs the entire simulation.

However, the most frustrating part of such client-hosted matches is the host migration, which is the process where the whole game pauses for several seconds while a different player is elected to replace the host that just left.

Bottom line: When a developer chooses this network model over dedicated servers, it's to reduce costs, not to provide players with the best possible gameplay experience.

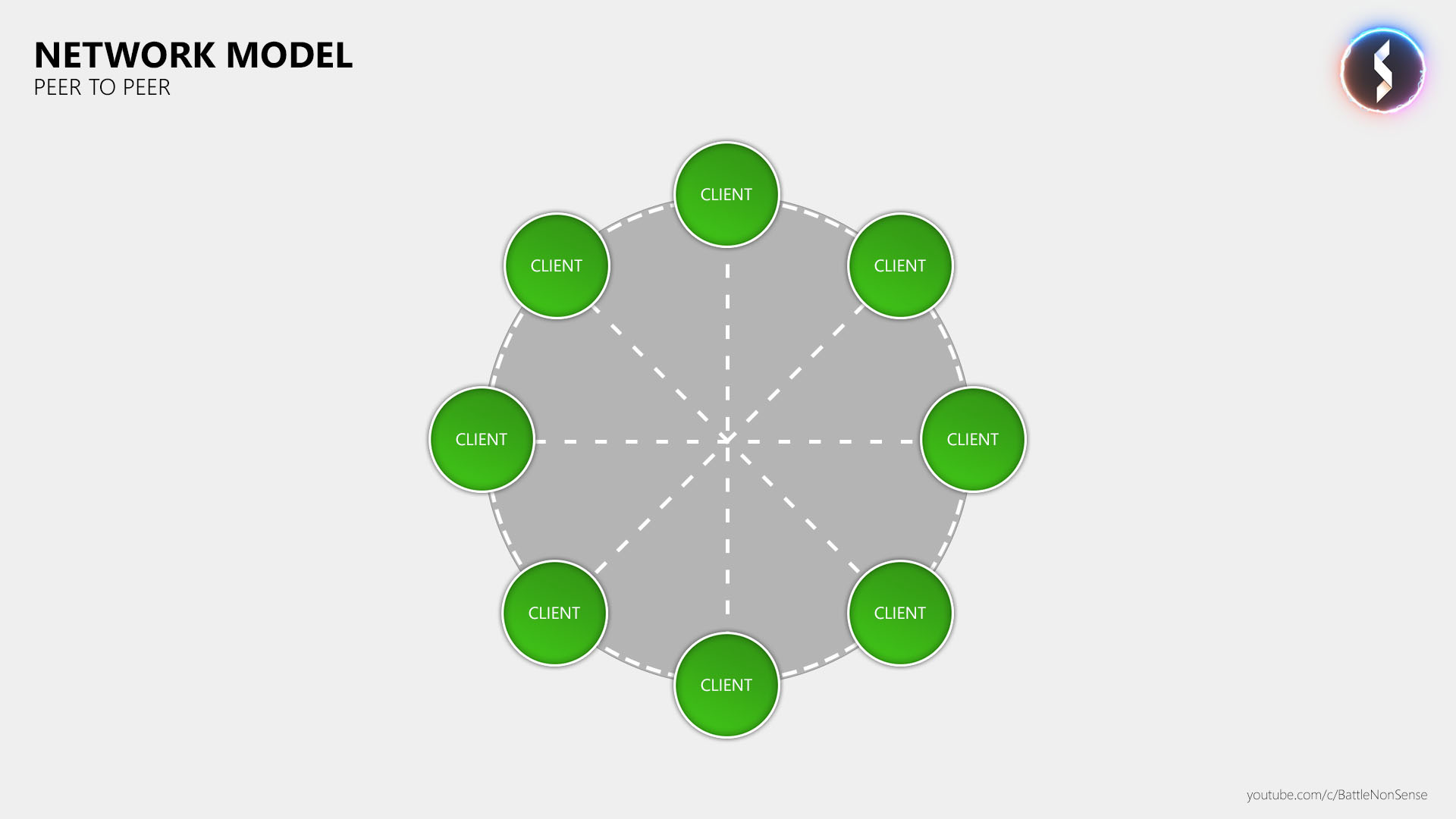

3. Peer-to-peer

The peer-to-peer network model is mostly seen in 1v1 fighting and sports games, but there are also a few other multiplayer games like Destiny and For Honor which use peer-to-peer for matches with more than 2 players.

While the implementations of the dedicated server and client hosted (listen server) network models don’t differ much between games, the same cannot be said about peer-to-peer, as there are many different versions. The Destiny games, for example, even run some of the simulations on dedicated servers.

One thing that these peer-to-peer variations all have in common is that all clients directly communicate with each other.

As a result, players see the WAN IP addresses of every other player they are playing with, which some streamers do not like as this increases the risk of getting DDoS’ed.

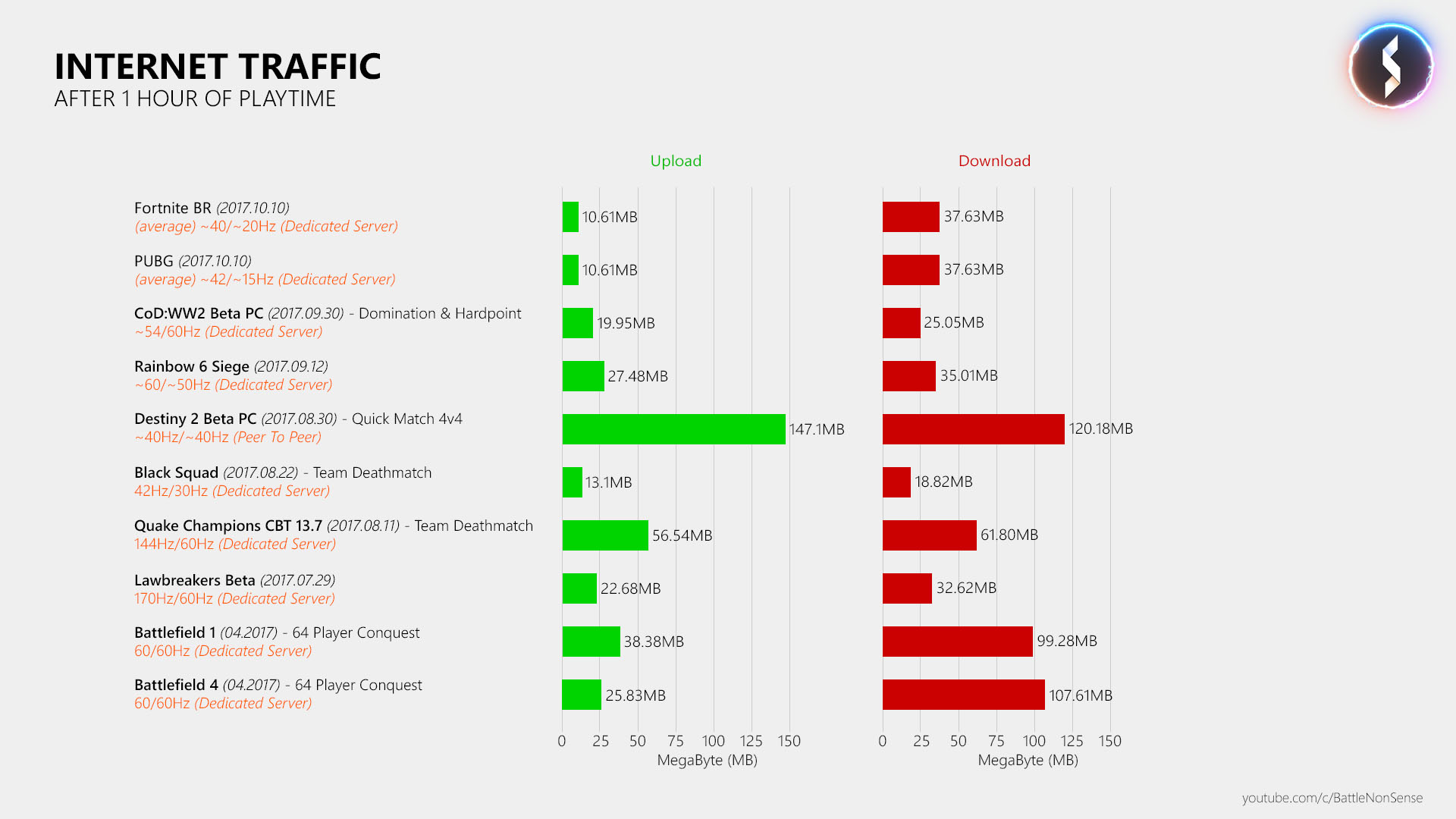

Another problem that games like For Honor and Destiny have is that using peer-to-peer for games with more than 2 players dramatically increases the network traffic, which can become an issue for a player's residential internet connection.
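The growth in traffic comes from the topology. In a full mesh, every peer sends its updates to every other peer, so the number of links grows with the square of the player count, while with a dedicated server each player keeps a single link. A small counting sketch (the function name is hypothetical):

```python
def connection_counts(players: int) -> dict[str, int]:
    """Links and per-player outgoing streams for a full mesh vs. a dedicated server."""
    return {
        # Full mesh (peer-to-peer): every pair of players has a direct link.
        "p2p_links": players * (players - 1) // 2,
        "p2p_streams_per_player": players - 1,
        # Dedicated server: each player only talks to the server.
        "server_links": players,
        "server_streams_per_player": 1,
    }


for n in (2, 4, 8):
    print(n, connection_counts(n))
```

In a 1v1 match each player sends exactly one stream either way, but with 8 players a full mesh needs 28 links and every player uploads 7 separate streams.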

Compared to dedicated game servers, the developer’s ability to secure the game (anti-cheat) and to handle players who have a high ping is also limited in this network model.

Bottom line: In 1v1 fighting or sports games, P2P (GGPO / lock step) is still the way to go, because a dedicated game server would just increase the delay, and the client hosted (listen server) network model would give one of the two players an unfair advantage.
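Delay-based lockstep, one of the approaches mentioned here, works by having every peer run the same deterministic simulation and only advance a frame once it has every player's input for that frame. The sketch below is a two-player toy version with a made-up one-dimensional game state. Note that GGPO itself uses rollback instead: it predicts missing remote inputs, keeps simulating, and rewinds and re-simulates when the real input arrives, rather than stalling as this sketch does.

```python
class Lockstep:
    """Two-player delay-based lockstep over a toy, fully deterministic game state."""

    PLAYERS = (0, 1)

    def __init__(self) -> None:
        self.frame = 0
        self.inputs: dict[int, dict[int, str]] = {}  # frame -> {player: button}
        self.positions = {player: 0 for player in self.PLAYERS}  # made-up 1-D game state

    def add_input(self, player: int, frame: int, button: str) -> None:
        """Store an input, whether it was produced locally or received from the other peer."""
        self.inputs.setdefault(frame, {})[player] = button

    def try_advance(self) -> bool:
        """Simulate the current frame only if every player's input for it has arrived."""
        frame_inputs = self.inputs.get(self.frame, {})
        if any(player not in frame_inputs for player in self.PLAYERS):
            return False  # stall: still waiting for the other peer's input
        for player in self.PLAYERS:  # fixed order keeps both peers' simulations identical
            button = frame_inputs[player]
            if button == "right":
                self.positions[player] += 1
            elif button == "left":
                self.positions[player] -= 1
        del self.inputs[self.frame]  # this frame's inputs are no longer needed
        self.frame += 1
        return True


game = Lockstep()
game.add_input(0, 0, "right")
print(game.try_advance())  # the remote input for frame 0 hasn't arrived yet
game.add_input(1, 0, "left")
print(game.try_advance(), game.positions)
```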

But when it comes to any other kind of fast-paced, 2+ player online multiplayer game, then only the dedicated game server network model is able to provide the bandwidth and processing power required for high tick and update rates.

When a publisher or developer chooses the client hosted (listen server) or peer-to-peer network model over dedicated game servers for a 2+ player multiplayer game, it's to minimize costs, not because it offers a better online experience.

Lag compensation

Every player is affected by a certain amount of latency, which is the result of the distance to the server/host, the number of hops a packet has to make, and the server's tick rate and update rate.

This is where lag compensation comes in. Due to these delays, you would see where a player was a few milliseconds ago, not where they are right now. If the game didn't compensate for at least a portion of this delay, then you would be unable to hit other players. You'd essentially be shooting at ghosts.

However, while we do need lag compensation to combat network latency, many games compensate too much. This leads to a bad experience for players who have a stable and low latency connection to the server as they then receive damage very far behind cover when they get shot by a player who has a very high ping. Rainbow 6 Siege players surely know what I am talking about here.

So, while playing at a high ping is not fun at all, compensating for more than 100ms of delay will give both the player with the high ping and the player with the low ping a bad experience.

DICE tries to mitigate this issue in Battlefield 1 by only compensating for a limited amount of each player's latency. Once a player's ping exceeds a threshold set by the developers, the server does not compensate for that additional delay, so the player must lead their shots further to manually compensate for their delayed connection to the server.

This effectively limits how far behind cover a player will receive damage.
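A common way for servers to implement lag compensation is to keep a short history of every player's positions and, when a shot arrives, check it against where the target was when the shooter fired. A cap like the one described above then simply limits how far back the server is willing to rewind. In this sketch the 100ms cap and the one-dimensional positions are assumptions for illustration, not Battlefield 1's actual values or implementation:

```python
import bisect

MAX_REWIND_MS = 100.0  # assumed cap on how much latency the server compensates for


class PositionHistory:
    """Recent server-side positions of one player, used to rewind for hit checks."""

    def __init__(self) -> None:
        self.times: list[float] = []
        self.positions: list[float] = []  # 1-D positions keep the sketch short

    def record(self, t_ms: float, x: float) -> None:
        # Assumes snapshots are recorded in increasing time order (once per tick).
        self.times.append(t_ms)
        self.positions.append(x)

    def at(self, t_ms: float) -> float:
        """Last recorded position at or before t_ms (no interpolation between ticks)."""
        i = bisect.bisect_right(self.times, t_ms) - 1
        return self.positions[max(i, 0)]


def rewind_time(now_ms: float, shooter_latency_ms: float) -> float:
    """Compensate for the shooter's latency, but never by more than MAX_REWIND_MS."""
    return now_ms - min(shooter_latency_ms, MAX_REWIND_MS)


# Usage: a target moving at 0.1 units per ms, recorded every 50 ms.
history = PositionHistory()
for t in range(0, 1001, 50):
    history.record(t, t / 10)
for latency in (40, 250):
    print(f"{latency} ms ping -> target checked at x = {history.at(rewind_time(1000, latency))}")
```

A shooter with a 250ms ping is only rewound by 100ms here, so the remaining 150ms of delay is theirs to make up by leading the target.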

One thing that I want to stress again is that publishers and studios must put effort into server coverage for a quality experience. The reason some games have many players with very high pings is that there aren't enough servers in every region where the game is available. As a result, many players simply don't have access to servers with a ping below 150ms.

Hit registration

This is how a shooter determines if your shot hit something.

There are two forms of hit registration. The simple and fast one is called "hitscan," where bullets have no travel time. You are basically shooting lasers (Instagib forever!). This form of hitreg is usually used in shooters which have very small maps, as bullet travel time is not a factor in close quarters combat.
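Hitscan boils down to an instant ray test at the moment the trigger is pulled. Here is a minimal version that models the target's hitbox as a sphere; real games use capsules, boxes or per-bone hitboxes, so treat the sphere (and the function name) as assumptions:

```python
import math

Vec = tuple[float, float, float]


def hitscan(origin: Vec, direction: Vec, center: Vec, radius: float) -> bool:
    """Instant hit test: does the ray from origin along direction pass through the sphere?"""
    # Normalize the aim direction.
    length = math.sqrt(sum(c * c for c in direction))
    d = tuple(c / length for c in direction)
    # Vector from the muzzle to the target's center.
    oc = tuple(c - o for c, o in zip(center, origin))
    # Distance along the ray to the point closest to the target's center.
    t = sum(a * b for a, b in zip(oc, d))
    if t < 0:
        return False  # the target's center is behind the shooter
    # Squared distance from the target's center to that closest point.
    closest_sq = sum(c * c for c in oc) - t * t
    return closest_sq <= radius * radius


print(hitscan((0, 0, 0), (1, 0, 0), (10, 0.5, 0), 1.0))
print(hitscan((0, 0, 0), (1, 0, 0), (10, 2, 0), 1.0))
```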

Games like Battlefield use more complex and demanding bullet physics simulations for hit registration. Every projectile has a travel speed and a trajectory that is affected by factors such as gravity (bullet drop).
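By contrast, a projectile is stepped forward a little every tick. This sketch fires a bullet horizontally and applies only gravity (no drag, wind or other factors), using an assumed 60Hz step; it returns the flight time and how far the bullet has dropped by the time it reaches the target distance. The function name and numbers are hypothetical.

```python
G = 9.81  # m/s^2, gravity; drag is ignored in this sketch


def fly_to(distance_m: float, muzzle_speed: float, dt: float = 1 / 60) -> tuple[float, float]:
    """Step a bullet fired horizontally until it has covered distance_m.

    Returns (flight time in seconds, vertical drop in meters).
    """
    x = y = 0.0
    vx, vy = muzzle_speed, 0.0
    t = 0.0
    while x < distance_m:
        vy -= G * dt  # gravity pulls the bullet down a bit more every step
        x += vx * dt
        y += vy * dt
        t += dt
    return t, -y


flight_time, drop = fly_to(400, 800)
print(f"400 m at 800 m/s: about {flight_time:.2f} s of flight, {drop:.2f} m of drop")
```

A real implementation would also test the segment the bullet covers during each step against hitboxes, so fast bullets can't skip through a target between ticks.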

So that’s how a game can calculate hit registration. But where is that calculation done? There are a few options here, too.

Hit registration can be done by your game client (client side), which then tells the server (or in p2p, the other clients) that you hit something. While this provides almost pixel accurate hit registration, it is very risky because it makes it easy for hack developers to implement an aimbot or damage hack.

If a game does client side hit registration then it’s important to have the server do checks for every reported hit (client side, server authoritative).
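What those checks look like is up to each game. As a hedged illustration, here is a server-side plausibility check for a client-reported hit that rejects shots that are out of range, that place the target too far from where the server believes it was, or that come faster than the weapon can fire. The tolerances, field names and the idea of passing in the server's rewound target position are all assumptions for this sketch:

```python
import math
from dataclasses import dataclass

Vec = tuple[float, float, float]

# Hypothetical tolerances; real values depend on the game, weapon and latency budget.
MAX_RANGE_M = 150.0
MAX_POSITION_ERROR_M = 1.5


@dataclass
class HitReport:
    """What a client claims: 'at time_ms, from here, I hit that target over there'."""
    time_ms: float
    shooter_pos: Vec
    target_pos: Vec


def validate_hit(report: HitReport, server_target_pos: Vec, last_shot_ms: float, rpm: int) -> bool:
    """Server-side plausibility checks on a client-reported hit.

    server_target_pos is where the server believes the target was at report.time_ms,
    for example taken from a rewind history.
    """
    if math.dist(report.shooter_pos, report.target_pos) > MAX_RANGE_M:
        return False  # out of the weapon's range
    if math.dist(report.target_pos, server_target_pos) > MAX_POSITION_ERROR_M:
        return False  # the client claims the target was somewhere it wasn't
    if report.time_ms - last_shot_ms < 60_000 / rpm:
        return False  # faster than the weapon can fire
    return True


report = HitReport(time_ms=1000.0, shooter_pos=(0, 0, 0), target_pos=(50, 0, 0))
print(validate_hit(report, server_target_pos=(50.5, 0, 0), last_shot_ms=900.0, rpm=600))
```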

The classic hit registration method is to have the server do it. The client basically tells the server in which direction you fired a shot, and the server then figures out if that shot hit something. Sadly this doesn’t eliminate the hacker problem, but it makes life harder for cheat developers.

De-sync

In a multiplayer game, every player should see the same thing. A "de-sync" is when, for example, a door is open for one player, but closed for the other. This can happen when the game is suffering from a bug, performance issues, or something else goes wrong and there's a breakdown of communication between client and server or among clients. For example, your client may not be told about a state change at all, or may be told that a barrel was blown up, but not where it landed.
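One common way to detect a de-sync is to have each side compute a checksum of the relevant game state and compare them. The sketch below serializes a hypothetical state dictionary in a canonical form (sorted keys) before hashing it with SHA-256, so the same state always produces the same hash regardless of the order in which it was built:

```python
import hashlib
import json


def state_checksum(state: dict) -> str:
    """Hash a game state in a canonical form so identical states give identical hashes."""
    canonical = json.dumps(state, sort_keys=True, separators=(",", ":"))
    return hashlib.sha256(canonical.encode()).hexdigest()


# Hypothetical states: the door is open on the server but closed on the client.
server_state = {"door_7": "open", "barrel_3": {"destroyed": True, "pos": [12.0, 0.0, 4.5]}}
client_state = {"door_7": "closed", "barrel_3": {"destroyed": True, "pos": [12.0, 0.0, 4.5]}}
if state_checksum(server_state) != state_checksum(client_state):
    print("de-sync detected: client and server disagree about the world")
```

A real game would more likely hash a compact binary form of its state and compare checksums only every so often, since hashing everything every tick is expensive.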

On the next page, we'll list some common misconceptions about netcode, and wrap up with a summary of the most important ingredients for reliable online gaming.
