Microsoft HoloLens hands on: the promise and disappointment of AR

The Microsoft HoloLens is not what I think of when I hear the word “hologram.” “Hologram,” for me, brings a few very specific images to mind. A flickering blue Princess Leia, beamed out of R2-D2’s projector eye. Star Trek: The Next Generation’s Holodeck, real as life. For children of the 80s, the glam pop dazzle of Jem and the Holograms. And if you watched CNN’s US presidential election coverage in 2008, some freakish real-life hybrid of the technology of Star Wars and Jem.

Microsoft’s HoloLens doesn’t really fit any of those molds. What Microsoft calls holograms, most of us have been calling augmented reality for years: digital images overlaid onto our view of the real world. After trying on Microsoft’s headset, I came away impressed with the technology (especially if the final hardware really does integrate all of the HoloLens’ necessary battery and processing power into a wire-free device), but skeptical that it will ever facilitate great AR games in its current form.

The HoloLens AR demos

Various augmented reality headsets have popped up at CES over the years, but none have taken off as consumer products. Google Glass has come the closest, but it’s a narrower form of augmented reality than Microsoft is shooting for with the HoloLens.

Where Google Glass gives you a small heads-up display, HoloLens is a much more powerful integrated system, capable of running real applications beyond a heads-up display of the weather or your calendar. Microsoft showed us four demos on unfinished dev kit hardware, which looked more like a delicate, greebly headset than the svelte “final hardware” they showed off on stage at their keynote. The unfinished hardware also didn’t have the integrated battery or processing of the final unit. Those components resided in a five-or-so-pound pack I slung around my neck—not ideal, but not so heavy as to be uncomfortable in the short demo experiences. A cord kept me tethered to a power source, in an experience similar to the standing demo of the Oculus Rift Crescent Bay.

Microsoft’s demos were designed to showcase the various AR implementations of the HoloLens. The first I tried was the most exotic: called OnSite, it put me on a 3D-rendered surface of Mars. Microsoft designed OnSite with NASA’s JPL, and says this is software JPL is actually going to start using later this year. OnSite let me walk and look around the Martian surface, and use an exaggerated tapping gesture (the only physical gesture any of the HoloLens demos supported) to ping or “flag” wherever I was looking. The best part of the demo was seeing how the 3D image I was looking at compared to the data that NASA gets from the Curiosity rover.

I looked at a computer monitor while wearing the headset, and saw a dense 2D image that I couldn’t make much sense of. That same imagery rendered in 3D, however, gave me a great feel for the Martian surface. Then I did something surreal—I moved the mouse cursor off the screen and into the “real” world, where I could see it through the augmented reality display of the HoloLens. That was a trip, and hints at the broader potential of the headset. I hope it’s a practical tool for NASA—because as cool as that little moment was, exploring Mars in augmented reality isn’t nearly as exciting or immersive as it would be in VR.

Let me explain. A VR headset, like the Oculus Rift Crescent Bay, takes up your entire field of vision with a display. You see nothing but the virtual world you inhabit. There are two major downsides to VR: one, because the screen sits just in front of your eyes, you need a very high pixel density display for a clear image. Two, you’re limited to entirely virtual creations, with none of the cool potential of mixing the real and the digital.


Microsoft’s HoloLens, in its current form, only projects an AR image onto your eyes in a rectangular area in the center of your field of vision. If AR were baseball, HoloLens would basically be hitting the strike zone, but we have a whole lot of vision outside of that zone, and it’s disappointingly limiting to see these “holograms” in such a small portion of our field of view. On Mars, for example, I’d have to look around to see more of the surface in my small rectangular vision zone, while my eyes were distracted by the walls of the demo room outside of that area.

It’s not immersive at all. I understand that NASA values the software for utility, not immersion, but while I was looking around, I couldn’t help but think how much better standing on the surface of Mars would’ve been in the Oculus Rift.

The last demo I tried was a game called Holobuilder. Most people would call it Minecraft. At least, it looks like Minecraft. It’s not the full game, just a prototype that uses Minecraft pieces inside Holobuilder. The demo starts by scanning the room you’re in, then generating miniature voxel Minecraft scenery around the geometry of your room. Suddenly, there’s a Minecraft fortress on your coffee table, with a lake beneath it. When I used a voice command to switch to a digging tool, I could actually chip away at the material of a table and see through it to the virtual scenery below. It’s cool, and a little trippy, as long as you’re looking at things through the augmented reality rectangle.

The best part of the demo was the end, when the Microsoft rep encouraged me to dig through a wall and reveal that I could see through it. It made me want to walk through the wall to see more of the cave I’d just uncovered. And that’s the exciting potential of augmented reality: it can change the way you view, interact with, and think about a physical space.

But the limited size of the augmented reality area was still disappointing to me. There is a cool element of discovery in looking around, moving that viewing area across a room, and suddenly discovering something you didn’t see before out of the corner of your vision. But I’d gladly trade that for the scope and immersion of full-field-of-view AR.

Microsoft also demoed its 3D modeling program HoloStudio, which will allow you to 3D print the objects you craft. I think it could be an incredible playing and learning tool for kids, but it doesn’t offer the fidelity needed for real, professional 3D modeling. Weirdly enough, it was the most mundane demo that felt like the best use case for augmented reality. And that demo was Skype.

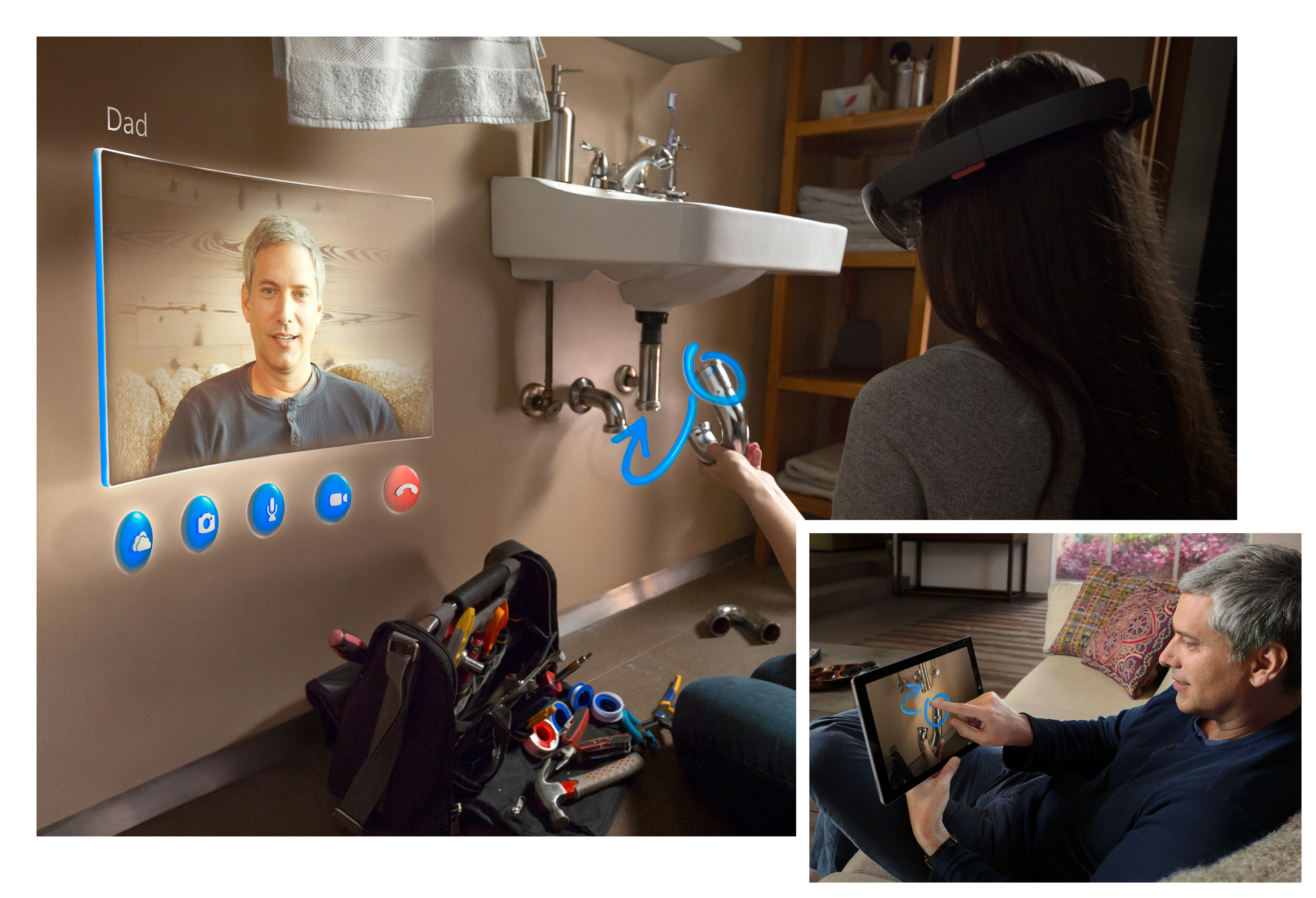

I talked to a Microsoft engineer on Skype, who showed up in my augmented reality field of view as a floating video feed. I could pin that window anywhere in space and look back at it to see her talk. Meanwhile, she could see everything I was looking at, and talked me through the process of installing a light switch. She could also draw things on her Surface tablet screen and have them appear in front of my eyes, which she did multiple times to point to a certain tool or a wire I needed to connect.

Mundane as a Skype call might be, this perfectly demonstrated how practical augmented reality can be, and how it can transform a familiar technology into something more useful and fun.

A hard road for AR

At this point, I’m sold on virtual reality as a great user experience for games and 3D videos and photos. The feeling of “presence” Oculus has attained with its latest headset is incredible. I’m not sold on AR in its current form in the HoloLens. It’s by far the best AR headset I’ve ever seen, but the view is disappointingly limited, and all the demos I saw were too brief, and too staged, for me to get a feel for everyday use of this headset.

Then there are the looming questions. What's the deal with Windows Holographic? How much will HoloLens cost? How long will the battery last? When is it coming out? Who’s making software for it?

I believe Michael Abrash said that AR technology is even more difficult to realize than VR, and I’m skeptical that the HoloLens will be the device to really and truly make it work. But it’s a promising step in that direction. It’s up to the software to give us a reason to use it.

And we probably shouldn't be calling this stuff holograms. That can only lead to disappointment.

Wes has been covering games and hardware for more than 10 years, first at tech sites like The Wirecutter and Tested before joining the PC Gamer team in 2014. Wes plays a little bit of everything, but he'll always jump at the chance to cover emulation and Japanese games.

When he's not obsessively optimizing and re-optimizing a tangle of conveyor belts in Satisfactory (it's really becoming a problem), he's probably playing a 20-year-old Final Fantasy or some opaque ASCII roguelike. With a focus on writing and editing features, he seeks out personal stories and in-depth histories from the corners of PC gaming and its niche communities. 50% pizza by volume (deep dish, to be specific).