Nvidia launches several new GameWorks libraries

Nvidia Flow and Rigid Body Physics

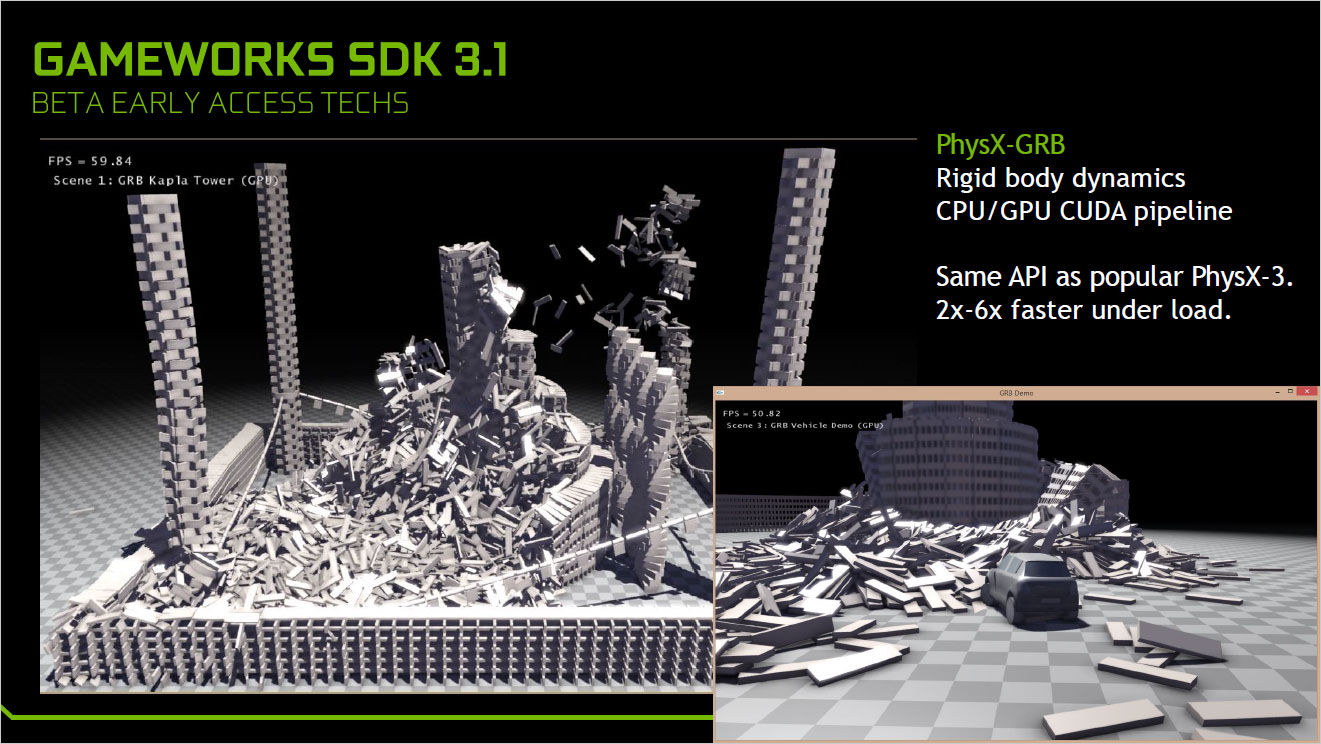

Besides the released libraries, some of which are available on GitHub with full source code (e.g., Volumetric Lighting), Nvidia has a couple of new beta additions in the 3.2 SDK. The first is Nvidia Flow, an updated library for fluid, fire, smoke, and other effects that's slated to be integrated into Unreal Engine 4 in Q2 2016. The second is a rigid body physics library, which has the same API as PhysX 3 but improves performance by 2x-6x under load.

What about VR Works?

Somewhat related to all of this is a discussion of Nvidia's VR Works libraries. Originally part of GameWorks, they have since been separated into their own SDK. We've discussed these previously, and other than the name change most of the previous information remains accurate. What's new is that several VR titles in the works are making use of the VR Works libraries, and one of the most interesting features is multi-resolution shading.

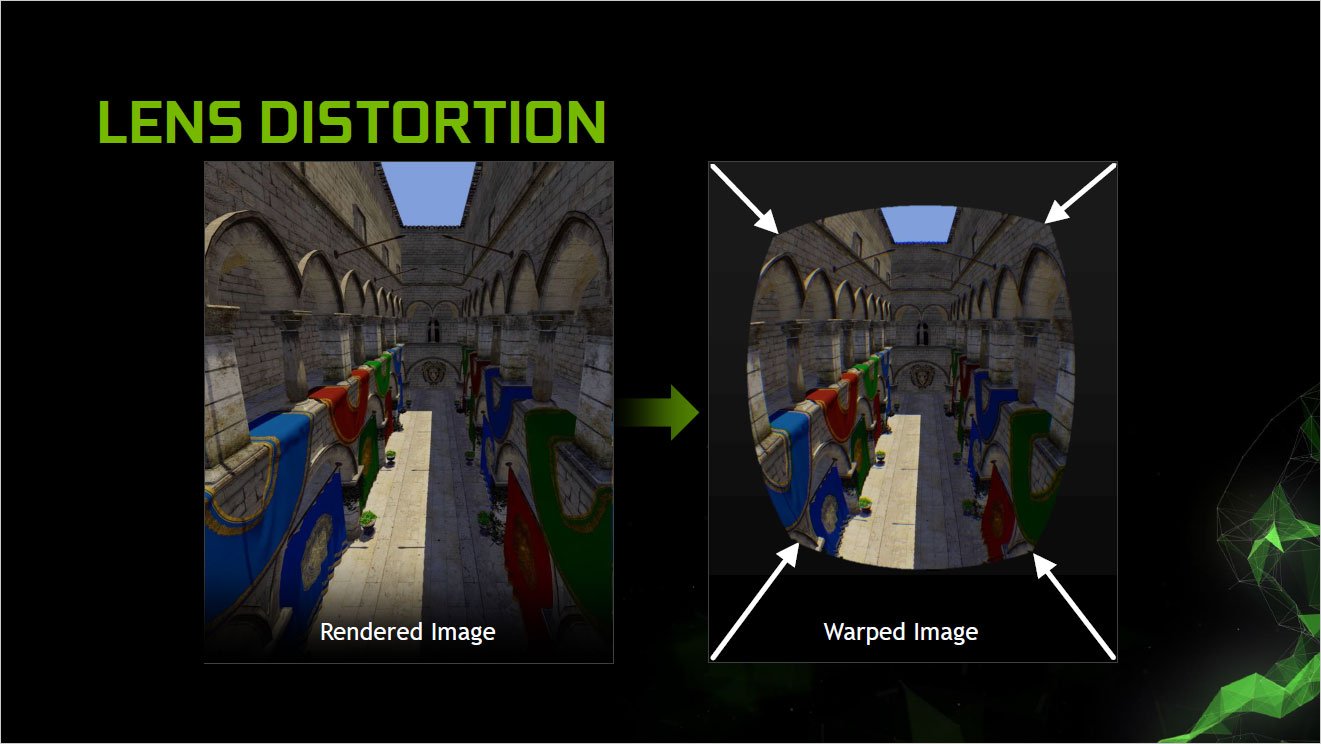

To understand why multi-res shading is useful, you need to look at how VR is being rendered for the current crop of headsets. Above, you can see the before and after images for VR that's projected through lenses. It starts with two standard images, one per eye, which are then warped so that they look "correct" when viewed through the lenses of the VR kit. What Nvidia found is that their multi-projection engine could be used to split the image into nine viewports, each of which could be rendered without having to recalculate everything. Since the warping of the image ends up losing a lot of data toward the edges, they can "pre-warp" each of the edge viewports and end up reducing the number of pixels rendered by 30%. I've had the chance to look at VR scenes and switch between standard and multi-res views, and I can't tell the difference. It basically allows developers to use the GPU to do other cool stuff instead of rendering unnecessary pixels.
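To get a feel for where the savings come from, here's a rough back-of-the-envelope sketch in Python. It is not Nvidia's API or its actual settings: the function names, the `center_frac` parameter (how much of each axis the full-resolution center viewport covers), and the `edge_scale` parameter (the resolution scale applied to the outer viewports along each shrunken axis) are all made up for illustration, and the model simply assumes a symmetric 3x3 grid.

```python
def multires_pixel_count(width, height, center_frac=0.6, edge_scale=0.6):
    """Estimate pixels shaded for one eye with a 3x3 viewport split.

    Hypothetical model: the center viewport keeps full resolution, while
    the edge rows/columns are rendered at `edge_scale` of full resolution
    along the axis where they sit at the edge (corners shrink in both).
    """
    edge_w = width * (1 - center_frac) / 2
    edge_h = height * (1 - center_frac) / 2
    # (size in full-res pixels, resolution scale) for each column and row
    cols = [(edge_w, edge_scale), (width * center_frac, 1.0), (edge_w, edge_scale)]
    rows = [(edge_h, edge_scale), (height * center_frac, 1.0), (edge_h, edge_scale)]

    total = 0.0
    for h, sy in rows:
        for w, sx in cols:
            total += (w * sx) * (h * sy)  # pixels actually shaded in this viewport
    return total


def multires_savings(width, height, center_frac=0.6, edge_scale=0.6):
    """Fraction of pixels saved compared with rendering at full resolution."""
    full = width * height
    return 1 - multires_pixel_count(width, height, center_frac, edge_scale) / full


if __name__ == "__main__":
    # Illustrative per-eye resolution; the savings fraction doesn't depend on it.
    print(f"{multires_savings(1000, 1000):.1%} fewer pixels shaded")
```

With these invented values the model lands at a bit over 29% fewer pixels, which is in the same ballpark as the 30% figure quoted above; a more aggressive `edge_scale` or a smaller `center_frac` would save more at the cost of edge detail.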

Case in point is the VR Everest demo, created using Unreal Engine 4. It uses multi-res shading (which is part of UE4), and the experience has you climbing the final few segments to the top of Mt. Everest. I was able to check out Solfar's demo (twice), and it's awe-inspiring, and all without the risk of dying! The second time, I was a bit more daring and walked off the edge, but sadly I just ended up floating in the air, which is perhaps a better idea than a vomit comet down the mountainside. Solfar was apparently able to take the savings from multi-res shading and use them to enable Nvidia's turbulence effect for the blowing snow, which is pretty neat as well. I'm still not sure what the final "game" will be like, but if done properly Everest VR could be an amazing experience.

The other element of VR Works that's likely to see a lot of use is VR SLI. This is slated to be one of the easiest ways to utilize multiple graphics cards in the near future, as developers will be able to render the two images necessary for VR on two GPUs, one per eye, without the latency penalty normally associated with SLI and alternate frame rendering. AMD is doing something similar with LiquidVR on their GPUs, and Unreal Engine, Unity, and Valve have all pledged support for VR SLI. That's good news for anyone who happens to have a minimum spec Oculus VR PC, as it means upgrading to a second GPU in the future could easily give you near-perfect performance scaling.



Jarred's love of computers dates back to the dark ages when his dad brought home a DOS 2.3 PC and he left his C-64 behind. He eventually built his first custom PC in 1990 with a 286 12MHz, only to discover it was already woefully outdated when Wing Commander was released a few months later. He holds a BS in Computer Science from Brigham Young University and has been working as a tech journalist since 2004, writing for AnandTech, Maximum PC, and PC Gamer. From the first S3 Virge '3D decelerators' to today's GPUs, Jarred keeps up with all the latest graphics trends and is the one to ask about game performance.
