Hackers hack at unhackable new chip for three months. Chip remains unhacked

University of Michigan's Morpheus chip has passed its stiffest security test so far.

The dangerously titled 'unhackable' processor has survived its biggest hacking test remarkably unscathed. Created by the University of Michigan (UoM), the Morpheus chip design has now been attacked by more than 500 cybersecurity researchers, who went at it resolutely for three months straight. Just imagine what that room smelled like by the end...

But in all that time, not one of them managed to exploit it. Now, three months without a successful attack doesn't necessarily mean the chip really is completely unhackable, but it certainly suggests it's pretty damned secure.

Naming a chip 'unhackable', much like a certain GPU limiter we could mention, is tantamount to begging someone to come along and crack it, and that is exactly what the UoM invited by putting Morpheus into DARPA's Finding Exploits to Thwart Tampering (FETT) Bug Bounty hunt.

Bounty hunting. FETT. Cute, eh? The research was also supported by DARPA's SSITH program.

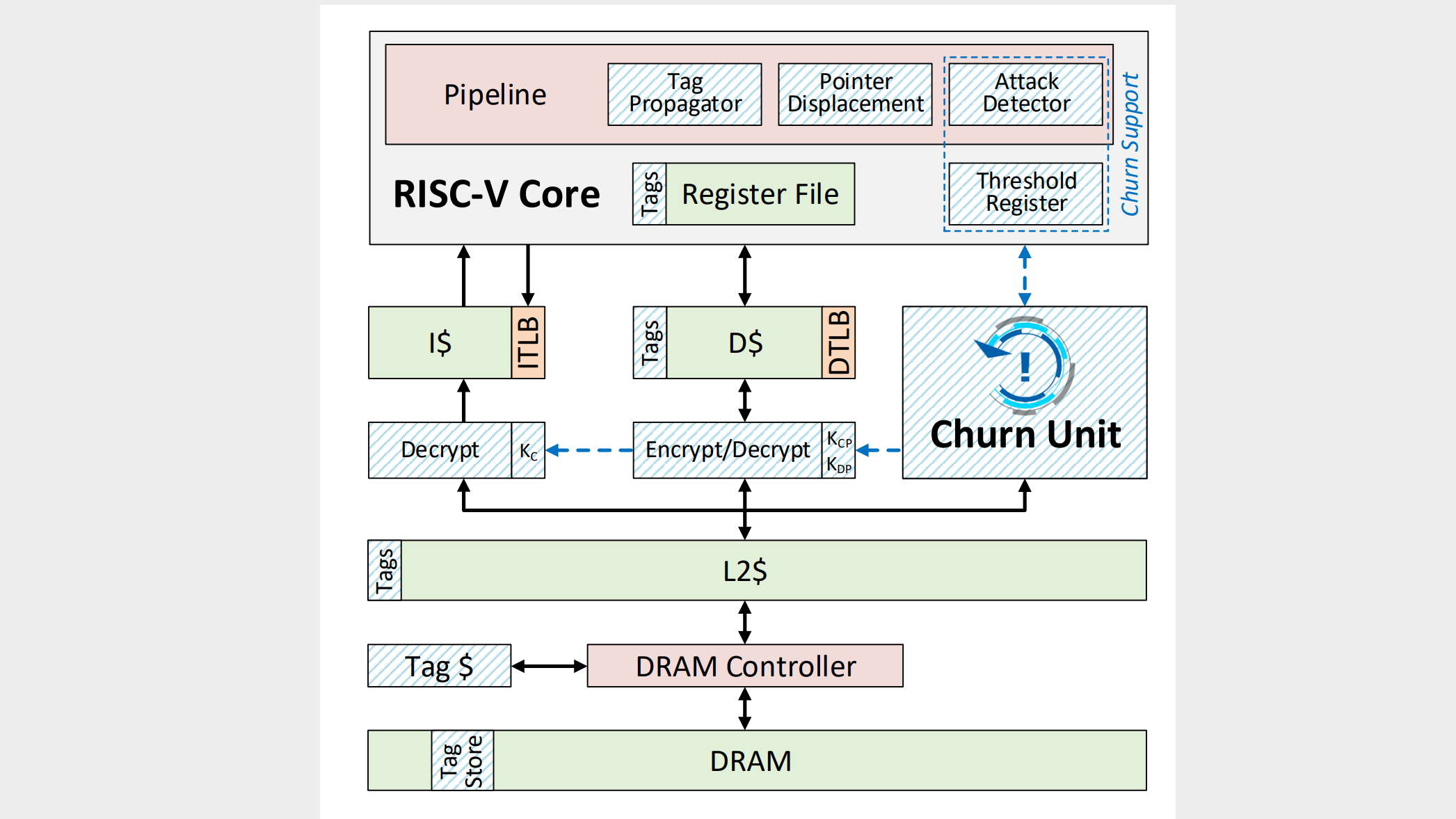

Anyway, the bug-hunting hackathon ran from June through August last year, and the RISC-V-based chip resisted every attempt to compromise it.

The Morpheus design uses a mixture of 'encryption and churn': it first randomly obfuscates key data points, such as the location, format, and content of a program's code, and then re-randomises them all while the system is running.
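The article doesn't go into Morpheus's internals, so what follows is only a toy Python sketch of the general 'encryption and churn' idea, not the chip's actual mechanism. Values are stored under raw addresses that have been randomly displaced and XOR-masked (a crude stand-in for real encryption), and a `churn()` call draws fresh secrets and re-encodes everything. The class, its methods, and the 32-bit address space are all hypothetical, invented purely for illustration.

```python
from __future__ import annotations

import secrets

MASK = 0xFFFFFFFF  # hypothetical 32-bit address space


class ToyChurningStore:
    """Toy illustration of 'encryption and churn' (NOT the Morpheus design).

    Values live under randomly displaced, XOR-masked raw addresses.
    churn() draws fresh secrets and re-encodes every entry, so a raw
    address leaked before the churn no longer points at the data.
    """

    def __init__(self) -> None:
        self._cells: dict[int, int] = {}
        self._new_secrets()

    def _new_secrets(self) -> None:
        self._offset = secrets.randbits(32)  # hypothetical random displacement
        self._key = secrets.randbits(32)     # XOR mask standing in for encryption

    def _encode(self, logical_addr: int) -> int:
        return ((logical_addr + self._offset) & MASK) ^ self._key

    def _decode(self, raw_addr: int) -> int:
        return ((raw_addr ^ self._key) - self._offset) & MASK

    def write(self, logical_addr: int, value: int) -> None:
        self._cells[self._encode(logical_addr)] = value

    def read(self, logical_addr: int) -> int:
        # Well-behaved code only ever uses logical addresses.
        return self._cells[self._encode(logical_addr)]

    def raw_addresses(self) -> list[int]:
        # What an attacker who leaks the underlying layout would see.
        return list(self._cells)

    def read_raw(self, raw_addr: int) -> int | None:
        # An attack that replays a previously leaked raw address.
        return self._cells.get(raw_addr)

    def churn(self) -> None:
        # Decode with the old secrets, draw new ones, re-encode everything.
        logical = {self._decode(raw): v for raw, v in self._cells.items()}
        self._new_secrets()
        self._cells = {self._encode(a): v for a, v in logical.items()}


store = ToyChurningStore()
store.write(0x1000, 42)
leaked = store.raw_addresses()[0]
print(store.read_raw(leaked))  # the leak works before churn: 42
store.churn()
print(store.read(0x1000))      # the program is unaffected: 42
print(store.read_raw(leaked))  # almost certainly None now
```

The 'program' here only ever goes through `read()` and `write()` with logical addresses, so the churn never breaks it, while the leaked raw address goes stale. Rebuilding a Python dictionary is, of course, nothing like how a processor would do this in hardware.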

"Imagine trying to solve a Rubik’s Cube that rearranges itself every time you blink," says team lead Todd Austin (via Hexus). "That’s what hackers are up against with Morpheus. It makes the computer an unsolvable puzzle.

"Developers are constantly writing code, and as long as there is new code, there will be new bugs and security vulnerabilities. With Morpheus, even if a hacker finds a bug, the information needed to exploit it vanishes within milliseconds. It’s perhaps the closest thing to a future-proof secure system."

This level of obfuscation and churn might sound like an absolute nightmare to code for, or even to use, but Austin claims the design is completely transparent to both software developers and users, because all the randomisation is applied to what are known as 'undefined semantics': implementation details, such as where code and data actually sit in memory, that a correctly written program never relies on.

What's more, churning those data points reportedly has a negligible impact on the system's actual performance, with the architecture paper (PDF warning) itself claiming a performance hit of just one percent.

So far, it's looking good for the 'unhackable' chip then.

Dave has been gaming since the days of Zaxxon and Lady Bug on the ColecoVision, and code books for the Commodore VIC-20 (Death Race 2000!). He built his first gaming PC at the tender age of 16, and finally finished bug-fixing the Cyrix-based system around a year later. When he dropped it out of the window. He first started writing for Official PlayStation Magazine and Xbox World many decades ago, then moved onto PC Format full-time, then PC Gamer, TechRadar, and T3 among others. Now he's back, writing about the nightmarish graphics card market, CPUs with more cores than sense, gaming laptops hotter than the sun, and SSDs more capacious than a Cybertruck.
