Nvidia talks DX12, including GameWorks support

More tools and libraries for the low-level API, and better performance (for Nvidia).

Yesterday, Nvidia announced its new GTX 1080 Ti. That was the star of the show, but Nvidia covered a lot of other information as well, most of it not as sexy or glamorous as a new graphics card. Among the announcements was increased support for DX12, and it's the first time Nvidia has really started talking about DX12 performance. AMD, in contrast, has had plenty to say about DX12 over the past couple of years, and in many of the early DX12 titles AMD has benefited more than Nvidia. It took a while, but Nvidia is gearing up to fight back.

The low-level vs. high-level debate

Nvidia points out that the age-old debate between low-level and high-level APIs goes back several decades. Right now we're talking about OpenGL/DX11 versus Vulkan/DX12, but in the 80s people were arguing about PHIGS versus IRIS GL.

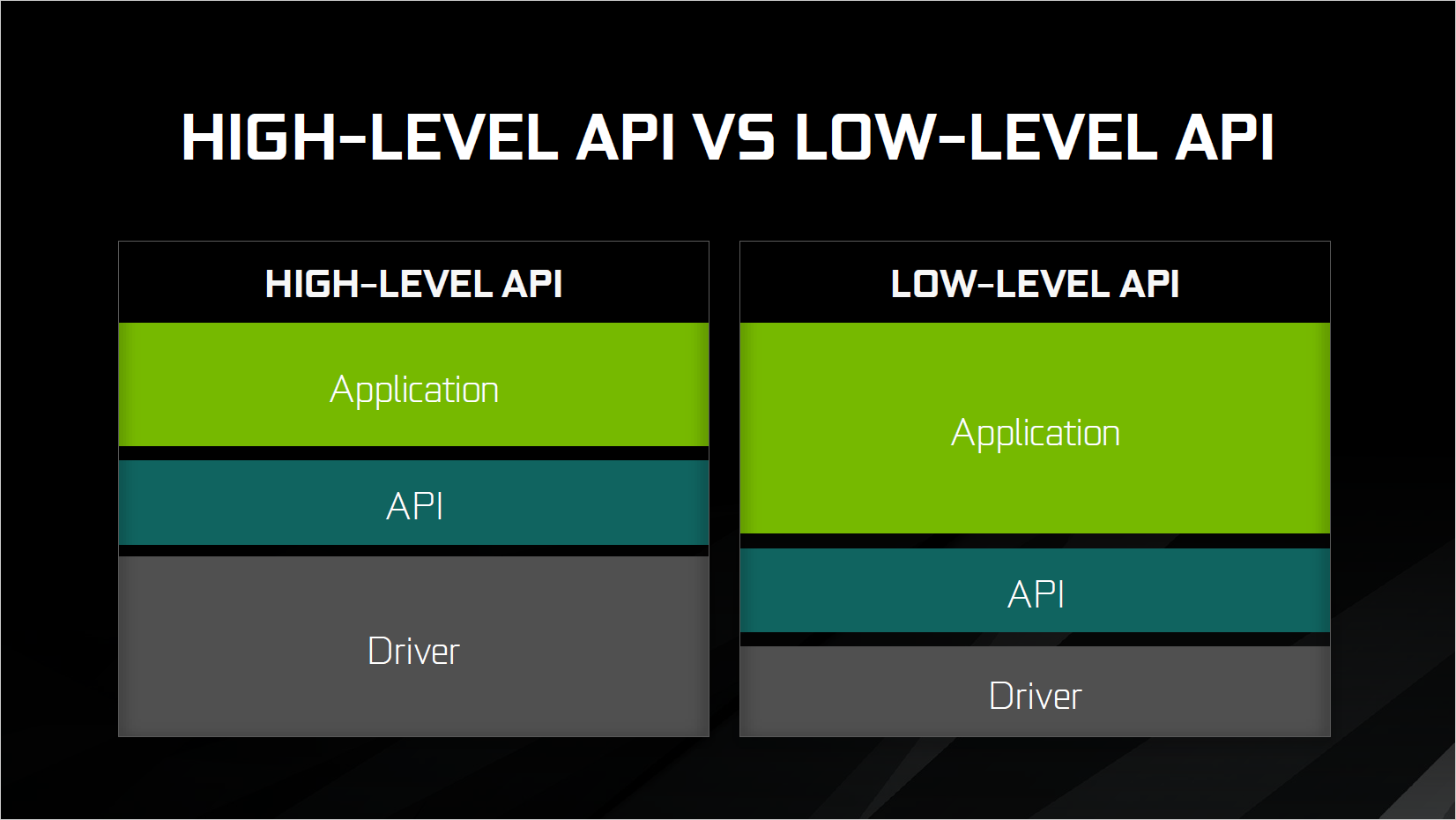

The key point Nvidia makes is that high-level APIs allow drivers to optimize performance, while low-level APIs restrict driver optimizations while in turn providing software developers more tools and flexibility. Nvidia gives the above representation of responsibilities in high-level vs. low-level APIs. The API work remains largely the same—passing calls between applications and the hardware drivers—but the amount of work involved in the drivers shifts over to work in the application. How much that balance shifts is open to debate, but inherently there will be more application developer support required to extract good performance from a low-level API.

Talking specifically about DX11 vs. DX12, Nvidia notes that DX11 has had hundreds of man-years of work put into optimization of the drivers. Most of the resource management is handled by the drivers, there are mature tools, and things like multi-GPU support can be implemented with good returns (with a small amount of developer support). In contrast, DX12 offers unprecedented levels of control over the hardware, with asynchronous compute and advanced dispatch and synchronization features, including multiple command queues. DX12 helps developers extract more performance from both high-end multi-core processors and low-end budget CPUs. Multi-GPU support is also available in varying forms, with the lowest level being explicit control over what work is done on each GPU.
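To make the multi-core point a bit more concrete, here's a toy model of the general pattern, not actual Direct3D 12 code. Each worker thread records its own list of draw commands for part of the scene, and the finished lists are then submitted to a single queue in a fixed order. The names `record_command_list` and `build_frame` are hypothetical and exist only for this illustration. A real engine would be doing this in C++ against the DX12 API.

```python
from concurrent.futures import ThreadPoolExecutor


def record_command_list(chunk):
    """Hypothetical stand-in for recording one command list on a worker thread."""
    return [f"draw({obj})" for obj in chunk]


def build_frame(objects, workers=4):
    """Record command lists in parallel, then submit them in a fixed order."""
    # Split the scene into one contiguous chunk per worker (ceiling division).
    size = max(1, -(-len(objects) // workers))
    chunks = [objects[i:i + size] for i in range(0, len(objects), size)]

    with ThreadPoolExecutor(max_workers=workers) as pool:
        # pool.map returns results in chunk order, regardless of which
        # thread happens to finish first.
        command_lists = list(pool.map(record_command_list, chunks))

    # "Submission": flatten the recorded lists, in order, into one queue.
    queue = [cmd for command_list in command_lists for cmd in command_list]
    return command_lists, queue


if __name__ == "__main__":
    scene = [f"obj{i}" for i in range(10)]
    lists, queue = build_frame(scene, workers=4)
    print(len(lists), "command lists,", len(queue), "commands submitted")
```

The recording happens in parallel, but the submission order stays deterministic. In this sketch the application decides how to split up the work and in what order to submit it, which is the kind of bookkeeping a DX11 driver would otherwise handle for you.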

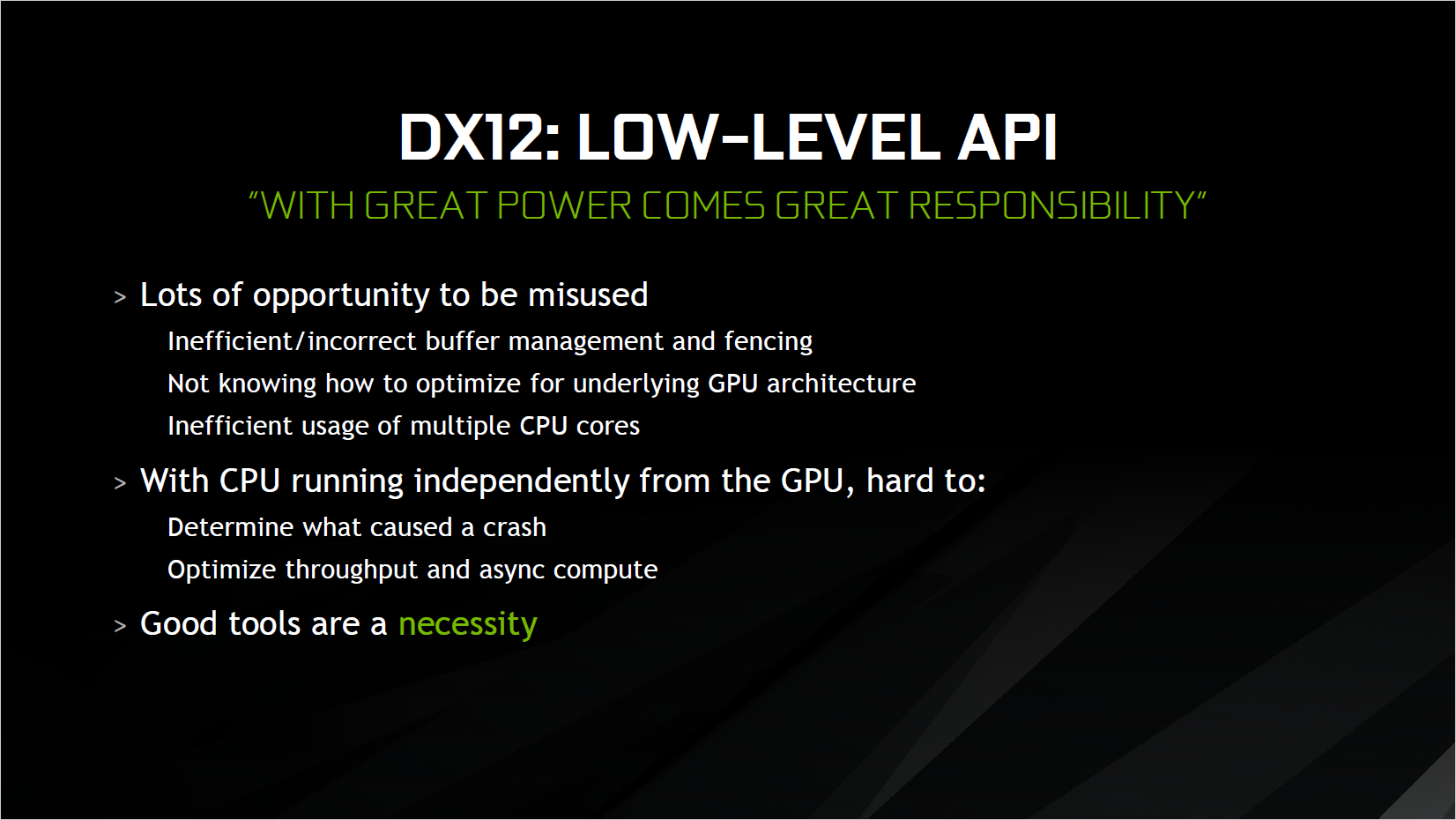

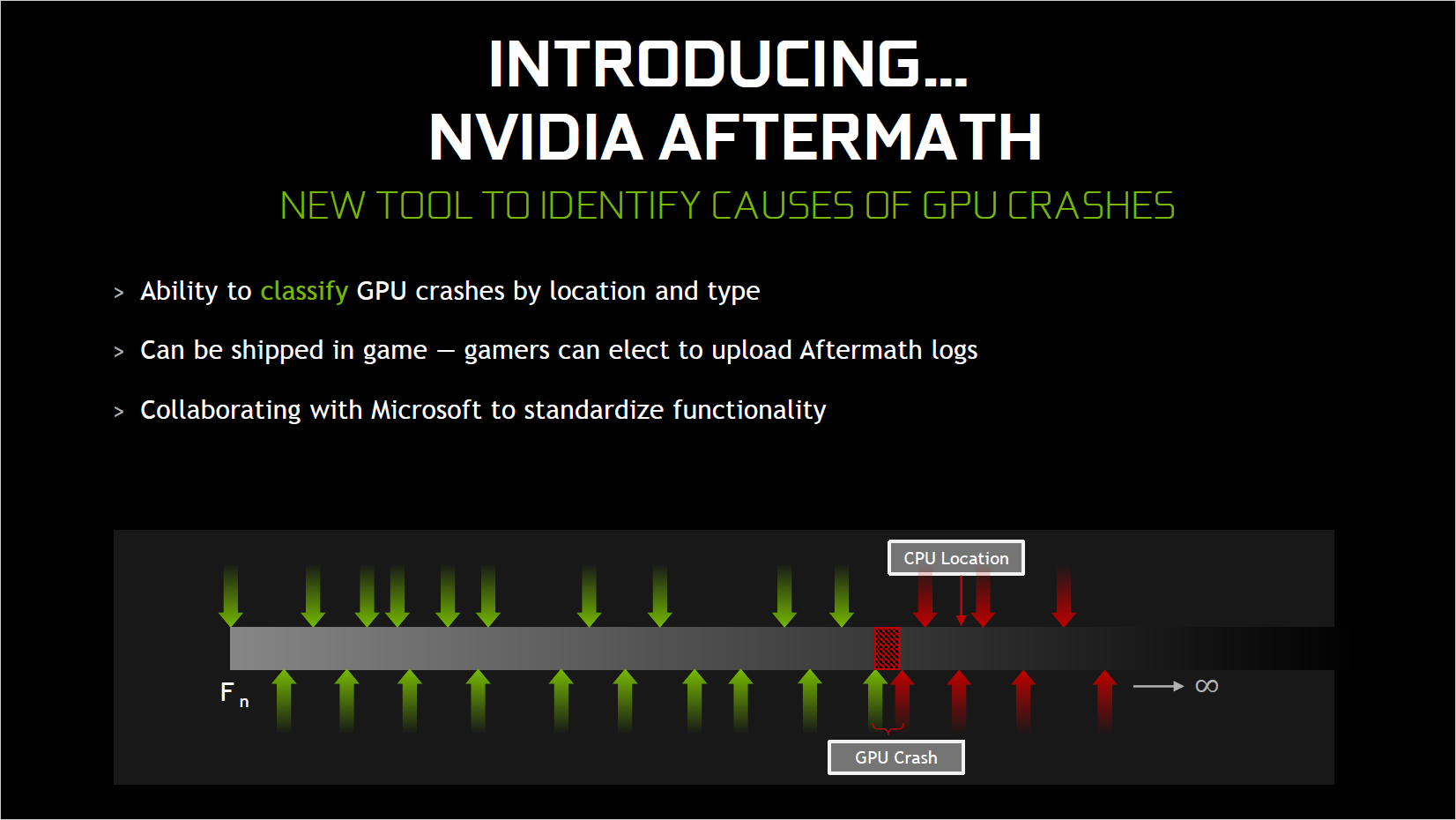

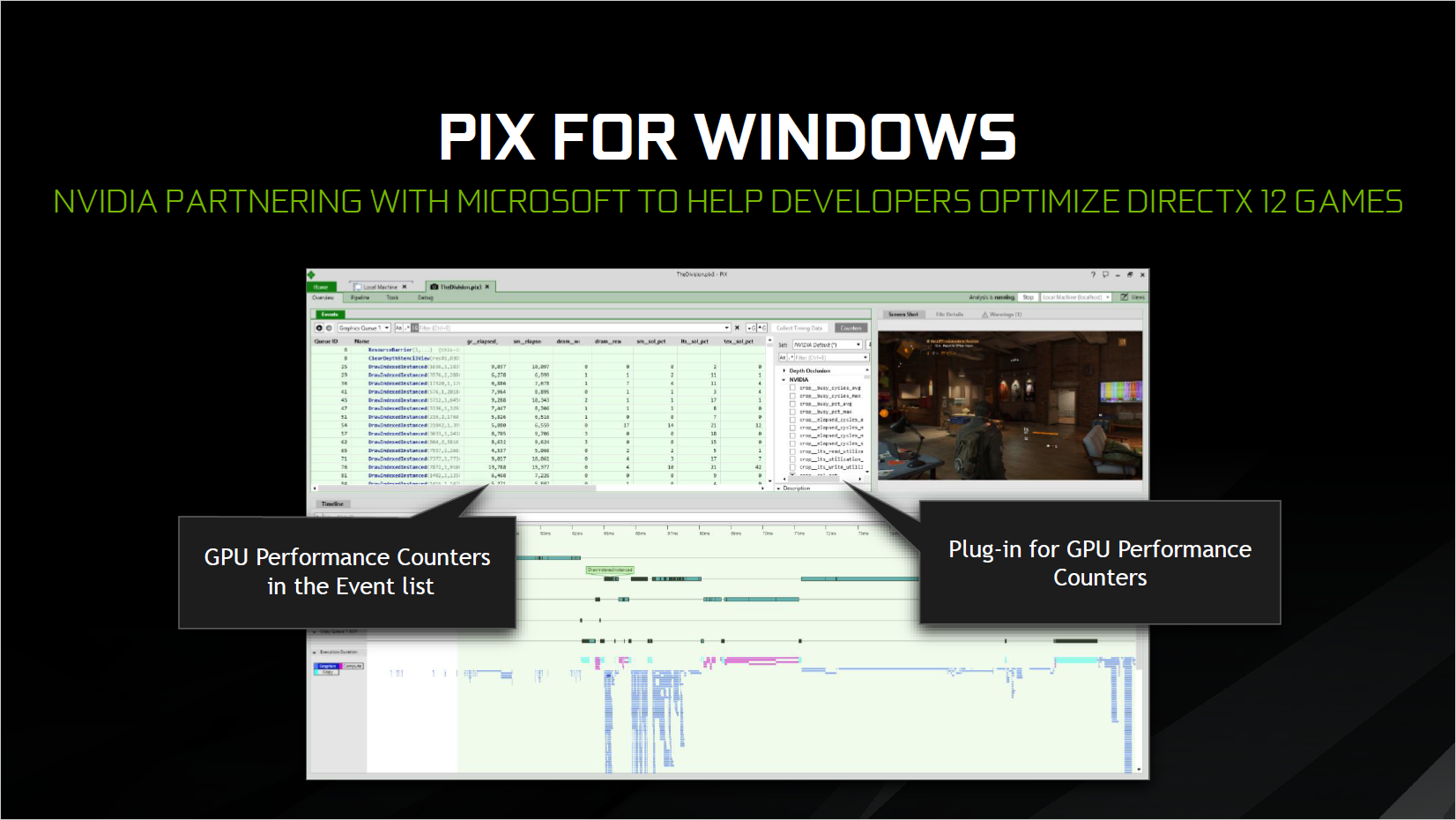

The problem with all of this low-level access is that developers are given plenty of rope with which to hang themselves. They need to optimize properly for the underlying architectures, manage buffers and work queues appropriately, and distribute work across multiple CPU cores. And when something inevitably goes wrong, figuring out the root cause becomes more complex. To help developers, Nvidia announced new tools for software development that will all work with DX12: Nsight, Nvidia Aftermath, and PIX for Windows.

I don't want to get into the details, mostly because they get boring if you're not actually doing application development, but the three slides above cover the basics.

Improved Nvidia DX12 performance

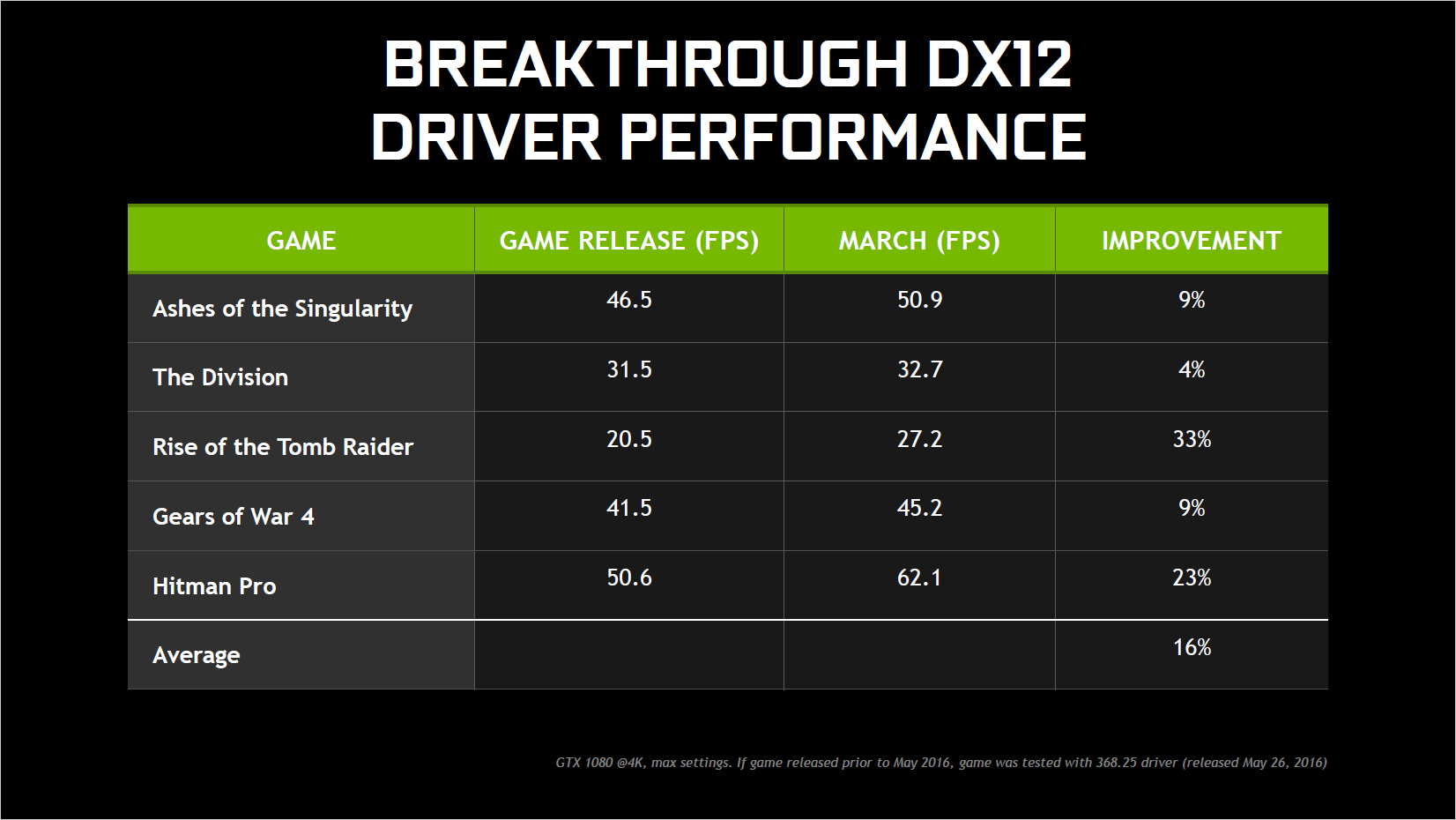

Moving on from development tools, Nvidia next talked about improving performance for DX12 games. Through a combination of driver tweaks, an understanding of the underlying Nvidia GPU architecture, and direct support of game developers, Nvidia has worked to improve its performance in many DX12 games. Compared to the initial DX12 performance, Nvidia provided the following figures:

An average performance increase of 16 percent is great to see, but of course there are a lot more DX12 games than the above five. Civilization VI, Total War: Warhammer, Deus Ex: Mankind Divided, and most recently Sniper Elite 4 all support DX12…and all three are part of AMD's Gaming Evolved initiative. There are also half a dozen Microsoft published DX12-exclusive games. But where did the above gains come from?

The majority of the work appears to have come from the application side of the equation, meaning Nvidia has helped companies like DICE better optimize their DX12 support for Nvidia GPUs. That entails having Nvidia engineers on-site with the game developers, and in the case of Battlefield 1, Nvidia showed DX12 performance improving by about 75 percent from the alpha stage in August 2016 through the release in October 2016.
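For a sense of scale, here's a quick sketch of what a 75 percent gain means in frame rates and frame times. The 40 fps starting point is a made-up number for illustration only, not a figure from Nvidia's slides, and the helper names are hypothetical.

```python
def percent_gain(before_fps, after_fps):
    """Percentage improvement going from before_fps to after_fps."""
    return (after_fps - before_fps) / before_fps * 100.0


def frame_time_ms(fps):
    """Average time spent on each frame, in milliseconds."""
    return 1000.0 / fps


if __name__ == "__main__":
    alpha_fps = 40.0                  # hypothetical baseline, not Nvidia's data
    release_fps = alpha_fps * 1.75    # a 75 percent improvement
    print(f"gain: {percent_gain(alpha_fps, release_fps):.0f} percent")
    print(f"frame time: {frame_time_ms(alpha_fps):.1f} ms -> "
          f"{frame_time_ms(release_fps):.1f} ms")
```

A 75 percent higher frame rate works out to about 43 percent less time spent on each frame, which is another way of looking at how much optimization happened between alpha and release.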

Going forward, the Unreal Engine 4, Unity, and Amazon Lumberyard game engines all include support for DX12. It would have been great to see Nvidia look at more than just a handful of titles, and with more DX12 games planned for the future this is definitely a topic Nvidia wants to address. Which brings me to the final topic.

GameWorks DX12

GameWorks is Nvidia's library of effects and tools for software developers. Most of the GameWorks library has been developed for DX11, but with DX12 seeing increased uptake, Nvidia has now ported most of the libraries to DX12. CEO Jen-Hsun Huang, in his 1080 Ti announcement, said that "all GameWorks libraries are now available for DX12." I'm not sure if that's entirely accurate, though the long-term goal is to have all the libraries available. HairWorks, Turf Effects, Flex, Flow, and VRWorks are definitely part of the DX12 libraries. In total, GameWorks represents over 500 man-years of labor by Nvidia engineers.

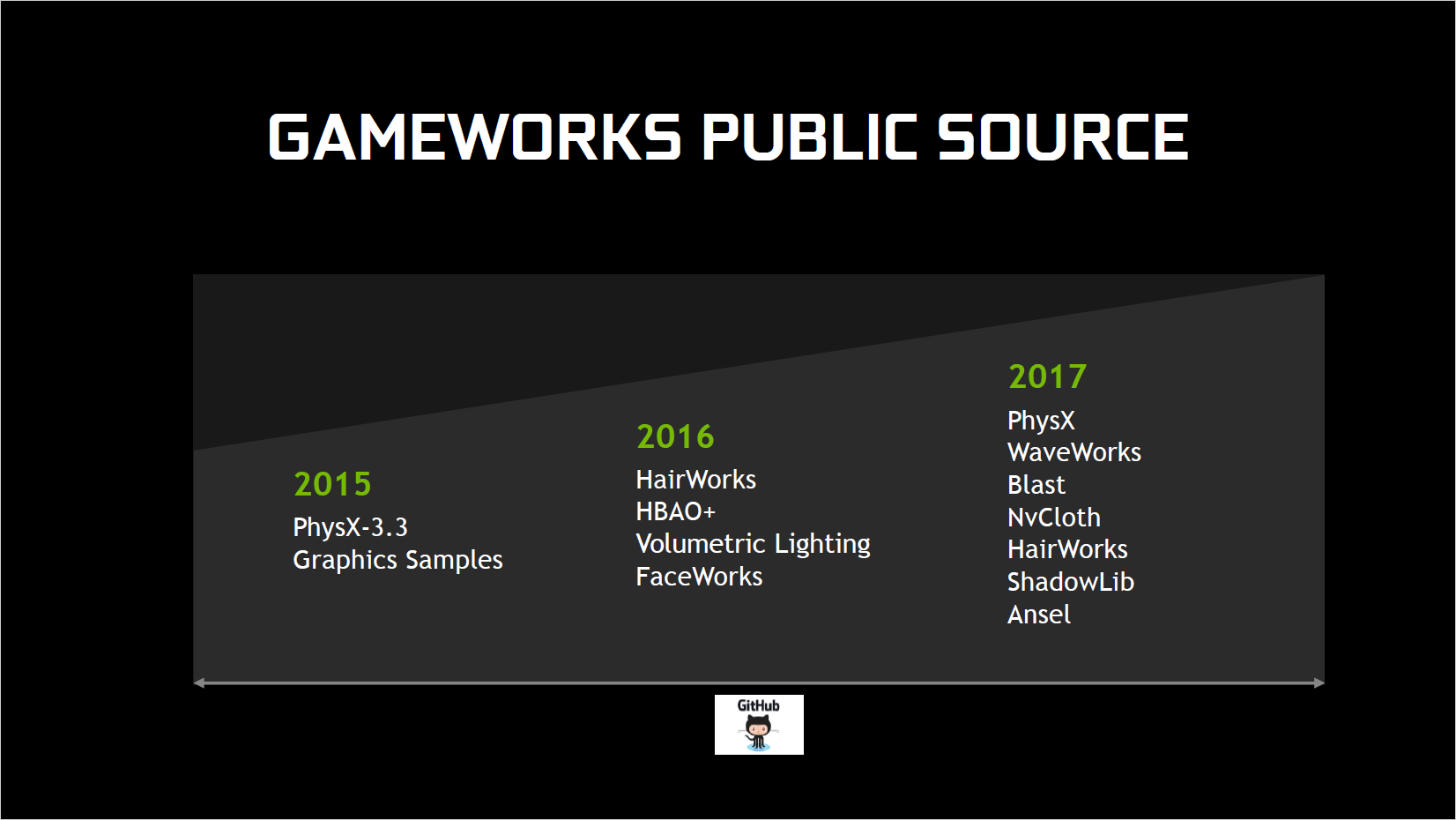

Nvidia announced some new features for existing GameWorks libraries, including improved GPU rigid body support in PhysX. Nvidia also discussed how it's putting even more content into publicly available source code on GitHub, including Ansel, WaveWorks, Blast, and NvCloth (all new GitHub additions for 2017). Related to this is improved application integration for some of Nvidia's GeForce Experience features like ShadowPlay. The upcoming game Lawbreakers, for example, integrates ShadowPlay and will automatically trigger saves (which you can later reject) whenever certain events occur, like killing sprees.

GameWorks is also the subject of much hand-wringing and lamentation when it comes to new games that have serious performance issues. The problem as I see it is that some people see certain graphics options and assume that just because they're present, the effects need to be enabled. On certain hardware configurations (sometimes AMD GPUs, sometimes older Nvidia GPUs), that can lead to a major drop in performance. But GameWorks isn't the only thing to cause this sort of problem. Just look at some of the graphics effects in Deus Ex: Mankind Divided.

So who's to blame, Nvidia or the developers? I put GameWorks into the same category as DX12 support: it's the whole "with great power comes great responsibility" business mentioned above. If a developer uses GameWorks and ships a buggy game that doesn't run well, that's on them, just like if they have a DX12 implementation that runs like molasses on an Nvidia GPU but not on an AMD GPU. So really, DX12 and GameWorks end up being two sides of the same coin, and Nvidia continues to try to cash in on both. Just don't be surprised if your 2-year-old graphics card doesn't seem to benefit as much as the new 1080 Ti.

Jarred's love of computers dates back to the dark ages when his dad brought home a DOS 2.3 PC and he left his C-64 behind. He eventually built his first custom PC in 1990 with a 286 12MHz, only to discover it was already woefully outdated when Wing Commander was released a few months later. He holds a BS in Computer Science from Brigham Young University and has been working as a tech journalist since 2004, writing for AnandTech, Maximum PC, and PC Gamer. From the first S3 Virge '3D decelerators' to today's GPUs, Jarred keeps up with all the latest graphics trends and is the one to ask about game performance.