First Grammarly cloned me without permission. Then another AI company asked if it could do the same—for $2,000

Welcome to writing about computer games in 2026.

The ways in which AI has made our lives worse in the last few years feel, on some level, personal. The RAMpocalypse and Nvidia's pivot to AI datacenters have made PC gaming a dramatically less affordable hobby. Google is rewriting journalists' headlines to make them worse while also scraping our work into "AI overviews" that make it harder for our website to survive. Even DLSS 5 has soured developers on a popular technology by slopping on a sheen of generic AI gloss.

This is all stuff I care about, but none of it was explicitly about me until two weeks ago, when I found out an AI company was selling a product with my name on it.

The San Francisco of 2026 is a certain kind of dystopia. Not violent or ugly, as cable news may try to convince you—the city I've lived in for 14 years is still, on sunny days like March 6, staggeringly beautiful—but when you're out and about as I was that day, sitting in a Blue Bottle coffee shop for example, almost every conversation around you will be about AI. I was trying to ignore half a dozen techie dudes on my left talking about LLMs and another duo on my right saying something about agentic potential. I glanced up as a woman entered wearing a black baseball cap with the phrase "GPU poor" on it.

Then I looked down, scrolled through my Bluesky feed, and saw a screenshot of a Verge article by former PC Gamer contributor Stevie Bonifield about an AI company turning journalists into fake "editors."

One name in particular caught my eye: Mine.

Uh excuse me what the fuck

— @wes.readonlymemo.com (@wes.readonlymemo.com.bsky.social) 2026-03-20T22:00:53.604Z

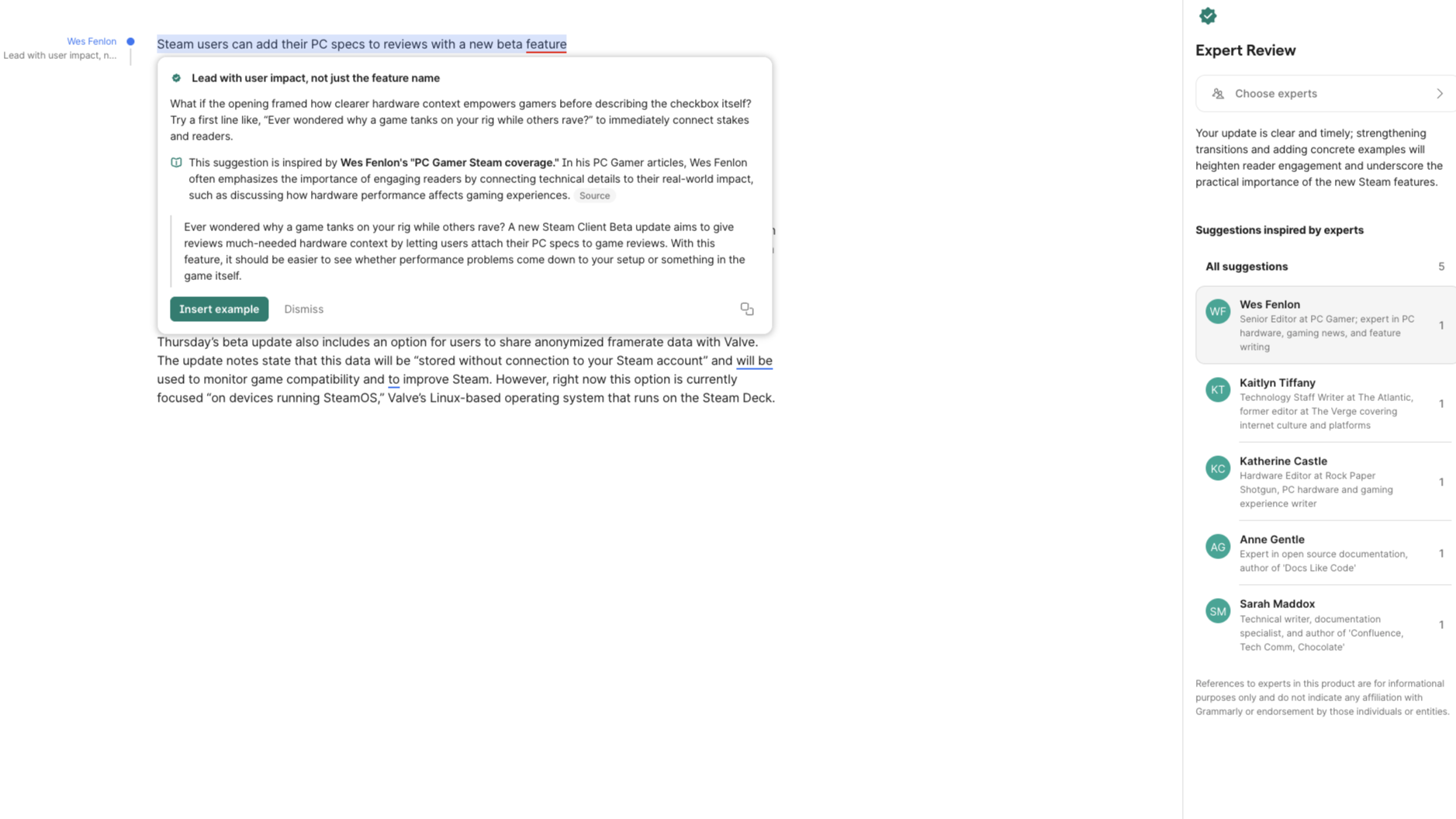

Grammarly, a proofreading app which last year jumped headfirst into the craze by rebranding itself as an AI company called Superhuman, had apparently rolled out a tool seven months ago that offered to review writing in the voice of "experts" ranging from Stephen King and Neil deGrasse Tyson to, well, me. It's a deeply offensive tool on multiple levels:

- Grammarly didn't bother to tell any of its experts it was cloning them

- It presumably mass scraped and fed the entire bodies of work of everyone it purported to represent into an LLM regurgitation machine

- The editing advice it then puppeteered out of shambling AI homunculi was dogshit

As Wired summarized when it first broke this story on March 4, the software "noted that it was taking 'inspiration' from Elements of Style author William Strunk Jr. and the sociologist Pierre Bourdieu while applying 'ideas' from Gone With the Wind author Margaret Mitchell and using 'concepts' from writer and professor Virginia Tufte." All that brain power amounted to this stunning bit of guidance: "Replace repetition with vivid, varied sentence patterns."

And as Bonifield later pointed out on The Verge, the tool's suggestions clearly have no basis in the actual work that goes into editing journalism, because scraping a bunch of books and articles only gives you access to the finished, published material:

"One suggestion from Grammarly’s AI 'inspired by' Verge senior editor Sean Hollister was about adding a parenthetical with context that was already included elsewhere. The only problem is that I’ve actually been edited by the real Sean Hollister, who prefers avoiding repetitive or unnecessary explanations while using straightforward wording and organization."

Sitting in that coffee shop, everyone around me suddenly felt like the enemy. What were the odds one of them worked for Superhuman, which had an office just a 20-minute walk away? Should I interrupt their conversations to ask them, "Hey, did you do this? Did you just steal my identity? What the hell, man?"

It was like an out-of-body experience, except I couldn't stop thinking about another me out there giving people bad advice and making a company a bunch of money. (Superhuman raised $1 billion in funding last year to invest in AI and earns more than $700 million per year, according to Reuters.)

Grammarly's initial response was to set up an email address that people could write to in order to "opt-out" of being AI cloned, but without actually reaching out to tell people it was giving bad advice in their names. Not great, Bob! I wasn't the only one who was mad, which is why there's now a class action lawsuit against the company.

This whole shitshow seemed to me like a clear warning sign to AI companies that journalists and academics do not take kindly to their work being copied, and that we will view any tool that claims to be able to instantly replicate what we do with our human brains every day as an existential threat. That they should slow down and rethink the very concept of replacing real human craft with infinite machine-generated mediocrity. But the thing about the AI industry in the year 2026 is that there is so much fucking money to be made every second that warning signs do not exist.

Grammarly getting sued and yanking its AI advice off the market was an opportunity for someone else to swoop in, dollar signs in their eyes. "I saw your name listed as one of Grammarly’s 'expert reviewers,' and given the recent coverage, wanted to reach out directly," someone from the company GPTZero emailed me on March 18. Unlike an email I got the same day from a reporter at a Danish newspaper, though, this one wasn't asking me for comment on the fiasco. It was asking me if I wanted to hand over my identity in the same way, but for money this time.

By "defining a few core principles [I] use when editing" and then "reviewing sample outputs to make sure it sounds like [me]," I could become an AI expert in the tool GPTZero is adding to its suite alongside an AI Detector which promises to "detect AI content from ChatGPT, GPT-5, Gemini, and checks writing quality to make every word worth reading."

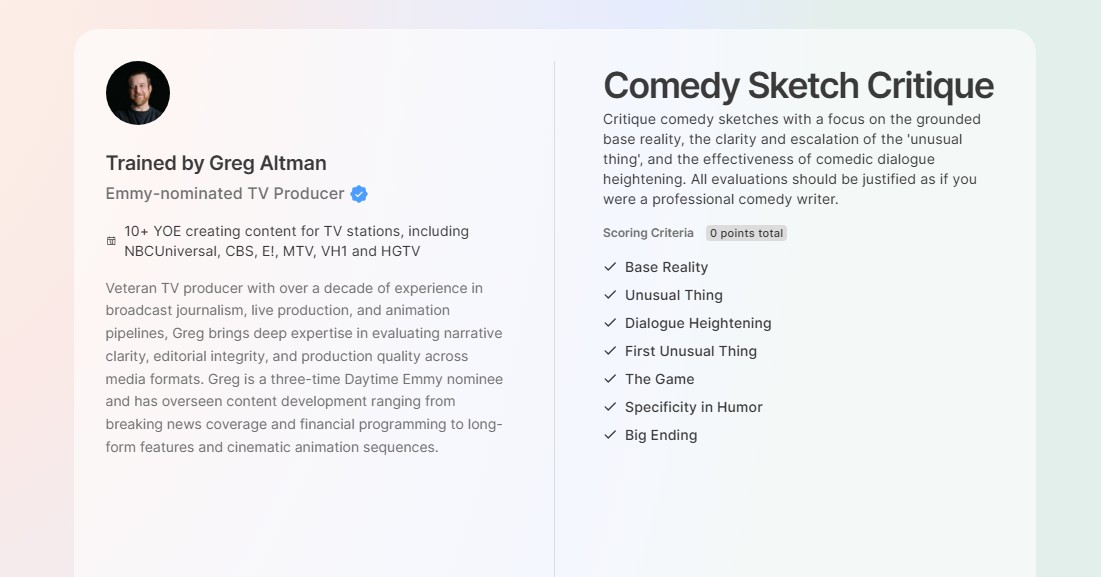

I'll give GPTZero this: at least its tool will be based on the process of editing, rather than inventing completely imaginary advice based on ingesting a body of writing. But the product itself remains offensive: the company offered me a one-time fee of $2,000 to help craft a template of a game reviewer. I wonder if Emmy-nominated TV producer Greg Altman, who agreed to train an AI model that will "critique comedy sketches with a focus on the grounded base reality, the clarity and escalation of the 'unusual thing', and the effectiveness of comedic dialogue heightening," negotiated for more? He was one of three examples GPTZero sent me of experts who have already signed on.

GPTZero explained that each "template" is built on top of an underlying AI model (GPT-4.1), which means that however I tried to distill my editing process, it would ultimately be massaging outputs that come from a vast corpus of stolen material. The New York Times, authors including George R.R. Martin, Encyclopedia Britannica, even Merriam freakin' Webster are suing OpenAI, Anthropic, Meta, and other AI companies over using their work as training data.

While at least one court case so far has found this process to legally be fair use (minus the bit where the AI companies sometimes pirated the books they fed into their models), I think there is overwhelming evidence that this technology is ultimately plagiarism at a trillion-dollar scale. AI is an existential threat to anyone trying to scrape out a living as a writer or artist. How do we convince the average person that what we create is worth paying for if the richest people on earth think they can take it for free to make even more money?

These companies show what they do and don't value when they argue they must be allowed to slurp up any and every bit of human writing they can find, but throw a hissy when another AI company gets anywhere near their data.

Feels a little violating, doesn't it? So does the suggestion that my career as a journalist could be boiled down to a few editing suggestions and $2,000. Surely signing away my soul and helping make my entire profession obsolete is worth more than a month's rent.

Wes has been covering games and hardware for more than 10 years, first at tech sites like The Wirecutter and Tested before joining the PC Gamer team in 2014. Wes plays a little bit of everything, but he'll always jump at the chance to cover emulation and Japanese games.

When he's not obsessively optimizing and re-optimizing a tangle of conveyor belts in Satisfactory (it's really becoming a problem), he's probably playing a 20-year-old Final Fantasy or some opaque ASCII roguelike. With a focus on writing and editing features, he seeks out personal stories and in-depth histories from the corners of PC gaming and its niche communities. 50% pizza by volume (deep dish, to be specific).
