We've run the numbers and Nvidia's RTX 4080 cards don't add up

$900 for a 192-bit graphics card? Seriously?


Update: The following opinion piece is based on the specifications Nvidia has released for its RTX 40-series GPUs and not on our own testing of those cards.

And so it begins. The next-gen graphics fest we've all been waiting for is here. And yet I'm already massively disappointed. Nvidia has pulled the wraps off its new RTX 40-series graphics, otherwise known as Ada Lovelace, and the numbers don't add up.

The top-end RTX 4090 board and the AD102 GPU it contains look great from a technical perspective. But what Nvidia is doing with the two RTX 4080 boards is deeply, deeply disappointing, perhaps even cynical. Let's demonstrate that with numbers. Lots of numbers.

When Nvidia launched the existing Ampere generation and the RTX 30-series roughly two years ago, the RTX 3080 was a slightly cut-down version of the RTX 3090, using the same GA102 chip with around 80% of the functional units of its bigger sibling. In turn, the RTX 3070 used the next-tier GA104 GPU and delivered in the region of 55% of the hardware of the RTX 3090.

Now compare that with the new Ada Lovelace series. The RTX 4080 12GB uses the AD104 chip and offers just 45% of the functional units of the RTX 4090. To give one obvious example, it packs well under half the shaders of the RTX 4090: 7,680 compared with 16,384. For the RTX 3080 versus the RTX 3090, it was 8,704 shaders compared with 10,496, or roughly 83% of the shader count.

In terms of its relationship with the RTX 4090, the new RTX 4080 12GB is more akin to the RTX 3060 Ti with its 4,864 shaders. Except the RTX 3060 Ti at least had a 256-bit memory bus. The RTX 4080 12GB only has a 192-bit bus. Oh, and the RTX 4080 12GB is $900.

Seriously? A $900 card with a 192-bit bus? The RTX 4080 16GB is admittedly a bit better, with its 256-bit bus and its AD103 chip rather than AD104. But it's still miles off what the RTX 3080 was to the RTX 3090.
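As a quick sanity check on those ratios, here's a minimal Python sketch that expresses each card's shader count as a share of its generation's flagship, using only the CUDA core counts quoted in this article. Shader count is just one proxy for "functional units": it ignores clocks, ROPs, texture units and architectural differences.

```python
# CUDA core (shader) counts quoted in this article.
SHADERS = {
    "RTX 4090": 16_384,
    "RTX 4080 16GB": 9_728,
    "RTX 4080 12GB": 7_680,
    "RTX 3090": 10_496,
    "RTX 3080": 8_704,
    "RTX 3060 Ti": 4_864,
}


def share_of_flagship(card: str, flagship: str) -> float:
    """Return the card's shader count as a percentage of the flagship's."""
    return 100 * SHADERS[card] / SHADERS[flagship]


# Each lower-tier card paired with the flagship of its own generation.
PAIRS = [
    ("RTX 3080", "RTX 3090"),
    ("RTX 3060 Ti", "RTX 3090"),
    ("RTX 4080 16GB", "RTX 4090"),
    ("RTX 4080 12GB", "RTX 4090"),
]

for card, flagship in PAIRS:
    print(f"{card} vs {flagship}: {share_of_flagship(card, flagship):.1f}%")
```

Run as-is, it prints roughly 82.9% for the RTX 3080, 46.3% for the RTX 3060 Ti, 59.4% for the RTX 4080 16GB and 46.9% for the RTX 4080 12GB.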


| Header Cell - Column 0 | RTX 4080 (12GB) | RTX 4080 (16GB) | RTX 4090 |

|---|---|---|---|

| GPU | AD104-400 | AD103-300 | AD102-300 |

| CUDA Cores | 7,680 | 9,728 | 16,385 |

| Base Clock | 2,310MHz | 2,210MHz | 2,230MHz |

| Boost Clock | 2,610MHz | 2,510MHz | 2,520MHz |

| Memory Bus | 192-bit | 256-bit | 384-bit |

| Memory Type | 12GB GDDR6X | 16GB GDDR6X | 24GB GDDR6X |

| Memory Speed | 21Gbps | 23Gbps | 21Gbps |

| Graphics Card Power (W) | 285W | 320W | 450W |

| Required System power (W) | 700W | 750W | 850W |

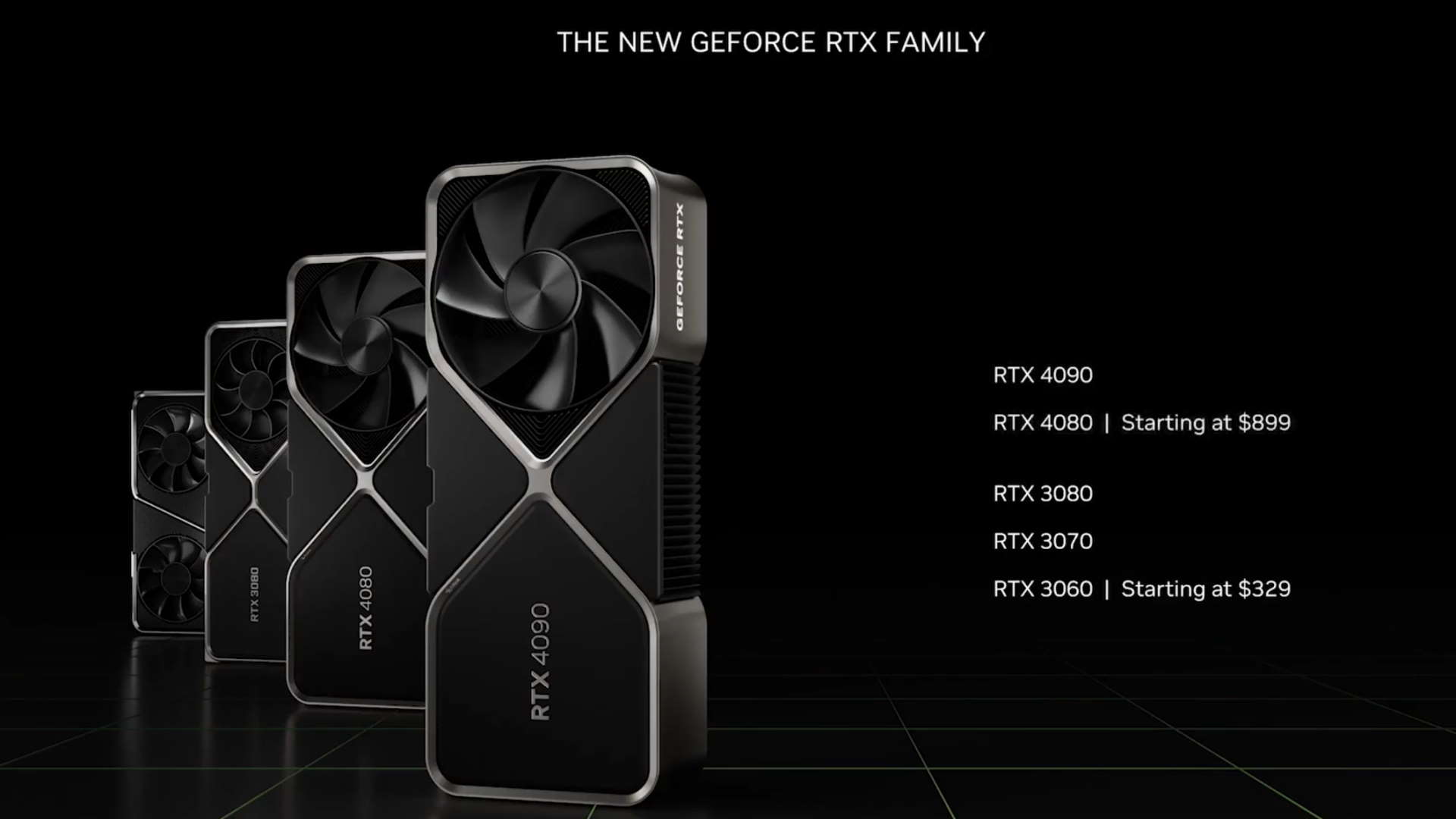

| Launch Price | $899 | $1,199 | $1,599 |
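The bus widths in the table translate into peak memory bandwidth via the standard formula: bus width in bits times the per-pin data rate in Gbps, divided by eight bits per byte. The minimal sketch below applies that to the table's figures. It gives theoretical DRAM bandwidth only and says nothing about how on-die caches affect real-world performance.

```python
def bandwidth_gb_s(bus_width_bits: int, data_rate_gbps: float) -> float:
    """Peak memory bandwidth in GB/s: bus width (bits) x per-pin rate (Gbps) / 8 bits per byte."""
    return bus_width_bits * data_rate_gbps / 8


# (memory bus width in bits, memory speed in Gbps), as listed in the spec table.
CARDS = {
    "RTX 4080 12GB": (192, 21),
    "RTX 4080 16GB": (256, 23),
    "RTX 4090": (384, 21),
}

for name, (bus, speed) in CARDS.items():
    print(f"{name}: {bandwidth_gb_s(bus, speed):.0f} GB/s")
```

That works out to 504GB/s for the RTX 4080 12GB, 736GB/s for the RTX 4080 16GB and 1,008GB/s for the RTX 4090.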


At first glance, it's tricky to go back one more generation to the RTX 20-series and Turing, given there was no RTX 2090. But that's really just branding: the RTX 2080 Ti was the equivalent of the later xx90 series boards. Treat it that way, and the gaps between the Turing tiers were bigger than those of Ampere, but still nothing like the massive drop-off with Lovelace. The RTX 2070, for instance, was more than half an RTX 2080 Ti by pretty much every measure.

But perhaps the most damning indictment of what Nvidia is doing comes in the shape of value for money. At $1,600, the new RTX 4090 looks expensive enough to be irrelevant to the vast majority of gamers. But the fact that it looks like good value compared to the RTX 4080 12GB is completely crazy.

Put it this way: the cost in dollars per shader, per GB of VRAM, and almost certainly per ROP, per texture unit, and per everything else once the full specs are released, is lower for the RTX 4090 than for either RTX 4080.

Since when did a top-tier GPU beat a lower-tier model for pure bang for buck? Since never.
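The per-dollar arithmetic is easy to reproduce from the spec table. This sketch divides each card's launch price by its CUDA core count and by its VRAM capacity. ROP and texture unit counts aren't in the table, so they're left out.

```python
# (launch price in USD, CUDA cores, VRAM in GB), from the spec table.
CARDS = {
    "RTX 4080 12GB": (899, 7_680, 12),
    "RTX 4080 16GB": (1_199, 9_728, 16),
    "RTX 4090": (1_599, 16_384, 24),
}


def cost_per_unit(price: float, units: float) -> float:
    """Dollars paid per unit of hardware (per shader, per GB and so on)."""
    return price / units


for name, (price, shaders, vram_gb) in CARDS.items():
    # Scale to 1,000 shaders so the numbers are easier to read.
    per_1k_shaders = 1_000 * cost_per_unit(price, shaders)
    per_gb = cost_per_unit(price, vram_gb)
    print(f"{name}: ${per_1k_shaders:.2f} per 1,000 shaders, ${per_gb:.2f} per GB")
```

The RTX 4090 comes out at about $97.60 per 1,000 shaders and under $67 per GB, against about $117 and $75 for the RTX 4080 12GB, and about $123 and $75 for the RTX 4080 16GB.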

Okay, you could argue that one way to fix all this would be to simply tweak the branding. The RTX 4080 12GB is misbranded and should be, at most, an RTX 4070 or even an RTX 4060. Let's be generous and call it an RTX 4060 Ti. But that doesn't solve the value problem. You still have an RTX 4070 or RTX 4060 that's worse value than an RTX 4090, one that gives you less hardware, not just overall but actually per dollar, too.

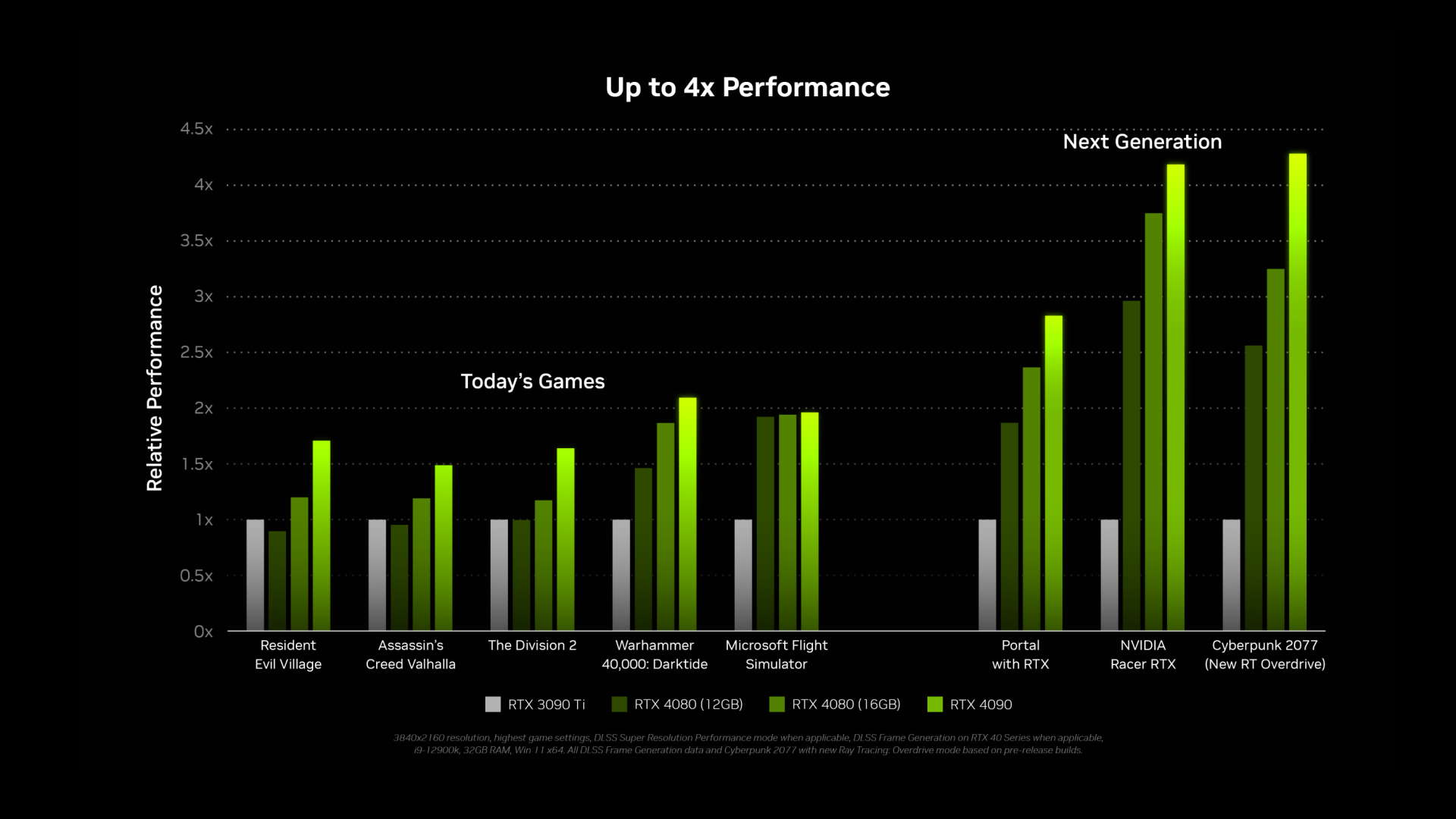

And no, before you suggest it, the higher clocks of Ada Lovelace over Ampere don't make up for this. They would if the RTX 4080 clocked something like 50% higher than the RTX 4090. Instead, we're expecting the gap to be a few percentage points. All of which means the RTX 4080 series will very probably suck in regular rasterized games, which is to say the vast majority of games, as opposed to those that use lots of fancy ray-tracing effects.
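To check whether clock speed rescues the RTX 4080's value, this sketch computes theoretical peak FP32 throughput as CUDA cores × 2 FLOPs (one fused multiply-add) × boost clock, then divides by launch price. It's a paper figure that assumes every core issues one FMA per cycle at boost clock; real games won't behave that neatly.

```python
# (boost clock in MHz, CUDA cores, launch price in USD), from the spec table.
CARDS = {
    "RTX 4080 12GB": (2_610, 7_680, 899),
    "RTX 4080 16GB": (2_510, 9_728, 1_199),
    "RTX 4090": (2_520, 16_384, 1_599),
}


def peak_fp32_tflops(boost_mhz: float, cuda_cores: int) -> float:
    """Theoretical peak FP32 TFLOPS, assuming one FMA (2 FLOPs) per core per clock."""
    # MHz -> Hz is x1e6; FLOPS -> TFLOPS is /1e12.
    return cuda_cores * 2 * boost_mhz * 1e6 / 1e12


for name, (boost, cores, price) in CARDS.items():
    tflops = peak_fp32_tflops(boost, cores)
    gflops_per_dollar = tflops * 1_000 / price
    print(f"{name}: {tflops:.1f} TFLOPS, {gflops_per_dollar:.1f} GFLOPS per dollar")

# How much higher is the RTX 4080 12GB's boost clock than the RTX 4090's?
print(f"Clock advantage: {100 * (2_610 / 2_520 - 1):.1f}%")
```

That gives roughly 40.1, 48.8 and 82.6 TFLOPS for the RTX 4080 12GB, RTX 4080 16GB and RTX 4090, or about 44.6, 40.7 and 51.6 GFLOPS per dollar. The RTX 4080 12GB's boost clock advantage over the RTX 4090 is only about 3.6%.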

Hard to believe, but the RTX 4080 12GB has fewer shaders than the RTX 3080 and a much narrower memory bus. According to the best information we have, it will probably have fewer ROPs and fewer texture units, too. Yes, it has a much higher clock and Ada Lovelace's shader cores aren't directly comparable. But here's the rub. If you think the RTX 4080 12GB is going to be a big leap over the RTX 3080 in most games, just as the RTX 3080 was over the RTX 2080, you're in for a big old letdown.
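Here's the same kind of back-of-the-envelope comparison for the RTX 4080 12GB against the RTX 3080. Note the assumption: apart from its 8,704 shaders, the RTX 3080 figures used here (a 320-bit bus with 19Gbps GDDR6X, as on the original 10GB card) aren't in this article's table. The `Card` class is just an illustrative container, and raw shader counts ignore the architectural differences mentioned above.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Card:
    """Illustrative container for a few raw GPU specs."""
    name: str
    cuda_cores: int
    bus_width_bits: int
    data_rate_gbps: float

    @property
    def bandwidth_gb_s(self) -> float:
        # Peak bandwidth: bus width x per-pin data rate / 8 bits per byte.
        return self.bus_width_bits * self.data_rate_gbps / 8


# RTX 3080 bus width and memory speed are assumed from the 10GB card's specs,
# not taken from this article's table.
rtx_3080 = Card("RTX 3080 10GB", 8_704, 320, 19)
rtx_4080_12gb = Card("RTX 4080 12GB", 7_680, 192, 21)

shader_ratio = rtx_4080_12gb.cuda_cores / rtx_3080.cuda_cores
bandwidth_ratio = rtx_4080_12gb.bandwidth_gb_s / rtx_3080.bandwidth_gb_s
print(f"Shaders: {shader_ratio:.0%} of the RTX 3080")
print(
    f"Bandwidth: {rtx_4080_12gb.bandwidth_gb_s:.0f} vs "
    f"{rtx_3080.bandwidth_gb_s:.0f} GB/s ({bandwidth_ratio:.0%})"
)
```

It reports 88% of the RTX 3080's shaders and 504GB/s of bandwidth against 760GB/s, or about 66%.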


Looking forward, this is all very disappointing and worrying. If this is what the RTX 4080 looks like, what about the RTX 4070? Or the RTX 4060, how pathetic is that going to be? It's also worrying regarding what AMD has coming. At this point, Nvidia will know exactly what AMD's upcoming RDNA 3 graphics chips look like. OK, the SKUs and pricing may not be fully finalised. But Nvidia will know all the specs of the actual GPU dies themselves.

And in that knowledge, Nvidia thinks the RTX 4080 is good enough. Let's be clear, the AD102 GPU in the 4090 is one heck of a chip. If the RTX 4080 was based on that chip, just as the RTX 3080 was based on the big Ampere GPU, then this would all be very different. So, it's not that Nvidia has been caught off guard or failed in technological terms. It had the option of a much faster RTX 4080, but it chose a massively hobbled GPU at megabucks pricing.

Speaking of pricing, how can that be squared with what are frankly awful market conditions? After all, the world is heading into recession, Ethereum mining has imploded and a glut of used GPUs is sitting in the market, all while a mountain of current-gen GPUs is lying unsold.

Expect Nvidia to keep production numbers low for this generation. It knows the whole Ada Lovelace series is onto a loser in terms of market conditions. That can't be helped: it's going to be tough for the next 18 months to two years, at least, whatever Nvidia does. So it will likely keep volumes uncharacteristically low and try to maintain higher margins courtesy of short supply. The idea would be to keep prices very high for the longer term and for future generations, when the market has picked up again, even if sales of Ada Lovelace suffer. That makes sense given Ada Lovelace looks likely to struggle regardless, thanks to all those external factors.

AMD may still come to the rescue with RDNA 3 and the Radeon RX 7000 series, of course. But if this disappointing Ada Lovelace launch is what Nvidia thinks is good enough compared with what AMD is planning, that can't be counted on. We'll know soon enough: RDNA 3 is set to be announced on November 3. But this is a thoroughly inauspicious start to the 2022 graphics party, that's for sure.

Jeremy has been writing about technology and PCs since the 90nm Netburst era (Google it!) and enjoys nothing more than a serious dissertation on the finer points of monitor input lag and overshoot followed by a forensic examination of advanced lithography. Or maybe he just likes machines that go “ping!” He also has a thing for tennis and cars.
