If Nvidia Ampere graphics cards feature a second processor I'll eat my 2080 Ti

And sick it up and eat it again if Nvidia creates standalone ray tracing accelerator cards.

Over the weekend rumours broke suggesting Nvidia was about to dump a second slice of processing silicon onto its upcoming Ampere GeForce graphics cards to aid with ray tracing performance. This, it was suggested, was the reason behind the strange, dual-sided cooler design, and this was how Nvidia was going to change the world of real-time ray tracing in its upcoming GPU generation.

That all seemed, quite honestly, about as likely as the company co-authoring a paper with AMD about "Why you should stop playing videogames and just go play outside". Still, the idea of a traversal co-processor sitting on the same board as the GPU appealed to some as an elegant way of improving ray tracing performance while also making it easy to bin lower-spec chips.

It also seemed there was an appetite for a standalone ray tracing accelerator card, featuring the tree traversal co-processor, that would allow gamers to add another PCIe device into their PC to aid RT performance. 'Cos that worked out so well for PhysX, right?

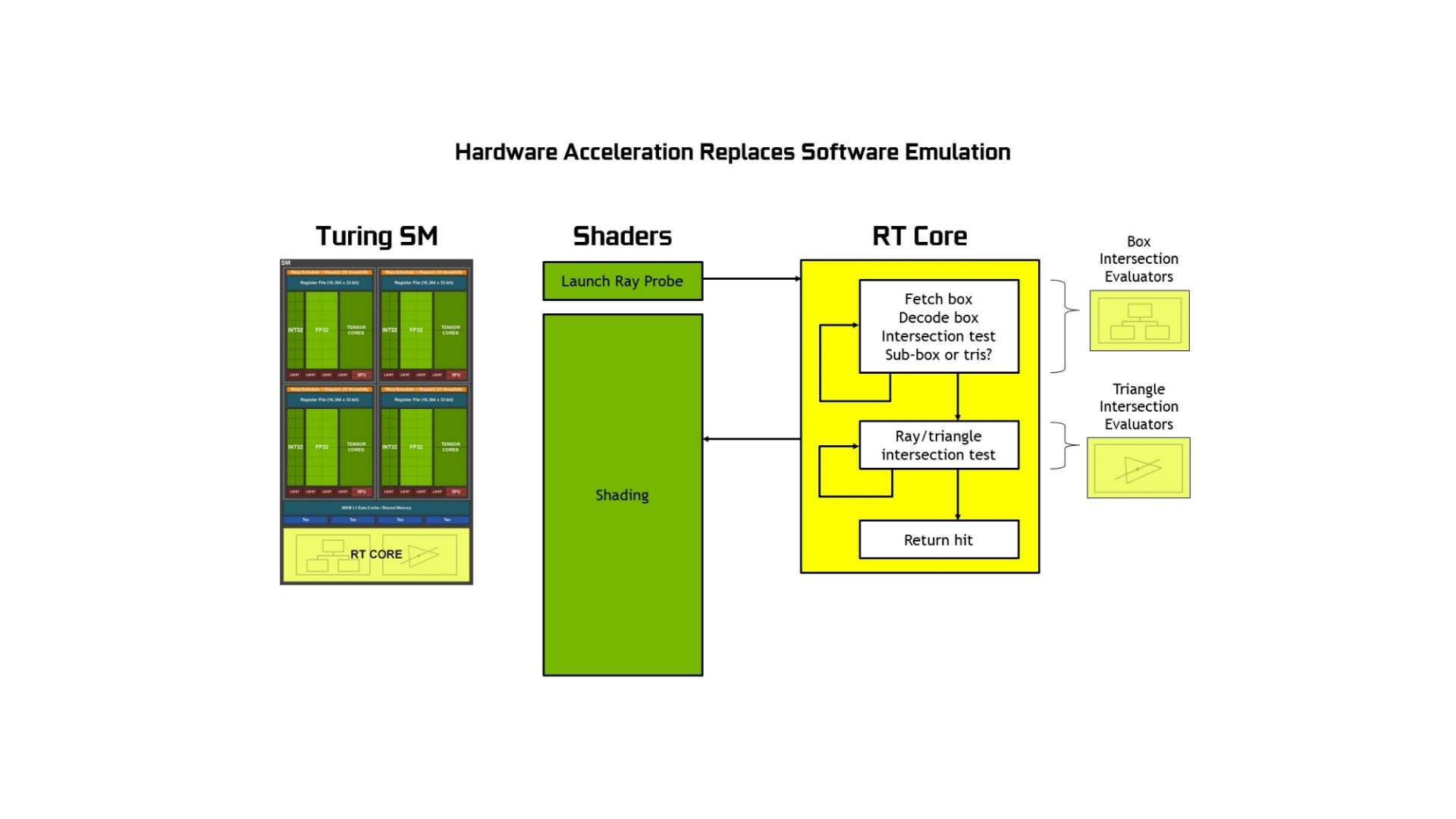

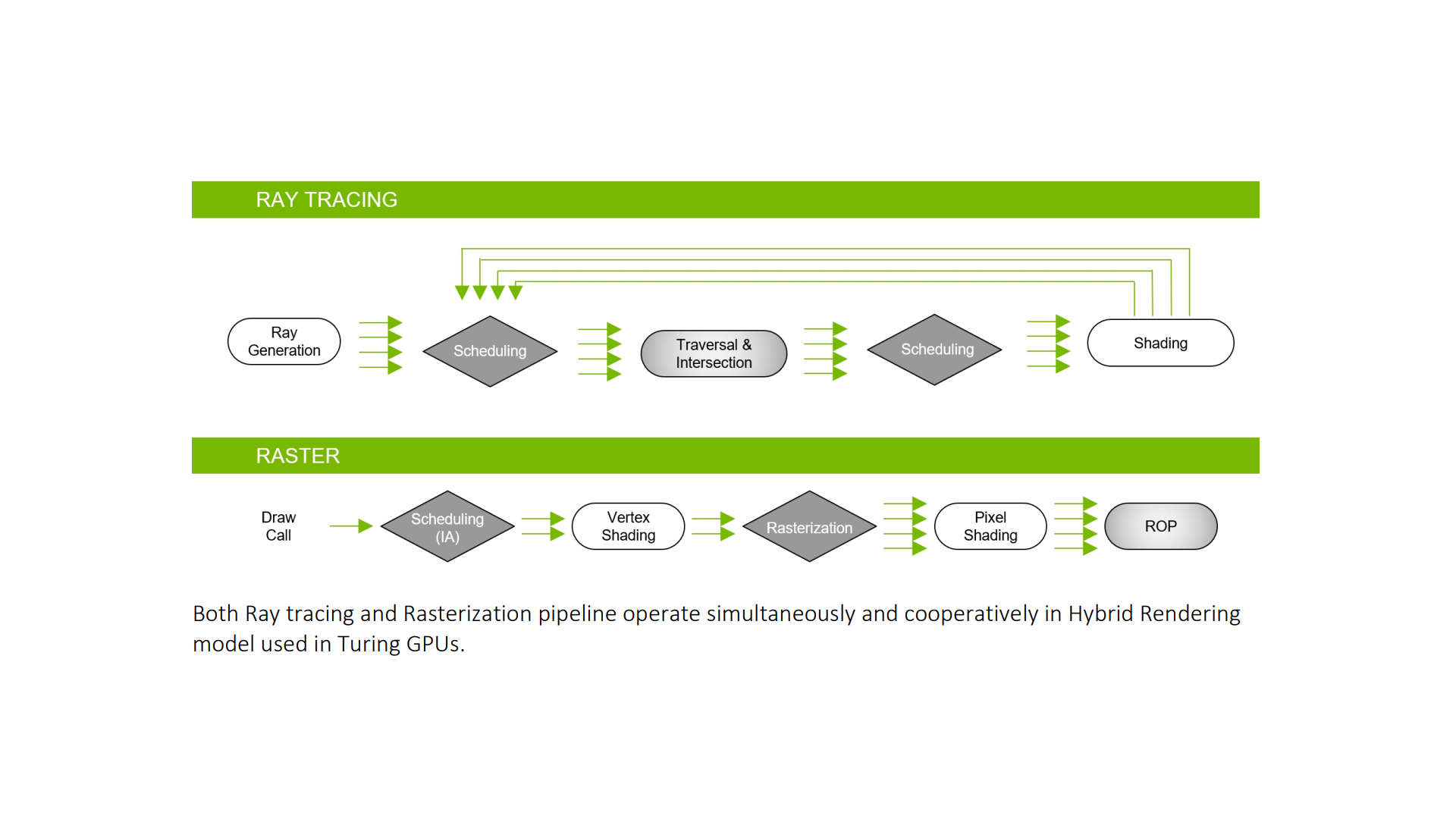

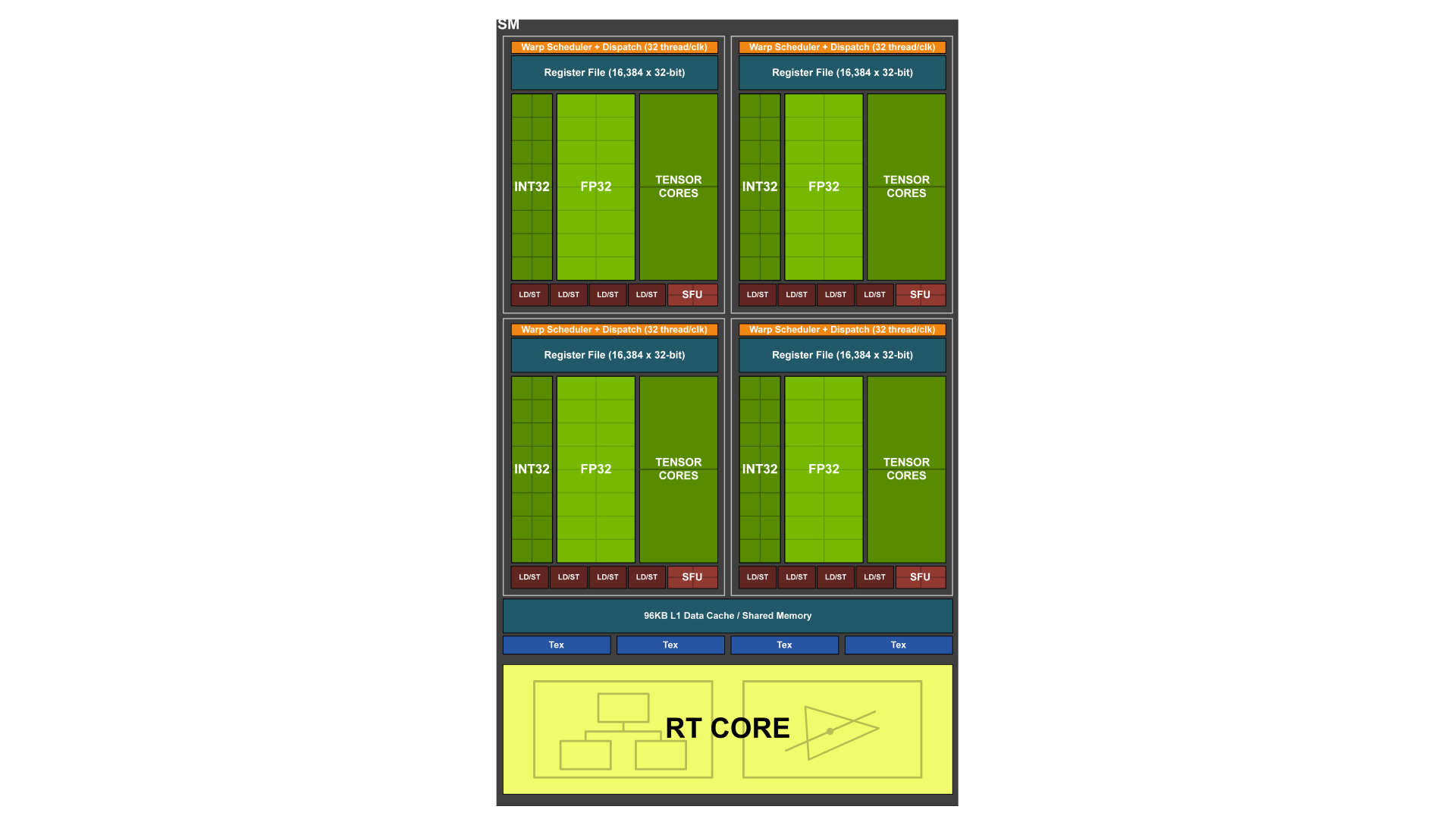

As HardwareTimes says, however, the idea of pulling a vital cog in the game rendering engine out of the GPU sounds doomed to failure. The RT cores introduced with Turing are accelerators designed to speed up traversal of the bounding volume hierarchy (BVH) structures that real-time ray tracing in games, such as Microsoft's DirectX Raytracing (DXR), relies on, along with the ray/triangle intersection tests at the end of that search. A BVH splits a 3D scene into nested bounding boxes, which makes it much quicker to sort through the geometry and rule out the big chunks of it a ray can't possibly hit.
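To give a feel for what "traversing a BVH" actually means, here's a minimal Python sketch. It assumes simple axis-aligned bounding boxes and a tiny hand-built two-level tree, and all the names in it (`AABB`, `Node`, `ray_hits_box`, `traverse`) are hypothetical; it's an illustration of the general technique, not how Nvidia's RT cores or DXR actually implement it. A real renderer would go on to test the ray against the triangles inside each candidate leaf, which is the other job the RT cores handle in hardware.

```python
from dataclasses import dataclass, field
from typing import List, Tuple

Vec3 = Tuple[float, float, float]

@dataclass
class AABB:
    lo: Vec3  # minimum corner of the box
    hi: Vec3  # maximum corner of the box

@dataclass
class Node:
    box: AABB
    children: List["Node"] = field(default_factory=list)
    objects: List[str] = field(default_factory=list)  # leaf payload (hypothetical object names)

def ray_hits_box(origin: Vec3, direction: Vec3, box: AABB) -> bool:
    """Slab test: does the ray origin + t * direction (t >= 0) pass through the box?"""
    t_near, t_far = 0.0, float("inf")
    for o, d, lo, hi in zip(origin, direction, box.lo, box.hi):
        if d == 0.0:
            # Ray runs parallel to this pair of planes: it must already lie between them.
            if o < lo or o > hi:
                return False
            continue
        t1, t2 = (lo - o) / d, (hi - o) / d
        if t1 > t2:
            t1, t2 = t2, t1
        t_near, t_far = max(t_near, t1), min(t_far, t2)
        if t_near > t_far:
            return False
    return True

def traverse(root: Node, origin: Vec3, direction: Vec3) -> List[str]:
    """Walk the tree with an explicit stack, skipping any subtree whose box the ray misses."""
    candidates: List[str] = []
    stack = [root]
    while stack:
        node = stack.pop()
        if not ray_hits_box(origin, direction, node.box):
            continue  # one cheap box test prunes everything underneath it
        if node.children:
            stack.extend(reversed(node.children))  # reversed so the first child is visited first
        else:
            candidates.extend(node.objects)
    return candidates

# A toy scene: two objects, each in its own leaf box, under one root box.
left = Node(AABB((0.0, 0.0, 0.0), (1.0, 1.0, 1.0)), objects=["crate"])
right = Node(AABB((5.0, 0.0, 0.0), (6.0, 1.0, 1.0)), objects=["lamp"])
root = Node(AABB((0.0, 0.0, 0.0), (6.0, 1.0, 1.0)), children=[left, right])

print(traverse(root, (-1.0, 0.5, 0.5), (1.0, 0.0, 0.0)))  # → ['crate', 'lamp']
print(traverse(root, (5.5, 0.5, -1.0), (0.0, 0.0, 1.0)))  # → ['lamp']
```

The second ray never touches the "crate" box, so that whole branch is thrown away after a single test. Scale that up to millions of triangles, run it for millions of rays per frame, and it's easy to see why this search gets dedicated silicon right next to the shaders.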

The Turing RT cores work concurrently with Turing's shader cores, sitting alongside the standard rasterizing pipeline and feeding their results back in to build the complete frame.

Pulling that acceleration out of the GPU would add unwanted latency to the proceedings, as data would have to shuttle back and forth between the co-processor and the GPU's own cache. That sounds like something no graphics engineer or game developer would ever want.

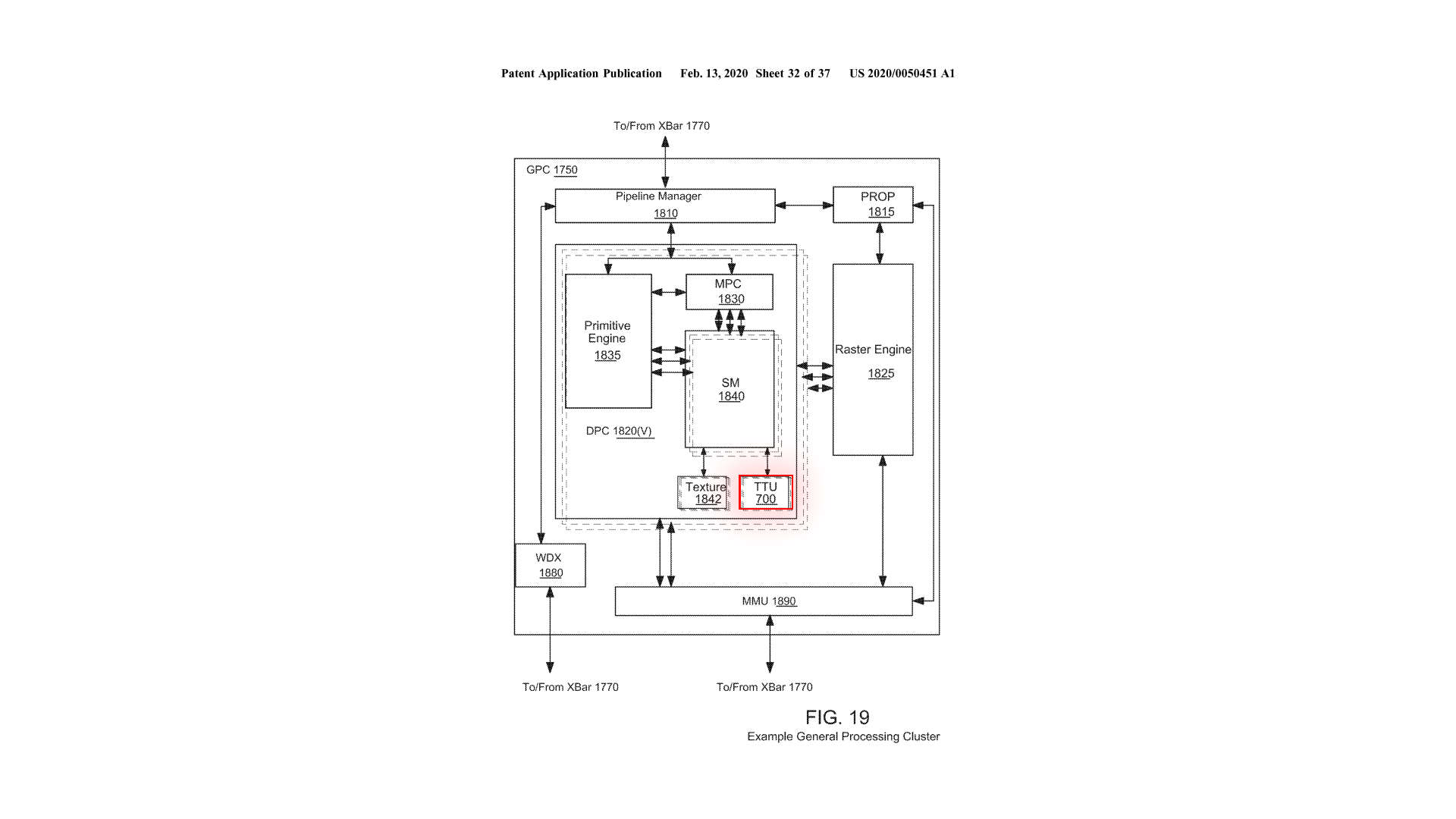

Part of the evidence offered to lend credence to Coreteks' co-processor speculation was a patent filed in 2018 and published earlier this year, which described a tree traversal unit (TTU), a co-processor that would essentially serve to accelerate ray tracing performance.


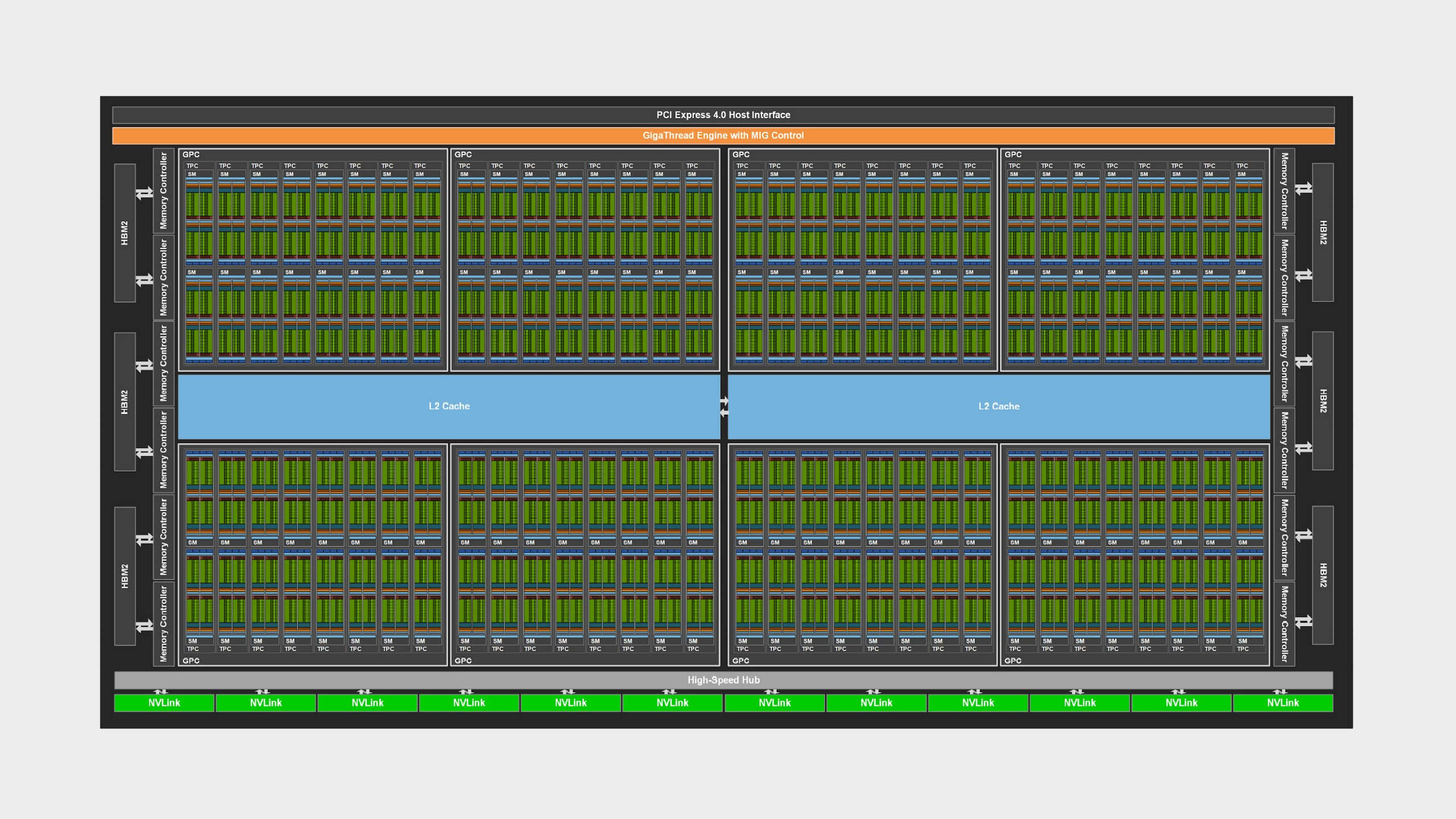

Which is fine, but the images submitted in that patent clearly show the TTU residing within the GPU, inside the general processing cluster (GPC), and alongside the streaming multiprocessors (SMs) in the texture processing cluster (TPC) of a chip.

Kinda like an RT core.

None of this describes a dedicated co-processor sitting outside the main Ampere GPU silicon. It sounds much more like the sort of improved accelerator which might offer four times the ray tracing performance of Turing. Something Nvidia might want to call, I don't know, a second-gen RT core? Though that's probably an oversimplification, because I'm not a GPU engineer.

But it's also true that Nvidia patents dating back to 2015 have spoken about TTUs sitting alongside the SM to accelerate ray tracing, so none of this scans like a whole new way for Nvidia to make with the pseudo photonic pretties and more like an evolution of the architecture it's been working on for at least the last five years.

Still, it's fun to speculate, and hopefully we'll know a hell of a lot more over the next three months as the high-end GPU bunfight between AMD and Nvidia comes to pass.

Dave has been gaming since the days of Zaxxon and Lady Bug on the Colecovision, and code books for the Commodore Vic 20 (Death Race 2000!). He built his first gaming PC at the tender age of 16, and finally finished bug-fixing the Cyrix-based system around a year later. When he dropped it out of the window. He first started writing for Official PlayStation Magazine and Xbox World many decades ago, then moved onto PC Format full-time, then PC Gamer, TechRadar, and T3 among others. Now he's back, writing about the nightmarish graphics card market, CPUs with more cores than sense, gaming laptops hotter than the sun, and SSDs more capacious than a Cybertruck.
