Best graphics cards in 2025: I've tested pretty much every AMD and Nvidia GPU of the past 20 years and these are today's top cards

I've made a career out of prodding graphics cards and this has been the toughest set of recommendations I can remember.

I don't remember a time when picking the best graphics card was such a tough decision. In previous years we've maybe made it easier on ourselves by going on essentially a sliding performance scale, with a nod to price banding, but this year I want to be a bit more definitive about the actual GPUs we recommend rather than just giving you a big ol' list of graphics cards to pick from.

But I've tested and gamed on pretty much every new graphics card that's been released in this generation, from the Intel Battlemage cards that launched late last year, through the initial Nvidia RTX Blackwell launch, and on to AMD's RDNA 4 cards. I believe that makes me well placed to talk you through what the best graphics card is of this generation, and it's the AMD Radeon RX 9070.

For most PC gamers this is the graphics card which offers the most performance for the money, delivering decent 1440p performance and much improved ray tracing over previous AMD generations of GPU. It's also rocking 16 GB of VRAM, enough to deliver on high graphics settings at that resolution now and into the future, too. It was priced appallingly at launch, but its price is now settling down, and it delivers performance close to the RX 9070 XT and therefore the RTX 5070 Ti.

The absolute top, from a pure performance standpoint, however, is the Nvidia RTX 5090. That's the best high-end graphics card with no other contenders getting near its mix of style, features, and raw computational grunt. It's also a card where no-one gets close in terms of price, either, especially with prices the way they are right now.

High. That's what prices are right now, with quoted MSRPs being pure fiction while supply and demand seem so unevenly balanced, and then there's the considerable flux of tariff-related price hikes, too. Essentially it's tough to be after a new graphics card in 2025.

Quick list

The best overall graphics card

The AMD Radeon RX 9070 is our current pick for the best graphics card for most PC gamers. Its price/performance combo, coupled with the improvements that AMD has made to both its ray tracing and upscaling tech, makes it a winner for me.

The best value graphics card

With AMD taking the top two spots in this guide, it's clear just how well its new RDNA 4 cards have done. The AMD Radeon RX 9060 XT shows both the red team's commitment to good value and its willingness to deliver plenty of VRAM throughout the stack.

The best budget graphics card

It was anything but a shoo-in for Intel's cheap Arc B570 to get a pick in our best GPU guide, but it's got a lot of VRAM for the money, and when it's around its MSRP there's nothing that can touch it. Until the RTX 5050 comes back into stock, maybe.

The best mid-range graphics card

It's a bit of a fight between the RTX 5070 Ti and RX 9070 XT for this spot, but the Nvidia card just wins, as globally the price delta between them isn't so great. And with prices being similar, the GeForce card has a better feature set, better overclocking, and higher overall fps.

The best high-end graphics card

While it may only be some 30% faster than the RTX 4090, there is no other consumer GPU on the planet that comes close to its power or potential. And it also has the Multi Frame Gen magic trick at its disposal to deliver ludicrous frame rates in compatible games.

Dave first started as a PC hardware journalist, after years of freelance games journalism, way back in 2005 when he started with PC Format magazine. In that role, and many others since, he's tested pretty much every single graphics card generation and architecture released in the intervening years. He's the person best placed around PC Gamer to tell you which graphics card is the best right now, because he's used them all.

July 5, 2025: I've added in a full rundown of exactly how we test each and every graphics card that crosses our desks. And there's a lot of work that goes into benchmarking and reviewing a new GPU, and we're now presenting all the data we collect here, as well as in all the reviews that we do.

July 4, 2025: Complete rewrite of the best graphics card guide, with an entirely new list and categorisation. I have also included the specs of all the latest generations of AMD and Nvidia graphics cards, as well as a GPU hierarchy listing for the latest graphics cards, too.

January 30, 2025: Added in the new RTX Blackwell cards now that they have been released... and immediately disappeared from stock. Though surely restocks will be coming along soon for the RTX 5090 and RTX 5080 GPUs.

1. Best overall graphics card: AMD Radeon RX 9070


✅ You want a good 1440p GPU, and a great 1080p one: With the ray tracing updates baked into RDNA 4, the RX 9070 is now a fantastic all-round graphics card. It can nail 1440p and 1080p resolutions at top settings, and can call on FSR and AMD's frame generation feature to give you a boost if you need it.

✅ You're happy to undervolt/overclock: With some easy tweaks in the AMD drivers you can boost the performance of the GPU to within a couple of percentage points of the RX 9070 XT and RTX 5070 Ti. And the best part is that it doesn't demand a ton more power or cooling to get there, so it shouldn't stress you or your fancy new card.

❌ You can find an RX 9070 XT for anything close to the same price: While you can overclock the non-XT card to get within 2% of the performance, prices being equal the XT card is a no-brainer.

🪛 The Radeon RX 9070 is an excellent graphics card if you're looking for high 1440p performance without the almost $1,000 price points of the competing Nvidia cards. With the market as it is, the AMD card is the best GPU for most PC gamers.

The RX 9070 is a rather agonising pick as the best graphics card around right now. There are inevitable caveats around my decision, because quite obviously this second-tier RDNA 4 GPU is not the most powerful GPU you can buy. The AMD Radeon RX 9070, however, is the graphics card I think makes the most sense to the most PC gamers.

And, realistically, it's going to be the one that I would suggest to my PC gaming friends if they come looking for a recommendation. Luckily, I don't actually have friends.

The RX 9070 offers close to the performance of AMD's most powerful RDNA 4 GPU, still packs in 16 GB of GDDR6 video memory, can be easily overclocked without demanding much more cooling or power, and handily outperforms Nvidia's similarly priced, lower-spec RTX 5070.

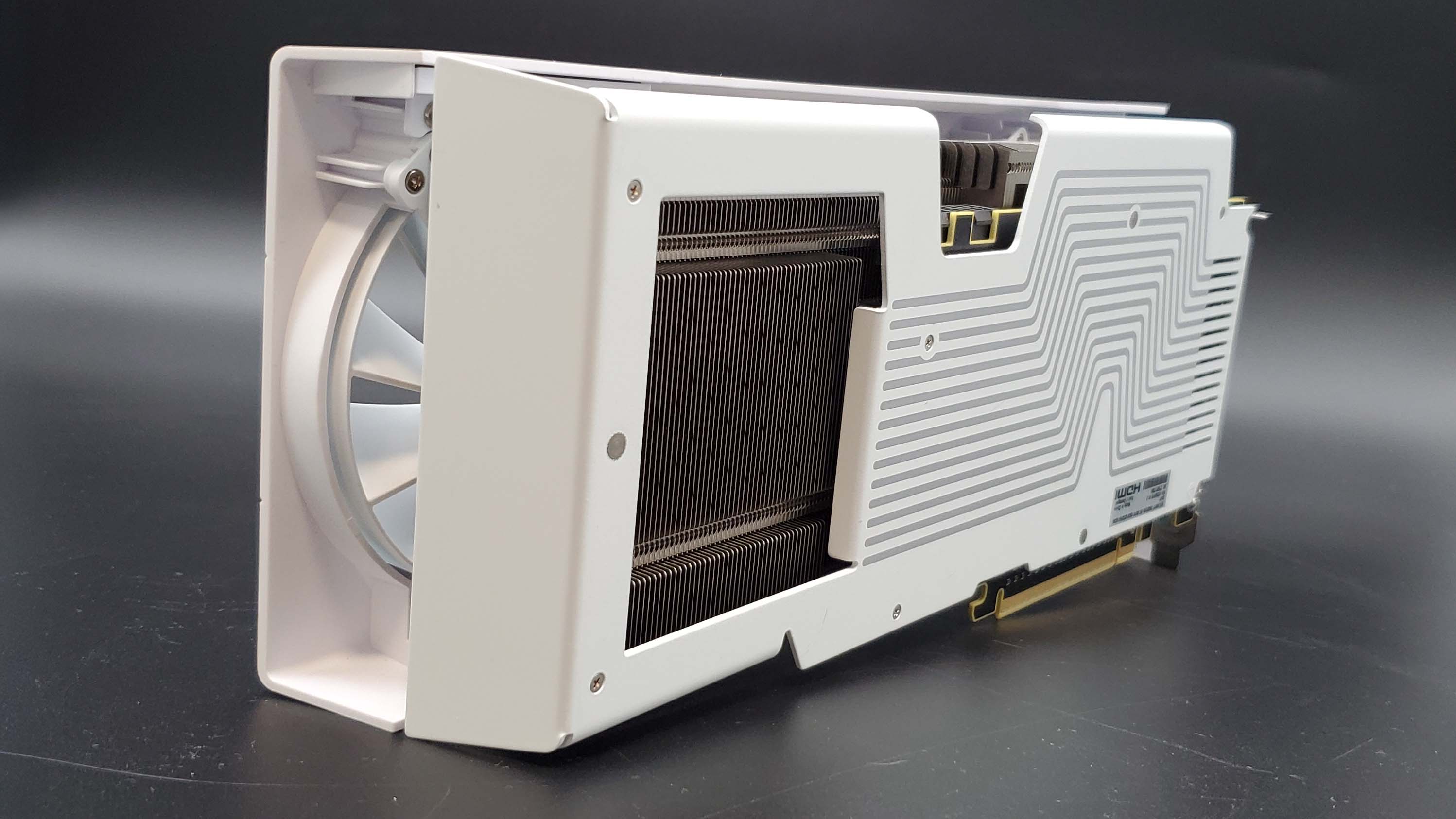

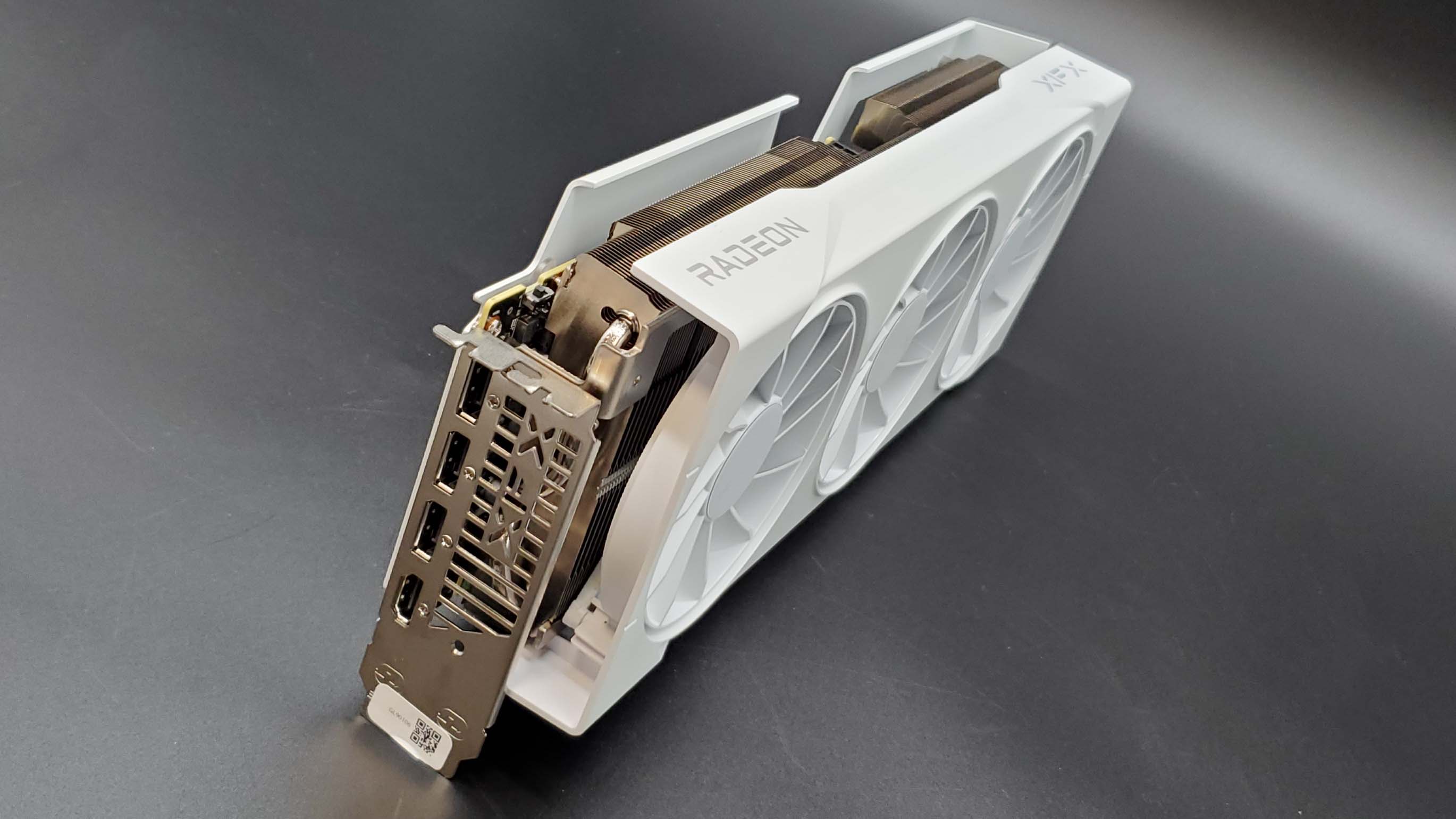

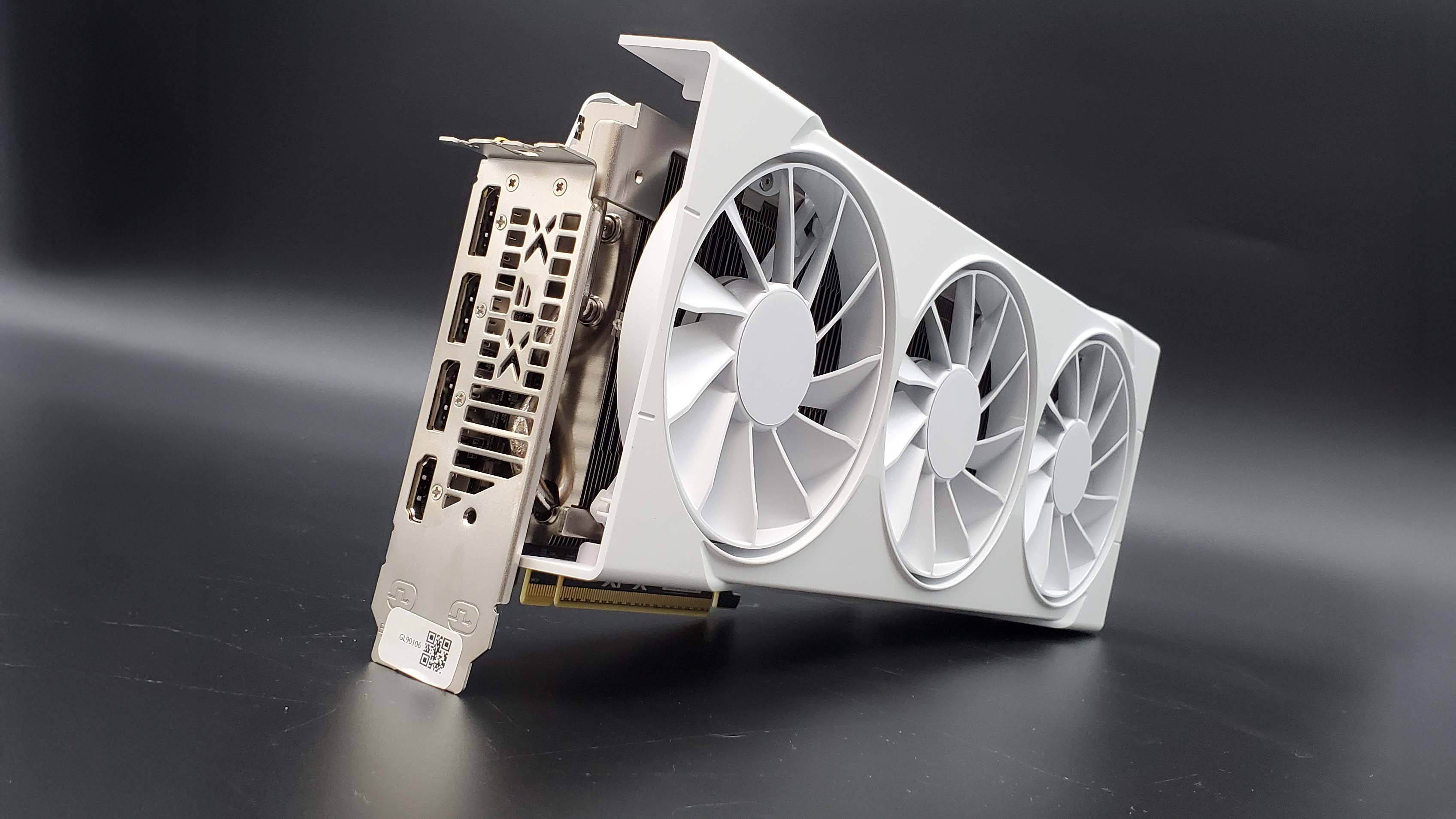

I was relatively cool on the card in my initial review of the GPU, mainly because our first taste of it was an XFX card whose price was hiked right out of the gate, making it more expensive than a reference RX 9070 XT. As things have evened out, there are reliably cheaper RX 9070 cards around, and when you look at the graphics card market as a whole, the Radeon stands out as a great pick.

Yet I still haven't come to that conclusion lightly. My first thought was going to be recommending the $370 RX 9060 XT, because it is great value, performs well, and pretty much offers performance parity with the more expensive Nvidia option. But it doesn't feel like a great recommendation for the best graphics card, given that it is a third-tier Radeon that isn't particularly exciting.

The Radeon RX 9070 XT, though, is a fantastic card, and were it not for the ludicrous over-pricing of AMD's finest it would absolutely be my top pick. With an MSRP of $599 it would be an easy choice for its impressive performance out of the box, stunning under-volting frame rate bumps, and the excellent fillip of the new FSR 4 upscaler. But the cheapest you can get it for these days is still near $750, with the RX 9070 generally around $100 cheaper with very similar gaming performance.

Though the RX 9070 is still $100 more than AMD's MSRP for the GPU, which does absolutely sting. These are the graphics card hell times, however, so that is almost to be expected. In the UK it is more reasonable, with a £540 price tag trending down towards its MSRP, and that is welcome news for PC gamers and hopefully gives a glimpse as to what price normalisation might start to look like for folk in the US down the line.

Though it is absolutely worth noting that Nvidia's competing, and competent, RTX 5070 is generally cheaper and closer to its own MSRP.

That card is generally behind the curve when it comes to gaming performance; we've found it on average around 7% slower in straight raster frame rates. It too is a great overclocking card, but when both the RTX 5070 and RX 9070 are pushed to their limits, the AMD card actually stretches its lead to around 10% faster than the GeForce GPU.
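Percentages like these depend on which card is the baseline: if card A is 7% slower than card B, then card B is slightly more than 7% faster than card A. A minimal Python sketch of that arithmetic, using hypothetical frame rates rather than our benchmark results:

```python
def percent_faster(fps_a: float, fps_b: float) -> float:
    """How much faster card A is than card B, as a percentage of B's frame rate."""
    return (fps_a / fps_b - 1.0) * 100.0


def percent_slower(fps_a: float, fps_b: float) -> float:
    """How much slower card A is than card B, as a percentage of B's frame rate."""
    return (1.0 - fps_a / fps_b) * 100.0


# Hypothetical averages: card A at 93 fps, card B at 100 fps.
print(f"A is {percent_slower(93.0, 100.0):.1f}% slower than B")  # A is 7.0% slower than B
print(f"B is {percent_faster(100.0, 93.0):.1f}% faster than A")  # B is 7.5% faster than A
```

So "7% slower" and "7.5% faster" describe the same gap, just measured from different baselines.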

In pure performance terms, then, it's the AMD RX 9070 all the way. But there is one trick the Radeon cannot match, and that's the RTX 5070's Multi Frame Generation (MFG) feature. Being able to drop in up to three extra AI-generated frames for every actually rendered one can give the Nvidia card far more impressive performance in supported games, though it can also introduce graphical artifacts where the initial gaming performance is too low.

Basically, the lower you go down the GPU stack, the less effective MFG is. Though, I will say that with AMD's new machine learning-based FSR 4 upscaler being in so few of the games that you will be playing, Nvidia's DLSS 4 and Frame Gen feature package remains a very compelling one.
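The reason MFG loses its shine lower down the stack is simple arithmetic: generated frames multiply the displayed frame rate, but only rendered frames respond to your input, so the gap between them stays the same. A rough sketch, assuming perfect pacing and ignoring the performance cost of running frame generation itself:

```python
def mfg_displayed_fps(rendered_fps: float, generated_per_rendered: int) -> float:
    """Displayed frame rate when up to three generated frames follow each rendered one.

    Assumes ideal pacing and ignores the GPU overhead of generating frames.
    """
    if not 0 <= generated_per_rendered <= 3:
        raise ValueError("MFG generates between zero and three extra frames")
    return rendered_fps * (1 + generated_per_rendered)


def rendered_frame_time_ms(rendered_fps: float) -> float:
    """Gap between real, input-driven frames; frame generation does not shrink it."""
    return 1000.0 / rendered_fps


for base_fps in (30, 60):
    print(f"{base_fps} fps rendered -> {mfg_displayed_fps(base_fps, 3):.0f} fps displayed, "
          f"{rendered_frame_time_ms(base_fps):.1f} ms between rendered frames")
# 30 fps rendered -> 120 fps displayed, 33.3 ms between rendered frames
# 60 fps rendered -> 240 fps displayed, 16.7 ms between rendered frames
```

Both cases get the same 4x multiplier, but the 30 fps base still only reacts to input every 33.3 ms, which is why a low starting frame rate makes MFG feel less convincing.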

In the end though, the extra raster performance of the Radeon RX 9070—and how close it can get to the more expensive RTX 5070 Ti and RX 9070 XT GPUs—makes it the best graphics card to buy right now for most PC gamers.

Read our full AMD Radeon RX 9070 review.


2. Best value graphics card: AMD Radeon RX 9060 XT 16 GB


✅ You want a lot of VRAM without spending a ton of cash: The 16 GB version of the RX 9060 XT has an MSRP of $349, and even in these price-inflated times it's regularly available right now for well under $400.

✅ You want an affordable upgrade for modern 1080p or 1440p gaming: Smooth 4K gaming is beyond even the fastest budget cards, but at lower resolutions the RX 9060 XT delivers great performance for the cash, particularly compared to previous generations.

✅ You want bang for your buck: The RX 9060 XT might not be the fastest card on the market, but nothing touches it at this price.

❌ You can really stretch that budget: For under $400 there is nothing that touches the RX 9060 XT, but if you can push on to ~$450 that brings you into RTX 5060 Ti 16 GB territory. That is an overall faster GPU with more overclocking potential.

❌ You want productivity performance: This is a gaming card, through and through, so if you're looking for productivity chops then Nvidia is the way to go.

🪛 The AMD Radeon RX 9060 XT is not a sexy graphics card, it's not the fastest graphics card, but it's an honest GPU that will deliver good gaming performance at the most popular resolutions with top settings. And all for under $400.

To be able to pick up a genuine 16 GB graphics card, with a seriously performant GPU core at its heart, for under $400 is pretty great. It would be even better if the card in question was actually retailing for its original MSRP, but even at the current slightly over MSRP pricing, the Radeon RX 9060 XT is still our pick for the best value graphics card today.

Okay, the latest AMD RDNA 4 GPU is not quite living up to its launch claims of beating the RTX 5060 Ti, certainly not across our benchmarking suite, but at 1080p the Nvidia card is less than a percentage point ahead on average, and only 3% ahead at 1440p. Effectively, I think we can kinda call that parity.

The GeForce card is more consistently ahead at 4K (even though they both carry 16 GB on the top versions of the card), but neither is capable of actually playable frame rates at that resolution. And even the boon of Multi Frame Generation can't help in reality.

But the parity at 1440p and 1080p itself is impressive for the AMD card, especially when you take into account that we're testing games which have pretty hefty ray tracing workloads in them as part of our benchmarking gauntlet. Historically that's where AMD cards have fallen down against Nvidia, but the new RDNA 4 architecture has changed how the Radeon cards deal with ray tracing, giving them more dedicated silicon to do the work, and that has made all the difference.

Another area where there has been historic Nvidia dominance is in the feature set, and honestly, the green team still has the edge on that count. The twin pillars of DLSS 4 upscaling and the Multi Frame Generation (MFG) feature do give the GeForce card some real merit. But the quality experience of MFG is very dependent on there being a relatively high, consistent frame rate before the interpolation of up to three extra frames kicks in. That means its effectiveness does diminish lower down the GPU stack.

AMD has introduced its own new upscaler in this generation, however, with FSR 4. That's a new take on the GPU feature, more closely resembling Nvidia's DLSS as it now uses machine learning to improve the visual fidelity of the upscaled image. It's only in a few of the games we're playing at the moment, but hopefully it will start to become as ubiquitous as standard FSR and DLSS going forward. It's certainly an impressive piece of tech, and when combined with AMD's own frame gen tech it does give the RDNA 4 architecture some effective weapons in its arsenal, closing that gap with Nvidia a whole bunch in this generation.

But don't expect much in the way of overclocking with this card. A surprising feature of all the latest GPUs from Nvidia and AMD has been their proclivity for overclocking or undervolting. Not so here. Our testing has shown that AMD's engineers have obviously pushed the Navi 44 silicon as hard as it can in order for it to get as close to the RTX 5060 Ti as possible. That means there's no real headroom there for the end user to play with.

The RTX 5060 Ti, however, does have a little extra in the tank, so if you are happy to overclock, we've found you can consistently create a performance delta of around 10% between the two cards in the Nvidia GPU's favour.

It's worth noting that it's the 16 GB version of this card we're recommending here (though if you simply cannot go north of $300, the 8 GB version wouldn't be a bad shout). Both AMD and Nvidia have released twin versions of their cards with 16 and 8 GB VRAM allocations and, given the memory-intensive direction detail-heavy modern games are going, that extra memory headroom will be valuable even if you're gaming at 1440p.

So, our recommendation would be to aim for that 16 GB version if you have the patience to save up a little longer, as it will help in the long run. Then you'll be rocking a really solid, pretty low-power GPU that can stand toe-to-toe with the more expensive Nvidia cards.

Read our full Radeon RX 9060 XT review.


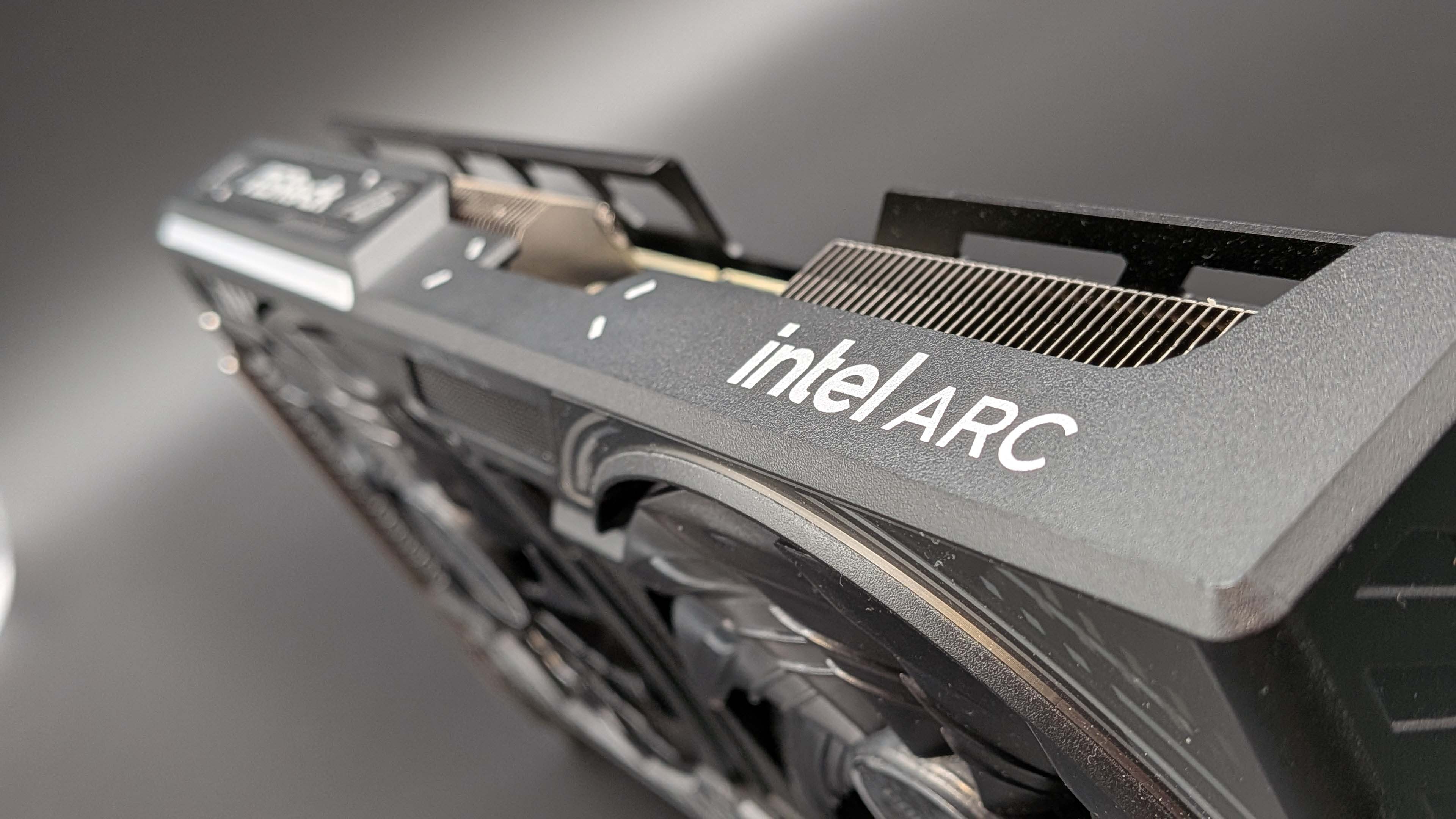

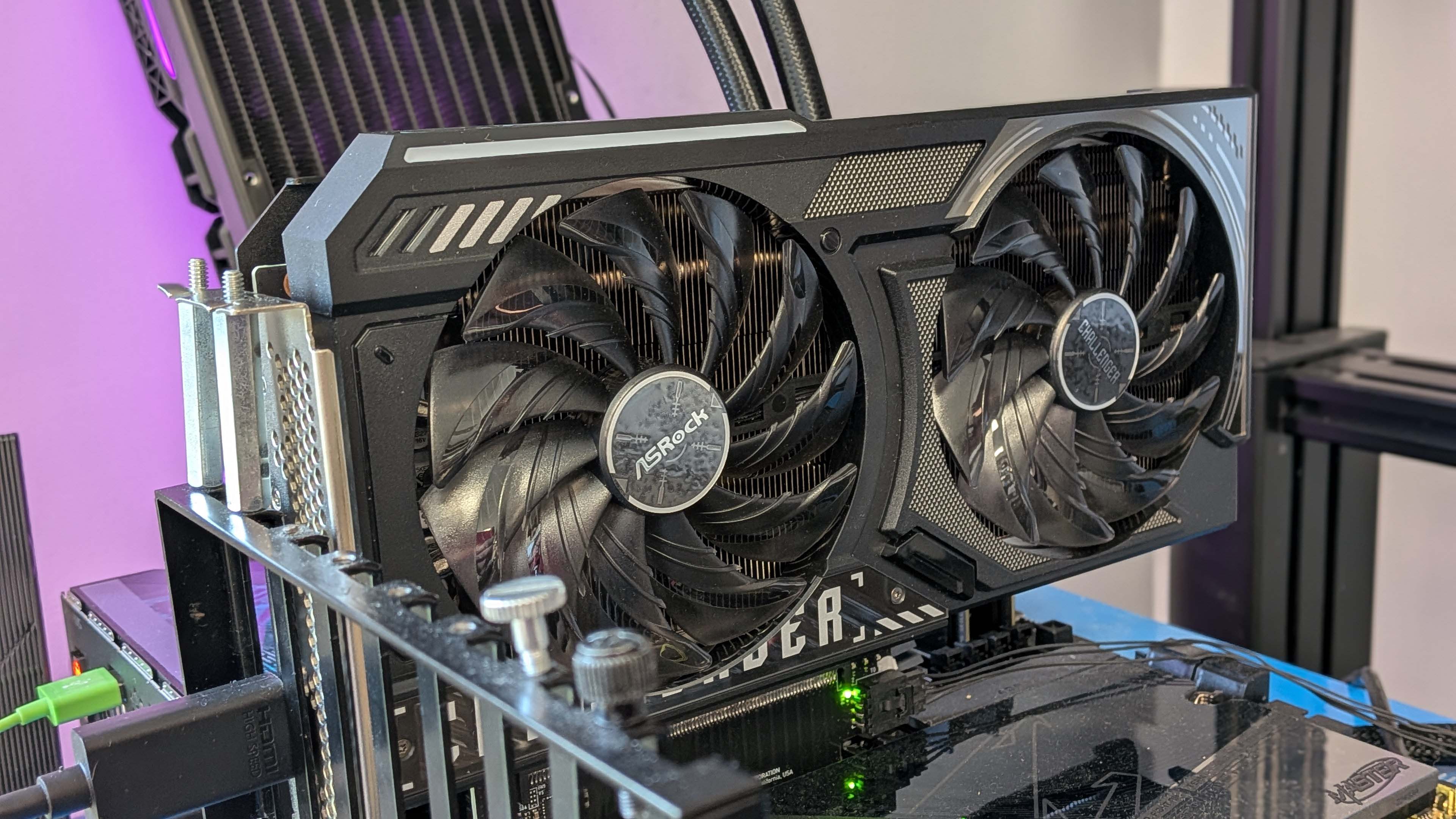

3. Best budget graphics card: Intel Arc B570


✅ You are on a restrictive budget: Around the Arc B570's MSRP there is nothing that can really compete right now. If you can spend a bit more you will get a lot more performance out of a $300 RTX 5060 8 GB, but that's only an option if you have the spare cash.

✅ You're not afraid of finding a fix: Intel is a relatively new player in discrete GPUs, and its drivers will occasionally turn up issues in newly released games, but if you can wait for a driver fix, or are willing to find a workaround, they can be ironed out.

❌ You can find an RTX 5050 at a similar price: The cheapest RTX Blackwell GPU is currently suffering stock issues, but has a launch price that is close to the Arc B570 and should beat it by around 10% on average in games.

❌ You don't ever want to deal with driver issues: You will likely have some issue with the B570 at some point, in some new game, and if that's going to be a deal-breaker for you then maybe steer clear. Though it is worth noting that Nvidia's drivers have shown a proclivity for flakiness in recent times... Intel is not alone in that.

🪛 The Intel Arc B570 is a 'right now' recommendation for the best budget graphics card. If Nvidia's RTX 5050 hits its ~$250 price point then that becomes a far more compelling option than Intel's budget card unless that can drop below the $200 mark. But right now it is the best option for those on a limited budget looking for good 1080p gaming performance and a decent level of VRAM.

Difficult GPU recommendations are kinda the theme for this graphics card tier list, and they don't come much tougher than the budget pick. In a world where GPU pricing has been trending ever higher since the pandemic, and even prior to that with the Ethereum mining booms, it's hard going trying to find a really cheap graphics card that's still worth the money in 2025.

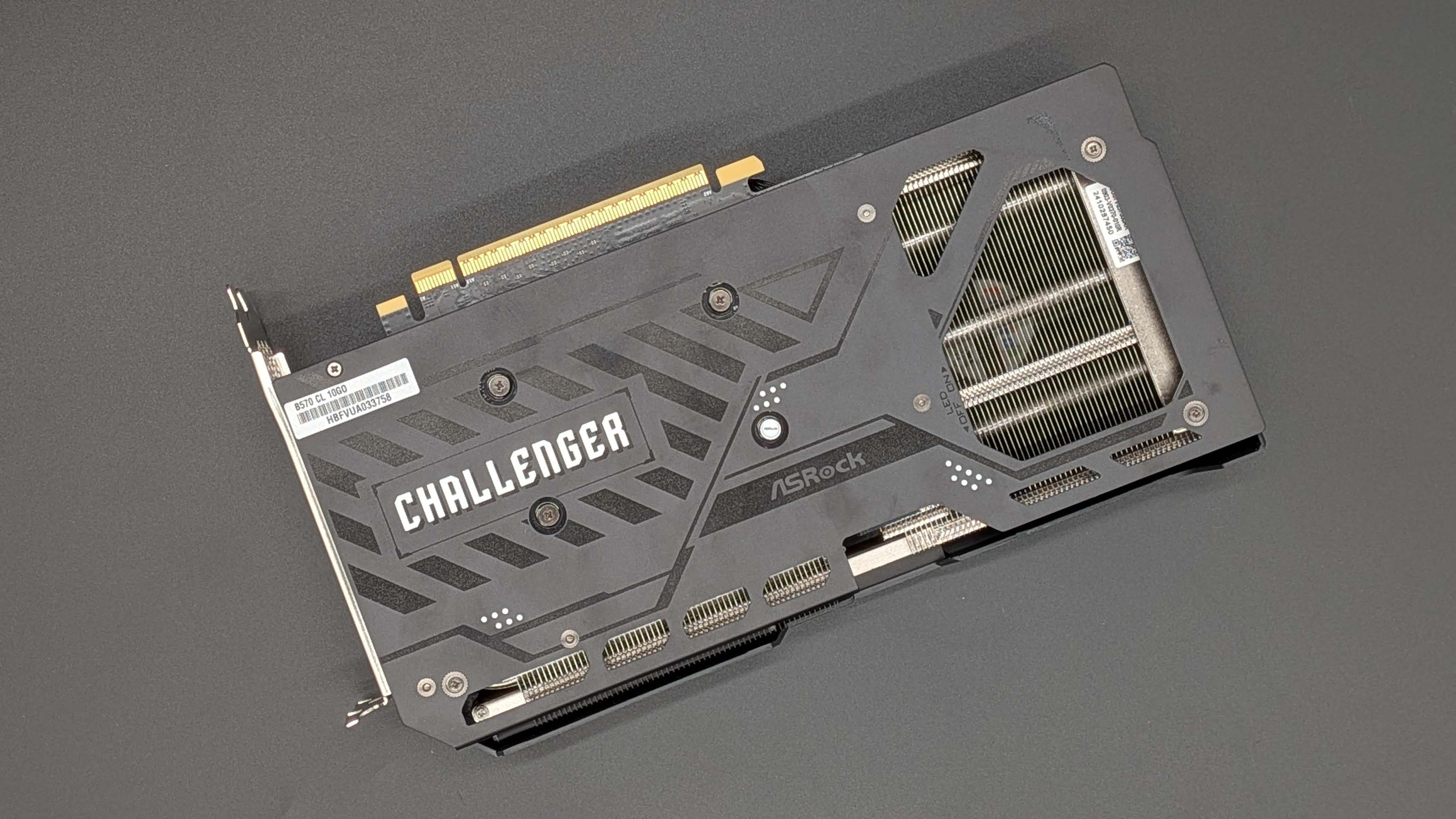

Now, bear with me, because you might be reasonably surprised at my recommendation of the Intel Arc B570 as the best of anything in the GPU market. But Intel's drivers have improved measurably since the first Arc Alchemist cards arrived, and while you will find some new games that don't play nice at launch, and there remain some compatibility issues with AMD's X3D chips, when it works properly it's the best sub-$300 card around.

At least until we're sure how the new RTX 5050 performs, and Nvidia has been holding back reviews of its 8 GB cards in this generation. For reasons. But, according to Nvidia's own numbers, it's essentially an RTX 4060-level card with MFG, and if you can find one at its $250 MSRP that's not a bad shout for best budget GPU, as that would put it around 16% quicker than the Arc card on average; sometimes much quicker. It's only just launched, though, so the nominally $250 card is out of stock everywhere right now anyway.

Which leaves the B570 as the only game in town. In the US it too is a ~$250 card—a bit higher than its $220 MSRP—but in the UK it's available for below £200. That does put it in proper budget GPU territory and if you take inflation into account it's around the same sort of level as the classic GTX 1050 Ti.
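Comparing a modern card's price with one from years ago means scaling the old price by the change in a price index such as the consumer price index (CPI). A minimal sketch; the index values in the example are placeholders for illustration, not official CPI figures:

```python
def adjust_for_inflation(price_then: float, index_then: float, index_now: float) -> float:
    """Express an old price in today's money by scaling it with a price index."""
    if index_then <= 0:
        raise ValueError("price index must be positive")
    return price_then * index_now / index_then


# Placeholder index values (hypothetical): prices rose 25% between the two dates.
print(adjust_for_inflation(100.0, index_then=80.0, index_now=100.0))  # 125.0
```

Plugging in real CPI values for the launch date of an older card and for today gives the like-for-like price comparison.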

For the most part, that's going to get you around 60 fps at the very highest 1080p graphics settings, and that's native performance before you start to bring in either upscaling or frame generation. When you do that then the Arc B570 is actually capable of delivering that 60+ fps performance at 1440p, too, and obviously even higher at 1080p.

Part of that is because this isn't just another 8 GB graphics card: Intel has stuck 10 GB of GDDR6 on this budget card, and given it more memory bandwidth than the RX 9060 XT, too.

If pricing really is an issue, and you want a card that will deliver decent frame rates without breaking the bank, then Intel's Arc B570 is worth a look. The caveat, however, is that while Intel's drivers are better, there are still some kinks to be worked out (my B570 specifically won't work in Cyberpunk 2077 at 1080p with the Ryzen 7 9800X3D, but is fine at other resolutions and with other games), and you have to be willing to accept that.

If you want a budget card that will just work, then the RTX 5050 might well be a better card for you. Though, I will say that Nvidia's own drivers, which have previously been rock-solid, have been frustratingly unreliable with the RTX Blackwell generation of GPUs. When it comes to budget graphics cards, there is always going to be some compromise, I'm afraid.


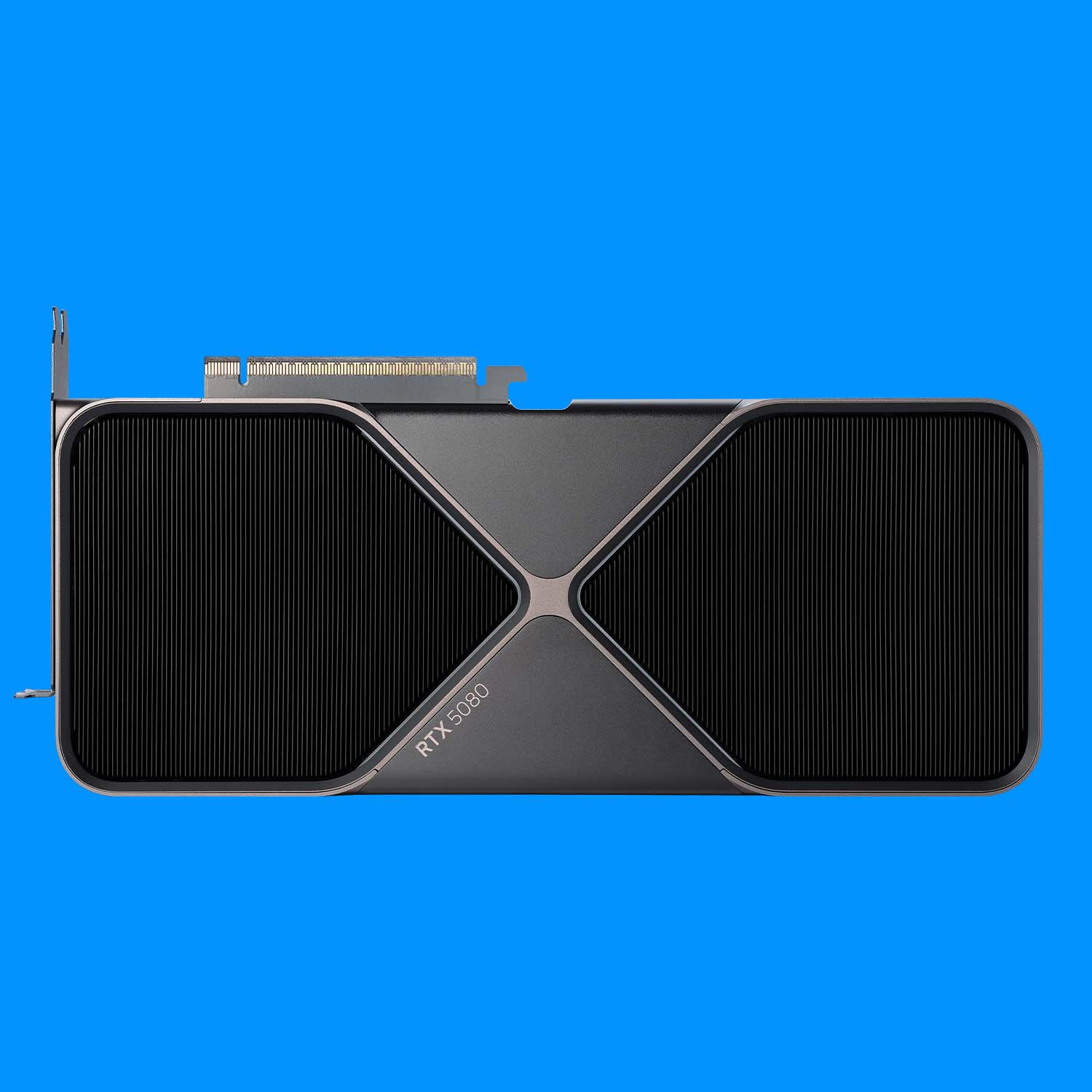

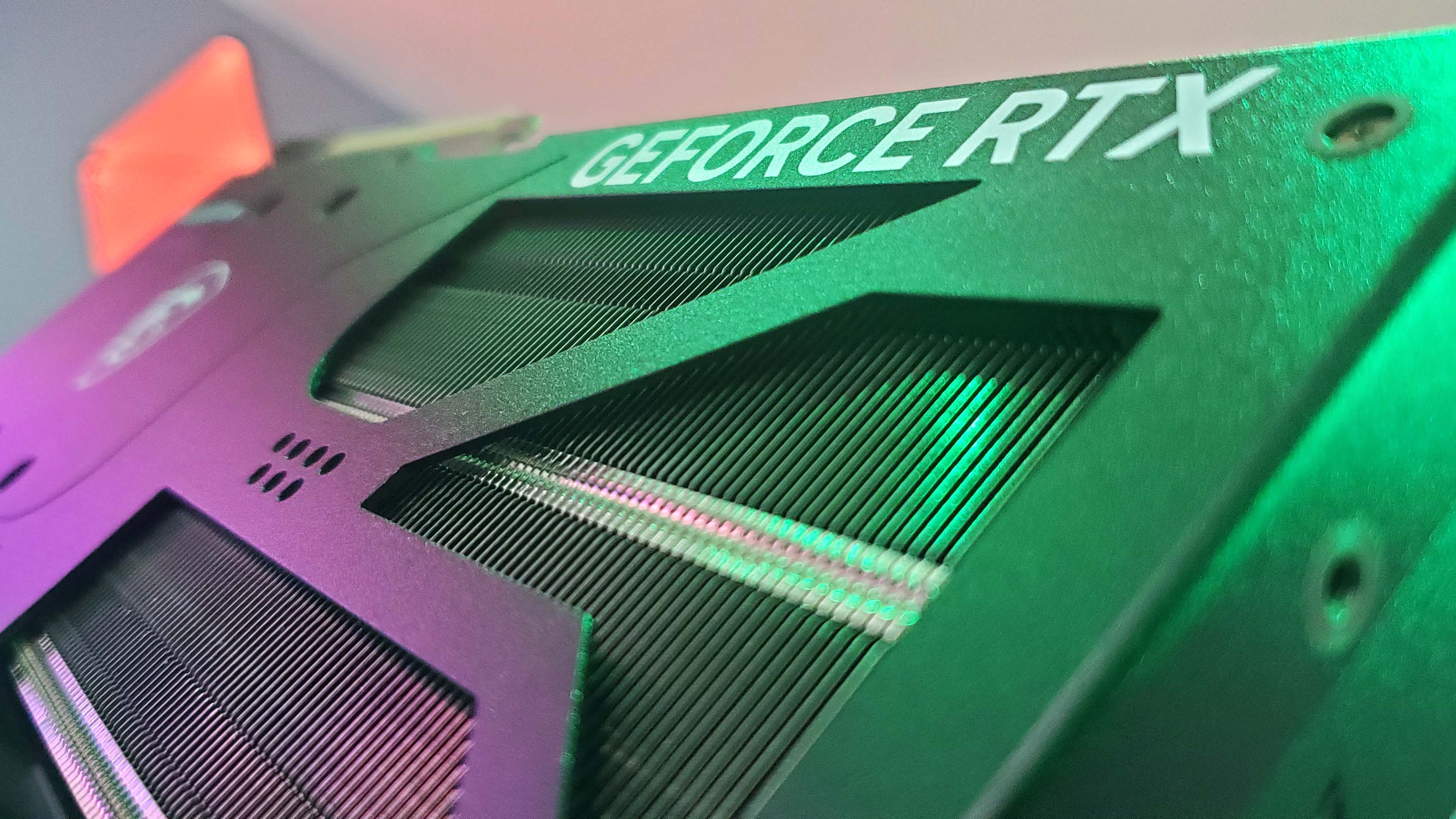

4. Best mid-range graphics card: Nvidia RTX 5070 Ti


✅ You can find it for close to MSRP: I get it, prices have been buck-wild recently, but they're coming down across most of the globe, and at its more reasonable price the RTX 5070 Ti is a fantastic graphics card.

✅ You are happy to overclock: Such is the level of GPU headroom in the GB203 that overclocking the RTX 5070 Ti is really a no-brainer. Even more so because it doesn't unduly stress the graphics silicon, is easy to do, and can genuinely get you a bunch more frames per second for little effort.

✅ You're not ideologically opposed to Multi Frame Generation: The RTX 5070 Ti's native performance comes in at a level where MFG really comes into its own, delivering super high, super smooth frame rates where it's supported. Which is a lot of games, right now. But if you're against it on principle, no matter how good it looks, then the RX 9070 XT is probably the GPU for you.

❌ The price delta between it and the RX 9070 XT is over $100: The RTX 5070 Ti and RX 9070 XT cards go head-to-head in the performance stakes, and Nvidia's card just about has the edge when it comes to feature set and overclocking, but that's only if prices are relatively close. When there's a big gap between them then the cheaper AMD card makes for the best mid-range graphics card.

🪛 The Nvidia RTX 5070 Ti is the best mid-range graphics card you can buy if you can buy it for a reasonable price. It's an outstanding 1440p card, and with all the gaming assists on, such as upscaling and MFG, it's an impressive 4K GPU, too. But prices have been far too close to $900 in recent times, and that makes it a harder recommendation. Closer to its $750 MSRP, however, and it's a great graphics card.

If you were wondering where all the Nvidia cards were in this list you're about to hit the motherlode, because at the higher-end of the market the GeForce GPUs are the ones you really ought to covet. The RTX Blackwell architecture may not be a huge silicon improvement over its forebear, but there is enough in the mix of DLSS 4, Multi Frame Generation (MFG), and the slight GPU redesign to give you a serious boost in performance.

MFG does its best work when there is a decently high frame rate going in, which means you can smooth out performance, delivering very high frame rates without massively spiking the PC latency. That's the best case scenario for MFG, and with the RTX 5070 Ti as the best mid-range graphics card you're getting the Nvidia feature set working to its fullest potential.

There is still a case to be made for the cheaper AMD Radeon RX 9070 XT, because with subsequent driver updates its performance has improved to the point where at native resolutions it will sometimes outpace the Nvidia competition. Now prices are starting to settle down on the AMD side it's getting far more affordable, while in the US especially, the RTX 5070 Ti still commands a high price tag.

Things are more even in the UK and the rest of the world, which does make it harder to pick the AMD over the Nvidia GPU in terms of performance and gaming experience. Where prices are closer together I'm going to side with the GeForce card thanks to its more effective feature set, the fact that MFG does genuinely work at this level, and that it is a very capable overclocker.
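The rule of thumb for choosing between these two cards boils down to a simple price check. A minimal sketch; the $100 threshold comes from this guide, while the prices in the example are hypothetical:

```python
def pick_midrange_card(rtx_5070_ti_price: float, rx_9070_xt_price: float,
                       max_premium: float = 100.0) -> str:
    """Recommend the RTX 5070 Ti unless it costs more than max_premium over the RX 9070 XT."""
    premium = rtx_5070_ti_price - rx_9070_xt_price
    return "RX 9070 XT" if premium > max_premium else "RTX 5070 Ti"


print(pick_midrange_card(840.0, 700.0))  # RX 9070 XT (a $140 gap)
print(pick_midrange_card(780.0, 720.0))  # RTX 5070 Ti (a $60 gap)
```

If the AMD card is ever the pricier of the two, the premium goes negative and the sketch sides with the GeForce card, in line with the reasoning that Nvidia wins when prices are close.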

To be fair, both the AMD and Nvidia cards are capable overclockers at this level, but RTX Blackwell has so much easily accessible GPU headroom that overclocking is not only simple, but bears little to no actual risk in running your card at a higher level permanently. It's not really any hotter, or more demanding of power, so why wouldn't you? I've personally tested four different RTX 5070 Ti cards and every single one of them could be pushed to around the same level of 3+ GHz clock speeds without even touching 70 °C.

With all the assists on, the RTX 5070 Ti will consistently deliver triple-figure frame rates in all the latest games at 1440p, and given its regular 60+ fps natively at that resolution, you'll also find it a more than capable 4K graphics card with upscaling and MFG thrown in for good measure.

And when prices normalise across the board as time marches inexorably along, then we'll get back towards the MSRP of the RTX 5070 Ti and that should cement its place as the best mid-range graphics card in every territory.

Read our full Nvidia RTX 5070 Ti review.


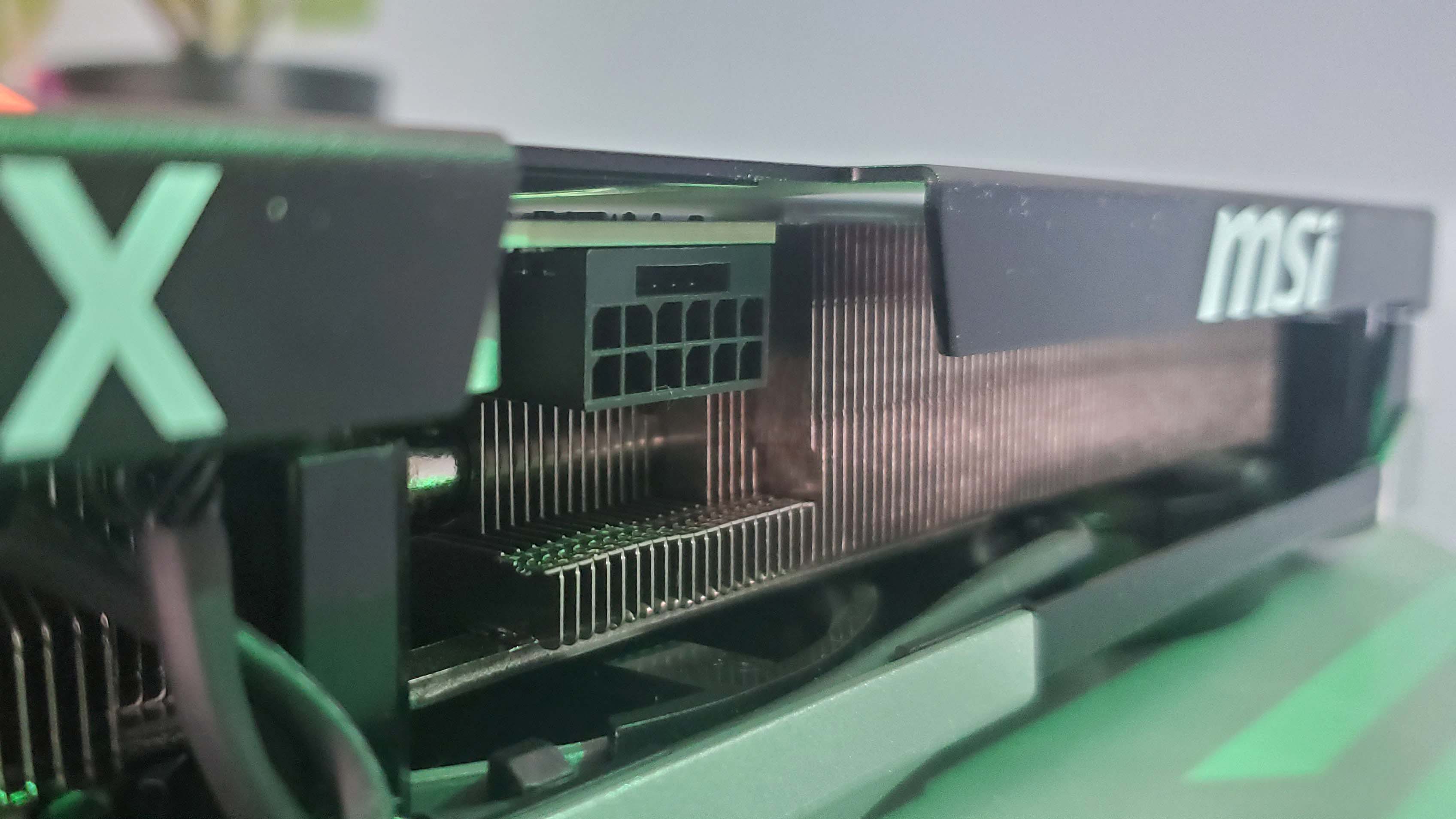

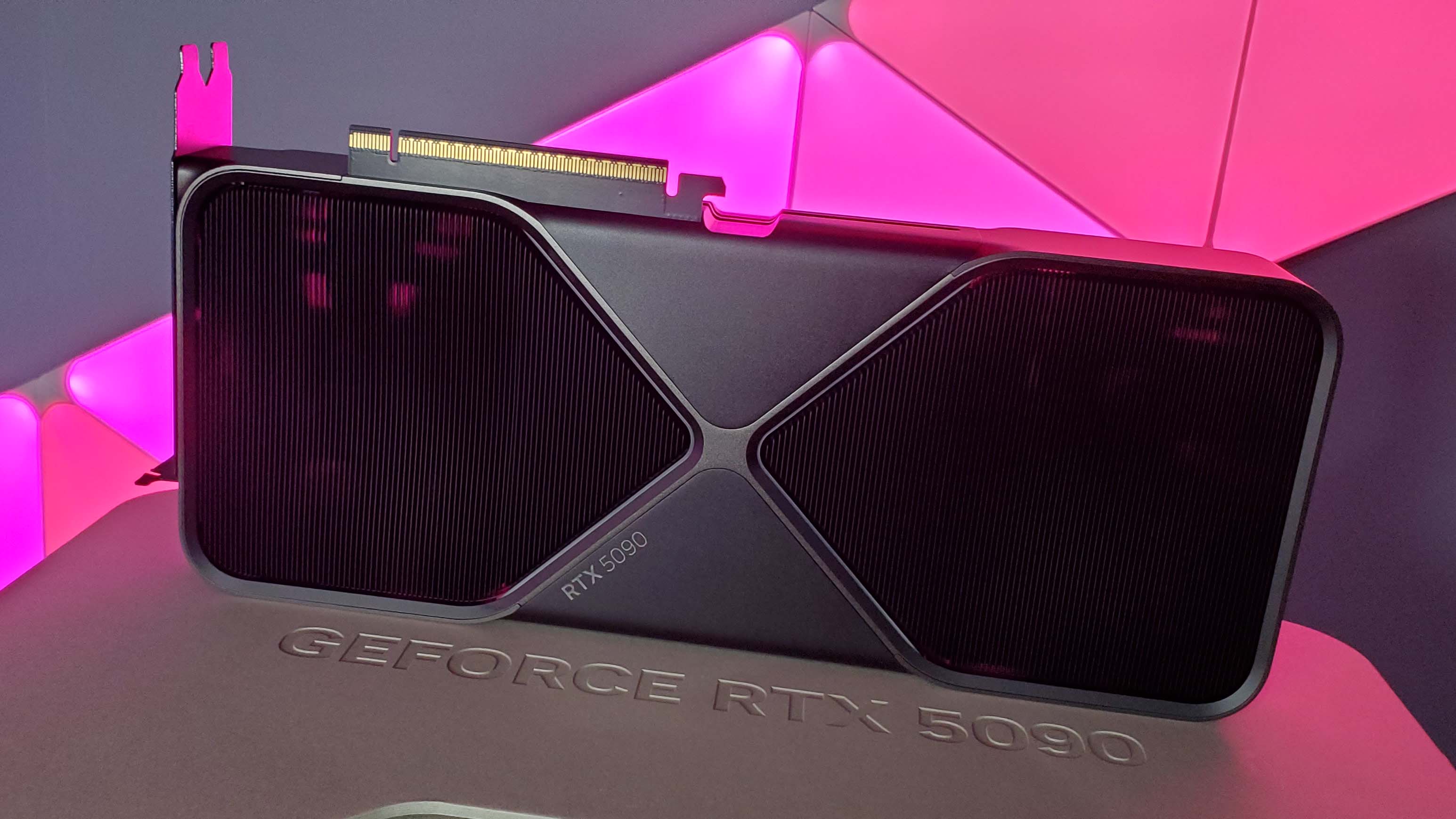

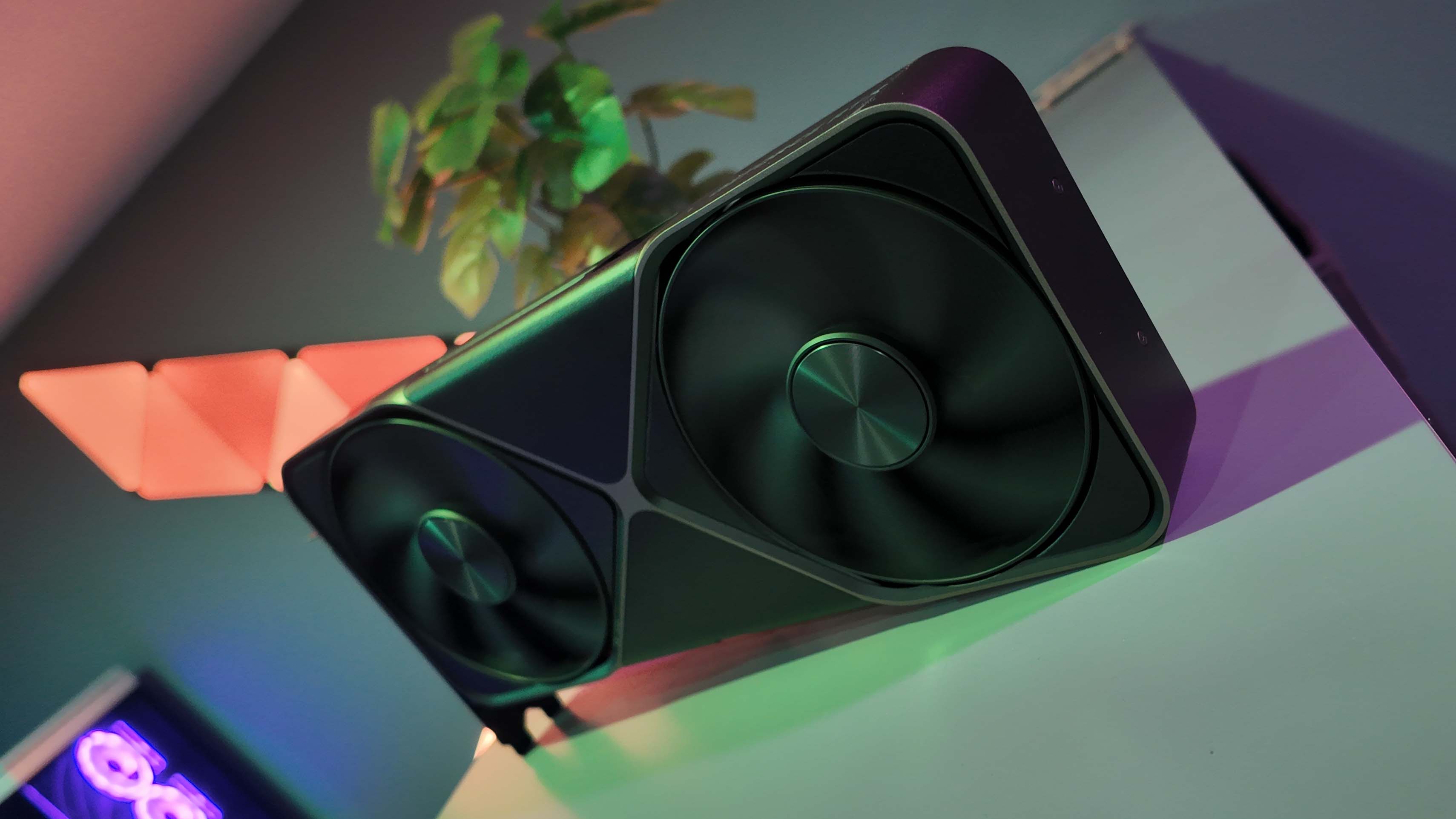

5. Best high-end graphics card: Nvidia GeForce RTX 5090


✅ You want the best: If you want to nail triple figure frame rates in the latest 4K games, then you're going to need the might and magic of Multi Frame Gen, and that's only available with the RTX 50-series cards. And yes, I do like alliteration.

✅ You want to get in on the ground floor of neural rendering: The RTX Blackwell GPUs are the first chips to come with a full set of shaders that will have direct access to the Tensor Cores of the card. That will enable a new world of AI-powered gaming features... when devs get around to using them in released games.

✅ You're after a hyper-powerful SFF rig: The Founders Edition is deliciously slimline, and while it generates a lot of heat it will fit in some of the smallest small form factor PC chassis around.

❌ You need to ask the price: With a $400 price hike over the RTX 4090, the new RTX 5090 is a whole lot of cash at its $1,999 MSRP. The kicker, however, is that you'll be lucky to find one at that price given the third-party cards are looking like $2,500+ right now.

🪛 The RTX 5090 is the most powerful consumer graphics card on the planet right now, and delivers gaming performance far beyond what you could manage on other GPUs, especially if you're playing something which supports Multi Frame Generation.

The RTX Blackwell generation of new GPUs has kicked off with a bang, and means that right now, the best graphics card is undoubtedly the Nvidia RTX 5090. And is likely to remain that way for the next two years at least. Given the fact that AMD isn't going to release a competitor card for the top GeForce GPU in the RDNA 4 generation, you can be confident if you pick one of these up today (or when they come back in stock) you will still be gaming on the best GPU probably until the next Nvidia generation is released.

While that might make for miserable reading for AMD fans, it should be a little more comforting for anyone hellbent on spending $2,000+ on a new graphics card. It will, at least, last the course at the top of the benchmarking tree.

Sure, you are only getting some 30% extra gen-on-gen performance over the RTX 4090 at 4K, and that is the smallest performance bump over a previous generation's top card since Turing came along. But there's a magic trick up the sleeve of the RTX Blackwell cards, and that is Multi Frame Generation.

Right now, it's limited to the RTX 50-series—else it would likely cannibalise sales to a huge extent down the stack if RTX 40-series GPUs were allowed into the MFG party—and it adds in up to three more frames in between each actually rendered pair. Using a feature called Flip Metering, which utilises an enhanced bit of silicon in the RTX 50-series Display Engine, it offloads all the burden of frame pacing from the CPU, puts it all on the GPU, and allows the RTX 5090 to queue up all these extra AI-generated frames perfectly for the display.
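Conceptually, pacing generated frames means spreading them evenly across the gap between two rendered frames, so the display gets a steady cadence rather than bursts. The sketch below illustrates only that idea in Python; it is not how Nvidia's Flip Metering hardware is actually implemented, and the timestamps are hypothetical:

```python
def present_times_ms(rendered_ms: list[float], generated_per_gap: int) -> list[float]:
    """Evenly spaced presentation times with generated frames between rendered ones.

    rendered_ms: timestamps (ms) at which real frames are ready, in ascending order.
    generated_per_gap: generated frames inserted in each gap (1 to 3 for MFG).
    """
    times: list[float] = []
    for start, end in zip(rendered_ms, rendered_ms[1:]):
        step = (end - start) / (generated_per_gap + 1)
        # The rendered frame first, then the generated frames spread evenly after it.
        times.extend(start + i * step for i in range(generated_per_gap + 1))
    if rendered_ms:
        times.append(rendered_ms[-1])  # the final rendered frame closes the sequence
    return times


# Rendered frames every 20 ms (50 fps) with three generated frames per gap.
print(present_times_ms([0.0, 20.0, 40.0], 3))
# [0.0, 5.0, 10.0, 15.0, 20.0, 25.0, 30.0, 35.0, 40.0]
```

Four evenly spaced presentations every 20 ms works out to 200 fps on screen from a 50 fps render rate.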

Along with a new AI-based frame generation model, Multi Frame Generation is able to hugely increase the potential performance of the RTX 5090 in any game which supports it, and the results are frankly incredible. There are some small artifacts—though nothing that would stop me using the feature—but the really impressive thing is that it adds practically no extra latency on top of the standard 2x Frame Generation experience.

And that itself has been lowered thanks to that new FG AI model which makes it 40% quicker and 30% less VRAM hungry at the same time. The good news for RTX 40-series patrons is that model at least is coming to standard Frame Gen in the Ada generation, too.

There are also a ton of games at launch with immediate compatibility with MFG, either with it natively implemented in the game, à la Cyberpunk 2077, or via the new DLSS Override feature in the Nvidia App, as in Dragon Age: The Veilguard.

It's also the most powerful consumer GPU when it comes to creator tasks, too, thanks to its hefty 32 GB of GDDR7 and its massive bandwidth, but also because it's so damned good at a bit of AI noodling if GenAI is your thing.

In short, the RTX 5090 is the best graphics card for anyone who wants the absolute finest silicon and feature set of any consumer GPU going. You're just going to have to pay through the nose for it until stock settles down. If it ever does to an extent that MSRP versions of the card become readily available.

Read our full Nvidia GeForce RTX 5090 review.


How we test graphics cards

I have been benchmarking graphics cards since the 2000s, and have used many different games, applications, and methodologies over the intervening years. And, for the most part, testing GPUs is a largely objective process; you test the silicon in different ways, come out with a set of benchmark figures which you then compare against another set of numbers from a different card, or cards, to be able to objectively tell which is empirically the best.

Our current GPU test suite consists of Black Myth Wukong, Cyberpunk 2077, F1 24, Homeworld 3, Metro Exodus Enhanced Edition, The Talos Principle 2, and Total War: Warhammer III for our gaming tests. These tests are carried out using the same game and system settings across all the graphics cards we test, and are run at 1080p, 1440p, and 4K resolutions.
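Turning a matrix of seven games at three resolutions into a single "X% faster on average" figure needs an averaging method. The method isn't specified here; one common approach, assumed in this sketch, is the geometric mean of per-test frame rate ratios, which stops a single very high-fps game from dominating the result. Test names and frame rates are hypothetical:

```python
import math


def relative_performance(card_fps: dict[tuple[str, str], float],
                         baseline_fps: dict[tuple[str, str], float]) -> float:
    """Geometric mean of card/baseline fps ratios over the (game, resolution) tests both ran."""
    shared = card_fps.keys() & baseline_fps.keys()
    if not shared:
        raise ValueError("the two cards share no test results")
    log_total = sum(math.log(card_fps[test] / baseline_fps[test]) for test in shared)
    return math.exp(log_total / len(shared))


# Hypothetical results: 10% faster in one test, 10% slower in the other.
card = {("Cyberpunk 2077", "1440p"): 110.0, ("F1 24", "1440p"): 90.0}
baseline = {("Cyberpunk 2077", "1440p"): 100.0, ("F1 24", "1440p"): 100.0}
print(f"{relative_performance(card, baseline):.3f}")  # 0.995
```

The result lands just under 1.0 because a 10% gain and a 10% loss multiply to 0.99 rather than cancelling out.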

We measure using both the average frame rate and the 1% Low FPS figure. This gives us a general measure of in-game performance as well as allowing us to see just how consistent that frame rate level is. The 1% Low FPS measure shows the average of the highest 1% of frame times in any given benchmarking run. Translating that into frames per second (1000/x ms) gives us the data to show whether there are regularly large drops in performance or whether it's relatively stable.
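The 1% Low calculation described above can be written out in a few lines. This Python sketch follows that description (average the slowest 1% of frame times, then convert with 1000/x ms); it is not FrameView's own code, and the frame times are made up:

```python
def average_fps(frame_times_ms: list[float]) -> float:
    """Frames rendered divided by total time, converted to frames per second."""
    if not frame_times_ms:
        raise ValueError("need at least one frame time")
    return 1000.0 * len(frame_times_ms) / sum(frame_times_ms)


def one_percent_low_fps(frame_times_ms: list[float]) -> float:
    """Average the highest 1% of frame times (at least one frame), then convert to fps."""
    if not frame_times_ms:
        raise ValueError("need at least one frame time")
    slowest_first = sorted(frame_times_ms, reverse=True)
    count = max(1, len(slowest_first) // 100)
    mean_worst_ms = sum(slowest_first[:count]) / count
    return 1000.0 / mean_worst_ms


# Hypothetical run: 99 frames at 10 ms plus one 50 ms hitch.
run = [10.0] * 99 + [50.0]
print(f"average: {average_fps(run):.1f} fps, 1% low: {one_percent_low_fps(run):.1f} fps")
# average: 96.2 fps, 1% low: 20.0 fps
```

One stutter barely moves the average but drags the 1% Low figure right down, which is exactly the inconsistency the metric is there to expose.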

We capture this data using the Nvidia Frameview app running over the top of whatever game we are benchmarking, whether the game will give its own data output or not.

We also use the UL suite of benchmark software to get some synthetic testing done against high-end rasterisation performance with 3DMark Time Spy Extreme, and ray tracing performance with 3DMark Port Royal.

With the new RTX Blackwell cards, we've also been using a selection of games to help us get a bead on the impact of Multi Frame Generation on both frame rate and PC latency, too. As well as retesting different levels of MFG (at either 1440p for lower-end cards or 4K for high-end GPUs) with Cyberpunk 2077, we also use the ultra-demanding Alan Wake 2, and Dragon Age: The Veilguard for its implementation via the Nvidia App.

Actual gaming performance isn't the whole story with graphics cards, however, as they are put to plenty of uses outside of gaming. While we are PC Gamer, we know that some people want to be able to use their PC for 3D rendering, editing, or generative AI, and so we run the Blender benchmark, PugetBench for DaVinci Resolve, and Procyon's image generation benchmark using Stable Diffusion.

We also capture a ton of system data, too, using the Nvidia PCAT tool (a riser board which sits between the GPU and PCIe slot) to measure actual graphics card power draw. This means we can track both peak and average power use when gaming, and a given GPU's performance per Watt metrics, too.

For this we use three back-to-back runs of the Metro Exodus Enhanced Edition benchmark, at 1080p and 4K, to give us the power numbers, as well as peak and average temperatures and average GPU clock speed, too.
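Summarising the power data from those three back-to-back runs comes down to a peak, a mean, and a ratio. A sketch under the assumption that each run yields a list of board power samples in watts (the format of PCAT's logs isn't described here, so treat the input shape and values as hypothetical):

```python
from statistics import mean


def summarise_power(runs_watts: list[list[float]], average_fps: float) -> dict[str, float]:
    """Peak and mean board power across all runs, plus performance per watt."""
    samples = [watts for run in runs_watts for watts in run]
    if not samples:
        raise ValueError("no power samples recorded")
    mean_watts = mean(samples)
    return {
        "peak_w": max(samples),
        "average_w": mean_watts,
        "fps_per_watt": average_fps / mean_watts,
    }


# Three hypothetical runs of two samples each, with the card averaging 110 fps.
print(summarise_power([[250.0, 300.0], [260.0, 290.0], [270.0, 280.0]], 110.0))
# {'peak_w': 300.0, 'average_w': 275.0, 'fps_per_watt': 0.4}
```

Pooling every sample before taking the mean weights each run by its length; temperatures and clock speeds can be summarised the same way.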

On top of this, we also test the overclocking capabilities of graphics cards, pushing them as far as we can using standard overclocking methods, i.e. the same ones you could easily use at home. No LN2 sniffing going on here.

But there are also subjective measures which come into play when actually picking the best graphics card. And that all comes down to a consistent driver experience when using the GPU, how loud the cooling fans can get, whether there is discomforting coil whine or other electrical noise, and just how much damned money manufacturers are charging for these cards.

In short, there's a lot that goes into our testing.

In the PC Gamer office—and sometimes my own satellite office up the hill if I'm testing into the wee hours of the morning—we have a dedicated test rig that we use for testing graphics cards. This is our AMD Ryzen 7 9800X3D-based system:

CPU: AMD Ryzen 7 9800X3D | Motherboard: Gigabyte X870E Aorus Master | RAM: G.Skill 32 GB DDR5-6000 CAS 30 | Cooler: Corsair H170i Elite Capellix | SSD: 2 TB Crucial T700 | PSU: Seasonic Prime TX 1600W | Case: DimasTech Mini V2

We also have two other systems, kindly provided by MSI and CyberpowerPC, which we use if another member of the team, such as Jacob, Nick, or Andy, needs to benchmark a card. These travel around the UK, and both house the same set of components, so we can maintain multiple testing PCs which deliver data we can use to accurately reference other cards.

Where are the best graphics card deals?

- Amazon - save on current and last-gen Nvidia & AMD graphics cards

- Best Buy - the only place to buy Founders Edition cards in the US

- Walmart - discounts of over $100

- B&H Photo - save up to $50 on select GPUs

- Newegg - discounts and offers on Nvidia and AMD graphics cards

- RTX 5090: $2,800 @ Newegg

- RTX 5080: $1,400 @ Newegg

- RTX 5070 Ti: $840 @ Amazon

- RTX 5070: $600 @ Newegg

- RTX 5060 Ti 16 GB: $430 @ Best Buy

- RTX 5060 Ti 8 GB: $370 @ Best Buy

- RTX 5060: $300 @ Best Buy

- RTX 5050: Coming soon

- RX 9070 XT: $700 @ Newegg

- RX 9070: $600 @ Newegg

- RX 9060 XT 16 GB: $370 @ Newegg

- RX 9060 XT 8 GB: $300 @ Newegg

- Arc B580: $290 @ B&H Photo

- Arc B570: $260 @ Amazon

- RX 7900 XTX: $900 @ Newegg

- RX 7600: $275 @ Amazon

GPU hierarchy

Below we have listed multiple generations of graphics card based on a simple 3DMark Time Spy Extreme GPU index score. This is only a rough approximation of relative gaming performance between the different graphics cards, as real games show more variance than a single synthetic benchmark can capture, but it is still a good snapshot of where the cards stack up against each other.
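One simple way to turn raw Time Spy Extreme GPU scores into a relative index is to express each card as a percentage of a chosen reference card. This sketch is an assumption about how such an index could be built, not a description of the method behind our chart, and the card names and scores in the example are placeholders rather than test results.

```python
def relative_index(scores: dict[str, float], reference: str) -> dict[str, float]:
    """Express each card's GPU score as a percentage of a reference card's
    score, ordered fastest first."""
    base = scores[reference]
    ranked = sorted(scores.items(), key=lambda kv: kv[1], reverse=True)
    return {card: 100.0 * score / base for card, score in ranked}


# Placeholder cards and scores, for illustration only:
print(relative_index({"Card A": 10000.0, "Card B": 15000.0}, "Card A"))
# {'Card B': 150.0, 'Card A': 100.0}
```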

Nvidia GPU specs

Model | MSRP (US$) | Launch | GPU | Lithography | CUDA cores | Memory size (GB) | Die size (mm²) | Transistors (B) | SM count | TMUs | ROPs | Tensor cores | RT cores | L2 cache (MB) | Boost clock (MHz) | Memory type | Memory bus width (bits) | Memory bandwidth (GB/s) | TDP (W) |
|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|
RTX 5050 | $249 | Jul 16, 2025 | GB207-300 | TSMC 4N | 2560 | 8 | 121 | 15.1 | 20 | 80 | 32 | 80 | 20 | 32 | 2572 | GDDR6 | 128 | 320 | 130 |
RTX 5060 | $299 | May 19, 2025 | GB206-250 | TSMC 4N | 3840 | 8 | 181 | 21.9 | 30 | 120 | 48 | 120 | 30 | 32 | 2497 | GDDR7 | 128 | 448 | 145 |
RTX 5060 Ti | $379 / $429 | Apr 16, 2025 | GB206-300 | TSMC 4N | 4608 | 8 / 16 | 181 | 21.9 | 36 | 144 | 48 | 144 | 36 | 32 | 2572 | GDDR7 | 128 | 448 | 180 |
RTX 5070 | $549 | Mar 5, 2025 | GB205-300 | TSMC 4N | 6144 | 12 | 263 | 31.1 | 48 | 192 | 80 | 192 | 48 | 48 | 2512 | GDDR7 | 192 | 672 | 250 |
RTX 5070 Ti | $749 | Feb 20, 2025 | GB203-300 | TSMC 4N | 8960 | 16 | 378 | 45.6 | 70 | 280 | 96 | 280 | 70 | 64 | 2452 | GDDR7 | 256 | 896 | 300 |
RTX 5080 | $999 | Jan 30, 2025 | GB203-400 | TSMC 4N | 10752 | 16 | 378 | 45.6 | 84 | 336 | 112 | 336 | 84 | 64 | 2617 | GDDR7 | 256 | 960 | 360 |
RTX 5090 | $1999 | Jan 30, 2025 | GB202-300 | TSMC 4N | 21760 | 32 | 750 | 92.2 | 170 | 680 | 176 | 680 | 170 | 96 | 2407 | GDDR7 | 512 | 1792 | 575 |

Model | MSRP (US$) | Launch | GPU | Lithography | CUDA cores | Memory size (GB) | Die size (mm²) | Transistors (B) | SM count | TMUs | ROPs | Tensor cores | RT cores | L2 cache (MB) | Boost clock (MHz) | Memory type | Memory bus width (bits) | Memory bandwidth (GB/s) | TDP (W) |
|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|
RTX 4060 | $299 | Jun 29, 2023 | AD107-400 | TSMC 4N | 3072 | 8 | 159 | 18.9 | 24 | 96 | 32 | 96 | 24 | 24 | 2460 | GDDR6 | 128 | 272 | 115 |
RTX 4060 Ti | $399 | May 24, 2023 | AD106-350 | TSMC 4N | 4352 | 8 | 188 | 22.9 | 34 | 136 | 48 | 136 | 34 | 32 | 2535 | GDDR6 | 128 | 288 | 160 |
RTX 4060 Ti 16GB | $499 | Jul 18, 2023 | AD106-351 | TSMC 4N | 4352 | 16 | 188 | 22.9 | 34 | 136 | 48 | 136 | 34 | 32 | 2535 | GDDR6 | 128 | 288 | 165 |
RTX 4070 | $599 | Apr 13, 2023 | AD104-250 | TSMC 4N | 5888 | 12 | 294.5 | 35.8 | 46 | 184 | 64 | 184 | 46 | 36 | 2475 | GDDR6X | 192 | 504 | 200 |
RTX 4070 Super | $599 | Jan 17, 2024 | AD104-350 | TSMC 4N | 7168 | 12 | 294.5 | 35.8 | 56 | 224 | 80 | 224 | 56 | 48 | 2475 | GDDR6X | 192 | 504 | 220 |
RTX 4070 Ti | $799 | Jan 5, 2023 | AD104-400 | TSMC 4N | 7680 | 12 | 294.5 | 35.8 | 60 | 240 | 80 | 240 | 60 | 48 | 2610 | GDDR6X | 192 | 504 | 285 |
RTX 4070 Ti Super | $799 | Jan 24, 2024 | AD103-275 | TSMC 4N | 8448 | 16 | 379 | 45.9 | 66 | 264 | 96 | 264 | 66 | 48 | 2610 | GDDR6X | 256 | 672 | 285 |
RTX 4080 | $1199 | Nov 16, 2022 | AD103-300 | TSMC 4N | 9728 | 16 | 379 | 45.9 | 76 | 304 | 112 | 304 | 76 | 64 | 2505 | GDDR6X | 256 | 716.8 | 320 |
RTX 4080 Super | $999 | Jan 31, 2024 | AD103-400 | TSMC 4N | 10240 | 16 | 379 | 45.9 | 80 | 320 | 112 | 320 | 80 | 64 | 2550 | GDDR6X | 256 | 736 | 320 |
RTX 4090 | $1599 | Oct 12, 2022 | AD102-300 | TSMC 4N | 16384 | 24 | 608.5 | 76.3 | 128 | 512 | 176 | 512 | 128 | 72 | 2520 | GDDR6X | 384 | 1008 | 450 |

Model | MSRP (US$) | Launch | GPU | Lithography | CUDA cores | Memory size (GB) | Die size (mm²) | Transistors (B) | SM count | TMUs | ROPs | Tensor cores | RT cores | L2 cache (MB) | Boost clock (MHz) | Memory type | Memory bus width (bits) | Memory bandwidth (GB/s) | TDP (W) |
|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|
RTX 3050 | $169 / $249 | Jan 27, 2022 | GA106-150 | Samsung 8nm | 2560 | 6 / 8 | 276 | 12 | 20 | 80 | 32 | 80 | 20 | 2 | 1777 | GDDR6 | 128 | 168 / 224 | 130 |
RTX 3060 | $329 | Feb 25, 2021 | GA106-300 | Samsung 8nm | 3584 | 8 / 12 | 276 | 12 | 28 | 112 | 48 | 112 | 28 | 3 | 1777 | GDDR6 | 192 | 240 / 360 | 170 |
RTX 3060 Ti | $399 | Dec 1, 2020 | GA104-200 | Samsung 8nm | 4864 | 8 | 392 | 17.4 | 38 | 152 | 80 | 152 | 38 | 4 | 1665 | GDDR6/X | 256 | 448 / 608 | 200 |
RTX 3070 | $499 | Oct 29, 2020 | GA104-300 | Samsung 8nm | 5888 | 8 | 392 | 17.4 | 46 | 184 | 96 | 184 | 46 | 4 | 1725 | GDDR6 | 256 | 448 | 220 |
RTX 3070 Ti | $599 | Jun 10, 2021 | GA104-400 | Samsung 8nm | 6144 | 8 | 392 | 17.4 | 48 | 192 | 96 | 192 | 48 | 4 | 1770 | GDDR6X | 256 | 608.3 | 290 |
RTX 3080 | $699 | Sep 17, 2020 | GA102-200 | Samsung 8nm | 8704 | 10 | 628 | 28.3 | 68 | 272 | 96 | 272 | 68 | 5 | 1710 | GDDR6X | 320 | 760.3 | 320 |
RTX 3080 12 GB | $799 | Jan 11, 2022 | GA102-220 | Samsung 8nm | 8960 | 12 | 628 | 28.3 | 70 | 280 | 96 | 280 | 70 | 5 | 1710 | GDDR6X | 384 | 912.4 | 350 |
RTX 3080 Ti | $1199 | Jun 3, 2021 | GA102-225 | Samsung 8nm | 10240 | 12 | 628 | 28.3 | 80 | 320 | 112 | 320 | 80 | 6 | 1665 | GDDR6X | 384 | 912.4 | 350 |
RTX 3090 | $1499 | Sep 24, 2020 | GA102-300 | Samsung 8nm | 10496 | 24 | 628 | 28.3 | 82 | 328 | 112 | 328 | 82 | 6 | 1695 | GDDR6X | 384 | 936.2 | 350 |
RTX 3090 Ti | $1999 | Mar 29, 2022 | GA102-350 | Samsung 8nm | 10752 | 24 | 628 | 28.3 | 84 | 336 | 112 | 336 | 84 | 6 | 1860 | GDDR6X | 384 | 1008 | 450 |


Model | MSRP (US$) | Launch | GPU | Lithography | CUDA cores | Memory size (GB) | Die size (mm²) | Transistors (B) | SM count | TMUs | ROPs | Tensor cores | RT cores | L2 cache (MB) | Boost clock (MHz) | Memory type | Memory bus width (bits) | Memory bandwidth (GB/s) | TDP (W) |
|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|
RTX 2060 | $299 / $349 | Jan 15, 2019 | TU106-200 / TU106-300 / TU104-150 | TSMC 12 nm | 1920 / 2176 | 6 / 12 | 445 / 545 | 10.8 / 13.6 | 30 / 34 | 120 / 136 | 48 / 64 | 240 / 272 | 30 / 34 | 3 | 1650 / 1680 | GDDR6 | 192 | 336 | 160 / 185 |
RTX 2060 Super | $399 | Jul 9, 2019 | TU106-410 | TSMC 12 nm | 2176 | 8 | 445 | 10.8 | 34 | 136 | 64 | 272 | 34 | 4 | 1650 | GDDR6 | 256 | 448 | 175 |
RTX 2070 | $499 | Oct 17, 2018 | TU106-400 | TSMC 12 nm | 2304 | 8 | 445 | 10.8 | 36 | 144 | 64 | 288 | 36 | 4 | 1620 | GDDR6 | 256 | 448 | 175 |
RTX 2070 Super | $499 | Jul 9, 2019 | TU104-410 | TSMC 12 nm | 2560 | 8 | 545 | 13.6 | 40 | 160 | 64 | 320 | 40 | 4 | 1770 | GDDR6 | 256 | 448 | 215 |
RTX 2080 | $699 | Sep 20, 2018 | TU104-400 | TSMC 12 nm | 2944 | 8 | 545 | 13.6 | 46 | 184 | 64 | 368 | 46 | 4 | 1710 | GDDR6 | 256 | 448 | 215 |
RTX 2080 Super | $699 | Jul 23, 2019 | TU104-450 | TSMC 12 nm | 3072 | 8 | 545 | 13.6 | 48 | 192 | 64 | 384 | 48 | 4 | 1815 | GDDR6 | 256 | 499.2 | 250 |
RTX 2080 Ti | $999 | Sep 20, 2018 | TU102-300 | TSMC 12 nm | 4352 | 11 | 754 | 18.6 | 68 | 272 | 88 | 544 | 68 | 5.5 | 1545 | GDDR6 | 352 | 616 | 250 |
Titan RTX | $2499 | Dec 18, 2018 | TU102-400 | TSMC 12 nm | 4608 | 24 | 754 | 18.6 | 72 | 288 | 96 | 576 | 72 | 6 | 1770 | GDDR6 | 384 | 672 | 280 |
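Several columns in the Nvidia tables can be cross-checked against each other. Peak memory bandwidth is the bus width in bytes multiplied by the per-pin data rate, and the CUDA core count is the SM count multiplied by the FP32 cores per SM (128 on Ampere, Ada, and Blackwell; 64 on Turing). The tables don't list memory data rates, so the example below plugs in the RTX 5090's 28 Gbps GDDR7. The function and dictionary names are hypothetical.

```python
# FP32 CUDA cores per SM for each architecture in the tables above.
CORES_PER_SM = {"Turing": 64, "Ampere": 128, "Ada": 128, "Blackwell": 128}


def memory_bandwidth_gbs(bus_width_bits: int, data_rate_gbps: float) -> float:
    """Peak memory bandwidth in GB/s: bytes moved per transfer across the
    whole bus (bits / 8) multiplied by the per-pin data rate in Gbps."""
    return bus_width_bits / 8 * data_rate_gbps


def cuda_cores(sm_count: int, arch: str) -> int:
    """CUDA core count derived from the SM count for a given architecture."""
    return sm_count * CORES_PER_SM[arch]


print(memory_bandwidth_gbs(512, 28))   # 1792.0, the RTX 5090 row
print(cuda_cores(170, "Blackwell"))    # 21760, the RTX 5090 row
print(cuda_cores(68, "Turing"))        # 4352, the RTX 2080 Ti row
```

The same bandwidth formula explains why the RTX 4090's 384-bit bus with 21 Gbps GDDR6X lands at 1,008 GB/s.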

AMD GPU specs

Model | MSRP (US$) | Launch | GPU | Compute Units (CUs) | Memory Size (GB) | Fab | Transistors (B) | Die Size (mm²) | Shaders | TMUs | ROPs | Ray Accelerators | AI Accelerators | Memory Type | Memory Bus (bits) | TBP (W) |
|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|
RX 9060 XT | $299 / $349 | Jun 5, 2025 | Navi 44 | 32 | 8 / 16 | TSMC N4P | 29.7 | 199 | 2048 | 128 | 64 | 32 | 64 | GDDR6 | 128 | 150 / 160 |
RX 9070 GRE | N/A | May 2025 | Navi 48 | 48 | 12 | TSMC N4P | 53.9 | 356.5 | 3072 | 192 | 96 | 48 | 96 | GDDR6 | 192 | 220 |
RX 9070 | $549 | Mar 6, 2025 | Navi 48 | 56 | 16 | TSMC N4P | 53.9 | 356.5 | 3584 | 224 | 128 | 56 | 112 | GDDR6 | 256 | 220 |
RX 9070 XT | $599 | Mar 6, 2025 | Navi 48 | 64 | 16 | TSMC N4P | 53.9 | 356.5 | 4096 | 256 | 128 | 64 | 128 | GDDR6 | 256 | 304 |

Model | MSRP (US$) | Launch | Code Name | Compute Units (CUs) | Fab | Transistors (B) | Die Size (mm²) | Shaders | TMUs | ROPs | Ray Accelerators | AI Accelerators | Memory Size (GB) | Memory Type | Memory Bus (bits) | TBP (W) |
|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|
RX 7600 | $269 | May 25, 2023 | Navi 33 | 32 | 6nm | 13.3 | 204 | 2048 | 128 | 64 | 32 | 64 | 8 | GDDR6 | 128 | 165 |
RX 7700 XT | $449 | Sep 6, 2023 | Navi 32 | 54 | 5nm | 28.1 | 346 | 3456 | 216 | 96 | 54 | 108 | 12 | GDDR6 | 192 | 245 |
RX 7800 XT | $499 | Sep 6, 2023 | Navi 32 | 60 | 5nm | 28.1 | 346 | 3840 | 240 | 96 | 60 | 120 | 16 | GDDR6 | 256 | 263 |
RX 7900 XT | $899 | Dec 13, 2022 | Navi 31 | 84 | 5nm | 57.7 | 529 | 5376 | 336 | 192 | 84 | 168 | 20 | GDDR6 | 320 | 315 |
RX 7900 XTX | $999 | Dec 13, 2022 | Navi 31 | 96 | 5nm | 57.7 | 529 | 6144 | 384 | 192 | 96 | 192 | 24 | GDDR6 | 384 | 355 |

Model | Launch | Code Name | Fab | Transistors (B) | Die Size (mm²) | Compute Units (CUs) | Shaders | TMUs | ROPs | Memory Size (GB) | Memory Type | MSRP (US$) |
|---|---|---|---|---|---|---|---|---|---|---|---|---|
RX 6600 | Oct 13, 2021 | Navi 23 | 7nm | 11.06 | 237 | 28 | 1792 | 112 | 64 | 8 | GDDR6 | $329 |
RX 6600 XT | Aug 11, 2021 | Navi 23 | 7nm | 11.06 | 237 | 32 | 2048 | 128 | 64 | 8 | GDDR6 | $379 |
RX 6700 XT | Mar 18, 2021 | Navi 22 | 7nm | 17.2 | 335 | 40 | 2560 | 160 | 64 | 12 | GDDR6 | $479 |
RX 6800 | Nov 18, 2020 | Navi 21 | 7nm | 26.8 | 520 | 60 | 3840 | 240 | 96 | 16 | GDDR6 | $579 |
RX 6800 XT | Nov 18, 2020 | Navi 21 | 7nm | 26.8 | 520 | 72 | 4608 | 288 | 128 | 16 | GDDR6 | $649 |
RX 6900 XT | Dec 8, 2020 | Navi 21 | 7nm | 26.8 | 520 | 80 | 5120 | 320 | 128 | 16 | GDDR6 | $999 |
RX 6950 XT | May 10, 2022 | Navi 21 | 7nm | 26.8 | 520 | 80 | 5120 | 320 | 128 | 16 | GDDR6 | $1099 |

Model | Launch | Code Name | Fab | Transistors (B) | Die Size (mm²) | Compute Units (CUs) | Shaders | TMUs | ROPs | Memory Size (GB) | Memory Type | MSRP (US$) |
|---|---|---|---|---|---|---|---|---|---|---|---|---|
RX 5500 XT | Dec 12, 2019 | Navi 14 | 7nm | 6.4 | 158 | 22 | 1408 | 88 | 32 | 4/8 | GDDR6 | $169 |
RX 5600 XT | Jan 21, 2020 | Navi 10 | 7nm | 10.3 | 251 | 36 | 2304 | 144 | 64 | 6 | GDDR6 | $279 |
RX 5700 | Jul 7, 2019 | Navi 10 | 7nm | 10.3 | 251 | 36 | 2304 | 144 | 64 | 8 | GDDR6 | $349 |
RX 5700 XT | Jul 7, 2019 | Navi 10 | 7nm | 10.3 | 251 | 40 | 2560 | 160 | 64 | 8 | GDDR6 | $399 |
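The AMD tables follow fixed ratios too. Every RDNA compute unit carries 64 shaders and four texture units, RDNA 2 added one ray accelerator per CU, and RDNA 3 and RDNA 4 include two AI accelerators per CU. Here is a small sketch that derives those columns from the CU count, assuming those ratios; the dataclass and function names are hypothetical.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class RdnaCounts:
    shaders: int
    tmus: int
    ray_accelerators: int
    ai_accelerators: int


def per_cu_counts(compute_units: int, rdna_gen: int) -> RdnaCounts:
    """Derive unit counts from the CU count for RDNA generations 1 to 4."""
    if rdna_gen not in (1, 2, 3, 4):
        raise ValueError("expected RDNA generation 1-4")
    return RdnaCounts(
        shaders=compute_units * 64,                              # all RDNA gens
        tmus=compute_units * 4,                                  # all RDNA gens
        ray_accelerators=compute_units if rdna_gen >= 2 else 0,  # from RDNA 2
        ai_accelerators=compute_units * 2 if rdna_gen >= 3 else 0,  # from RDNA 3
    )


print(per_cu_counts(64, 4))  # matches the RX 9070 XT row
print(per_cu_counts(40, 1))  # matches the RX 5700 XT row (no RT/AI units)
```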

PC Gamer graphics card reviews

Nvidia RTX 5080 | January 2025

"The RTX 5080 Founders Edition uses the same lovely shroud as the top RTX Blackwell card, and brings the same DLSS/MFG feature set to the table. But that's all that is really setting the second-tier card apart from the RTX 4080 Super as the gen-on-gen performance difference is marginal at best. It might not be an exciting GPU, but at least the veneer of Multi Frame Generation will make it feel like a generational leap to most gamers."

AMD RX 9070 XT | March 2025

"The Radeon RX 9070 XT, ably demonstrated by this Asus Prime version, is a great price/performance card that takes Nvidia to task on pricing, and shows AMD has taken great strides forward with both RT and AI processing. It could be a hugely consequential GPU, if only the AIBs can keep their worst pricing excesses in check."

Nvidia RTX 5070 | March 2025

"At this price, and with this competition looming large, I just don't know how I can recommend the RTX 5070 as a genuine purchase for any PC gamer without major caveats. The GPU at its heart feels like it should be the basis for an RTX 5060 and now the rest of market might just force that upon Nvidia."

Nvidia RTX 5060 Ti | April 2025

"A solid pick for an entry-level graphics card, especially at its lower-than-last-gen price. The RTX 5060 Ti would make a mean upgrade for someone still using an ageing GPU, provided you can get one close to MSRP."

Intel Arc B580 | December 2024

"The one thing the Arc B580 had to do was perform consistently, had it done so it would be the best budget gaming GPU out there. For games where it does perform it actually is right now. But inconsistency not only in straight performance, but also whether a game would run or not, have made it all but impossible to recommend this card at launch."

Nvidia GeForce RTX 4090 | October 2022

"The RTX 4090 may not be subtle but the finesse of DLSS 3 and Frame Generation, and the raw graphical grunt of a 2.7GHz GPU combine to make one hell of a gaming card."

Nvidia RTX 4080 Super

"The RTX 4080 Super is more a relaunch of the much maligned original RTX 4080 than an exciting new card in its own right, but it is still a GPU with serious gaming chops. But with no tangible performance difference it's the $200 relative price cut that does the work of rehabilitating the erstwhile RTX 4080. AMD's similarly priced, similarly performing RX 7900 XTX is still a thorn in the second-tier Ada card's side, however."

AMD Radeon RX 7900 XTX

"The RX 7900 XTX is not a direct competitor to the RTX 4080 in every way, but it's notably cheaper and delivers a whole lot more than the top RX 6000-series card."


Nvidia GeForce RTX 3090 Ti | March 2022

"The RTX 3090 Ti is undoubtedly the fastest consumer GPU known to man, but it comes with a steep price and how long it remains on top is up in the air. This is as far as the Ampere GPUs can be pushed and, with the next-gen architectures from AMD and Nvidia on the way this year, its dominance could well be short lived."

PC Gamer score: 71%

Nvidia GeForce RTX 3090 | September 2020

"This frankly enormous graphics card is supremely powerful, but is more worthy of its Titan credentials than the GeForce branding. For your average gamer, it doesn't deliver enough over the RTX 3080 to make sense, but for the pro-creator, it's a workload-crushing card."

Nvidia GeForce RTX 3080 Ti | June 2021

"The GeForce RTX 3080 Ti is a helluva graphics card for 4K gaming, in much the same way Nvidia's RTX 3090 is. However, a price tag closer to Ampere's finest, and far in excess of the RTX 3080, will see this card only find its way into the most expensive PC builds around."

AMD Radeon RX 6950 XT | May 2022

"It's cool-running, quiet, and impressively powerful. Though far more thirsty than RDNA 2 cards before it. The real issues, however, are all external and stem from the expectation that AMD and Nvidia's next-gen GPUs are but a handful of months away now."

AMD Radeon RX 6900 XT | December 2020

"The AMD RX 6900 XT is an impressive feat of sheer performance uplift generation on generation. Yet its high cost and minimal performance benefit over cheaper graphics cards make it difficult to recommend."

Nvidia GeForce RTX 3080 | September 2020

"The new Nvidia card houses a monster of a GPU, tearing up the Turing generation and making ray traced gaming worthwhile. And this Founders Edition is the ultimate expression of the GeForce RTX 3080."

AMD Radeon RX 6800 XT | November 2020

"With the launch of the Radeon RX 6800 XT, AMD can claim 4K-capable performance in earnest. It marks a huge step in the right direction for team red, and delivers genuine competition to Nvidia's high-end."

AMD Radeon RX 6800 | November 2020

"The RX 6800 has no real opposite number over at Nvidia, but I can say it more than matches an RTX 2080 Ti at 4K and over-delivers at 1440p for high refresh rate gaming without compromise."

Nvidia GeForce RTX 3070 Ti | June 2021

"The GeForce RTX 3070 Ti delivers frustratingly variable performance, often too close to an RTX 3070 for a price smack between it and the RTX 3080. It doesn't have quite the same impact as Ampere's best cards, but at the very least offers PC gamers another chance to pick up a graphics card at MSRP this year."

Nvidia GeForce RTX 3070 | October 2020

"This diminutive third-tier Ampere GPU delivers gaming performance that would have topped the Turing generation, and does it while running cooler, with less power, and in a much smaller footprint."

Nvidia GeForce RTX 3060 Ti | December 2020

"The RTX 3060 Ti is exactly what we expected from a fourth-tier RTX 30-series graphics card, but that's no mark against it. Nvidia's Ampere cards offer an almost unprecedented leap in gaming performance over past generations, and the RTX 3060 Ti manages to deliver more of the same on a slimmer budget."

AMD Radeon RX 6750 XT | May 2022

"The Asus spin of the RX 6750 XT features a stunningly effective GPU cooler, a chip that runs at nearly 2.7 GHz on average, but draws a whole heap of power doing it. And it's also super expensive at a time where the second-hand market is reborn and the notion of an MSRP product is once more a thing."

Nvidia GeForce RTX 3060 12GB | February 2021

"A healthy upgrade over the RTX 2060, but a little of the wow factor has worn off for Ampere's cheapest. You can at least be confident that you won't run out of memory, at least, and that feels like it will be important for gaming into 2021 and beyond, even if we're not quite there yet."

Intel Arc A770 | October 2022

"The Arc A770 Limited Edition is far from perfect but does deliver very playable frame rates at 1440p and with ray tracing enabled. It's certainly not a bad start for Intel Arc. But while it focuses on facing down Nvidia and the popular RTX 3060, it is AMD's RX 6600 XT that simply offers a better alternative right now."

AMD Radeon RX 6600 XT | August 2021

"In an alternate universe, without the current silicon drought, AMD's RX 6600 XT is less than $300 and a great mid-range option. In this, the darkest timeline, it's almost $400, negligibly quicker than an RX 5700 XT and only slightly cheaper. Though at least it's better than the RTX 3060."

AMD Radeon RX 6600 | October 2021

"The Radeon RX 6600 delivers enough for modern 1080p gaming, which is good going considering AMD has preened this package into a much more power-savvy design than the RX 6600 XT. That said, Nvidia's RTX 3060 12 GB is still the card we'd recommend at this price point if you can find one in stock. Otherwise, the RX 6600 makes for a viable, if not sparkling, alternative."

Nvidia GeForce RTX 3050 | January 2022

"We're essentially looking at Nvidia taking away the 'GTX' prefix and giving us an RTX 1660 Ti. That makes it a good 1080p GPU, though the addition of DLSS support is far more tempting a proposition, especially at this level of GPU, than the promise of 1080p ray traced gaming. Fingers crossed it stays in stock at a reasonable price."

AMD Radeon RX 6500 XT | January 2022

"A graphics card built to offer the barest minimum of hardware fails to move the game on, and feels like the most cynical, GPU-crisis graphics card we've seen yet."


Dave has been gaming since the days of Zaxxon and Lady Bug on the Colecovision, and code books for the Commodore Vic 20 (Death Race 2000!). He built his first gaming PC at the tender age of 16, and finally finished bug-fixing the Cyrix-based system around a year later. When he dropped it out of the window. He first started writing for Official PlayStation Magazine and Xbox World many decades ago, then moved onto PC Format full-time, then PC Gamer, TechRadar, and T3 among others. Now he's back, writing about the nightmarish graphics card market, CPUs with more cores than sense, gaming laptops hotter than the sun, and SSDs more capacious than a Cybertruck.

- Jacob Ridley, Managing Editor, Hardware

- Andy EdserHardware Writer