The spirit of PC building will keep our gaming machines from disappearing into the cloud

"Similar to how muscle cars from the '50s haven't disappeared, the love for building your own desktop PC from the ground up... will make desktop PCs prevail."

This article was originally published on 30th June this year, and we are republishing it today as part of a series celebrating some of our favourite pieces of the past 12 months.

For most of computing history, computational power has lived right next to where it was accessed from. Sure, data has been transmitted over internet protocols for decades, but the CPU was firmly planted next to the person using it. That's no longer always how it works. I'm talking about the cloud, a snappy title for 'another computer way over there.'

For gaming, there's a potential paradigm shift coming. One that sees our gaming PCs move from under our desks to the shelf of a server rack. As a fan of PC building and the consumer choice that comes with it, I for one am terrified of such a prospect, but how realistic is that end?

"It is possible but limited by several serious challenges," says Dr. Hao Zheng, Assistant Professor of Electrical and Computer Engineering at the University of Central Florida.

"We have seen many online gaming websites and applications, but all are very simple and don't require distributed computing. In distributed computing, a large task (i.e. a game) is partitioned, allocated, and executed on multiple computers. This means that communication is a major issue, and the performance of distributed learning is highly dependent on internet connections."

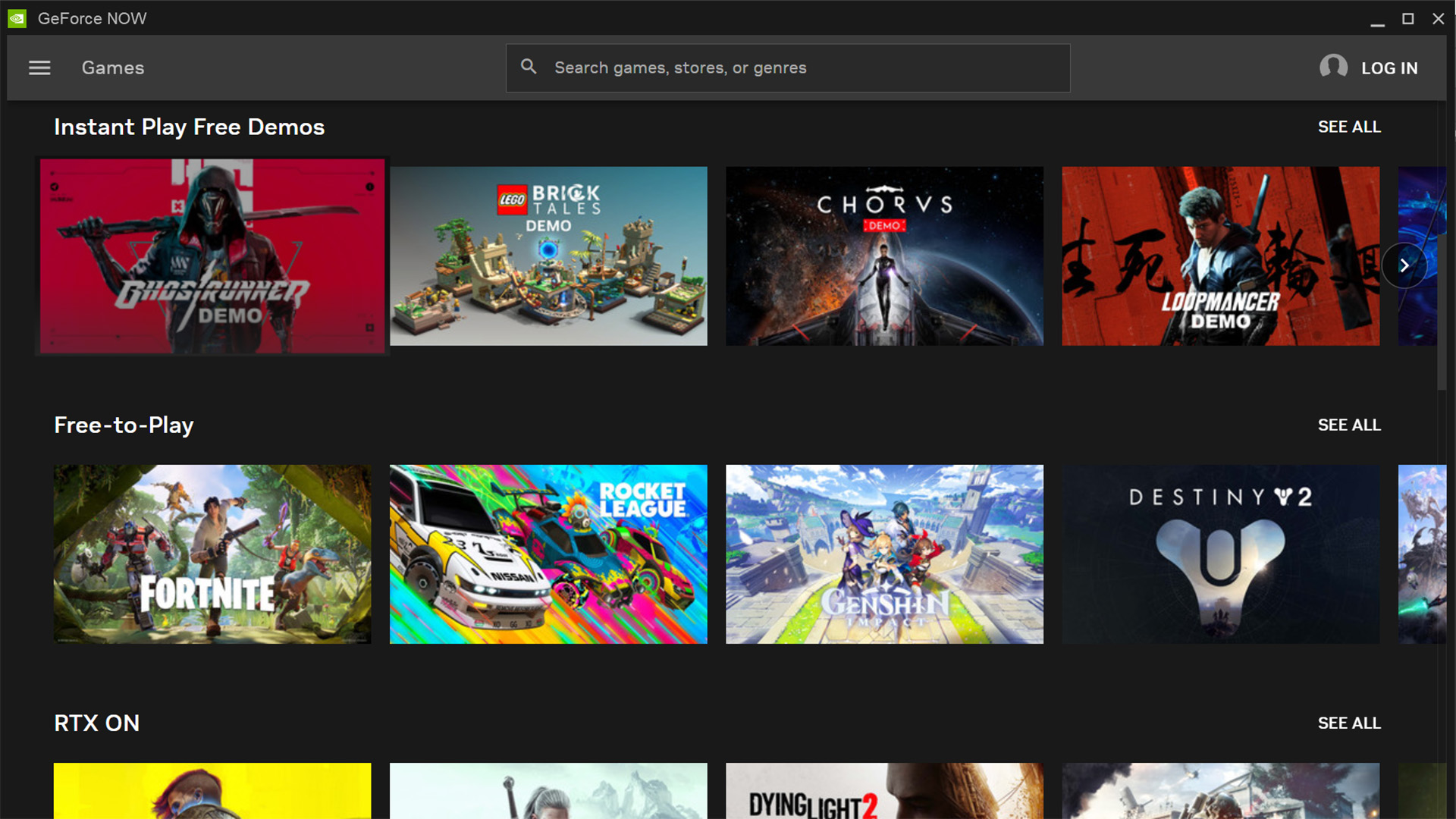

Ah, internet connectivity issues. Consider how frustrating it is today to have an entire game session dropped because of a flaky connection, then amplify that tenfold, because without web access it'll be your entire PC that disappears. Admittedly, I'm against the whole idea, even if I love streaming games from GeForce Now to my Steam Deck, and yes, it's also because I talk about PC parts for a living.

"You'll always have some of those higher users who are trying to strive for that 8K. That you're never going to stream from the cloud because it's too frickin' expensive and our infrastructure is not good enough," Marcus Kennedy, general manager for gaming at Intel, tells me.

Thankfully, it's you (dear reader) and me who might help keep the hobby alive and well, even if the world does get a better handle on universally improved internet infrastructure. Though I have my doubts about that happening for the foreseeable future, too.

"Similar to how muscle cars from the '50s haven't disappeared, the love for building your own desktop PC from the ground up, modifying it to your hearts' contempt, pushing the limits of overclocking to bust a couple more FPS, or turning it into your own work of art, will make desktop PCs prevail," says Carlos Andrés Trasviña Moreno, Software Engineering Coordinator at CETYS Ensenada.

"If not for everybody, at least for the select few who enjoy the thrill of it."

Phew. PC building isn't going anywhere. That's not to say streaming compute power from the cloud has no benefits. Of course it does, and with AI we're already seeing local features replaced or augmented by cloud computing, because that's where AI workloads have to go to leverage the massive algorithms powering Large Language Models and similar systems.

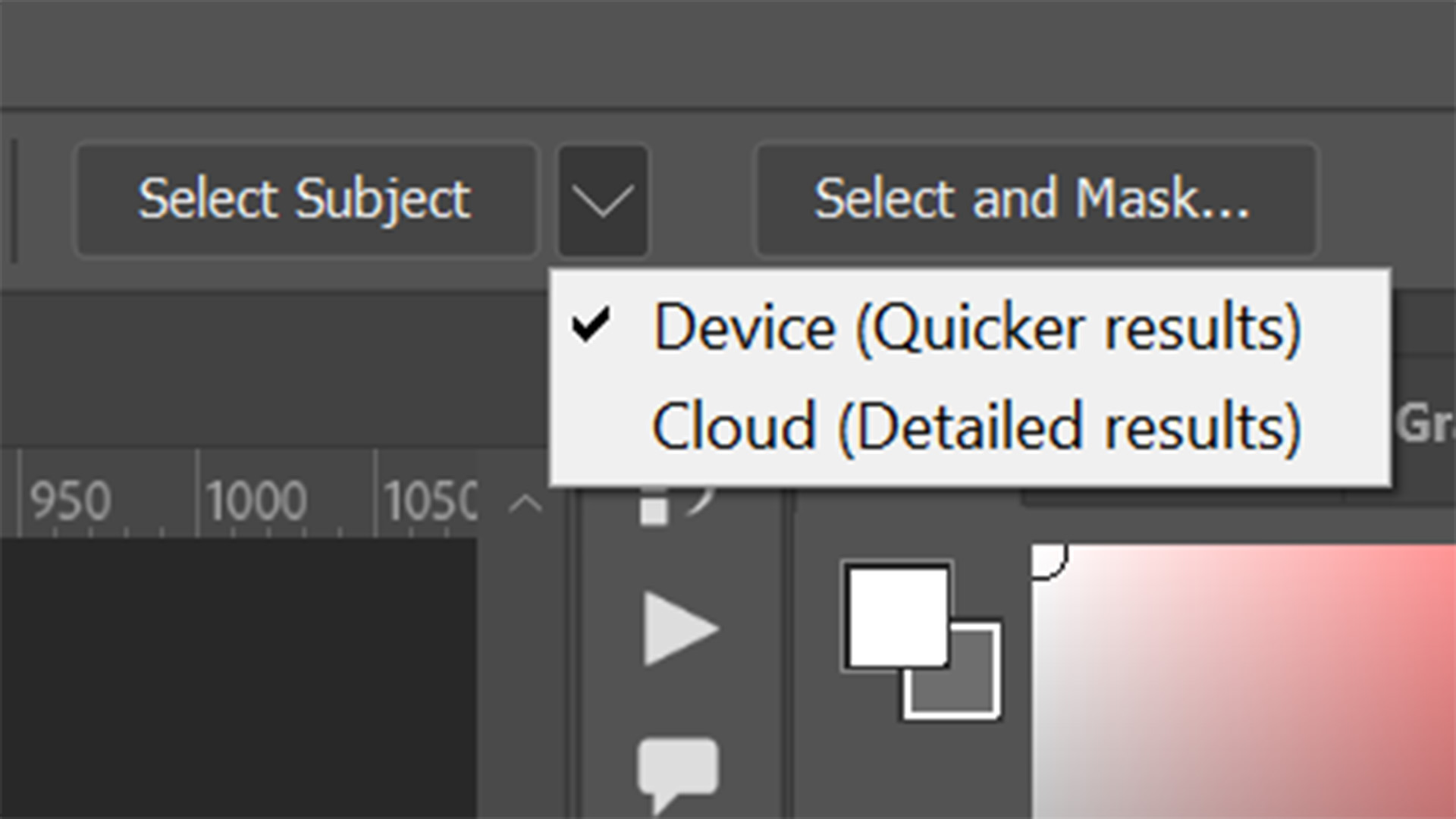

Take Photoshop's Object Selection Tool, which offers either a quicker localised version running on your own hardware or a more detailed, better version using compute in the cloud.

But for gaming specifically, we've already seen a rise in services that offer gaming on-the-go. Nvidia's GeForce Now is the best of the lot in my experience, mostly because I can use my Steam game library with it, but there have been a few genuinely impressive game streaming services. One such service, Shadow, even offered an entire PC in the cloud, and I actually thought it was pretty damn good.

But we gamers spend a lot of money on milliseconds. A mechanical gaming keyboard with magnetic switches that saves one thousandth of a second? That's worth it. A screen with a fractionally faster response time? Heck yeah. These are purchases we're making all the time, and there's something in that which might just keep the localised gaming dream alive for a lot longer than some would have us believe.

"The more compute you need is going to be driven by how fast you need that interaction to happen," Kennedy tells me. "And gaming is a usage from the beginning that is all about interactivity, it's all about low latency, it's all about what a gamer expects. You push a button, you click the mouse button, you push a key, and you expect something to happen immediately on the screen. Right? And because of that, you're going to need most of that to happen as close to the workload as humanly possible."

"Now, there are some games where you don't need that. But for the vast majority of games, I think you need that."

Am I going to play Crusader Kings over the cloud on my underpowered little work laptop? Yes, but is that going to eventually replace my tower at home? Probably not. And it's not just about performance; partly it's for the love of the hobby.

Jacob earned his first byline writing for his own tech blog. From there, he graduated to professionally breaking things as hardware writer at PCGamesN, and would go on to run the team as hardware editor. He joined PC Gamer's top staff as senior hardware editor before becoming managing editor of the hardware team, and you'll now find him reporting on the latest developments in the technology and gaming industries and testing the newest PC components.