The ESRB wants to start using facial scanning technology to check people's ages

The FTC is now seeking public feedback on the ESRB's proposal.

Update: The ESRB has released a statement clarifying that this technology does not "confirm the identity of users," nor is it intended to scan the faces of minors to determine whether they're old enough to purchase particular games. It only uses images to estimate the subject's age in order to ensure compliance with COPPA privacy requirements.

"To be perfectly clear: Any images and data used for this process are never stored, used for AI training, used for marketing, or shared with anyone," the ESRB said in its statement. "The only piece of information that is communicated to the company requesting VPC is a 'Yes' or 'No' determination as to whether the person is over the age of 25."

Original story:

Remember a couple years ago, when Chinese gaming giant Tencent began using facial recognition to keep the kids from playing too many videogames? It turns out that the Entertainment Software Rating Board, North America's videogame rating agency, is looking to do something quite similar.

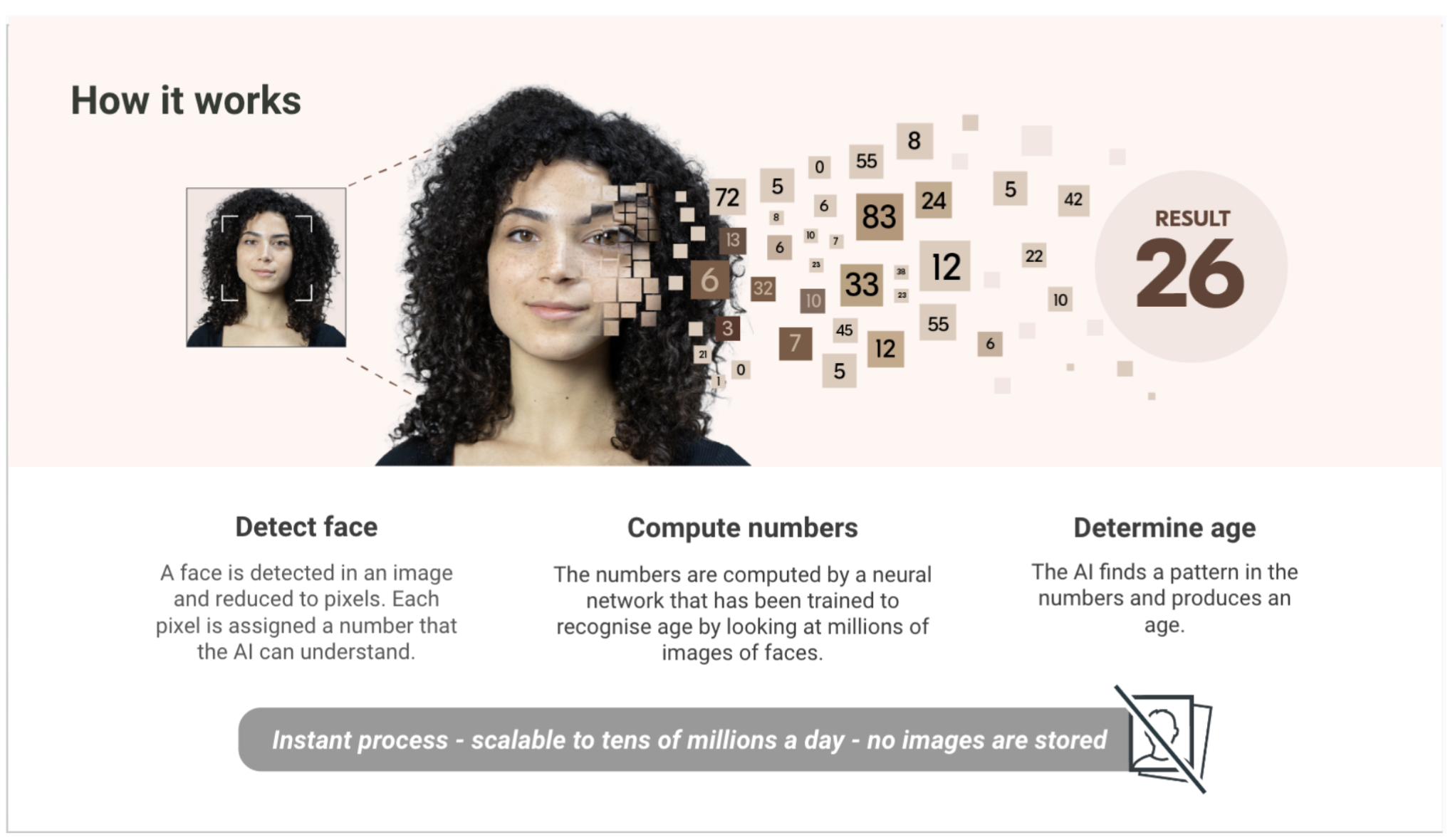

The ESRB, along with digital identity company Yoti and Epic Games-owned "youth digital media" company SuperAwesome, has filed a proposal with the FTC seeking approval for a new "verifiable parental consent mechanism" called Privacy-Protective Facial Age Estimation. Simply put, the parent takes a selfie, assisted by an "auto face capture module," which is then analyzed by the system to ensure it's the face of an adult, who can then grant whatever permissions are required. The entire process of verification takes less than a second "on average," and images are permanently deleted after the verification is complete.

"The upload of still images is not accepted, and photos that do not meet the required level of quality to create an age estimate are rejected," the filing states. "These factors minimize the risk of circumvention and of children taking images of unaware adults."

Of course, kids outsmarting the system isn't the only risk at play here. Accuracy strikes me as the big one, given that facial recognition technology is so notoriously racist: A study conducted in the US, for instance, found that Asian and African American people were up to 100 times more likely to be incorrectly identified by facial recognition systems than white people. And maybe I'm underestimating the magic at work here but determining whether someone is 16 or 18 based on a single selfie also strikes me as a real roll of the dice. The ESRB dismissed concerns about the "fairness" of the system, however, saying that "the difference in rejection rates between gender and skin tone is very small."

"The data suggests that for those between 25 and 35, 15 out of 1,000 females vs 7 out of 1,000 males might be incorrectly classified as under-25 (and would have the option of verifying using another method)," the filing states. "The range of difference by skin tone is between 8 out of 1,000 vs 28 out of 1,000. While bias exists, as is inherent in any automated system, this is not material, especially as compared to the benefits and the increase in access to certain groups of parents."
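For a rough sense of scale, the filing's per-1,000 figures can be turned into percentages and relative gaps. This is just back-of-the-envelope arithmetic on the quoted numbers, not anything from the filing itself: the variable names and the `relative_gap` helper are made up for illustration, and because the filing doesn't say which skin tones sit at either end of its range, they're labeled only as lowest and highest.

```python
# Figures quoted in the filing: adults aged 25 to 35 who might be
# incorrectly classified as under-25, per 1,000 people.
PER = 1000
rates = {
    "female": 15 / PER,
    "male": 7 / PER,
    "skin tone (lowest rate)": 8 / PER,
    "skin tone (highest rate)": 28 / PER,
}


def relative_gap(higher, lower):
    """How many times more often the higher-rate group is wrongly rejected."""
    return higher / lower


gender_gap = relative_gap(rates["female"], rates["male"])
skin_tone_gap = relative_gap(
    rates["skin tone (highest rate)"], rates["skin tone (lowest rate)"]
)

for group, rate in rates.items():
    print(f"{group}: {rate:.1%}")
print(f"gender gap: {gender_gap:.2f}x")
print(f"skin tone gap: {skin_tone_gap:.2f}x")
```

In absolute terms every rate is under 3%, but the relative gaps work out to a little over two times between genders and three and a half times across skin tones.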

It's important to note that none of this is proposed as a replacement for current systems: Instead, the ESRB presented its facial age verification plan as "an additional, optional verification method" that will be of particular use to people who don't have photo ID. In a statement sent to PC Gamer, Yoti also noted that the system works without actually recognizing or identifying individuals: Instead, the technology simply estimates the age of the face in the image it sees.

That's all good, but in my eyes it doesn't change the fact that, yeah, this really is a gross invasion of privacy—I sure as hell don't want to be sharing my mug with the Great Digital Overmind just so my hypothetical kid can play some GTA Online. Quite honestly, I also don't think relying on potentially-dodgy technology to enforce our social mores is such a great idea to begin with. And come on, does anyone seriously think that a sharp 16-year-old won't have this system beat in about 15 minutes anyway?

The ESRB actually made its request to the FTC back on June 2, but it's only come to light now (via GamesIndustry) because the FTC is now seeking public comment on the plan. If you'd like to share your thoughts, you've got until August 21 to do so at federalregister.gov.

Andy has been gaming on PCs from the very beginning, starting as a youngster with text adventures and primitive action games on a cassette-based TRS80. From there he graduated to the glory days of Sierra Online adventures and Microprose sims, ran a local BBS, learned how to build PCs, and developed a longstanding love of RPGs, immersive sims, and shooters. He began writing videogame news in 2007 for The Escapist and somehow managed to avoid getting fired until 2014, when he joined the storied ranks of PC Gamer. He covers all aspects of the industry, from new game announcements and patch notes to legal disputes, Twitch beefs, esports, and Henry Cavill. Lots of Henry Cavill.
