Steam's review bombing graph may only encourage review bombing

Some problems can't be solved with data.

There's no doubt in my mind that Steam user review scores matter. I'm obviously more likely to check out a game with 15,000 overwhelmingly positive reviews than a game with 15 mixed reviews. We all have to make decisions about how we spend our time and money and we use the information available to us.

The question about Steam user reviews is whether or not that information is any good. A Steam user can post a review for any reason. Some want to share their considered thoughts on a whole experience, but others may just want to vent about an irritating bug, or their favorite character being nerfed. Some played for 20 minutes and don't really have anything to say.

Individually, we can all choose who to trust, but there's no way to aggregate so many motivations and methodologies into a single score without losing a ton of information in the process. As our hardware editor Jarred pointed out to me, reviewers are also self-selecting, which further undermines the system. And even understanding that Steam's overall review scores are only vague indicators of popularity isn't enough, as they might be further skewed by the topic of the week: review bombing, a coordinated effort to punish a developer with negative reviews, which often occurs for reasons outside of the game itself.

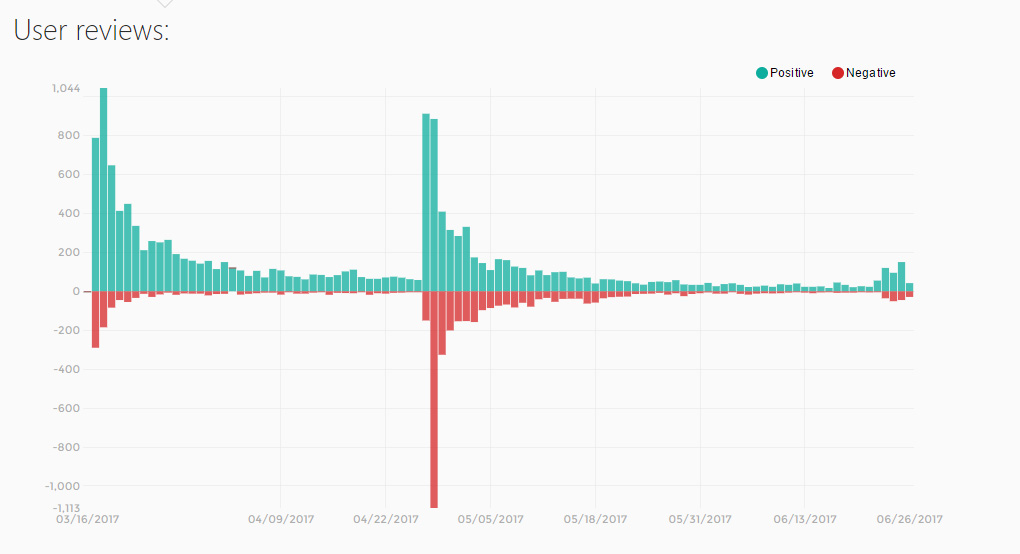

Valve has a new 'solution' to review bombing: a histogram which displays the volume of negative and positive reviews over time to help users identify when external matters may be influencing the overall score. SteamSpy already offered graphs like this, which we recently used to demonstrate review bombing in China. The idea is that adding graphs will make it easier for a consumer to notice that a review bomb has happened. They can then read reviews posted during a heavy spike in negativity to try to suss out why: maybe players are mad Half-Life 3 hasn't been released, or there was no Chinese localization at launch, or PewDiePie said the n-word on a livestream and Campo Santo sent him a DMCA notice.

But the histogram isn't really a solution, per se, because Valve doesn't entirely see review bombing as a problem to be removed.

"On the one hand, the players doing the bombing are fulfilling the goal of User Reviews," wrote Valve's Alden Kroll in an update posted earlier this week, "they're voicing their opinion as to why other people shouldn't buy the game." On the other hand, out-of-game issues such as platform support "aren't very relevant when it comes to the value of the game itself," he says. Yet Kroll equivocates, saying that "some of them are real reasons why a player may be unhappy with their purchase."

The post finally settles on the idea that "review bombs make it harder for the Review Score to achieve its goal of accurately representing the likelihood that you'd be happy with your purchase if you bought a game." So it's a problem, but sometimes a problem that arises from legitimate gripes. And because Valve doesn't want to moderate Steam, which would require making judgments on what is or isn't a legitimate gripe, it has treated review bombing as a transparency issue. It's not stopping it from happening, it's just making sure we know it's happening, or at least have a way to find out.

The no-judgments approach is Valve's whole philosophy toward Steam. In the rare cases where it does make a judgment call, as it has with sexual content, its reasoning isn't entirely clear. And Valve is a lean company for all that it does—around 360 employees at last check—so it prefers automation to time-consuming jobs like manual moderation. This is evidenced by its crowdsourced publishing system, Greenlight, which has been replaced with the even more open Steam Direct, as well as its many discoverability programs which rely on community curators and algorithms rather than staff picks. Valve has admitted in the past that it has a poor customer service record, and I'd wager the problem has stemmed from customer service not being easy to elegantly automate.

But even automation can involve judgment, or have a bias. Kroll notes that Valve could temporarily lock down reviews when there are anomalous surges, but says they don't want to "stop the community having a discussion about the issue they're unhappy about, even though there are probably better places to have that conversation than in Steam User Reviews." This is really, again, about moderation. Even if it's automated, temporarily restricting reviews is a declaration that the review bombing campaign is illegitimate. But what if Valve thinks the players do have a legitimate complaint? It could manually bypass the automated lock-down, siding with angry players instead of the developer, but it could not stay neutral either way.

Valve would be forced to draw a line between good and bad reasons to give a game a negative review, and crucially, hire people to make those decisions rather than cleverly automate something—it's all too messy and human-driven for the Valve that we know. Hence, the histogram.

I believe Valve's 'solution' here is well intentioned—the thorough post explaining the decision suggests as much, at least—but as many game developers have pointed out, this particular graph may only cause problems. For one thing, it won't necessarily provide enough context for average users—say, people who don't follow every controversy documented in our news section. Are they really going to investigate the 'mixed reviews' indicator, pull up the graph, and then read intentionally vague reviews to try to identify whether the negativity is justified? I'm skeptical.

The graph also provides visual feedback to review bombers that tells them how they're doing. It gives them a screenshot to pass around as evidence of their success. It validates their campaign. As Evan pointed out during our livestream today, it provides an ammunition similar to concurrent player counts, which are often used as evidence that a game is bad, even though that's a faulty conflation. (LawBreakers is actually pretty good, I swear.)

Not entirely sure about how "give review bombs more exposure, a permanent record, and gamify it with a graph they can min/max" fixes things.

September 19, 2017

Steam review scores already require further investigation. They're flawed, as all review aggregates are. The worst thing they can do, though, is encourage skewed results. It's some comfort that Valve has found that review-bombed games typically end up back where they were once the controversy has blown over, but now a big red bar will remain as a proud crater for those who created it—and perhaps motivation to keep it up.

Steam users clearly want to express their opinions on Steam, and that includes opinions about the character of a developer. Campo Santo also expressed its views on PewDiePie when it sent its DMCA. From Valve's current perspective—though Kroll admits it might need a better solution when it begins offering "personalized" reviews—the only important thing is to make sure there's some way for users to see who has more points.

Keeping score doesn't really help if the goal is to ensure reviews are evaluations of games and not acts of vindictiveness. Moderation requires judgment: someone deciding what is and isn't a legitimate review. It also makes people mad. It cannot be neutral. If Valve wants to curb review bombing, it must decide when a review bomb is unjustified. Some problems don't have elegant, automated solutions.

It is admittedly difficult. Should Valve stop people from reviewing Dota 2 because they feel Valve abandoned them by focusing on games they don't like instead of single-player shooters? They legitimately don't like Dota 2, or they assume they don't, though their opinion isn't exactly a 'review' of the game. That decision is one of many that Valve knows would draw ire, and is choosing to avoid.

But either way it hasn't helped by adding more data. I'm broadly for having more information to base decisions on (I think it would be cool to see which games have the most player overlap, for instance), but at best, the review histogram won't really compensate for unjustified review bombs. At worst, it's a leaderboard for review bombing, and PC gamers love leaderboards.

Tyler grew up in Silicon Valley during the '80s and '90s, playing games like Zork and Arkanoid on early PCs. He was later captivated by Myst, SimCity, Civilization, Command & Conquer, all the shooters they call "boomer shooters" now, and PS1 classic Bushido Blade (that's right: he had Bleem!). Tyler joined PC Gamer in 2011, and today he's focused on the site's news coverage. His hobbies include amateur boxing and adding to his 1,200-plus hours in Rocket League.
