Of course Steam said 'yes' to generative AI in games: it's already everywhere

Here's hoping Steam's AI disclosure policy saves us from wasting so much time on speculation and sleuthing.


Valve has been pretty consistent about its attitude toward curating the games on Steam: It doesn't wanna. Even Alex Jones, a guy banned by YouTube and Facebook, has a game on Steam, so it's no surprise that Valve's new generative AI policy avoids ethical judgments, sticking to the company's 2018 declaration that it "shouldn't be choosing for you what content you can or can't buy."

The more prescient observation might be that Steam's new AI policy—which gives the thumbs up to generative AI in games on Steam so long as its use is disclosed and it doesn't break any laws—is really just an acknowledgement that generative AI is already everywhere, including on Steam. Valve hasn't opened any AI floodgate that wasn't already open:

- Free-to-play shooter The Finals, which released on Steam in December, controversially used AI to generate voice lines.

- Cyan Worlds acknowledged the use of "AI assisted content" in Firmament.

- Square Enix released a free "AI tech preview" on Steam last year which slapped AI language processing into a 1983 adventure game (to boos, mainly).

- A roguelike that released on Steam in October claims to be the "world's first RPG in which all entities are AI-generated and all game mechanics are AI-directed."

- In 2022, a game called This Girl Does Not Exist released with some now very crude-looking Midjourney portraits.

Those are just a few examples of generative AI already being used on Steam, either during the production of a game or as a live feature—there are more, almost definitely including some we don't know about.

Even with their legal status up in the air, generative AI tools have already been built into industry-standard applications like Photoshop, and when AI-generated imagery pops up in games and marketing material, even the company that produced the material is sometimes surprised by it. Wizards of the Coast initially denied the use of generative AI in a recent Magic: The Gathering marketing image, and then admitted it had made a mistake and, actually, "AI components" were included in the graphic.

The outcome of legal challenges like The New York Times' recently filed lawsuit against OpenAI could change the direction of commercial AI development this year, but for now there's a mad dash to profit from it, and I think we'll be seeing a lot more AI in games before the end of 2024. At CES this week, Nvidia showed off AI-powered NPCs from startup Convai. Jacob ordered ramen from one and said its responses were "frighteningly good."

Knowing is half the battle?

Valve is being typical Valve by leaving it up to the law to decide what's acceptable on Steam, but it is making one direct demand, and I'm happy to see it: developers must disclose generative AI use in games submitted to Steam, and parts of that disclosure will be published on Steam store pages for all to see.

Assuming most developers are upfront (if not for the sake of honesty, then for fear of being found out later) and these disclosures are thorough, I'll be very thankful to avoid the 'is it AI generated or just kinda bad art?' theorizing that now happens so often and skip right to the part where we individually decide whether or not we're OK with a game's use of generative AI.

It's certainly imperfect, but we'll have to rely on voluntary AI disclosure more and more to make decisions, because image generators probably won't mangle fingers forever. If AI continues to improve at the rate it has been, or faster, how will we ever know if something's AI generated, or AI assisted, or AI inspired without relying to some degree on trust?

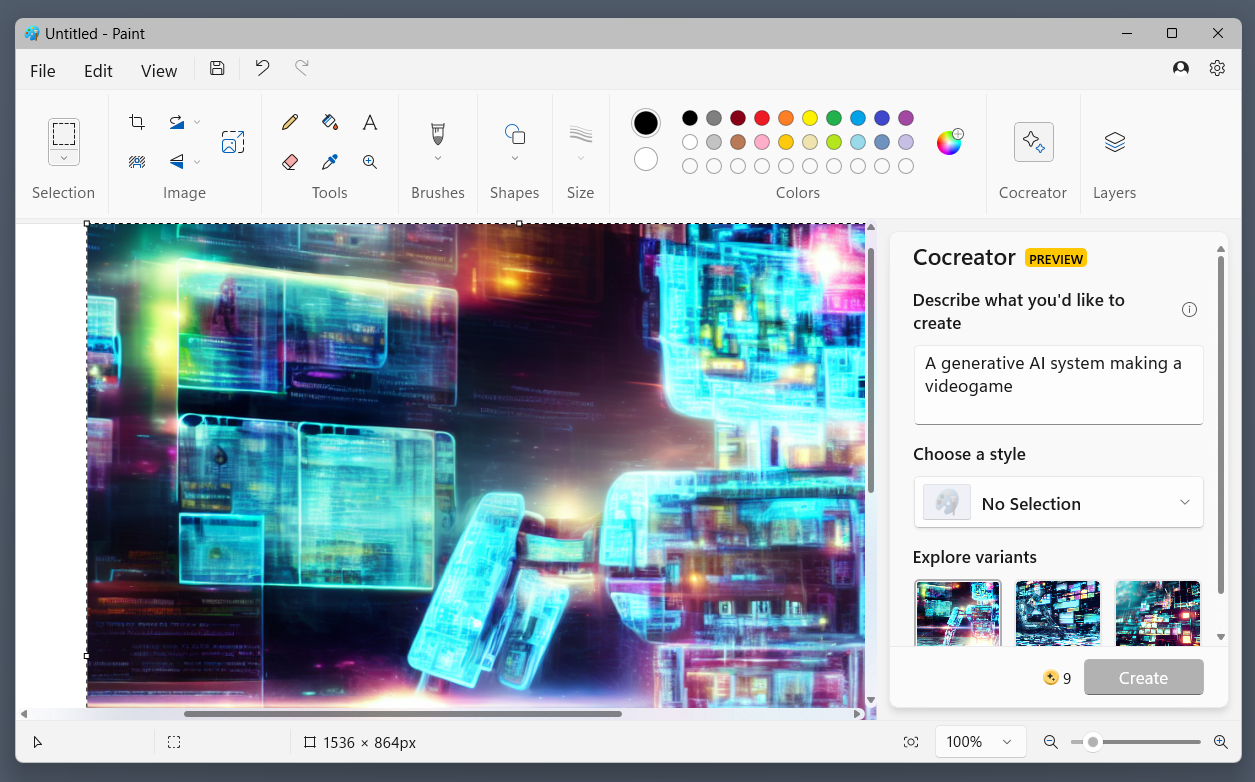

It would be nice if everyone would just slow down so we could take time to think about the consequences of generative AI before sticking it in every commercial product there is, but given that they clearly aren't—they even put it in MS Paint—it is significant that the biggest PC game store has established that a consequence of using generative AI is that you have to say that you used it and how, or be a liar.

That of course leaves all of us with the hard problem of deciding what sorts of AI usage we will and won't accept in games, which I think will be one of the big themes of 2024. The question is not made easy by the novelty and versatility of machine learning, which is being experimented with all over the sciences, from self-driving car development to medical research. In games alone there are loads of applications, such as AI-powered upscaling like Nvidia's DLSS, agent-based game testing, on-the-fly animation generation, and tools like Neuralangelo, an AI model which transforms 2D smartphone videos into 3D models.

From a legal and ethical standpoint, generative AI tools like ChatGPT invite the obvious objection that they're trained on copyrighted material and can be prompted to reproduce aspects of it—the basis of the NYT lawsuit—but each case is different. One company, Hidden Door, plans to pay authors to use their settings, characters, and writing styles in generative AI storytelling games. If they can actually limit their custom AI model to only mimic legally obtained material, is it OK? Or is generative AI a fundamentally anti-human technology even when it respects copyright? I don't have an answer for that yet.

For now, I'll just be glad if we no longer have to listen to voice lines and wonder if they're from real people or computer people, allowing us to skip past the speculation and sleuthing to get to the hard questions.

Tyler grew up in Silicon Valley during the '80s and '90s, playing games like Zork and Arkanoid on early PCs. He was later captivated by Myst, SimCity, Civilization, Command & Conquer, all the shooters they call "boomer shooters" now, and PS1 classic Bushido Blade (that's right: he had Bleem!). Tyler joined PC Gamer in 2011, and today he's focused on the site's news coverage. His hobbies include amateur boxing and adding to his 1,200-plus hours in Rocket League.