Minecraft creator Notch says 'DLSS fundamentally makes no sense', but the X comments say 'um, actually'

Hey, we're all learning. All the time.

Minecraft creator and opinion-haver Notch has taken to X to complain about Nvidia's upscaling and Frame Generation tech, DLSS. You might have heard of it, particularly its controversial new iteration. However, it's the whole concept of DLSS that seems to be confusing Notch.

"DLSS fundamentally makes no sense," the creator of the best-selling video game of all time opines. "Because the graphics card is too slow to run the game at reasonable speeds, you use THE SAME HARDWARE to run a neural network to generate frames in between the existing ones."

DLSS fundamentally makes no sense. Because the graphics card is too slow to run the game at reasonable speeds, you use THE SAME HARDWARE to run a neural network to generate frames in between the existing ones. (Notch on X, April 2, 2026)

Putting aside the fact that Notch appears to be talking about Frame Generation specifically here, which is just one aspect of DLSS, lumping graphics card hardware into one monolith of a category is a touch reductionist, as specific ML-optimised Tensor Cores handle the...

You know what, the X thread replies have already done a lot of the work for me. So, I'll give you a choice selection of those instead.

"its not the same hardware, its the hardware specialized and optimized to run neural networks" (X reply, April 2, 2026)

"you're putting the load on another section of the pipeline, which leads to better relative performance" pic.twitter.com/EeLs60SLE1 (X reply, April 2, 2026)

"Anti-aliasing fundamentally makes no sense. Because the graphics card is too slow to run the game at reasonable speeds, you use THE SAME HARDWARE to run an ALGORITHM(?!?!) to generate good looking frames out of the existing ones." (X reply, April 2, 2026)

An overall point could be made, I guess, that graphics card design and development could (or should) be focussed towards raw raster performance, instead of developing machine learning-specific hardware to assist.

However, with the advent of ray tracing (and ever-more-demanding game engine tech), the industry has shifted away from resource-intensive brute force rendering techniques, and has instead prioritised AI developments to keep the graphical ball rolling.

As Nvidia's vice president of Applied Deep Learning Research, Bryan Catanzaro, explained back in 2023:

"Moore's Law is dead. We don't know as a civilisation how to keep turning the crank on traditional ways of doing things. We have to be smarter."

"You fundamentally realise you have to be more intelligent about the graphics rendering process," Catanzaro continued. "Brute force—let's re-render every frame 120 times a second at 2160p output—that is wasteful because we know that there are a lot of correlations in the output of any rendering process.

"We know that there are a lot of opportunities to be smarter, to reuse compute. And then deliver transformational image quality benefits, things like Cyberpunk, that we could never have imagined doing before."

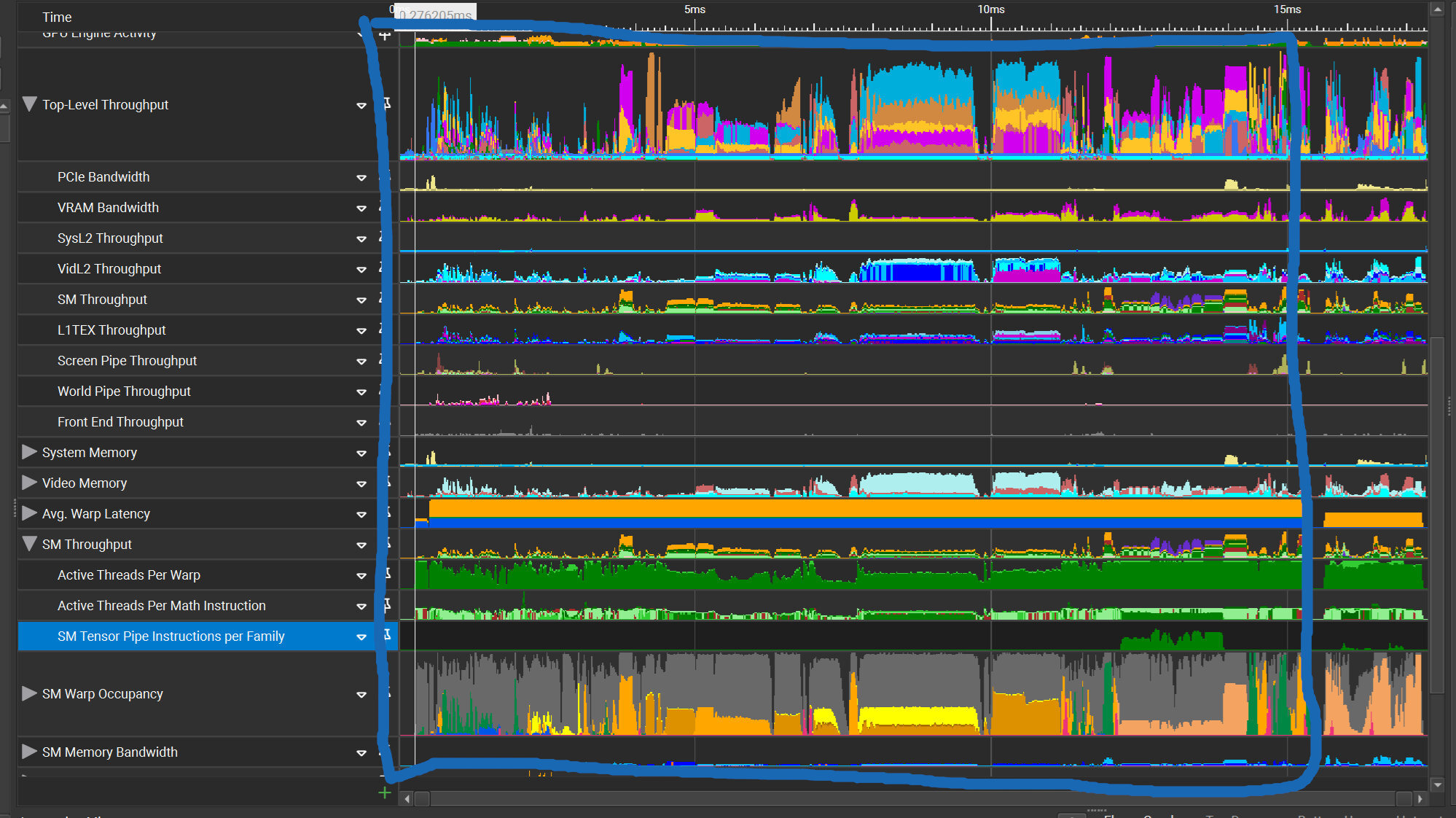

Our Nick is performing some in-depth testing of DLSS and Frame Generation at this very moment, and has passed me the image above from our own data to represent that very point.

"Everything in the blue box is what the GPU has to do to render one frame," Nick explains. "Everything after that is what the GPU has to do to interpolate one additional frame with AI."
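To put rough numbers on Nick's point, here's a back-of-the-envelope sketch in Python. The timings are made up for illustration and are not taken from our test data, and the function name `output_fps` is just a label for this example. It also assumes the worst case for Notch's argument: that the GPU does the rendering and the frame interpolation strictly one after the other, with no overlap at all.

```python
def output_fps(render_ms: float, generate_ms: float, generated_per_rendered: int = 1) -> float:
    """Frames displayed per second when each rendered frame is followed
    by a number of AI-generated frames, all produced one after another."""
    # Time for one full cycle: one rendered frame plus its generated frames.
    cycle_ms = render_ms + generated_per_rendered * generate_ms
    # Frames that reach the screen during that cycle.
    frames_per_cycle = 1 + generated_per_rendered
    return 1000.0 * frames_per_cycle / cycle_ms


# Hypothetical timings, not measurements.
RENDER_MS = 20.0    # time to render one "real" frame
GENERATE_MS = 3.0   # time to interpolate one extra frame

native = output_fps(RENDER_MS, 0.0, generated_per_rendered=0)
with_frame_gen = output_fps(RENDER_MS, GENERATE_MS, generated_per_rendered=1)

print(f"Without frame generation: {native:.1f} fps")
print(f"With frame generation:    {with_frame_gen:.1f} fps")
```

With those assumed figures, the same hardware goes from 50 fps to roughly 87 fps, simply because generating a frame is much cheaper than rendering one. If interpolation cost as much as rendering, the gain would vanish entirely. Note that this only counts displayed frames; it says nothing about input latency or image quality, which is where the caveats live.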

That's not to say that DLSS is everyone's cup of tea, or that Nvidia's AI enhancements are flawless. Opinions will vary greatly here. Nor is it entirely unreasonable to rail against the idea of machine learning algorithms generating frames within your games as a catch-all solution—particularly as the tech is not without caveats.

Especially when Multi Frame Generation on lower-spec cards gets involved, or developers rely too heavily on it for reasonable performance.

However, it does all make sense, at the very least. Hope this helps. I hate to be that guy, but sometimes this job just puts you there, y'know?

Andy built his first gaming PC at the tender age of 12, when IDE cables were a thing and high resolution wasn't. 26 years later (yes he's getting old), he now spends his time travelling around the world attending hardware launches and trade shows, all the while writing about and reviewing graphics cards, CPUs, keyboards, mice, gaming headsets and much, much more. You name it, if it's PC gaming hardware he'll write words about it, with opinions and everything.
