What DirectX 12 means for gamers and developers

Peter "Durante" Thoman is the creator of PC downsampling tool GeDoSaTo and the modder behind Dark Souls' DSfix. He has previously analyzed PC ports of Valkyria Chronicles, Dark Souls 2, The Witcher 3 and more. This article has recently been updated with new information about the first DX12 games.

The release of Windows 10 marked the first time the broad PC gaming public has had access to a low-level, cross-vendor graphics API. Ever since AMD first presented Mantle in 2013, there’s been a lot of back-and-forth discussion about how significant the gains from low-level APIs really are for games. Opinions range from considering them nothing less than a revolution in graphics processing to dismissing them as little more than an overblown marketing campaign. This article aims to provide a level-headed outlook on what exactly DirectX 12 will offer gamers, in which situations, and when we will see these gains.

One of the first OpenGL 1.0 sample applications, 1995.

To explain not just the what, but also the why of it, I’ll detail the tradeoffs involved in various API design decisions, and the historical growth that led to the current state of the art. This will get technical. Very technical. If you are primarily interested in knowing how these changes will affect you as a gamer, and what you can expect from an upgrade to Windows 10 now and in the near future, then skip forward to the final section, which touches on the important points without the deep dive.

What this article does not cover is the handful of new graphics hardware pipeline features exposed in DirectX 12. Every new release of the API adds support for a smattering of hardware features, and the fact that you can, for instance, implement order-independent transparency more effectively on DX12 feature-level graphics hardware is orthogonal to the high/low-level API tradeoff discussion. This separation is further supported by the fact that these features are also being added to DX11.3 for developers who do not want to switch to DX12. The expectation is that such hardware features will become important in years to come, but will have minimal impact on the first wave of Direct3D 12 games.

A brief history of DirectX and APIs

Before we get into the details of what changes in DirectX 12, some basics need to be established. First of all, what is a 3D API, really? When 3D accelerator hardware first appeared on the market, there was a need to provide an interface for programmers to leverage its capabilities: an Application Programming Interface, so to speak. While the vendor-specific Glide API developed by 3dfx Interactive managed to hold some ground for a while, it also became obvious that continued growth of the market would necessitate a hardware-agnostic, abstract interface. This interface was to be provided by OpenGL and DirectX.

GPUs back then were rather simple devices compared to now. In the end, the API only really needed to allow the developer to render textured (and perhaps lit) triangles to the screen, because that is what the hardware did. Hardware capabilities have evolved rapidly ever since, and APIs have been gradually adjusted to keep up, adding a slew of features here and entire pipeline stages there. That is not to say that there haven’t been significant API-side changes before—the switch completely away from immediate mode geometry generation for both OpenGL and DirectX was very significant, for example.

Nonetheless, high-level APIs on PC still operate on the basic principle of protecting programmers from worrying about hardware-level details. This can be a convenient feature, but sometimes fully understanding what is going on in hardware—which is the central principle of a low-level API—can be crucial for performance.

Why switch to low-level APIs?

Low-level APIs are a large change to 3D programming, and their introduction requires major design and engineering efforts on the part of platform providers and hardware vendors, as well as game and middleware developers. For roughly two decades now, high-level APIs have been ‘good enough’ on PC to push forward 3D rendering like no other platform has done. So why is this effort being undertaken now? I believe the reason is a combination of several distinct developments, which, together, now outweigh the effort required to implement this change.

The Hardware Side

A primary driver is certainly hardware development, on the GPU side but perhaps even more so on the CPU side of things. It might be counter-intuitive to think of CPU hardware changes causing an upheaval in graphics APIs, but as the API serves as a bridge between a program running on the CPU and the rendering power of the GPU, it’s not really surprising.

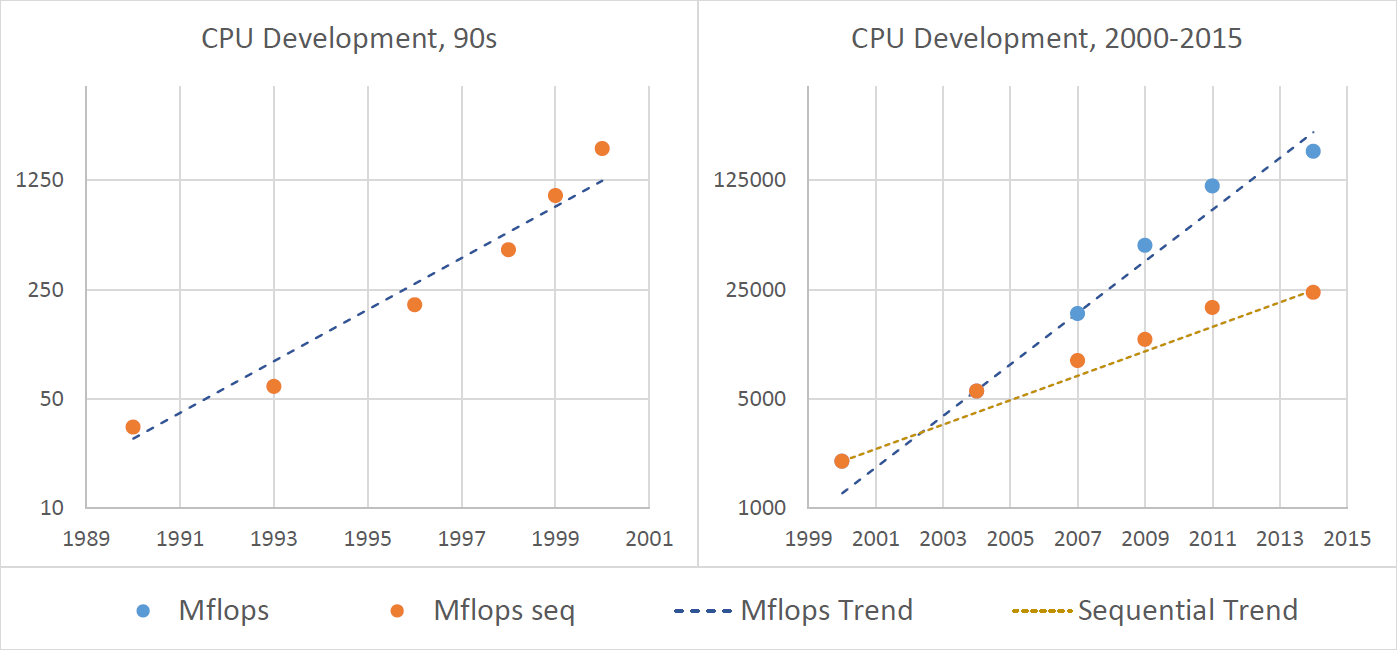

As noted earlier, high-level APIs were seemingly good enough for about two decades of graphics development, starting in the early 90s. The chart above illustrates the floating point performance of high-end desktop CPUs over that same time period, and should give you an idea why the CPU performance implications and parallelization of graphics APIs were not at the forefront of their designers’ thoughts in the 90s and early 00s. Blue depicts parallel performance, while purely sequential performance is shown in orange. Until 2004, the two are in lockstep, but since then increases in sequential performance have slowed down considerably. Therefore, one important goal of changing up the API landscape is improving parallelization.
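To put a rough number on why parallelization matters once sequential performance stalls, Amdahl's law gives an upper bound on the speedup available from extra cores when part of the work must stay on one thread. The sketch below is only an illustration: the function name `amdahl_speedup`, the core count, and the serial fractions are assumptions chosen for the example, not measurements of any real API or driver.

```python
def amdahl_speedup(serial_fraction: float, cores: int) -> float:
    """Upper bound on speedup when only part of the work can run in parallel.

    serial_fraction: share of the CPU-side work that must run on a single
    thread (a hypothetical value here, not a measured one).
    """
    return 1.0 / (serial_fraction + (1.0 - serial_fraction) / cores)


if __name__ == "__main__":
    cores = 8  # hypothetical core count
    # Illustrative serial fractions, from mostly sequential to mostly parallel.
    for serial_fraction in (0.8, 0.5, 0.1):
        bound = amdahl_speedup(serial_fraction, cores)
        print(f"serial fraction {serial_fraction:.1f}: "
              f"at most {bound:.2f}x on {cores} cores")
```

The smaller the share of rendering work that is forced onto a single thread, the more of the blue (parallel) curve a game can actually use, which is the motivation for APIs that let submission work be spread across cores.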

However, while the severe slowdown in CPU sequential performance growth is likely the most important driver for these API changes, modern GPUs are also fundamentally different from the devices that we used in the 90s. They perform arbitrary calculations on a wide variety of data, create geometry on their own rather than just handling an input stream, and leverage parallelism on a staggering number of levels. All of these changes can be (and have been) bolted on to existing APIs, but not without some increasingly severe semantic mismatch.

The Software Side

Although the hardware side of the equation is arguably the most important, and certainly the most commonly publicized, there is another set of reasons to make a clean break with existing graphics APIs, and it’s entirely related to software. Every PC gamer should be familiar with this sequence of events: a new highly-anticipated AAA game is released, and at roughly the same time both relevant GPU vendors release new drivers “optimized” for the title. These might be significantly faster, or perhaps even necessary for the game to work at all.

This is not because game developers or driver engineers are incompetent. It’s due to the sheer size that modern high-level graphics APIs have arrived at after years of incremental changes, and the even more staggering complexity of graphics drivers which need to somehow wrestle these abstract APIs into a stream of instructions the GPU hardware can understand and effectively execute. Slimming down the driver responsibilities seems to be effective at improving their reliability—developers familiar with both claim that DX12 drivers are already in far better shape than DX11 ones were at a similar point in their development timeline, despite the greater changes imposed by the API.

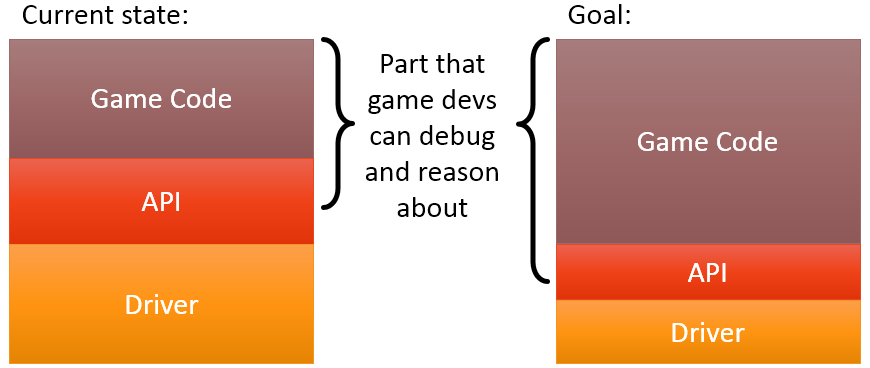

Having such a monstrous driver code base is not just difficult for GPU vendors: it also makes it more challenging—sometimes even virtually impossible—for developers to diagnose bugs or performance issues on their own. Everything in the actual game code base can be investigated and reasoned about using conventional development tools, but only the hardware vendor can say for certain what goes on behind the API wall.

If the driver decides to spend a lot of time every few seconds rebuilding some data structure, introducing stutter, it might be almost impossible to reverse engineer which part of the game code—which is the only thing developers have control over—is responsible. As illustrated above, making the API and driver smaller and more lightweight by moving more responsibilities to the game code will allow developers to get a more complete picture of what is going on in all cases.
