GTC 2016: GPUs can do more than gaming

Considering you're reading this on PC Gamer, chances are you have a reasonably potent graphics card inside your PC which you use to play games. Every year, Nvidia puts on its GPU Technology Conference (GTC, not to be confused with GDC, the Game Developers Conference held last month), and the focus moves from games to all the other awesome things that are being done with high-powered GPUs. The days of early GPUs, which were primarily designed to set up triangles and fill them with textures, are long gone. If you've ever wanted to feel really stupid, you should hang out with some of the people trying to harness the power of the GPU in new and interesting ways.

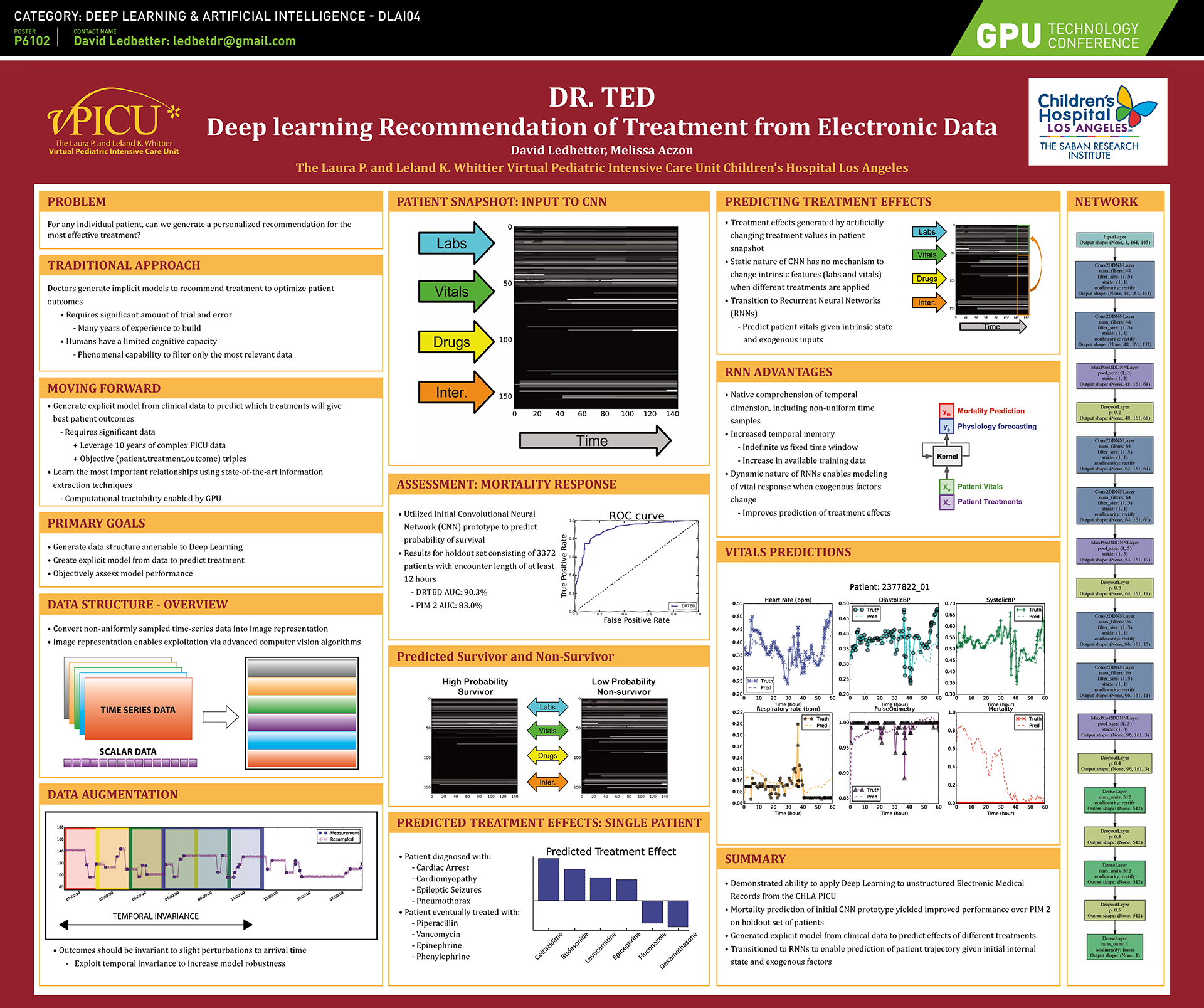

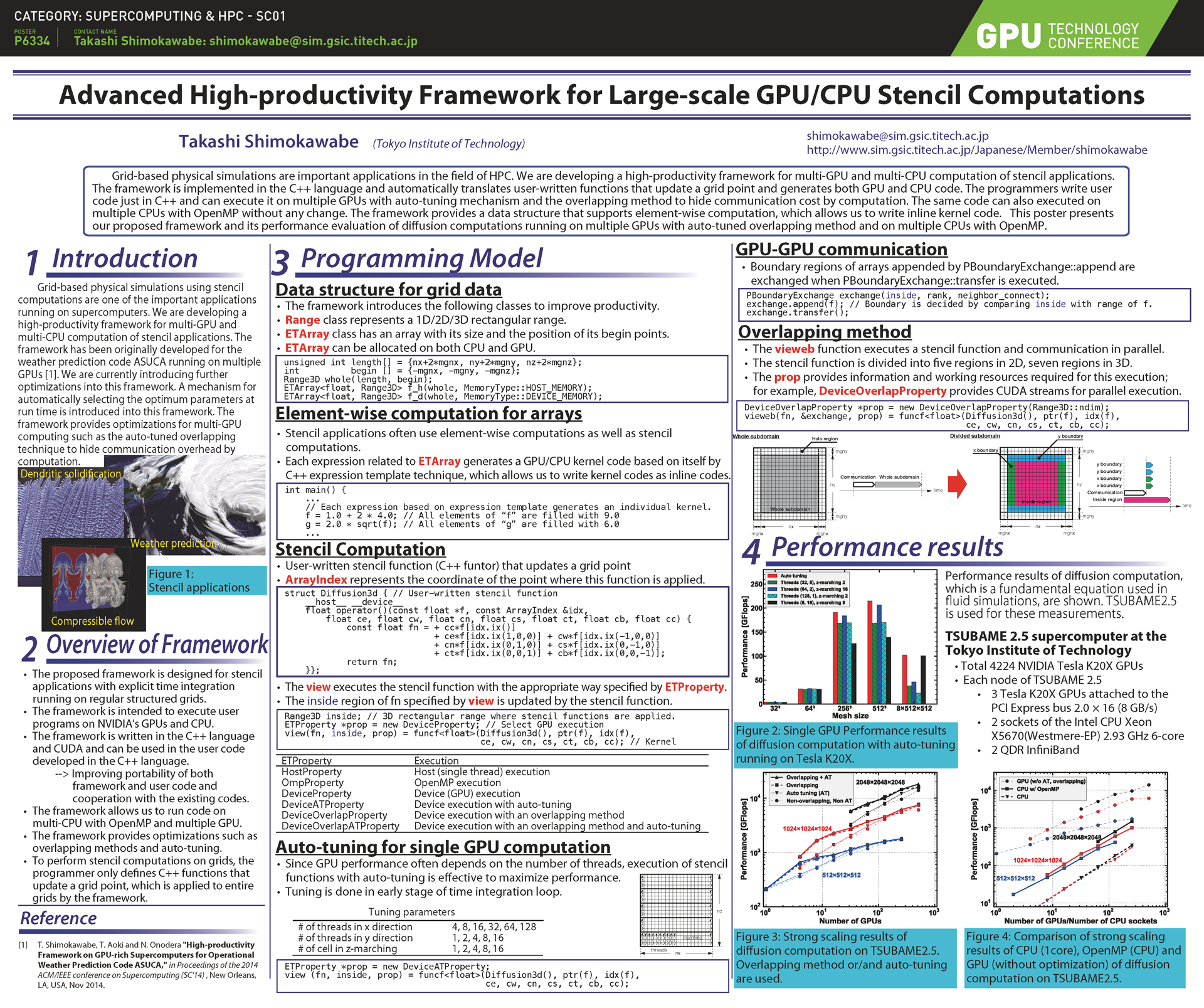

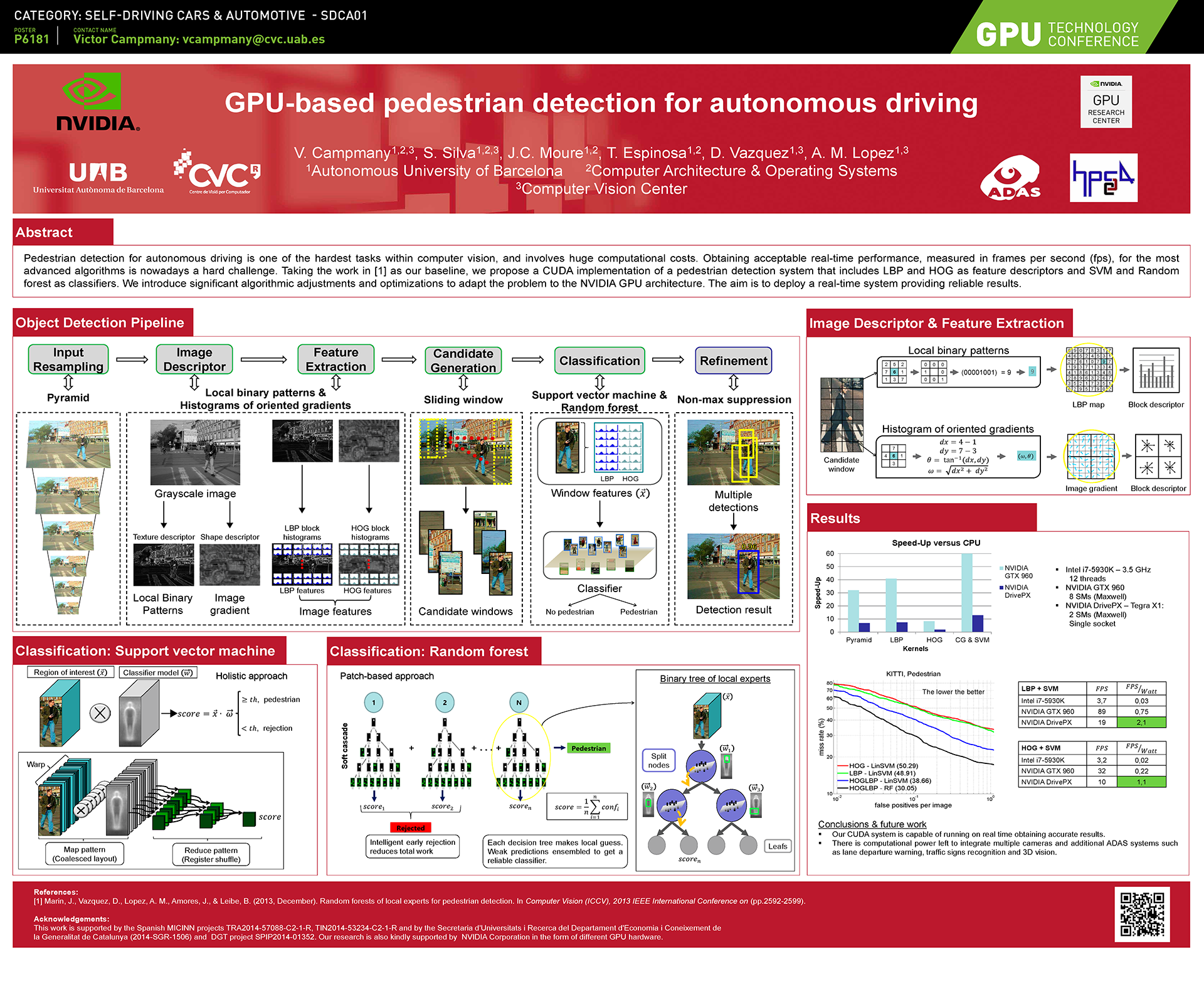

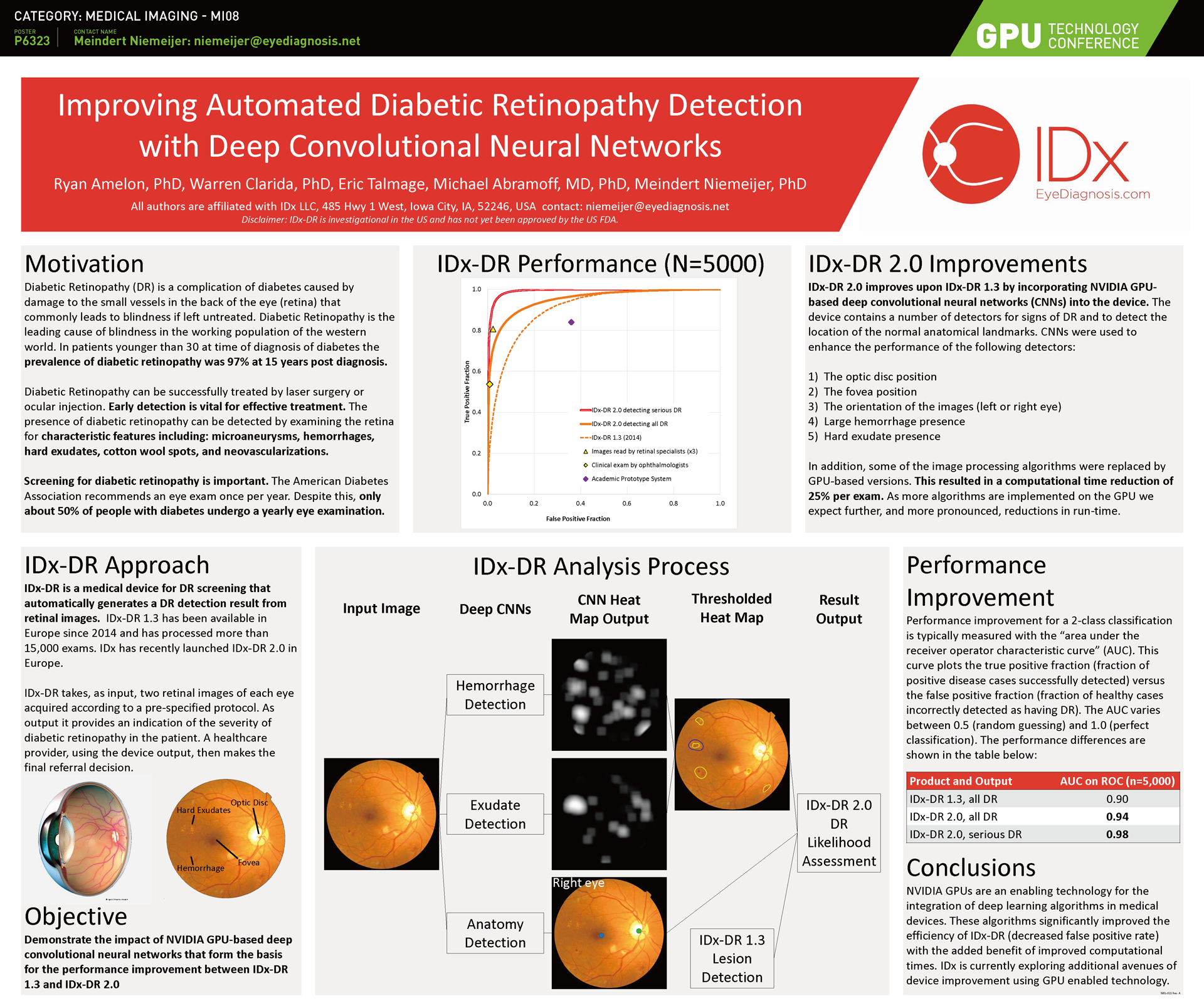

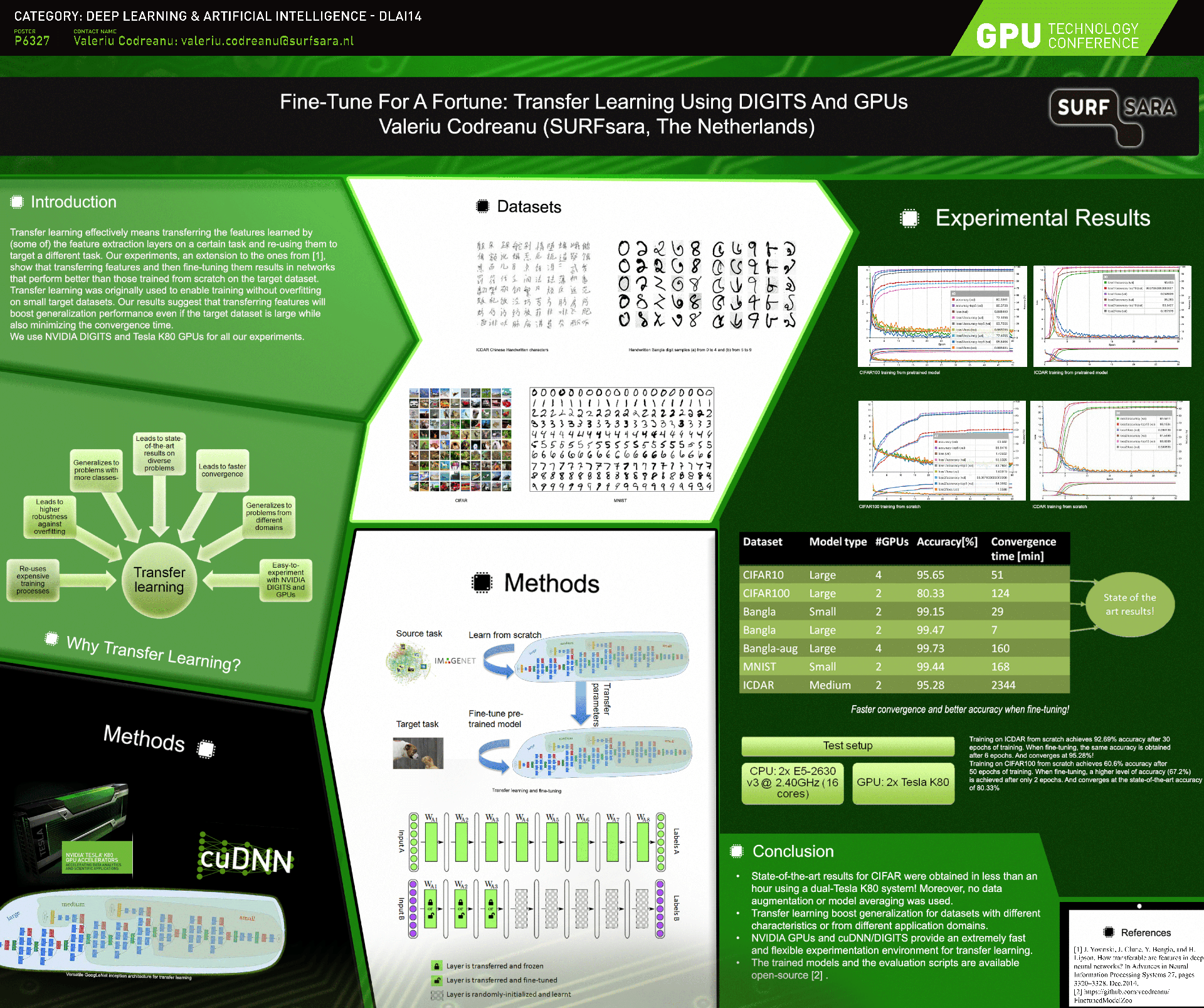

This evening at the Beer and Posters event, there was a gallery of over 140 posters showing off work and research being done via GPUs. It's basically a science fair for the uber-nerds of the graphics industry, and I won't even pretend to understand most of what the posters were saying. Each one represents hundreds of hours of work and research, summarized into a poster for everyone to look at. To give you an idea of the types of things being done, here are the "Top Five" poster finalists, and you can view the complete gallery online.

Deep learning, autonomous vehicles, chemistry, biology, medical imaging, supercomputing, real-time graphics, and many other fields can benefit from the highly parallel processing architectures modern GPUs afford. Many of the posters note speedups in computation time of 10 to 100 times or more vs. a traditional CPU-only approach, and the enhanced performance opens up new areas of application. Reading through most of the projects, I feel like a toddler in the graphics world walking amongst intellectual giants. That's a pretty apt description of a technology journalist chilling with academic researchers.

What I found particularly interesting is how few of the researchers are using modern GPUs. Many of the projects are still using GPUs from the Fermi generation (circa 2009-2011), and plenty more are running on Kepler hardware (2012-2014). Maxwell (2014 to present) showed up as well, but not in the numbers I'd expect from the PC gaming community, where Kepler is getting to be "too old" and Fermi has largely been retired. Despite the cutting-edge research, cost of equipment is still a big concern, and some of the research has been going on for years. There's also the fact that Fermi was "built for compute" while Kepler and Maxwell tended to target games more, and there were a few instances where researchers showed better performance on older hardware vs. newer equipment (e.g., the GTX 780 Ti still packs a punch in some scenarios, besting even GM200).

While much of what we'll see at GTC doesn't directly impact the gaming world, it's impressive to see all the applications for what is frequently the core component in a gaming system. Some people may scoff at gaming as a waste of time, but someday I'm going to hop into my car and say, "Drive me to my mom's house." As the vehicle happily zips away on a 950-mile journey while I rest in comfort, playing games or writing or sleeping, it will be in large part due to the amazing potential of the GPU.


Jarred's love of computers dates back to the dark ages when his dad brought home a DOS 2.3 PC and he left his C-64 behind. He eventually built his first custom PC in 1990 with a 286 12MHz, only to discover it was already woefully outdated when Wing Commander was released a few months later. He holds a BS in Computer Science from Brigham Young University and has been working as a tech journalist since 2004, writing for AnandTech, Maximum PC, and PC Gamer. From the first S3 Virge '3D decelerators' to today's GPUs, Jarred keeps up with all the latest graphics trends and is the one to ask about game performance.