Is silicon doomed? IBM invests billions in quest to find alternative

The days of silicon sitting inside our CPUs and GPUs are numbered, according to a recent announcement by chip giant IBM. They're betting a cool $3 billion on being able to find a decent alternative before silicon starts to hinder hardware progress.

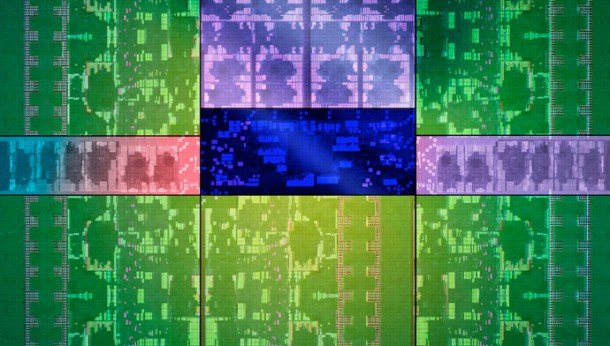

The problem is one of scale. The Moore's Law trend has been driving processor shrinkage as companies pack smaller transistors into our chips. Already those transistors are pretty darned small—14nm CPUs are coming in the shape of Intel's Broadwell at the start of 2015—and there's a pretty solid path all the way down to 5-7nm too.

Beyond that, things get fuzzy. The problem is that with such teeny tiny transistors, interference is a real issue. That could lead to genuine difficulty in keeping such minute-scale CPUs running stably. Graphics processors are also struggling with scale. We were meant to be seeing 20nm Maxwell GPUs from Nvidia this time around, but reportedly problems with the 20nm production process and weak performance have encouraged them to stick with the aging 28nm process for now, and they could feasibly avoid the 20nm process altogether.

IBM has announced a $3 billion investment in trying to find ways to get ever smaller chips to function effectively, with or without the use of silicon. “We really do see the ticking clock on silicon,” IBM's Tom Rosamilia told Wired. As well as researching exciting materials like carbon nanotubes or light-based interconnects like silicon photonics, IBM is also investigating new ways to approach computing. How much of that $3 billion is going to be spent sticking cats in boxes and plugging them into graphics cards I'm not sure, but quantum computing is definitely on their radar too.


Dave has been gaming since the days of Zaxxon and Lady Bug on the Colecovision, and code books for the Commodore Vic 20 (Death Race 2000!). He built his first gaming PC at the tender age of 16, and finally finished bug-fixing the Cyrix-based system around a year later. When he dropped it out of the window. He first started writing for Official PlayStation Magazine and Xbox World many decades ago, then moved onto PC Format full-time, then PC Gamer, TechRadar, and T3 among others. Now he's back, writing about the nightmarish graphics card market, CPUs with more cores than sense, gaming laptops hotter than the sun, and SSDs more capacious than a Cybertruck.