Ashes of the Singularity Beta 2

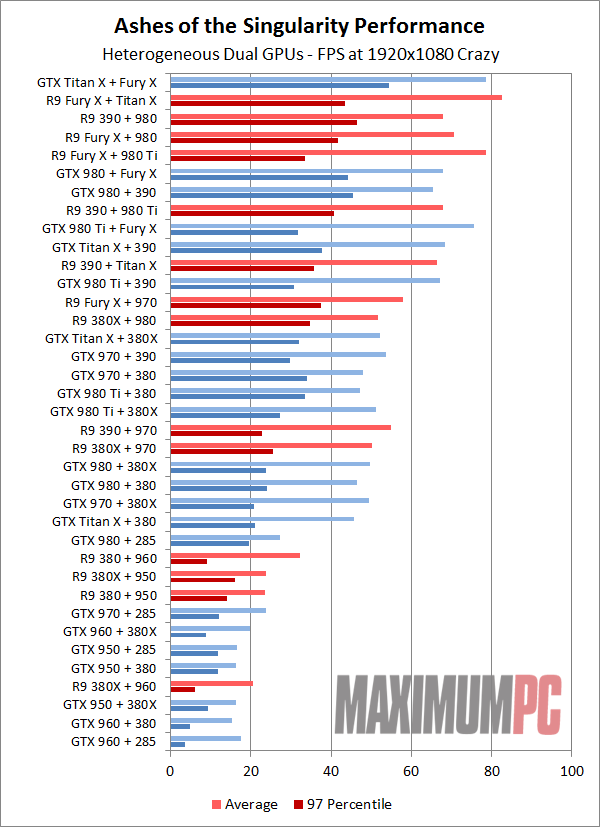

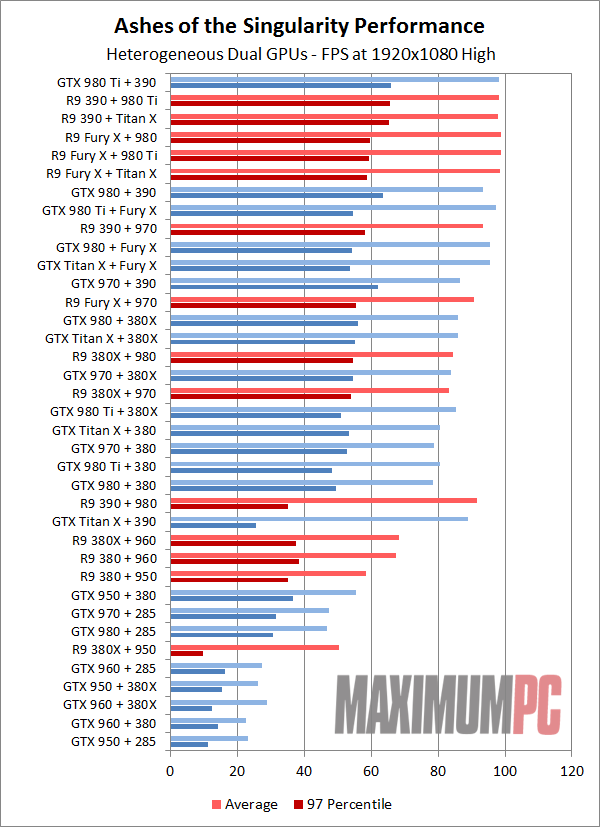

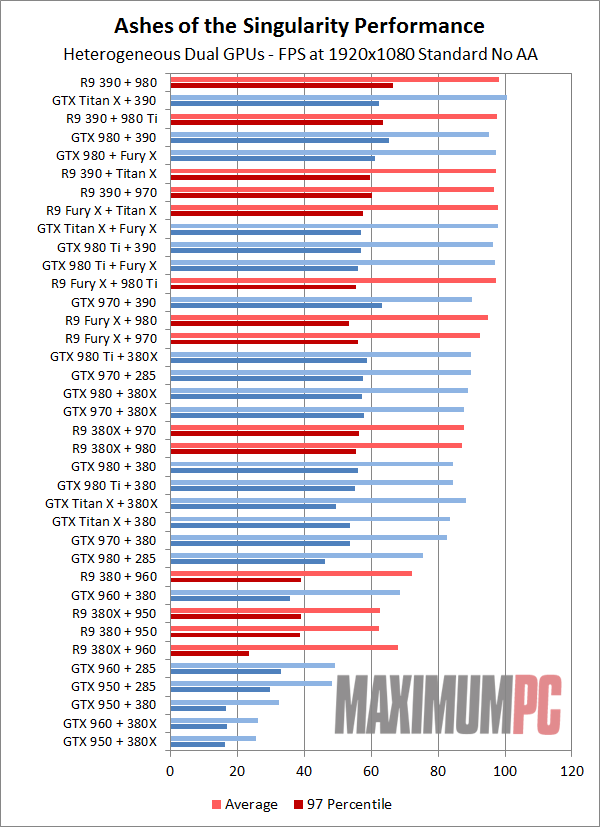

Heterogeneous dual-GPU DX12 performance

And this is where the gloves truly come off. "Fire and brimstone coming down from the skies! Rivers and seas boiling! Forty years of darkness! Earthquakes, volcanoes…. The dead rising from the grave! Human sacrifice, dogs and cats living together... mass hysteria!" (Thanks, Ghostbusters.) There are so many comparison points that the charts are a bit of a mess. We've color-coded things according to the primary GPU, with AMD in red and Nvidia in blue, but obviously the secondary GPU is from the other team. Where the homogeneous configurations at least seem somewhat plausible for end users to own, our heterogeneous configurations mostly come from the realm of science fiction.

In general, we've started at the top of the performance ladder and paired each GPU with the other vendor's closest three or four offerings. In many instances, we've tested both A + B and B + A configurations, and there are very few cases where swapping the primary and secondary cards doesn't end up changing the performance standings. We can't possibly discuss everything the charts will show in the text, but if there are any particular pairings that are of interest (or missing), let us know in the comments.

Drivers are also a bit wonky: if you're using a single display, neither vendor tends to let you access the control panel for the secondary GPU. Plug in a second monitor to the other GPU and you can open up the settings options, but in practice it's not actually necessary. It's a bit weird to have AMD's Crimson drivers tell you there's "No AMD graphics driver installed, or the driver is not functioning properly," only to find everything working more or less as expected when you launch Ashes. Nvidia's control panel gives a similar message that's more helpful: "Nvidia Display settings are not available. You are not currently using a display attached to an Nvidia GPU." The net result in either case is the same: You can't open the settings dialog without a display attached to the GPU.

We're seeing plenty of strange behavior. The Titan X and Fury X pairings claim the top two slots, but having the Fury X as the primary produces better average performance and substantially lower 97 percentiles, while the Titan X as primary tends to be more consistent. Having 12GB VRAM seems to compensate for a lot of potential problems, as the 980 Ti + Fury X doesn't do nearly as well, despite the Titan and 980 Ti generally performing within a few percent of each other in our individual test results.

Frankly, we're not quite sure what to make of the overall standings. They're crazy, just like the preset name. The good news is that we have at least 12 pairings breaking the 60FPS mark for average frame rates; the bad news is that 97 percentiles on those 12 pairings range from just barely over 30FPS to as high as 55FPS. Mass hysteria indeed! Perhaps the less said, the better.
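For anyone running their own tests, here's a rough sketch of how an average frame rate and a 97 percentile figure can be pulled from a log of per-frame render times. This is an illustration only: the function name is hypothetical, and the nearest-rank percentile used here is an assumption, not necessarily the exact method behind our charts.

```python
def summarize_frame_times(frame_times_ms):
    """Return (average FPS, 97 percentile FPS) for a list of frame times in ms.

    Assumption: the 97 percentile figure is the frame rate implied by the
    frame time that 97 percent of frames meet or beat (nearest-rank method).
    """
    if not frame_times_ms:
        raise ValueError("need at least one frame time")

    # Average FPS: total frames divided by total seconds rendered.
    total_ms = sum(frame_times_ms)
    avg_fps = 1000.0 * len(frame_times_ms) / total_ms

    # Nearest-rank percentile, computed with integer math to avoid rounding surprises.
    ordered = sorted(frame_times_ms)
    rank = (97 * len(ordered) + 99) // 100  # ceil(0.97 * n)
    p97_frame_time_ms = ordered[rank - 1]

    return avg_fps, 1000.0 / p97_frame_time_ms


# Made-up run: 96 quick frames at 10 ms plus 4 stutters at 40 ms.
avg_fps, p97_fps = summarize_frame_times([10.0] * 96 + [40.0] * 4)
print(f"average: {avg_fps:.1f} FPS, 97 percentile: {p97_fps:.1f} FPS")
```

In that made-up run, a handful of slow frames barely dents the average but drags the 97 percentile way down, which is the same kind of gap we're seeing on the mixed pairings.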

1080p High starts smacking into CPU limits again, only a bit lower now thanks to the mixed GPUs. There are still oddities with various test configurations as well. Even though we exit the game between each benchmark setting, sometimes the GPUs seem to end up in a messed-up state, or at least something is causing degraded performance. The GTX 960/950 paired with the R9 380X/390/285 should all fall within a cluster, but depending on luck of the draw, sometimes a particular combination will do well (e.g., GTX 950 + 380) while another combination will do poorly (e.g., GTX 960 + 380X). Remember that quote from earlier, about low-level APIs being hard to program for, doing a lot of things manually instead of relying on drivers? Some of that is clearly coming into play here.

The same story continues at 1080p Standard, where most of the higher end configurations are hitting CPU bottlenecks, while the 2GB cards in particular have confusing and unreliable results. And now is as good a time as any to talk about some of the rendering errors.

On the Crazy setting, many of the 2GB pairings didn't render properly—or at least, they didn't consistently render properly. Some were always full of corrupted graphics and missing textures, like the 380/380X with the 285; others would look okay one run and then have flashing textures or missing objects on the next run. Most of the time, things looked okay with 1080p Standard, but then scaling from a single GPU would sometimes not work properly, with performance regressions in several instances.

If you're hoping to run some of your own tests, the second beta for Ashes should be publicly available on Steam Early Access tomorrow. Obviously, Windows 10 is required for DX12 testing and EMA support, but if you meet that requirement and have a few GPUs sitting around, give the benchmark a shot. There may be some particularly good combinations of GPUs out there that we didn't test, but be prepared for the occasional wonky behavior.

Frankenstein's monsters

Just looking at what Oxide has accomplished so far with DX12 and Ashes of the Singularity causes us to imagine scenes from the various Frankenstein movies. It's hard not to think of the developers roaming around the labs like mad scientists, playing with forces mere mortals can barely comprehend. And then after countless hours of debugging and head smashing, lightning strikes and there's a cackle of glee: "It's alive! It's aliiiiiiive!"

With life in his limbs, the misshapen beast lurches from the table, bangs its head, and falls to the floor. "Oops…back to the drawing board," thinks the mad doctor. More tests, a change in formulas, and more than a few uttered curses later, he tries again. Sometimes the creations are almost human, other times they fall well short, and still other times the laboratory is lucky to be in one piece after a particularly bad experiment. Maybe that's not quite how it went down, but it's probably not too far off the mark.

Ultimately, Oxide's monster is coming to life, and the official release date is currently set for March 22. There's obviously a lot of room left for fine tuning, but at some point you just have to take what you've got and hope no one holds a flame in front of your creation. Oxide looks to have spent most of their EMA efforts tuning for high-end cards, with lesser cards sometimes causing more harm than good. When things work properly, you end up with this crazy AMD + Nvidia graphics solution that by all rights shouldn't even exist. And for even trying to do that, and being the first out of the gate with working EMA support, Oxide deserves props.

Will we one day live in a world full of gaming engines that scale to properly utilize any and all compute resources in an intelligent fashion? If so, it will be thanks to the pioneering efforts of companies like Oxide—and AMD, Nvidia, and Microsoft as well, since without their support, most of what we're seeing today wouldn't be possible. But we're many years away from such a graphical utopia, and if I were a betting man, I'd guess we'll experience plenty of struggles en route.

If we ever do arrive at that destination, sporting VR headsets or whatever it is the future holds, we'll be able to look back fondly and tell our grandkids, "I remember the first time a game tried to support multiple graphics cards from different vendors." We'll raise a glass to the memories of Oxide and Ashes, and then we'll jack into the matrix and get back to our virtual entertainment.

Jarred's love of computers dates back to the dark ages when his dad brought home a DOS 2.3 PC and he left his C-64 behind. He eventually built his first custom PC in 1990 with a 286 12MHz, only to discover it was already woefully outdated when Wing Commander was released a few months later. He holds a BS in Computer Science from Brigham Young University and has been working as a tech journalist since 2004, writing for AnandTech, Maximum PC, and PC Gamer. From the first S3 Virge '3D decelerators' to today's GPUs, Jarred keeps up with all the latest graphics trends and is the one to ask about game performance.
