AMD

Latest about AMD

A match made in heaven for gamers on RTX 30-series GPUs: AMD's frame generation and Nvidia's DLSS together at last

By Jeremy Laird published

news Cats and dogs living together, people!

AMD's launched a pair of new budget chips in its 8000-series, lopping the GPUs off its APUs

By Nick Evanson published

news One looks like it could be the bargain CPU of 2024. The other...err...sadly not.

AMD Linux devs jamming nearly 24,000 lines of RDNA 4 supporting code into its mainstream driver suggests next-gen launch may be close at hand

By Nick Evanson published

news Mesa mega merge means a magnificent moment may manifest…err…soon.

AMD will reportedly stop supporting Windows 10 starting with its new Strix Point APUs and you can all blame AI for that

By Dave James published

news If you want the AI goodness from AMD's new NPU, you'll need Windows 11 and nothing else will do.

AMD continues to chip away at Intel's CPU market dominance, though the laptop market is still a tough market to crack

By Chris Szewczyk published

news And there's an upside with Zen 5 to come later this year.

Homeworld 3 performance analysis: Surprisingly scalable but no ultra-high frame rates

By Nick Evanson published

The frame rate of space Even old CPUs will run it well enough, provided they can handle six or more threads.

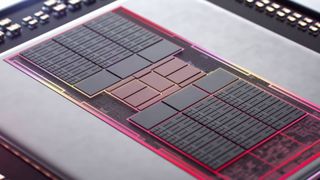

AMD's gaming graphics business looks like it's in terminal decline

By Jeremy Laird published

news There's no getting round it, the Radeon RX 7000 GPU family looks like a disaster.

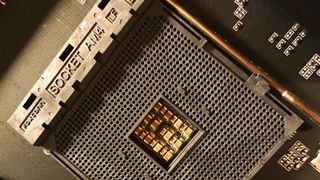

Despite being just a humble CPU socket, AMD 'boldly suggests' AM4 has 'legendary status'

By Nick Evanson published

Legend Few other CPU sockets have lasted as long or supported as many chips as this one, but its history isn't entirely free of hiccups.
